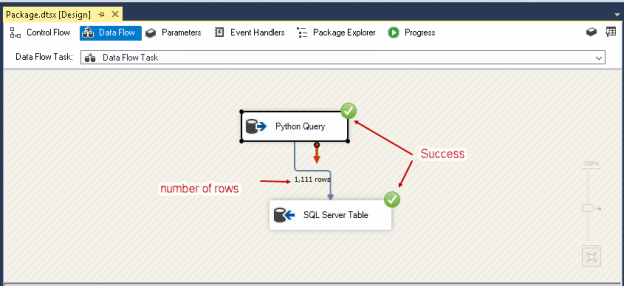

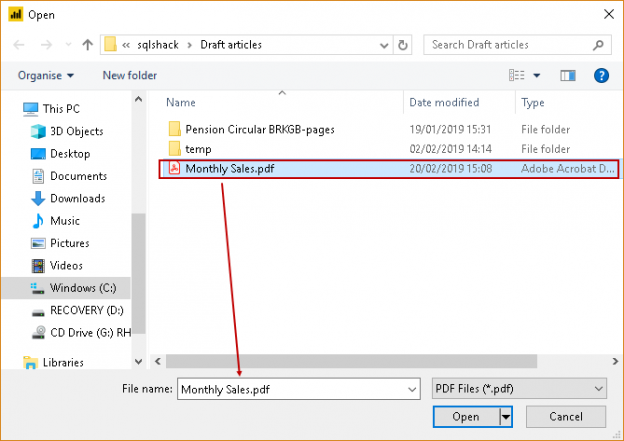

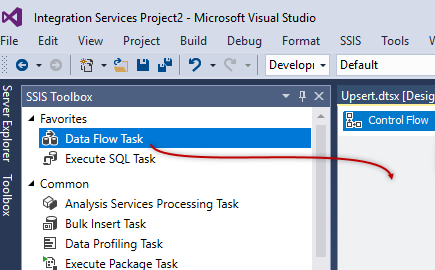

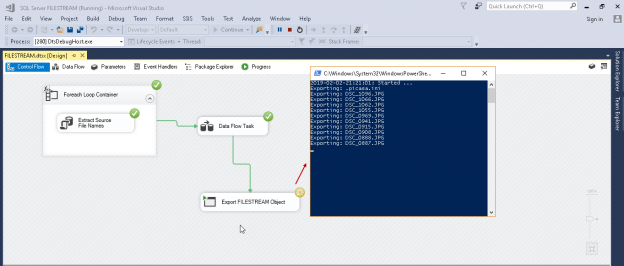

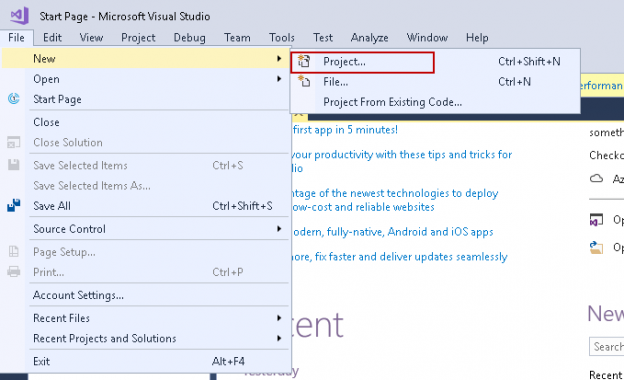

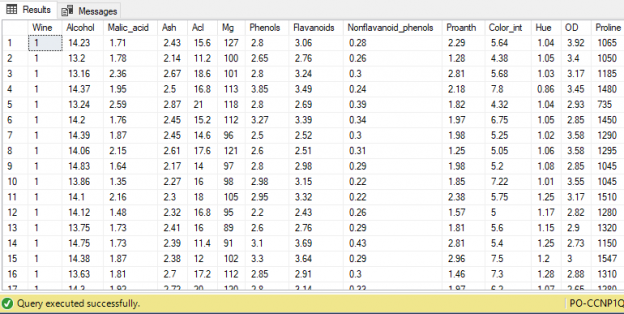

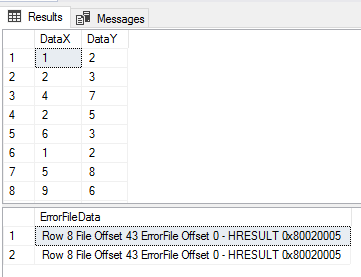

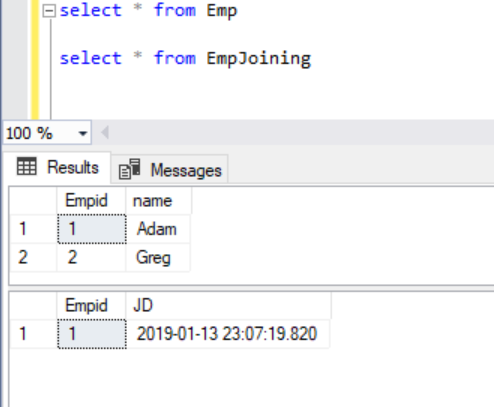

Combining Python with SQL scripts is a powerful way for developers and database administrators to carry out data analytics. Python provides many useful modules for efficient data computation and processing. Python scripts can be run in SQL Server starting with SQL Server 2017. We can also build ETL solutions that extract data from various sources and load it into SQL Server.
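To make the ETL idea concrete, here is a minimal sketch of the extract, transform, and load steps in plain Python. It is an illustration under stated assumptions, not the article's own solution: the source data, the `region_totals` table, and all function names are hypothetical, and the standard-library `sqlite3` module stands in for SQL Server so that the example runs without a database server.

```python
import csv
import io
import sqlite3

# Hypothetical source data; in practice this could be a file, an API,
# or another database.
SOURCE_CSV = """region,amount
North,120.50
South,80.00
North,29.50
"""


def extract(text):
    """Read rows from CSV text into a list of dicts."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Sum the amount per region and return (region, total) pairs sorted by region."""
    totals = {}
    for row in rows:
        region = row["region"]
        totals[region] = totals.get(region, 0.0) + float(row["amount"])
    return sorted(totals.items())


def load(conn, totals):
    """Insert the aggregated rows into the (hypothetical) target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS region_totals "
        "(region TEXT PRIMARY KEY, total REAL)"
    )
    conn.executemany(
        "INSERT INTO region_totals (region, total) VALUES (?, ?)", totals
    )
    conn.commit()


def run_etl(conn, text=SOURCE_CSV):
    """Run extract, transform, and load, then read back the loaded rows."""
    load(conn, transform(extract(text)))
    return conn.execute(
        "SELECT region, total FROM region_totals ORDER BY region"
    ).fetchall()


if __name__ == "__main__":
    # sqlite3 is only a stand-in here; it is not SQL Server.
    connection = sqlite3.connect(":memory:")
    print(run_etl(connection))
```

Against a real SQL Server instance, the connection would typically come from a driver such as `pyodbc` instead of `sqlite3`, and the `CREATE TABLE` statement would need T-SQL syntax and data types. The three-step structure stays the same.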
