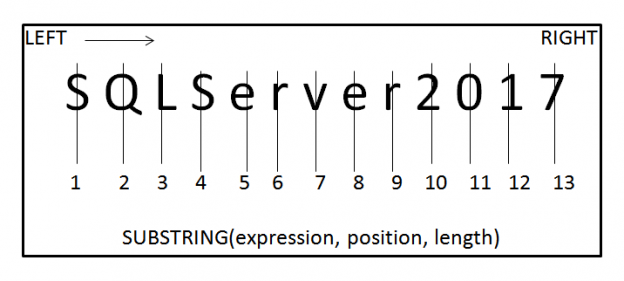

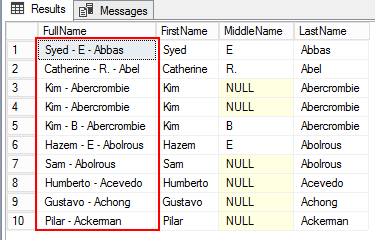

Data refactoring is a common and vital requirement in data mining operations. The previous article, SQL string functions for Data Munging (Wrangling), covered tips for getting started with SQL string functions, including the substring function, for data munging with SQL Server. Because data stored in one form often needs to be transformed into another, this article looks at common tasks such as changing the case of a string, converting a value to a different type, trimming a value, and replacing a particular string in a field.
