This is a short post about how you can control the cardinality estimator version and determine which version was used to build a plan.

The version of the cardinality estimation framework is determined by the database context of the query: each database has a specific compatibility level.

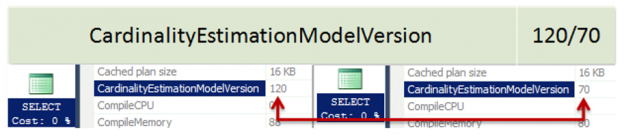

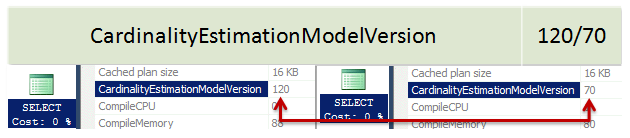

When you create a database in SQL Server 2014, it gets the latest compatibility level, 120, by default. If you issue a query in that database context, the new cardinality estimator version will be used. You can verify this by inspecting the plan property “CardinalityEstimationModelVersion” of the root language element (the one with the green icon), for example SELECT.

If you issue a query in a database context with a compatibility level lower than 120, the old version of the cardinality estimation framework will be used.
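As a quick check, the compatibility level can be read from `sys.databases` and changed with `ALTER DATABASE`. This is a minimal sketch, assuming you are connected to the database in question and are allowed to alter it; the value 110 is only an example of a level below 120.

```sql
-- Show the compatibility level of the current database.
SELECT name, compatibility_level
FROM sys.databases
WHERE name = DB_NAME();

-- Example only: lower the level so that queries in this database
-- use the old cardinality estimation framework by default.
ALTER DATABASE CURRENT SET COMPATIBILITY_LEVEL = 110;

-- Switch back to the SQL Server 2014 level (new CE by default).
ALTER DATABASE CURRENT SET COMPATIBILITY_LEVEL = 120;
```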

If the new cardinality estimation version was used, you’ll see the value 120 (you may remember it as 12.0, the SQL Server 2014 version number). If the old one was used, you’ll see 70 (also a server version number, 7.0, because the old framework was first introduced in 1998 for SQL Server 7.0 and has evolved since then without major conceptual changes).

Here is what it looks like:

Note: When looking for the CardinalityEstimationModelVersion property, make sure you are using SQL Server Management Studio 2014. If you use SSMS 2012, for example, it will silently drop the property, and you won’t see it!
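If you prefer T-SQL to the plan properties window, the same attribute can be read from the showplan XML of cached plans. The query below is only a sketch: it assumes you have `VIEW SERVER STATE` permission, it looks at the first statement of each plan, and the `TOP (20)` limit is arbitrary.

```sql
-- Read CardinalityEstimationModelVersion from cached plans.
-- The attribute lives on the StmtSimple element of the showplan XML.
WITH XMLNAMESPACES (DEFAULT 'http://schemas.microsoft.com/sqlserver/2004/07/showplan')
SELECT TOP (20)
    st.text AS query_text,
    qp.query_plan.value(
        '(//StmtSimple/@CardinalityEstimationModelVersion)[1]', 'int'
    ) AS ce_model_version
FROM sys.dm_exec_cached_plans AS cp
CROSS APPLY sys.dm_exec_query_plan(cp.plan_handle) AS qp
CROSS APPLY sys.dm_exec_sql_text(cp.plan_handle) AS st
WHERE qp.query_plan IS NOT NULL;  -- some cached entries have no XML plan
```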

When Microsoft developed the new cardinality estimation framework, the goal was to improve the quality of plans; avoiding every regression was not a goal. That means you should be prepared for regressions and know how to apply an easy fix. You should also test your workload before moving to the new cardinality mechanism.

Exactly for that purpose, Microsoft introduced two new trace flags, both officially documented. TF 2312 forces the new (120 and later) cardinality estimation behavior; the exact CE version depends on the database compatibility level. TF 9481 forces the old one, regardless of the compatibility level. You can use these flags at several levels: globally, per session, or per query.
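For the global and session levels, the flags can be switched with the standard `DBCC TRACEON` / `DBCC TRACEOFF` commands. The sketch below uses TF 9481 as the example and assumes you have the sysadmin rights these commands require; try it on a test instance first.

```sql
-- Global level: affects all connections until turned off
-- or until the instance is restarted.
DBCC TRACEON (9481, -1);
DBCC TRACESTATUS (9481);    -- shows whether the flag is on and at which scope
DBCC TRACEOFF (9481, -1);

-- Session level: affects only the current connection.
DBCC TRACEON (9481);
DBCC TRACESTATUS (9481);
DBCC TRACEOFF (9481);
```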

That opens up a lot of possible scenarios. I’ll list some of them:

- You want to use the new features, like parallel SELECT INTO, but don’t want to enable the new CE – enable TF 9481 globally.

- You want to try the new CE, but you are afraid of regressions – keep the compatibility level below 120 and enable the new CE for a particular query with TF 2312, using the QUERYTRACEON option, to test it.

- You enabled the new CE and everything is fine, except for a few queries that had a better plan with the old CE – use TF 9481 with the QUERYTRACEON option to switch those particular queries back.
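The two query-level scenarios can be sketched with the `QUERYTRACEON` hint. The query against the system catalog views is just a stand-in for your own statement, and the hint normally requires sysadmin membership, so treat this as an illustration rather than a recipe.

```sql
-- Compatibility level is below 120: try the new CE for this query only.
SELECT o.name, COUNT(*) AS column_count
FROM sys.objects AS o
JOIN sys.columns AS c ON c.object_id = o.object_id
GROUP BY o.name
OPTION (QUERYTRACEON 2312);

-- Compatibility level is 120: send this query back to the old CE.
SELECT o.name, COUNT(*) AS column_count
FROM sys.objects AS o
JOIN sys.columns AS c ON c.object_id = o.object_id
GROUP BY o.name
OPTION (QUERYTRACEON 9481);
```

Comparing the CardinalityEstimationModelVersion property of the two plans shows which framework each query actually used.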

You can imagine whatever scenario you need. The thing to remember is that you have full control over what is happening: if you don’t like it, simply turn it off.

The next natural question is what happens if both TFs are enabled. In this case, they neutralize each other, and the behavior is the same as if no TF were enabled.
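One way to check this yourself is to put both flags on a single query and look at the CardinalityEstimationModelVersion attribute in the returned plan XML. This is a sketch, again using a catalog view as a placeholder query; `GO` is the batch separator used by SSMS and sqlcmd.

```sql
-- Return the actual plan as XML alongside the query result.
SET STATISTICS XML ON;
GO

-- Both trace flags on the same statement.
SELECT COUNT(*) AS object_count
FROM sys.objects
OPTION (QUERYTRACEON 2312, QUERYTRACEON 9481);
GO

SET STATISTICS XML OFF;
GO
```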

Now we are ready to move on to the changes of the new cardinality estimation framework!

References

- ALTER DATABASE (Transact-SQL) Compatibility Level

- Enable plan-affecting SQL Server query optimizer behavior that can be controlled by different trace flags on a specific-query level

- Identifying SQL Server 2014 New Cardinality Estimator issues and Service Pack 1 improvement
