In the continuation of our SQL FILESTREAM article series, we’ll be covering transaction log backups

In SQL Server, we take transaction log backups regularly to have a point-in-time recovery of a database. It is essential to define the backup policy with the combination of full, differential and transaction log backups. A standard configuration of backups for large databases in the production database environment is as follows.

- Weekly Full backup

- Daily differential backup

- Transaction log backup every 30 minutes

We need to consider the transaction log backup size while designing a backup and recovery solution. In SQL FILESTREAM database, we store the objects into FILESTREAM container in the file system. SQL Server requires both metadata stored in relational tables and corresponding objects into the file system to recover a database. A Transaction log backup in FILESTREAM databases copies the relevant file from the FILESTREAM container as well into a backup file. It copies the files from the FILESTREAM container in the transaction log backup that is created, modified after the last transaction log backup. It is important to understand the behaviour of the log backup for FILESTREAM database. In this article, we will explore the transaction log backup for the FILESTREAM database backup.

Prerequisites

- SQL Server instance with FILESTREAM feature

- FILESTREAM database

- FILESTREAM table

In this article, we are using the [DemoSQL] FILESTREAM database with FILESTREAM table [DemoSQL].[dbo].[SQLShack]

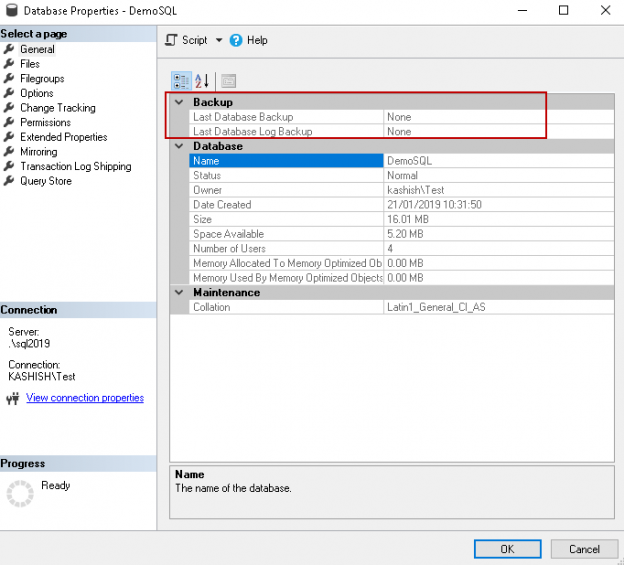

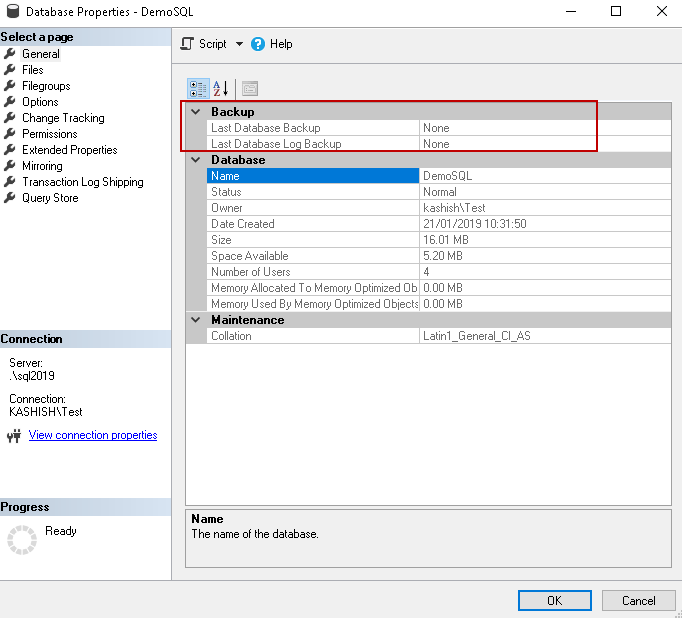

We do not have any database backups for this database as of now. You can verify it by right click on the database and Properties.

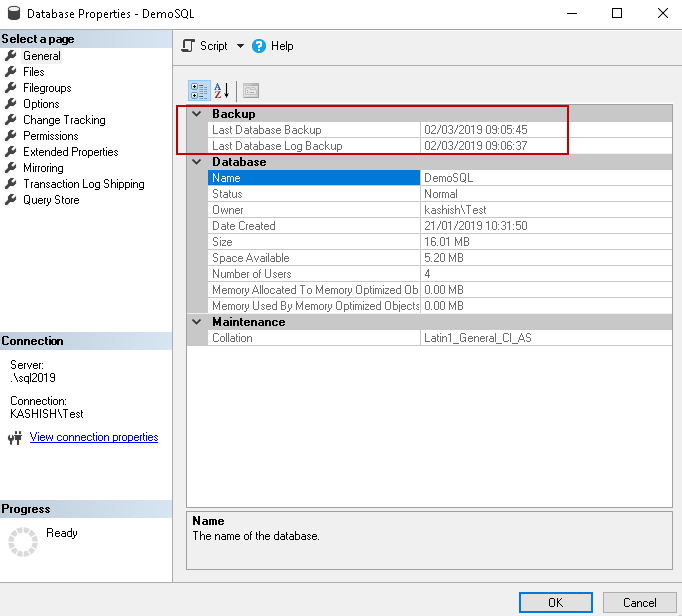

Before we proceed further, take a full backup and log backup for this SQ Server FILESTREAM database.

Execute Full backup using the following script

|

1 2 |

BACKUP DATABASE [DemoSQL] TO DISK = N'C:\Program Files\Microsoft SQL Server\MSSQL15.SQL2019\MSSQL\Backup\DemoSQL.bak' WITH NOFORMAT, NOINIT, NAME = N'DemoSQL-Full Database Backup', SKIP, NOREWIND, NOUNLOAD, STATS = 10 GO |

Execute Log backup using the following script

|

1 2 |

BACKUP LOG [DemoSQL] TO DISK = N'C:\Program Files\Microsoft SQL Server\MSSQL15.SQL2019\MSSQL\Backup\DemoSQL_log.trn' WITH NOFORMAT, INIT, NAME = N'DemoSQL-Log Database Backup', SKIP, NOREWIND, NOUNLOAD, STATS = 10 GO |

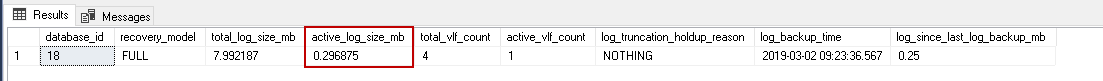

In SQL Server 2017 and onwards, we can use DMV sys.dm_db_log_stats to get information about the transaction log. Execute the following query in the FILESTREAM database.

|

1 2 3 4 5 6 7 8 9 10 11 |

SELECT database_id, recovery_model, total_log_size_mb, active_log_size_mb, total_vlf_count, active_vlf_count, log_truncation_holdup_reason, log_backup_time, log_since_last_log_backup_mb FROM sys.Dm_db_log_stats(db_id(db_name())) |

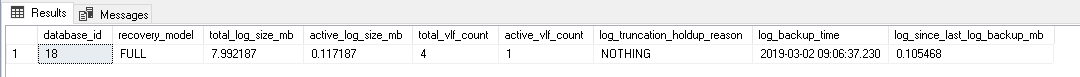

At this point, we are having 0.085937 MB active transaction log size.

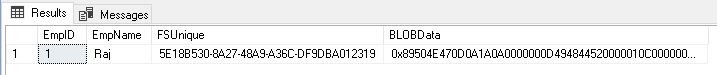

Let us insert one record in FILESTREAM table.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 |

DECLARE @File varbinary(MAX); SELECT @File = CAST( bulkcolumn as varbinary(max) ) FROM OPENROWSET(BULK 'C:\Users\rajen_000\Pictures\BBQ\DSC_0245.JPG', SINGLE_BLOB) as MyData; INSERT INTO [DemoSQL].[dbo].[SQLShack] VALUES ( 2, 'Rajendra', NEWID(), @File ) |

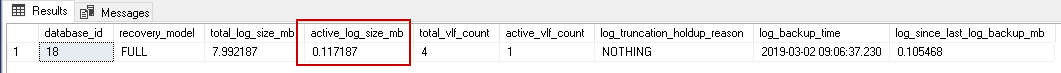

In the following screenshot, we can see a minimal change in the active log size from 0.085937 MB to 0.117187 MB

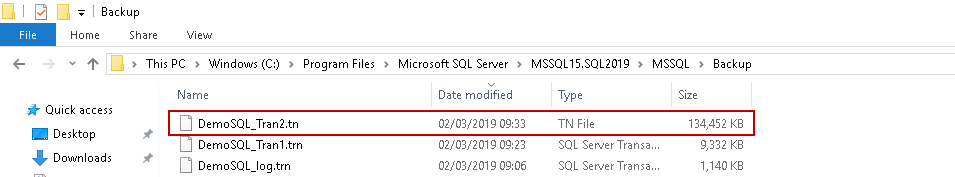

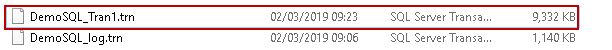

Execute the transaction log backup again. Log backup size is 9.332 KB now. We inserted only 1 record in the FILESTREAM table.

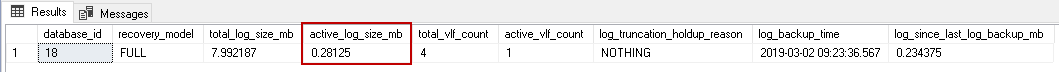

Insert one more record and monitor the transaction log size.

Update the object in FILESTREAM table as well using the following query.

|

1 2 3 4 5 6 7 |

UPDATE [DemoSQL].[dbo].[SQLShack] SET BLOBData = (SELECT * FROM OPENROWSET( BULK 'C:\Users\rajen_000\Pictures\BBQ\DSC_0246.JPG', SINGLE_BLOB) AS Document) WHERE FSUnique = '5E18B530-8A27-48A9-A36C-DF9DBA012319' GO |

After the update also, we have a small active log of 0.296875 MB

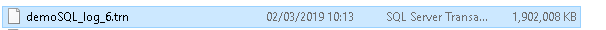

Execute the log backup again and view the transaction log size. In the following image, you can see log backup size increased to 135,452 KB.

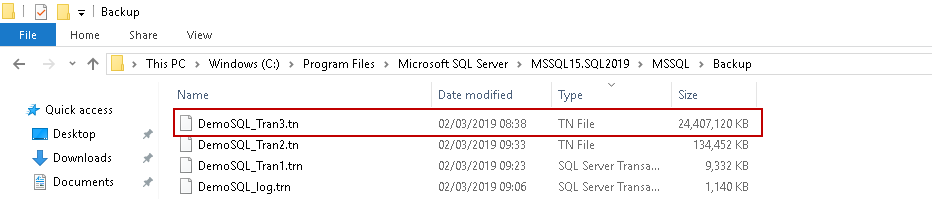

I performed a few more DML command on SQL FILESTREAM table and took the log backup. It was surprising to see the log backup size grown to 23.2 GB.

In the above examples, we observed, using the active log size, that we did not have much transaction log growth but still we have a huge size of log backups. SQL Server does not store the objects for the FILESTREAM database into its relational table. Therefore, it does not require more log size to perform the transactions. SQL Server copies the FILESTREAM files as well in the transaction log backup. It is the reason you might see a huge transaction log backup size. We need to consider below points in transaction log backup for SQL FILESTREAM databases.

- Insert statement: Suppose we are only inserting the objects into the FILESTREAM table, log backup contains the copy of the inserted object

- Update Statement: In case of update objects into FILESTREAM table, log backup contains the copy of the old object and new object as well. If we perform multiple update queries on FILESTREAM table, log backup size might increase significantly

- Delete Statement: In case of delete activity, log backup contains the copy of the deleted object as well

SQL Server removes the files from the FILESTREAM container once the transaction log backup does not require these files. Once we take the log backup, it takes backups of all required files from the FILESTREAM container, and then garbage collector process removes these files from the container.

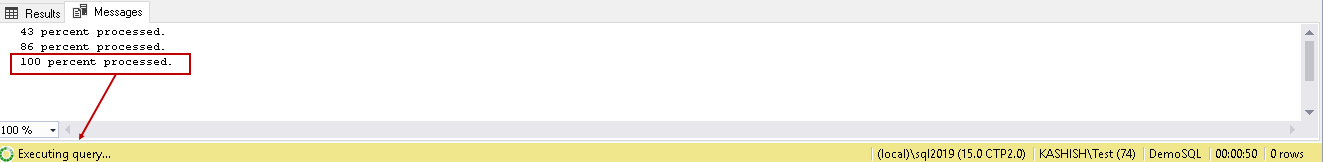

You can notice a considerable time in the log backup progress even after 100 percent processed status. It indicates that SQL Server is copying the files from the FILESTREAM container to log backup file.

Let us examine the log backup file. We can use the RESTORE FILELISTONLY command to get a list of database files included in the backup file.

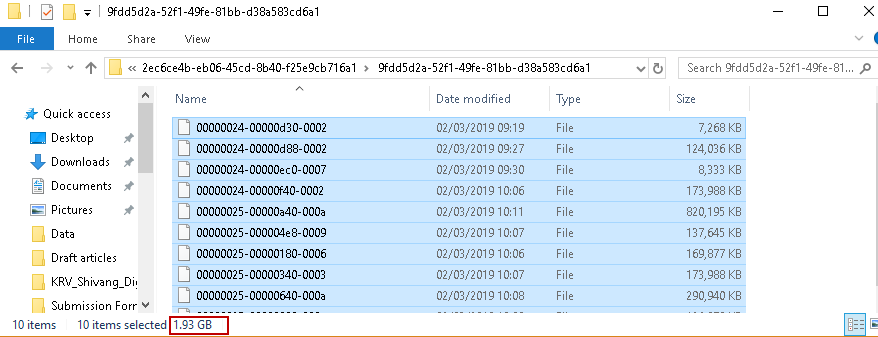

In this example, I am using the below 1.81 GB log backup file.

Execute the following query and observe the output.

|

1 2 3 |

RESTORE FILELISTONLY FROM DISK = N'C:\Program Files\Microsoft SQL Server\MSSQL15.SQL2019\MSSQL\Backup\DemoSQL_Tran6.trn' ; GO |

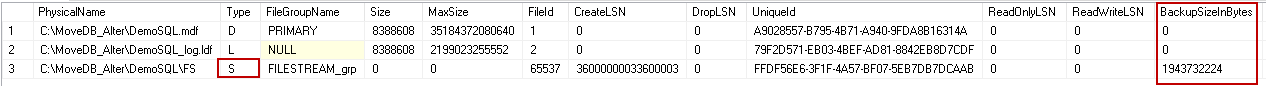

We can observe the following things from the output.

Log backup file contains all DB files

- Primary database file ( Type D)

- Transaction log file ( Type L)

- FILESTREAM container ( Type S)

Look on highlighted column BackupSizeinBytes in the output. We have following files size in this 1.81 GB log backup.

- DemoSQL.mdf backup size 0 bytes

- DemoSQL_log.ldf backup size 0 bytes

- DemoSQL FILESTREAM files backup size 1943732224 bytes i.e. 1.81 GB.

In this case, we have the log backup size(1.81 GB) equivalent to FILESTREAM container size( 1.81 GB) . It proves our point stated earlier that log backup copies the objects from FILESTREAM container into log backup.

We need to note here that SQL Server does not copy the entire files from the FILESTREAM container. You could see a difference in overall FILESTREAM container size and log backup size because it copies only those files that are required for the transaction log backup. In our case, we had FILESTREAM container size 1.93 GB however log backup size 1.81 GB.

Let us explore more on log backups for SQL FILESTREAM database using extended events in SQL Server.

Create an extended event using the following query. In this extended event session, we need to capture the SQL Server backup and restore progress trace sqlserver.backup_restore_progress_trace.

|

1 2 3 4 5 |

CREATE EVENT SESSION [Backup Monitor] ON SERVER ADD EVENT sqlserver.backup_restore_progress_trace( ACTION(sqlserver.client_hostname,sqlserver.database_name,sqlserver.session_nt_username,sqlserver.sql_text)) WITH (MAX_MEMORY=4096 KB,EVENT_RETENTION_MODE=ALLOW_SINGLE_EVENT_LOSS,MAX_DISPATCH_LATENCY=30 SECONDS,MAX_EVENT_SIZE=0 KB,MEMORY_PARTITION_MODE=NONE,TRACK_CAUSALITY=OFF,STARTUP_STATE=OFF) GO |

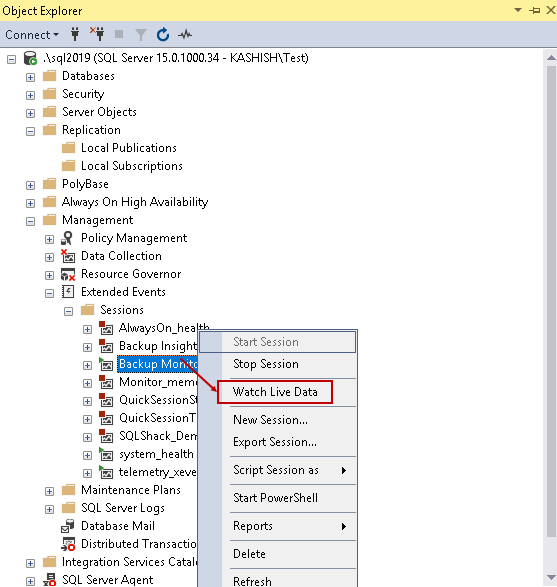

Once we have created the extended event session, right click on it under the Management and Extended Events. Click on Watch Live Data.

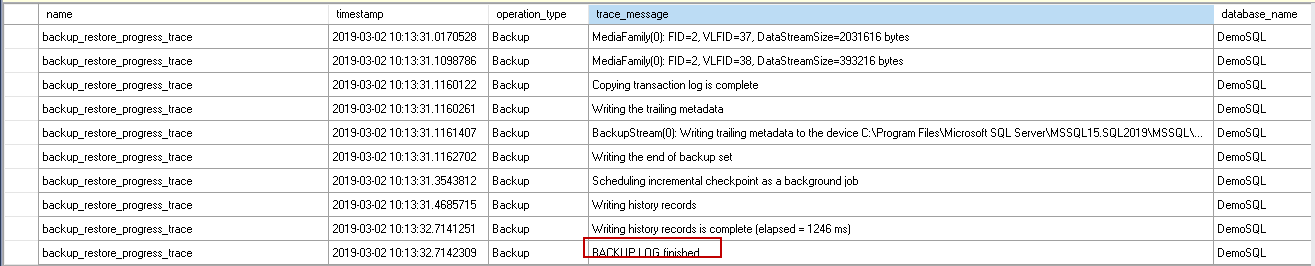

Execute the transaction log backup for FILESTREAM database now and view data in Live Data session window. In the following section, we will explore the output of the extended event session output.

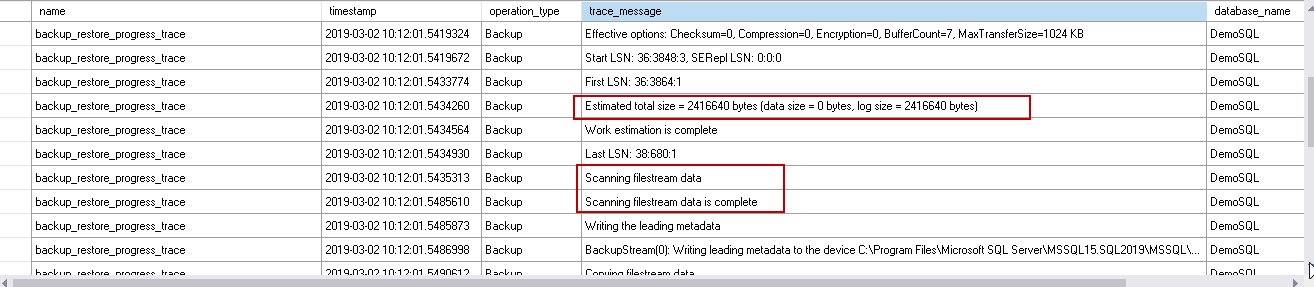

Once we start the log backup process, in the extended event session, we can see a message Backup Log started.

It acquires the required locks on the databases and opens the backup media set to write.

SQL Server performs the estimation of the backup size. If you do not have sufficient free space in the backup drive, you get an error based on this estimated size. You can note the estimated total size is 2416640 bytes, i.e. 2.30 MB. It scans SQL FILESTREAM data after the work estimation. The work estimation size does not include the FILESTREAM container files size required to be backed up.

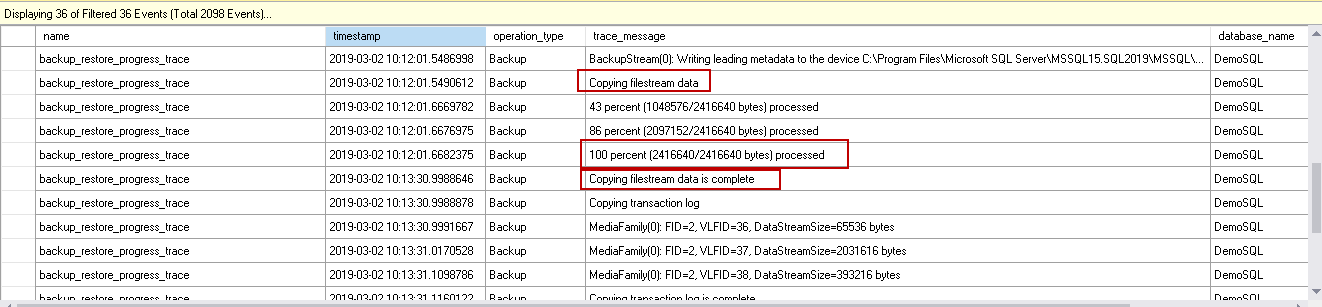

SQL Server starts copying the FILESTREAM data into the log backup. We need to note here that at 100 percent stage, we still have backup size 2416640 bytes, i.e. 2.30 MB.

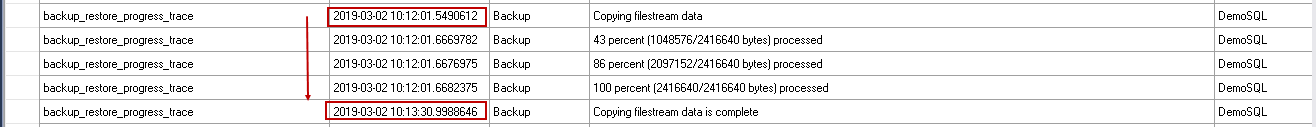

In the following screenshot, we can look at the timings for the FILESTREAM data copy.

- Copying FILESTREAM data: 2019-03-02 10:12:01.5490612

- Finish copying FILESTREAM data: 2019-03-02 10:13:30.9988646

It is the reason you can notice that transaction log backup takes time even after we receive 100 percent processed message. If SQL Server needs to copy large size of FILESTREAM container into log backup, it might take longer to finish the backup.

Once it finishes copying FILESTREAM data, it copies the required transaction log and completes the log backup.

Considerations for log backups in SQL FILESTREAM databases

- Monitor the FILESTREAM database activity in your environment to plan transaction log backups

- You should have sufficient free disk space to accommodate FILESTREAM database log backup

- Keep a watch on the log backup size for FILESTREAM database and take actions accordingly

- Schedule the log backup frequency to a minimum duration to keep log backups size to a minimum size

Table of contents

- Understanding PostgreSQL SUBSTRING function - September 21, 2024

- How to install PostgreSQL on Ubuntu - July 13, 2023

- How to use the CROSSTAB function in PostgreSQL - February 17, 2023