So far, we’ve discussed several phases of backup that starts with planning, creating, strategizing and implementing. In this article, we are going to see how database administrators can define the strategy to improve backup performance and efficiently manage backups in SQL Server 2017. The following are the topics of discussion:

- Discuss checkpoints

- Discuss the enhancements made in the Dynamic Management View (DMV) sys.dm_db_file_space_usage for smart differential backups

- Discuss the enhancements made for the Dynamic Management function (DMF) sys.dm_db_log_stats for smart transactional log backup

- Understand the functioning of smart differential backup and its internals

- Understand the Smart transaction log backup process and its internals

- T-SQL scripts

- And more…

Background

A checkpoint is a background process that writes dirty pages to disk. A checkpoint performs a full scan of the pages in the buffer pool, lists all the dirty pages that are yet to be written to the disk, and finally writes those pages to the disk. In SQL instances that do not have many dirty pages in the buffer pool, this is a trivial operation. However, with the SQL instances that have OLTP databases, use more memory and/or involve sequential scanning of all pages, the performance of the system could be impacted.

With SQL Server 2012, indirect checkpoints were introduced. In this case, the dirty page manager manages the dirty page list and generally keeps tracks of the all the dirty pages modifiers of the database. By default, it runs every 60 seconds and tracks the dirty pages that need to be flushed.

For example, if an indirect checkpoint is set to 120 seconds then individual pages would be flushed around 120 seconds after that they were made dirty. This will impact I/O. I/O also depends on the number of dirty modifiers lists of the database; we may even see a significant spike in the set target interval and see small hits as the pages change. This eliminates overhead to tracing and flushing the dirty pages which results in significant improvement in backup performance. To review further, go through the reference section below to understand more about the checkpoint and backup performance improvement process in detail.

On a database where an automatic checkpoint mode is set, tracing of the entire unit of the buffer pool is necessary to evaluate for possible dirty pages. On the other hand, with an indirect checkpoint, only those pages of the buffer pool that are dirtied have to be dealt with.

For example, let’s consider a system with 2 TB of memory that contains ~250 million buffer units and has 100 dirty pages. Assume that it requires 5 CPU cycles to trace and identify the status of each buffer unit. To traverse 250 million BUFs, it would require 1.25 trillion CPU cycles—and to what? Simply to find 100 positive dirty pages in the automatic checkpoint mode! However, 100 dirty pages are hardly anything with the indirect checkpoint mode. The entire buffer pool scanning time is eliminated.

Smart Differential Backup

Before the advent of SQL Server 2017, to understand how the extents are altered since the last full backup used to be done by querying the DBCC PAGE command. The Differential Changed Map (DCM) is a bitmap pointer referenced by SQL Server to trace the modified pages since the last full backup. It is stored in DCM pages, one per GAM interval. A differential backup process will identify those pages with CHANGED status in the DCM. There are usually three additional extents included in a differential backup.

It’s demo time

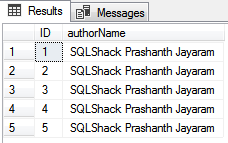

SQLShackAuthorDS is a sample table created with two columns, id and authorname.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

USE SQLShackDemoSBKP GO --Create the sample table CREATE TABLE [dbo].[SQLShackAuthorList]( [ID] [int] IDENTITY(1,1) NOT NULL, [authorName] [varchar](8000) NULL, PRIMARY KEY CLUSTERED ( [ID] ASC ) ) ON [PRIMARY] GO --insert 1000 dummy data INSERT INTO SQLShackAuthorList values('SQL Shack Prashanth') GO 1000 --select the sample data select top 5 * From SQLShackAuthorList |

|

1 2 3 4 5 6 7 8 9 |

--Backup the database BACKUP DATABASE SQLShackDemoSBKP TO DISK = 'F:\PowerSQL\SQLShackDemoSBKP.bak' WITH INIT -- List data and index pages allocated to the SQLShackAuthorsDS table DBCC IND('SQLShackDSDemo',SQLShackAuthorList,-1) GO --set the trace flag DBCC TRACEON(3604) DBCC PAGE('SQLShackDSDemo',1,6,3) WITH TABLERESULTS GO |

DBCC PAGE arguments:

- Database name or database id

- Page file ID: 1

- Page Number: 6; to retrieve DCM page

- Output: 3; retrieve page header and details of every page details for the data interpretation

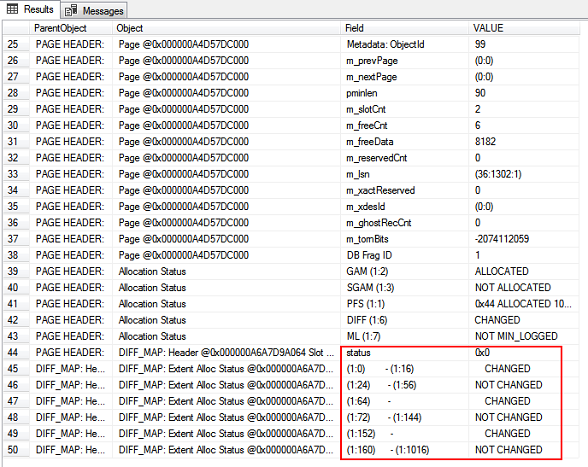

In the DIFF_MAP, we can see the status of DCM pages. The output lists the modified extents since the last full backup. There are currently five modified extents listed—1:0, 1:8, 1:16 1:64, 1:152

Now, let’s go ahead and update the SQLShackAuthorList table with some dummy values for the ids 40,50,60,70 and look for the modified extents

|

1 2 3 4 |

update SQLShackAuthorList SET authorName='SQLShack Prashan Jayaram' where id in(40,50,60,70) DBCC PAGE('SQLShackDSDemo',1,6,3) WITH TABLERESULTS |

We can see that modified extents listed are 1:0, 1:8, 1:16, 1:24, 1:40, 1:64, 1:128, 1:52, and 1:304. Total of 9 extents are modified since the last backup.

Let’s now initiate a differential backup

|

1 |

BACKUP DATABASE SQLShackDemoSBKP TO DISK = 'F:\PowerSQL\SQLShackDSDemo_Diff_1.bak' WITH DIFFERENTIAL; |

Let’s perform the insert operation and identify the modified extents

|

1 2 3 |

Insert into SQLShackAuthorList values('Prashanth Jayaram'+ CAST(NEWID() AS VARCHAR(MAX))) GO 100 DBCC PAGE('SQLShackDSDemo',1,6,3) WITH TABLERESULTS |

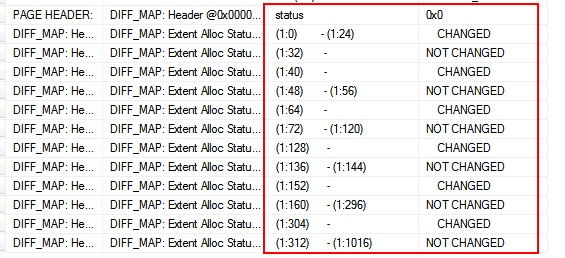

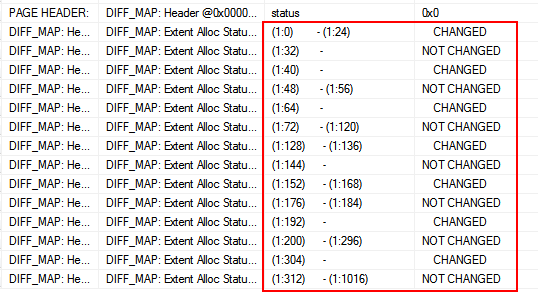

In the output, the listed modified extents are 1:0, 1:8, 1:16, 1:24, 1:40, 1:64, 1:128, 1:36, 1:52, 1:160, 1:68, 1:192, and 1:304. A total of 13 extents are modified since the last full backup.

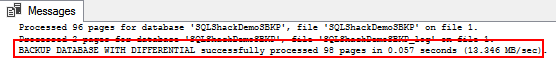

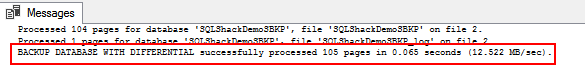

Again, let’s initiate a differential backup. As we can see, there is a total of 105 pages processed during the backup.

|

1 |

BACKUP DATABASE SQLShackDemoSBKP TO DISK = 'F:\PowerSQL\SQLShackDSDemo_Diff_2.bak' WITH DIFFERENTIAL; |

It looks like SQL Server adds three more additional extents than the listed extents in the DCM i.e. 98 pages were processed during the 1st differential backup and 4 extents were modified in the process of the update-and-insert operation. In total, 98+4+3=105 pages, as you can see in the above output. You can reset the DCM by initiating a FULL backup.

This is a hard way to estimate and identify the modified extents prior to SQL Server 2017.

Let’s walk through the details of SQL Server 2017 DMV’s and DMF’s which facilitates an extra column to measure the modified extents in a simpler way.

The DMV sys.dm_db_file_space_usage has a new field called modified_extent_page_count. This column tells us how many pages were changed since the last full backup. The modified page count can be used to track differential changes in the database since the last full backup to decide if a differential backup is beneficial.

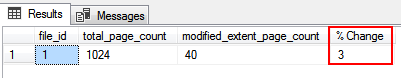

Now, run the following SQL to check how many pages were modified

|

1 2 3 4 5 6 |

SELECT file_id, total_page_count, modified_extent_page_count, (modified_extent_page_count *100) / total_page_count [% Change] FROM sys.dm_db_file_space_usage |

Let’s run the update-and-insert SQL statement and then measure the modified pages

|

1 2 3 4 5 |

UPDATE SQLShackAuthorList SET authorName='SQLShack Prashanth Jayaram' insert into SQLShackAuthorList values('SQL Shack Prashanth Jayaram'+ CAST(NEWID() AS VARCHAR(MAX))) GO 100000 |

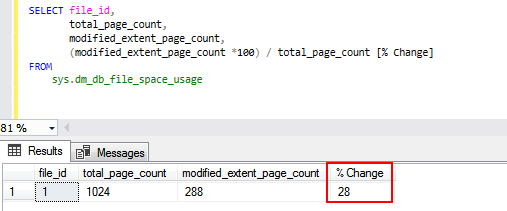

Let’s now check the modified pages by querying sys.dm_db_file_space_usage

We can see that there is a 28% change in the database. This information is very helpful and an important factor in deciding whether to initiate Full or a differential database backup.

Let’s prepare the SQL

The script can be saved as a SQL file and executed over multiple instances using the sqlcmd command.

- Variable declaration, the @fullthreshold and @diffthreshold variables require initial values, which are used to compare and initiate the full or differential backup

- Verify the SQL Server instance version

- Define a cursor to hold a list of active databases in a given instance

- Loop through every database

- Calculate the pages that have changed since last full backup and assign the value to a variable

- Prepare a dynamic SQL to compare and initiate a full or differential backup

- Reset the cursor variables

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 |

--variable declaration DECLARE @ver nvarchar(128), @fullthreshold int=80, @Diffthreshold int=35, @changes numeric(5,2), @sql nvarchar(max), @sSQL nvarchar(max), @rval int, @ParmDefinition nvarchar(max), @dbname NVARCHAR(100), @fPath NVARCHAR(256) , @fName NVARCHAR(256) , @Date VARCHAR(20) , @value varchar(10) --Checking the SQL Server versioni using serverproperty SET @ver = CAST(serverproperty('ProductVersion') AS nvarchar) SET @ver = SUBSTRING(@ver, 1, CHARINDEX('.', @ver) - 1) SET @ParmDefinition = N'@retvalOUT int OUTPUT'; IF ( @ver = '14' ) BEGIN -- backup directory SET @fpath = 'F:\PowerSQL\SmartBackup\' -- filename format SELECT @Date = CONVERT(VARCHAR(20),GETDATE(),112) --Declare the cursor DECLARE database_cursor CURSOR READ_ONLY FOR SELECT name FROM master.sys.databases WHERE name NOT IN ('master','model','msdb','tempdb') and state_Desc='ONLINE' OPEN database_cursor FETCH NEXT FROM database_cursor INTO @dbname WHILE @@FETCH_STATUS = 0 BEGIN SET @fName = @fpath + @dbname + '_' + @date --Calculate the pages that are modified since last full backup and assign it to a variable set @SQL='SELECT @retvalOUT=CAST(ROUND((SUM(modified_extent_page_count)*100.0)/SUM(allocated_extent_page_count),2) as decimal(10,2)) from '+@dbname+'.sys.dm_db_file_space_usage' EXEC sp_executesql @SQL, @ParmDefinition, @retvalOUT=@rval OUTPUT; SELECT @rval; --Initiate FULL or differential backup based on the defined threshold values SET @sSQL= 'IF ('+ cast(@rval as varchar(10))+'>' +cast(@fullthreshold as varchar(10))+') BACKUP DATABASE '+@dbname +' TO DISK ='''+ @fName+'_full.bak''' +CHAR(13) +'ELSE'+CHAR(13)+' BEGIN IF ('+ cast(@rval as varchar(10))+'>' +cast(@Diffthreshold as varchar(10))+' AND ' +cast(@rval as varchar(10))+'<'+ cast(@fullthreshold as varchar(10)) +')'+char(13) +'BACKUP DATABASE '+@dbname +' TO DISK ='''+ @fName+'_Diff.bak'''+' WITH DIFFERENTIAL'+CHAR(13)+ 'END' --Print the SQL print @sSQL --Execute the dynamic SQL EXEC sp_executesql @sSQL FETCH NEXT FROM database_cursor INTO @dbname END CLOSE database_cursor DEALLOCATE database_cursor END |

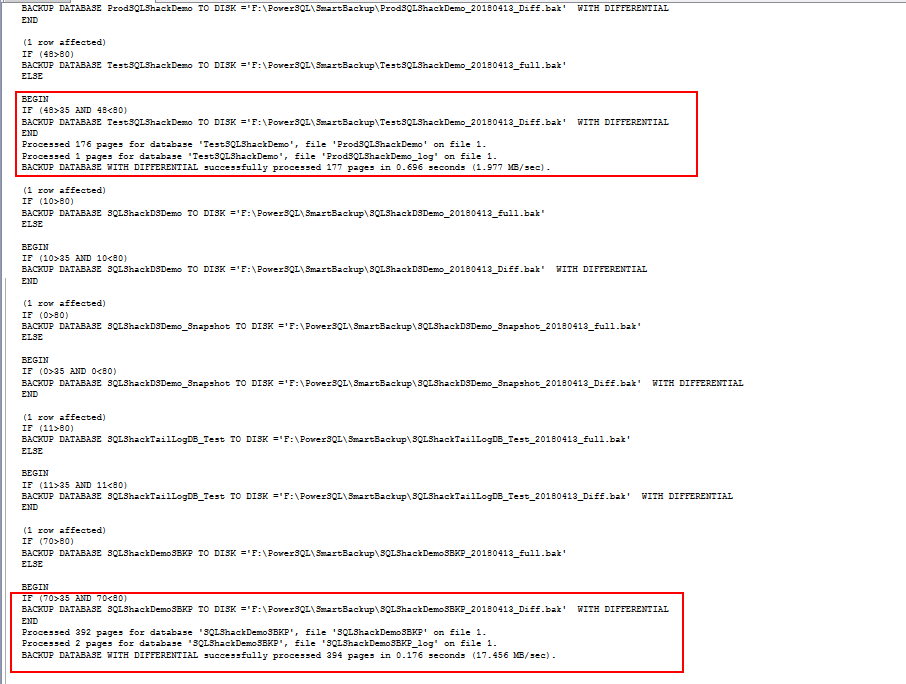

In the following output, we can see that the 48% of the database TestSQLShackDemo has changed and 70% of SQLShackDemoSBKP has changed. This results in initiating a differential backup.

Transaction log backups in SQL Server 2017

In most production environments, setting the right auto-growth value is a challenging and even daunting task. Understanding the VLFs and managing T-log backups is another area where database administrators spend a lot of time analyzing database growth and transaction rates of the database in order to configure the database to perform at an optimal level.

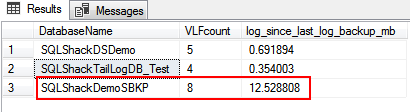

SQL Server 2017 introduces an enhancement to sys.dm_db_log_stats dynamic management function. The function has an output column called log_since_last_log_backup_mb, which has the value that denotes the amount of log data generated since the last transaction log backup. We can develop a procedure based on the value of this column and efficiently use it to initiate a transaction log backup. This facilitates maintaining a consistent log backup size and prevents abrupt database auto-growth due to transactional outbursts. It also helps database administrators to effectively manage the space issues, thereby speeding up the database recovery process.

I recommend reading the article, Transaction Log Physical Architecture to get more insight into the internals of the transaction log file.

|

1 2 3 4 5 6 7 8 9 |

SELECT name AS 'DatabaseName', total_vlf_count AS 'VLFcount' , log_since_last_log_backup_mb FROM sys.databases AS s CROSS APPLY sys.dm_db_log_stats(s.database_id) where s.state_Desc='ONLINE' |

Let’s initiate a transactional log backup for a specific database that has generated a log of 10 -20 MB. In the above output, we can see that SQLShackDemoSBKP has generated 12 MB log data.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 |

--variable declaration DECLARE @ver nvarchar(128), @logthreshold int=10, @log_since_last_log_backup_mb decimal(10,2), @sql nvarchar(max), @sSQL nvarchar(max), @rval int, @ParmDefinition nvarchar(max), @dbname NVARCHAR(100), @fPath NVARCHAR(256) , @fName NVARCHAR(256) , @Date VARCHAR(20) , @value varchar(10) --Checking the SQL Server versioni using serverproperty SET @ver = CAST(serverproperty('ProductVersion') AS nvarchar) SET @ver = SUBSTRING(@ver, 1, CHARINDEX('.', @ver) - 1) SET @ParmDefinition = N'@retvalOUT int OUTPUT'; IF ( @ver = '14' ) BEGIN -- backup directory SET @fpath = 'F:\PowerSQL\SmartBackup\' -- filename format SELECT @Date = CONVERT(VARCHAR(20),GETDATE(),112) --Declare the cursor DECLARE database_cursor CURSOR READ_ONLY FOR SELECT name, convert(decimal(10,2),log_since_last_log_backup_mb) FROM sys.databases AS s CROSS APPLY sys.dm_db_log_stats(s.database_id) where s.state_Desc='ONLINE' and recovery_model_desc='FULL' and name IN ('SQLShackDemoSBKP','SQLShackTailLogDB_Test') OPEN database_cursor FETCH NEXT FROM database_cursor INTO @dbname,@log_since_last_log_backup_mb WHILE @@FETCH_STATUS = 0 BEGIN SET @fName = @fpath + @dbname + '_' + @date --Initiate Log backup based on the defined threshold values SET @sSQL= 'IF ('+ cast(@log_since_last_log_backup_mb as varchar(10)) +'>='+cast(@logthreshold as varchar(10))+') BACKUP log '+@dbname +' TO DISK ='''+ @fName+'_log.bak''' --Print the SQL print @sSQL --Execute the dynamic SQL EXEC sp_executesql @sSQL FETCH NEXT FROM database_cursor INTO @dbname, @log_since_last_log_backup_mb END CLOSE database_cursor DEALLOCATE database_cursor END |

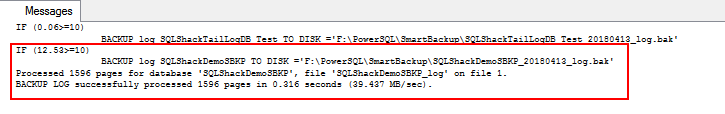

After executing the above SQL, we can see that a T-log backup has been initiated on SQLShackDemoSBKP. We can schedule the script and initiate the backup according to the defined threshold conditions.

Wrapping up

In this article we walked through the concept of the checkpoint and the impact of implementing indirect checkpoints and why indirect checkpoints are recommended on systems with larger memory footprints.

We also demonstrated the interesting and useful enhancements with DMV and DMF for efficiently managing differential and transactional log backups.

T-SQL scripts were made available and can be easily customized for an SQL Server 2017 environment.

All in all, SQL Server 2017 provides many features, of which my favorites are handling smart backups, which is a really intuitive feature.

Table of contents

References

- SQL 2016 – it just runs faster: indirect Checkpoint Default

- How we made backups faster with SQL Server 2017

- Smart Transaction log backup, monitoring and diagnostics with SQL Server 2017

- Stairway to SQL essentials - April 7, 2021

- A quick overview of database audit in SQL - January 28, 2021

- How to set up Azure Data Sync between Azure SQL databases and on-premises SQL Server - January 20, 2021