In the previous articles of this series on the SQL Server Transaction Log, we discussed the importance of the SQL Server Transaction Log and the role that it plays in maintaining the database consistency, by ensuring that the committing transactions data will be preserved and the failed transaction will be rolled back. It also helps to recover the database to a specific point in time in case of system or hardware failure. This is achieved by writing a log record to the SQL transaction log file before writing the data pages to the physical data file, using Write-ahead Logging process.

In addition, we discussed deeply the three Full, Simple and Bulk-Logged recovery models of the database, that specifies how these transactions will be logged and saved to the SQL Server transaction log file. In this article, we will see how to monitor the SQL Server Transaction Log growth and how to manage that growth.

SQL Transaction Log Growth

The SQL Server Database Engine writes a log record for every single operation in the database, that includes starting or ending a SQL transaction when a data modification process is performed, when a database table or index is created or dropped, and after each page allocation or deallocation operation. In the heavily transactional systems with excessive logging operations, the SQL Server Transaction Log file will grow rapidly, until it reaches its maximum size, generating error number 9002. And if the auto-growth option is enabled, the underlying disk drive will run out of free space.

Before going through the mechanisms that can be used to monitor and manage SQL Server Transaction Log growth, let us discuss the excessive logging operations that may lead the SQL Transaction Log file to grow rapidly and the operations that lock the transaction and prevent it from being reused by other transactions.

Index Rebuild and Reorganize

SQL Server index rebuild operation is a fully logged operations that use the Transaction Log excessively, especially when the recovery model of the database is FULL. It runs by default in a single long-running transaction, that prevents the Transaction Log space reuse while rebuilding a large index. If the recovery model of the database is changed to Simple or Bulk-Logged, the index rebuild operation will be a minimally logging operation.

The case in the index reorganizes operation is not the same. The index reorganizes operation is always fully logged, regardless of the recovery model setting of the database. You can imagine the SQL Transaction Log space that is required to reorganize a large index that is heavily fragmented. In all cases, reorganizing an index with a specific size requires less log space than rebuilding the same index.

SQL Transaction Log Backup

When the recovery model of the database is FULL or Bulk-Logged, which is the default recovery model of all newly created databases, unless you change the recovery model setting in the model system database, the inactive part of the SQL Server Transaction Log file that is waiting for truncation will not be truncated automatically unless a Transaction Log backup is performed on that database to explicitly truncate that part of the log. It is a common mistake to configure only Full backup plan on the database with the Full recovery model, as the Full backup process will not truncate the SQL transaction log file and make it available for reuse. In this case, the log file will grow continuously, without truncation, until it runs out of free space.

Active Transactions

A SQL Server Transaction is a single unit of work consists of multiple related tasks that should succeed or fail as one atomic unit. A long-running or uncommitted transaction, such as archiving and purging transactions, delays the truncation of VLFs that contain the log records related to that active transaction, even when the recovery model of the database is Simple, due to the fact that the VLF will not be truncated if it contains any active log record.

To overcome the uncommitted transaction issue, make sure to write complete transactions that are handled in case of success or failure and monitor the orphaned distributed transactions that stuck without being committed. On the other hand, you can overcome the long-running transaction issue by breaking down the long transaction into smaller transactions that work in batches. For example, you can put the DELETE statement inside a loop, that keeps searching for old data and delete it in batches.

SQL Server Replication and Change Data Capture

SQL Server Replication is a database level, high availability solution consists of a publisher server, that distributes a list of database tables to one or more subscriber servers, used for read-only reporting operations. On the other hand, Change Data Capture is a SQL Server feature used to track and capture the DML changes that are performed on the SQL Server database tables.

What is common between these two features is that both features depend on the log reader agent to read the active part of the SQL Server Transaction Log associated with the changes to be replicated. These SQL Transaction Log records will be in pending replication state until these tracked changes are replicated to the subscriber database, in case of replication, or to the tracking tables in case of CDC.

If the subscriber database is not reachable, the SQL Server Agent jobs is in stop state, the Agent jobs associated with the replication process is not working or simply the log agent reader activities are slow, all SQL Server Transaction Log records that are not replicated yet will stay part of the active log in pending replication state. Again, having these log records in an active state for a long time will prevent the VLFs that contain these log records from being truncated, causing the Transaction Log file to grow continuously until it runs out of free space.

You can troubleshoot this issue by making sure that the SQL Server Agent Service is running, the Agent jobs associated with the replication and CDC are running and finally that the subscriber server is reachable.

Database Mirroring and Always on Availability Group

SQL Server Database Mirroring is a database level high availability solution, that consists of a principal server that sends the active Transaction Log records to the mirror database in the mirror server then redoes these changes on the mirror database. On the other hand, the SQL Server Always on Availability Group feature, is a database level high availability solution, that consists of a primary server, that sends the transaction log records from the primary databases to the secondary database, to be written in the database transaction log file first for caching, and reflect the changes later to the database.

If the Database Mirroring or the Always on Availability Group is configured to work in synchronous mode, the transaction will be committed only when the SQL Server transaction log is written to the secondary server. When working in asynchronous mode, the transaction will be committed without waiting for it to be committed in the secondary site. But in all cases, the log record will not be marked as inactive until it is copied to the secondary site.

If the mirrored server or secondary replica is not reachable, the SQL Transaction Log records in the primary site that are not copied to the secondary site yet will remain part of the active log that cannot be truncated. After a long time of disconnection, the number of SQL transaction logs pending the mirroring or the availability replica will increase, leading the SQL Server Transaction Log file to run out of free space due to not being able to reuse the log space.

To overcome this issue, make sure that you troubleshoot and fix the connectivity issue between the replicas, or the issue that caused the mirroring or the availability group sites to fail as soon as possible, in order not to have a large number of records pending in the primary site.

Long Running Backup

When a database Full or Differential backup operation is running, SQL Server Transaction Log truncation will be delayed, in order to include the active portion of the Transaction Log in that backup. If this backup operation keeps running for a long time, the SQL Transaction Log truncation will be delayed for a longer time and the SQL Transaction Log file will grow, due to not being able to reuse the inactive part of the log.

You need to troubleshoot the main cause behind running the log operation for a long time, such as the internal blocking process or a performance issue on the underlying disk I/O system to overcome that log growth.

Troubleshoot Log Growth

When the SQL Server Transaction Log file of the database runs out of free space, you need first to verify the Transaction Log file size settings and check if it is possible to extend the log file size. If you are not able to extend the log file size and the database recovery model is Full, you can force the log truncation by changing it to Simple recovery model. If the database recovery model is already Simple or changing it to Simple recovery model is not applicable, you need to identify what is preventing the SQL Server Transaction Log from being truncated.

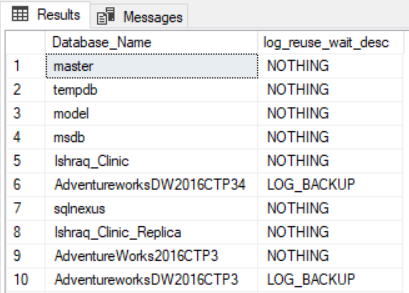

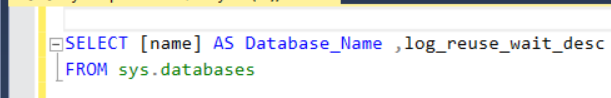

The sys.databases system catalog view can be used to retrieve useful information about the reason behind preventing the SQL Transaction Log file from being truncated, under the log_reuse_wait_desc column, as in the query below:

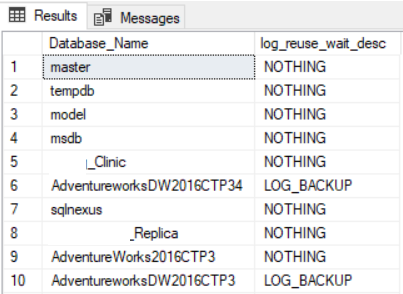

The result returned from the previous query will be like the below:

The log_reuse_wait_desc column value that indicates a healthy state for the database Transaction Log file is NOTHING, which means that the SQL Transaction Log is truncated and reusable. Other values that may return from the sys.databases in the log_reuse_wait_desc column, that shows what is preventing the SQL Transaction Log from being truncated, include:

- CHECKPOINT

- LOG_BACKUP

- ACTIVE_BACKUP_OR_RESTORE

- ACTIVE_TRANSACTION

- DATABASE_MIRRORING

- REPLICATION

- DATABASE_SNAPSHOT_CREATION

- LOG_SCAN

- AVAILABILITY_REPLICA

After identifying the reason behind preventing the SQL Transaction Log from being truncated, you can troubleshoot that blocker as discussed earlier in this article. For more information about managing the SQL Transaction Log, check Managing SQL Server transaction logs.

Monitoring SQL Server Transaction Log File

It is always better to be a proactive database administrator and keep an eye on the SQL Server Transaction Log file growth, in order to prevent catastrophic issues when having the log file running out of free space for a long time. Rather than sleeping beside the server, you can use a monitoring tool such as the System Center Operations Manager (SCOM) tool, Performance Monitor counters, or simply create an alert that reads from one of the system catalog views and notify an operator by email when the free space of the SQL Transaction Log file becomes under a predefined threshold.

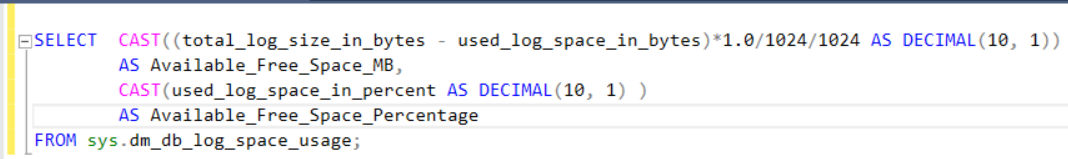

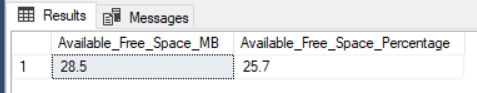

sys.dm_db_log_space_usage is a dynamic management view, introduced in SQL Server 2012, that is used to return space usage information for the transaction log. The below query can be used to check the free space percentage in the SQL Transaction Log file of the current database:

And the result will be like:

If the result returned from the previous query falls down a predefined threshold, before running the SQL Server Transaction Log file out of free space, the DBA will be notified by an email, SMS or call based on the monitoring tool used in your entity.

Summary

In the next article of this series, we will discuss the different operations that can be performed on the SQL Transaction Log including the back, truncate and shrink operations, and make it easier for the reader to identify one from the other. Stay tuned!

Table of contents

- Azure Data Factory Interview Questions and Answers - February 11, 2021

- How to monitor Azure Data Factory - January 15, 2021

- Using Source Control in Azure Data Factory - January 12, 2021