In this article, we will continue our journey to configuring a SQL Server AlwaysOn High availability configuration and failover nodes, by setting up iSCSI including an iSCSI initiator, setting up disk drives on notes, configuring our Quorum and finally installing the SQL Server cluster.

iSCSI Installation

iSCSI is storage protocol which creates storage to the client node and domain controller.

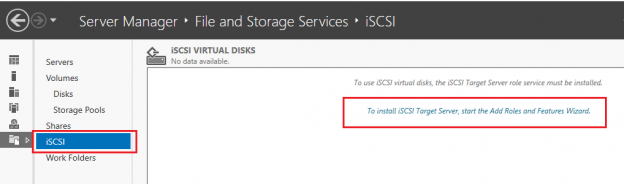

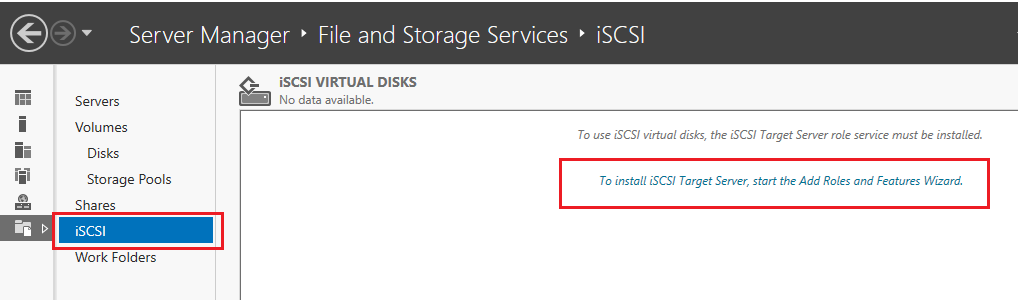

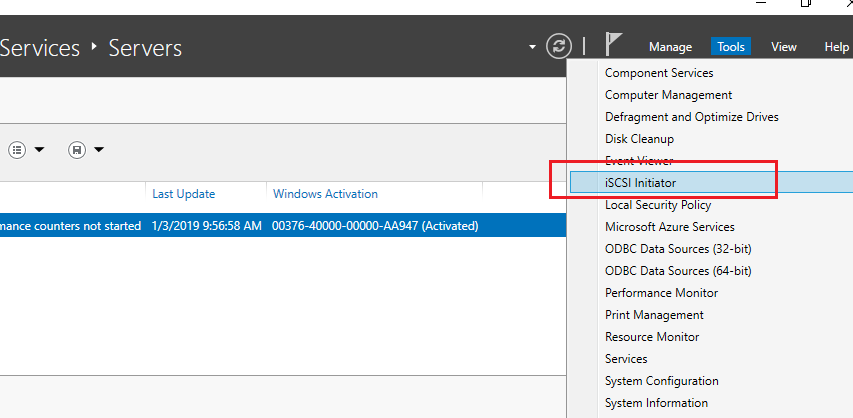

To install Go to Server manager > Tools > iSCSI

Click on To install iSCSI target server, start the Add Roles and Features wizard

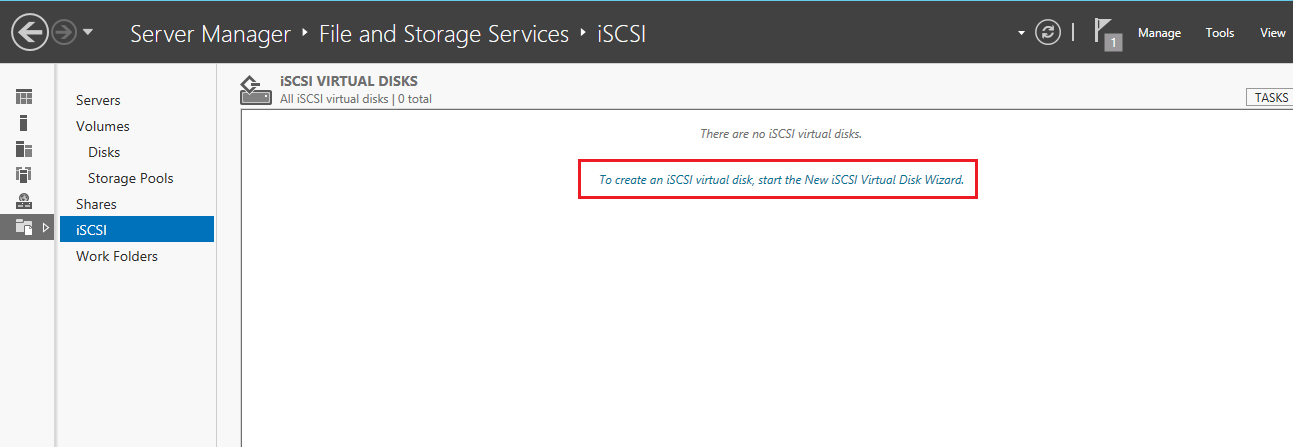

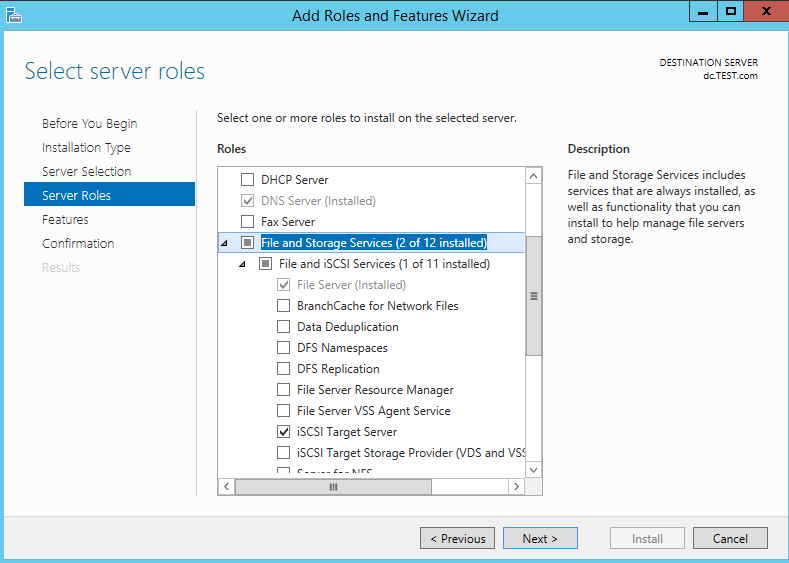

Click Next and Install. Once these features are installed, we will create iSCSI disk. We are creating these disks on the domain controller, but we can also create these disks on other nodes. If, however, the node server goes down, then the iSCSI configuration will also be down, so we will create a virtual disk configuration on the domain controller and other nodes which are configured as targets.

Once we installed the iSCSI feature, it will get back to the main iSCSI page as below.

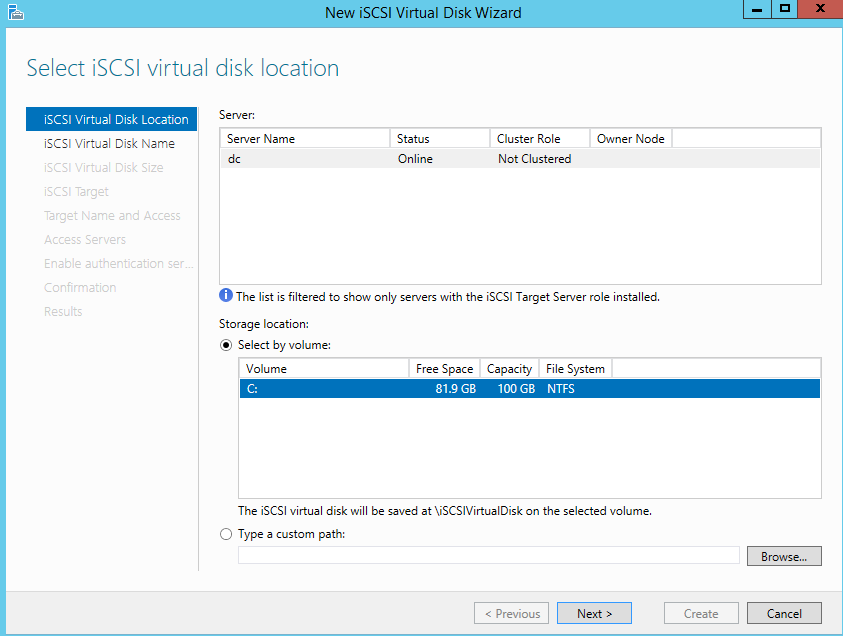

As shown in the following fig. we will use the C drive to configure as the Target disk. Click Next

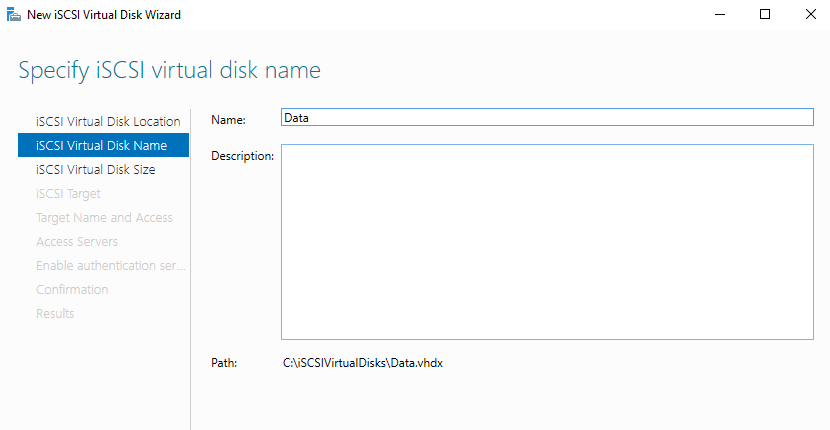

In the following screen, we need to specify the disk name. We can create a separate disk for Data and log. We will create a disk now for Data drive. we will specify a name like “data” and It will get saved in following the mentioned path.

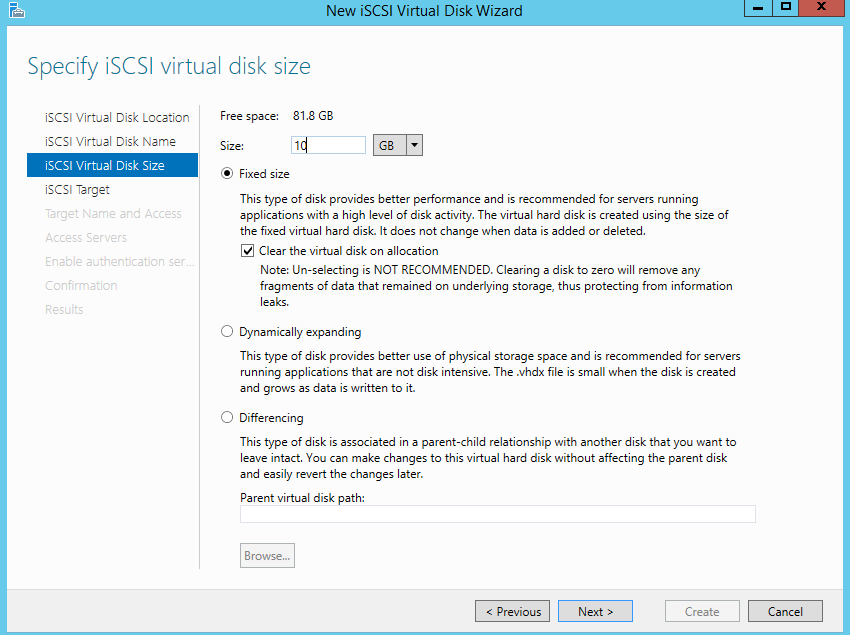

In the following screen, we will specify a size for each disk. The total free space is 81.8 GB and out of that I am allocating 10 GB for Data for demo purpose. You can provide a size as per your local requirements.

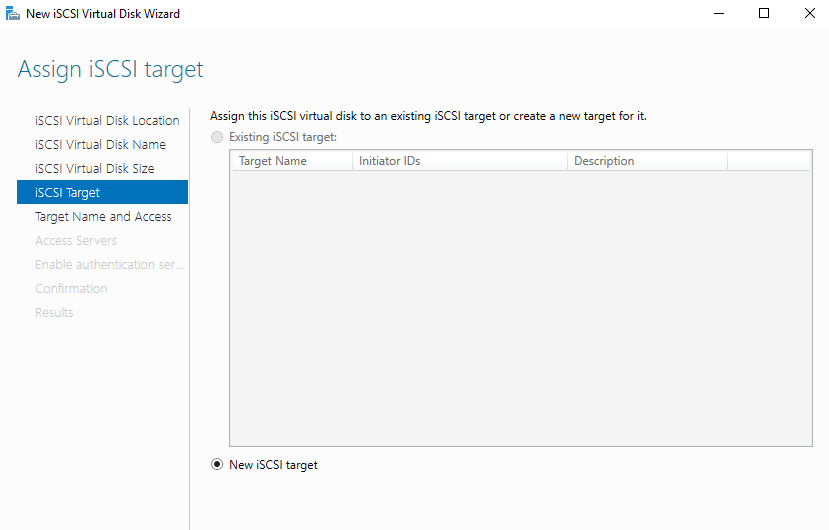

Select the default option New iSCSI target as shown below and click Next

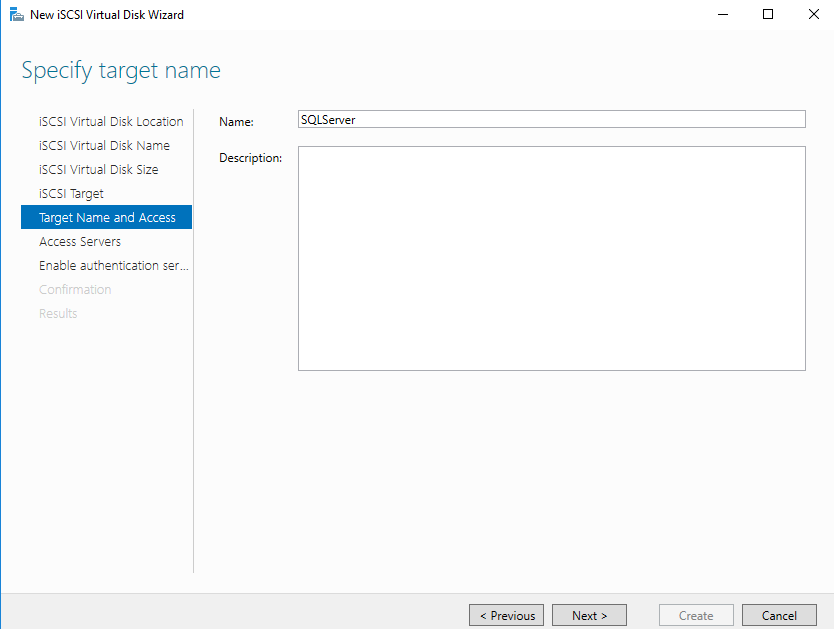

Specify the Target name and (optionally) a description and click Next

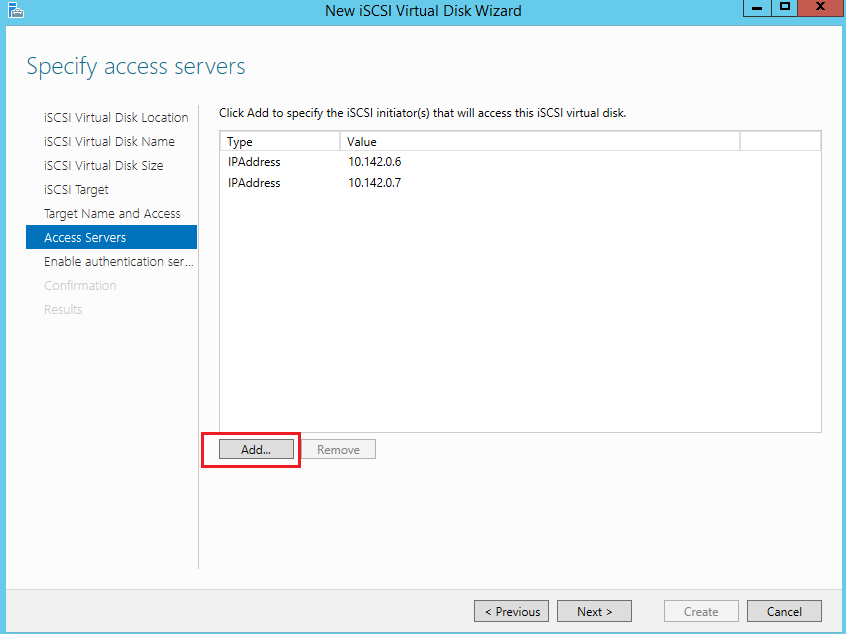

In the following screen, we will set-up an iSCSI initiator by assigning a private IP of a node server.

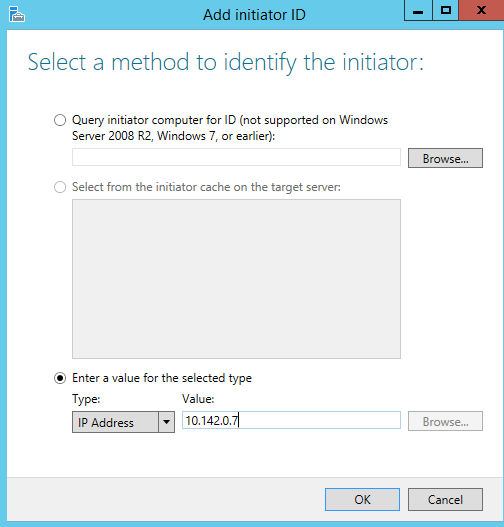

To add a node server to create the iSCSI initiator, click Add. Then when the Add iCSCI initiator dialog appears, we’ll need to select a method to identify the iSCSI initiator. To do this select Enter a value for a selected type, with Type as an IP and specify internal IP of node server SQL1 and SQL 2 in value field as shown in the following fig.

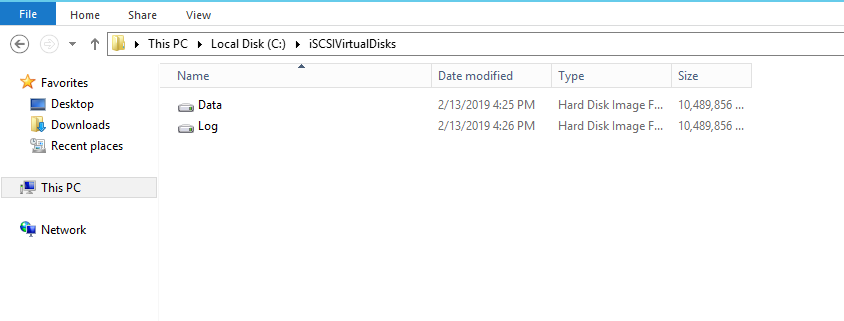

We can also verify disk path in C drive for the Data file, which we created while disk configuration step. Create the same iSCSI drive for a Log file, follow the same process to configure Log drive.

Setting up an iSCSI Initiator

Log in to each node and set-up the iSCSI Initiator in the server manager to start the iSCSI service. When we click iSCSI initiator, we will get pop-up message so click Yes to start. We need to repeat the same process to start the service on the other nodes as well.

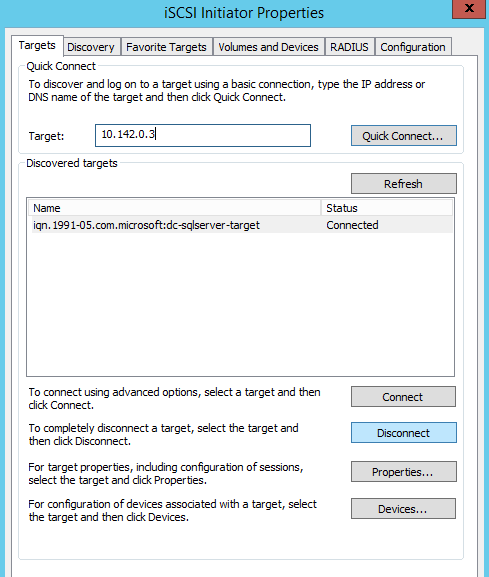

In the iSCSI Initiator properties dialog, we need to specify the Internal IP of the domain controller where disks are located and click Connect. Repeat the same process to specify the IP address of the domain controller to create an iSCSI initiator for the other nodes.

Setting up Disk drives on Nodes

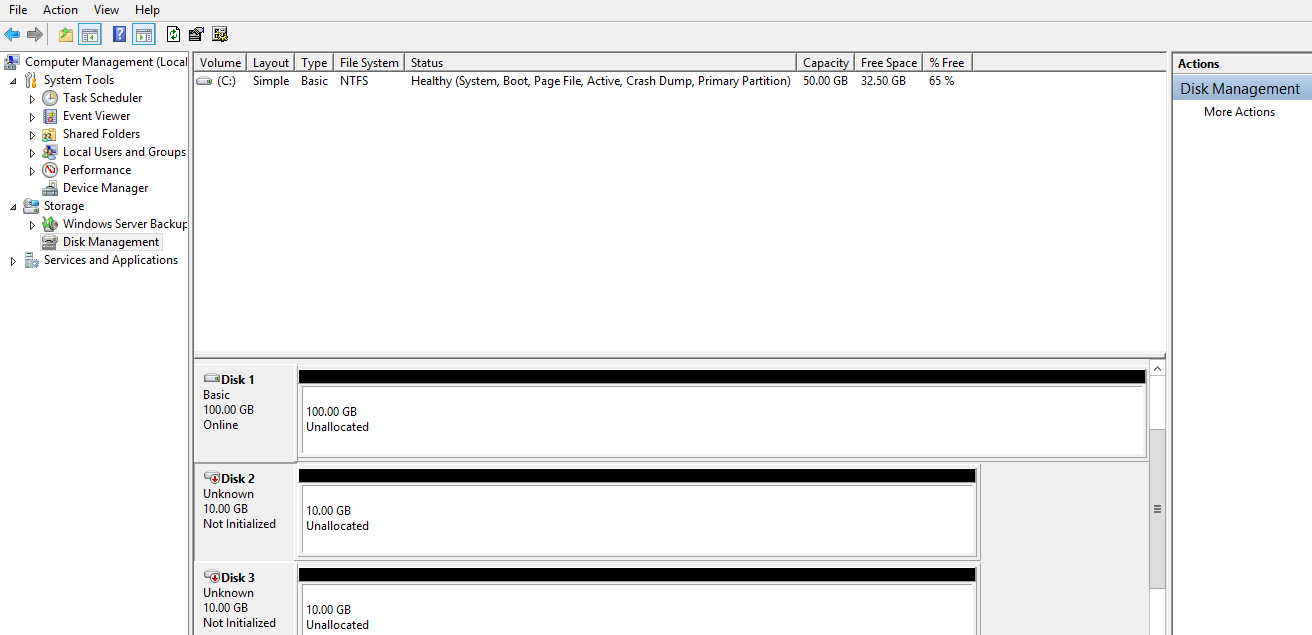

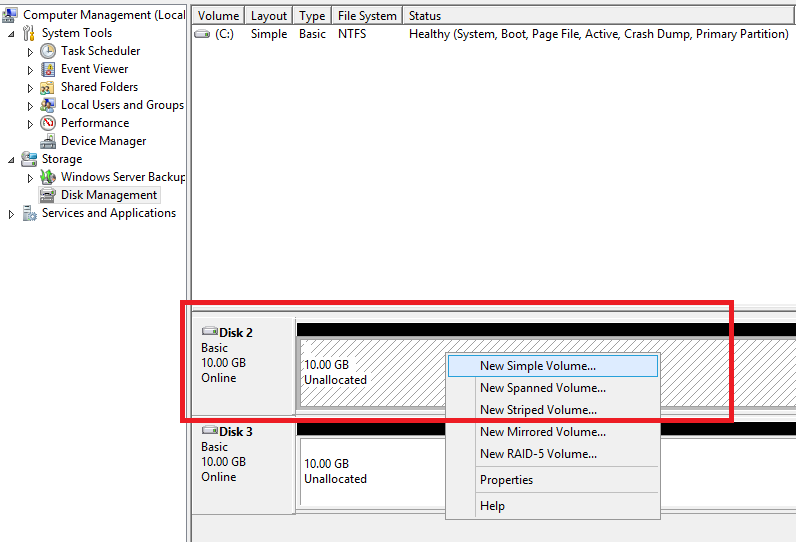

In this step, we will need to bring the iSCSI targets disk which we created previously online, log in to each node and Go to Disk Management setting as follows.

Right-click on the unallocated drive and click New Simple volume which will open the disk configure wizard. Specify a name of the drive, letter of a drive, space we need to allocate, and once configured, it will bring it online and it will be available for use.

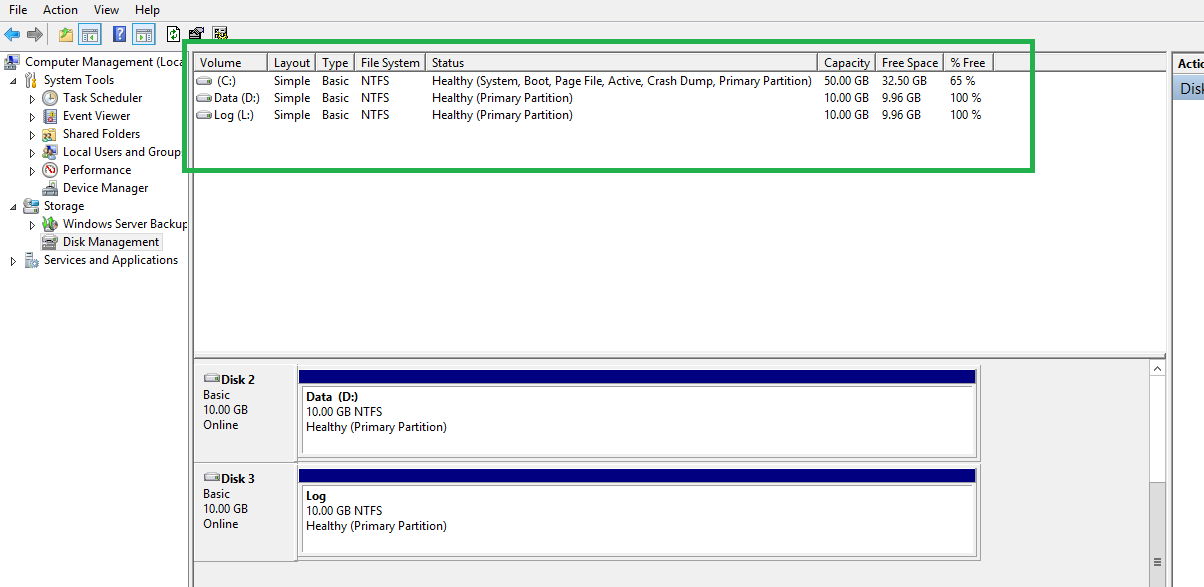

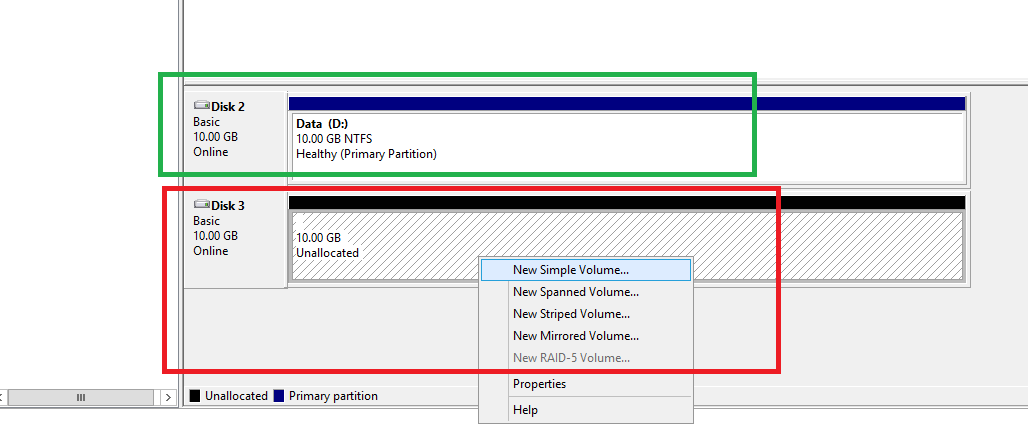

As we can see, the Data drive is brought online with 10 GB, the size which we specified in the iSCSI disk configuration. We will repeat the same process for other unallocated drives.

Quorum Configuration

Quorum configuration is another important step in the cluster environment as it it allows the cluster to keep running even nodes may be down in the failover cluster.

In the failover cluster, we have multiple nodes. If we have a two-node cluster and both node servers are at different data centers locations, and the network is down in data centers, the nodes will not able to communicate with each other. When they are not able to communicate, each node will think the another node is not available, so each node will take responsibility and become the primary server

As both nodes simultaneously self-configure primary, this causes the cluster to split into two parts. As both nodes are working, it creates a data loss situation. This is commonly referred to as a “Split-brain” situation.

To prevent such a split-brain situation, the Quorum model which implements a voting system in cluster nodes of the cluster resource, was introduced.

There are four quorum models in the cluster

- Node Majority – Each node has a vote. This is suitable for an Odd number of nodes

- Node and Disk Majority – This is suitable for an even number of nodes. The disk provides a vote to get a majority of votes

- Node and File Share Majority – This is the same as Node and Disk Majority, only a file share used instead

- No Majority – In this model, Disk is enough to form the quorum

If a Quorum is not available, and some nodes are down, the cluster will not run, and it will go down. The quorum will keep running the cluster based on the majority of votes in the group. Let’s say we have three nodes in cluster SQL1, SQL2, and SQL3. This means we have an odd number of clusters and each node will get one vote. In case any node failed then we will have two votes. So out of three votes, the group got two votes which represents the majority. so the failover cluster will keep running even if one node failed.

Let’s say, we have a two-node cluster, SQL1 and SQL2, which is an even numbered node cluster, and one of the nodes, SQL2, fails. In this case, we will have only one vote for SQL1, which is not a majority to keep the cluster running, so in this case the disk will take precedence, providing one more vote to provide majority. So, in this case, SQL1 and the disk will together provide two votes which form the majority, so in this way the quorum will keep cluster running. This is referred to as Node and Disk Majority.

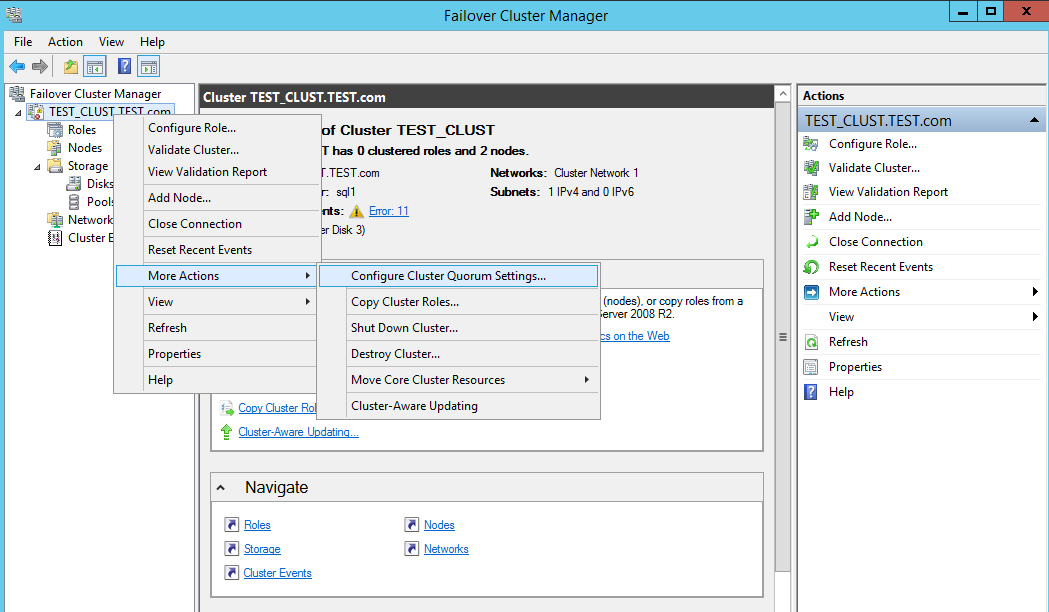

To set-up to Failover cluster > Right Click > More actions > Configure cluster Quorum settings.

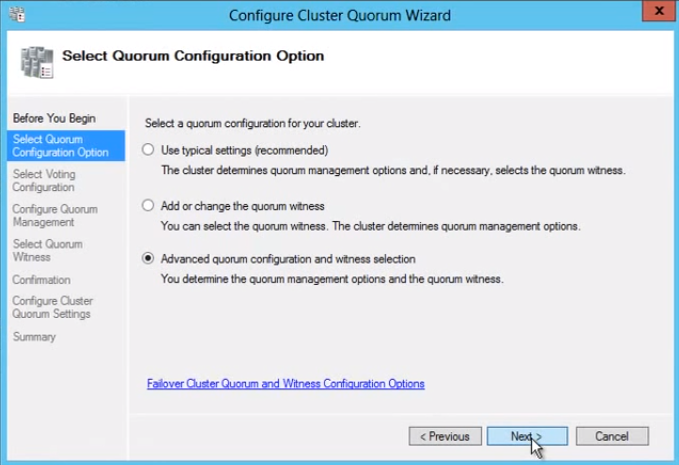

Go to Failover Cluster name > More setting > Select Quorum configuration option as following Fig

Select Advanced quorum configuration and witness selection, in this option, we will decide the quorum management option and witness option settings.

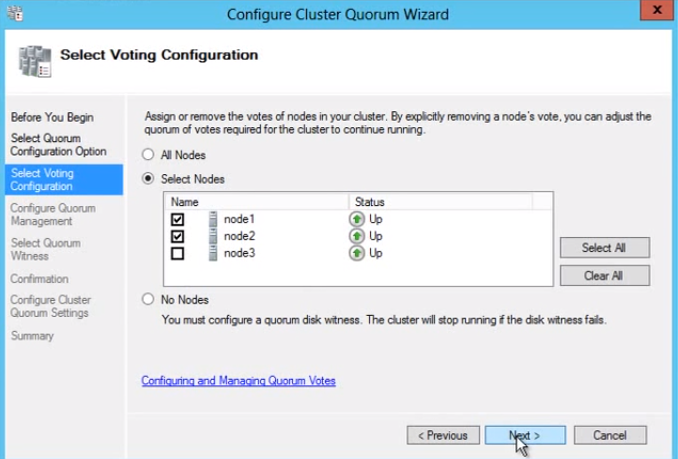

In the voting configuration, we need to select nodes which assign votes.

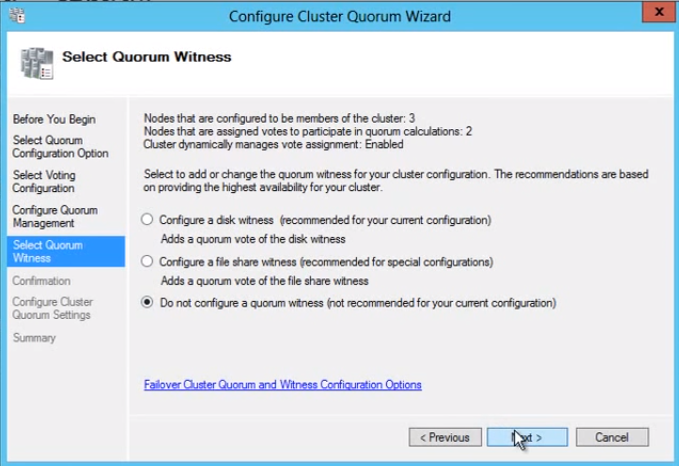

In the witness selection, we will select as a disk witness as we have even number of nodes, so the disk witness will participate in voting and provides vote majority.

In this section, we will select disk a drive for the disk witness

SQL Server Cluster Installation

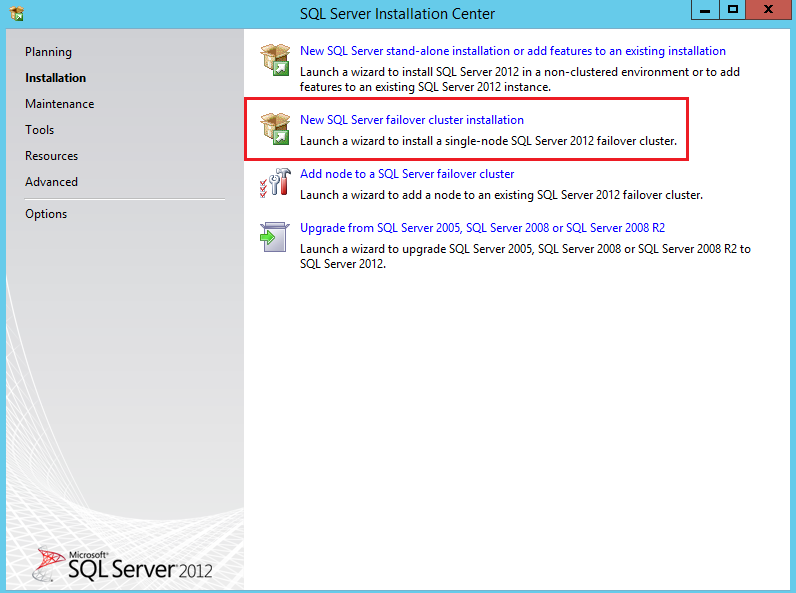

I am going to use SQL Server 2012 SP1 for SQL Server cluster installation. Please note that the SQL Cluster installation is different than traditional SQL Server installation.

Normally, we would Install using first option New SQL Server stand-alone installation, but in a cluster installation, we need to select New SQL Server failover cluster installation as follows.

Click Next and perform few steps like accept terms and condition, select update window, Let the complete process till next window.

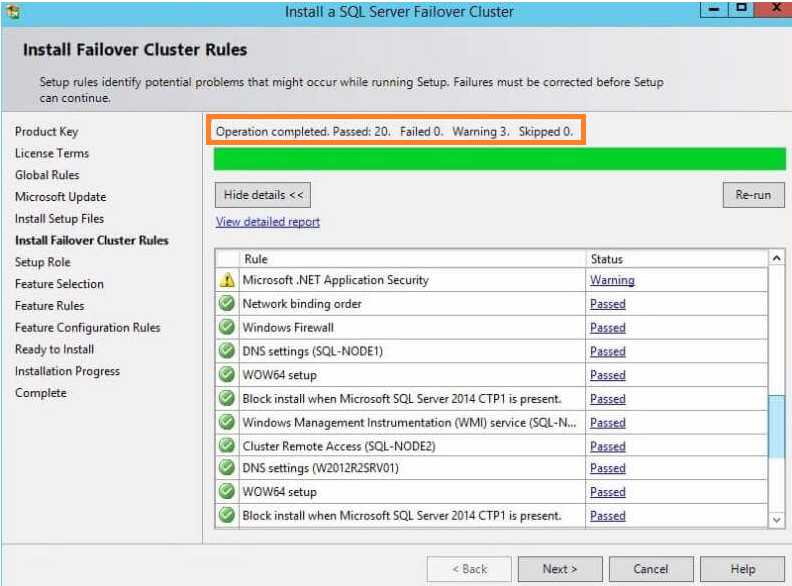

At the Install failover Cluster rules step, all operations completed, at this step, we may get an error for cluster service, WMI service not running so ensure those services are running on SQL nodes.

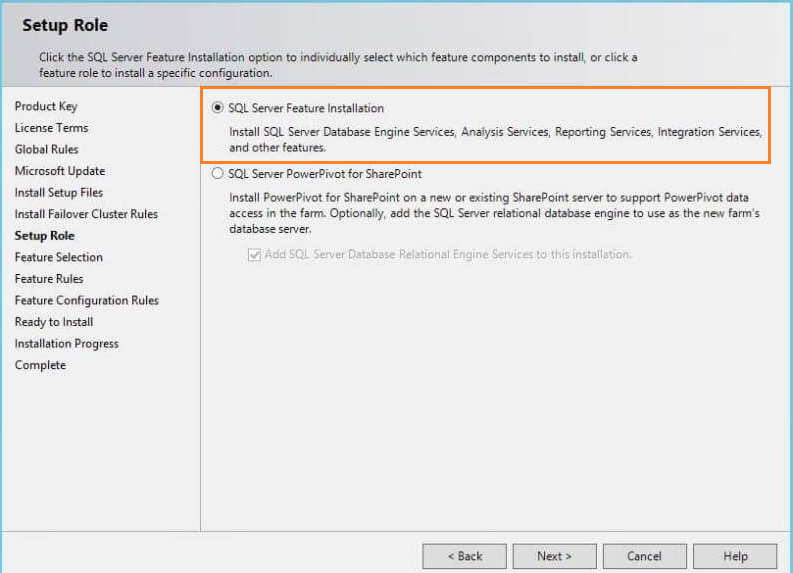

At Setup Roles step, Select SQL Server Feature Installation, in which we need to perform the installation as per feature based like SQL Server reporting services, SQL Server analysis services, SQL Server Integration services etc.

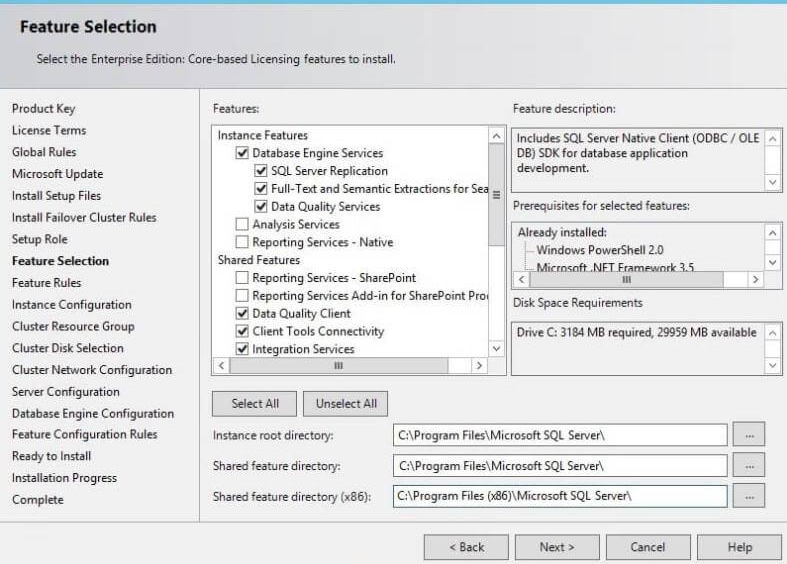

At the Feature selection step, we will select features as per our need and then click Next

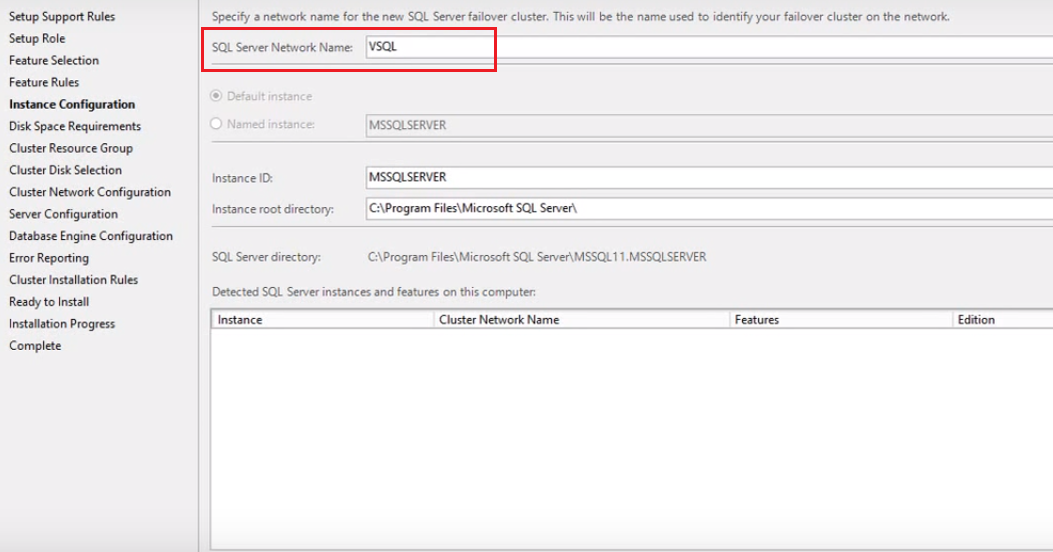

In the Instance Configuration step, a network name for the new SQL Server failover cluster is required

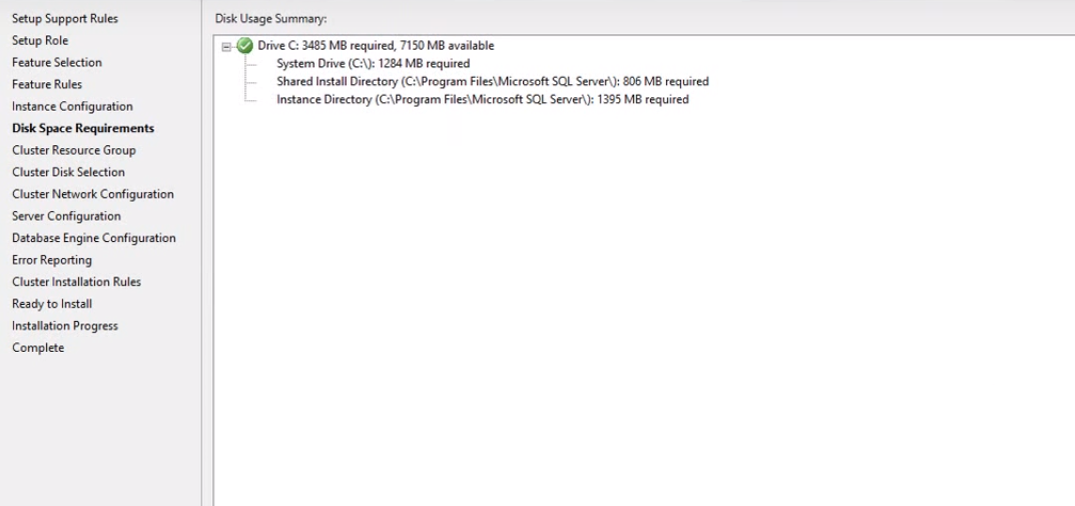

In the Disk space requirement step, the SQL server installer specifies disk drive size requirement. If enough space is not available, then it will suggest a minimum amount of drive space required to proceed with the installation.

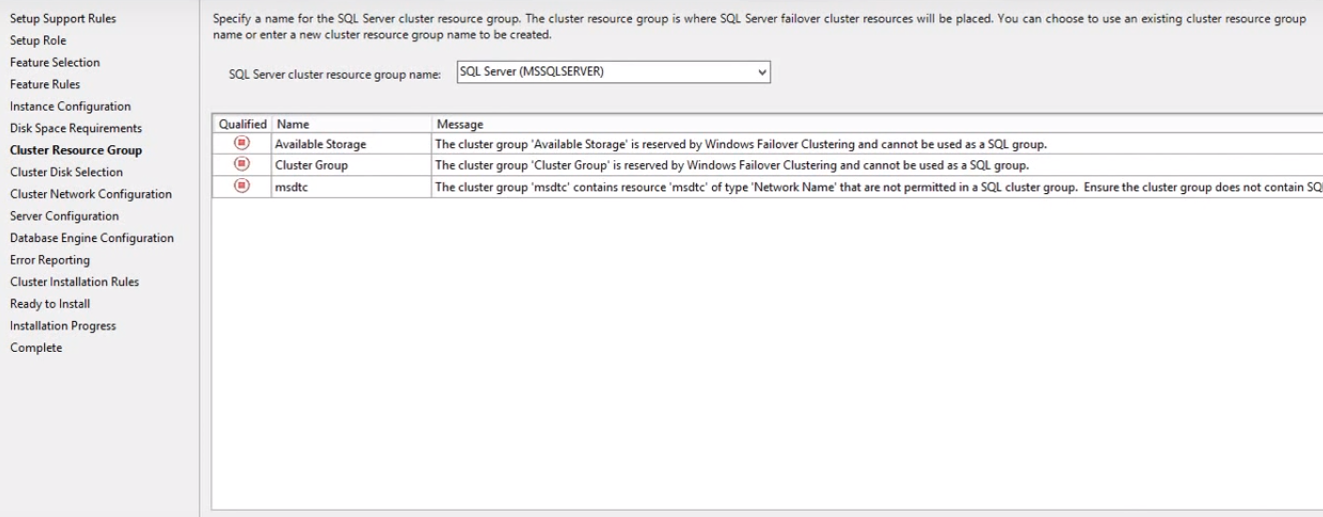

In the Cluster Resource Group step, SQL Server resources placed on disk. We can specify an existing group name or specify a new group name.

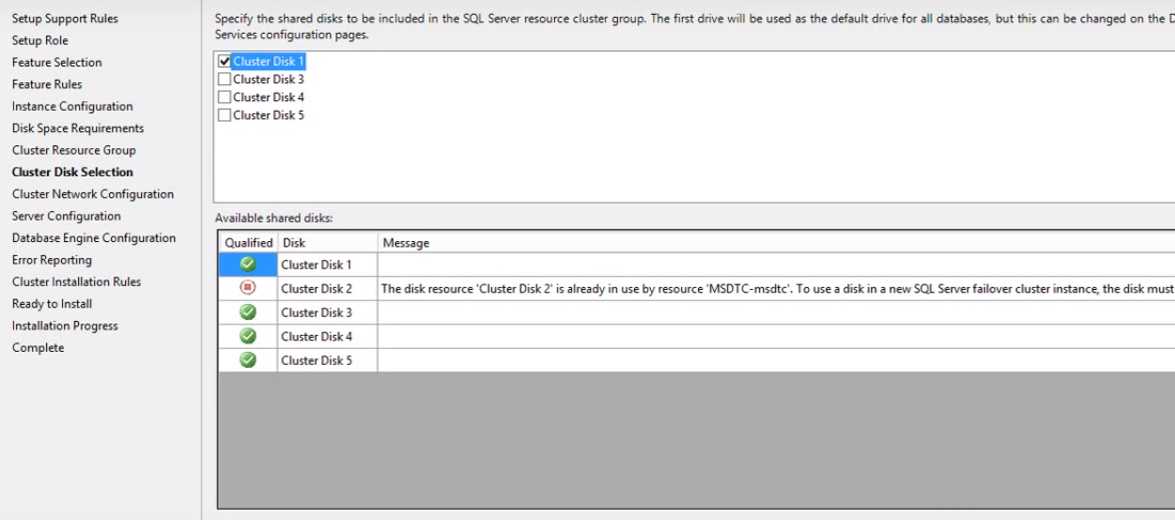

In the Cluster Disk selection step, we need to select a shared disk which used in the SQL Server cluster group.

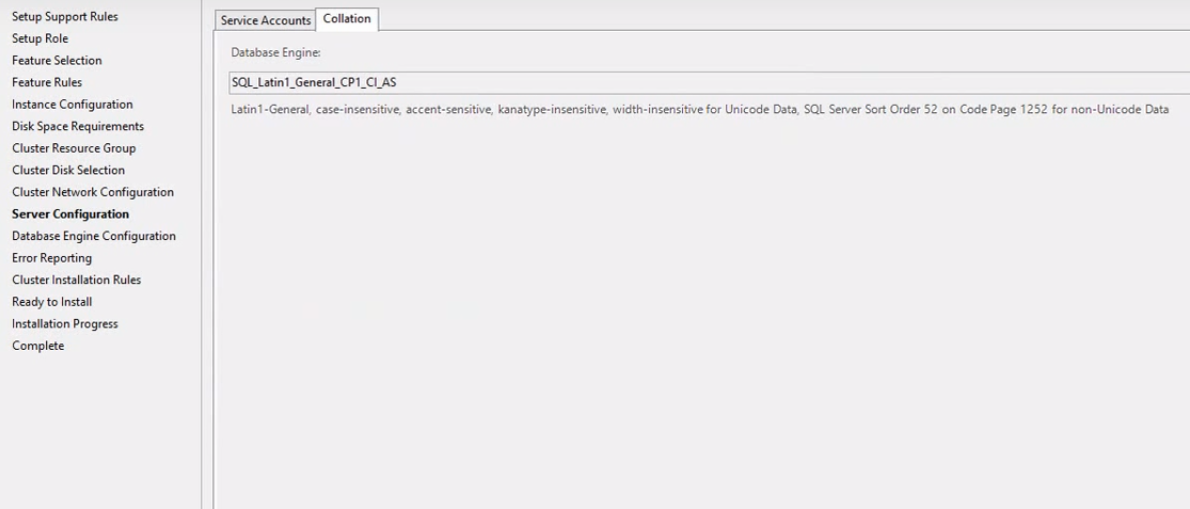

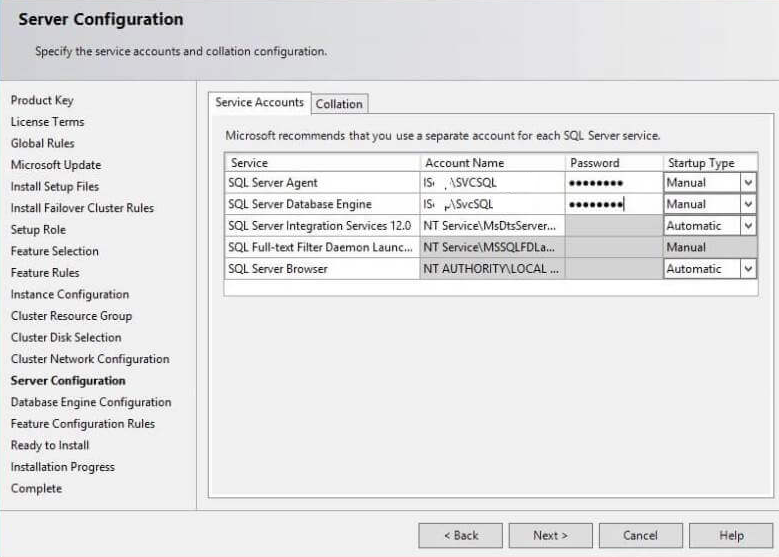

In the Server configuration step, we need to create a service account on the domain controller and allow administrator permission on each node.

In the Server configuration step, we need to select the collation

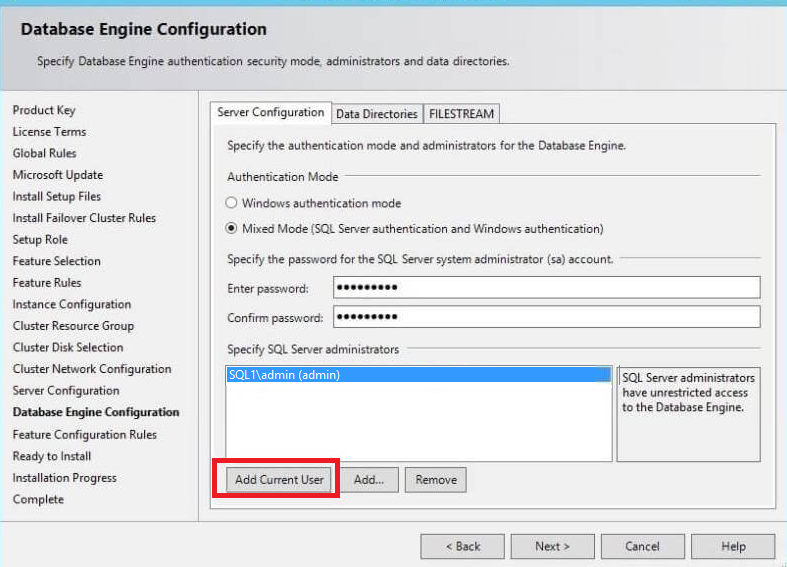

In the Database engine configuration step, we will select authentication mode either windows or Mixed mode. We have selected mixed mode authentication, specify the password and add current user as an administrator as below

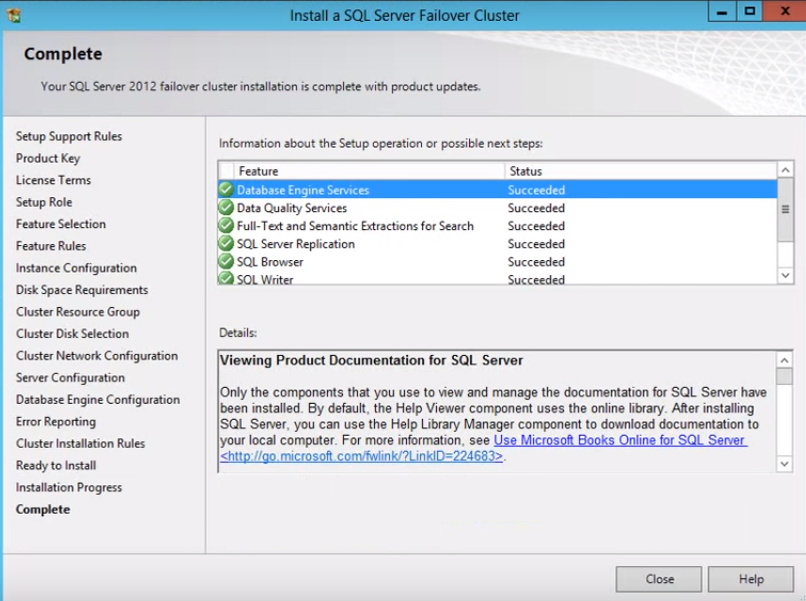

Click Next. The installation process will start. We need to follow the same process to install SQL Server cluster installation on other node servers.

Summary

In this article, we walked thru the steps of setting up iSCSI including an iSCSI initiator, setting up disk drives on notes, configuring our Quorum and finally installing the SQL Server cluster. These steps will get us closer to creating our SQL Server AlwaysOn configuration

Table of contents

| Domain Controller and Domain Client Node setup for AlwaysON High Availability |

| iSCSI, iSCSI Initiator, Quorum Configuration and SQL Server Cluster Installation |

| Configuring a SQL Server AlwaysOn High Availability Group |

- Add new articles, drop the article, change the snapshot folder path and Data filter rows in SQL Server Replication - April 2, 2019

- SQL Server replication: Configuring Snapshot and Transactional Replication - March 15, 2019

- SQL Server replication configuration: Peer to Peer and Merge Replication - March 15, 2019