Description

System views allow us to gain access to information about any objects within SQL Server, from tables to extended properties to check constraints. This information can be collected and used for many purposes, one being the need to document our objects without the need to click endlessly in the GUI or to incur an immense amount of manual work.

In our continuing effort to make good use of these views, we’ll take the data we previously collected in the previous article on Schema Documentation and use that information to build an easy-to-use and customizable solution that will allow us to efficiently reproduce, analyze, or model any database schema.

Brief Recap of Purpose

The stored procedure we are about to build will allow us to generate the CREATE statements for any tables you wish, including related objects, such as indexes, constraints, column metadata, triggers, and more.

This is very handy when researching the structure of a table or when we are looking to quickly build a copy of a table in another location. For development, QA, or documentation, this could be a very useful tool! SQL Server comes with the ability to right-click on objects and script out their creation details, but this is a slow and cumbersome process. For customization, or the ability to view many objects, endless right-clicking is not very appealing.

The results we come up with illustrate a few different ways to generate CREATE statements while allowing for customization to your heart’s content. If there are objects that you would like to include that we do not demo here, such as partitioning, encryption, or replication, feel free to add them into the script using similar techniques.

The search script in its entirety is a bit long, and therefore is attached to this article as a .SQL file. This article focuses on how to build the script, and some decisions we make along the way. Please download and experiment with the full script, in addition to reviewing the info here on how to build and use it. This will greatly improve the experience of reading this article, as well as provide a useful tool for the future.

The Structure of a Documentation Stored Procedure

Building this stored procedure will require a bit of planning. The queries against system views will be similar to what we have previously done, but how we collect and use that data will be different than our experiences thus far. Our first questions to ask will involve parameters. What parts of a documentation proc should be customizable? This is very much based on opinion, but here is what I came up with:

- Database Name: It’s unlikely we would want to script out schema for all databases on a server, nor does the prospect of writing dynamic SQL nested within dynamic SQL sound very enjoyable. We’ll pass in a single database name and script objects for that database only.

- Schema Name: Optionally, we can provide a schema name, which will restrict the schema build to only those objects within this specific schema.

- Table Name: Optionally, we can provide a table name, which will restrict results to any tables with this name.

- Print or Select results: This allows us to either print results, which is great for immediate copy/paste/use, and select results, which allows us to store the output in a table for posterity or future use.

- Customize What to Display: We can create and set bits that allow us to determine what types of objects to script out. For our example, we’ll include a single bit that enables or disables the display of extended properties, which may not be something you wish to see in your output.

Further customization is up to the user. There are many other ways to expand or restrict the result set in order to meet your own business or database scripting needs.

At this point, we need to determine what our stored procedure will do, and the most sensible order of operations. Ideally, we want to collect data as efficiently as possible, accessing system views only when needed and only collecting data for the parameters provided. In the spirit of optimization, we’ll refrain from using iteration as much as possible, instead opting for dynamic SQL, XML, or list generation, rather than WHILE loops or cursors.

Here is a basic outline of what we want to do, and the order we’ll want to accomplish it in:

- Validate data as we process parameter values and determine the work we need to do.

-

Collect metadata from system views as discussed in our previous article:

- Schemas

- Tables

-

Columns

- Ordinal positions

- Column length

- Column precision

- Column scale

- Collation

- Nullable?

-

Identity?

- Identity seed

- Identity increment

-

Computed?

- Computed column definition

- Sparse?

-

Default constraint?

- Default constraint definition

- Foreign keys

- Check constraints

-

Indexes

- Primary Keys

- Triggers

- Extended properties

- Iterate through our table list, ensuring we generate the CREATE statements in a logical order.

- Generate CREATE scripts using the metadata collected above.

- Print or select the results, based on the @Print_Results parameter.

Building the Schema Documentation Solution

With a basic understanding of what we need to do, we should discuss the tools we will use before diving in. One important component of this script is dynamic SQL.

Dynamic SQL

In order to efficiently gather metadata from a database whose name is not known until runtime, we need to generate dynamic USE statements. The syntax will look like this each time:

|

1 2 3 4 |

SELECT @Sql_Command = ' USE [' + @Database_Name + ']'; |

@Sql_Command is an NVARCHAR(MAX) string that will be used to build, store and execute any dynamic SQL that we need to use. Any dynamic SQL stored in @Sql_Command that is executed in the same statement as the dynamic USE will be executed in the context of the database given by @Database_Name.

We’ll also need to filter our metadata queries based on the schema and table provided in the parameters. This will require dynamic WHERE clauses that will insert the parameters into those queries, ensuring we filter effectively. We alternatively could collect metadata on all tables and schemas and filter later, but this will execute faster and return less extraneous data. A dynamic WHERE clause searching for a specific schema would look like this:

|

1 2 3 |

WHERE schemas.name = ''' + @Schema_Name + ''';' |

When this WHERE clause is applied to a SELECT query, the results will filter on the schema provided by the @Schema_Name parameter, allowing us to focus exclusively on the tables that we care about.

XML

One aspect of foreign keys and indexes that we need to contend with are column lists. Indexes can contain any number of key columns and (optionally) include columns. A foreign key typically consists of a one-column-to-one-column relationship, but could be comprised of two, three, or more columns. We’d like to quickly generate a column list, and do so without the need for loops or multiple additional queries.

A list can be generated using string manipulation, and can be done so very efficiently. The following example creates a comma separated list using table names from sys.tables:

|

1 2 3 4 5 6 7 8 9 |

DECLARE @string NVARCHAR(MAX) = ''; SELECT @string = @string + name + ',' FROM sys.tables WHERE tables.is_ms_shipped = 0 ORDER BY tables.name; SELECT @string = LEFT(@string, LEN(@string) - 1); SELECT @string; |

This syntax, in which we SELECT a string equal to itself plus additional table data allows that data to be compressed into the @string itself. While this syntax is extremely efficient, it cannot be combined easily with the SELECT of other columns. In order to get the best of both worlds and create a comma separated list while also gathering additional metadata, we’ll use XML instead.

With XML, we can cram the same data as above into an XML object, and then use STUFF to put it into a string, delimited by commas, just as above. The syntax will look like this:

|

1 2 3 4 5 6 7 8 9 10 |

DECLARE @string NVARCHAR(MAX) = ''; SELECT @string = STUFF(( SELECT ', ' + tables.name FROM sys.tables WHERE tables.is_ms_shipped = 0 ORDER BY tables.name FOR XML PATH('')), 1, 2, '') SELECT @string; |

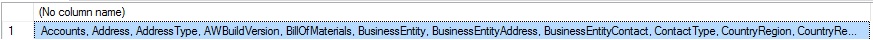

In both of the scenarios above, the output will be the expected CSV, which looks like this:

This strategy will allow us to collect index metadata, for example, while also compiling index column lists form within the same statement. While the TSQL isn’t as simple as if we collected each data element separately, it is more efficient and requires significantly less work to gather what we are looking for.

Parameter Validation

If a stored procedure accepts parameters, it’s generally a good idea to validate those parameters and return a helpful error message if any problems are found. In the case of this search proc, we’ll be allowing the user to enter a database name, and optionally a schema and table name. As a result, data could be entered that is either invalid or does not match any database schema on our server. Let’s consider a handful of common scenarios:

- If no database name is provided or if it is NULL, we should bail immediately as this is required.

- If a schema is provided, but does not match any in the database provided, end processing and exit.

- If a table is provided that matches none in the database, or none within the schema provided, also exit.

These checks ensure that we do not waste time attempting to process invalid objects. We can accomplish the database name check as follows:

|

1 2 3 4 5 6 7 |

IF @Database_Name IS NULL BEGIN RAISERROR('Document_Schema: Please provide a database name for this stored procedure', 16, 1); RETURN; END |

Once we’ve established that a database is provided, we can validate the schema provided. If NULL, then we will seach all schemas, but if it is provided, we can validate it like this:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 |

SELECT @Sql_Command = ' USE [' + @Database_Name + ']; SELECT DISTINCT schemas.name FROM sys.schemas INNER JOIN sys.tables ON schemas.schema_id = tables.schema_id WHERE schemas.name = ''' + @Schema_Name + ''';' INSERT INTO @Schemas (Schema_Name) EXEC sp_executesql @Sql_Command; IF NOT EXISTS (SELECT * FROM @Schemas) BEGIN RAISERROR('Document_Schema: The schema name provided does not exist, or it contains no user tables', 16, 1); RETURN; END |

We INNER JOIN tables here in order to validate that the schema provided has tables associated with it. If not, then there’s no work to do and we can exit immediately. Once a schema with valid objects has been established, we can perform a similar check on table

Collect Schema Metadata

This part will be fun and easy. Why? We did all of the work in the previous article! With the knowledge of what views we need to use, as well as what data to pull from them, the only remaining question is, how do we store and use this data throughout the stored procedure?

First off, we need to create some table variables that will hold all of the data that we collect. Since the data will be inserted and selected all at once, and because the volume of data will be relatively small, there’s no need to index these tables or worry too much about optimization. We’ll be careful to not collect any more data than is required for our work, and that in of itself will be the most significant way we can ensure that our stored proc will run efficiently.

Here are some of the tables we’ll create and use to store our schema metadata, until we are ready to build create scripts later on:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 |

DECLARE @Schemas TABLE (Schema_Name SYSNAME NOT NULL); DECLARE @Tables TABLE (Schema_Name SYSNAME, Table_Name SYSNAME NOT NULL, Result_Text NVARCHAR(MAX) NULL); DECLARE @Columns TABLE (Schema_Name SYSNAME NOT NULL, Table_Name SYSNAME NOT NULL, Column_Name SYSNAME NOT NULL, Type_Name SYSNAME NOT NULL, Ordinal_Position SMALLINT NOT NULL, Column_Length SMALLINT NOT NULL, Column_Precision TINYINT NOT NULL, Column_Scale TINYINT NOT NULL, Column_Collation SYSNAME NULL, Is_Nullable BIT NOT NULL, Is_Identity BIT NOT NULL, Is_Computed BIT NOT NULL, is_sparse BIT NOT NULL, Identity_Seed BIGINT NULL, Identity_Increment BIGINT NULL, Default_Constraint_Name SYSNAME NULL, Default_Constraint_Definition NVARCHAR(MAX) NULL, Computed_Column_Definition NVARCHAR(MAX)); DECLARE @Foreign_Keys TABLE (Foreign_Key_Name SYSNAME NOT NULL, Foreign_Key_Schema_Name SYSNAME NOT NULL, Foreign_Key_Table_Name SYSNAME NOT NULL, Foreign_Key_Creation_Script NVARCHAR(MAX) NOT NULL); DECLARE @Check_Constraints TABLE (Schema_Name SYSNAME, Table_Name SYSNAME, Check_Constraint_Definition NVARCHAR(MAX)); DECLARE @Indexes TABLE (Index_Name SYSNAME NOT NULL, Schema_Name SYSNAME NOT NULL, Table_Name SYSNAME NOT NULL, Is_Unique BIT NOT NULL, Has_Filter BIT NOT NULL, Filter_Definition NVARCHAR(MAX) NULL, Index_Type NVARCHAR(MAX) NOT NULL, Index_Column_List NVARCHAR(MAX) NULL, Include_Column_List NVARCHAR(MAX) NULL, Is_Primary_Key BIT NOT NULL); DECLARE @Triggers TABLE (Schema_Name SYSNAME NOT NULL, Table_Name SYSNAME NOT NULL, Trigger_Definition NVARCHAR(MAX) NOT NULL); DECLARE @Extended_Property TABLE (Object_Type NVARCHAR(60) NOT NULL, Extended_Property_Name SYSNAME NOT NULL, Extended_Property_Value NVARCHAR(MAX) NOT NULL, Schema_Name SYSNAME NOT NULL, Object_Name SYSNAME NOT NULL, Parent_Object_Name SYSNAME NULL, Parent_Column_Name SYSNAME NULL, Index_Name SYSNAME NULL); |

That sure is a lot of tables, but my goal is to create a script that is efficient, easy to understand, and easy to modify. While we could get creative and think of ways to store everything in one huge table, being able to quickly modify or validate a single object type in a single table is far easier. I can’t think of any significant benefits of doing this that wouldn’t also introduce unwanted complexity or obfuscate our code more.

Above, we have created tables for schemas, tables, columns, foreign keys, check constraints, indexes, triggers, and extended properties. Within each table, we gather as much data as we can in order to avoid having to go back for more later. The @Columns table in particular is quite hefty as it includes information about whether the column is computed, an identity, has a default constraint, if it is nullable, and more!

The @Schemas and @Tables are much simpler and are intended for use as data validation tools to ensure that we correctly use parameter inputs throughout our stored proc.

As an additional convenience to our TSQL needs later on, we’ll generate a comma-separated list for the schema list, in the event that the user did not provide a specific schema to search. This will make any WHERE clause that checks the list of schemas easy to build:

|

1 2 3 4 5 6 7 |

DECLARE @Schema_List NVARCHAR(MAX) = ''; SELECT @Schema_List = @Schema_List + '''' + Schema_Name + ''',' FROM @Schemas; SELECT @Schema_List = LEFT(@Schema_List, LEN(@Schema_List) - 1); -- Remove trailing comma. |

The result is a string that can be used whenever a list of schemas is required.

When executing dynamic SQL on another database, we have several options available in order to capture the data collected within the query and get it into one of these tables. I’ll opt for using the INSERT INTO…EXEC sp_executesql syntax, which will insert the results of the dynamic SQL statement directly into the table provided. Here is an example of what this looks like:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

SELECT @Sql_Command = ' USE [' + @Database_Name + ']; SELECT DISTINCT schemas.name AS Schema_Name, tables.name AS Table_Name FROM sys.tables INNER JOIN sys.schemas ON schemas.schema_id = tables.schema_id WHERE tables.is_ms_shipped = 0'; IF @Table_Name IS NOT NULL BEGIN SELECT @Sql_Command = @Sql_Command + ' AND tables.name = ''' + @Table_Name + ''''; END SELECT @Sql_Command = @Sql_Command + ' AND schemas.name IN (' + @Schema_List + ')'; INSERT INTO @Tables (Schema_Name, Table_Name) EXEC sp_executesql @Sql_Command; |

In this dynamic SQL query, we:

- Change database context to the database provided by user input.

- Select table and schema data, filtering out any system tables.

- If a table name was provided via user input, add that optional filter in.

- Add a filter for the schema list.

- Execute the dynamic SQL statement and insert the results directly into @Tables.

This syntax is convenient when we only have a single SELECT statement to be concerned with in our dynamic SQL. If there were multiple SELECTS, or scalar variables to update, we would want to consider parameterizing sp_executesql, using temporary tables, or both. For our purposes, though, directly inserting to table variables works great and fits our needs perfectly!

Much of our documentation script will follow this exact method, doing so for all of the tables we reviewed above. Some queries will be more complex, as they will need to retrieve column lists, or other more involved data from system views, but the overall process is same for each. Reviewing our work from the previous article will help explain why each of these queries is written as they are, before they are inserted into dynamic SQL statements.

Build Schema Create Statements

Once we have collected all of the schema metadata that we are interested, it’s time for the big task: Turning that information into valid CREATE statements. This is wholly an exercise in both string manipulation and patience, but one that can be easily tested and validated along the way. If our output TSQL is displaying the wrong metadata, is out of order, or is missing something, figuring out why isn’t too tough as we saved ALL of our data in table variables. Each of these can be reviewed anytime in order to validate correctness and completeness of the data within.

In order to facilitate the documentation, in order, of each table, we will use a cursor to iterate through each one-by-one. While it is possible to generate create statements in order with a set-based approach, this alternative would be significantly more complex and remove much of the desired flexibility from this solution. Let’s review how we’d generate scripts for a single table within this loop. Keep in mind that we will be saving the creation scripts in the table variable @Tables, which will provide some flexibility later on in terms of how we output our scripts.

|

1 2 3 |

DECLARE @Schema_Build_Text NVARCHAR(MAX); |

This string will be used to build and store our script as it is put together. Each table may have many objects, and will therefore need multiple steps in order to complete construction of its script. As we iterate though tables, we’ll keep track of the current schema and table using the following two strings:

|

1 2 3 4 |

DECLARE @Schema_Name_Current NVARCHAR(MAX); DECLARE @Table_Name_Current NVARCHAR(MAX); |

Each creation script will filter on these variables to ensure we are only compiling metadata for the current object (table/schema).

Tables

The start of each table creation script is as simple as CREATE TABLE:

|

1 2 3 4 5 6 7 8 9 |

SELECT @Schema_Build_Text = 'USE [' + @Database_Name + '];' + ' ' + 'CREATE TABLE [' + TABLE_DATA.Schema_Name + '].[' + TABLE_DATA.Table_Name + '](' FROM @Tables TABLE_DATA WHERE TABLE_DATA.Schema_Name = @Schema_Name_Current AND TABLE_DATA.Table_Name = @Table_Name_Current |

This first section sets the database context to whatever database we are analyzing and then puts together the CREATE TABLE statement, filtered on the current table and schema we are working on. Square brackets are used around all object names, which ensures that we are able to manage any that have spaces in their names.

Columns

Next, we need to list out the columns in this table, in the correct order, with any relevant attributes defined inline with the table creation. This is a bit more involved as we may have any number of columns in a table and any number of attributes in any combination. Our TSQL needs to be robust enough to deal with any combinations that our schema may throw at us. We’ll break our workflow down as follows:

- Output the column name.

- Is the column computed? If so, skip other attributes and go straight to its definition.

-

Print out the data type, including the necessary details:

- DECIMAL? If so, then include precision and scale.

- VARCHAR/NVARCHAR/CHAR/NCHAR? If so, then include the column length.

- If the column is sparse, include that attribute here.

- Is the column an identity? If so, add that attribute, including the identity seed and increment.

- Is the column nullable? If so, specify NULL or NOT NULL here. While SQL Server defaults columns to NULL when unspecified, we will be thorough and always include one or the other here.

- Is there a default constraint on this column? If so, include the constraint name and definition here.

- Is the column computed? If so, this is where the definition will go.

After all of these steps, we will have a column list, including important attributes. The TSQL to generate this script segment is as follows:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 |

SELECT @Schema_Build_Text = @Schema_Build_Text + ' ' + COLUMN_DATA.Column_Name + ' ' + CASE WHEN COLUMN_DATA.Is_Computed = 1 THEN '' ELSE -- Don't add metadata if a column is computed (just include definition) COLUMN_DATA.Type_Name + -- Basic column metadata CASE WHEN COLUMN_DATA.Type_Name = 'DECIMAL' THEN '(' + CAST(COLUMN_DATA.Column_Precision AS NVARCHAR(MAX)) + ',' + CAST(COLUMN_DATA.Column_Scale AS NVARCHAR(MAX)) + ')' ELSE '' END + -- Column precision (decimal) CASE WHEN COLUMN_DATA.Type_Name IN ('VARCHAR', 'NVARCHAR', 'NCHAR', 'CHAR') THEN '(' + CAST(COLUMN_DATA.Column_Length AS NVARCHAR(MAX)) + ')' ELSE '' END + -- Column length (string) CASE WHEN COLUMN_DATA.is_sparse = 1 THEN ' SPARSE' ELSE '' END + -- If a column is sparse, denote that here. CASE WHEN COLUMN_DATA.Is_Identity = 1 THEN ' IDENTITY(' + CAST(Identity_Seed AS NVARCHAR(MAX)) + ',' + CAST(Identity_Increment AS NVARCHAR(MAX)) + ')' ELSE '' END + -- Identity Metadata (optional) CASE WHEN COLUMN_DATA.Is_Nullable = 1 THEN ' NULL' ELSE ' NOT NULL' END + -- NULL/NOT NULL definition CASE WHEN COLUMN_DATA.Default_Constraint_Name IS NOT NULL THEN ' CONSTRAINT ' + COLUMN_DATA.Default_Constraint_Name + ' DEFAULT ' + COLUMN_DATA.Default_Constraint_Definition ELSE '' END END + -- Default constraint definition (optional) CASE WHEN COLUMN_DATA.Is_Computed = 1 THEN 'AS ' + COLUMN_DATA.Computed_Column_Definition ELSE '' END + ',' FROM @Columns COLUMN_DATA WHERE COLUMN_DATA.Table_Name = @Table_Name_Current AND COLUMN_DATA.Schema_Name = @Schema_Name_Current ORDER BY COLUMN_DATA.Ordinal_Position ASC; SELECT @Schema_Build_Text = LEFT(@Schema_Build_Text, LEN(@Schema_Build_Text) - 1); |

The filter ensures we only add columns for the current table we are working on, while the ORDER BY puts our data in the correct column order, based on column ordinals. The list-building syntax used here allows us to build the column list in a single statement from a set of columns of any size. The final SELECT removes the trailing comma from the string, which is left over by the list-building syntax.

Primary Keys

If the table has a primary key, then we can include that definition defined inline at the end of the table creation. Since there can only be a single primary key per table, there’s no need to worry about lists of data. We can generate the script for its creation in a single step as follows:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

IF EXISTS (SELECT * FROM @Indexes PRIMARY_KEY_DATA WHERE PRIMARY_KEY_DATA.Schema_Name = @Schema_Name_Current AND PRIMARY_KEY_DATA.Table_Name = @Table_Name_Current AND PRIMARY_KEY_DATA.Is_Primary_Key = 1) BEGIN SELECT @Schema_Build_Text = @Schema_Build_Text + ', CONSTRAINT ' + PRIMARY_KEY_DATA.Index_Name + ' PRIMARY KEY' + -- Primary key name Index_Type + -- Clustered vs. Nonclustered key. '(' + PRIMARY_KEY_DATA.Index_Column_List + ')' -- Column list. FROM @Indexes PRIMARY_KEY_DATA WHERE PRIMARY_KEY_DATA.Schema_Name = @Schema_Name_Current AND PRIMARY_KEY_DATA.Table_Name = @Table_Name_Current AND PRIMARY_KEY_DATA.Is_Primary_Key = 1; END |

The IF EXISTS checks if a primary key exists, and if not, this section will be skipped. Otherwise, we build the primary key creation TSQL using the index information collected earlier, verifying that Is_Primary_Key = 1. Since we built the index column list earlier, we need only return them now, without any need for further list building.

Now that we have completed the table creation statement, we can close the parenthesis from earlier and add a “GO” in order to start a new batch:

|

1 2 3 4 |

SELECT @Schema_Build_Text = @Schema_Build_Text + '); GO'; |

Foreign Keys

The remainder of the CREATE/ALTER statements can be listed in any order. The order chosen here was based on which parts of this script were coded first. Feel free to adjust order if it helps in the consumption of this scripted metadata. In the case of foreign keys, we generated ALTER TABLE statements previously, which saved time when this moment came along. Here is the collection TSQL used to initially collect foreign key information:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 |

SELECT @Sql_Command = ' USE [' + @Database_Name + ']; SELECT FOREIGN_KEY_DATA.name AS Foreign_Key_Name, FOREIGN_KEY_SCHEMA.name AS Foreign_Key_Schema_Name, FOREIGN_KEY_TABLE.name AS Foreign_Key_Table_Name, ''ALTER TABLE ['' + FOREIGN_KEY_SCHEMA.name + ''].['' + FOREIGN_KEY_TABLE.name + ''] WITH '' + CASE WHEN FOREIGN_KEY_DATA.is_not_trusted = 1 THEN ''NOCHECK'' ELSE ''CHECK'' END + '' ADD CONSTRAINT ['' + FOREIGN_KEY_DATA.name + ''] FOREIGN KEY('' + STUFF(( SELECT '', '' + FOREIGN_KEY_COLUMN.name FROM sys.foreign_keys INNER JOIN sys.foreign_key_columns ON foreign_keys.object_id = foreign_key_columns.constraint_object_id INNER JOIN sys.tables FOREIGN_KEY_TABLE ON foreign_keys.parent_object_id = FOREIGN_KEY_TABLE.object_id INNER JOIN sys.schemas FOREIGN_KEY_SCHEMA ON FOREIGN_KEY_SCHEMA.schema_id = FOREIGN_KEY_TABLE.schema_id INNER JOIN sys.columns as FOREIGN_KEY_COLUMN ON foreign_key_columns.parent_object_id = FOREIGN_KEY_COLUMN.object_id AND foreign_key_columns.parent_column_id = FOREIGN_KEY_COLUMN.column_id WHERE FOREIGN_KEY_DATA.object_id = foreign_keys.object_id AND FOREIGN_KEY_DATA.name = foreign_keys.name ORDER BY FOREIGN_KEY_TABLE.name, foreign_key_columns.constraint_column_id FOR XML PATH('''')), 1, 2, '''') + '') REFERENCES ['' + FOREIGN_KEY_SCHEMA.name + ''].['' + REFERENCED_TABLE.name + ''] ('' + STUFF(( SELECT '', '' + REFERENECD_COLUMN.name FROM sys.foreign_keys INNER JOIN sys.foreign_key_columns ON foreign_keys.object_id = foreign_key_columns.constraint_object_id INNER JOIN sys.tables REFERENCED_TABLE ON foreign_keys.referenced_object_id = REFERENCED_TABLE.object_id INNER JOIN sys.schemas REFERENCED_KEY_SCHEMA ON REFERENCED_KEY_SCHEMA.schema_id = REFERENCED_TABLE.schema_id INNER JOIN sys.columns REFERENECD_COLUMN ON foreign_key_columns.referenced_object_id = REFERENECD_COLUMN.object_id AND foreign_key_columns.referenced_column_id = REFERENECD_COLUMN.column_id WHERE FOREIGN_KEY_DATA.object_id = foreign_keys.object_id AND FOREIGN_KEY_DATA.name = foreign_keys.name ORDER BY REFERENCED_TABLE.name, foreign_key_columns.constraint_column_id FOR XML PATH('''')), 1, 2, '''') + '')); GO'' AS Foreign_Key_Creation_Script FROM sys.foreign_keys FOREIGN_KEY_DATA INNER JOIN sys.tables FOREIGN_KEY_TABLE ON FOREIGN_KEY_DATA.parent_object_id = FOREIGN_KEY_TABLE.object_id INNER JOIN sys.tables REFERENCED_TABLE ON FOREIGN_KEY_DATA.referenced_object_id = REFERENCED_TABLE.object_id INNER JOIN sys.schemas FOREIGN_KEY_SCHEMA ON FOREIGN_KEY_SCHEMA.schema_id = FOREIGN_KEY_TABLE.schema_id INNER JOIN sys.schemas REFERENCED_KEY_SCHEMA ON REFERENCED_KEY_SCHEMA.schema_id = REFERENCED_TABLE.schema_id'; INSERT INTO @Foreign_Keys (Foreign_Key_Name, Foreign_Key_Schema_Name, Foreign_Key_Table_Name, Foreign_Key_Creation_Script) EXEC sp_executesql @Sql_Command; |

List-building via XML was used in order to gather the foreign key column lists, as well as the referenced column lists all in a single statement. The syntax here is almost identical to that used for the collection of index key and included column lists. Since this work is already complete, the steps needed to generate the foreign key creation scripts will be much simpler:

|

1 2 3 4 5 6 7 8 9 10 11 12 |

IF EXISTS (SELECT * FROM @Foreign_Keys WHERE Foreign_Key_Schema_Name = @Schema_Name_Current AND Foreign_Key_Table_Name = @Table_Name_Current) BEGIN SELECT @Schema_Build_Text = @Schema_Build_Text + ' ' + Foreign_Key_Creation_Script FROM @Foreign_Keys WHERE Foreign_Key_Schema_Name = @Schema_Name_Current AND Foreign_Key_Table_Name = @Table_Name_Current; END |

IF EXISTS checks to see if any foreign keys exist on the table, and if so, add the foreign key creation script onto our schema creation script.

Check Constraints

Check constraints creation statements were also created previously using the following TSQL:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 |

SELECT @Sql_Command = ' USE [' + @Database_Name + ']; SELECT schemas.name AS Schema_Name, tables.name AS Table_Name, ''ALTER TABLE ['' + schemas.name + ''].['' + tables.name + ''] WITH '' + CASE WHEN check_constraints.is_not_trusted = 1 THEN ''NOCHECK'' ELSE ''CHECK'' END + '' ADD CONSTRAINT ['' + check_constraints.name + ''] CHECK '' + check_constraints.definition FROM sys.check_constraints INNER JOIN sys.tables ON tables.object_id = check_constraints.parent_object_id INNER JOIN sys.schemas ON tables.schema_id = schemas.schema_id'; IF @Schema_Name IS NOT NULL BEGIN SELECT @Sql_Command = @Sql_Command + ' WHERE schemas.name = ''' + @Schema_Name + ''''; END IF @Table_Name IS NOT NULL AND @Schema_Name IS NOT NULL BEGIN SELECT @Sql_Command = @Sql_Command + ' AND tables.name = ''' + @Table_Name + ''''; END IF @Table_Name IS NOT NULL AND @Schema_Name IS NULL BEGIN SELECT @Sql_Command = @Sql_Command + ' WHERE tables.name = ''' + @Table_Name + ''''; END INSERT INTO @Check_Constraints (Schema_Name, Table_Name, Check_Constraint_Definition) EXEC sp_executesql @Sql_Command; |

While no column lists were needed for this work, we did need to verify if a constraint was created with the NOCHOCK attribute. Otherwise, the creation statement is relatively simple. Optional filters on schema and table help reduce the data returned to include only what we are interested in based on the stored proc parameters. Once we have the creation script, we can script out the check constraints as follows:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

IF EXISTS (SELECT * FROM @Check_Constraints WHERE Schema_Name = @Schema_Name_Current AND Table_Name = @Table_Name_Current) BEGIN SELECT @Schema_Build_Text = @Schema_Build_Text + ' ' + Check_Constraint_Definition + ' GO' FROM @Check_Constraints WHERE Schema_Name = @Schema_Name_Current AND Table_Name = @Table_Name_Current; END |

If any check constraints exist, then add the previously-created scripts to our growing table creation script.

Indexes

Indexes have a number of attributes on them that need to be integrated into our TSQL here. We’ll need to check if an index is unique, XML, clustered, filtered, or has include columns:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

IF EXISTS (SELECT * FROM @Indexes WHERE Schema_Name = @Schema_Name_Current AND Table_Name = @Table_Name_Current) BEGIN SELECT @Schema_Build_Text = @Schema_Build_Text + ' CREATE ' + CASE WHEN Is_Unique = 1 THEN 'UNIQUE ' ELSE '' END + CASE WHEN Index_Type = 'XML' THEN 'PRIMARY ' ELSE '' END + Index_Type + ' INDEX [' + Index_Name + '] ON [' + Schema_Name + '].[' + Table_Name + '] (' + Index_Column_List + ')' + CASE WHEN Include_Column_List <> '' THEN ' INCLUDE (' + Include_Column_List + ')' ELSE '' END + CASE WHEN Has_Filter = 1 THEN ' WHERE ' + Filter_Definition ELSE '' END + ' GO' FROM @Indexes WHERE Schema_Name = @Schema_Name_Current AND Table_Name = @Table_Name_Current AND Is_Primary_Key = 0; END |

We verify that indexes exist before proceeding, and ensure in the filter that we omit the primary key, since it has already been included in our script. The hefty use of CASE statements adds some complexity to this script, but allows our script to be much shorter than if we had handled each attribute in a separate string manipulation segment.

Triggers

Trigger definitions are included in their entirety within the sys.triggers/sys.sql_modules relationship. As a result, adding trigger information to our script is quite simple:

|

1 2 3 4 5 6 7 8 9 10 11 |

IF EXISTS (SELECT * FROM @Triggers WHERE Schema_Name = @Schema_Name_Current AND Table_Name = @Table_Name_Current) BEGIN SELECT @Schema_Build_Text = @Schema_Build_Text + ' ' + Trigger_Definition + 'GO' FROM @Triggers WHERE Schema_Name = @Schema_Name_Current AND Table_Name = @Table_Name_Current END |

If triggers exist, we need only add their text to our string and we’re done!

Extended Properties

This is a bit of a quirky addition, but worth including in our script as it demonstrates some more unusual metadata within SQL Server. Extended properties can be assigned to many types of objects, such as columns, tables, foreign keys, triggers, or more!

Our TSQL will need to verify what kind of object we are dealing with and script out the generation of the extended property using the correct syntax. Extended properties are created using the sp_addextendedproperty system stored procedure. The parameters specify the property name, the value that is assigned to it, and then 3 additional parameters to specify the type of object being tagged:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 |

type of object being tagged: IF EXISTS (SELECT * FROM @Extended_Property WHERE COALESCE(Parent_Object_Name, Object_Name, '') = @Table_Name_Current AND Schema_Name = @Schema_Name_Current) BEGIN SELECT @Schema_Build_Text = @Schema_Build_Text + ' EXEC sys.sp_addextendedproperty @name = ''' + Extended_Property_Name + ''', @value = ''' + Extended_Property_Value + ''', @level0type = ''SCHEMA'', @level0name = ''' + Schema_Name + ''', @level1type = ''TABLE'', @level1name = ''' + COALESCE(Parent_Object_Name, Object_Name, '') + '''' + CASE WHEN NOT(Object_Type = 'USER_TABLE' AND Parent_Object_Name IS NULL AND Parent_Column_Name IS NULL AND Index_Name IS NULL) THEN ', @level2type = ''' + CASE WHEN Parent_Column_Name IS NOT NULL THEN 'COLUMN' WHEN Index_Name IS NOT NULL THEN 'INDEX' WHEN Object_Type LIKE '%CONSTRAINT%' THEN 'CONSTRAINT' WHEN Object_Type LIKE '%TRIGGER%' THEN 'TRIGGER' END + ''', @level2name = ''' + COALESCE(Parent_Column_Name, Index_Name, Object_Name) + '''' ELSE '' END + '; GO' FROM @Extended_Property WHERE COALESCE(Parent_Object_Name, Object_Name, '') = @Table_Name_Current AND Schema_Name = @Schema_Name_Current; END |

While that isn’t the prettiest TSQL ever written, it uses a small number of variables in order to generate valid extended property creation statements. We’ll get a chance to see what the results look like in our demo below.

Final Steps

With all attributes added to @Schema_Build_Text, we can finally store this information in @Tables and then, when we exit the loop, select or print out the results. The following TSQL stores our results after each iteration:

|

1 2 3 4 5 6 7 |

UPDATE TABLE_DATA SET Result_Text = @Schema_Build_Text FROM @Tables TABLE_DATA WHERE TABLE_DATA.Schema_Name = @Schema_Name_Current AND TABLE_DATA.Table_Name = @Table_Name_Current; |

With all of our metadata collected, we can now return our results. If @Print_Results = 0, then the @Tables table will be selected in its entirety. If @Print_Results = 0, then we will iterate through tables, printing output one section at a time. The following TSQL will accomplish this task:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 |

IF @Print_Results = 0 BEGIN SELECT * FROM @Tables; END ELSE BEGIN DECLARE table_cursor CURSOR FOR SELECT Result_Text FROM @Tables; DECLARE @Result_Text NVARCHAR(MAX); OPEN table_cursor; FETCH NEXT FROM table_cursor INTO @Result_Text; WHILE @@FETCH_STATUS = 0 BEGIN PRINT @Result_Text FETCH NEXT FROM table_cursor INTO @Result_Text; END CLOSE table_cursor; DEALLOCATE table_cursor; END |

In general, printing the results is great for testing or small result sets, but may be problematic for large volumes of information. Selecting the data will be more reliable, allows for that data to be stored somewhere permanent, and avoids character limits in SQL Server Management Studio.

The Final Results

Well, we’ve built this big script that can generate schema creation statements. Let’s take it for a whirl! The following will execute this stored procedure on Person.Person, printing results, and including extended properties:

|

1 2 3 4 5 6 7 8 |

EXEC dbo.Document_Schema @Database_Name = 'AdventureWorks2014', @Schema_Name = 'Person', @Table_Name = 'Person', @Print_Results = 1, @Show_Extended_Properties = 1; |

A segment of the results are as follows:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 |

USE [AdventureWorks2014]; CREATE TABLE [Person].[Person]( BusinessEntityID INT NOT NULL, PersonType NCHAR(4) NOT NULL, NameStyle NAMESTYLE NOT NULL CONSTRAINT DF_Person_NameStyle DEFAULT ((0)), Title NVARCHAR(16) NULL, FirstName NAME NOT NULL, MiddleName NAME NULL, LastName NAME NOT NULL, Suffix NVARCHAR(20) NULL, EmailPromotion INT NOT NULL CONSTRAINT DF_Person_EmailPromotion DEFAULT ((0)), AdditionalContactInfo XML NULL, Demographics XML NULL, rowguid UNIQUEIDENTIFIER NOT NULL CONSTRAINT DF_Person_rowguid DEFAULT (NEWID()), ModifiedDate DATETIME NOT NULL CONSTRAINT DF_Person_ModifiedDate DEFAULT (GETDATE()), CONSTRAINT PK_Person_BusinessEntityID PRIMARY KEYCLUSTERED(BusinessEntityID ASC)); GO ALTER TABLE [Person].[Person] WITH CHECK ADD CONSTRAINT [FK_Person_BusinessEntity_BusinessEntityID] FOREIGN KEY(BusinessEntityID) REFERENCES [Person].[BusinessEntity] (BusinessEntityID)); GO ALTER TABLE [Person].[Person] WITH CHECK ADD CONSTRAINT [CK_Person_EmailPromotion] CHECK ([EmailPromotion]>=(0) AND [EmailPromotion]<=(2)) GO ALTER TABLE [Person].[Person] WITH CHECK ADD CONSTRAINT [CK_Person_PersonType] CHECK ([PersonType] IS NULL OR (upper([PersonType])='GC' OR upper([PersonType])='SP' OR upper([PersonType])='EM' OR upper([PersonType])='IN' OR upper([PersonType])='VC' OR upper([PersonType])='SC')) GO CREATE NONCLUSTERED INDEX [IX_Person_LastName_FirstName_MiddleName] ON [Person].[Person] (LastName ASC, FirstName ASC, MiddleName ASC) GO CREATE UNIQUE NONCLUSTERED INDEX [AK_Person_rowguid] ON [Person].[Person] (rowguid ASC) GO CREATE PRIMARY XML INDEX [PXML_Person_AddContact] ON [Person].[Person] (AdditionalContactInfo) GO CREATE PRIMARY XML INDEX [PXML_Person_Demographics] ON [Person].[Person] (Demographics) GO CREATE PRIMARY XML INDEX [XMLPATH_Person_Demographics] ON [Person].[Person] (Demographics) GO CREATE PRIMARY XML INDEX [XMLPROPERTY_Person_Demographics] ON [Person].[Person] (Demographics) GO CREATE PRIMARY XML INDEX [XMLVALUE_Person_Demographics] ON [Person].[Person] (Demographics) GO CREATE TRIGGER [Person].[iuPerson] ON [Person].[Person] AFTER INSERT, UPDATE NOT FOR REPLICATION AS BEGIN DECLARE @Count int; SET @Count = @@ROWCOUNT; IF @Count = 0 RETURN; SET NOCOUNT ON; IF UPDATE([BusinessEntityID]) OR UPDATE([Demographics]) BEGIN UPDATE [Person].[Person] SET [Person].[Person].[Demographics] = N'<IndividualSurvey xmlns="http://schemas.microsoft.com/sqlserver/2004/07/adventure-works/IndividualSurvey"> <TotalPurchaseYTD>0.00</TotalPurchaseYTD> </IndividualSurvey>' FROM inserted WHERE [Person].[Person].[BusinessEntityID] = inserted.[BusinessEntityID] AND inserted.[Demographics] IS NULL; UPDATE [Person].[Person] SET [Demographics].modify(N'declare default element namespace "http://schemas.microsoft.com/sqlserver/2004/07/adventure-works/IndividualSurvey"; insert <TotalPurchaseYTD>0.00</TotalPurchaseYTD> as first into (/IndividualSurvey)[1]') FROM inserted WHERE [Person].[Person].[BusinessEntityID] = inserted.[BusinessEntityID] AND inserted.[Demographics] IS NOT NULL AND inserted.[Demographics].exist(N'declare default element namespace "http://schemas.microsoft.com/sqlserver/2004/07/adventure-works/IndividualSurvey"; /IndividualSurvey/TotalPurchaseYTD') <> 1; END; END; GO EXEC sys.sp_addextendedproperty @name = 'MS_Description', @value = 'Check constraint [EmailPromotion] >= (0) AND [EmailPromotion] <= (2)', @level0type = 'SCHEMA', @level0name = 'Person', @level1type = 'TABLE', @level1name = 'Person', @level2type = 'CONSTRAINT', @level2name = 'CK_Person_EmailPromotion'; GO |

The text eventually is cut off as the maximum characters SSMS will display is 8192, but we get a good taste of the output, how it is formatted, and how our metadata collection allowed us to ultimately recreate schema programmatically. For all but testing/display purposes, SELECT results, rather than print them. This will be especially useful when returning larger volumes of information, such as for an entire schema or database.

Customization

The solution presented here is a proof-of-concept that, with a bit of data analysis and collection, we can turn that metadata into schema creation scripts. It is by no means a complete solution – many objects and attributes were left out, such as compression, replication, partitioning, views, functions, and more.

Add More Objects

Adding more objects into, or modifying this process, is not difficult, though. In order to add anything new to this script, the steps are straightforward:

- Add data collection for new objects or attributes.

- Generate ALTER/CREATE statements for those new objects.

- Add those statements (in the correct order) to the final PRINT/SELECT statement.

While there is certainly still work involved in this process, it becomes easier and easier with each change that is made. Include what your business needs dictate, and leave out whatever is not needed. The intention of a customizable solution is that it can be molded to your distinct needs. Alternatively, you could continue adding objects until it becomes a very complete solution for most business needs, regardless of where you or the script is needed.

Optional Components

A single parameter was added that allowed extended properties to be optional. Making other objects optional would be very similar. Add a BIT parameter to the stored procedure and use it to skip any parts of the stored procedure that deal with the objects in question. If your goal is to simply replicate tables, but to leave off keys, constraints, triggers, etc, which is common in data validation, the disabling those objects’ creation could be very useful.,

Order of Operations

The order in which objects print out is somewhat arbitrary. Obviously, the table and associated columns must go first, but after that, whether you create foreign keys or check constraints first is up to you. If you have a preferred order of object creation, then simply adjust the output order at the end of the script.

Parameter Processing

The parameters are passed in as exact matches, ie: provide a schema and table and get that exact schema and table only. An alternative is to add wildcards to parameters and return any objects that are similar to them. This may be useful if you want all objects that are similar in name to something, or share part of their names.

Additionally, other factors could be taken into account, such as column names, object names, or other methods of filtering. Modify parameters as you see fit—the script provided fills one use case, but could be modified to fit many, many others.

Conclusion

Schema documentation can be an extremely useful tool when developing new features, testing existing ones, or when maintaining schema in source control. This project is one that began with the introduction, manipulation, and storage of data from a variety of system views. It ended with the organization of that data into creation scripts that could be used to quickly document or replicate database objects quickly & efficiently.

Since the resulting script was quite large, it is attached to this article in its entirety. Feel free to download, modify, test, and implement it as you see fit. This is another example of how system metadata can be used to accomplish what may initially seem to be a daunting task. Enjoy the results, and let me know if you find any new or interesting applications of this!

You can download the script here

References and Further Reading

This script is entirely a logical extension of previous articles that have discussed system views and applications, as well as some liberal use of dynamic SQL and string manipulation. Please see these additional articles for discussions on these topics:

Searching SQL Server made easy – Searching catalog views

Searching SQL Server made easy – Building the perfect search script

Also see part 1 of this article for a more direct introduction to this topic:

Introducing schema documentation in SQL Server

Options are documented for the built-in SQL Server scripting here:

Generate SQL Server Scripts Wizard (Choose Script Options Page)

Some basic instructions on this process can be found here:

Generate Scripts (SQL Server Management Studio)

Lastly, information on catalog views, which provide the basis for this article, can be found here:

Catalog Views (Transact-SQL)

- SQL Server Database Metrics - October 2, 2019

- Using SQL Server Database Metrics to Predict Application Problems - September 27, 2019

- SQL Injection: Detection and prevention - August 30, 2019