Database maintenance is very important, a critical part of our database administrators’ daily tasks. However, this aspect is frequently underestimated which could lead to performance problems and respectively angry, unhappy customers. In this article, we will take a look at the different maintenance operations we have in SQL Server and how we can optimize them and take the maximum out of each.

Index Reorganize operation

We are taking off with the indexes in our databases. I am not going to convince you how important is to keep our indexes in a good shape, so we are directly jumping into how we can maintain them.

One of the possibilities that we have in SQL Server to keep our indexes defragmented, is the index reorganize operation. This operation is always online, uses minimal system resources, honors the fill factor that has been used during the creation of the index (common misconception is that reorganize operation does not take into account fill factor at all) and if you kill it due to any reason, the work that has been done would still persist. So far so good! However there are several major drawbacks:

- Index statistics are not being updated

- Not efficient when you have a large fragmentation as it is only reorganizing the leaf-level pages

- Cannot change the initial fill factor used during index creation

Let’s test this with one simple scenario:

Create a test database to play with one simple table and insert some data with deliberately skipping some values to produce page splits and larger fragmentation:

12345678910111213141516171819202122232425262728293031323334353637383940USE masterGOIF EXISTS (SELECT name FROM sys.databases WHERE name = N'IndexMaintenance')DROP DATABASE [IndexMaintenance]GOCREATE DATABASE IndexMaintenance;GOUSE IndexMaintenance;GOCREATE TABLE IndexTable (c1 INT, c2 CHAR (4000));CREATE CLUSTERED INDEX IndexTable_CL ON IndexTable (c1);GODECLARE @a INTSET @a = 1WHILE (@a<80)BEGINIF (@a=5 or @a=15 or @a=22 or @a=29 or @a=34 or @a=38 or @a=45) PRINT 'Nothing to insert'ELSE INSERT INTO IndexTable VALUES (@a, 'a')SET @a=@a + 1ENDINSERT INTO IndexTable VALUES (5, 'a');GOINSERT INTO IndexTable VALUES (15, 'a');GOINSERT INTO IndexTable VALUES (22, 'a');GOINSERT INTO IndexTable VALUES (29, 'a');GOINSERT INTO IndexTable VALUES (34, 'a');GOINSERT INTO IndexTable VALUES (38, 'a');GOINSERT INTO IndexTable VALUES (45, 'a');GOCheck the index details – we are interested mainly in the fragmentation, page fullness and last statistics update for this index:

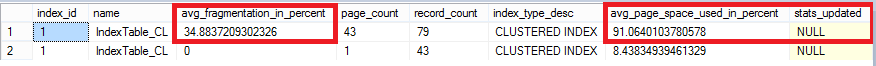

1234567USE IndexMaintenanceSELECT a.index_id, name, avg_fragmentation_in_percent, a.page_count,a.record_count,a.index_type_desc,a.avg_page_space_used_in_percent,STATS_DATE(b.object_id,b.index_id)as stats_updated FROM sys.dm_db_index_physical_stats(DB_ID(N'IndexMaintenance'), OBJECT_ID(N'dbo.IndexTable'), 1, NULL, 'DETAILED') AS aJOIN sys.indexes AS b ON a.object_id = b.object_id AND a.index_id = b.index_idOur index has above 30 % fragmentation, so we need to do something. Try with Index Reorganize first:

1234USE IndexMaintenanceALTER INDEX IndexTable_CL ON dbo.IndexTable REORGANIZEUse the query from point 2 to see what happened with the index:

Fragmentation is lower than the initial 34 %, but there is still some left so our operation did not finish the job completely. If you take a look at the page fullness, SQL Server tried to honor the fill factor, which is 100 % (or 0) in our case:

Last but not least, statistics associated with this index, have not been touched.

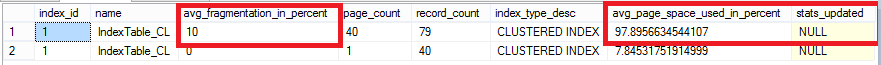

Repeat steps 1 and 2 to recreate our setup and check index details. This time, use Index Rebuild operation:

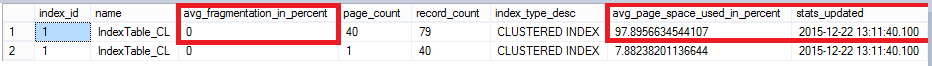

1234USE IndexMaintenanceALTER INDEX IndexTable_CL ON dbo.IndexTable REBUILDCheck the index details again after the rebuild with the script from point 2:

Fragmentation is completely gone, fill factor was honored again and this time, index statistics have been updated!

- Offline

- Online

- Take “AdventureWorks2014” database from codeplex and restore the database to your SQL Server

Then run this script created by Jonathan Kehayias to enlarge several tables:

123456789101112131415161718192021222324252627282930313233343536373839404142434445464748495051525354555657585960616263646566676869707172737475767778798081828384858687888990919293949596979899100101102103104105106107108109110111112113114115116117118119120121122123124125126127128129130131132133134135136137138139140141142143144145146147148149150151152153154155156157158159160161162163164165166167168169170171172173174175176177178179180181182183184185186187188189190191192193USE [AdventureWorks2014]GOIF OBJECT_ID('Sales.SalesOrderHeaderEnlarged') IS NOT NULLDROP TABLE Sales.SalesOrderHeaderEnlarged;GOCREATE TABLE Sales.SalesOrderHeaderEnlarged(SalesOrderID int NOT NULL IDENTITY (1, 1) NOT FOR REPLICATION,RevisionNumber tinyint NOT NULL,OrderDate datetime NOT NULL,DueDate datetime NOT NULL,ShipDate datetime NULL,Status tinyint NOT NULL,OnlineOrderFlag dbo.Flag NOT NULL,SalesOrderNumber AS (isnull(N'SO'+CONVERT([nvarchar](23),[SalesOrderID],0),N'*** ERROR ***')),PurchaseOrderNumber dbo.OrderNumber NULL,AccountNumber dbo.AccountNumber NULL,CustomerID int NOT NULL,SalesPersonID int NULL,TerritoryID int NULL,BillToAddressID int NOT NULL,ShipToAddressID int NOT NULL,ShipMethodID int NOT NULL,CreditCardID int NULL,CreditCardApprovalCode varchar(15) NULL,CurrencyRateID int NULL,SubTotal money NOT NULL,TaxAmt money NOT NULL,Freight money NOT NULL,TotalDue AS (isnull(([SubTotal]+[TaxAmt])+[Freight],(0))),Comment nvarchar(128) NULL,rowguid uniqueidentifier NOT NULL ROWGUIDCOL,ModifiedDate datetime NOT NULL) ON [PRIMARY]GOSET IDENTITY_INSERT Sales.SalesOrderHeaderEnlarged ONGOINSERT INTO Sales.SalesOrderHeaderEnlarged (SalesOrderID, RevisionNumber, OrderDate, DueDate, ShipDate, Status, OnlineOrderFlag, PurchaseOrderNumber, AccountNumber, CustomerID, SalesPersonID, TerritoryID, BillToAddressID, ShipToAddressID, ShipMethodID, CreditCardID, CreditCardApprovalCode, CurrencyRateID, SubTotal, TaxAmt, Freight, Comment, rowguid, ModifiedDate)SELECT SalesOrderID, RevisionNumber, OrderDate, DueDate, ShipDate, Status, OnlineOrderFlag, PurchaseOrderNumber, AccountNumber, CustomerID, SalesPersonID, TerritoryID, BillToAddressID, ShipToAddressID, ShipMethodID, CreditCardID, CreditCardApprovalCode, CurrencyRateID, SubTotal, TaxAmt, Freight, Comment, rowguid, ModifiedDateFROM Sales.SalesOrderHeader WITH (HOLDLOCK TABLOCKX)GOSET IDENTITY_INSERT Sales.SalesOrderHeaderEnlarged OFFGOALTER TABLE Sales.SalesOrderHeaderEnlarged ADD CONSTRAINTPK_SalesOrderHeaderEnlarged_SalesOrderID PRIMARY KEY CLUSTERED(SalesOrderID) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOCREATE UNIQUE NONCLUSTERED INDEX AK_SalesOrderHeaderEnlarged_rowguid ON Sales.SalesOrderHeaderEnlarged(rowguid) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOCREATE UNIQUE NONCLUSTERED INDEX AK_SalesOrderHeaderEnlarged_SalesOrderNumber ON Sales.SalesOrderHeaderEnlarged(SalesOrderNumber) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOCREATE NONCLUSTERED INDEX IX_SalesOrderHeaderEnlarged_CustomerID ON Sales.SalesOrderHeaderEnlarged(CustomerID) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOCREATE NONCLUSTERED INDEX IX_SalesOrderHeaderEnlarged_SalesPersonID ON Sales.SalesOrderHeaderEnlarged(SalesPersonID) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOIF OBJECT_ID('Sales.SalesOrderDetailEnlarged') IS NOT NULLDROP TABLE Sales.SalesOrderDetailEnlarged;GOCREATE TABLE Sales.SalesOrderDetailEnlarged(SalesOrderID int NOT NULL,SalesOrderDetailID int NOT NULL IDENTITY (1, 1),CarrierTrackingNumber nvarchar(25) NULL,OrderQty smallint NOT NULL,ProductID int NOT NULL,SpecialOfferID int NOT NULL,UnitPrice money NOT NULL,UnitPriceDiscount money NOT NULL,LineTotal AS (isnull(([UnitPrice]*((1.0)-[UnitPriceDiscount]))*[OrderQty],(0.0))),rowguid uniqueidentifier NOT NULL ROWGUIDCOL,ModifiedDate datetime NOT NULL) ON [PRIMARY]GOSET IDENTITY_INSERT Sales.SalesOrderDetailEnlarged ONGOINSERT INTO Sales.SalesOrderDetailEnlarged (SalesOrderID, SalesOrderDetailID, CarrierTrackingNumber, OrderQty, ProductID, SpecialOfferID, UnitPrice, UnitPriceDiscount, rowguid, ModifiedDate)SELECT SalesOrderID, SalesOrderDetailID, CarrierTrackingNumber, OrderQty, ProductID, SpecialOfferID, UnitPrice, UnitPriceDiscount, rowguid, ModifiedDateFROM Sales.SalesOrderDetail WITH (HOLDLOCK TABLOCKX)GOSET IDENTITY_INSERT Sales.SalesOrderDetailEnlarged OFFGOALTER TABLE Sales.SalesOrderDetailEnlarged ADD CONSTRAINTPK_SalesOrderDetailEnlarged_SalesOrderID_SalesOrderDetailID PRIMARY KEY CLUSTERED(SalesOrderID,SalesOrderDetailID) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOCREATE UNIQUE NONCLUSTERED INDEX AK_SalesOrderDetailEnlarged_rowguid ON Sales.SalesOrderDetailEnlarged(rowguid) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOCREATE NONCLUSTERED INDEX IX_SalesOrderDetailEnlarged_ProductID ON Sales.SalesOrderDetailEnlarged(ProductID) WITH( STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]GOBEGIN TRANSACTIONDECLARE @TableVar TABLE(OrigSalesOrderID int, NewSalesOrderID int)INSERT INTO Sales.SalesOrderHeaderEnlarged(RevisionNumber, OrderDate, DueDate, ShipDate, Status, OnlineOrderFlag,PurchaseOrderNumber, AccountNumber, CustomerID, SalesPersonID, TerritoryID,BillToAddressID, ShipToAddressID, ShipMethodID, CreditCardID,CreditCardApprovalCode, CurrencyRateID, SubTotal, TaxAmt, Freight, Comment,rowguid, ModifiedDate)OUTPUT inserted.Comment, inserted.SalesOrderIDINTO @TableVarSELECT RevisionNumber, DATEADD(dd, number, OrderDate) AS OrderDate,DATEADD(dd, number, DueDate), DATEADD(dd, number, ShipDate),Status, OnlineOrderFlag,PurchaseOrderNumber,AccountNumber,CustomerID, SalesPersonID, TerritoryID, BillToAddressID,ShipToAddressID, ShipMethodID, CreditCardID, CreditCardApprovalCode,CurrencyRateID, SubTotal, TaxAmt, Freight, SalesOrderID,NEWID(), DATEADD(dd, number, ModifiedDate)FROM Sales.SalesOrderHeader AS soh WITH (HOLDLOCK TABLOCKX)CROSS JOIN (SELECT numberFROM ( SELECT TOP 10 numberFROM master.dbo.spt_valuesWHERE type = N'P'AND number < 1000ORDER BY NEWID() DESCUNIONSELECT TOP 10 numberFROM master.dbo.spt_valuesWHERE type = N'P'AND number < 1000ORDER BY NEWID() DESCUNIONSELECT TOP 10 numberFROM master.dbo.spt_valuesWHERE type = N'P'AND number < 1000ORDER BY NEWID() DESCUNIONSELECT TOP 10 numberFROM master.dbo.spt_valuesWHERE type = N'P'AND number < 1000ORDER BY NEWID() DESC) AS tab) AS RandomizerORDER BY OrderDate, numberINSERT INTO Sales.SalesOrderDetailEnlarged(SalesOrderID, CarrierTrackingNumber, OrderQty, ProductID,SpecialOfferID, UnitPrice, UnitPriceDiscount, rowguid, ModifiedDate)SELECTtv.NewSalesOrderID, CarrierTrackingNumber, OrderQty, ProductID,SpecialOfferID, UnitPrice, UnitPriceDiscount, NEWID(), ModifiedDateFROM Sales.SalesOrderDetail AS sodJOIN @TableVar AS tvON sod.SalesOrderID = tv.OrigSalesOrderIDORDER BY sod.SalesOrderDetailIDCOMMITNote this might take several minutes to complete.

- After the script finishes, use the table “Sales.SalesOrderDetailEnlarged” which is already with a suitable size.

- Prepare three scripts in three different tabs:

Script for reading data (select * not a good command but it will work for test)

123SELECT * FROM [AdventureWorks2014].[Sales].[SalesOrderDetailEnlarged]Rebuild offline one of the non-clustered indexes in this table:

1234567USE [AdventureWorks2014]GOALTER INDEX [AK_SalesOrderDetailEnlarged_rowguid] ON [Sales].[SalesOrderDetailEnlarged] REBUILD PARTITION = ALLWITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON)GOExamine the locks in your SQL Server (note to change the session_ids in your environment):

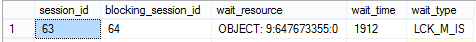

12345678910111213SELECT session_id, blocking_session_id, wait_resource, wait_time, wait_typeFROM sys.dm_exec_requests WHERE session_id = 64 or session_id = 63SELECT request_session_id,request_mode,request_type,request_status,resource_typeFROM sys.dm_tran_locksWHERE request_session_id = 63 or request_session_id=64ORDER BY request_session_id

If we run the query from a., it will work and return the result for around 1 minute without any blockings. Let’s start the index rebuild from b., then switch to a. query and run it and observe the locks with the query c. This time, we are not seeing any rows immediately coming from a. query (session 63) because the offline index rebuild on the non-clustered index is blocking our session (session 64):

- Preparation phase – you need to take at this point a Share lock for the row versioning and the lock is not compatible with an exclusive lock

- Build phase – the phase of populating data into the new index. Typically you should not see any locks here

- Final phase – declare to SQL that the new index is ready. In order this to happen, we need a Schema Modification lock is not compatible with any locks.

- Download database “AdventureWorksDW2012” from here and attach it.

Get an exclusive lock on the table:

1234567USE [AdventureWorksDW2012]GOBEGIN TRANSACTIONUPDATE [dbo].[FactProductInventory]SET UnitsIn=5Start index rebuild operation in another tab:

1234567USE [AdventureWorksDW2012]GOALTER INDEX [PK_FactProductInventory]ON [dbo].[FactProductInventory]REBUILD WITH (ONLINE= ON);Examine the locks with the same script used above (again do not forget to put your session_ids):

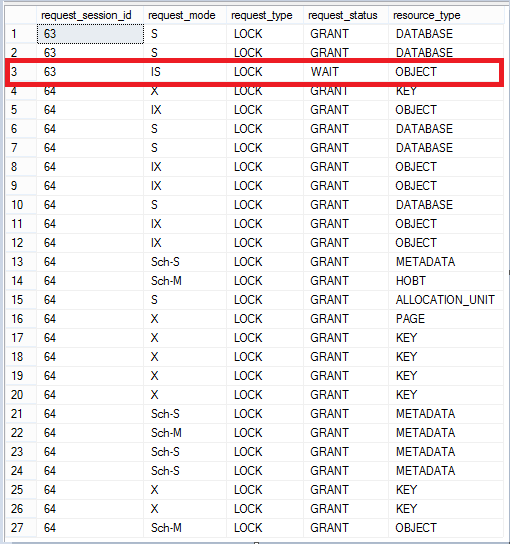

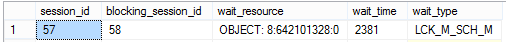

12345678910111213SELECT session_id, blocking_session_id, wait_resource, wait_time, wait_typeFROM sys.dm_exec_requests WHERE session_id = 58 or session_id = 57SELECT request_session_id,request_mode,request_type,request_status,resource_typeFROM sys.dm_tran_locksWHERE request_session_id = 58 or request_session_id=57ORDER BY request_session_id

Session 57 (online index rebuild) is waiting for all of the exclusive locks to be released. This makes the operation unpredictable as we cannot say for sure when the maintenance operation will be able to take this lock and start its work. This might take several seconds or minutes, but in some situations even hours!

Rollback the transaction holding the lock (session 58 in my case) and immediately start it again (you have several seconds to do this before the index rebuild operation finishes). Check the locks now:

Oh no! We are waiting one more time, but for a Schema Modification lock to be granted so the SQL can complete the rebuild operation. This is another unpredictable time interval at the end of our procedure.

Online maintenance is holding locks at the beginning and at the end of our operations, which might be problematic especially on critical and busy production servers. Today online index rebuild might take 1 hour, but tomorrow might need 10 hours or even more!

- MAX_DURATION – how many minutes we will wait to take a lock on the table before SQL takes any actions

- ABORT_AFTER_WAIT – what will actually happen if we are unable to receive the locks within the desired interval

- BLOCKERS – sessions, that are preventing our locks, will be killed

- SELF – our maintenance session will be killed

- NONE – we will keep waiting and nothing will happen (the same behavior we would see if we are not using this feature)

Run this to take an exclusive lock:

1234567USE [AdventureWorksDW2012]GOBEGIN TRANSACTIONUPDATE [dbo].[FactProductInventory]SET UnitsIn=5Start index rebuild operation, using wait at low priority with BLOCKERS option:

1234567ALTER INDEX [PK_FactProductInventory] ON [dbo].[FactProductInventory]REBUILD WITH(ONLINE = ON ( WAIT_AT_LOW_PRIORITY ( MAX_DURATION = 1 MINUTES, ABORT_AFTER_WAIT = BLOCKERS ) ))Check the locks (note to put your session_ids):

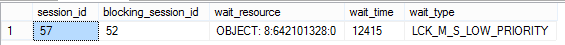

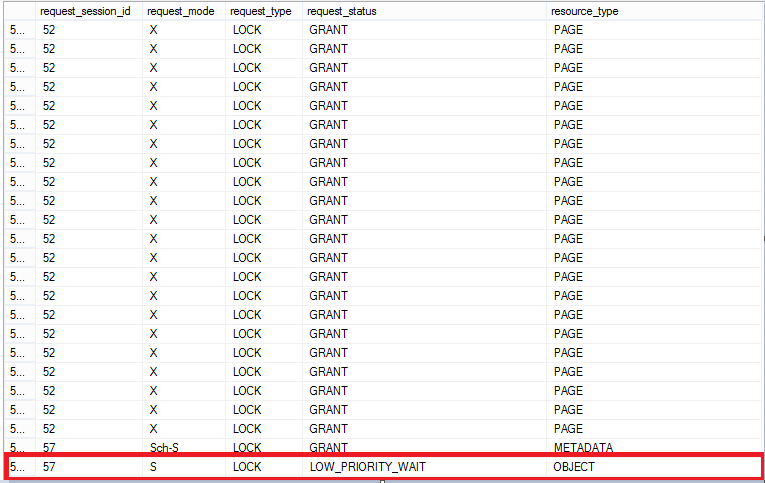

12345678910111213SELECT session_id, blocking_session_id, wait_resource, wait_time, wait_typeFROM sys.dm_exec_requests WHERE session_id = 52 or session_id = 57SELECT request_session_id,request_mode,request_type,request_status,resource_typeFROM sys.dm_tran_locksWHERE request_session_id = 52 or request_session_id=57ORDER BY request_session_id

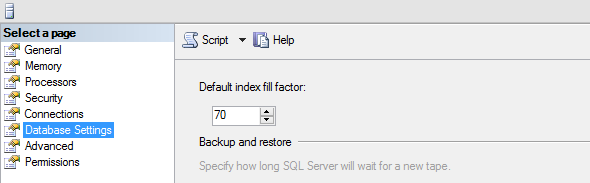

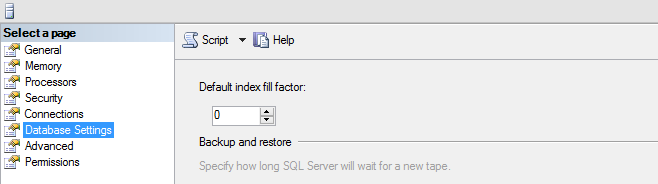

- In order this to take effect, we have to restart SQL Server otherwise the new indexes will still be created with the default fill factor of 0 or 100 %.

Changed the setting without restarting SQL Server:

Use again “AdventureWorksDW2012” database, table dbo.DimProduct without specifying the fill factor:

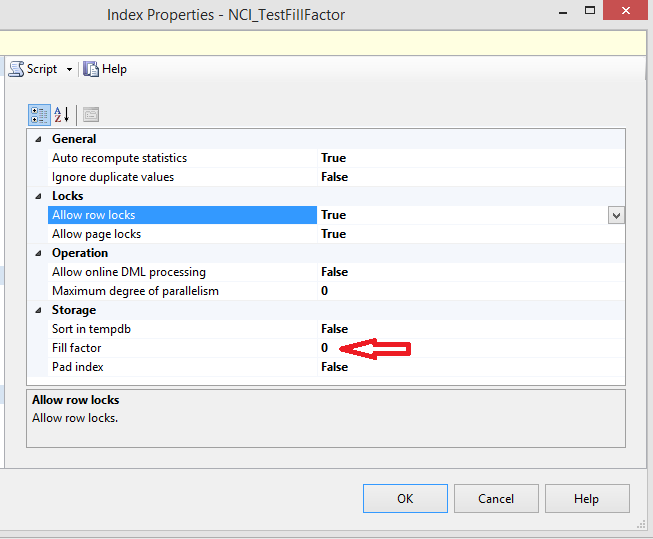

12345678910USE [AdventureWorksDW2012]GOCREATE NONCLUSTERED INDEX [NCI_TestFillFactor] ON [dbo].[DimProduct]([WeightUnitMeasureCode] ASC,[SizeUnitMeasureCode] ASC)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, DROP_EXISTING = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON)GOCheck the fill factor from SSMS:

It is still using the default fill factor.

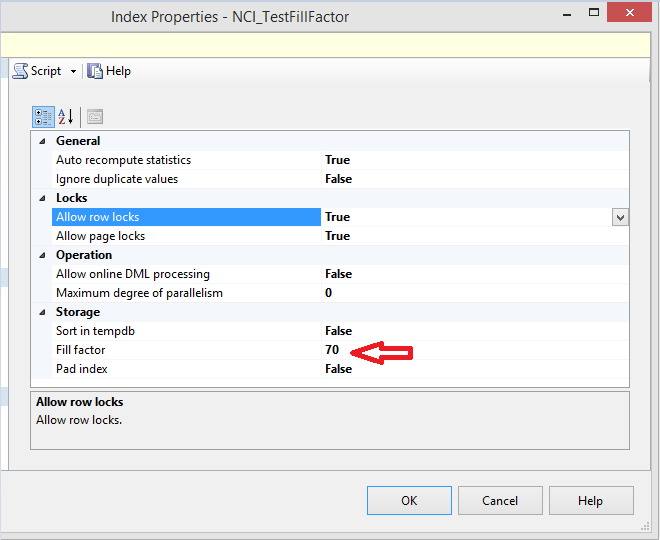

Restart SQL Server, drop the newly created index and recreate it again with the above script

It is now using the instance-level current setting.

- This has no effect on the already created indexes. They will not be rebuild automatically by SQL Server when you change this setting.

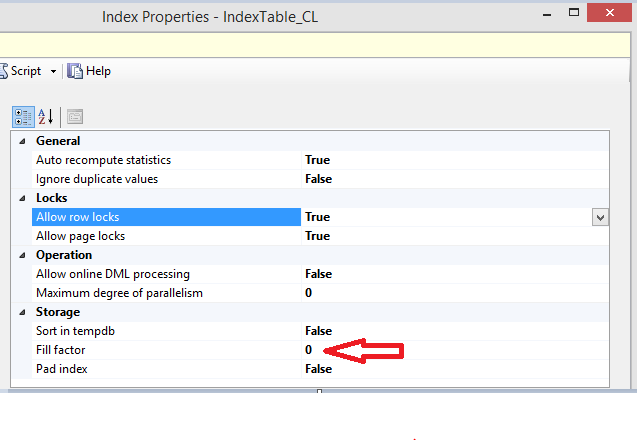

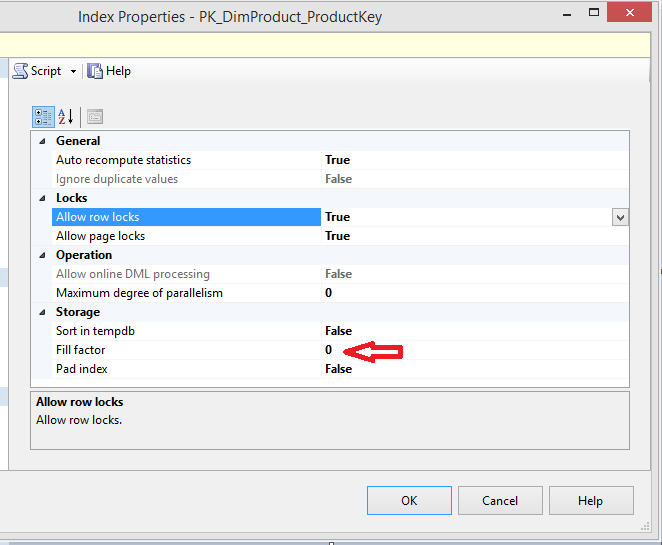

If we take a look at the PK on the same table dbo.DimProduct, it still has fill factor 0 (the one used during the creation of the index):

Even if you do rebuild the indexes after the new fill factor is already in effect and working for new indexes, the old one would still be using the fill factor specified at the time of their creation unless you specify a new one in the rebuild command.

Rebuild the index with this query:

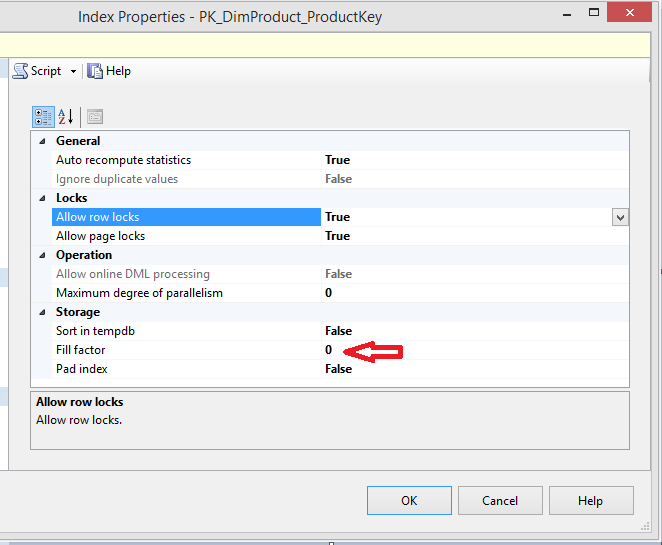

1234USE [AdventureWorksDW2012]ALTER INDEX [PK_DimProduct_ProductKey] ON [dbo].[DimProduct] REBUILDCheck the fill factor now:

It has not been changed even after the rebuild operation.

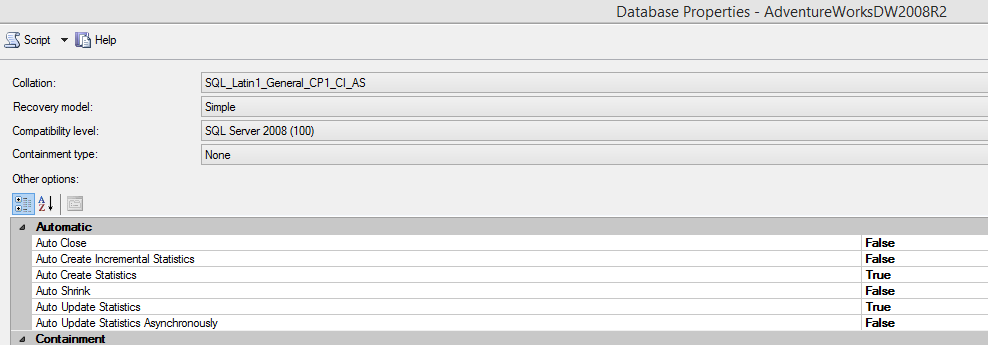

- Grab “AdventureWorksDW2008R2” database from here and attach it

Run this script to turn off Auto Create Statistics for this database:

123456USE [master]GOALTER DATABASE [AdventureWorksDW2008R2] SET AUTO_CREATE_STATISTICS OFF WITH NO_WAITGOInclude the actual execution plan and start this query:

1234567USE [AdventureWorksDW2008R2]GOSELECT RevisionNumberFROM dbo.FactInternetSalesWHERE TaxAmt = 5.08

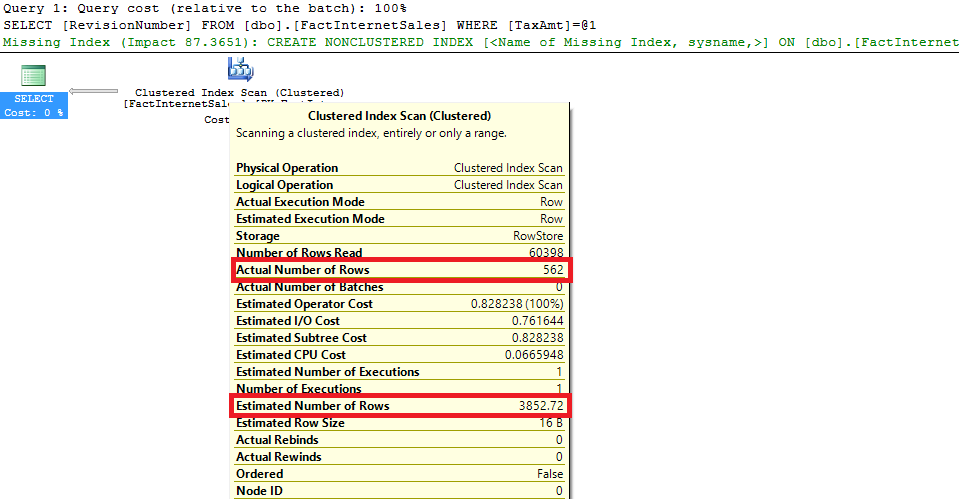

There is a great difference in the actual and estimated number of rows for this query which is an issue in most of the situations.

We can create an index on column “TaxAmt” and solve our problems but in this case, I would like to enable Auto Create Statistics and start the query again:

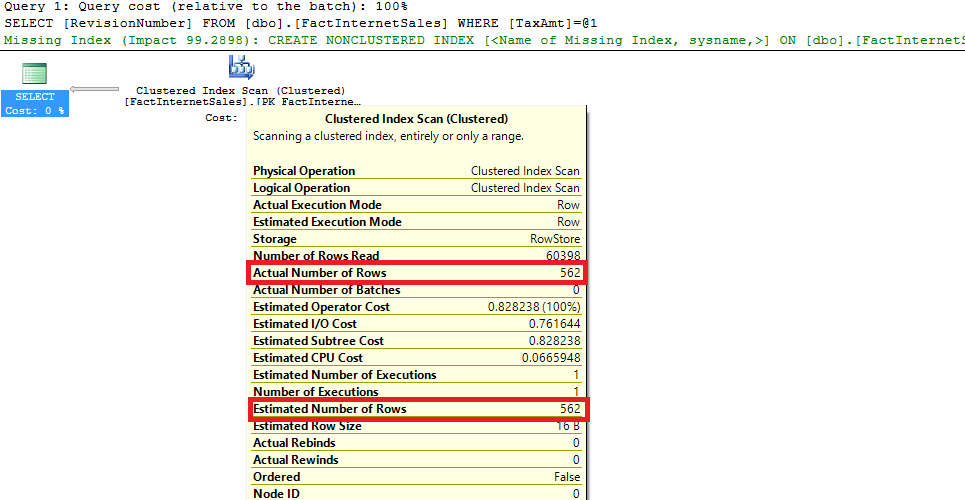

1234567891011USE [master]GOALTER DATABASE [AdventureWorksDW2008R2] SET AUTO_CREATE_STATISTICS ON WITH NO_WAITGOUSE [AdventureWorksDW2008R2]GOSELECT RevisionNumberFROM dbo.FactInternetSalesWHERE TaxAmt = 5.08

SQL Server created a column statistic that helped to receive a better cardinality.

- Index Rebuild operation is updating index statistics. This is always and it is happening with 100 % sample. General mistake is to include update statistics task for all statistics after index rebuild. Not only we will do index statistics update twice, but we will also loose the “free” 100 % sampled stats update due to the index rebuild operation as SQL Server, by default, is doing this using a dynamically determined sample lower than 100 %.

- If we are doing some kind of a “clever” maintenance based on the fragmentation of our indexes, we have to take care of the statistics attached to the indexes being maintained with Reorganize operation. Index Reorganize does not update index statistics and they might become stale in the future.

Regardless of the index operations being done on our SQL Server, we must make sure that column statistics are being updated as well.

- Create a “clever” maintenance to rebuild or reorganize indexes based on their fragmentation

- Update all of the statistics, but only if there have been changes since the most recent update

- If we reorganize an index, the second task will update its statistics (if there have been changes in the data, of course)

- If we rebuild an index, the second task will skip index statistics update for this particular index (unless there have been changes between the rebuild and statistics update operations)

- We make sure that columns statistics are being taken care of

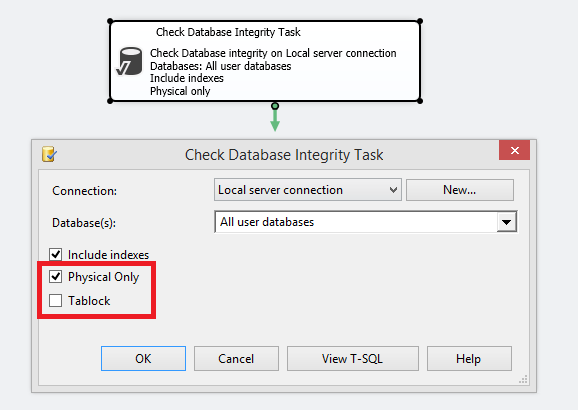

- Use always with NO_INFOMSGS – it is less likely that you will miss a critical message and this can reduce the execution time of the integrity check (the effect is greater on small databases).

- Use with PHYSICAL_ONLY – DATA_PURITY checks are taking a significant portion of the whole duration of the CHECKDB operation so it is a must to skip it for very large databases. Note that you still have to plan a complete CHECKDB operation once in a while – for example, every one month.

- Offload the checks to a secondary server – this is a great option, but unfortunately, the only one that gives you 100% guarantee is to have a backup of your production database restored on another server and run integrity checks there. HA/DR solutions (like AlwaysOn, SAN mirroring, snapshot on mirrored database) are not that trustworthy as you are involving two different storage subsystems.

- TF 2549 – instruct SQL to treat each database file as residing on a unique disk. The idea is that there is an internal list of pages that SQL has to read during this process and the list is being built per unique database drives across all files. SQL Server is making the decision based on the drive letter which means in a classic situation where you have, for example, 4 data files, residing on different LUNs, but on the same root drive, you will not take advantage of the fact that your files are actually scattered.

- TF 2562 – at the cost of higher TEMPDB utilization (usually up to 10 % of the size of the database), SQL Server is optimizing the integrity check process by treating it as one batch and reducing the latch contention (DBCC_MULTIOBJECT_SCANNER latch). Note that this flag is part of the SQL 2012 code so you do not have to turn it on, if you are running on this SQL or higher.

- TF 2528 – CHECKDB is multi-threaded by default, but only in Enterprise edition. It is not unlikely to detect CPU contentions caused by your integrity checks. By using this TF, you can turn off the parallelism for CHECKDB.

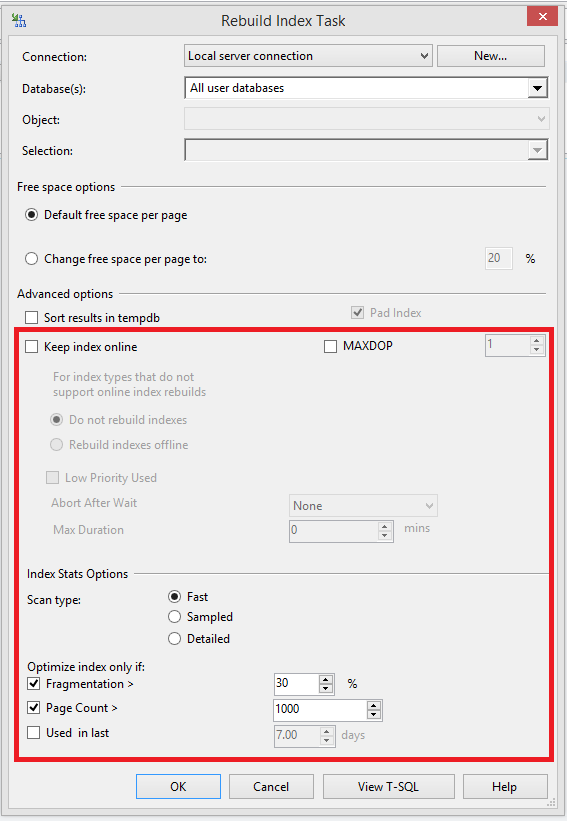

Integrity checks now have PHYSICAL_ONLY and TABLOCK options

Tons of new stuff in Index rebuild: MAXDOP, WAIT_AT_LOW_PRIORITY, Fragmentation scan type, % Fragmentation, Page count and if the Index has been used:

- How to perform backup and restore operations on SQL Server stretch databases - September 7, 2016

- SQL Server stretch databases – Moving your “cold” data to the Cloud - August 18, 2016

- Tips and tricks for SQL Server database maintenance optimization - January 11, 2016

Index Reorganize is a useful operation when your fragmentation is below 20-30 %, you do not need to change the original fill factor of your index and you are planning on doing index statistics update at a later point in time.

Index Rebuild operation

We already covered that index rebuild is the approach you should use when the fragmentation is high. When you use this method, there are two options:

Offline index rebuild means really offline! While an index is being created, dropped or rebuilt offline, the table cannot be accessed and this is valid for non-clustered indexes as well:

Even this was a non-clustered index, we were not able to read our data while the rebuild operation was taking place.

The only exception to this rule is that we are allowed to read data from the table while a non-clustered index is being created offline.

We now know for sure that offline operations are really offline. I guess this is very intuitive so we did not reinvent the wheel. The interesting point is when it comes to online operations. Are they really online?

Let’s see!

Before we go into more details, keep in mind that this is an Enterprise edition feature only.

Online operations are divided into three phases (if you are keen on learning more details, please check this whitepaper):

Example:

Wait at low priority

With SQL Server 2014, Microsoft has shipped a new functionality which could be very useful in relieving our online index operations. The feature is called: wait at low priority. It is now possible to have an influence on what is happening with our maintenance after a predefined period of time elapsed and we are still not able to acquire the required locks:

|

1 2 3 |

ONLINE = ON (WAIT_AT_LOW_PRIORITY (MAX_DURATION = 1 MINUTES, ABORT_AFTER_WAIT = BLOCKERS|SELF|NONE)) |

In this scenario we will use again “AdventureWorksDW2012” database – if you do not keep it from the previous demos, grab it from here and attach it:

Session 57, online index operation, is waiting again for a Shared lock, but, this time, waiting at low priority. After 1 minute in our case, SQL Server will kill the queries preventing us from taking this Shared lock on the table, if they have not succeeded yet and the rebuild operation will start. The good news is that we have these stuff logged in the error log:

Message

An ‘ALTER INDEX REBUILD’ statement was executed on object ‘dbo.FactProductInventory’ by hostname ‘XXXXX’, host process ID 6124 using the WAIT_AT_LOW_PRIORITY options with MAX_DURATION = 1 and ABORT_AFTER_WAIT = BLOCKERS. Blocking user sessions will be killed after the max duration of waiting time.

Message

An ABORT_AFTER_WAIT = BLOCKERS lock request was issued on database_id = 8, object_id = 642101328. All blocking user sessions will be killed.

Message

Process ID 52 was killed by an ABORT_AFTER_WAIT = BLOCKERS DDL statement on database_id = 8, object_id = 642101328.

The transaction holding the lock has been killed and we were able to complete our very important rebuild operation on time 🙂

This is a nice feature, but we have to be very careful with it! Depending on what kind of transactions are using the different tables and indexes respectively, we have to make an educated and informed choice how and if we are going to utilize this new SQL option.

Fill factor

When we are creating indexes in SQL Server, there is one very important property – fill factor: it is instructing what percentage of each leaf-level page we would like to be filled up. Recommendations are to play with the fill factor for indexes that are becoming fragmented very often. We will not go into details about page splits and how pages in indexes are becoming out of order. The focus will be what the influence is if we change the default instance-level fill factor.

SQL Server is being shipped with a fill factor of 0:

This means that if we do not specify a new fill factor during index creation, we will try to fill the leaf-level pages as much as possible (fullness will be a number close to 100 %). If there are a lot of fragmented indexes in our instance and all of the databases residing there are being utilized in a similar manner (in terms of a number of read/write operations) we can benefit of changing this setting.

Keep in mind several important stuff:

I have heard a lot of questions regarding what is happening with the indexes when I change the instance-level fill factor, so I hope you now have a better understanding 🙂

Note that on the majority of the occasions it is not recommended to change the instance-level fill factor. The better approach is to leave this at 0 and change it only for specific indexes where you have noticed a lot of fragmentation and expecting heavy page split operations.

Statistics

Statistics are very important objects in our databases as they are being used to produce optimal query execution plans. There are two types of statistics: index and column statistics. Index statistics are always generated when a new index is created. There is no way to circumvent this behavior! Column statistics are being built by SQL Server in order to have a better understanding of the data distribution and decide what the best option to execute a certain query is. Unlike index statistics, we can have our word for column ones:

On database level, we can disable Auto Create Statistics and this will be valid only for the column ones.

Let’s see a simple example:

As we now understand the importance of the statistics, we have to take care of them and include a task in our maintenance that is going to keep these objects in a good shape. Here we need to take into account several critical aspects:

Starting with SQL Server 2008 R2 SP 2, we have “modification_counter” column part of “sys.dm_db_stats_properties” which we can utilize to determine if there have been any rows modification since the most recent statistics update. This might be very useful in the following scenario:

Integrity checks

Last but not least, we will take a look the database integrity and consistency checks. This is another task that must be part of your regular maintenance. Nowadays we are all aware how critical the integrity of databases is, but we tend to schedule it by default without any additional tweaks. Of course, this is the better situation compared to the one where we do not have this at all. However, we need to customize this in order to take the maximum out of it.

Here are my tips how to reduce the duration of your integrity checks and still rely on them:

With using PHYSICAL_ONLY and TF 2549, 2562, we have managed to reduce the time for the integrity check of a 10 TB database to around 5 hours! This was a great achievement as prior to these optimizations, the CHECKDB has been finishing for around 24 hours.

New maintenance plans options in the GUI since CTP 2.4

Before SQL Server 2016 CPT 2.4, our options for creating maintenance plans via the GUI in SSMS have been very limited. Usually, in order to create a good, reliable maintenance, we needed to implement it dynamically with special scripts. This is still the best option that gives you maximum customization, but now some of the restrictions have been lifted and there are new options in the GUI:

As SQL administrators, we cannot deny that we are in love with the SSMS and GUI Since CTP 2.4 of SQL Server 2016, we have the ability to produce good enough maintenance plans with only several clicks and without the need to write complex customized solutions.

Hope the material covered will be useful for you and, at least, some of the suggestions will be utilized to improve your current maintenance setup Keep in mind that it is important to customize your maintenance it as this might save you a lot of troubles and sleepless nights.

Thanks for reading!