As our company has grown, we’ve recently added developers to our team who want to use open source tools (open source languages and libraries). In the past, we built and used our own custom libraries, but our new developers prefer to use open source libraries or add new languages that require new libraries. We’re concerned that open source libraries may not be secure and may introduce new inputs and outputs in our system that we don’t fully understand. What should we consider, from the standpoint of security, when we think about allowing open source software, tools or languages in our environment?

Overview

While we can use open source libraries to help us build software faster, depending on our situation, the libraries may introduce unintended effects to our applications. We’ll first look at some of the advantages of open source software and then evaluate some of its drawbacks in the context of security. Since different companies have different levels of scrutiny regarding security, and in some cases different applications require different levels of scrutiny, open source may offer us a useful tool in some situations, while in others we may want to avoid open source tools completely.

Advantages

We can obtain many benefits from using open source software tools. The greatest advantages for companies where these tools apply are:

- Open source languages and (or) libraries can increase the speed of delivery for our software by reducing the time it takes to develop tools. If a tool solves a problem that we face and would take us significant time to develop ourselves, it can save us that development time.

- Open source languages and (or) libraries may be used frequently, which means that questions involving these tools are common and often already have answers. While this can be a major advantage in some situations, it can also be a major problem. What if the person asking a question is a hacker trying to compromise something? For example, a question that begins “I’ve lost access to” or “someone compromises us and we need” could come from a different actor than the one the question implies.

- Open source languages and (or) libraries can help us find technical talent more easily, as we may otherwise be using tools which require talent that we struggle to find. In the case of some languages that have robust security, it can be very difficult to find people who know these languages in addition to knowing how to secure their applications.

- If open source languages and (or) libraries are heavily scrutinized from a security standpoint and we have evidence that this is the case (not assumed), these may be more robust than closed source tools as each line of code that is changed is scrutinized. Provided that we also contribute to the tool while also ensuring the community surrounding it watches security carefully, we can increase the probability of preventing security problems. This point becomes a weakness if we assume this is true for the language and (or) library without evidence.

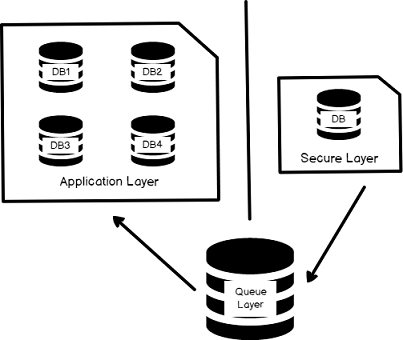

Even when we’re considering security, we should not overlook the advantages of open source tools, as these advantages can help us in some areas. As we see in the below image, in many environments we may be able to demarcate the applications which require strict security from applications which may not carry as strict rules. Consider a data environment where we have PII data, which must have strict rules, alongside data from public sources. We could keep and analyze the public data in open source tools, while keeping our PII data separate in a more secure layer, with a queue layer that delays communication between the secure and application layers (see below image). This means that we can use open source tooling in less strict environments (the “application layer” in the below image) if security is a concern, or we can architect our environment in a manner that allows us to use open source tooling anywhere, such as by refraining from storing data that could compromise our environment.

Disadvantages

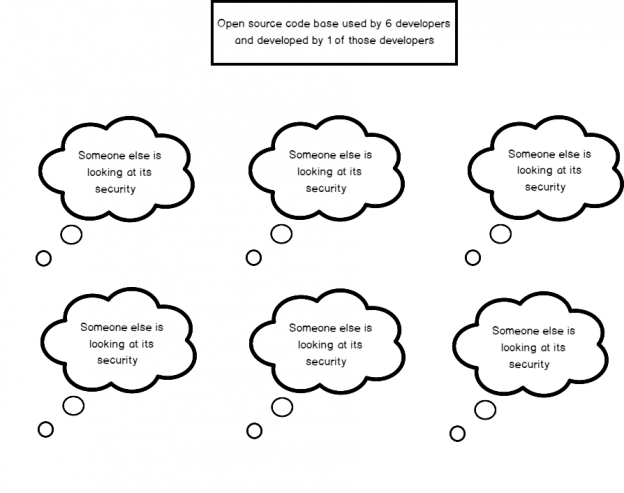

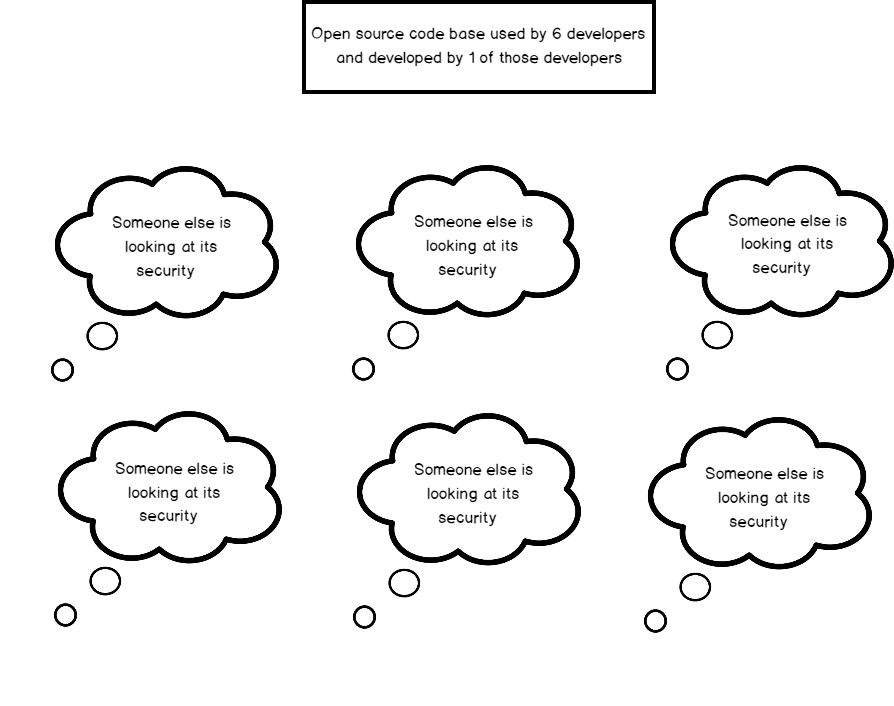

In the advantages of open source, I did not include the “many eyes” argument that we often hear made without evidence, and there’s a reason why. While I agree with some people that open source tools can come with many people scrutinizing the tool, this is often only true for a small set of the overall open source universe. There’s also a danger in this argument which social psychologists call the diffusion of responsibility (see below image) – “I don’t need to worry about the security of this library because other people are looking at it.” How do we actually know this?

This problem compounds when we see a proliferation of new open source tools, as attention to each tool begins to thin, increasing the probability that a flawed design is checked in to the tool. As one security expert once warned me, “Hackers rely on developers using tools they don’t fully understand.” If a developer can only explain what the library does, that’s not enough. Where are the possible security holes in the library?

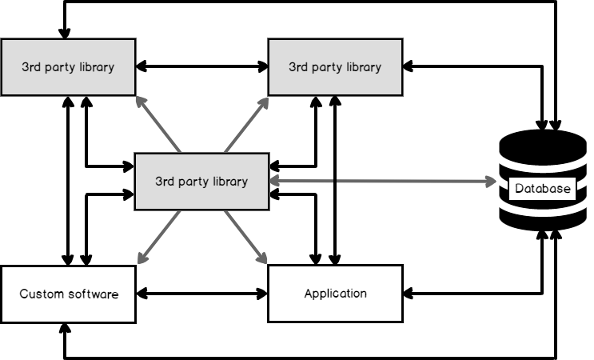

Another drawback to open source tools is that they add new inputs and outputs in our system (communication) among actors (applications, databases, tools, etc.) that we may not fully understand or monitor, especially if we don’t thoroughly test them. For example, suppose that we use a tool that needs to be updated from time to time and experiences bugs if it isn’t updated. To update software means we have to add something to our system – an input into our system. This drawback increases if we assume that others are carefully evaluating the library when they are not. Tools as simple as browsers have experienced zero-day compromises, so even simpler software that we assume is safe can come with dangers.

How to address the disadvantages

First, security concerns do not apply to every company equally, as we’re seeing a growing number of companies opting out of storing data that require complex security. This doesn’t mean that these companies can do anything they want, but it does mean they have more flexibility when they’re considering how they want to design their applications. As we continue to see security compromises, these companies will continue to have a competitive edge.

Second, we should recognize when we’re faced with the diffusion of responsibility regarding an open source tool – when people assume someone else is measuring the security of the tool. Some of the patterns that signal this with open source tools are:

- Developers emphasize the convenience of the tool, but not the security. A great example of this is an about page explaining the tool, but with no discussion about security.

- We get no answers or deferred answers about security when we ask about the security of the open source tool. A great example of this is when we ask about security and we get the reply, “Other developers are looking at that” but no names are mentioned and we have no reference points for who these developers are.

- We cannot answer how to keep the communication (inputs and outputs) of the language or tools safe or monitored. If a tool will need to be updated from time to time, how will we ensure that the update doesn’t install code that could compromise our system? Will we always allow the application to update whenever it needs to?

- The open source language and (or) library is very new and hasn’t been challenged. Anything new should be thoroughly tested in development before ever considering it in a higher environment (this also includes non-open source languages and libraries).
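One way to approach the update question raised in the list above is to accept only update artifacts whose contents match a digest we recorded when we reviewed that release. The following is a minimal sketch under stated assumptions: the `PINNED_SHA256` allowlist, the `examplelib-1.2.0.tar.gz` file name, and the `is_approved_update` helper are all hypothetical and exist only for illustration.

```python
import hashlib
import hmac

# Hypothetical allowlist: artifact name -> SHA-256 digest recorded when the
# release was reviewed. The entry below is illustrative, not a real package.
PINNED_SHA256 = {
    "examplelib-1.2.0.tar.gz": hashlib.sha256(b"reviewed release contents").hexdigest(),
}


def is_approved_update(name: str, payload: bytes) -> bool:
    """Return True only if the artifact matches the digest we reviewed."""
    expected = PINNED_SHA256.get(name)
    if expected is None:
        # Unknown artifacts are rejected rather than trusted by default.
        return False
    actual = hashlib.sha256(payload).hexdigest()
    # compare_digest performs a constant-time comparison of the two digests.
    return hmac.compare_digest(actual, expected)
```

With a check like this, an update that has not been reviewed, or whose contents differ from what was reviewed, is refused instead of being installed automatically. It does not tell us whether the reviewed release itself is safe; that still depends on the evaluation described in this section.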

Once we know who’s evaluating the tool from an open source perspective, we can query the developers about our security concerns and our intended use. In addition to their answers, we can also check this information for ourselves. In a similar manner, if we’re reading about an open source tool, we should start with security, then look at what it offers. If we’re not comfortable with the security, we should avoid it, regardless of its convenience.

To prevent watering hole hacks, never discuss which open source libraries you use outside of appropriate channels, and when contacting the developers behind an open source library, do not contact them with any affiliation to a company. While watering hole attacks are generally associated with websites, these may be used with open source tools where hackers identify popular tools and try to compromise them. Never forget that a hacker can create or contribute to an open source tool with the intent of compromising the people who use it.

Third, when we have applications with communication involving secure information and data, we should design in a manner that allows us to be strict in our validation of the communication. An example of this would be a request for a customer’s PII data which is placed into a queue, where the queue delays the information from being sent for a period while the request is validated (again) and the data are then sent securely. The faster we can get our own data, the faster a hacker can. If we delay requests for high priority data, the hacker has another obstacle too.
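The delayed queue described above could be sketched roughly as follows. This is an illustration under assumptions rather than a prescribed design: the `DelayedRequestQueue` class, its `revalidate` callback and the requester names are hypothetical, and the current time is passed in explicitly so the behavior is easy to follow.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class DelayedRequestQueue:
    """Holds requests for sensitive data until a delay passes, then re-validates."""
    delay_seconds: float
    revalidate: Callable[[str], bool]  # hypothetical second validation step
    _heap: List[Tuple[float, int, str]] = field(default_factory=list)
    _counter: itertools.count = field(default_factory=itertools.count)

    def submit(self, requester: str, now: float) -> None:
        # Nothing is released at submit time; the request only becomes
        # eligible once the delay has elapsed.
        release_at = now + self.delay_seconds
        heapq.heappush(self._heap, (release_at, next(self._counter), requester))

    def release_due(self, now: float) -> List[str]:
        """Return requesters whose delay has passed and who pass validation again."""
        approved = []
        while self._heap and self._heap[0][0] <= now:
            _, _, requester = heapq.heappop(self._heap)
            if self.revalidate(requester):
                approved.append(requester)
        return approved


# Usage: a requester whose access was revoked during the delay gets nothing.
queue = DelayedRequestQueue(delay_seconds=60, revalidate=lambda r: r != "revoked-user")
queue.submit("analyst", now=0)
queue.submit("revoked-user", now=0)
```

The delay gives us a window in which a compromised account can be detected and revoked before any data leaves the secure layer, at the cost of slower access for legitimate requests.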

Fourth, if we use an open source tool anywhere that we handle client or third-party private or personal data, we should inform these individuals and give them the context, such as “we use open source tools for our front-end reports” or “we use open source tools for our back-end which stores your private data.”
