You have to agree with me: when public clouds were introduced, your first thought was that you would never put your production data there. The common belief was that data in the cloud is exposed, insecure and vulnerable. That was not the case back then, and it is not the case now.

Microsoft Azure has implemented numerous features that help us secure our Azure SQL Databases on many different levels, from securing inbound connections to encrypting the whole database. Add the ability to set up firewall rules and server and database audits, and I would argue that Azure is now quite a safe place to run a SQL database.

We can divide the current options for protecting our SQL databases in Azure into three major categories:

- Monitoring the activities

- Controlling the access

- Physically protecting the data

Starting with monitoring: we can enable a server-wide audit that is inherited by default by each database created within the logical server. Of course, we can instead disable auditing at the server level and enable it on individual databases, or keep the server-level audit and exclude only certain databases.

The default configuration that we see when navigating to the “Auditing & Threat detection” tab is as follows:

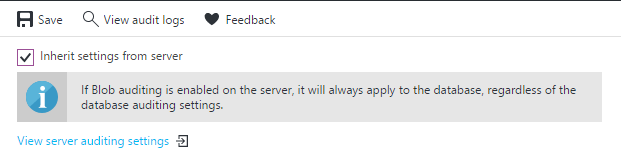

When we disable the “Inherit settings from server” option, additional configuration options appear.

We can choose whether to enable auditing and threat detection, where to store our audits, which threat detection types to use, and which email address should be notified about a new threat. The recommendation is to keep the audits in blob storage, as it is both faster and less expensive than using a Table, which is now also deprecated and will not be available in the future.

Once the audit settings are altered and saved, we have the possibility to directly review the stored data from the portal using the “View audit logs” button.

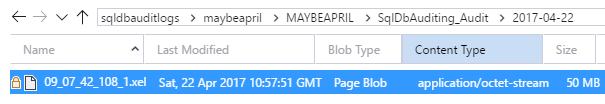

Of course, there are other ways of reviewing the log and extracting the required data; one of them is to use the “Microsoft Azure Storage Explorer”.

Note that the audit logs are stored as .xel files and you can use SSMS to review them as well.

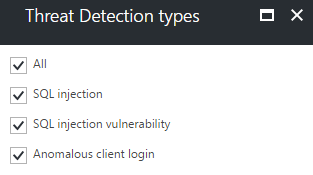
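If you prefer to query the audit records rather than browse them, the `sys.fn_get_audit_file` function can read the .xel files directly from blob storage. The following is only a minimal sketch: the storage account, server and database names in the URL are hypothetical placeholders, and it assumes your login is allowed to read the audit container.

```sql
-- Read the most recent audit records straight from blob storage.
-- The URL is a hypothetical placeholder: replace the storage account,
-- server and database names with your own.
SELECT TOP (100)
       event_time,
       action_id,
       succeeded,
       server_principal_name,
       client_ip,
       statement
FROM sys.fn_get_audit_file(
         'https://mystorageaccount.blob.core.windows.net/sqldbauditlogs/myserver/mydatabase/',
         DEFAULT,
         DEFAULT)
ORDER BY event_time DESC;
```

Pointing the first argument at a folder reads every audit file below it; pointing it at a single .xel file limits the result to that file.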

Threat detection (currently in public preview) is activated in a similar way. In addition, we can choose which kinds of threats we would like to monitor: SQL Injection, SQL Injection vulnerability, Anomalous client login, or all of them.

Once this is configured, our SQL database is monitored automatically. In the background, three different methods work for us: integrated threat intelligence, behavioural analytics and anomaly detection. Threat intelligence and behavioural analytics rely on the insights that Microsoft gathers from its broad range of services and security teams, and on the known patterns of different attacks.

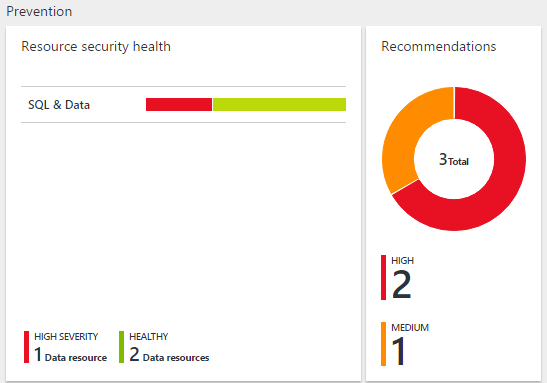

Anomaly detection is a more personalized solution that uses machine learning to get familiar with our environment and to spot any unusual actions that deviate from the norm. All of the security recommendations and threats are reported in a central location named “Azure Security Center”. It also lets you integrate directly with Power BI, or take the recommended actions to secure your environments directly from the Security Center.

To control access to our SQL databases, we can rely on the Azure firewall rules or on technologies that we are familiar with from on-premises implementations: Active Directory authentication, dynamic data masking and row-level security.

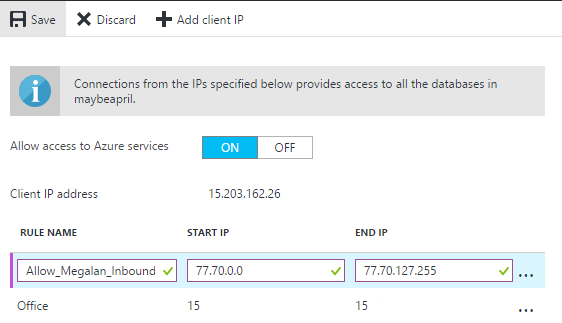

The firewall implementation is fairly simple: we have server-level and database-level rules that allow a range of IPs to access our resources. Unless a rule is explicitly created, no one has access to our SQL databases. Server-level rules can be created from the portal by specifying the start and end IPs of a range.

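Database-level rules can be created only with T-SQL. The stored procedure “sp_set_firewall_rule” creates a server-level rule and “sp_delete_firewall_rule” deletes one; both are run in the master database. Their database-level counterparts are “sp_set_database_firewall_rule” and “sp_delete_database_firewall_rule”, which are run in the user database itself.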

```sql
-- Allow inbound connections from the 77.70.0.0 - 77.70.127.255 range
EXECUTE sp_set_firewall_rule
    @name = N'Allow_Megalan_Inbound',
    @start_ip_address = '77.70.0.0',
    @end_ip_address = '77.70.127.255';
```
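For a rule that applies to a single database only, a sketch along the following lines should work when run inside that user database rather than master. The rule name and the IP range (taken from a documentation-only address block) are illustrative assumptions.

```sql
-- Run inside the user database, not master.
-- Rule name and IP range are hypothetical placeholders.
EXECUTE sp_set_database_firewall_rule
    @name = N'Allow_Office_Inbound',
    @start_ip_address = '203.0.113.0',
    @end_ip_address = '203.0.113.255';

-- List the database-level rules that currently exist
SELECT name, start_ip_address, end_ip_address
FROM sys.database_firewall_rules;

-- Remove the rule again when it is no longer needed
EXECUTE sp_delete_database_firewall_rule
    @name = N'Allow_Office_Inbound';
```

Database-level rules travel with the database, which can be convenient for geo-replicated or copied databases.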

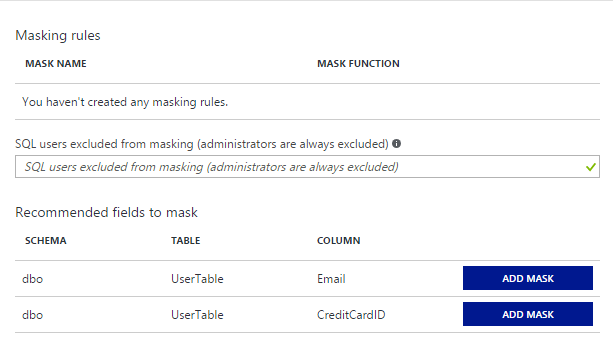

Dynamic data masking has its own configuration tab as well, where we can see recommendations for columns that contain sensitive data and could benefit from masking.

Adding a recommended mask is fairly simple: we only need to choose “Add mask” for the column in question and then save the changes.

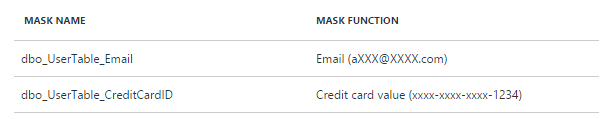

Azure is able to determine the type of data stored in a column and choose a suitable masking function. In our case the email masking function hides most of the email address, and the credit card masking function hides everything except the last four digits.

The other masking functions at our disposal are “Default value”, “Random value” and “Custom string”.

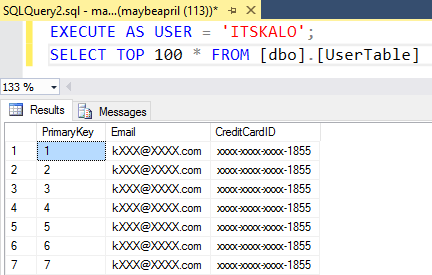
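The same masks can also be defined with T-SQL. The sketch below assumes a hypothetical `dbo.Customers` table with the columns shown; the names are not taken from the screenshots above.

```sql
-- Table and column names are hypothetical placeholders.

-- Email: exposes the first letter and a constant suffix
ALTER TABLE dbo.Customers
    ALTER COLUMN Email ADD MASKED WITH (FUNCTION = 'email()');

-- Custom string: hide everything except the last four digits
ALTER TABLE dbo.Customers
    ALTER COLUMN CreditCardNumber
    ADD MASKED WITH (FUNCTION = 'partial(0,"XXXX-XXXX-XXXX-",4)');

-- Default value: full masking according to the column's data type
ALTER TABLE dbo.Customers
    ALTER COLUMN Phone ADD MASKED WITH (FUNCTION = 'default()');

-- Random value: numeric columns only (LoyaltyPoints is assumed to be an int)
ALTER TABLE dbo.Customers
    ALTER COLUMN LoyaltyPoints ADD MASKED WITH (FUNCTION = 'random(1, 100)');
```

Masking changes only what is returned to the client; the stored data itself is not altered.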

Once a masking function is enabled, only administrators and users that are explicitly listed can see the unmasked data. For everyone else it is displayed as follows:

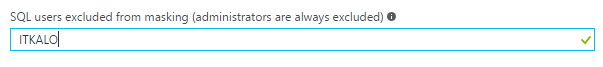

To grant a user the right to see unmasked data, the user has to be listed among the SQL users excluded from masking on the same configuration tab.
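In T-SQL the same effect can be achieved with the `UNMASK` permission. This is a minimal sketch; `ReportingUser` and `dbo.Customers` are hypothetical names used only for illustration.

```sql
-- 'ReportingUser' is a hypothetical database user.
GRANT UNMASK TO ReportingUser;

-- Check the result by impersonating the user
EXECUTE AS USER = 'ReportingUser';
SELECT TOP (5) Email, CreditCardNumber
FROM dbo.Customers;          -- hypothetical table with masked columns
REVERT;

-- Take the permission away again
REVOKE UNMASK FROM ReportingUser;
```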

Physically protecting your data is quite important, especially when your database resides in somebody else's datacentre. Encrypting your data and making it readable only with a certificate or key can be done by using either Transparent Data Encryption (TDE) or Always Encrypted.

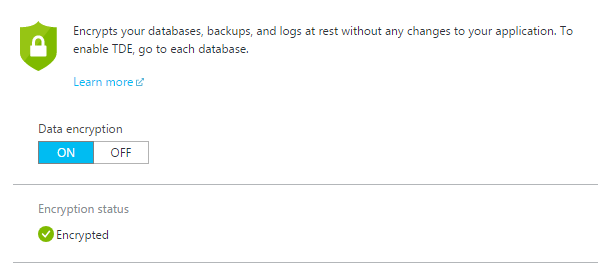

Transparent data encryption for SQL databases in Azure is easily configured through its configuration tab. With SQL databases we cannot control where the keys and certificates are placed. With a SQL Server hosted on a virtual machine, we have the additional option of using an asymmetric key from Azure Key Vault.
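TDE can also be switched on and checked with T-SQL instead of the portal. The sketch below assumes a database named `MyDatabase`, which is a placeholder, and a login with sufficient rights on the logical server.

```sql
-- 'MyDatabase' is a hypothetical database name.
-- Run the ALTER DATABASE statement as its own batch.
ALTER DATABASE [MyDatabase] SET ENCRYPTION ON;
GO

-- is_encrypted = 1 once TDE is enabled for the database
SELECT name, is_encrypted
FROM sys.databases
WHERE name = N'MyDatabase';

-- encryption_state: 2 = encryption in progress, 3 = encrypted
SELECT DB_NAME(database_id) AS database_name,
       encryption_state,
       percent_complete
FROM sys.dm_database_encryption_keys;
```

On a large database the initial encryption runs in the background, so `percent_complete` is a handy way to follow its progress.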

Once TDE is configured, the physical files of your database are encrypted and can be accessed only by a SQL engine that has the required certificates and keys. In addition, the database backups, logs and geo-replicated copies are encrypted as well, and Azure manages all of the required actions on our behalf. When a database is exported from Azure, the resulting .bacpac file is not encrypted, and it is our responsibility to secure it once we have it.
