Understanding indexing needs allows us to ensure that important processes run efficiently and that our server hardware is not being over-taxed by poorly performing queries.

Collecting metrics on SQL Server index usage and missing index needs is critical to making smart decisions. To be truly proactive, though, we need to create a framework that allows us to quickly, efficiently, and regularly report on changes in indexing needs. With a system in place that can let us know when changes are needed, we can stay ahead of the optimization game and keep our customers happy!

Introduction

In previous articles, we built processes that regularly collect and aggregate data about missing SQL Server indexes and index usage statistics. Using this information, we can take additional steps to analyze this data and begin to make decisions based on it. Our goal is to build a reporting framework that can be run as often as desired where the results are stored in a table for easy retrieval.

Once we report on index usage or missing SQL Server indexes, we can generate recommendations. Each time the process is run, we can check those recommendations and determine what has changed and if they have become more or less relevant over time. If we implemented a suggestion, we can then track the results and determine if it was a good idea, or perhaps not worth the cost.

The goal is to allow us to fully understand indexing on our important databases and to be able to proactively act on this information. Things change all the time, and being able to quantify that change before and after any database or application changes will allow us to stay on top of indexing. Our reaction times to change will be far better and, hopefully, we’ll avoid the pain of performance troubles that could have been prevented had we had more information available up-front.

SQL Server Index Metrics: A Review

In our previous work, we developed processes that collected data about index usage. First, we worked with index usage statistics, which allow us to understand how often indexes are read or written to. Using this data, we can determine if an index is unused, minimally used, or misused. Once we understand those results, we can make a decision to drop the index (if unneeded) or alter it (if it could benefit from different columns).

Second, we collected data about missing indexes. As queries executed that could have benefitted from an index that does not exist, we report on this data so that we can later make decisions based on it. Many index recommendations are not worth the effort or overlap with each other, but all of this data allows us to better understand the workload that executes against our SQL Servers and decide what new indexes could be worth the resources.

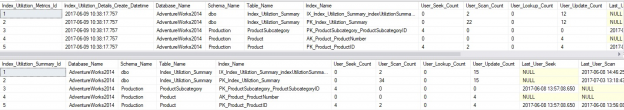

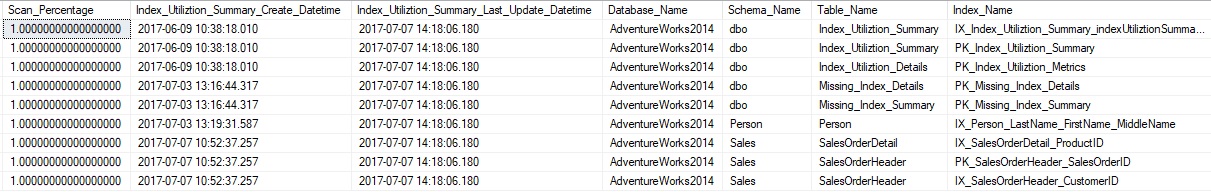

Our first process populated tables called Index_Utilization_Details and Index_Utilization_Summary. The first table provided a snapshot of usage metrics each time the process was run. The second table was an aggregate that maintained overall usage counts over time, allowing for a better long-term view of index use. A brief snapshot of data in these tables looks like this:

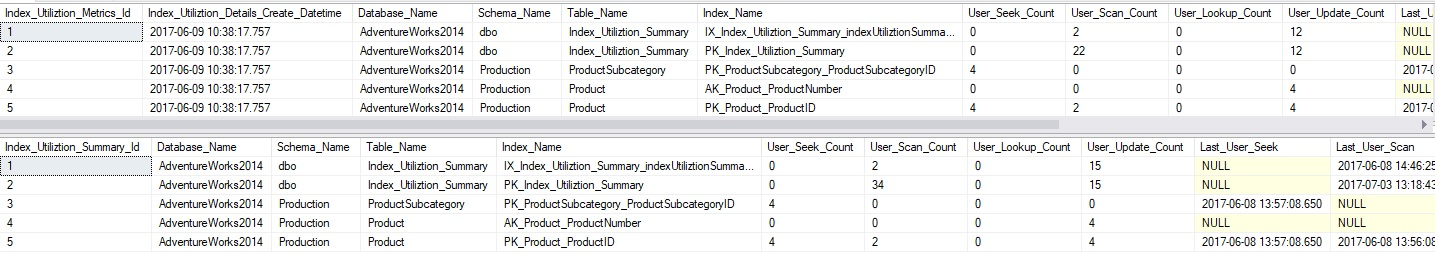

Our second process also populated 2 tables: Missing_Index_Details and Missing_Index_Summary. These tables also offered both a point-in-time view of missing index metrics, as well as a longer-term aggregated view of overall use. Two views were created in order to provide additional metrics that can be derived from this data: v_Missing_Index_Details and v_Missing_Index_Summary. Here is a screenshot of some sample data returned from these views:

This gives us a wealth of data that is regularly collected and aggregated. We could review it periodically by hand and decide if any actions need to be taken, but we can instead automate a process that inspects this data for us and puts the results into a central location. From there, we can report on it using email, SSRS, or any other tool of our choosing.

The remainder of this article will be concerned with making the best possible use of this data in order to easily report on potential indexing needs. At the same time, we will make an effort not to go overboard and over-engineer a solution that is too complex to maintain or use.

Implementing a SQL Server Index Reporting Process

Before diving into our data, let’s put together a plan of attack. There are enough ways in which this process could be implemented that we should endeavor to stay focused on our ultimate goal, which is to report on important index needs. Here is the basic structure of the stored procedure that we will be building:

- Collect recent and aggregate data from the index usage stats process.
  - Analyze this data and determine if any indexes are currently unused.
    - If so, does long-term data collection support the removal of these indexes?
      - If so, make those recommendations.
  - Analyze this data and determine if any indexes are underused or misused.
    - If so, does long-term data collection support changes to these indexes?
      - If so, make those recommendations.
  - Analyze this data and determine if any indexes are duplicates or are overlapping.
    - If so, recommend that they be appropriately combined or duplicates removed.
- Collect recent and aggregate data from the missing index process.
  - Analyze this data and determine if any missing indexes are worth considering.
    - If so, does long-term data collection support the additions?
      - If so, make those recommendations.
  - Analyze this data and determine if any index suggestions can be implemented via merging index suggestions with each other or with existing indexes.
    - If so, does long-term data collection support the changes?
      - If so, then make those recommendations.
- Check all recommendations above with previously made recommendations.
  - If a recommendation was recommended before and not implemented, add details of that here.
  - If a recommendation was implemented, then also make a note of that.
- Check previously implemented recommendations that have been utilized.
  - Are they operating effectively? Report on this in a meaningful fashion.
  - Are newly implemented missing indexes being utilized efficiently?
  - Are indexes that were removed now showing up as significant missing indexes?

Phew! That’s quite a bit of work we have to do! It sounds like a lot, but in reality, it’s a variety of checks against data that we have already collected in order to determine if it is significant enough to report back to us. With that (slightly) reassuring thought in mind, let’s begin building this thing!

SQL Server Index Usage Stats Analysis

The easiest metric to check for is unused indexes. The validation is simple, and the most important piece of data we would want to know is how long they have been unused. We can view unused indexes like this:

    SELECT
        Index_Utilization_Summary.Database_Name,
        Index_Utilization_Summary.[Schema_Name],
        Index_Utilization_Summary.Table_Name,
        Index_Utilization_Summary.Index_Name,
        Index_Utilization_Summary.Index_Utilization_Summary_Create_Datetime,
        Index_Utilization_Summary.Index_Utilization_Summary_Last_Update_Datetime,
        Index_Utilization_Summary.User_Update_Count_Total,
        Index_Utilization_Summary.Last_User_Update,
        DATEDIFF(DAY,
                 Index_Utilization_Summary.Index_Utilization_Summary_Create_Datetime,
                 Index_Utilization_Summary.Index_Utilization_Summary_Last_Update_Datetime) AS Days_Without_Reads
    FROM IndexMetrics.Index_Utilization_Summary
    WHERE Index_Utilization_Summary.User_Seek_Count_Total = 0
    AND Index_Utilization_Summary.User_Scan_Count_Total = 0
    AND Index_Utilization_Summary.User_Lookup_Count_Total = 0;

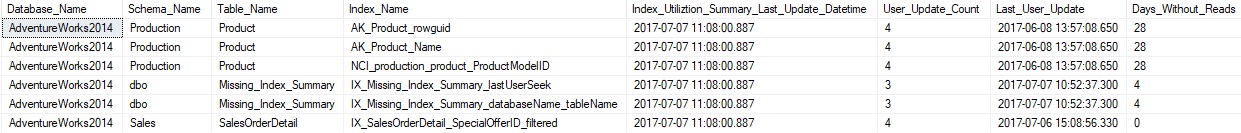

In addition to knowing that the index has not been read, the last column provides how many days have passed without any reads. While an index that has been unused for 6 months is a great candidate for removal, one that has only been unused for a day would need some more time to be sure that it is definitely not used. The results of this query are as follows:

Since the last seek/scan/lookup columns are NULL for unused indexes, we have to guess as to how long the index has been unused for. To be safe, we will only go back as far as our index metrics have been collected. Our results above show 5 indexes without any reads, 3 of which have been unused for almost a month and might be good candidates for removal. As always, an index unused for 2 months might be critical to some quarterly or yearly report that will bomb without it, so some level of verification of non-use is a good idea before making changes.
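One way to act on that caution is to require a minimum observation window before an unused index is even listed. The following is a minimal sketch that reuses the table and columns from the query above; the variable `@Minimum_Days_Observed` and its value of 30 are assumptions chosen for illustration, not part of the collection process itself.

```sql
-- Hypothetical threshold: only list unused indexes that have been tracked
-- for at least this many days. Adjust to suit your own workload.
DECLARE @Minimum_Days_Observed INT = 30;

SELECT
    Index_Utilization_Summary.Database_Name,
    Index_Utilization_Summary.[Schema_Name],
    Index_Utilization_Summary.Table_Name,
    Index_Utilization_Summary.Index_Name,
    DATEDIFF(DAY,
             Index_Utilization_Summary.Index_Utilization_Summary_Create_Datetime,
             Index_Utilization_Summary.Index_Utilization_Summary_Last_Update_Datetime) AS Days_Without_Reads
FROM IndexMetrics.Index_Utilization_Summary
WHERE Index_Utilization_Summary.User_Seek_Count_Total = 0
AND Index_Utilization_Summary.User_Scan_Count_Total = 0
AND Index_Utilization_Summary.User_Lookup_Count_Total = 0
-- Skip indexes that have not been observed long enough to judge.
AND DATEDIFF(DAY,
             Index_Utilization_Summary.Index_Utilization_Summary_Create_Datetime,
             Index_Utilization_Summary.Index_Utilization_Summary_Last_Update_Datetime) >= @Minimum_Days_Observed
ORDER BY Days_Without_Reads DESC;
```

If quarterly or yearly reports are a concern, a larger window (90 or 365 days, for example) trades faster recommendations for more confidence.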

Next, we can look for indexes that are underused. These are indexes that are frequently written but rarely read. There is no universally accepted definition of underused, so for the sake of our demonstration, we will consider any index for which reads make up 2% or less of all operations. You may adjust this threshold based on your comfort level with the schema and data being analyzed, either to be more conservative or to return more data.

The following query will return any indexes that have 2% or less reads, but greater than zero (separating this data from our previous query):

    WITH CTE_UNDERUSED_INDEXES AS
    (   SELECT
            *,
            CAST(Index_Utilization_Summary.User_Seek_Count_Total + Index_Utilization_Summary.User_Scan_Count_Total + Index_Utilization_Summary.User_Lookup_Count_Total AS DECIMAL(19,2)) AS Read_Count,
            CAST(Index_Utilization_Summary.User_Seek_Count_Total + Index_Utilization_Summary.User_Scan_Count_Total + Index_Utilization_Summary.User_Lookup_Count_Total + User_Update_Count_Total AS DECIMAL(19,2)) AS Operation_Count
        FROM IndexMetrics.Index_Utilization_Summary
    )
    SELECT
        -- NULLIF keeps this expression safe for indexes with no recorded operations.
        CTE_UNDERUSED_INDEXES.Read_Count / NULLIF(CTE_UNDERUSED_INDEXES.Operation_Count, 0) AS Percent_Read,
        CTE_UNDERUSED_INDEXES.Index_Utilization_Summary_Create_Datetime,
        CTE_UNDERUSED_INDEXES.Index_Utilization_Summary_Last_Update_Datetime,
        CTE_UNDERUSED_INDEXES.Database_Name,
        CTE_UNDERUSED_INDEXES.[Schema_Name],
        CTE_UNDERUSED_INDEXES.Table_Name,
        CTE_UNDERUSED_INDEXES.Index_Name,
        CTE_UNDERUSED_INDEXES.User_Seek_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Scan_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Lookup_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Update_Count_Total,
        CTE_UNDERUSED_INDEXES.Last_User_Seek,
        CTE_UNDERUSED_INDEXES.Last_User_Scan,
        CTE_UNDERUSED_INDEXES.Last_User_Lookup,
        CTE_UNDERUSED_INDEXES.Last_User_Update
    FROM CTE_UNDERUSED_INDEXES
    -- More than zero reads, but reads are 2% or less of all operations.
    WHERE CTE_UNDERUSED_INDEXES.Read_Count / CASE WHEN CTE_UNDERUSED_INDEXES.Operation_Count = 0 THEN 1 ELSE CTE_UNDERUSED_INDEXES.Operation_Count END > 0
    AND CTE_UNDERUSED_INDEXES.Read_Count / CASE WHEN CTE_UNDERUSED_INDEXES.Operation_Count = 0 THEN 1 ELSE CTE_UNDERUSED_INDEXES.Operation_Count END <= 0.02;

The CASE expressions guard against divide-by-zero errors, and the CTE lets us perform our calculations without too much repeated T-SQL. The results look like this:

A single index is returned against one of our index utilization tables. Never fear—I intentionally performed a ton of writes on the table to make this appear in our filters, but this illustrates how we can separate between unused indexes and underused indexes, allowing us to broaden the scope of analysis to include indexes that are used but might still be worth some scrutiny.

The index returned above has only 1.2% of all operations as reads, meaning that we pay quite a bit for maintenance, and may not get a very good value for it. It’s possible those 1.2% are extremely important, or perhaps they are not. These are the questions that we should consider prior to making any changes.

Our last category of index to consider is one that is ineffectively used. These would be indexes that are scanned frequently and rarely or never the target of seek operations. While it may be by design, I would be concerned if an index on a large/busy production table were being scanned often. If we are not intentionally looking to return a large portion (or all) of the index, then a great many reads are being wasted.

Similar to earlier, we will set an arbitrary break point for determining if an index is effective or not. If 98% or more of read operations are scans, then we will flag it as a potential problem. We can use the following query to check for any indexes that happen to meet this criterion:
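To make the arithmetic behind that percentage concrete, here is a small self-contained sketch. The counts of 120 reads and 9,880 writes are made-up numbers for illustration, and the category labels are hypothetical names rather than values used by the collection process.

```sql
-- Illustrative counts only: 120 reads (seeks + scans + lookups) and 9,880 writes.
DECLARE @Read_Count DECIMAL(19,2) = 120;
DECLARE @Update_Count DECIMAL(19,2) = 9880;

SELECT
    -- 120 / (120 + 9880) = 0.012, or 1.2% of all operations.
    @Read_Count / NULLIF(@Read_Count + @Update_Count, 0) AS Percent_Read,
    CASE
        WHEN @Read_Count = 0 THEN 'UNUSED'
        WHEN @Read_Count / NULLIF(@Read_Count + @Update_Count, 0) <= 0.02 THEN 'UNDERUSED'
        ELSE 'OK'
    END AS Usage_Category;
```

With these numbers the read share works out to 0.012, which falls under the 2% threshold and lands in the underused category. NULLIF returns NULL instead of raising a divide-by-zero error when an index has no recorded operations at all.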

    WITH CTE_UNDERUSED_INDEXES AS
    (   SELECT
            *,
            CAST(Index_Utilization_Summary.User_Seek_Count_Total + Index_Utilization_Summary.User_Scan_Count_Total + Index_Utilization_Summary.User_Lookup_Count_Total AS DECIMAL(19,2)) AS Read_Count
        FROM IndexMetrics.Index_Utilization_Summary
    )
    SELECT
        CTE_UNDERUSED_INDEXES.Index_Utilization_Summary_Create_Datetime,
        CTE_UNDERUSED_INDEXES.Index_Utilization_Summary_Last_Update_Datetime,
        CTE_UNDERUSED_INDEXES.Database_Name,
        CTE_UNDERUSED_INDEXES.[Schema_Name],
        CTE_UNDERUSED_INDEXES.Table_Name,
        CTE_UNDERUSED_INDEXES.Index_Name,
        CTE_UNDERUSED_INDEXES.User_Seek_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Scan_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Lookup_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Update_Count_Total,
        CTE_UNDERUSED_INDEXES.Last_User_Seek,
        CTE_UNDERUSED_INDEXES.Last_User_Scan,
        CTE_UNDERUSED_INDEXES.Last_User_Lookup,
        CTE_UNDERUSED_INDEXES.Last_User_Update
    FROM CTE_UNDERUSED_INDEXES
    WHERE CTE_UNDERUSED_INDEXES.User_Scan_Count_Total / CASE WHEN CTE_UNDERUSED_INDEXES.Read_Count = 0 THEN 1 ELSE CTE_UNDERUSED_INDEXES.Read_Count END >= 0.98;

Reviewing the results, we can see that there are 9 indexes that happen to meet this criterion:

As we look at these indexes, it becomes clear that the solution may not be straightforward. Dropping these indexes is not going to solve our scan problems (if they are actually problematic). Some are clustered primary keys, which are important to our underlying data.

To investigate further, we’d want to check and see if these scans are a performance concern. If they are, then there are likely missing indexes that could address them or a known problem-query that could be responsible for them. In other words, this data is useful as it provides an additional metric when analyzing an overall indexing strategy. The correct action may be to do nothing, or it may involve further research. We may be able to use this data to corroborate other performance concerns in order to make a solid case in favor of other schema or application changes.
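One possible way to start that investigation is to line up scan-heavy indexes against missing index suggestions for the same table. The sketch below assumes that the database, schema, and table name columns in Index_Utilization_Summary and v_Missing_Index_Summary hold directly comparable values; if one of them stores bracketed or otherwise formatted names, the join would need adjusting. The CTE name is hypothetical.

```sql
WITH CTE_SCAN_HEAVY_INDEXES AS
(   SELECT
        Index_Utilization_Summary.Database_Name,
        Index_Utilization_Summary.[Schema_Name],
        Index_Utilization_Summary.Table_Name,
        Index_Utilization_Summary.Index_Name,
        Index_Utilization_Summary.User_Scan_Count_Total
    FROM IndexMetrics.Index_Utilization_Summary
    WHERE Index_Utilization_Summary.User_Scan_Count_Total > 0
    -- Same 98% rule as above, written as a multiplication to avoid division.
    AND Index_Utilization_Summary.User_Scan_Count_Total >= 0.98 *
        (Index_Utilization_Summary.User_Seek_Count_Total
         + Index_Utilization_Summary.User_Scan_Count_Total
         + Index_Utilization_Summary.User_Lookup_Count_Total)
)
SELECT
    CTE_SCAN_HEAVY_INDEXES.Database_Name,
    CTE_SCAN_HEAVY_INDEXES.[Schema_Name],
    CTE_SCAN_HEAVY_INDEXES.Table_Name,
    CTE_SCAN_HEAVY_INDEXES.Index_Name,
    CTE_SCAN_HEAVY_INDEXES.User_Scan_Count_Total,
    v_Missing_Index_Summary.Equality_Columns,
    v_Missing_Index_Summary.Inequality_Columns,
    v_Missing_Index_Summary.Include_Columns,
    v_Missing_Index_Summary.User_Seeks,
    v_Missing_Index_Summary.Improvement_Measure
FROM CTE_SCAN_HEAVY_INDEXES
INNER JOIN IndexMetrics.v_Missing_Index_Summary
ON v_Missing_Index_Summary.[Database_Name] = CTE_SCAN_HEAVY_INDEXES.Database_Name
AND v_Missing_Index_Summary.[Schema_Name] = CTE_SCAN_HEAVY_INDEXES.[Schema_Name]
AND v_Missing_Index_Summary.Table_Name = CTE_SCAN_HEAVY_INDEXES.Table_Name
ORDER BY v_Missing_Index_Summary.Improvement_Measure DESC;
```

A table that appears here has both a heavily scanned index and at least one missing index suggestion, which makes it a reasonable place to begin further research. It does not prove that the suggestion would eliminate the scans.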

Missing SQL Server Index Analysis

Now that we have reviewed our existing indexes, we can begin to look at what indexes are missing and could benefit performance on a given database. As always, not all missing indexes are good indexes, so we need to evaluate each carefully before making any changes in a production environment. We can query our existing missing index data in order to determine which are important to us:

    SELECT
        'CREATE MISSING INDEX' AS Recommended_Action,
        v_Missing_Index_Summary.First_Index_Suggestion_Time AS Index_Metric_Creation_Datetime,
        v_Missing_Index_Summary.Last_User_Seek AS Last_Recommended_Datetime,
        v_Missing_Index_Summary.[Database_Name],
        v_Missing_Index_Summary.[Schema_Name],
        v_Missing_Index_Summary.Table_Name,
        'Index_' + CONVERT (VARCHAR, ROW_NUMBER() OVER (ORDER BY v_Missing_Index_Summary.Index_Creation_Statement)) + '_' + v_Missing_Index_Summary.Table_Name AS Index_Name,
        v_Missing_Index_Summary.Equality_Columns,
        v_Missing_Index_Summary.Inequality_Columns,
        v_Missing_Index_Summary.Include_Columns,
        v_Missing_Index_Summary.User_Seeks AS User_Seek_Count,
        NULL AS User_Scan_Count,
        NULL AS User_Lookup_Count,
        NULL AS User_Update_Count,
        NULL AS Last_User_Seek,
        NULL AS Last_User_Scan,
        NULL AS Last_User_Lookup,
        NULL AS Last_User_Update,
        NULL AS Is_Primary_Key,
        NULL AS Is_Clustered_Index,
        v_Missing_Index_Summary.Improvement_Measure,
        NULL AS Is_Unused,
        NULL AS Is_Dropped
    FROM IndexMetrics.v_Missing_Index_Summary
    WHERE v_Missing_Index_Summary.Improvement_Measure > 5;

The filters on this query will ignore any missing index that has already been implemented but more importantly will only return missing indexes with an improvement measure greater than 5. This number is completely arbitrary and you are free to adjust up or down based on your sensitivity towards recommendations. Adjust it higher, get fewer results—adjust it lower and get more results.
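Since the cutoff is arbitrary, it can help to see how many suggestions would survive at several candidate thresholds before settling on one. This is a rough sketch; the list of candidate thresholds (1, 5, 10, 50) and the derived table name `Threshold_List` are assumptions for illustration only.

```sql
SELECT
    Threshold_List.Threshold,
    -- COUNT of a column ignores NULLs, so thresholds with no matches report 0.
    COUNT(v_Missing_Index_Summary.Improvement_Measure) AS Suggestion_Count
FROM (VALUES (1), (5), (10), (50)) AS Threshold_List (Threshold)
LEFT JOIN IndexMetrics.v_Missing_Index_Summary
ON v_Missing_Index_Summary.Improvement_Measure > Threshold_List.Threshold
GROUP BY Threshold_List.Threshold
ORDER BY Threshold_List.Threshold;
```

A sharp drop between two adjacent thresholds suggests a natural place to draw the line between noise and suggestions worth reviewing.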

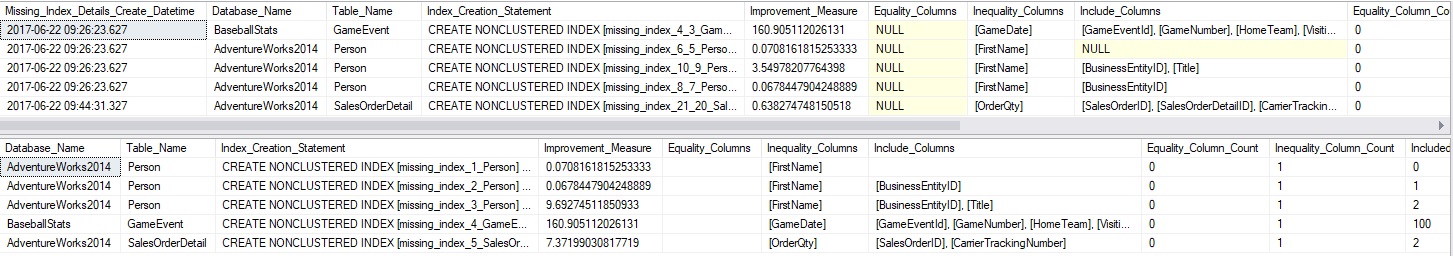

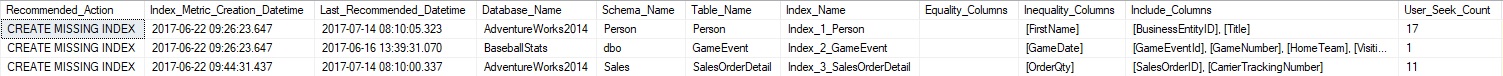

Here are the suggested missing indexes on my local SQL Server:

Included are all metrics on the missing index, including the number of benefitting seeks that would have occurred, as well as the improvement measure (off the right side of the screen). The index name is also completely arbitrary, but I find it useful to fill in the blank with something meaningful. It can easily be adjusted to fit whatever index naming convention you follow in order to make this more convenient.

Some SQL Server Index Recommendation Tweaks

Clustered indexes are special in that they form the logical basis for storage of a table. Unused clustered indexes strongly imply that the table as a whole is not used. More research is required—for example, to determine if all non-clustered indexes are also unused, but this scenario is worth noting:

    SELECT
        Index_Utilization_Summary.Database_Name,
        Index_Utilization_Summary.[Schema_Name],
        Index_Utilization_Summary.Table_Name,
        Index_Utilization_Summary.Index_Name,
        Index_Utilization_Summary.Index_Utilization_Summary_Create_Datetime,
        Index_Utilization_Summary.Index_Utilization_Summary_Last_Update_Datetime,
        Index_Utilization_Summary.User_Update_Count_Total,
        Index_Utilization_Summary.Last_User_Update,
        DATEDIFF(DAY,
                 Index_Utilization_Summary.Index_Utilization_Summary_Create_Datetime,
                 Index_Utilization_Summary.Index_Utilization_Summary_Last_Update_Datetime) AS Days_Without_Reads
    FROM IndexMetrics.Index_Utilization_Summary
    WHERE Index_Utilization_Summary.User_Seek_Count_Total = 0
    AND Index_Utilization_Summary.User_Scan_Count_Total = 0
    AND Index_Utilization_Summary.User_Lookup_Count_Total = 0
    AND Index_Utilization_Summary.User_Update_Count_Total = 0
    AND Index_Utilization_Summary.Is_Clustered_Index = 1;

This query is similar to our search for unused indexes, except that it also validates if it is a clustered index with absolutely no use at all (reads or writes). The results can allow us to ask the question: “Is this table unused?”. From that question, we can determine if it is unused, and if so, is this a good thing? Are all other non-clustered indexes on the table unused? If truly unused, can it be dropped? Unused tables can result from failed or nonexistent cleanup projects, but could also be a sign that an application is failing to use a table correctly.
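To answer the follow-up question of whether every index on a table is idle, the same summary data can be grouped by table. This is a minimal sketch using only columns that appear in the queries above; whether heaps or newly created indexes are represented depends on how the collection process records them, which is an assumption here.

```sql
SELECT
    Index_Utilization_Summary.Database_Name,
    Index_Utilization_Summary.[Schema_Name],
    Index_Utilization_Summary.Table_Name,
    COUNT(*) AS Index_Count,
    MIN(Index_Utilization_Summary.Index_Utilization_Summary_Create_Datetime) AS Tracking_Start_Datetime
FROM IndexMetrics.Index_Utilization_Summary
GROUP BY
    Index_Utilization_Summary.Database_Name,
    Index_Utilization_Summary.[Schema_Name],
    Index_Utilization_Summary.Table_Name
-- Keep only tables where no tracked index has recorded a single read or write.
HAVING SUM(Index_Utilization_Summary.User_Seek_Count_Total
           + Index_Utilization_Summary.User_Scan_Count_Total
           + Index_Utilization_Summary.User_Lookup_Count_Total
           + Index_Utilization_Summary.User_Update_Count_Total) = 0;
```

Tables returned here have had no recorded activity on any tracked index since Tracking_Start_Datetime, which makes them candidates for the "is this table unused?" conversation rather than automatic removal.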

An alternate way to view unused index data is to constrain usage by the last time the table was used. Perhaps it is not unused for all-time, but it has been unused in the past month. The following query will determine if any indexes have not received a read or write in the past month, but were not picked up by our unused index check from earlier:

    SELECT
        *
    FROM IndexMetrics.Index_Utilization_Summary
    WHERE (Index_Utilization_Summary.Last_User_Seek IS NULL OR Index_Utilization_Summary.Last_User_Seek <= DATEADD(MONTH, -1, CURRENT_TIMESTAMP))
    AND (Index_Utilization_Summary.Last_User_Scan IS NULL OR Index_Utilization_Summary.Last_User_Scan <= DATEADD(MONTH, -1, CURRENT_TIMESTAMP))
    AND (Index_Utilization_Summary.Last_User_Lookup IS NULL OR Index_Utilization_Summary.Last_User_Lookup <= DATEADD(MONTH, -1, CURRENT_TIMESTAMP))
    AND (Index_Utilization_Summary.Last_User_Update IS NULL OR Index_Utilization_Summary.Last_User_Update <= DATEADD(MONTH, -1, CURRENT_TIMESTAMP))
    -- Exclude indexes with zero reads, which the earlier unused index check already reports.
    -- (Comparing the read counts avoids adding datetime columns, which yields NULL whenever any of them is NULL.)
    AND (Index_Utilization_Summary.User_Seek_Count_Total + Index_Utilization_Summary.User_Scan_Count_Total + Index_Utilization_Summary.User_Lookup_Count_Total) <> 0;

One final check we can make is to look for clustered index scans. Earlier, we identified misused indexes: those with far more scans than seeks. When the index with a high scan count happens to be the clustered index, then we know that there may be some queries out there that are not covered by indexes but should be. We can filter and get info on these like this:

    WITH CTE_UNDERUSED_INDEXES AS
    (   SELECT
            *,
            CAST(Index_Utilization_Summary.User_Seek_Count_Total + Index_Utilization_Summary.User_Scan_Count_Total + Index_Utilization_Summary.User_Lookup_Count_Total AS DECIMAL(19,2)) AS Read_Count
        FROM IndexMetrics.Index_Utilization_Summary
        WHERE Index_Utilization_Summary.Is_Clustered_Index = 1
    )
    SELECT
        CTE_UNDERUSED_INDEXES.Index_Utilization_Summary_Create_Datetime,
        CTE_UNDERUSED_INDEXES.Index_Utilization_Summary_Last_Update_Datetime,
        CTE_UNDERUSED_INDEXES.Database_Name,
        CTE_UNDERUSED_INDEXES.[Schema_Name],
        CTE_UNDERUSED_INDEXES.Table_Name,
        CTE_UNDERUSED_INDEXES.Index_Name,
        CTE_UNDERUSED_INDEXES.User_Seek_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Scan_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Lookup_Count_Total,
        CTE_UNDERUSED_INDEXES.User_Update_Count_Total,
        CTE_UNDERUSED_INDEXES.Last_User_Seek,
        CTE_UNDERUSED_INDEXES.Last_User_Scan,
        CTE_UNDERUSED_INDEXES.Last_User_Lookup,
        CTE_UNDERUSED_INDEXES.Last_User_Update
    FROM CTE_UNDERUSED_INDEXES
    WHERE CTE_UNDERUSED_INDEXES.User_Scan_Count_Total / CASE WHEN CTE_UNDERUSED_INDEXES.Read_Count = 0 THEN 1 ELSE CTE_UNDERUSED_INDEXES.Read_Count END >= 0.98;

The results of this tell us where more research is required. Odds are very good that one of two scenarios apply to this situation:

- There is a missing index on the table that is resulting in a large number of clustered index scans.

- There are queries hitting the table that SELECT *, or return enough columns that no missing index would make sense for it.

For either scenario, we have identified a potential performance problem and can address whether it is one that requires fixing or if it is indicative of normal behavior.

Tracking Index Recommendations

With all of the data collected above, it would be beneficial to collect, store, and reference it as needed. We can run queries like those shown here whenever needed, but there will be some time savings if we turn the various queries into a stored procedure and are able to reference the collected data anytime.

This process will collect the data above and then crunch it to determine if any previous recommendations were implemented. The goal is for the process to be as simple as possible:

- Collect index recommendations

- Adjust for special cases, such as clustered index usage.

- Check for implementation status.

- Done!
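The steps above could be sketched roughly as follows. This is not the attached recommendation procedure: the temporary table `#Index_Recommendation`, its columns, and the hard-coded example row are all hypothetical, and the implementation check only looks at indexes in the current database.

```sql
-- Hypothetical table for storing recommendations between runs.
CREATE TABLE #Index_Recommendation
(   Index_Recommendation_Id INT NOT NULL IDENTITY(1,1) PRIMARY KEY CLUSTERED,
    Recommended_Action VARCHAR(50) NOT NULL,
    [Schema_Name] NVARCHAR(128) COLLATE DATABASE_DEFAULT NOT NULL,
    Table_Name NVARCHAR(128) COLLATE DATABASE_DEFAULT NOT NULL,
    Index_Name NVARCHAR(128) COLLATE DATABASE_DEFAULT NOT NULL,
    First_Recommended_Datetime DATETIME NOT NULL DEFAULT (CURRENT_TIMESTAMP),
    Is_Implemented BIT NOT NULL DEFAULT (0)
);

-- Collect recommendations. A single made-up row stands in for the
-- unused, underused, and missing index queries shown earlier.
INSERT INTO #Index_Recommendation
    (Recommended_Action, [Schema_Name], Table_Name, Index_Name)
VALUES
    ('DROP UNUSED INDEX', 'dbo', 'Example_Table', 'IX_Example_Table_Example_Column');

-- Check implementation status: a drop recommendation is treated as
-- implemented once the index can no longer be found in the catalog views.
UPDATE #Index_Recommendation
SET Is_Implemented = 1
WHERE #Index_Recommendation.Recommended_Action = 'DROP UNUSED INDEX'
AND NOT EXISTS (
    SELECT 1
    FROM sys.indexes AS i
    INNER JOIN sys.tables AS t ON t.object_id = i.object_id
    INNER JOIN sys.schemas AS s ON s.schema_id = t.schema_id
    WHERE s.name = #Index_Recommendation.[Schema_Name]
    AND t.name = #Index_Recommendation.Table_Name
    AND i.name = #Index_Recommendation.Index_Name);

SELECT * FROM #Index_Recommendation;

DROP TABLE #Index_Recommendation;
```

A permanent table in the IndexMetrics schema would replace the temporary table in practice, and a matching check for created indexes would use EXISTS instead of NOT EXISTS. Matching by index name is fragile if indexes are renamed, so comparing key columns may be more reliable.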

From that point, the data is available for review anytime. Attached to this article are updated versions of the missing index collection process, index utilization process, and a new process that will collect and analyze this data for you. This is optional but could be a nice way to codify how indexes should be managed, reducing the chance for mistaken decisions or inconsistent filters.

The missing index and index utilization collection processes can be used independently of the recommendation proc successfully. They can be scheduled to run daily and allowed to collect data that can, over time, be used to make smart indexing decisions.

A Note on Complexity

With any script that analyzes data and returns recommendations, there is the threat of building a black box so complex that it becomes uncertain how it works or why. Such a script also becomes error-prone and more likely to break under various unforeseen circumstances. Our goal in this effort is to develop insight into index metrics without going overboard.

By persisting index data, we can generate very accurate data over time without the need for guesswork. In addition, we avoid the potential pitfall that arises if recommendations are given with too little historical data to build on. On the whole, the collection process requires 4 tables, 2 stored procedures, 2 views, and 2 jobs. The recommendation process requires a single stored procedure, table, and a calling job. The intention of this is to ensure that each object is short and sweet so that no component of this process becomes excessively complex.

If it helps, you may put all objects in this process into their own schema, segregating them from other objects and making them easy to find and work with. I used IndexMetrics for the demos here, but any schema name can be used.

I am a huge proponent of understanding how SQL Server works and using that knowledge to make great decisions. At the same time, an attempt has been made here to keep things as simple as possible. Feel free to download, test, and use the attached scripts to make smart decisions. Once understood, these scripts can be built on and made bigger, badder, and more custom-tailored to your needs.

With that in mind, please enjoy 🙂

Enhancements and Scripts

Attached to this article are copies of the scripts needed to implement index usage stats tracking, missing index stats tracking, and the reporting proc discussed here. Some enhancements have been made to the underlying processes, so the new versions should be used with preference over any older ones.

All objects reside in the IndexMetrics schema now and have been given the ability to aggregate data across all databases, ignoring database name. This is useful in environments with many databases that all share a similar design and usage patterns.
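As an illustration of what ignoring the database name looks like, the summary data can be rolled up by schema, table, and index. This is a sketch of the idea only; how the attached scripts implement it is not shown here, and the output column aliases are assumptions.

```sql
SELECT
    Index_Utilization_Summary.[Schema_Name],
    Index_Utilization_Summary.Table_Name,
    Index_Utilization_Summary.Index_Name,
    -- How many databases contributed to this rolled-up row.
    COUNT(DISTINCT Index_Utilization_Summary.Database_Name) AS Database_Count,
    SUM(Index_Utilization_Summary.User_Seek_Count_Total) AS User_Seek_Count_All_Databases,
    SUM(Index_Utilization_Summary.User_Scan_Count_Total) AS User_Scan_Count_All_Databases,
    SUM(Index_Utilization_Summary.User_Lookup_Count_Total) AS User_Lookup_Count_All_Databases,
    SUM(Index_Utilization_Summary.User_Update_Count_Total) AS User_Update_Count_All_Databases
FROM IndexMetrics.Index_Utilization_Summary
GROUP BY
    Index_Utilization_Summary.[Schema_Name],
    Index_Utilization_Summary.Table_Name,
    Index_Utilization_Summary.Index_Name
ORDER BY
    Index_Utilization_Summary.[Schema_Name],
    Index_Utilization_Summary.Table_Name,
    Index_Utilization_Summary.Index_Name;
```

An index that is unused in one database but heavily read in its siblings will show healthy totals here, which is exactly why this view suits environments where many databases share one design.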

Conclusion

Smart indexing decisions require us to collect and maintain indexing metrics. Without weeks, months (or more) of data to work with, we run the risk of making short-sighted decisions that could hamstring performance in the future.

Once collected and aggregated over time, we can run queries or processes against this data in order to return a set of indexes that warrant further research. Once investigated, we can generate a list of changes that make sense and are supported by our historical data.

A process like this allows us to make smarter decisions and to track those decisions over time. If a new SQL Server index is needed, we can catch that need before it becomes a significant problem. If we remove an index, we can track performance in the wake of that decision and determine if that decision had any unintended side-effects. The result is a process that provides positive and useful feedback unto itself. Long-term, we can monitor all indexing changes, their effect, and new recommendations to determine what an application’s natural response is to our changes and be able to make smart decisions immediately without waiting for any long term repercussions to manifest.

Previous articles in this series:

- SQL Server Index Performance Tuning Using Built-in Index Utilization Metrics

- Collecting, aggregating, and analyzing missing SQL Server Index Stats
