Introduction

A few weeks back I had been working on an interesting proof of concept for a client within the food/grocery industry. The objectives were to be able to provide the client with information on sales patterns, seasonal trends and location profitability. In our previous “get-together” we discussed how to create a tabular model project and how to create efficient and effective reports utilizing Excel.

In today’s “fireside chat” we shall be examining how efficient and effective reports may be created utilizing SQL Server Reporting Service 2016; especially with the complex reporting requirements and large amounts of data which clients tend to have.

Thus, let’s get started.

Getting Started

As our point of departure, we shall “pick up” with the Tabular project that we created in our last “get together”. Should you not have had a chance to work through the discussion, do feel free to glance at the step by step discussion by clicking on the link below:

Beer and the tabular model DO go together

Under performing enterprises

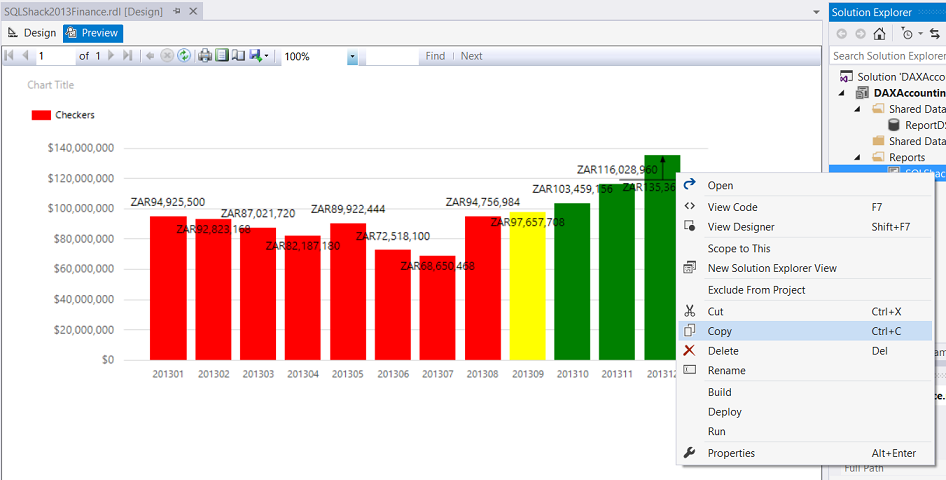

As a part of the proof of concept, our client Mary had asked for a vertical bar chart report that would reveal under-performing months. Those ‘below expected value’ months were to be highlighted in red, on target months in yellow and the ‘overachievers’ in green.

Let us now have a look at how we achieve her request.

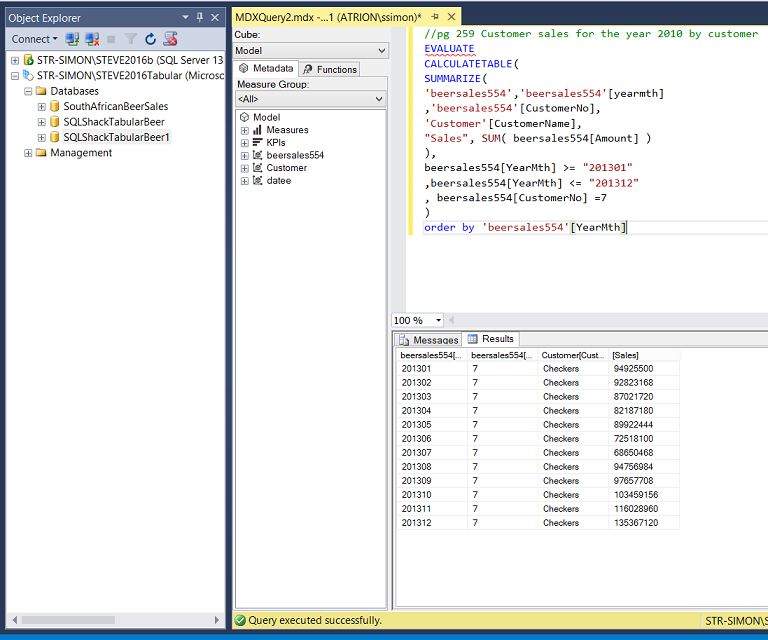

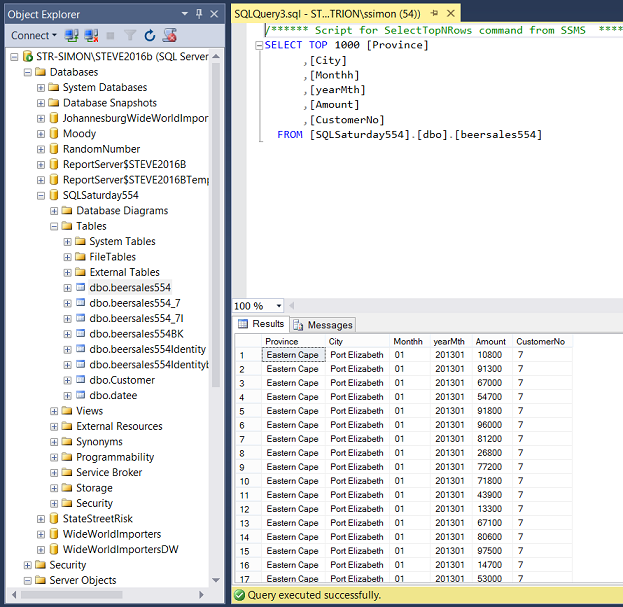

We begin by opening SQL Server Management Studio (SSMS) and open the “Beersales554” table (see below).

Now, our “beersales” table contains the sales from ‘many’ chains, for ‘many’ months, thus we shall be looking at the sales for the year 2013 from “Checkers” in our example. Further, we shall NOT be utilizing our relational table but rather the tabular cube that we created in our last get together. The query that we shall be utilizing may be seen below in the section entitled “Creating the required query”.

The important point to retain is that when our report is created that bars of the chart must indicate the firm’s performance and the bars must be color coded as follows.

For the fiscal year 2013, we are going to examine beer sales for Checkers.

ZAR 0 – ZAR 97,500,000 is under target and the bars must be red.

ZAR 97,500,001 to ZAR 100,000,000 is acceptable and must be yellow.

Above ZAR 100,000,000 is great and should be coloured green.

Creating the required query

As a reminder, what we would like to ascertain are the sales figures for Checkers for the whole of the fiscal year 2013.

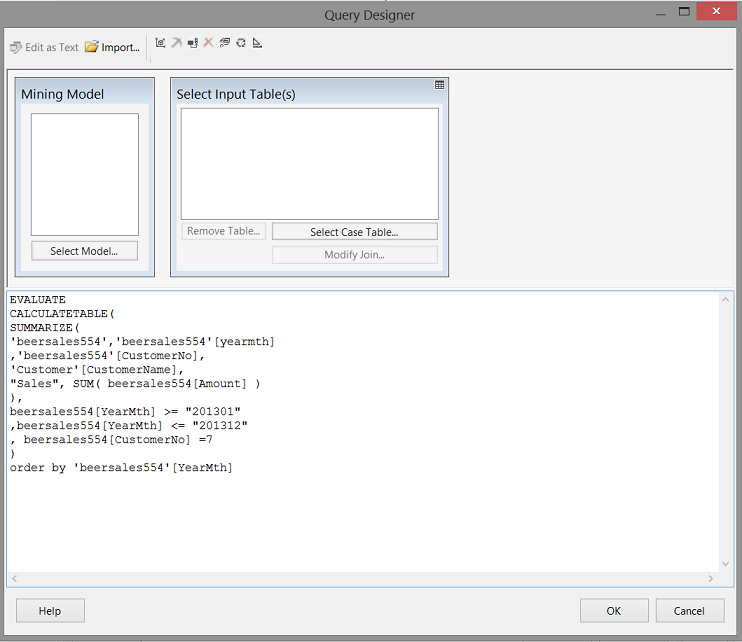

The DAX query to achieve this is shown below,

We note that in order to obtain the actual customer name we must utilize the Customer entity and the “amount”, “yearMth” and the “CustomerNo” come from the “beersales554” table. The said and for all intents and purposes, we require a join, which we created with the relationship diagram within the SSAS project. Please see Beer and the tabular model DO go together

The DAX code for the query may also be found in Addenda 1

Now that we have our core query, let us create a report to show the results.

Creating our report

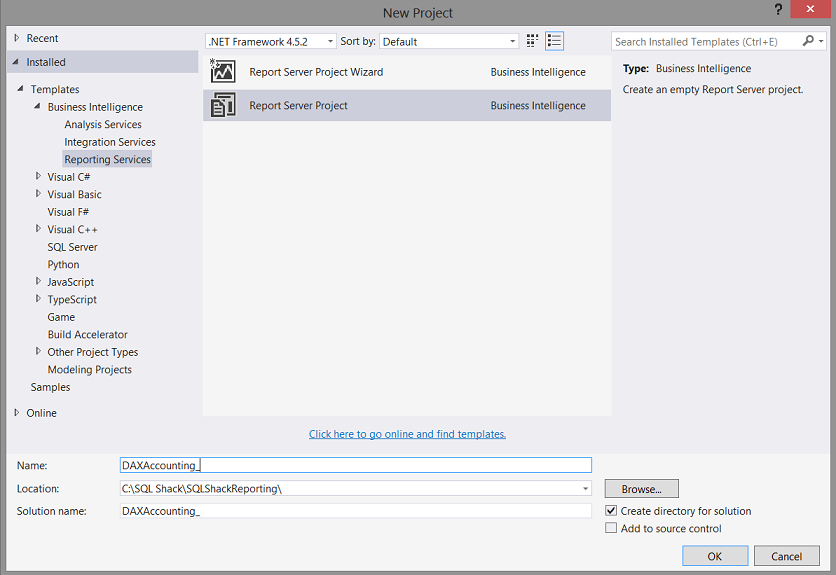

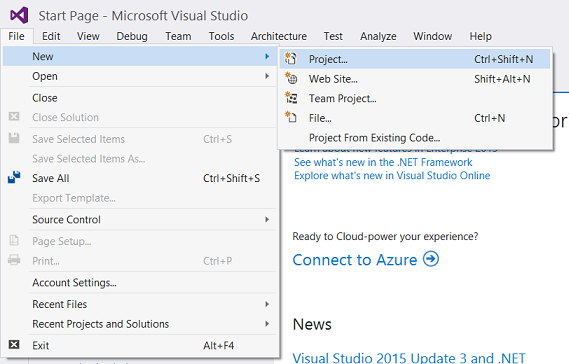

Opening either Visual Studio 2015 or SQL Server Data Tools 2010 we select “New Project” (see below)

We now find ourselves on the “New Project” screen. We give our new project the name “DAX Accounting” and click “OK” to create the project (see below).

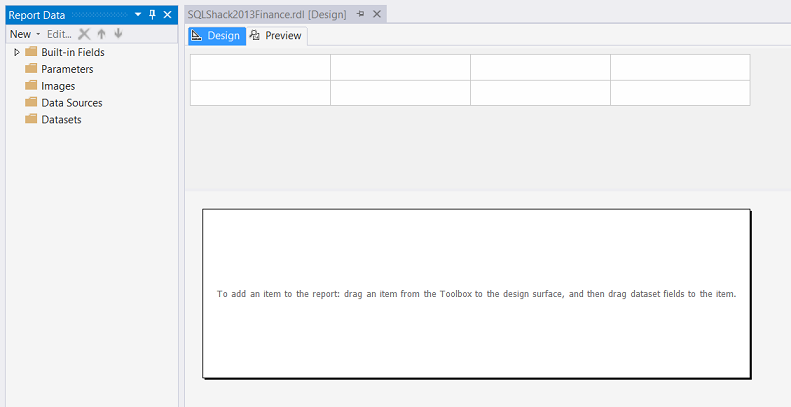

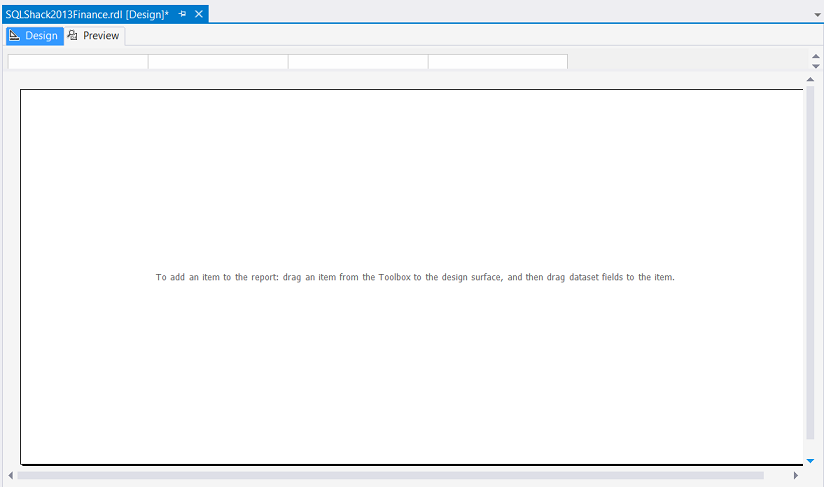

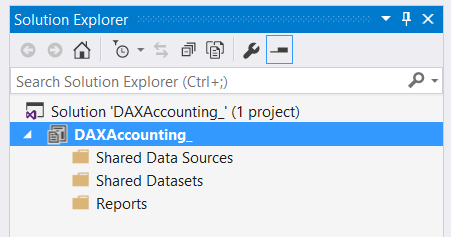

We find ourselves on our Report Design Surface (see below).

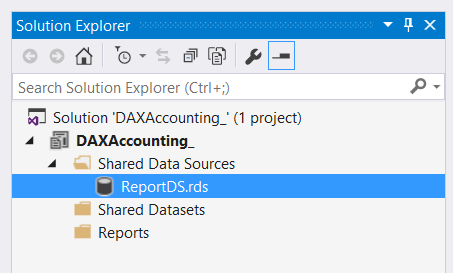

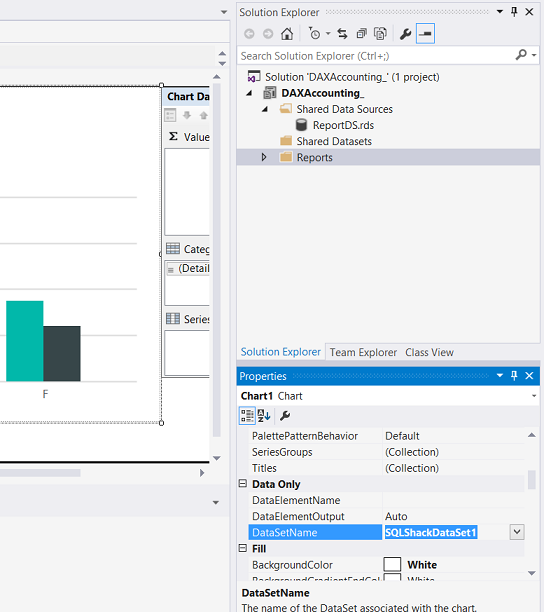

We note that under the DAX accounting project that there are three folders.

Shared Data Sources

Shared Data Sets

Reports

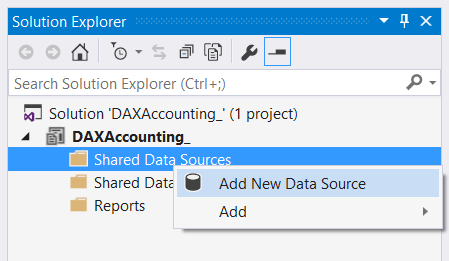

For our report, we begin by creating a “Shared Data Source”. A data source may be likened to the water hose connecting to the water tap on the side of a house.

The house being a “database”.

We right-click on the “Shared Data Source” tab and select “Add New Data Source” as may be seen above.

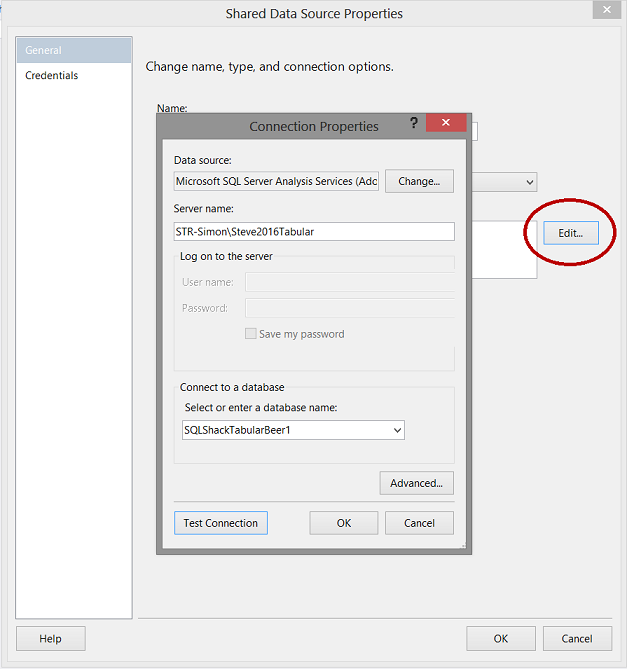

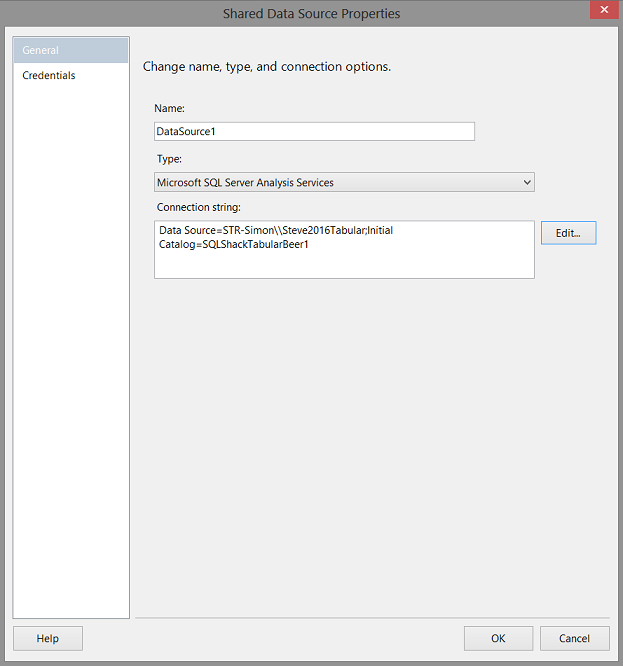

The “Shared Data Source Properties” Dialog box appears (see above).

We give our shared data source a name and click the “Edit” button (see above). The “Connection Properties” dialogue box is brought into view (see above and to the left).

Note that we tell the system that we require an “Analysis Services” connection. We also give the system the name of our Analysis Services database and then test the connection. If successful, we then click the “OK” button to exit the “Connection Properties” dialogue box.

Our screen should now look as follows:

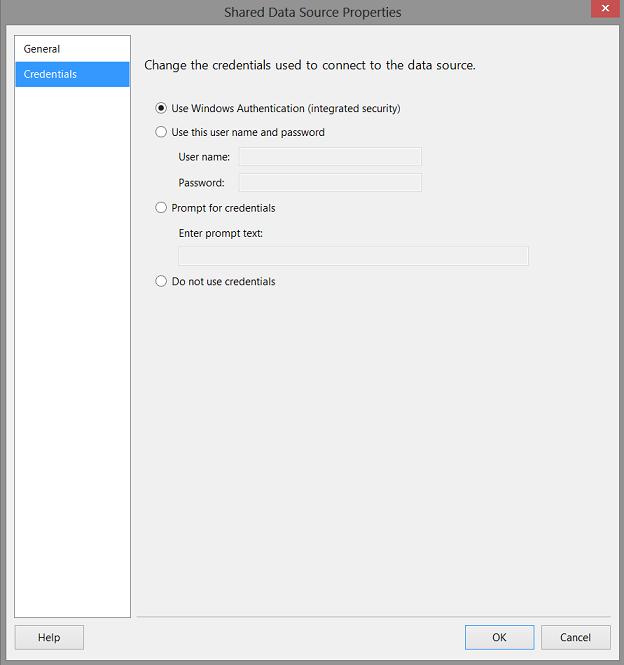

Clicking on the “Credentials” tab, we ensure (in my case) that “Windows Authentication” has been selected (see below).

We click “OK” to leave the “Share Data Source Properties” dialog box.

We note that our shared data source now appears within the “Shared Data Sources” directory (see above).

Adding our report

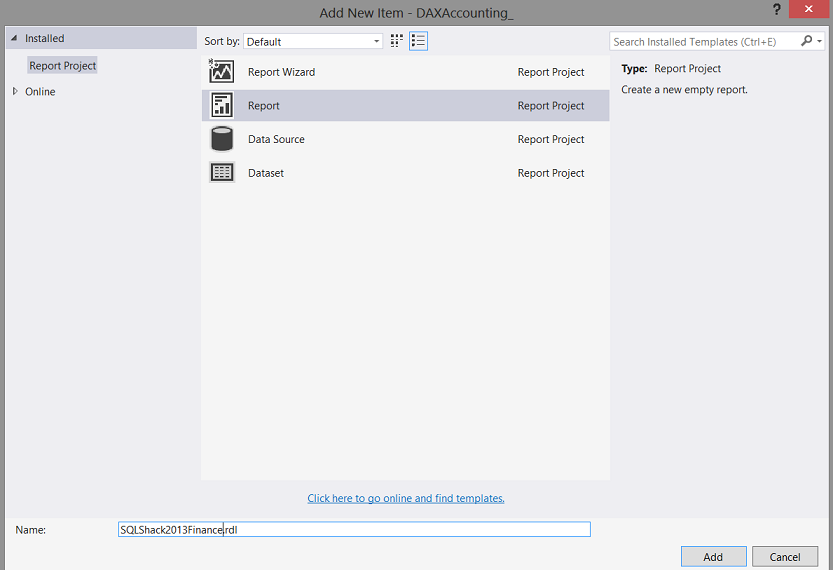

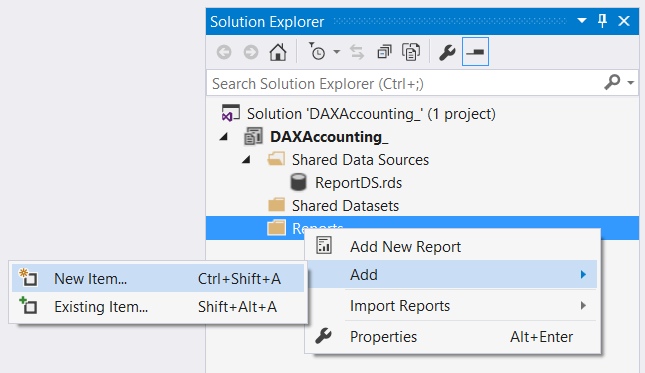

We right-click on the “Reports” directory and select “Add” and then “New Item” (see above).

We select “Report” and then give our report a name “SQLShack2013Finance” (see above).

We then click “Add”.

We now find ourselves on our report drawing surface. The reader will note the white rectangle (see above). This is where our chart will reside.

We resize our drawing surface (see above).

Creating our local data source and our local dataset

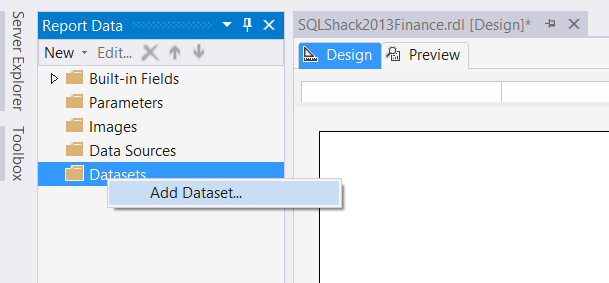

We right click on the “Data Set” directory / tab and select “Add Dataset” (see below).

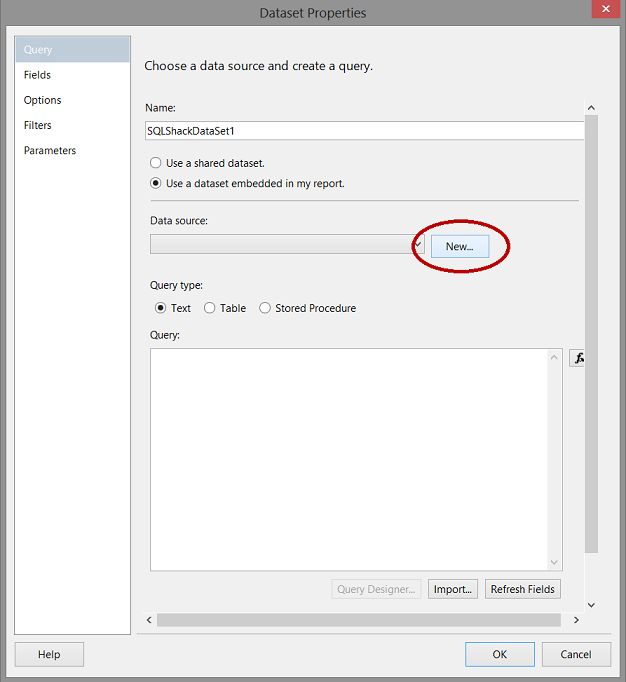

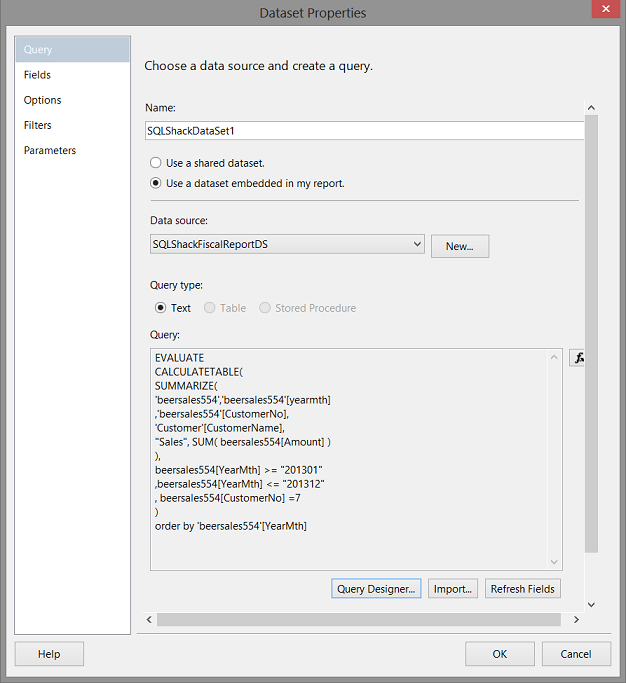

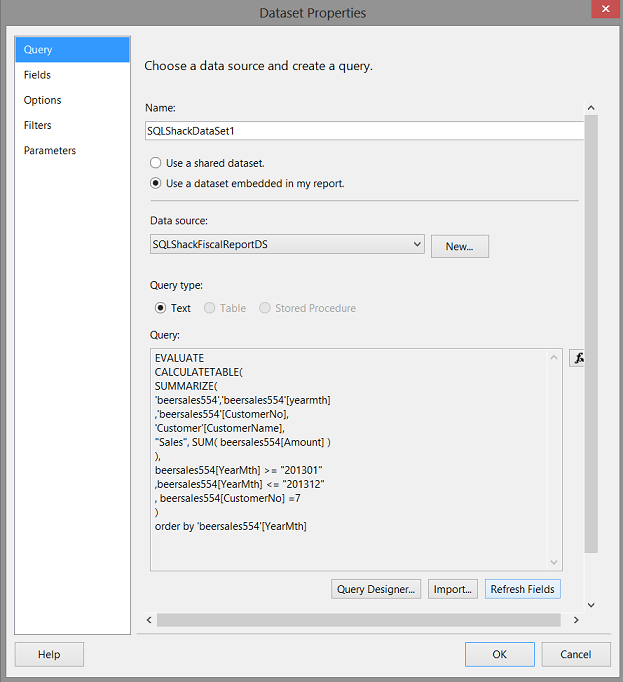

The Dataset Properties dialog box opens (see below).

We give our dataset a name and click the “New” button to create a new “local data source” (a local hose pipe) which we shall relate to the GLOBAL or Shared Data Source / hose pipe that we chatted about above.

Once again, the “eagle-eyed” reader will ask the question “Why have so many data sources?” The reason is simple. Our “Shared Data Source” acts as a global connector and if we think about it, it should be as general as possible in order for it to be “re-usable”. The local data source, which we are now going to create may be customised for this particular report only and will not affect any other reports which have been created or may be created in the future.

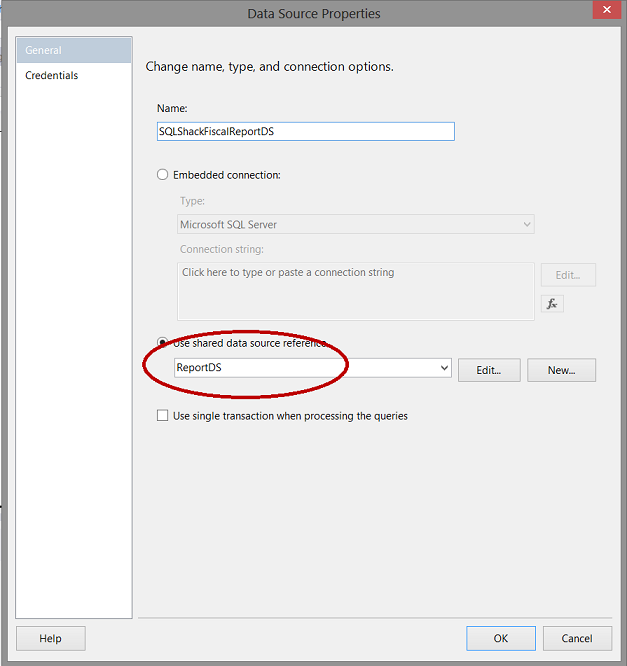

We give our local data source a name and select the GLOBAL data source with which to connect (see above). In short, by connecting to the global data source our local data source will inherit all the properties of the global source and we may add other restrictions to the local source without affecting the global data source. Once again we set the “Credentials” tab to “Windows Authentication”

We click OK” to leave this dialogue box.

Now here is where the “Fun and Games” come in! We have a GOTCHA!!!!

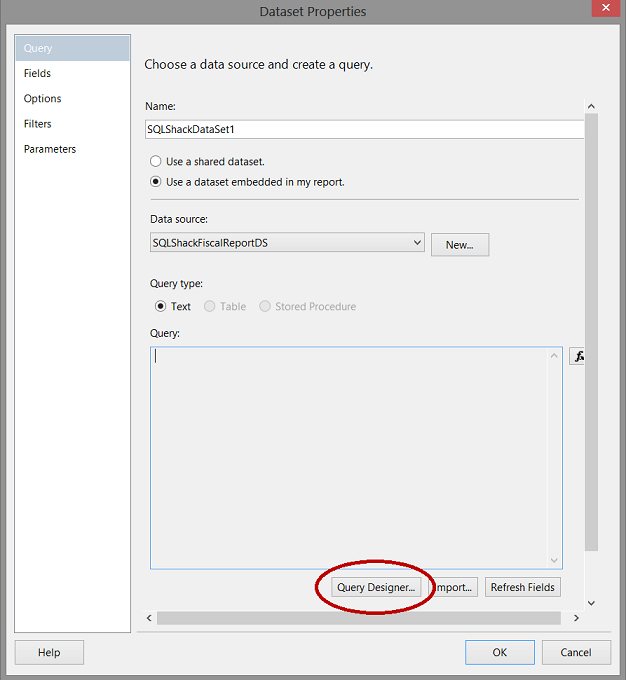

We are now back at the “Dataset Properties” dialog box and we can neither select to add our query to the “Query” box nor can we add a Stored Procedure as it is “Greyed” out. Not a bug but Microsoft claims that it is a “feature”. Wink! Wink!

What we need to do is to click the “Query Designer” button (see above).

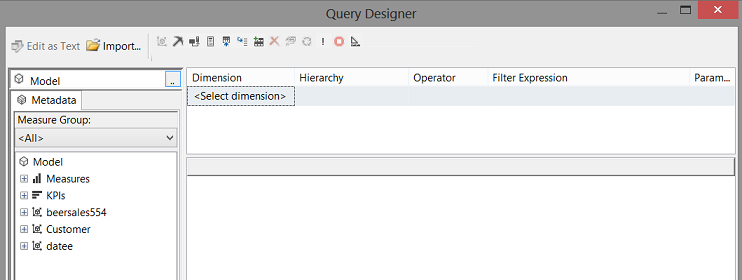

The “Query Designer” is brought up (see above).

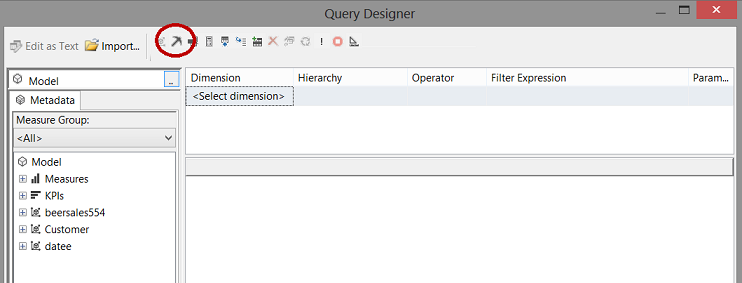

We select the “Data Mining Button” (go figure)!

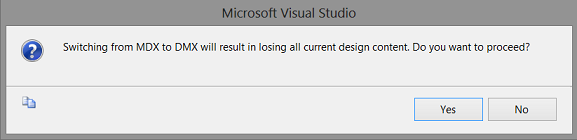

We click “Yes” to the warning which pops up (see above).

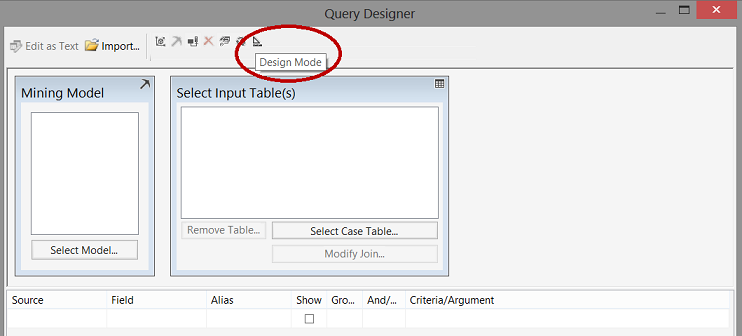

The “Query Designer” opens and we select “Design Mode” (see above).

We are now able to enter our query within the large white space above. We click “OK” to leave this screen.

We find ourselves back in the “Dataset Properties” dialogue box (see above).

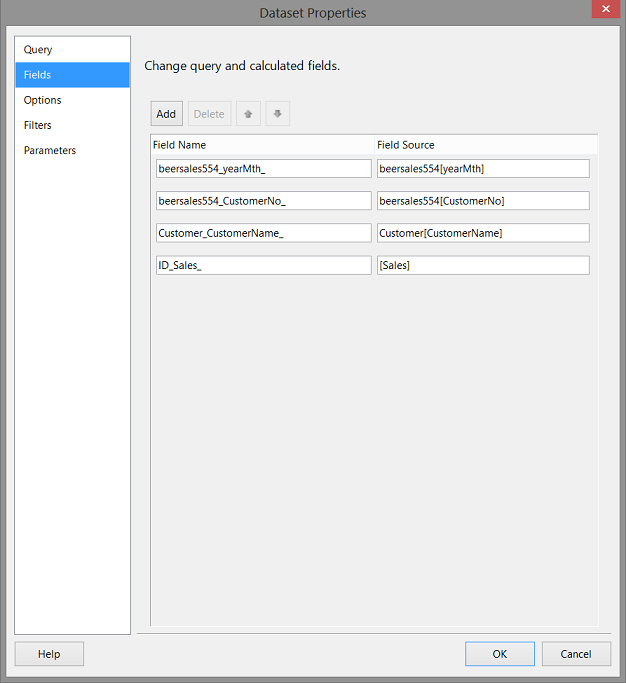

We click the “Refresh Fields” button (see above).

Wow!! By clicking our “Fields” tab, we see that the system has in fact located all the fields in our query, within the Analysis Services database (see above).We click “OK” to leave the dialogue box.

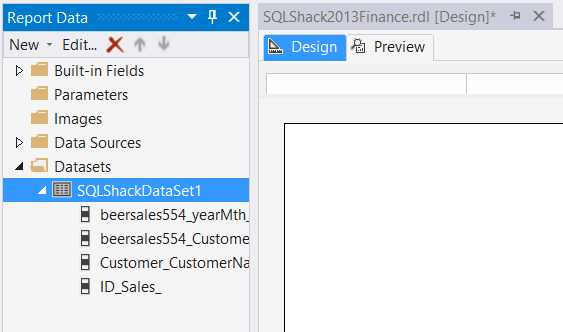

We find ourselves back on our design surface, and we note that the data set has in fact been created (see above and to the left).

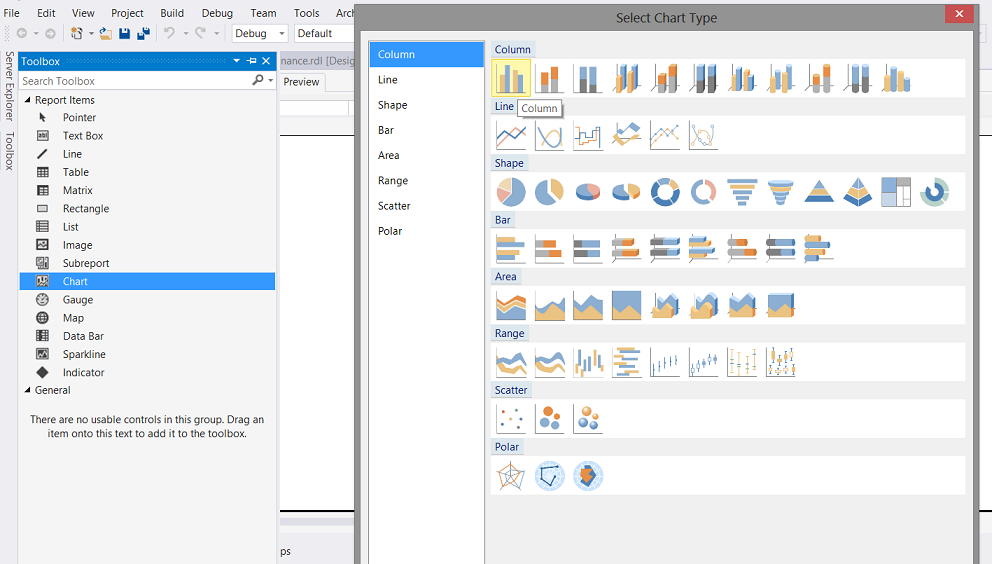

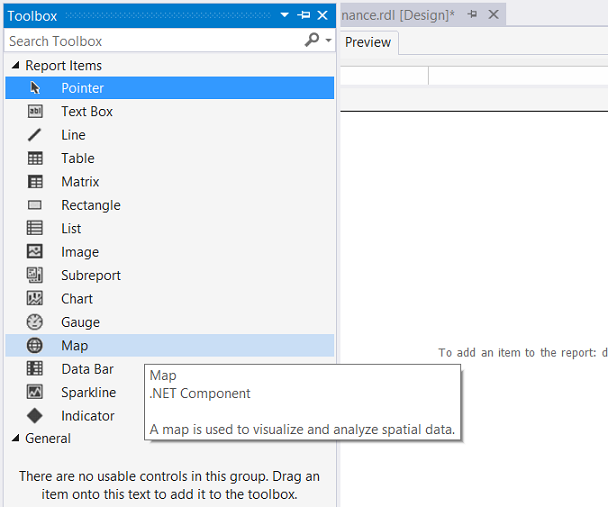

We now bring up the “Toolbox” which may be seen above.

We select a chart and then a “Column” chart (see above).

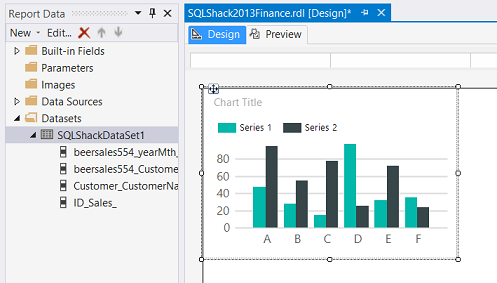

A chart now appears in the white portion of our design surface (see above).

We resize this area (see below).

Our next task is to connect the dataset to the chart.

We click on the chart. The “Properties” dialogue box will open (see above and to the bottom right). We set the “DataSetName” property to our data set.

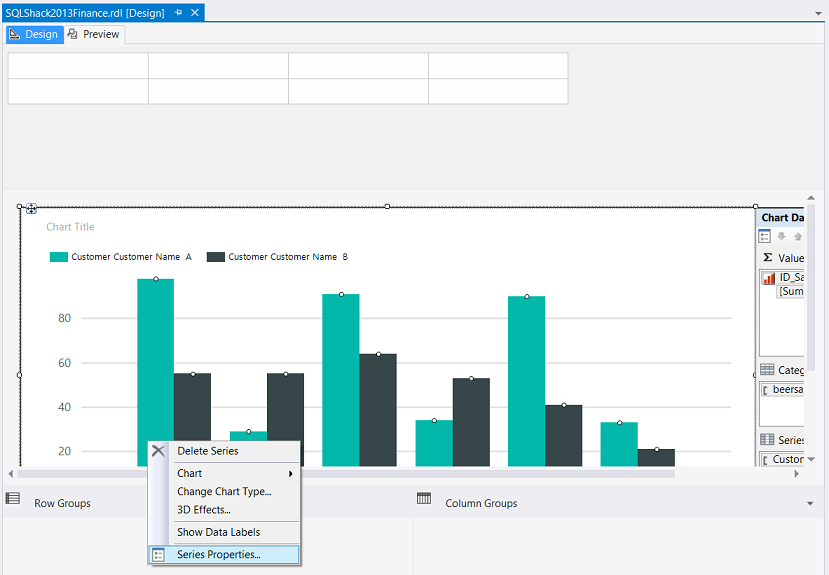

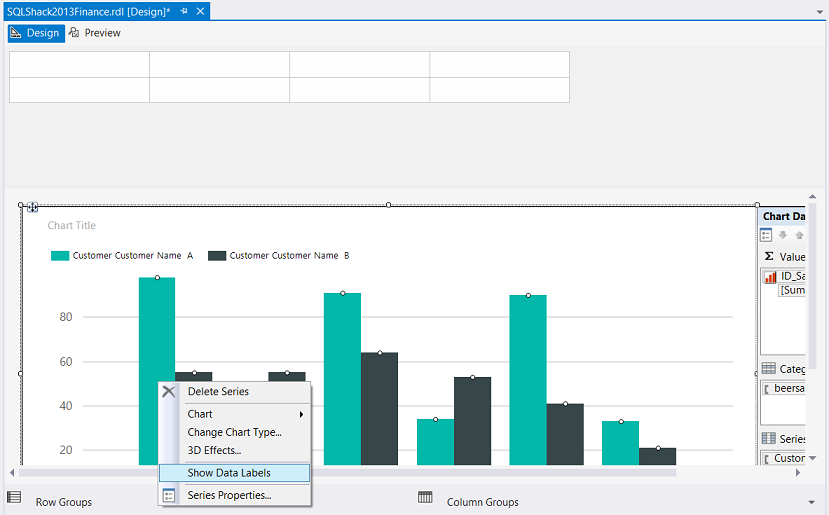

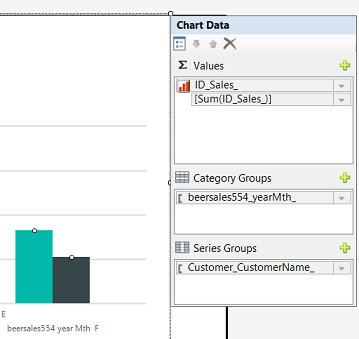

Clicking on the chart also brings up the “Chart Data” box (see above).

We set the Σ to sum the sales. The “Category Groups” to the YearMth and the “Series Groups” to the customer name (see above).

Let us give our report a “whirl”

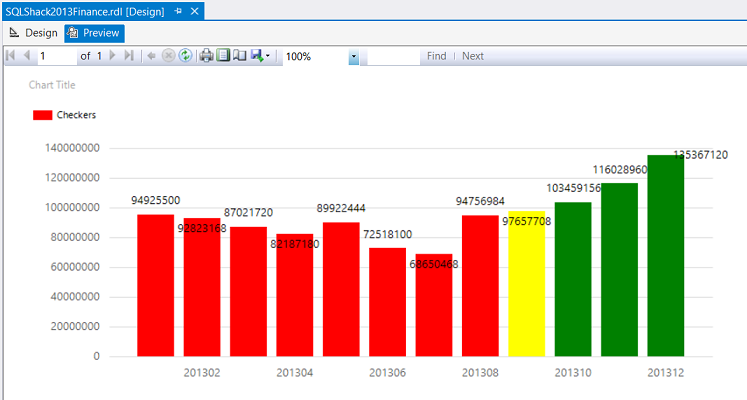

We select the “Preview” tab (see above) and the report comes into view. Unfortunately, it is not very informative. The astute reader will remember that we requested (within the query) results for Checkers only.

Let us add the required coloring to the bars (as described above) and also format the chart.

We right-click on any of the blue bars and select “Series Properties” (see below).

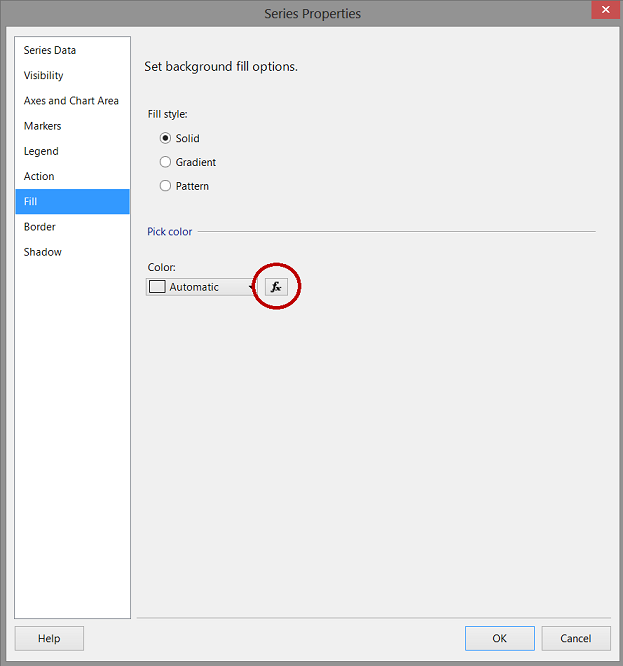

The “Series Properties” dialog box is opened (see below).

We select the “Fill” tab and select the fx tab (see circled above).

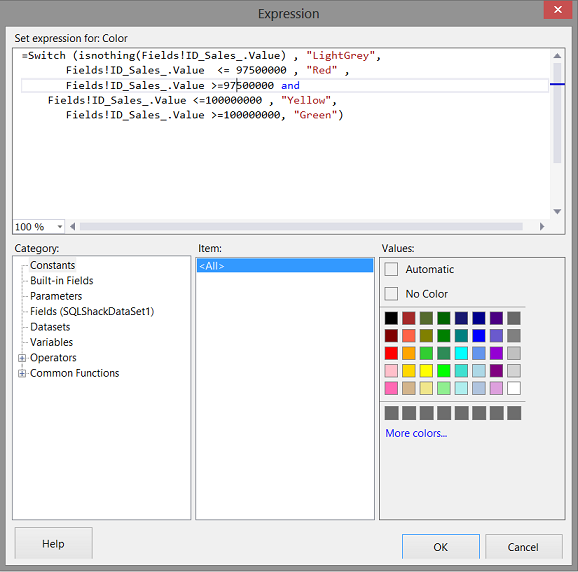

The function box opens and we add the following code (see below).

The code:

|

1 2 3 4 5 6 7 |

=Switch (isnothing(Fields!ID_Sales_.Value) , "LightGrey", Fields!ID_Sales_.Value <= 99500000 , "Red" , Fields!ID_Sales_.Value >=99500000 and Fields!ID_Sales_.Value <=100000000 , "Yellow", Fields!ID_Sales_.Value >=100000000, "Green") |

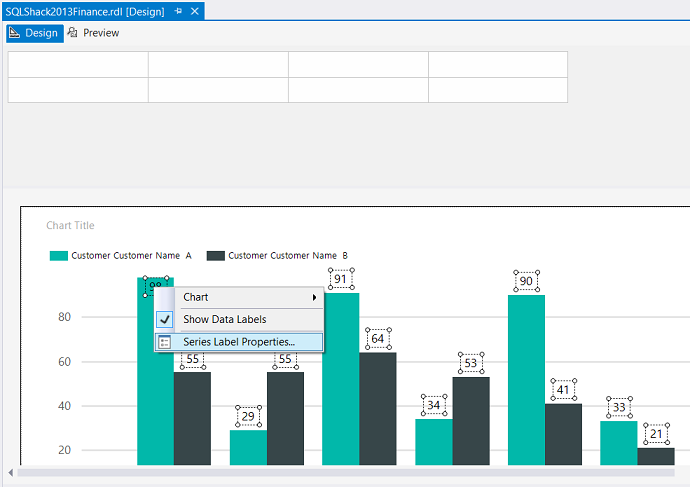

We also would like to see the sales amounts correctly formatted above each vertical bar.

Once again, we right-click on the vertical bar and this time we select “Show Data Labels” (see above).

Running our report, once again we find the following.

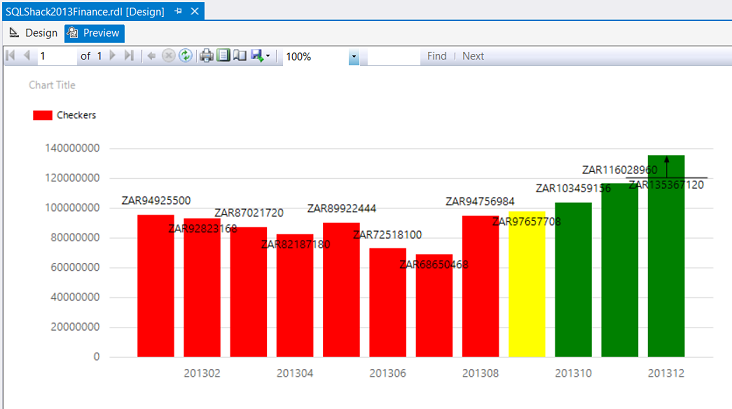

We note that the values are now present however that they are very poorly formatted. Let us fix that now.

Back in “Design Mode”, we right click on the numbers themselves (see below).

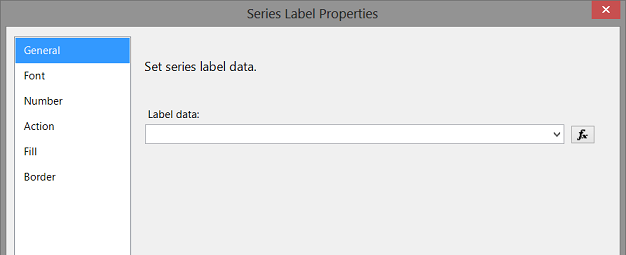

We select “Series Label Properties” (see above).

The “Series Label Properties” box is brought up. We select “Number” and set the currency and format (see below).

We click “OK” to accept these changes.

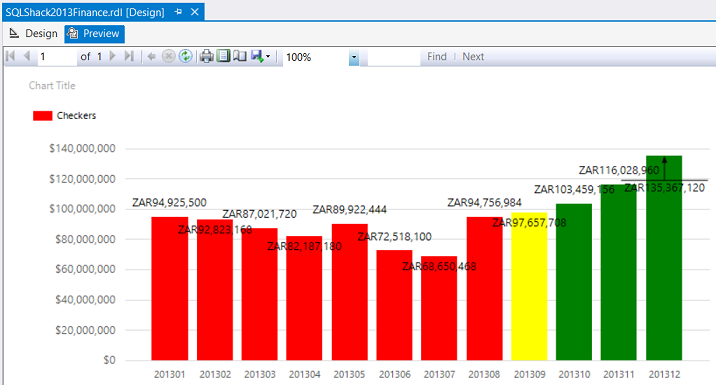

Running our report again, we find the following.

This appears much nicer, however there are still two more tasks to do.

Format the vertical axis of the graph

Show every yearmth name

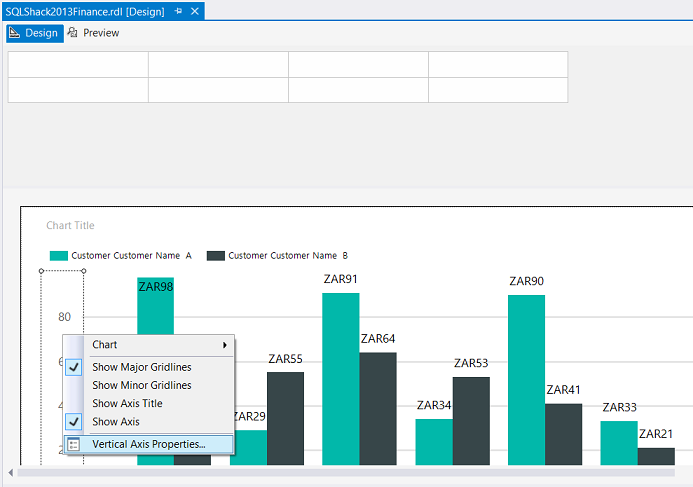

To format the vertical axis, we right-click the axis and select “Vertical Axis Properties” (see below).

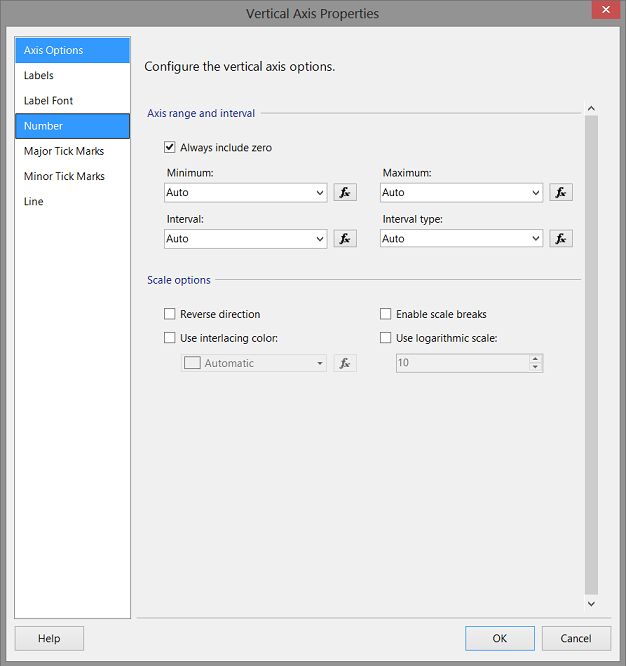

The “Vertical Axis Properties” dialogue box is brought up.

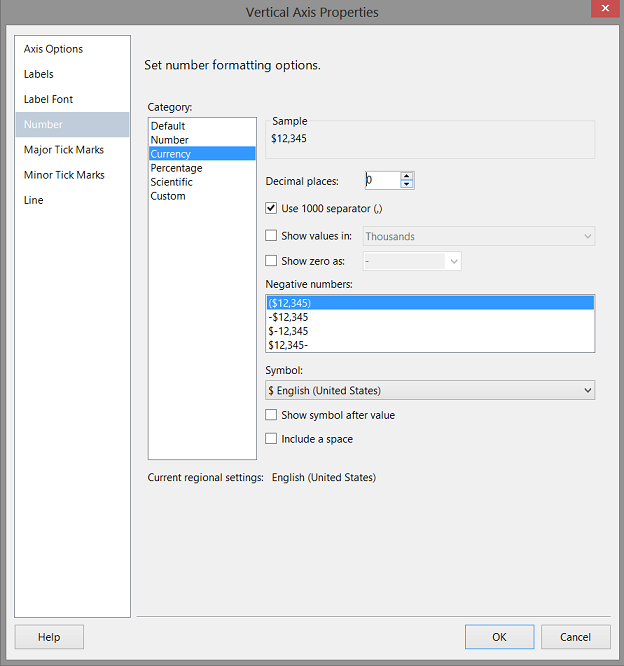

We select “Number”

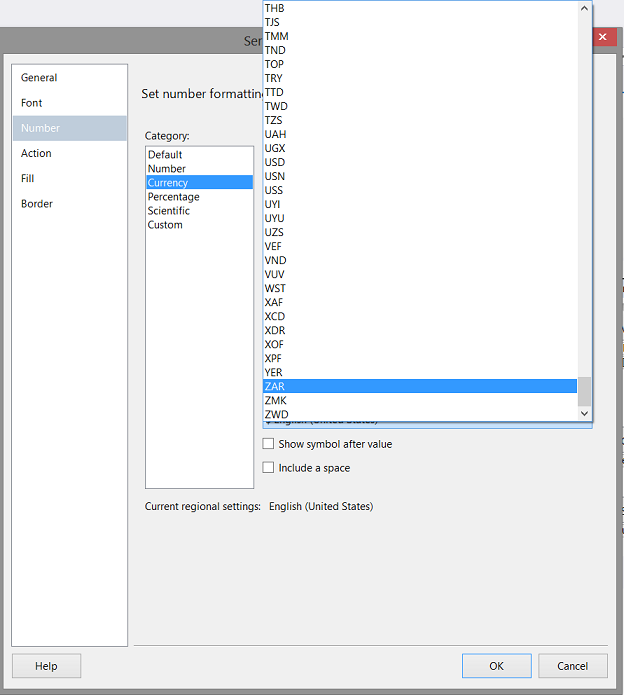

The “Vertical Axis Properties” box changes and we now select “Currency”, no “Decimal Places”, use “1000 separator” and change to currency to “ZAR” (see above).

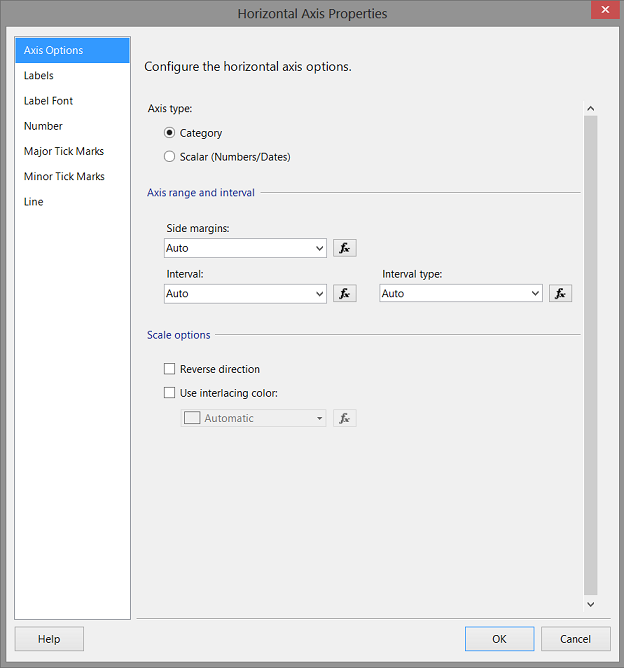

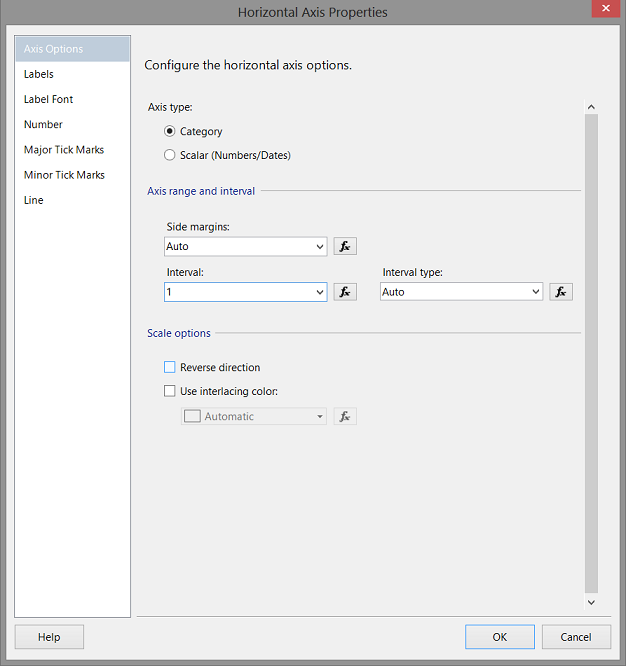

We next format the horizontal axis (see below).

The “Horizontal Axis Properties” dialog box is brought up. We change the “Interval” from “Auto” to 1 (see below).

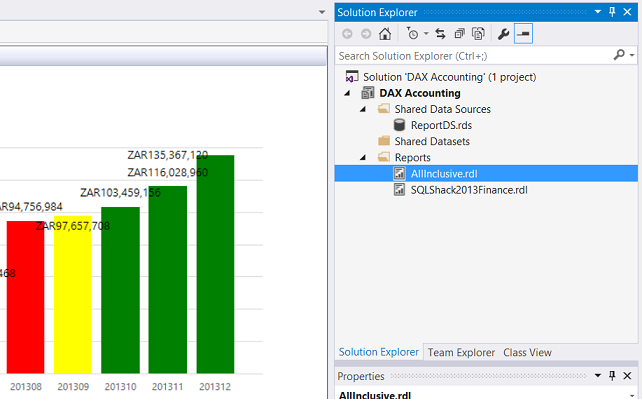

Running our report, yet again we find the following:

This report is much more pleasing, in addition to being more informative.

Being more inclusive

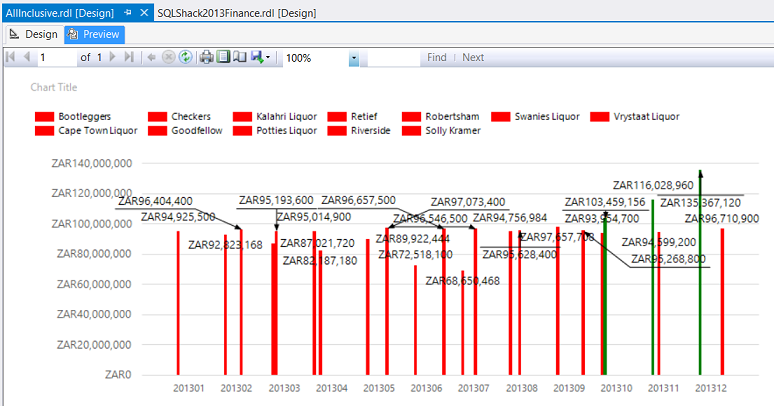

Now that we have our “Checkers” report to the stage that we want it, Mary asked to see the results from Checkers and all the other firms that we have, once again for the same period.

This is easier than it sounds.

We begin by right clicking on our “Checkers” report and click “Copy” (see below).

We paste it in the same folder and rename the file “Allinclusive” (see below).

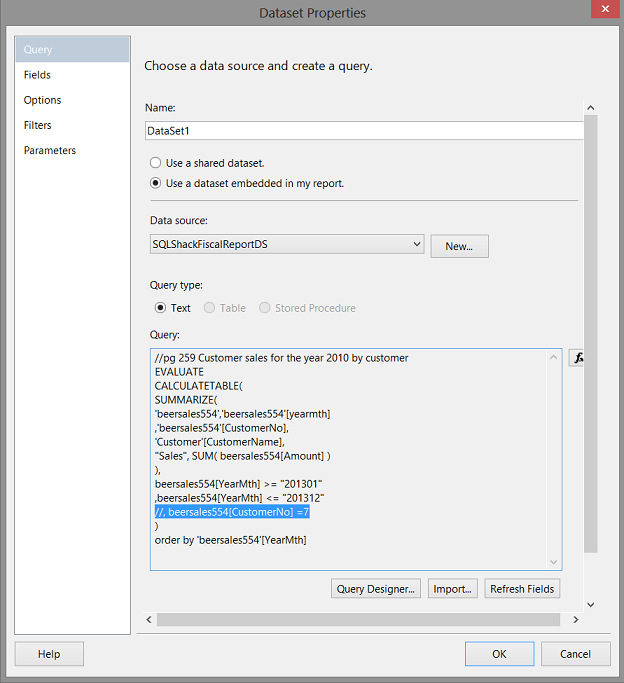

We now open “AllInclusive” and delete the local data set and create a new one in a similar fashion that we did above.

We see our query which is used to extract our data from the database (see above).

What we now do is fairly easy. Just comment out the line of code that says “Where the customer number is 7” (see above).

We are now all ready to test our query.

Our report appears as above HOWEVER we do have issues as we cannot determine “who is who”. In short using the fill feature that we used for “Checkers” is not really appropriate here.

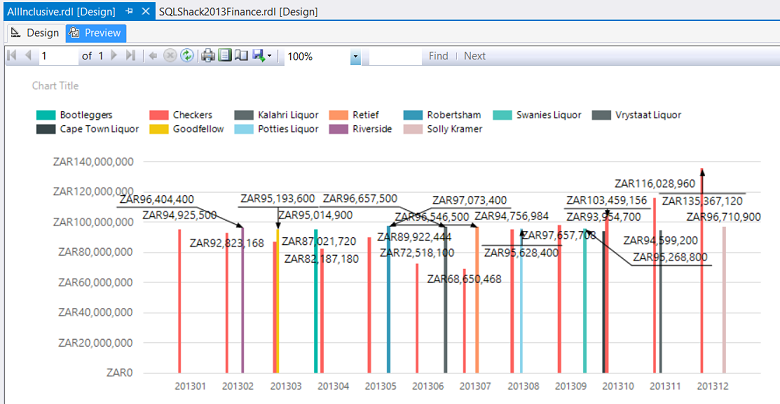

Let us set the fill back to “Automatic”. How to achieve this is described above.

This done, we find that our chart becomes more meaningful.

So we come to the end of another “fireside chat”. I sincerely hope that this presentation has proved stimulating to you and as always your feedback is always appreciated.

Conclusions

Decision makers oft times are faced with difficult decisions with regards to corporate strategy and the direction and correction that must be made in order for the enterprise to achieve its mission and objectives. While many decision-makers prefer to utilize Excel as a reporting front end, we oft time find ourselves in the position where the sheer amount of data makes this option unworkable. We have seen how we may alter the appearance of reports, to add more meaningful information. In our next “get together” we shall be looking at reporting where we are able to change the parameters such as the date and client and how this makes our reporting efforts more efficient and effective.

In the interim, happy programming.

Addenda 1

DAX query

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

EVALUATE CALCULATETABLE( SUMMARIZE( 'beersales554','beersales554'[yearmth] ,'beersales554'[CustomerNo], 'Customer'[CustomerName], "Sales", SUM( beersales554[Amount] ) ), beersales554[YearMth] >= "201301" ,beersales554[YearMth] <= "201312" , beersales554[CustomerNo] =7 ) order by 'beersales554'[YearMth] |

References:

- Charts (Report Builder and SSRS)

- Tutorial: Adding a Bar Chart to a Report (Report Designer)

- Bar Charts (Report Builder and SSRS)

- Reporting in SQL Server – Using calculated Expressions within reports - December 19, 2016

- How to use Expressions within SQL Server Reporting Services to create efficient reports - December 9, 2016

- How to use SQL Server Data Quality Services to ensure the correct aggregation of data - November 9, 2016