We’ve looked at methods to reduce costs within Azure. In some situations, however, a slight increase in Azure costs benefits us because it helps protect our resources and customers, such as when we apply security patches or other critical updates. In these situations, we must keep up to date with the latest patches and updates to development libraries, as well as the possible effects of these updates on our existing code. Likewise, on the resource side, we may keep a resource that only a percentage of our customers use, or that is seldom used, until we’re ready to switch all of our customers over once the appropriate upgrades are made. We’ll look at some techniques that we can use to manage the challenge of critical updates while also keeping costs down, or at least putting Azure costs in the context of what may be more expensive.

The Beta Method

Using a beta version of our software, we can test with a fraction of our customers, who get access to the latest features along with possible bugs. While this may slightly increase Azure costs because we’ll have separate resources for these customers, it allows for faster proof-of-concept testing, especially in tight security contexts. Some of the worst examples of security patching on a short time frame involved software updates that had to be made less than 1 day after the security vulnerability was discovered. Security updates for vulnerabilities may introduce other bugs, especially in our own code base, but the risk of leaving vulnerabilities unpatched is not worth taking. Still, patching is a challenge because it can introduce breaking changes in our code base that we only uncover after the change.

In addition to helping us test security and other updates, this method helps us estimate the scale required for the remaining customers: if our beta is 10% of our resource use, we can derive the demand for when we upgrade the remaining resources. Where costs scale with resource use, this means we can predict our Azure costs for the remaining resources once we see the costs for the beta group.
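The extrapolation above can be sketched in a few lines of Python. This is a minimal sketch that assumes cost scales linearly with resource use, which will not hold for every Azure pricing tier; the function name and the figures are hypothetical and only for illustration.

```python
def project_remaining_cost(beta_cost: float, beta_share: float) -> float:
    """Estimate the cost of the non-beta resources from the beta group's cost.

    Assumption: cost scales linearly with resource use.
    """
    if not 0 < beta_share < 1:
        raise ValueError("beta_share must be between 0 and 1 (exclusive)")
    projected_total = beta_cost / beta_share
    return projected_total - beta_cost


# Hypothetical figures: the beta group is 10% of resource use
# and cost 250 (in any currency) for the month.
remaining = project_remaining_cost(beta_cost=250.0, beta_share=0.10)
print(f"Projected cost for the remaining resources: {remaining:.2f}")
```

If the beta group is not representative of the remaining customers (for example, heavier users opt in to betas), the projection will be skewed, so it is best treated as a rough starting estimate.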

Downtime and Forever Online Methods

If we can allow downtime for testing, we can take our resources offline, upgrade them, and test them while customers receive an indication that the resources are offline during the tests. The earlier we set this expectation with customers, the better, for example by publishing a weekly or monthly maintenance schedule. Given growing security risks, this is a common method for addressing security updates, and it will not add to our Azure costs since we are using existing resources.

An alternative is to maintain uptime with an architecture split into two parts: we change and test one part while the other part remains untouched and continues serving clients. If our changes are validated, we make the flip so that clients use the upgraded part, and then we upgrade the other part. We’ll look at this setup in Azure using web apps and a traffic manager that routes our traffic across the web apps. This setup will cost us more on the Azure side. But if we require constant uptime and downtime during upgrades is the bigger cost for us, this may be the lower-cost option even when we factor in Azure costs (showing the flexibility of using Azure).

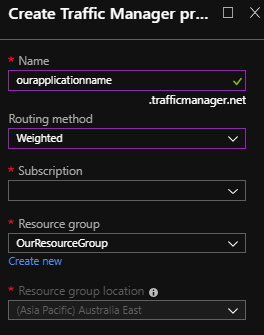

If we don’t already have them, we’ll create two web apps in Azure (not shown, as I use existing test apps). We do not need to deploy code to them, as we will be using both of these for testing. After these are created (or if they already exist), we’ll create a traffic manager with the weighted routing method. For our purposes of routing traffic, based on the information Microsoft provides, either weighted or priority routing could work here. We want the traffic manager to route traffic to these web apps, but when we’re ready to upgrade, we want one of them disabled (or removed) for upgrades. The traffic manager and the duplicate web app add to our Azure costs, but they give us the ability to upgrade one of our web apps offline while another web app stays online for customers. In some situations, downtime for clients will be much more costly than the extra Azure charges, and if we maintained our own environment, we’d pay much higher costs than we do with Azure.

The Azure cost for routing traffic may be minuscule compared to the cost of downtime

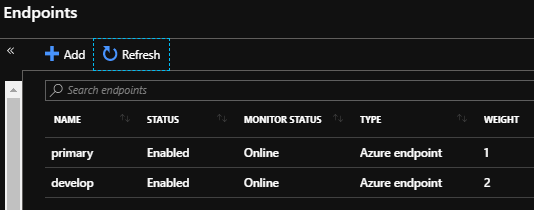

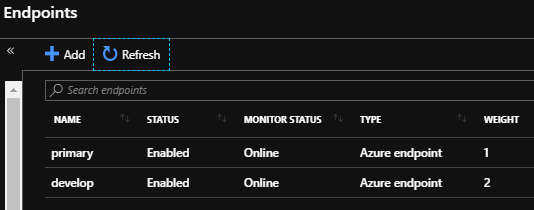

Once we’ve created our traffic manager, we’ll add our two endpoints (the 2 web apps we’ve either created or have ready to use).

The two web apps may add to our Azure costs, but offset costs associated with downtime

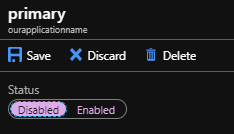

Now that we have both of our endpoints arranged with our weighted traffic manager, we’ll disable one of the endpoints and we’ll look at the web app itself that’s been disabled on the traffic manager (“primary”). The primary web app is still running, even though it’s been disabled on the traffic manager. This means that customer traffic routes to the other web app (“develop”) while we can upgrade the primary web app. While the two web apps add to our Azure costs, our customers can still connect to the develop web app while we upgrade the primary web app.

If we were upgrading our primary and wanted no traffic, we would disable it

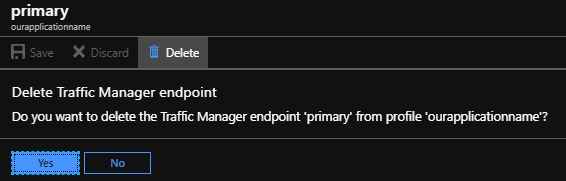
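To make the effect of disabling an endpoint concrete, here is a simplified Python model of weighted routing. It is only a sketch of the idea, not how Azure Traffic Manager is implemented: the `Endpoint` class, the equal weights, and the request counts are all assumptions for illustration. Traffic Manager itself works at the DNS level, so in practice clients with cached DNS answers may keep reaching an endpoint for a short while after it is disabled.

```python
import random
from dataclasses import dataclass


@dataclass
class Endpoint:
    """Hypothetical stand-in for a traffic manager endpoint."""
    name: str
    weight: int
    enabled: bool = True


def pick_endpoint(endpoints, rng):
    """Pick one enabled endpoint with probability proportional to its weight."""
    live = [e for e in endpoints if e.enabled]
    if not live:
        raise RuntimeError("no enabled endpoints; clients would see an outage")
    return rng.choices(live, weights=[e.weight for e in live], k=1)[0]


def simulate(endpoints, requests=1000, seed=0):
    """Route a number of simulated requests and count hits per endpoint."""
    rng = random.Random(seed)
    counts = {e.name: 0 for e in endpoints}
    for _ in range(requests):
        counts[pick_endpoint(endpoints, rng).name] += 1
    return counts


primary = Endpoint("primary", weight=1)
develop = Endpoint("develop", weight=1)

# Both endpoints enabled: traffic is split according to the weights.
both_online = simulate([primary, develop])

# Mirrors disabling the primary endpoint on the traffic manager:
# the web app keeps running, but no new traffic is routed to it.
primary.enabled = False
during_upgrade = simulate([primary, develop])

print("both online:   ", both_online)
print("during upgrade:", during_upgrade)
```

With the primary disabled, every simulated request lands on the develop endpoint, which is the window in which we can upgrade and test the primary.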

Another alternative is to remove the primary endpoint and add it when we’re ready:

Alternatively, we can remove the traffic manager endpoint and re-add it

As we’ve seen, costs may come from the Azure side, but also from the impact on customers. In situations where we must upgrade our code or related resources, the overall Azure cost is only one factor in our decision. To summarize the three methods:

- Beta method. We have a small environment that a fraction of our clients use and test, allowing us to discover bugs early, before we roll out critical updates to everyone, and giving us a preview of the costs. The downside to this method is managing the teams, as the beta team developers must make code changes that are compatible with both the critical updates and the existing code, and must keep up to date with both.

- Offline method. Provided that we set expectations early, this gives us a regular schedule for upgrading our resources without adding to our Azure costs. The main downside arises when critical vulnerabilities are discovered and we need to upgrade resources on a faster timeline than our regular schedule. This method is most appropriate for small, medium, and some large businesses and organizations that can allow offline periods.

- Forever online method. The upside is that we have multiple resources, which allows us to upgrade one and flip to it once it has been tested appropriately, so we always have a resource online. The downside to this method is that it adds to our Azure costs because we have multiple resources. This method is most appropriate for businesses that must be online at all times, which are generally larger businesses. To reduce the costs, we will use the same commitment to secondary resources that we have on primary resources.
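One way to weigh the offline method against the forever online method is to put the extra Azure cost and the cost of downtime in the same units. The Python sketch below does this; every number in it is a made-up input for illustration, and the function names are hypothetical. It also assumes downtime has a roughly constant cost per hour, which is a simplification.

```python
def offline_method_cost(base_azure_cost, downtime_hours, cost_per_downtime_hour):
    """Total cost when we take resources offline to upgrade them."""
    return base_azure_cost + downtime_hours * cost_per_downtime_hour


def forever_online_cost(base_azure_cost, extra_azure_cost):
    """Total cost when duplicate resources and routing keep us online."""
    return base_azure_cost + extra_azure_cost


def break_even_downtime_hours(extra_azure_cost, cost_per_downtime_hour):
    """Hours of downtime at which both methods cost the same."""
    if cost_per_downtime_hour <= 0:
        raise ValueError("cost_per_downtime_hour must be positive")
    return extra_azure_cost / cost_per_downtime_hour


# Hypothetical monthly figures, in any single currency.
base = 1000.0          # existing Azure resources
extra = 400.0          # duplicate web app plus traffic manager
per_hour = 200.0       # estimated business cost of one hour offline
planned_hours = 4.0    # downtime needed for upgrades and testing

offline = offline_method_cost(base, planned_hours, per_hour)
online = forever_online_cost(base, extra)
threshold = break_even_downtime_hours(extra, per_hour)

print(f"offline method: {offline:.2f}")
print(f"forever online: {online:.2f}")
print(f"break-even downtime: {threshold:.1f} hours")
```

With these inputs, the forever online method is cheaper once planned downtime exceeds the break-even point; with a low cost per downtime hour, the offline method wins instead.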

Conclusion

As we’ve seen in looking at Azure costs, we may have situations where we intentionally increase costs to reduce the likelihood of other costs, such as security risks from delayed critical updates. The beta, downtime, and forever online methods each come with advantages and costs, some of which may be from Azure. The beta method allows for continuous testing without affecting most clients. The downtime method gives us the ability to stop everything, run our updates, test our changes, and bring our resources back online. Finally, the forever online method keeps our resources online constantly for our customers while we make changes underneath. While some of these methods will increase costs in Azure, the costs from downtime or from security risks tied to critical updates may be much higher.
