This article aims to walk you through the process to execute a stored procedure hosted in Azure SQL Database from a data pipeline in Azure Data Factory.

Introduction

Data hosted in data repositories can be accessed using the query language of the data repository. These queries can be executed ad-hoc as required while performing ad-hoc analysis which is typically done by analysts. When the data is to be provisioned through standard and well-defined mechanisms or interfaces, typically this is done using database objects like views and stored procedures which are programmed with pre-defined business logic and expose a fixed schema. Stored procedures are one of the most common objects to host business logic in data repositories. While stored procedures are typically consumed by application or application-related interfaces, it’s not the only consumer of stored procedures. Other tools like ETL pipelines often need to perform certain operations on a database which may be scalar or bulk operation or batch process in nature. If the ETL pipelines execute ad-hoc queries on data repositories, it may not be efficient from a performance or maintenance perspective.

To promote centralization as well as reusability of data-related logic, ETL pipelines should perform data-related operations on data repositories using stored procedures. On the Azure cloud platform, Azure Data Factory is the primary service for performing ETL and building data pipelines. One of the most popular database offerings on the Azure platform is Azure SQL Database which has stored procedures as it’s a first-class database object. While developing Azure Data Factory pipelines that deal with Azure SQL database, often there would be use-cases where data pipelines need to execute stored procedures from the database. In this article, we will learn how to execute a stored procedure hosted in Azure SQL Database from a data pipeline built with Azure Data Factory.

Pre-requisites

As we are going to execute a stored procedure that would be hosted in a database using a data pipeline built with Data Factory, the first few things that we need in place is an instance of Azure SQL Database and an instance of Azure Data Factory. It is assumed that these instances are already in place and configured with the right level of network connectivity such that Azure Data Factory can access Azure SQL Database.

Once these instances are created, the next thing we need is a sample table and a stored procedure that can operate on this table. We can use SQL Server Management Studio (SSMS) on a local machine and connect to the instance of Azure SQL Database to execute T-SQL statements to create a new table as well as a stored procedure. It’s assumed that SSMS is already installed and connected to the Azure SQL Database instance.

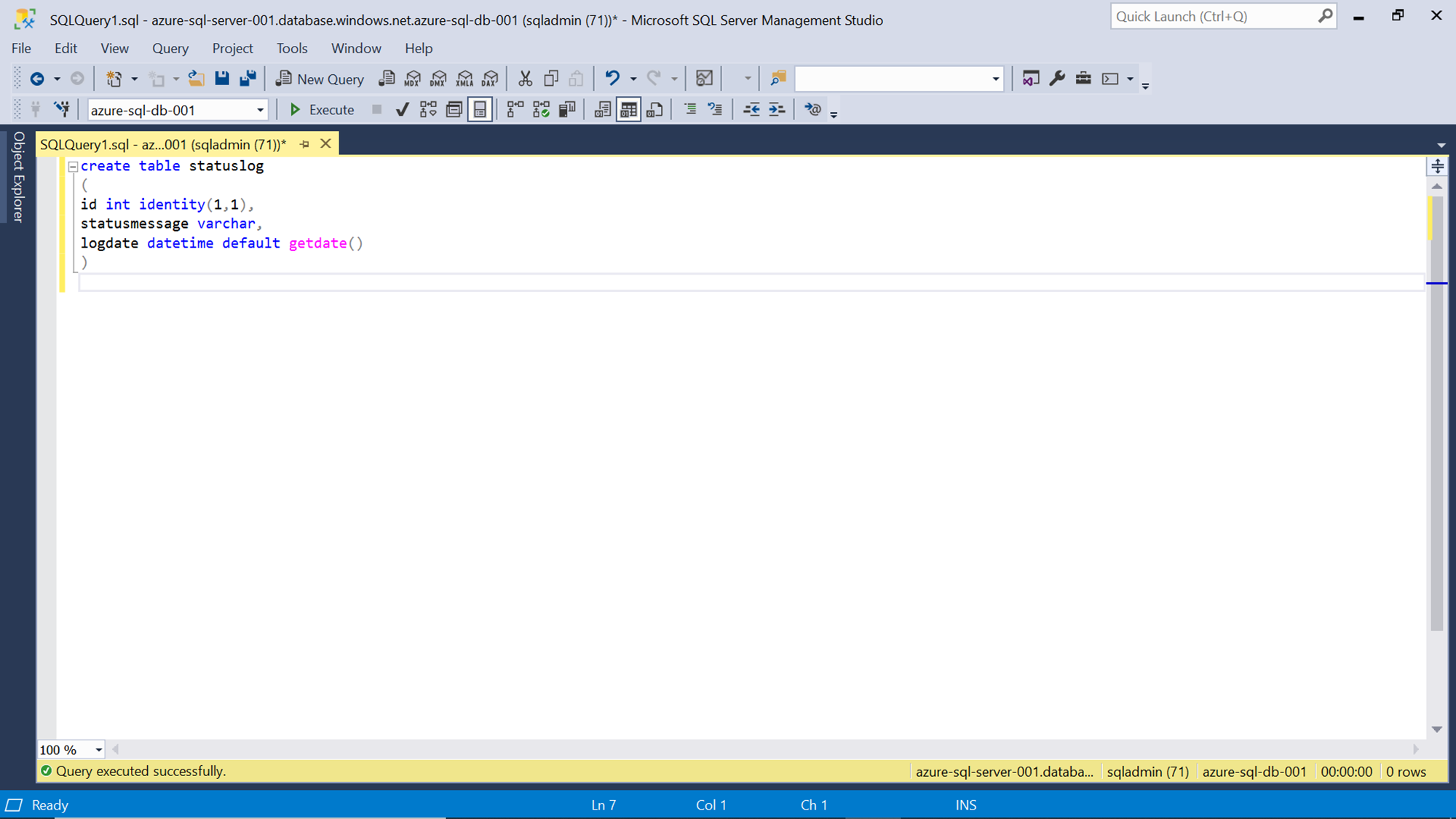

Let’s say that there is a centralized log table in the Azure SQL Database to log messages from all the data pipelines. To emulate this scenario, we can create a sample status log table where we will capture the message logs. The SQL script to create one such table is shown below. Here we are creating a table named status log with an auto-incrementing id, status message, and a log time field with a default value of the time when the record is inserted.

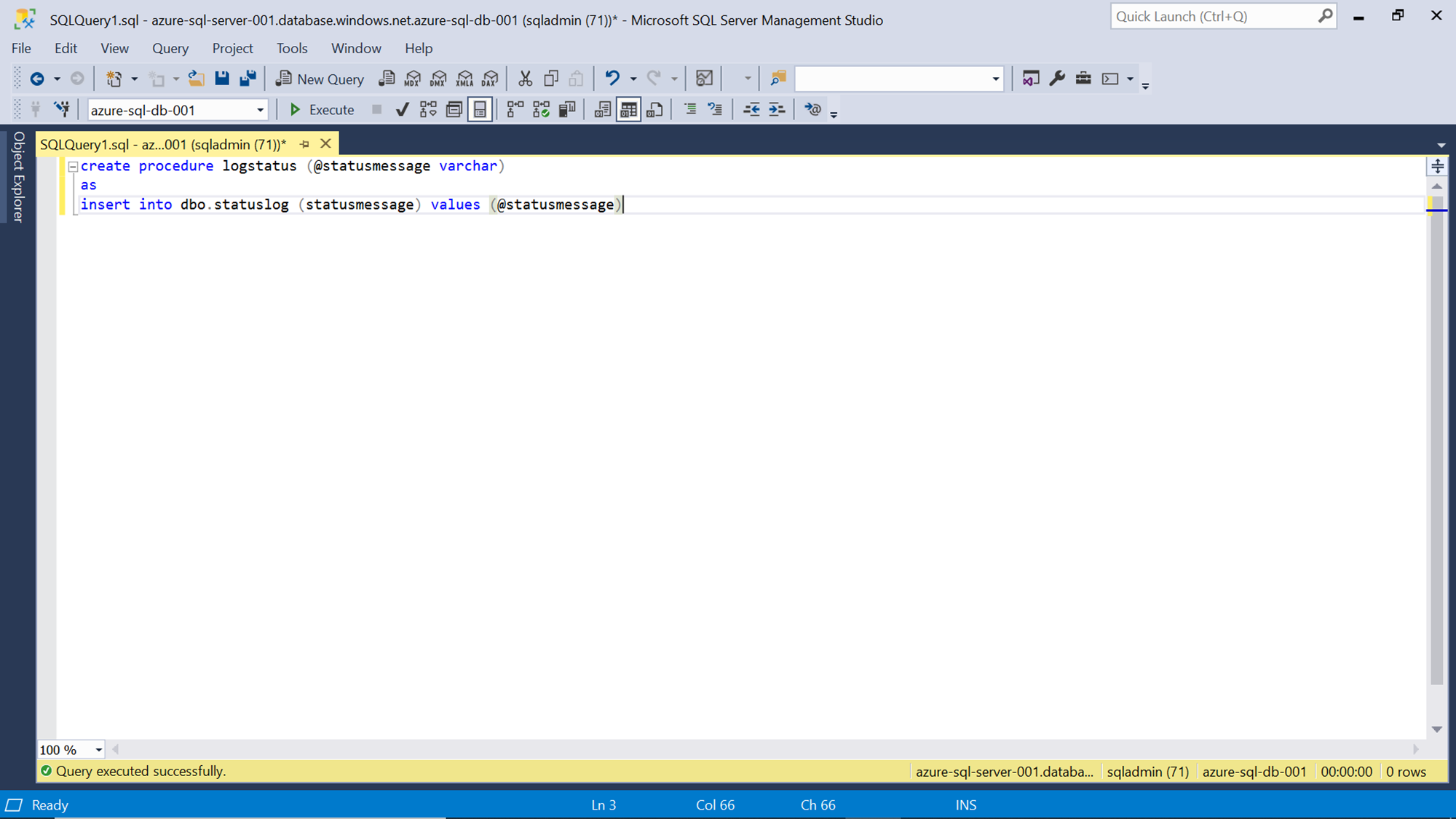

Next, we can create a store procedure that accepts the log message as the input parameter and insert a record in the table that we created above. The SQL script to create such a stored procedure is shown below. Here we are creating a stored procedure name log status with @statusmessage as the input parameter. The insert statement inserts data in the status log table with the same message that was provided as the input parameter to the stored procedure.

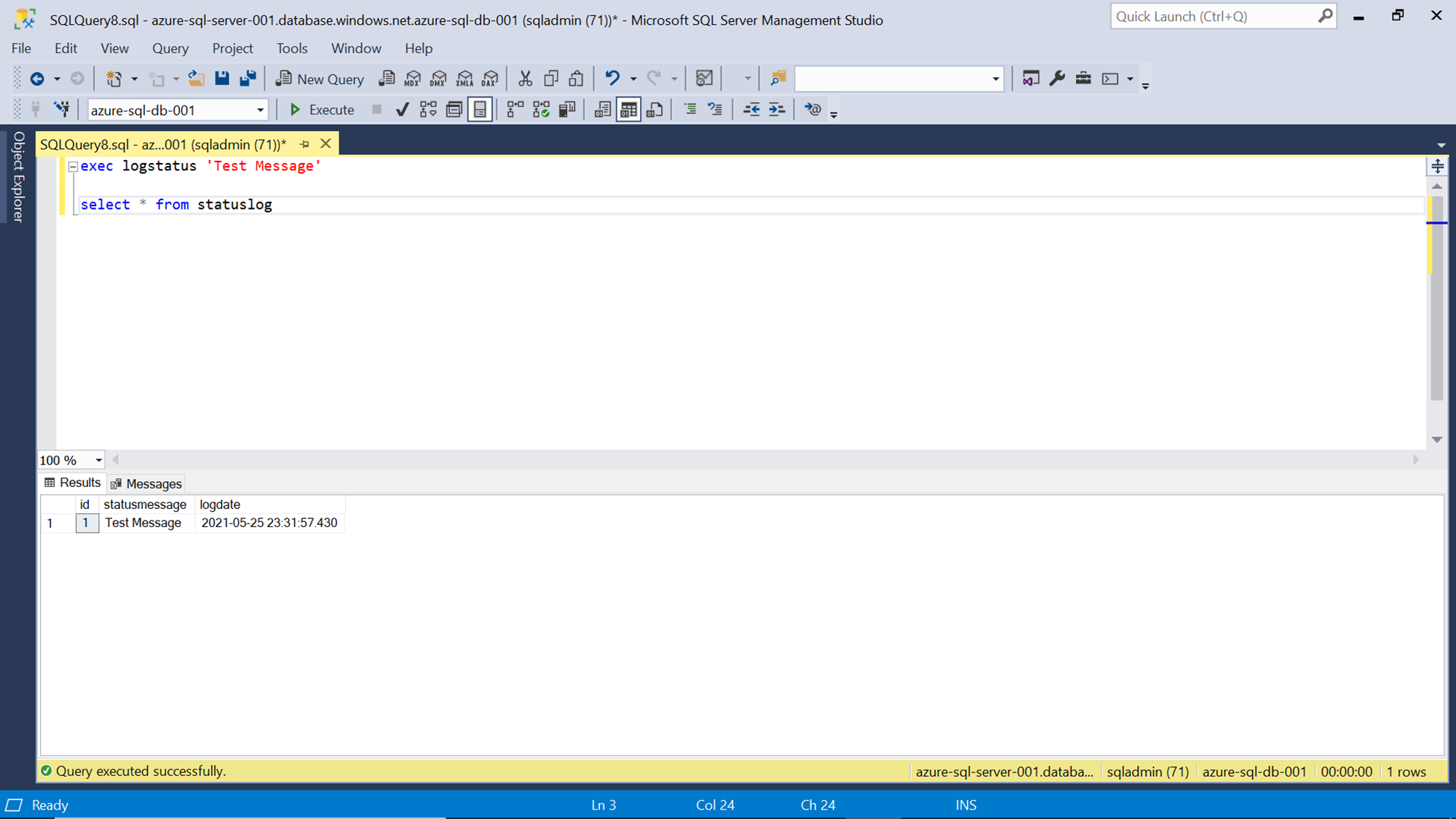

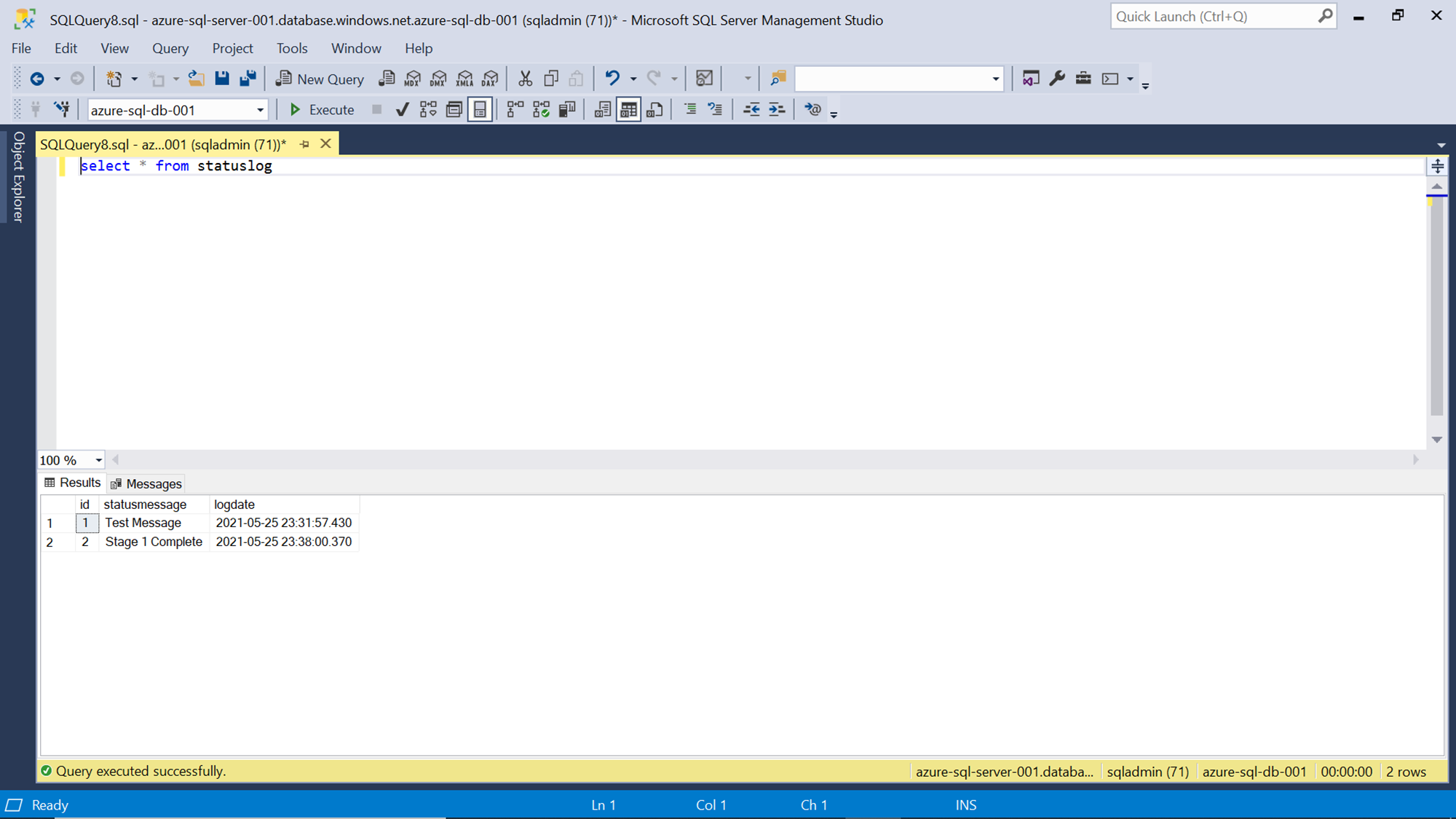

We can test this stored procedure by executing it using the exec statement as shown below. Once the stored procedure gets executed successfully, we can verify whether the record got inserted by querying the table using the select statement as shown below.

Now we have all the pre-requisites in place to start working on the data pipeline that would consume this stored procedure from Azure Data Factory.

Executing Stored Procedure from Azure Data Factory

Navigate to the Azure Data Factory instance in the Azure portal and click on the Author & Monitor link that will open the Data Factory portal as shown below. Since we intend to create a new data pipeline, click on the Create pipeline icon in the portal.

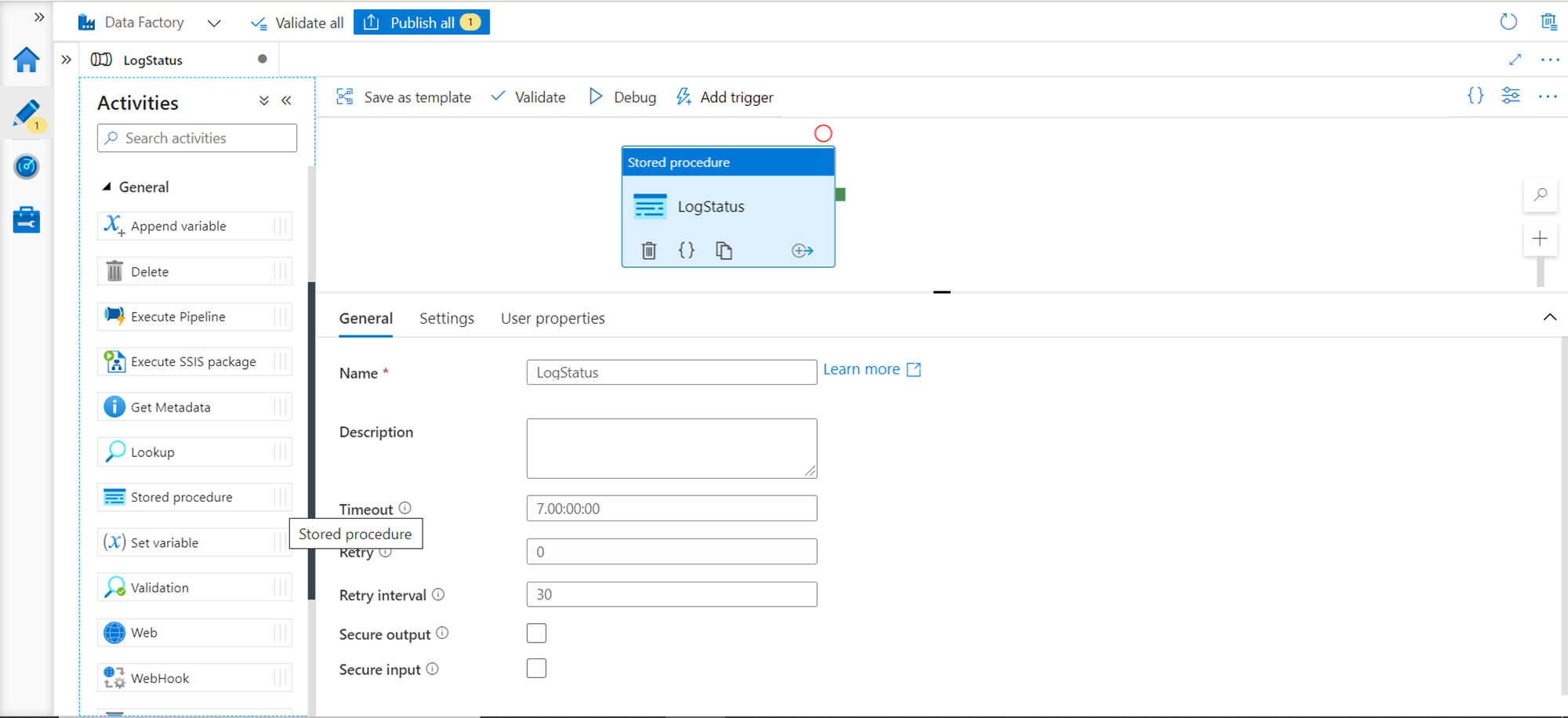

Navigate to the Factory Resources section, and from the pipelines section open the ellipsis and select the new data pipeline menu item. It would create a new blank data pipeline. Generally, there will be a number of tasks and data transforms in a data pipeline. Under the General section, we can find the Stored procedure activity as shown below. Drag it and drop it on the layout to add this control to the data pipeline. We can name it something appropriate as shown below.

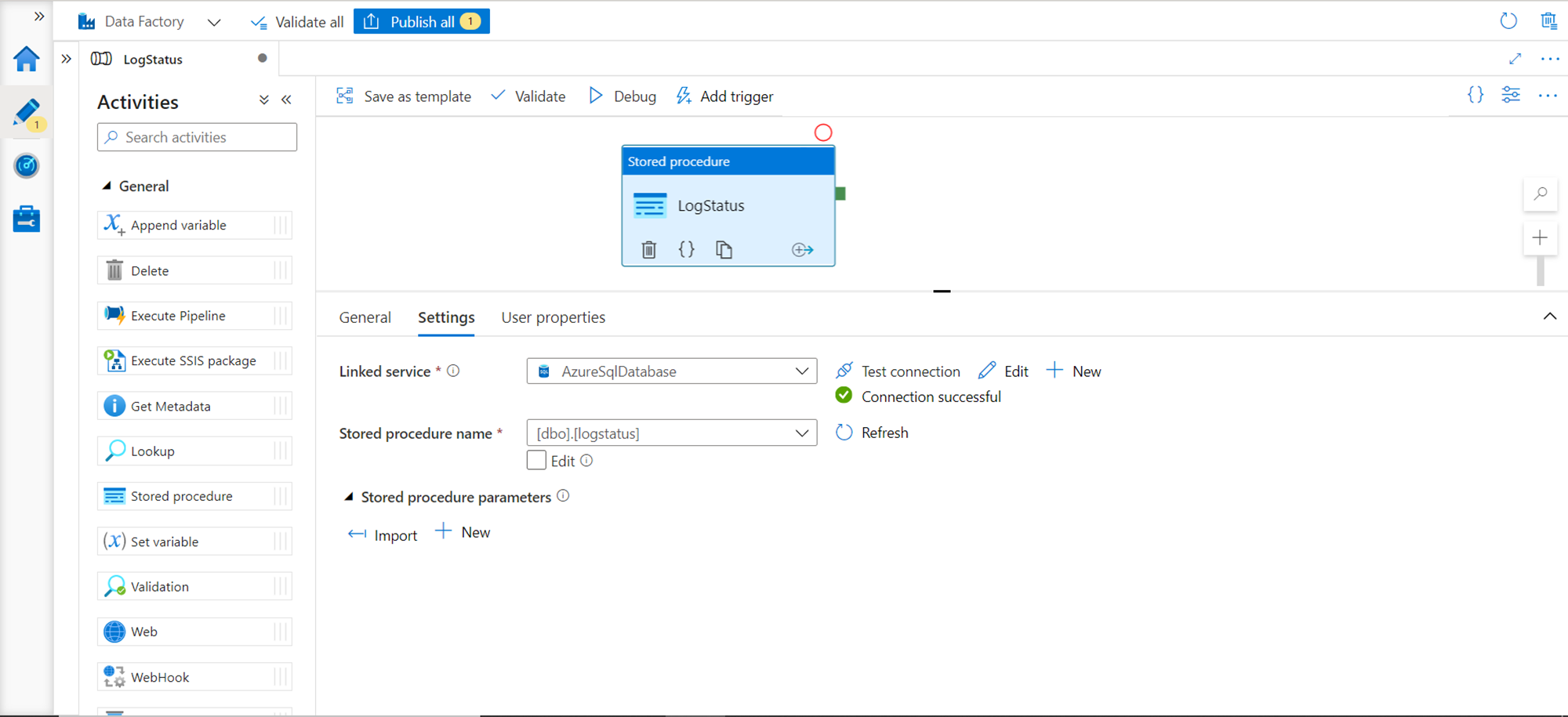

Next, we need to configure the settings of this control. Click on the Settings tab to configure the settings. We need to have a linked service created of the Azure SQL Database type. If you have not registered it already, consider creating a new one by clicking on the New button. By creating a linked service, we are creating a connection from Data Factory to Azure SQL Database instance. As mentioned in the pre-requisite section, it is assumed that this connectivity already exists between Azure SQL Database and Azure Data Factory by means of a linked service registered in Azure Data Factory. Once this linked service is created, it will appear in the drop-down of the Linked Service as shown below. After testing successful connectivity using the Test connection button, the store procedure that we created in the pre-requisite section should appear in the stored procedure name drop-down. Select the same as shown below.

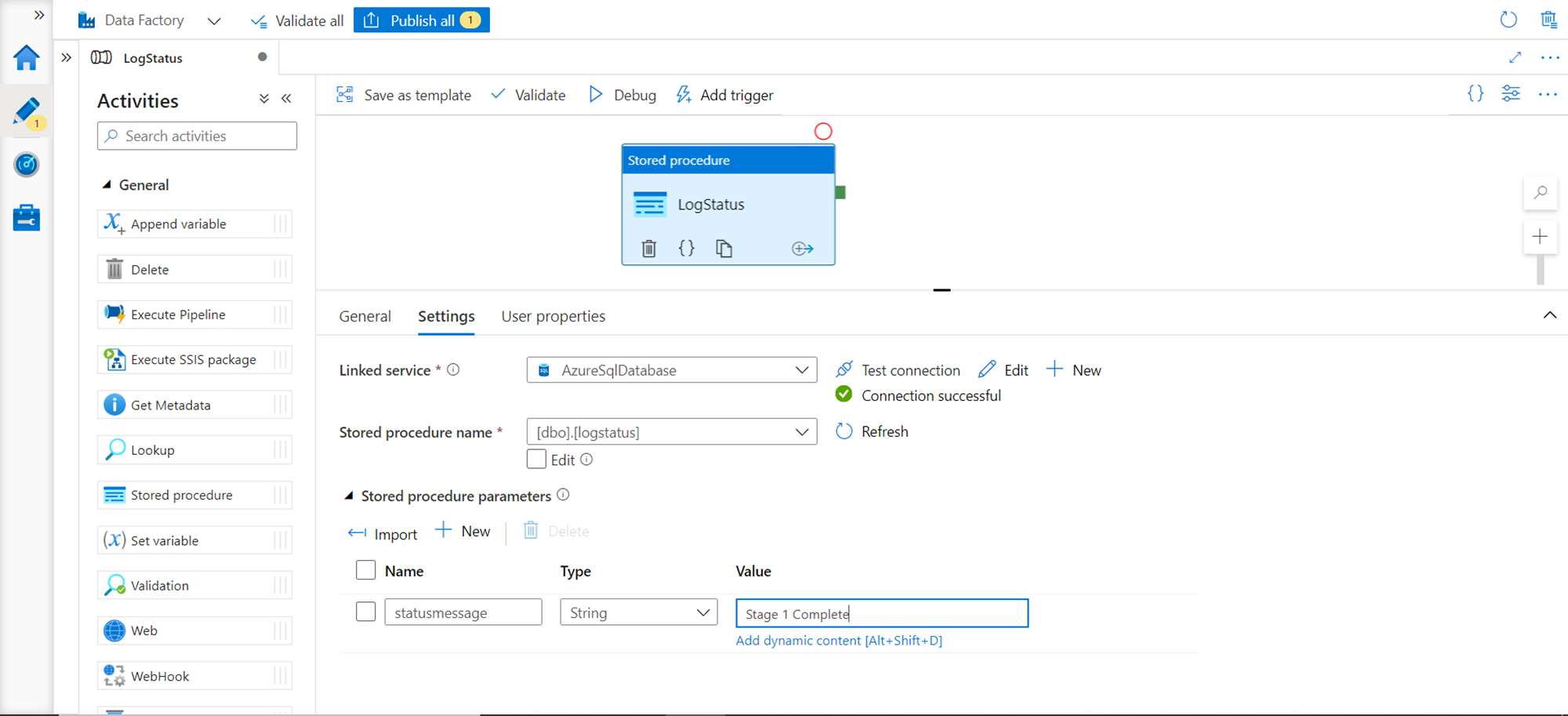

We configured this control to point to the stored procedure, but we are still not ready to execute this stored procedure. The reason being we have not configured the parameter that is expected by this stored procedure for execution. We can add parameters by clicking on the New button under the Stored procedure parameters button as shown below. Here we can add a static message that will be added every time the data pipeline executes. Alternatively, if the message must be dynamic which would depend on some variables, the same can be configured by clicking on the add dynamic content link below the value field as shown below. For this exercise, we would assume that this stored procedure is executed to log some status message when the data pipeline execution is completed to a certain level. So, we are adding a static message which would be logged as shown below.

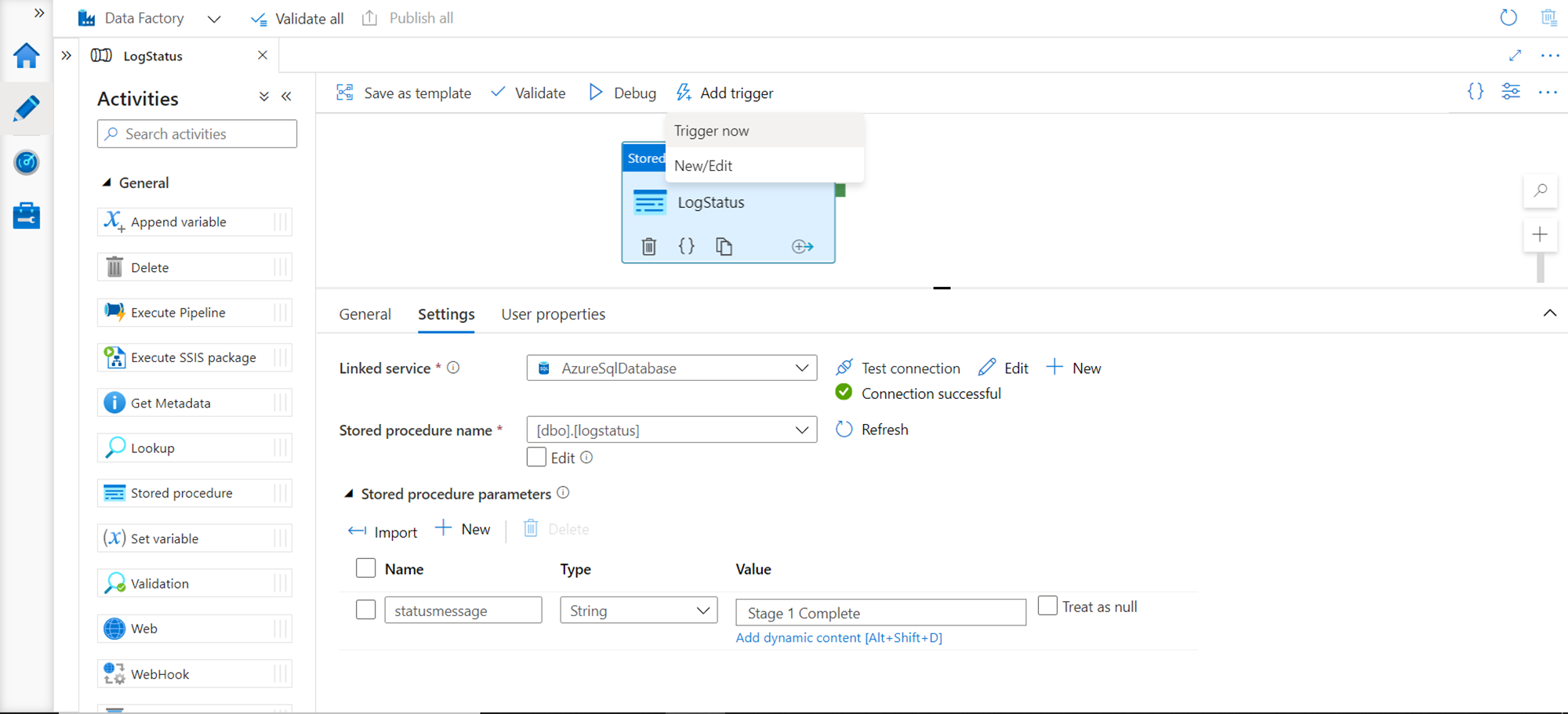

We are ready now to execute this data pipeline, but before executing we need to publish the configuration of this data pipeline, which the trigger will use for execution. Publish the changes by clicking on the Publish all button and then click on Add trigger button. Under this button, we would find a Trigger now menu option as shown below. Click on this menu item to start the data pipeline execution right away.

Once the execution is complete, navigate to SSMS and query the status log table. If the data pipeline execution was successful, we would find the new message inserted as a record in the status log table as shown below.

In this way, we can execute a stored procedure hosted in an Azure SQL Database using a data pipeline created using Azure Data Factory.

Conclusion

In this article, we learned the importance of stored procedures in centralizing business logic in database objects. We created a database instance with a stored procedure, a data pipeline in the data factory and consumed the stored procedure from this data pipeline.

- Oracle Substring function overview with examples - June 19, 2024

- Introduction to the SQL Standard Deviation function - April 21, 2023

- A quick overview of MySQL foreign key with examples - February 7, 2023