Capacity management is one of the most important tasks you have to perform as a DBA. Usually we rely on threshold alarms for the disks volumes and the databases, however I find it very suitable for me to take actions in advance – this way I am sure the SQL Servers under my supervision will have the needed space to operate without issues. And yes, there is the auto-grow, however it’s a safety mechanism which role is to ensure that even in critical situations you can have your database operational, however this does come with a negative performance impact which I try to avoid for my key production systems.

There are a lot of articles out there that provide you with a pretty detailed information about the size of databases, volumes, tables, partitions but none of them focus on the future usage. Except maybe two – the first one is from Microsoft Operations which focuses on the ‘days remaining’ metric and the second one from Chad Miller which we will use as a base and develop it further.

Why ‘days remaining’ is such an important metric for us? When you are dealing with tens of thousands of databases, thousands of disks you need to focus only on the objects that require your immediate attention.

Let us go true the steps for the deployment of the solution:

- Create the database, tables, views and the stored procedures

- Configure the SQL Servers for which we will capture data

- Schedule the data collection for the volumes and the databases

- Alternatively use SSIS to load data from external sources

- Configure data purity and data purge jobs

You can download the scripts you will need from here;

Create the database, tables, views and the stored procedures

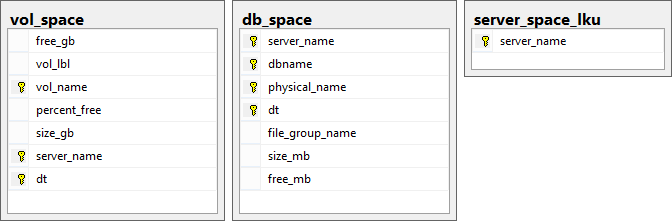

As a start we need to create a database to store our information, in my case I will name it capacity_management; Using the provided scripts (structure.sql) we will create the three tables – vol_space, db_space and server_space_lku, the views – db_space_change_vw, vol_space_change_vw and the stored procedures db_space_capacity_sp, vol_space_capacity_sp;

Configure the SQL Servers for which we will capture data

The PowerShell scripts that we will configure later use the table server_space_lku and captures data for all SQL Servers listed in it. Thus considered that you have a the possibility to connect to all your of your SQL Servers.

Let us add Europe\Kaloyan01 as a SQL Server in it:

123insert server_space_lku (server_name) values (‘Europe\Kaloyan01’)If you want to reuse your CMS server and to query specific containers from it you can create the database capacity_management there and use the PowerShell script Write-DbSpaceToDb_CMS.ps1 instead of Write-DbSpaceToDb.ps1; the only requirement is to alter the ‘WHERE’ clause at the end of the script;

Example: in the provided *_CMS.ps1 scripts I am quering the servers in group ‘SQL2012’;

123456789101112Get-SqlData $destServer $destDb "SELECT server_name FROM (SELECT DISTINCT groups.name AS 'server_group_name',svr.server_name AS 'server_name'FROM msdb.dbo.sysmanagement_shared_server_groups_internal groupsINNER JOIN msdb.dbo.sysmanagement_shared_registered_servers_internal svrON groups.server_group_id = svr.server_group_idWHERE groups.name like 'SQL2012') a" |foreach {$dataTable = Get-DBSpace $_.server_nameif ($dataTable){ Write-DataTableToDatabase $dataTable 'db_space' } }Schedule the data collection for the volumes and the databases

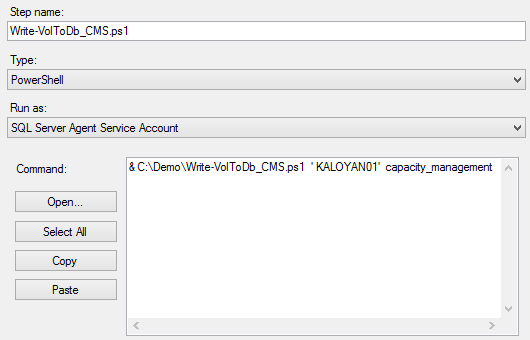

This can be done by Windows Task Scheduler or SQL Server Agent; (the two parameters that are required are the name of the SQL server and the name of the database which we created in the beginning)

In my case I have created a SQL Server Agent job that uses the PowerShell scripts Write-DbSpaceToDb_CMS.ps1 and Write-VolToDb_CMS.ps1; The two parameters that are required are names of the destination server and database capacity_management where we will store the information.

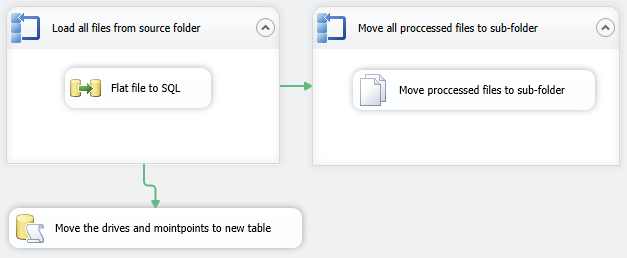

Alternatively use SSIS to load data from external sources

Considering that you do not have a direct connection to all of your SQL Servers you can use SSIS to load data from csv files for example. What I’ve done in my case is to gather data daily on each of my servers and upload it with ftp to a remote server. Then I download all the csv files to my CMS server and load them via SSIS; The SSIS package I use consist of several steps:

- For each loop container that load all files into a temporary table

- For each loop container that moves the processed files into an archive folder

A SQL script that splits the data from the temp table into vol_space and db_space tables

*hint: I am doing this for Oracle and MySQL servers.

Configure data purity and data purge jobs

I wanted to implement purge and data purity tasks in my solution in order to minimize the efforts supporting it. In the beginning I was storing data for the last 180 days; Now I store data for the last 730 days (for reporting purposes) but I use the last 180 days for calculation of the ‘days to live’ metric. Example on how to control the period will be discussed in just a moment.

The data purge job is a simple SQL Agent job that I have it running every day. It consists of the following:

1234567use capacity_managementgodeclare @730daysago datetimeSET @730daysago = DATEADD(dd, -370, GETDATE())DELETE FROM capacity_management.dbo.db_space_capacity_sp WHERE dt <= @730daysagoThe data purity job is again a SQL Agent job that is running every day just before the report generation. I am deleting the data for the tempdb disks, quorum disks, OS root disks, and other non SQL related ones.

Okay, now that we have everything configured let us go true the information that is being captured and the mechanism that is calculating the ‘days to live’ metric.

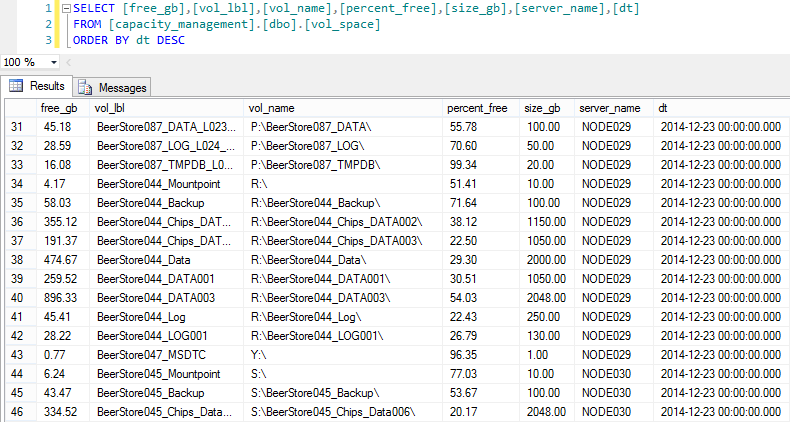

First of all let us check what type of information I have collected in my capacity_management.dbo.vol_space table.

We need only a few columns to start the calculation:

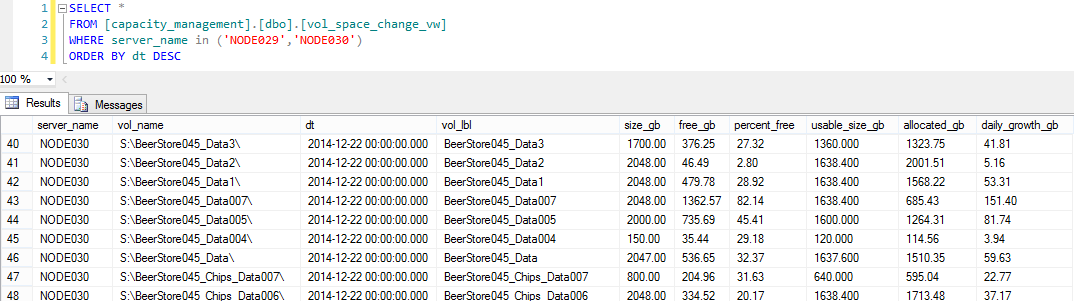

Now that we have this let us check what the view capacity_management.dbo.vol_space_change_vw is storing

Ok, we are somehow there. We have the average grow per day calculated.

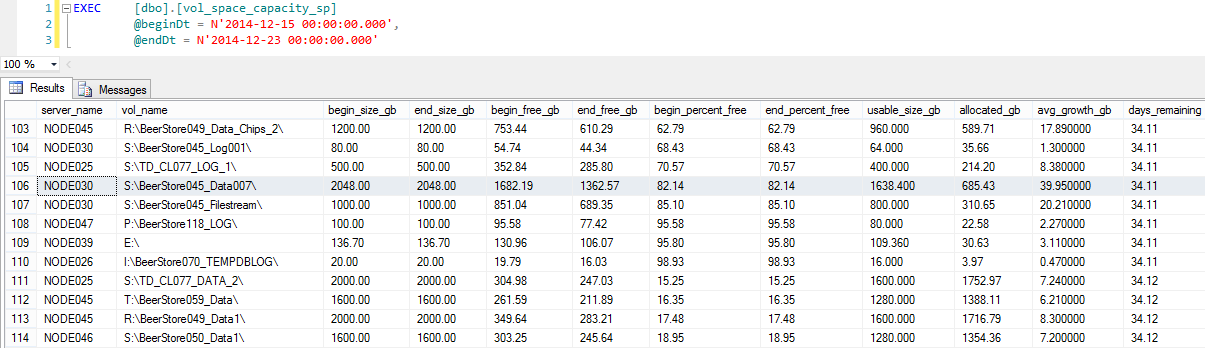

And last but not least the stored procedure that we will be using for the forecast capacity_management.dbo.vol_space_capacity_sp:

Now we have information about what was the total size and the used space of the volumes the in the first date (@beginDT), what are they at the current moment (@endDT). The stored procedure is able to calculate the average grow for each volume in our case; This is column avg_growth_gb; Once we have this information we are able to get the available space for the volume and divide it to the average grow per day resulting in the ‘days to live’ metric. In our case for disk S:\BeerStore045_Data007\ if the data on the disk continues to grow with the same rate it will take 34.11 days to fill it up.

The same can be done for database objects using the stored procedure db_space_capacity_sp instead.

I hope that you will find this useful in your day to day activities and will save you time and effort.

In the next article we will cover the build of the SSRS reports.

- Performance tuning for Azure SQL Databases - July 21, 2017

- Deep dive into SQL Server Extended events – The Event Pairing target - May 30, 2017

- Deep dive into the Extended Events – Histogram target - May 24, 2017