Introduction

In-memory technologies are one of the greatest ways to improve performance and combat contention in computing today. By removing disk-based storage and the challenge of copying data in and out of memory, query speeds in SQL Server can be improved by orders of magnitude.

TempDB is one of the biggest sources of latency in SQL Server. Servicing requests for temporary data structures and maintaining metadata about those structures is one of the most significant bottlenecks in SQL Server.

Memory-Optimized TempDB metadata addresses both of these concerns by allowing TempDB metadata to be moved into memory, which can greatly improve the performance of any workload that makes heavy use of temporary data structures.

Background

TempDB is a shared resource used by SQL Server for a variety of different purposes, some of which include:

- Storage of temporary tables and table variables

- Storage of work files needed for hash join/aggregate operations

- Data returned by table-valued functions

- Data cached for use by cursors

- Work tables for spools and sort operations

- Some index rebuild operations, especially if SORT_IN_TEMPDB is turned on

- Row versions used by snapshot isolation, online index rebuilds, AFTER triggers, and multiple active result sets (MARS)

In other words, TempDB is used all over the place, though its function is generally hidden from developers and technicians. Unless you already know how SQL Server works internally, or are willing to test and find out, it would not be obvious that many of these things are stored in TempDB.

The key to TempDB operations is that objects are stored only until they are dropped, the session disconnects, or the service restarts. When SQL Server starts up, TempDB will be empty. TempDB operations are minimally logged, and there is no need to persist its objects permanently because their use is always transient. As a result, it is the perfect candidate for in-memory technologies, as memory is also a transient repository for data.

TempDB can roughly be divided into two types of data:

- Metadata describing the data structures stored in TempDB

- The data contained within these data structures

This article discusses how SQL Server 2019 allows us to store metadata about temporary objects in memory, rather than on disk. This metadata includes system tables, such as sys.tables, sys.columns, or sys.procedures. The actual data stored within temporary objects is still stored in TempDB using traditional non-memory based storage methods.

Why This Matters

Metadata in TempDB is shared across all databases and processes on a given SQL Server. If a database manipulates temporary objects frequently, or if a server has a high volume of databases that make use of temporary objects, then contention on metadata tables may become problematic.

The symptom of this problem would be latency typically associated with page latch waits as TempDB pages are removed from cache, or when DDL operations are executed against existing temporary objects. The more temporary objects exist and the more they are manipulated, the greater the contention that will be seen across a given server’s workload.
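One way to look for this symptom on a live server is to check which tasks are currently waiting on page latches for TempDB pages. The query below is a minimal sketch, assuming TempDB has its usual database ID of 2. It shows all TempDB page latch waits, not only those on metadata pages, so treat the results as a starting point rather than a diagnosis:

```sql
-- Tasks currently waiting on page latches for pages in TempDB (database_id = 2).
-- resource_description is formatted as database_id:file_id:page_id.
SELECT
    owt.session_id,
    owt.wait_type,
    owt.wait_duration_ms,
    owt.resource_description
FROM sys.dm_os_waiting_tasks AS owt
WHERE owt.wait_type LIKE 'PAGELATCH[_]%'       -- excludes PAGEIOLATCH waits
  AND owt.resource_description LIKE '2:%'
ORDER BY owt.wait_duration_ms DESC;
```

An empty result on a quiet server is expected. The contention described here tends to show up only under a heavy concurrent workload.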

There are many optimizations available that improve performance on TempDB. I’ve included some Microsoft references at the end of this article that provide quite a few optimizations and best practices for managing TempDB data and log files, as well as for making the most efficient use of TempDB when developing in TSQL.

Let’s take a quick look at the performance of some simple SELECT operations against TempDB tables:

    CREATE TABLE #yummy_food
        (id INT NOT NULL,
         food_name VARCHAR(10));

    INSERT INTO #yummy_food
        (id, food_name)
    VALUES
        (1, 'taco'),
        (2, 'pizza'),
        (3, 'curry'),
        (4, 'sushi'),
        (5, 'chili');

    SELECT * FROM tempdb.sys.tables;

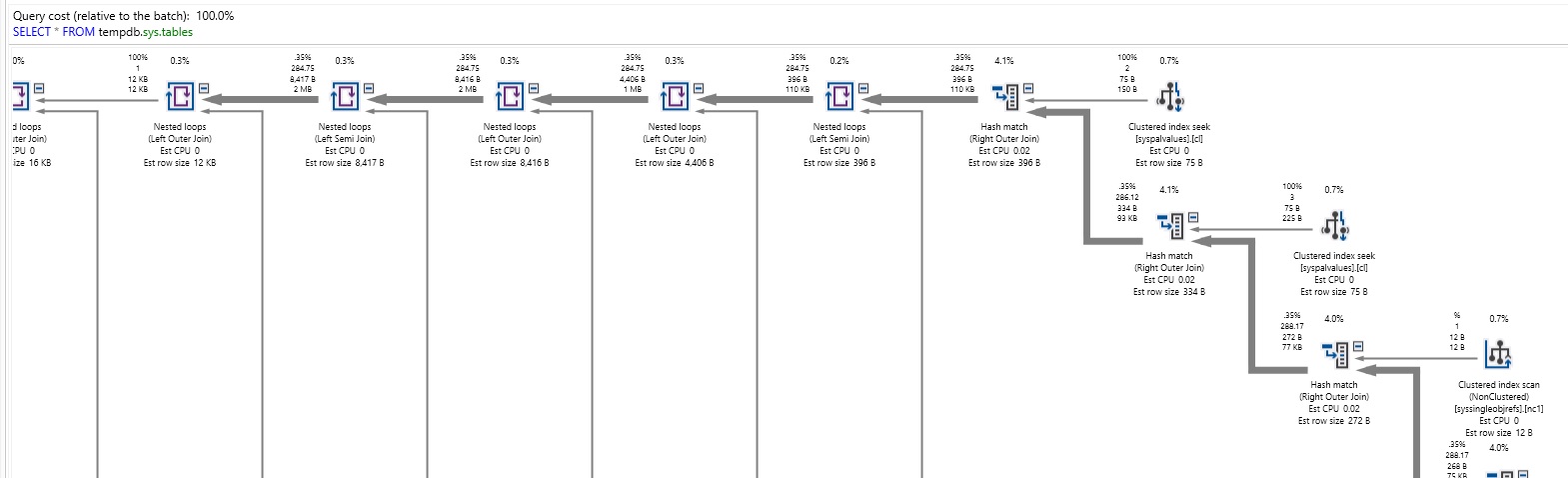

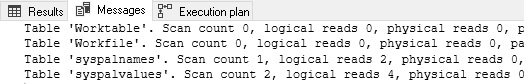

When this TSQL is executed against my relatively sleepy local server, the following are the execution plans and IO metrics returned:

Whew! That is a ton of complexity for what I thought was a simple query! The portion of the execution plan shown above is only about 10% of the full plan for the SELECT! The IO stats are similarly wild. The takeaway from this simple demonstration is that metadata storage isn’t cheap and that if simply selecting data is this involved on my quiet SQL Server, then similar queries against a busy server are likely to be even more expensive.
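If you would like to capture IO metrics like these on your own server, one common approach is to turn on STATISTICS IO for the session before running the query. This is a small sketch of that approach, and the numbers you see will vary with your server and version:

```sql
-- Report per-table logical and physical reads in the Messages output.
SET STATISTICS IO ON;

SELECT * FROM tempdb.sys.tables;

-- Turn the reporting back off for the rest of the session.
SET STATISTICS IO OFF;
```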

Moving TempDB metadata into memory sidesteps much of this pain by removing the hefty IO expense. As is the case for other memory-optimized use-cases, the contention is essentially eliminated when objects are shifted from disk to memory. Faster data access with no latching is a huge win for us, and one that comes at a minimal cost.

How it Works

Turning on memory-optimized TempDB metadata storage can be accomplished with the following TSQL:

    ALTER SERVER CONFIGURATION SET MEMORY_OPTIMIZED TEMPDB_METADATA = ON;

Note that this requires a restart of the SQL Server service. For a production system, you’ll want to schedule this at a time when a service restart is acceptable.
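Should you need to back the change out, the same statement accepts OFF. As a sketch of the rollback path, and with the same caveat that the change only takes effect after the next service restart:

```sql
-- Revert to disk-based TempDB metadata.
-- Like enabling the feature, this takes effect after the next service restart.
ALTER SERVER CONFIGURATION SET MEMORY_OPTIMIZED TEMPDB_METADATA = OFF;
```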

We can verify this setting at any time with the following SERVERPROPERTY check:

    SELECT SERVERPROPERTY('IsTempdbMetadataMemoryOptimized');

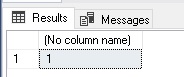

The result is straightforward:

Well, at least some questions in life can be answered in simple terms! With this setting enabled, let’s rerun our query from earlier:

    CREATE TABLE #yummy_food
        (id INT NOT NULL,
         food_name VARCHAR(10));

    INSERT INTO #yummy_food
        (id, food_name)
    VALUES
        (1, 'taco'),
        (2, 'pizza'),
        (3, 'curry'),
        (4, 'sushi'),
        (5, 'chili');

    SELECT * FROM tempdb.sys.tables;

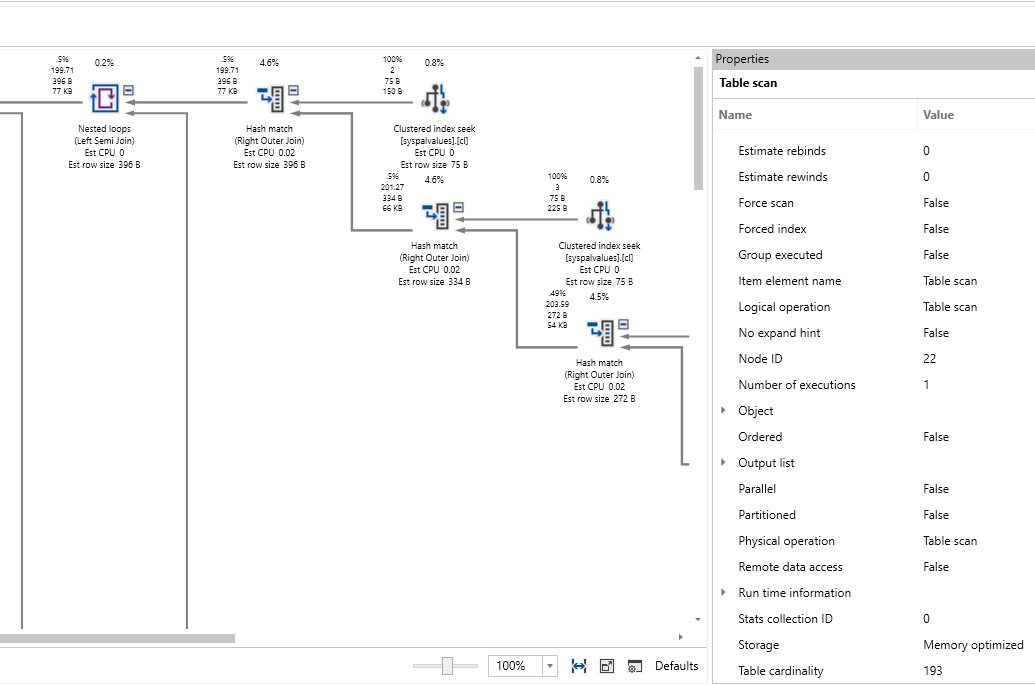

Now, when we inspect the execution plan, we’ll find it is as complex as before:

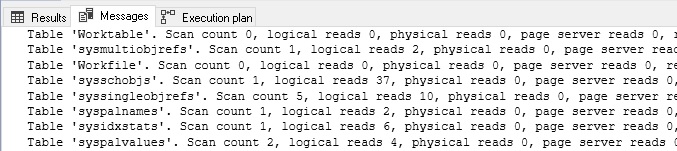

If we inspect the properties, though, the storage type for most components of this query can be verified as Memory Optimized. The IO stats can also confirm this change:

We have gone from 62 logical reads to 6. While some reads are still needed to service our query, all of the TempDB-exclusive metadata tables have been eliminated from this list. This is a big improvement, and all for a single, tiny temporary table created for use by this demo. We’ve also eliminated latching against this data, which will greatly reduce contention as we no longer need to move data back and forth to the cache when using it. Since this data is non-durable, it will vanish upon restart…though this is what it does anyway, so there’s no loss to us in that regard.

The benefits of this change are significant and can have a big performance impact on a system that makes heavy use of TempDB!

Best Practices

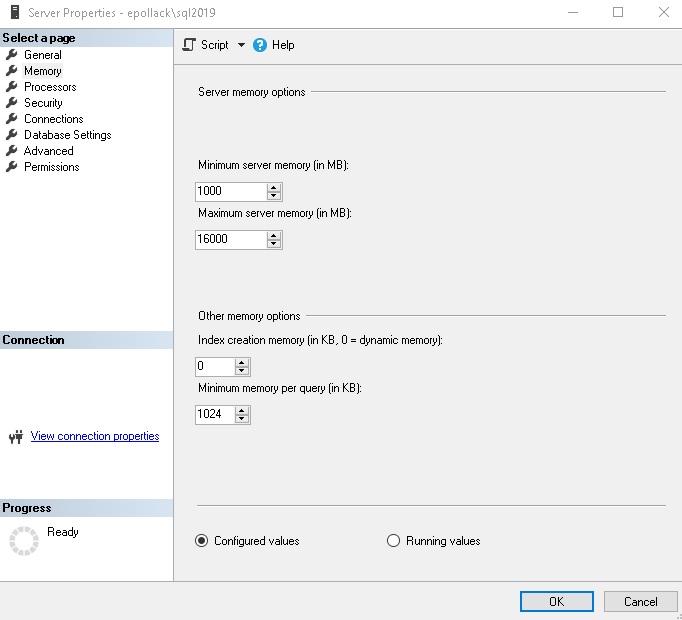

This feature is not free, and it is important to understand how to best utilize it prior to turning it on. First off, it consumes memory. Ensure that your SQL Server has enough memory allocated to it so that it can store TempDB metadata. Make sure the minimum memory setting is increased or already high enough to provide an acceptable baseline to support TempDB operations. If your server has the defaults set for its memory configuration, then it is strongly advised that you adjust the minimum and maximum memory to values that better represent your server’s workload.

My local server does not consume much memory, so my settings reflect a low minimum server memory and a generous maximum memory configuration:

At the end of this article, I’ve included a reference link to some additional resources on how to set your minimum and maximum memory settings, which goes well beyond the scope of this discussion. Suffice it to say that this is an important decision to be made when a server is built and to be reviewed as applications grow and consume more memory. Whenever we opt to utilize in-memory features, a similar review should be conducted to determine how much additional memory will be required to service this new memory utilization.
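As a quick starting point for that review, the current memory settings can be read from sys.configurations and changed with sp_configure. The sketch below uses purely hypothetical values (4096 MB and 16384 MB) for illustration. Appropriate numbers depend entirely on your server and workload:

```sql
-- Review the configured and in-use values for the two memory settings.
SELECT name, value, value_in_use
FROM sys.configurations
WHERE name IN (N'min server memory (MB)', N'max server memory (MB)');

-- Both settings are advanced options, so those need to be visible first.
EXEC sys.sp_configure N'show advanced options', 1;
RECONFIGURE;

-- Hypothetical values for illustration only; size these for your own server.
EXEC sys.sp_configure N'min server memory (MB)', 4096;
EXEC sys.sp_configure N'max server memory (MB)', 16384;
RECONFIGURE;
```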

While a rare issue, be advised that you cannot query memory-optimized objects across multiple databases in a single transaction, and this restriction will now include TempDB metadata:

    BEGIN TRAN
    SELECT * FROM tempdb.sys.tables;
    INSERT INTO AdventureWorks2017.dbo.shopping_list
    VALUES ('antacid', GETUTCDATE(), NULL);
    COMMIT TRAN

This seemingly innocuous TSQL will result in a new error:

It’s unlikely your code has anything like this, and if it does, it is likely on the maintenance/monitoring side of the house. Be sure to consult with your administration and operations folks prior to making this server configuration change, on the off-chance they have code like this that you do not know about.
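A rough way to start that search is to scan stored code for references to TempDB system views. The query below is only a sketch: it checks the current database alone, relies on a simple text match, and will miss ad hoc SQL, job steps, and variations such as bracketed names:

```sql
-- Find stored procedures, functions, views, and triggers in the current
-- database whose definition mentions a TempDB system view.
SELECT
    OBJECT_SCHEMA_NAME(m.object_id) AS schema_name,
    OBJECT_NAME(m.object_id)        AS object_name
FROM sys.sql_modules AS m
WHERE m.definition LIKE N'%tempdb.sys.%';
```

Any hits are worth a closer look to see whether they run inside a transaction that also touches memory-optimized tables in a user database.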

Additional TempDB Optimization

Due to its transient nature, TempDB has far more flexibility for optimization than other parts of SQL Server. For servers where TempDB is used heavily or is found to be a bottleneck, a great way to improve performance is to move it onto faster storage.

Since TempDB’s size is typically nowhere near as large as the databases it supports, moving it onto exceptionally fast flash-based storage is not an overly expensive decision. TempDB should always be separated from database and log files, and this change allows it to not only be separate, but to take advantage of its isolation to get the biggest physical speed boost possible.
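Relocating TempDB is done by pointing its files at a new path, which SQL Server picks up at the next service restart. The sketch below assumes the default logical file names (tempdev and templog) and uses a hypothetical T:\TempDB folder, so check your own names in tempdb.sys.database_files and adjust the paths before using anything like it:

```sql
-- Point the TempDB data and log files at faster storage.
-- Logical names are the defaults; the T:\TempDB path is hypothetical.
ALTER DATABASE tempdb
MODIFY FILE (NAME = tempdev, FILENAME = N'T:\TempDB\tempdb.mdf');

ALTER DATABASE tempdb
MODIFY FILE (NAME = templog, FILENAME = N'T:\TempDB\templog.ldf');

-- The new locations are used once the SQL Server service restarts.
```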

Another important consideration when initially configuring TempDB is how many data and log files to allocate, as well as their autogrowth settings. Microsoft provides quite a bit of guidance on this topic and it definitely warrants further research before setting up a new server. The key is to minimize TempDB autogrowth events as these are expensive and will slow down query processing while they occur. In addition, sizing files equally ensures maximum parallelism as SQL Server will attempt to allocate data to the files with the most free space. If files are equally sized, then that allocation will be relatively even across all files.

Lastly, whatever storage TempDB lives on can be optimized for speed over durability. There is no need to back up TempDB, therefore any configuration or architecture decisions involving backups are not necessary. High availability is important, though, as SQL Server cannot function without TempDB, so a system should be resilient towards the failure of a TempDB drive, regardless of whether this resilience is afforded by software or hardware conventions.
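To see how TempDB is currently laid out, the file sizes and autogrowth settings can be read from tempdb.sys.database_files. This is a small sketch that converts the stored 8 KB page counts into megabytes:

```sql
-- Current TempDB files with their size and autogrowth settings.
-- size and growth are stored in 8 KB pages unless growth is a percentage.
SELECT
    name,
    type_desc,
    size * 8 / 1024 AS size_mb,
    CASE
        WHEN is_percent_growth = 1 THEN CONCAT(growth, '%')
        ELSE CONCAT(growth * 8 / 1024, ' MB')
    END AS autogrowth
FROM tempdb.sys.database_files;
```

Data files that differ in size_mb or autogrowth are a hint that allocation may not be spreading evenly across them.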

The Future

As memory continues to become cheaper and easier to add to SQL Servers en masse, the demand for in-memory services will increase. It is very likely that we will see features in the near future that will allow TempDB data to reside in memory as well. This will force us to further rethink how much memory a SQL Server needs and add all of the TempDB use-cases to that formula.

This is good news, though, as logically, memory is the smart place for TempDB to reside. The less we have to manage, the better, and the less data churn that SQL Server needs to perform while caching data to TempDB, the faster our workloads can execute. The more we rely on TempDB, the more memory we’ll need, but at the same time, the greater the performance gains that can be made by moving it into memory-optimized structures.

Expect in-memory technologies to continue to appear in other areas of SQL Server and existing features to become more robust and inclusive. These will be some of the best reasons to modernize to a new version of SQL Server as they have the potential to boost performance significantly, with the cost being more memory, which is no longer the cost-prohibitive upgrade that it used to be.

Conclusion

TempDB is a critical component of SQL Server that supports many different features and can easily become a bottleneck for applications that make heavy use of it.

The ability to move TempDB metadata into memory provides an excellent opportunity to boost performance on servers with high TempDB loads. The risk is minimal as this is transient data that will vanish upon restart, regardless of how it is stored.

When reviewing query and server performance, don’t forget to include TempDB in that analysis. As a shared resource, it is often overlooked as a black-box system database, but taking the time to compare a server’s settings vs. best practices can provide some easy and inexpensive ways to improve the speed and performance of your apps. Consider new features and TempDB optimizations as they are released, as they will also increase the options available to you for making your workloads faster and less contentious!
