This article will explore the process for Lift and Shift SSIS packages to Azure using Azure Data Factory V2.

Introduction

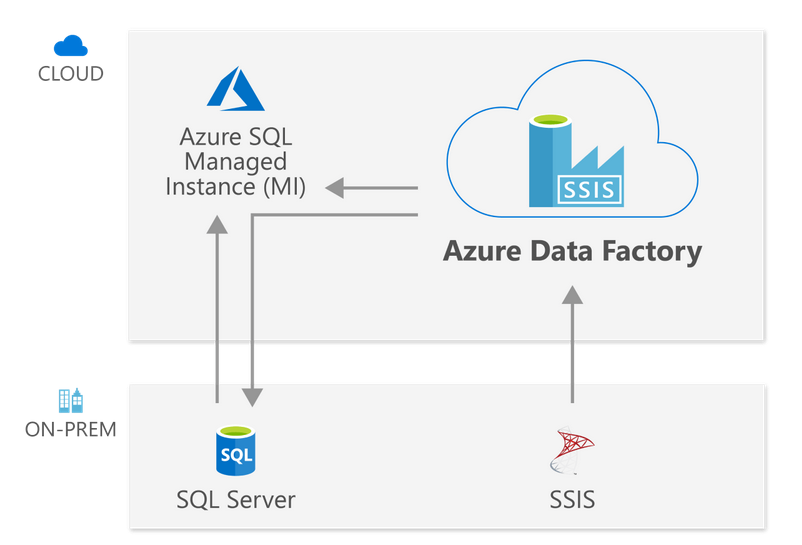

SQL Server Integration Services (SSIS) is a business intelligence tool for data Extraction, Transformation, and Loading (ETL) processes. You might be migrating your on-premises SQL Server to Azure Cloud using Azure SQL Database or Managed instances. Suppose you have SSIS packages in your on-premises SQL Server so how do you move them to the Azure Cloud?

Azure Data Factory (ADF) is a cloud solution for providing ETL solutions and data ingestion processes using its workflows. You can define the workflows for data movements with built-in connections, and data flow activities without any complicated coding.

Azure Data Factor V2 provides a feature Azure-SSIS Integration Runtime for executing your existing on-premise SSIS packages in the cloud using the lift and shift method.

You can follow this article, Starting your journey with Microsoft Azure Data Factory for learning more on the ADS.

In this article, we will explore the process to execute your on-premises packages into the Azure cloud.

Requirements

- You require an Azure subscription for the configuration of resources in the Azure cloud

- Azure SQL Database

- Azure Data Factory V2 service configuration

- An on-premise SQL Server with SQL Server data tools

- Visual Studio 2015 or later

- SQL Server Management Studio latest version

The process to lift and shift SSIS package in the Azure Data Factory V2

The lift and shift on-premise SSIS package project uses the SSIS integration run time. The Azure-SSIS is a cluster of the Azure VMs for executing the SSIS packages. You can define the number of codes, and compute capacity during the initial configuration.

In the Azure-SSIS, you get the following two options for storing the SSIS package catalog.

- In Azure SQL Database

- Azure SQL Managed instance

Image Reference: Microsoft docs

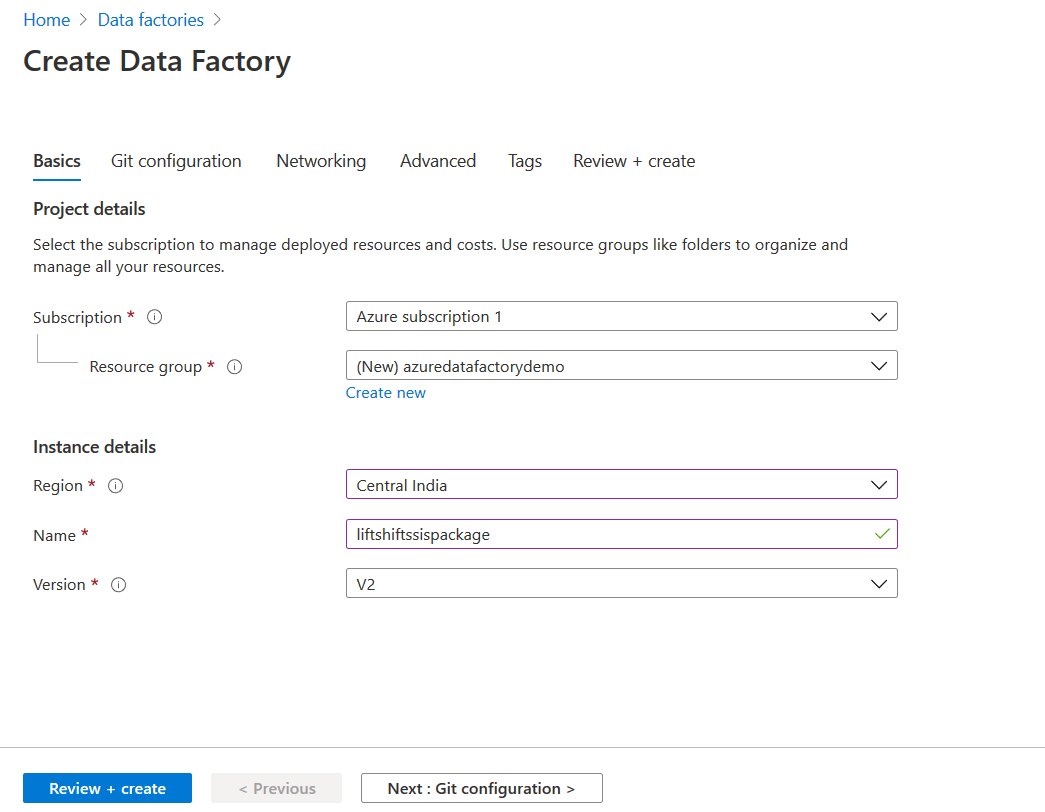

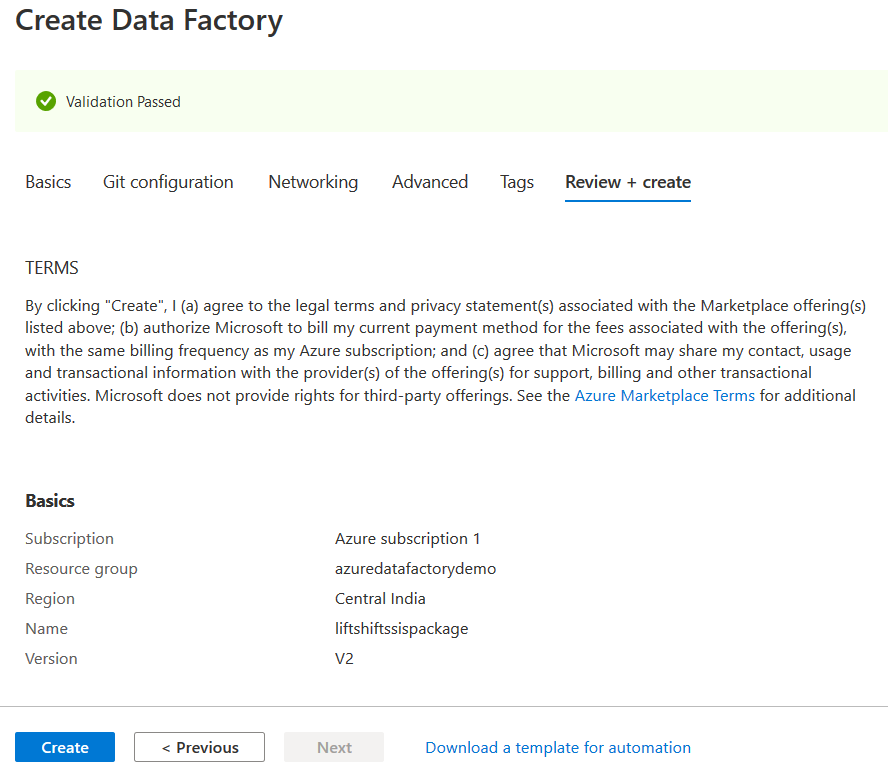

To configure the Azure-SSIS, first, we require to configure the Azure Data Factory. In the Azure portal, search for the data factory and create a new ADF.

The ADF configuration requires the following:

- Azure subscription

- Azure Resource Group

- Select the region to deploy your resources

- ADF Instance name

- ADF version: Make sure you select version V2 for Azure-SSIS configuration

Click on Review + Create for starting validation and deployment.

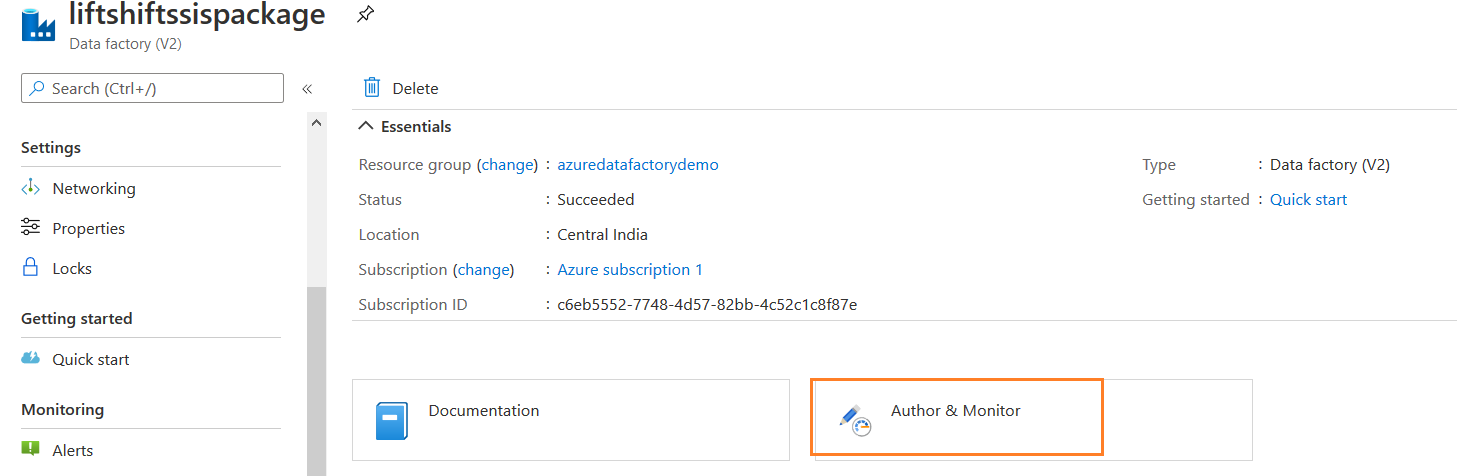

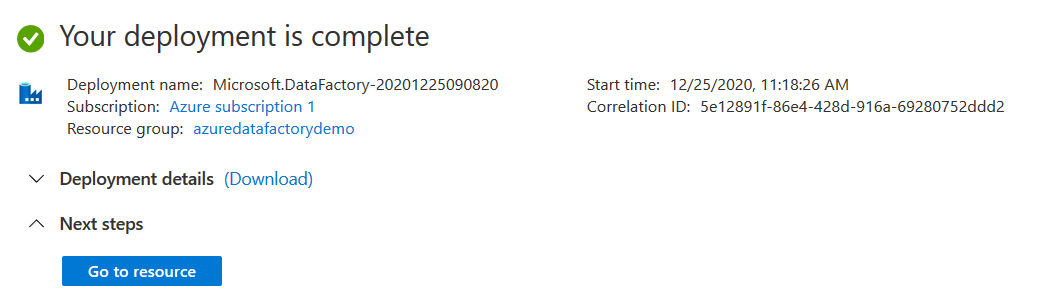

It creates the Azure Data Factory quickly.

In the resources, click on Author & Monitor from the overview section, as shown below.

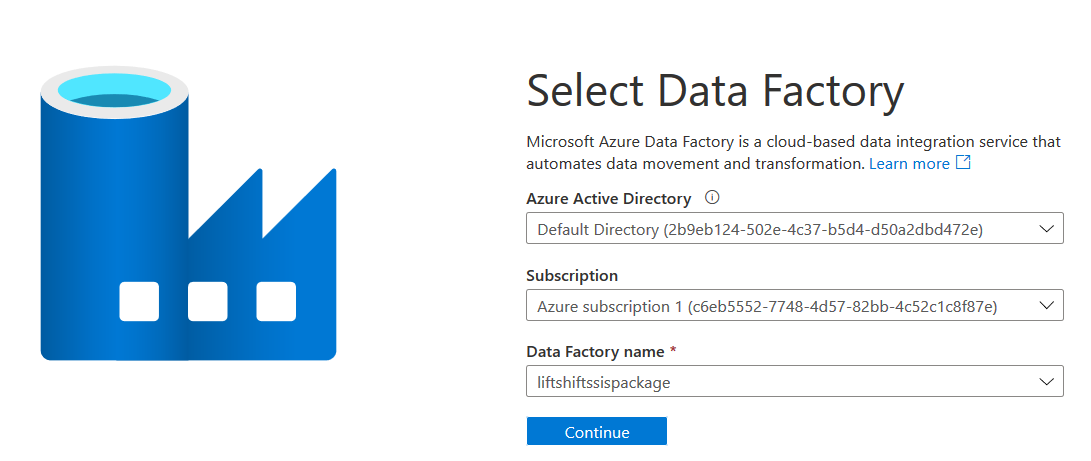

Initially, it asks you to choose the Azure active directory, subscription and data factory name. We can go with the default options on this page.

On the ADF page, click on Configure SSIS Integration.

SQL Server Integration Services Integration Runtime Setup

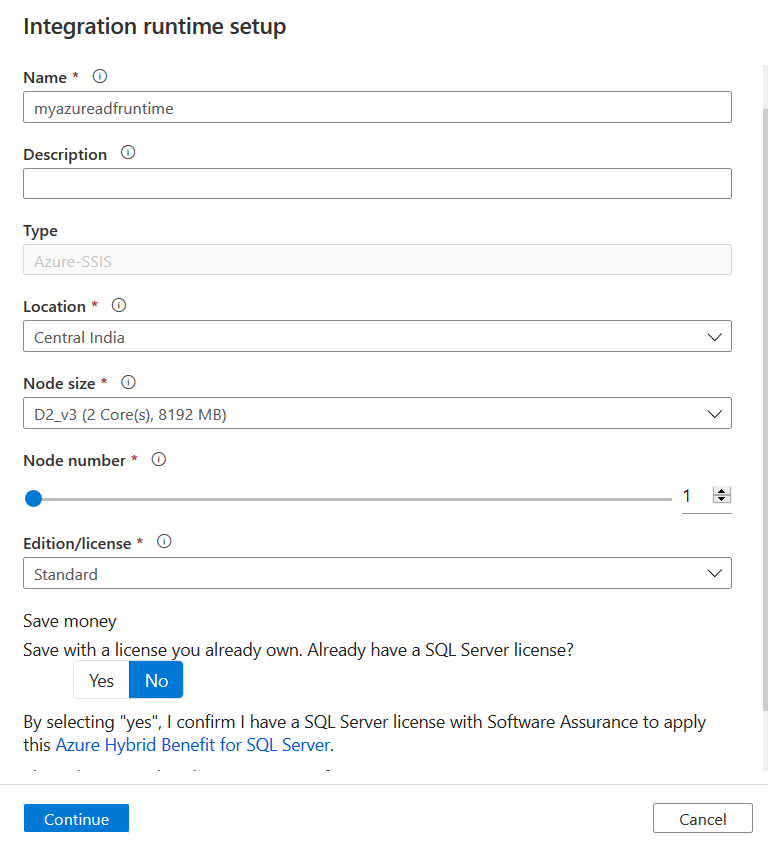

In the Integration runtime setup, specify a name, description (optional), location (same as ADF location).

Here, you also require configuration of node size, node number and edition. It would be best if you chose the node configuration appropriately. In this article, we use the smallest node size and a single node.

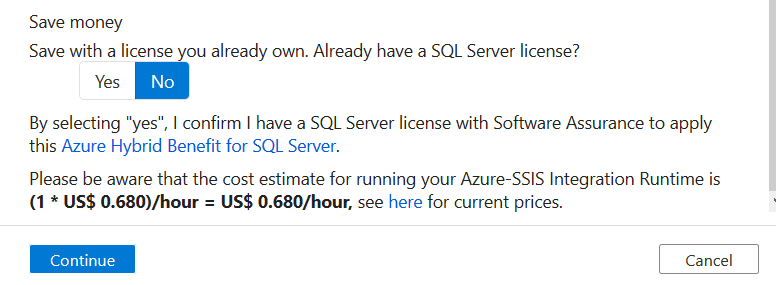

You get an estimated cost of running Azure-SSIS Integration runtime for your configuration.

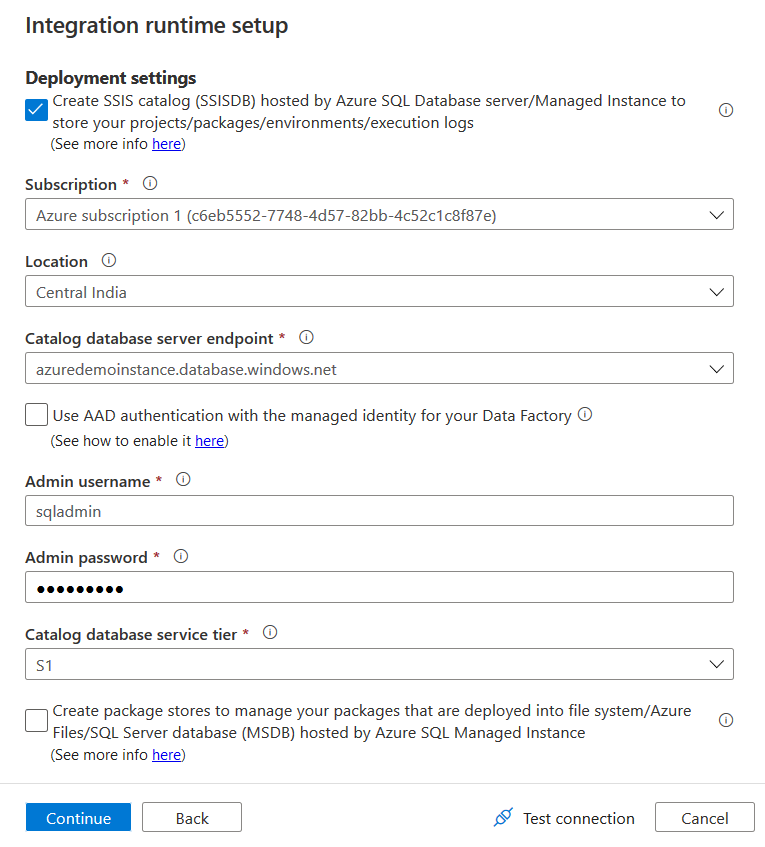

Click on Continue. On the next page, select your Azure SQL Database endpoint. The IR runtime creates an SSISDB catalog for your Azure SQL Database.

In the Admin username and password, enter the credentials for the Azure SQL Database. If you use Windows authentication (Azure Active directory authentication), you can put a tick on option – Use AAD authentication with the managed identity for Azure Data Factory.

In the catalog database tier, select the service tier. By default, it shows the standard service tier.

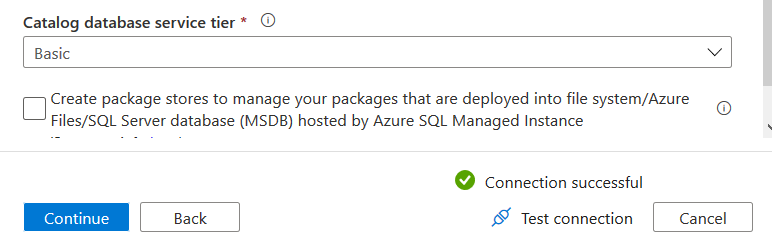

In this article, for demonstration, we can choose the basic service tier. You can navigate to Microsoft docs for understanding the service tiers.

Click on Test connection to ensure your database endpoint, and admin credentials are accurate.

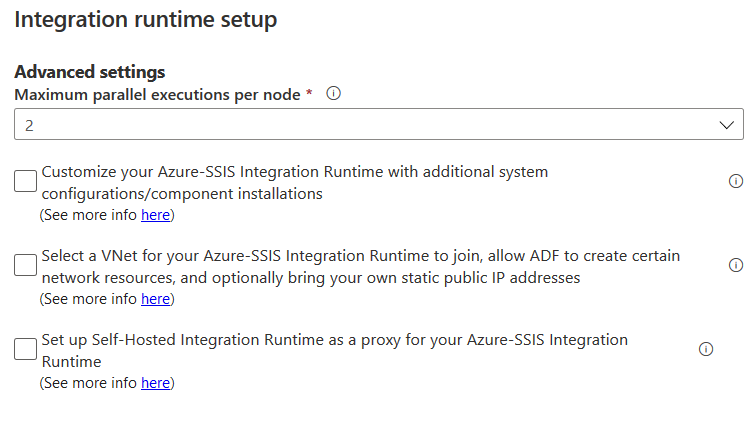

On the next page, you can configure the maximum parallel executions per node. By default, it shows 2 executions per node.

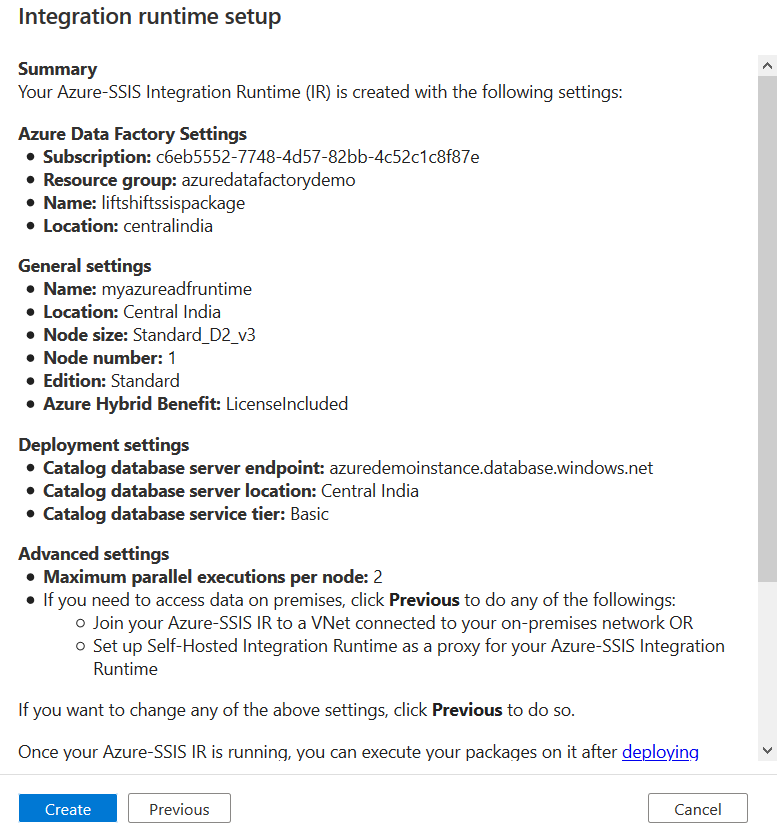

On the next page, review your Azure-SSIS Integration runtime configuration and click on Create.

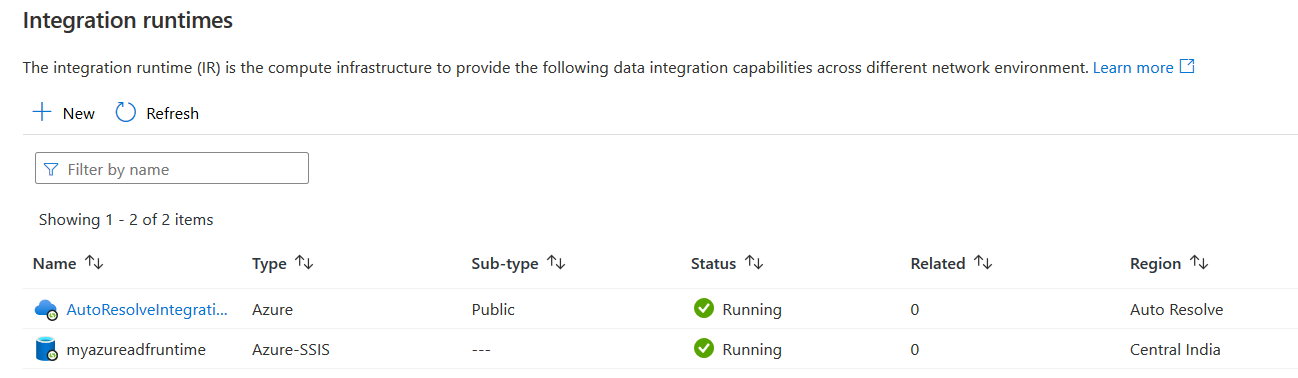

It deploys the IR runtime resources, and it comes in the running state in 10-15 minutes.

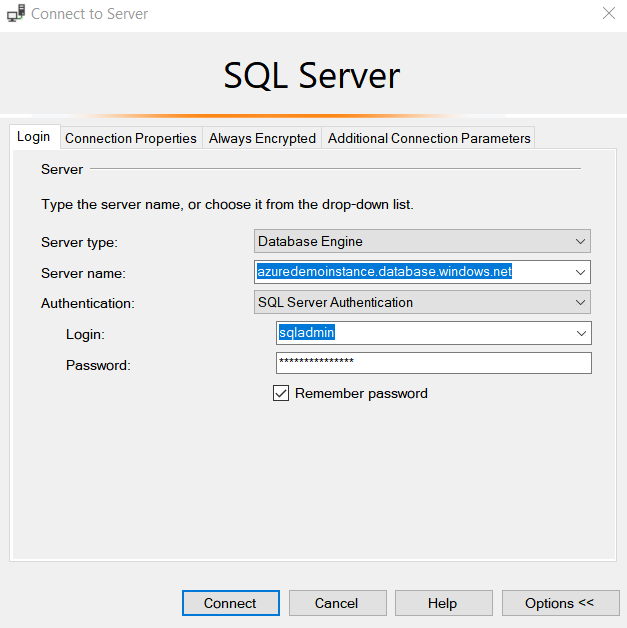

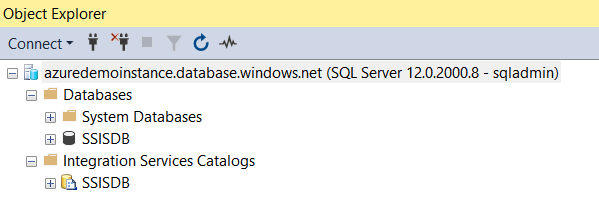

Once IR runtime deployment completes, connect Azure SQL Database in SSMS.

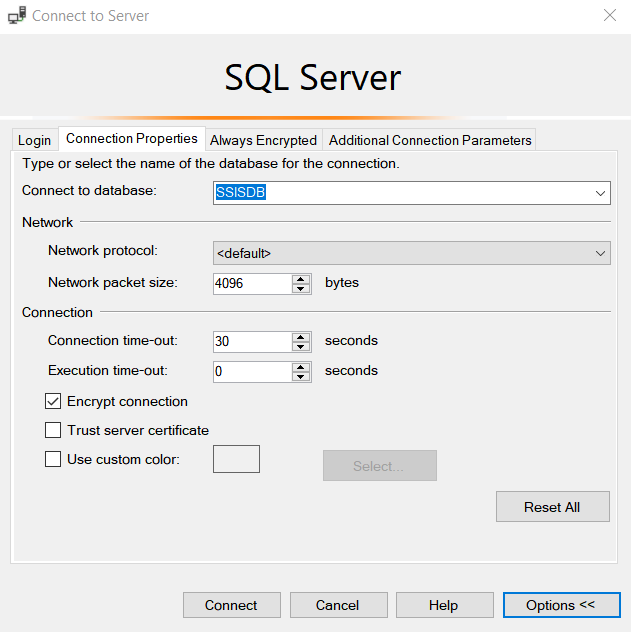

Click on Options in the SSMS connection and enter the SSISDB to connect to the database as shown below.

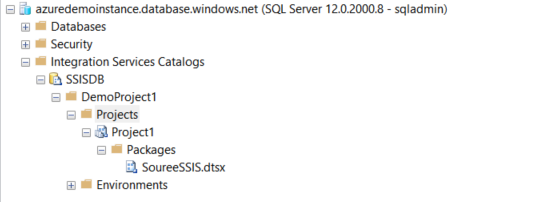

It connects to your Azure SQL Database, and you see an SSISDB database and integration Services Catalogs.

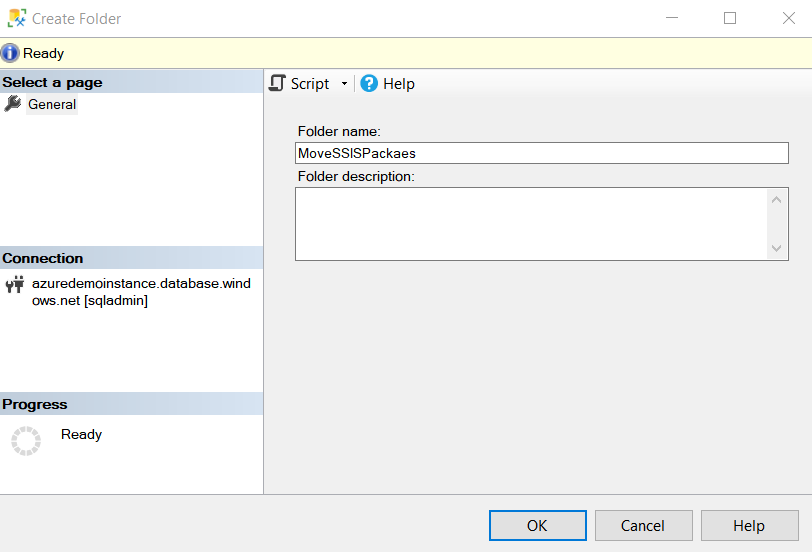

Right-click on the Integration Services Catalogs and create a new folder – [MoveSSISPacakes] as shown below.

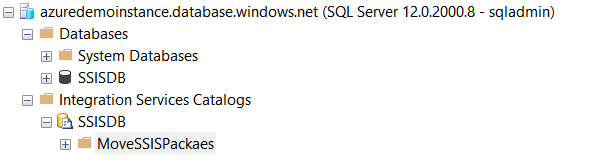

Click Ok, and you get a new folder for your SSIS package projects deployments in the SSISDB catalog of Azure SQL Database.

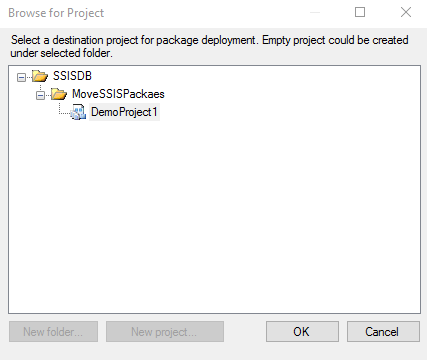

In the SSISDB catalog folder, create a new project [DemoProject1], as shown below.

Lift and Shift an Integration Service project in the Azure Data factory

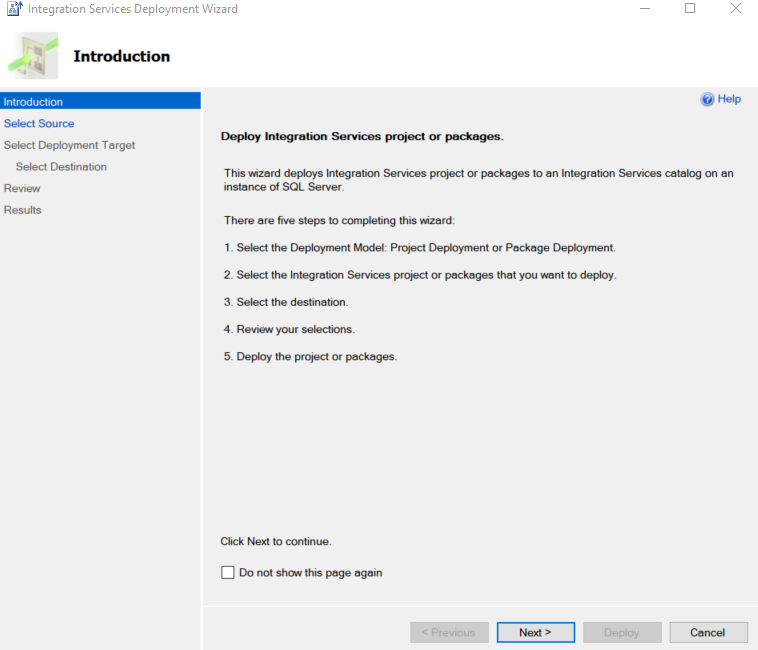

The SSIS package project deployment in the Integration service catalog in the Azure cloud is similar to the on-premise SSIS. Right-click on the project catalog folder and deploy. It launches the Integration Service Deployment wizard.

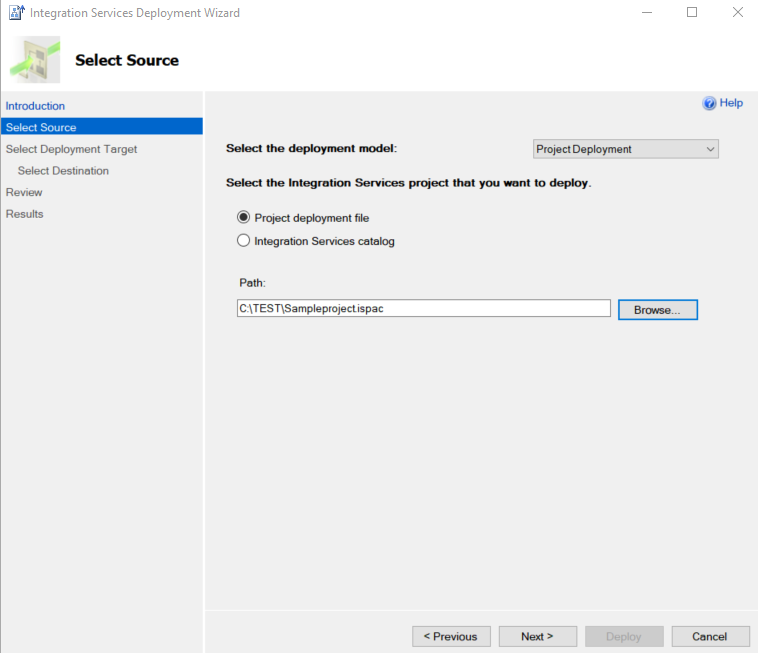

Click Next. We can choose either the project deployment file or the Integration Service catalog. Before you use the Integration Service Catalog, you should establish connectivity between Azure and On-premise network. This article uses a project deployment file ISPAC from an Integration service project stored in the On-premise SQL Server catalog.

Select the Project deployment file and specify the ISPAC file path.

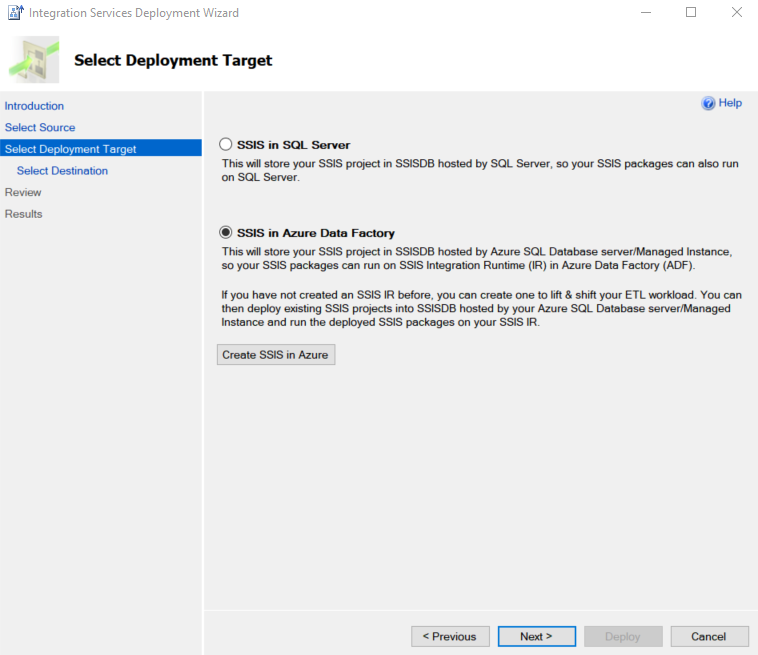

In the next window, select the option – SSIS in Azure Data Factory. You can use Create SSIS in Azure for SSIS integration runtime configuration from this window as well however I would recommend setting it up earlier as we did it.

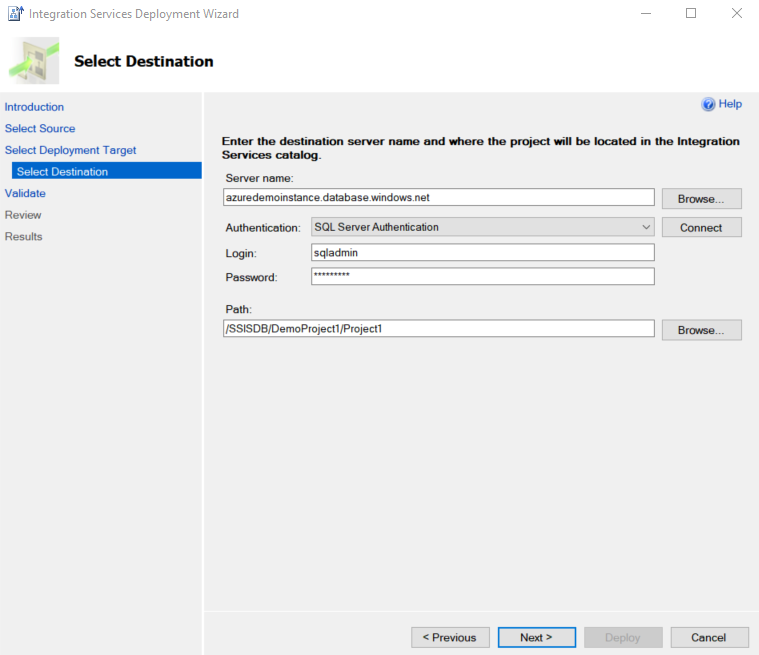

On the next page, enter the Azure SQL Database endpoint, authentication mechanism (SQL Server authentication), enter the credentials, and click on Connect.

Once connected, you can browse the catalog path from Integration Service Catalog.

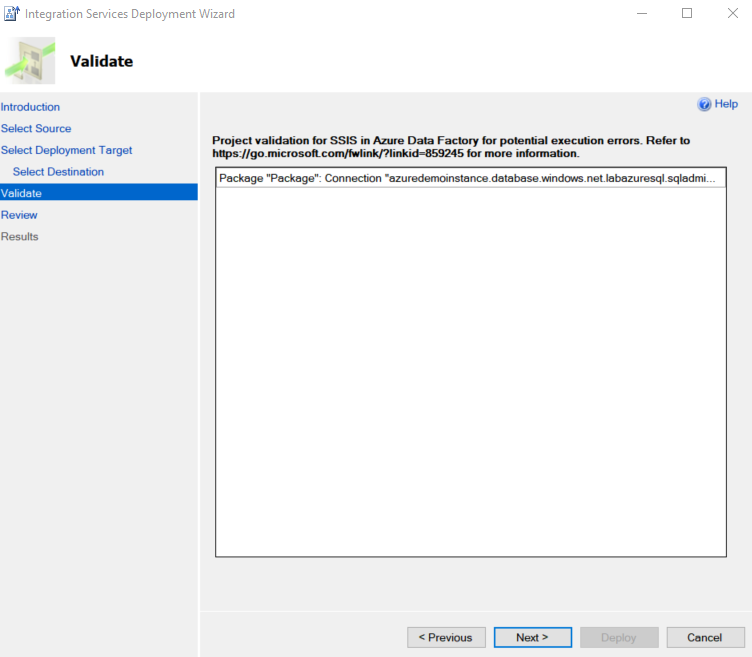

It validates the package configurations and highlights any potential execution errors.

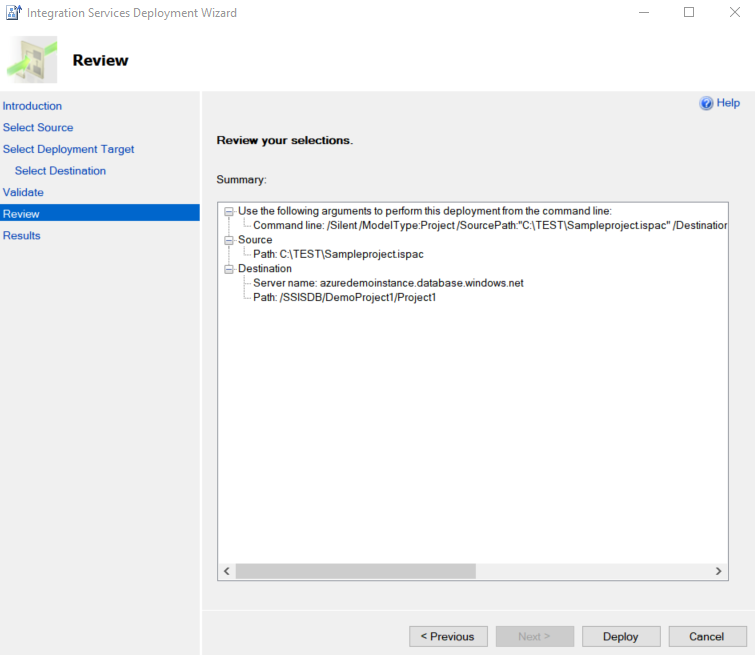

Review the Integration Service project deployment and click on Deploy. In summary, it gives a command line as well for the deployment.

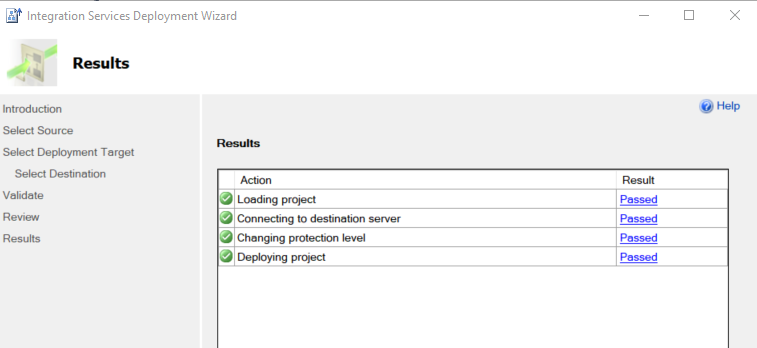

It deploys a project for your Azure-SSIS catalog.

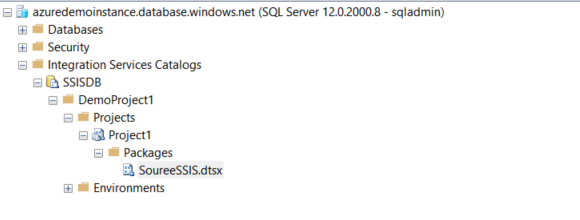

As shown below, we can see the SourceSSIS.dtsx package in the Project1 folder of the SSISDB catalog.

Manually execute Integration Service packages in Azure

Once we have shifted the package from on-premises SSIS package to Azure-SSIS, we can either run it manually or using a specific schedule. To run it manually, right-click on the package and click Execute.

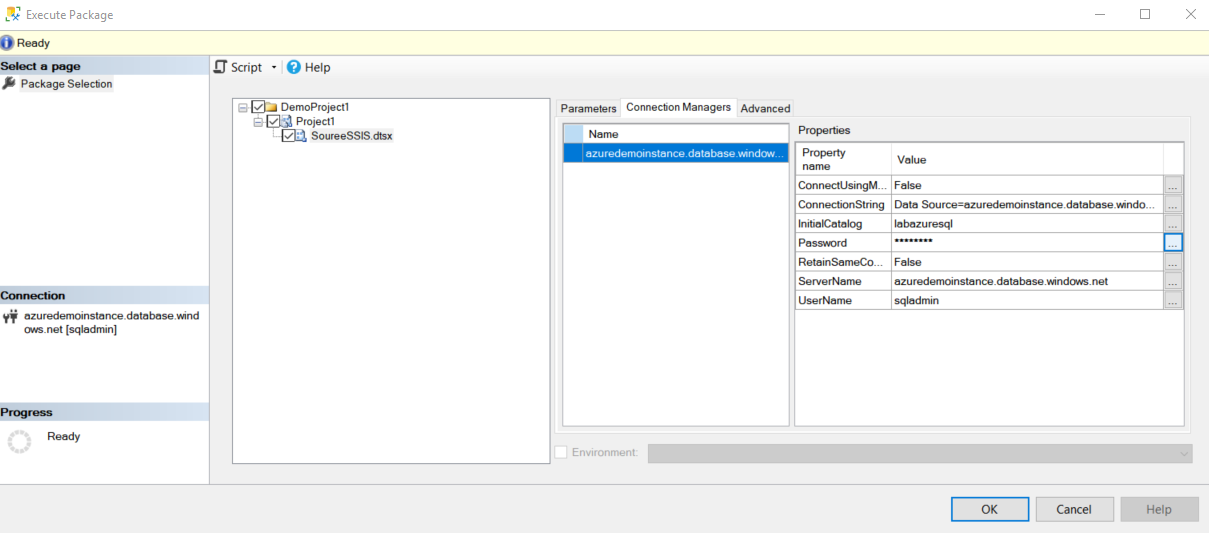

It opens the execute package window where you can configure your package connections, parameters.

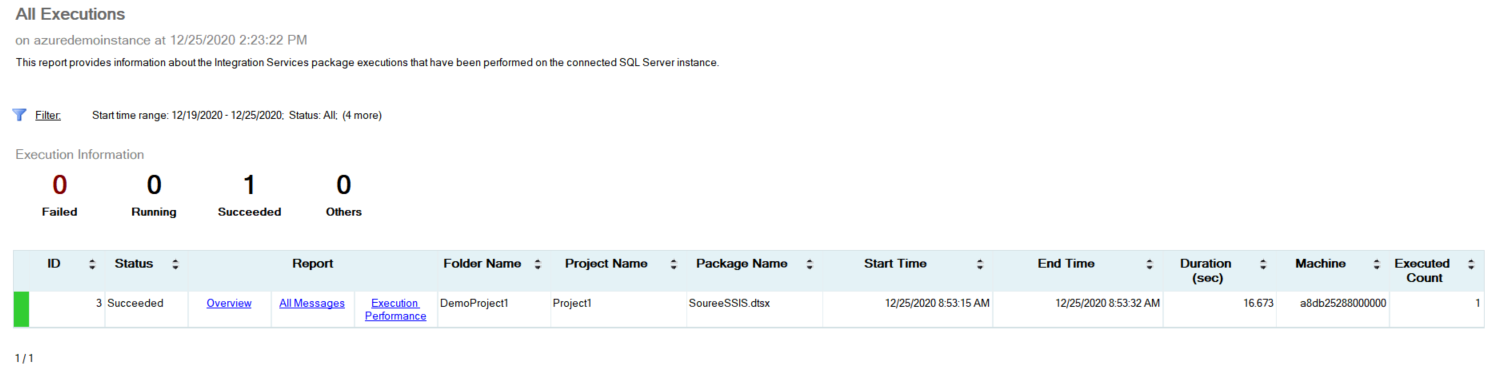

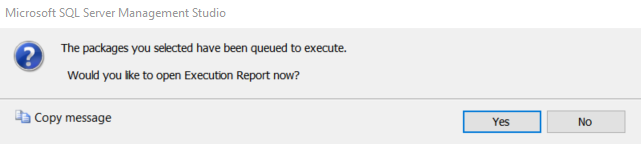

Click Ok, and it starts execution of the package in Azure-SSIS. Click Yes to open the execution report.

As shown below, the package execution completes successfully in the Azure-SSIS using the Azure Data catalog.

Schedule an Integration Service package in Azure-SSIS

Usually, in a traditional on-premises SQL Server, we use SQL Server Agent jobs for Integration Services package execution. In the Azure SQL Database, we do not have SQL Server Agent functionality. We can configure package executions using the Azure Data Factory Integration Service runtime. Right-click on the package and choose Schedule.

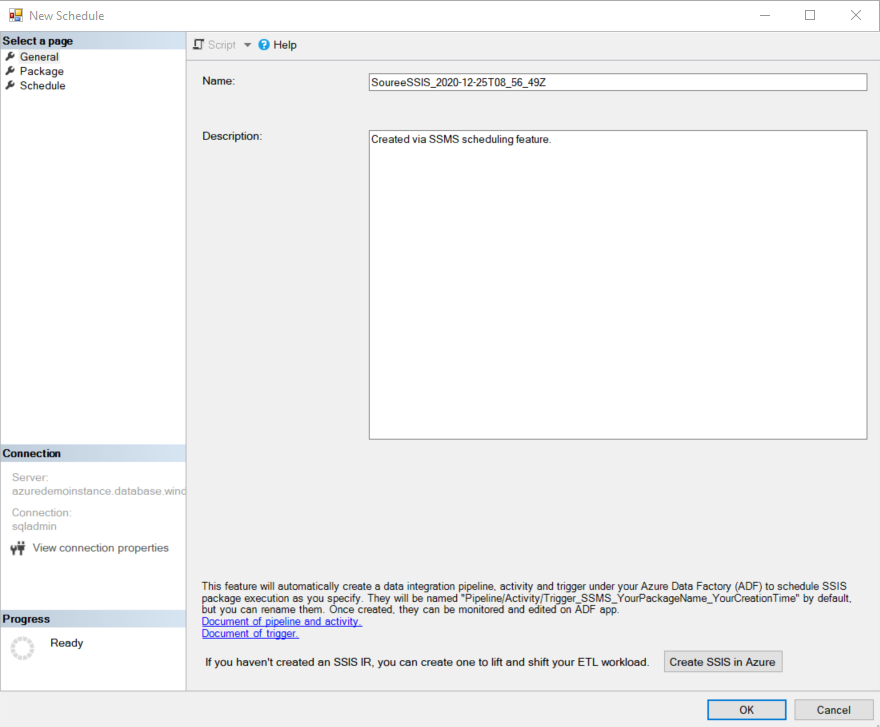

It opens a new schedule window. It has three sections.

General: You can enter the schedule name and description. By default, it gives you a schedule name, as shown below

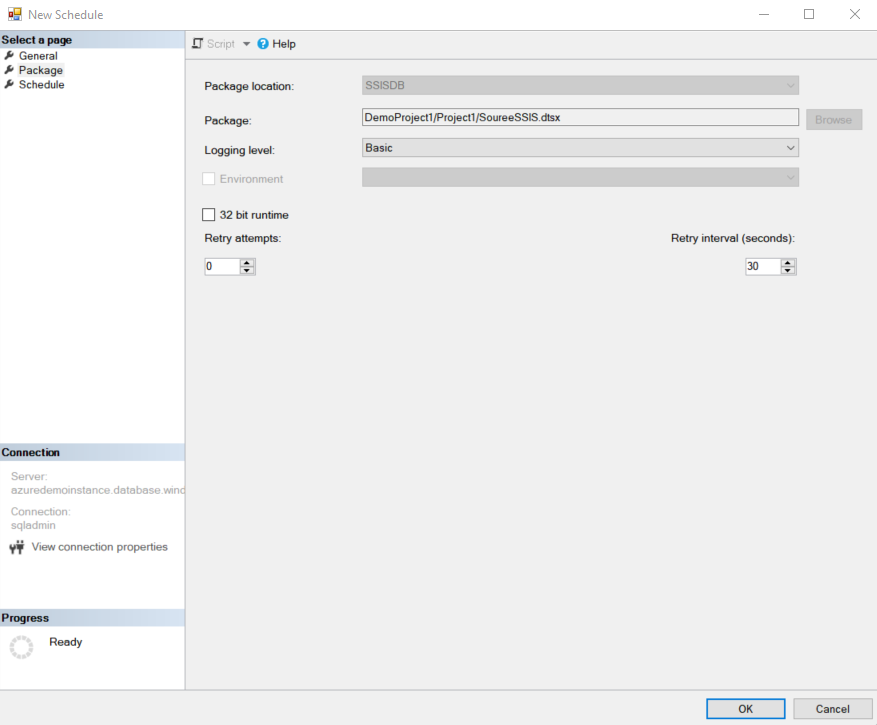

Package: On the package page, you can set logging level, 32-bit runtime and the retry attempts

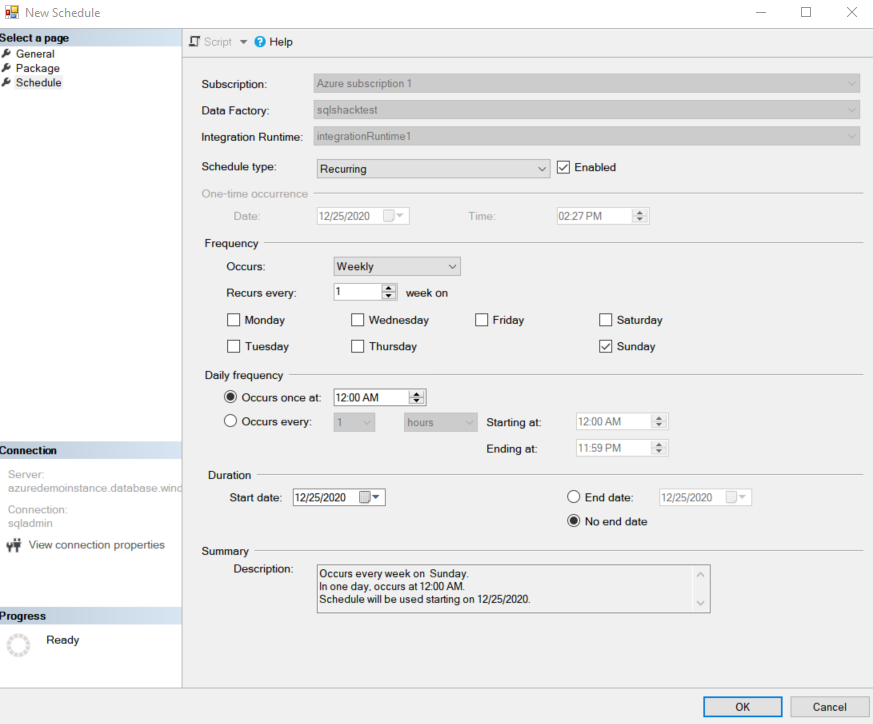

Schedule: Package execution schedule similar to an SQL Server agent job. By default, it set job execution frequency as Weekly, on every Sunday at midnight. You can modify the schedule

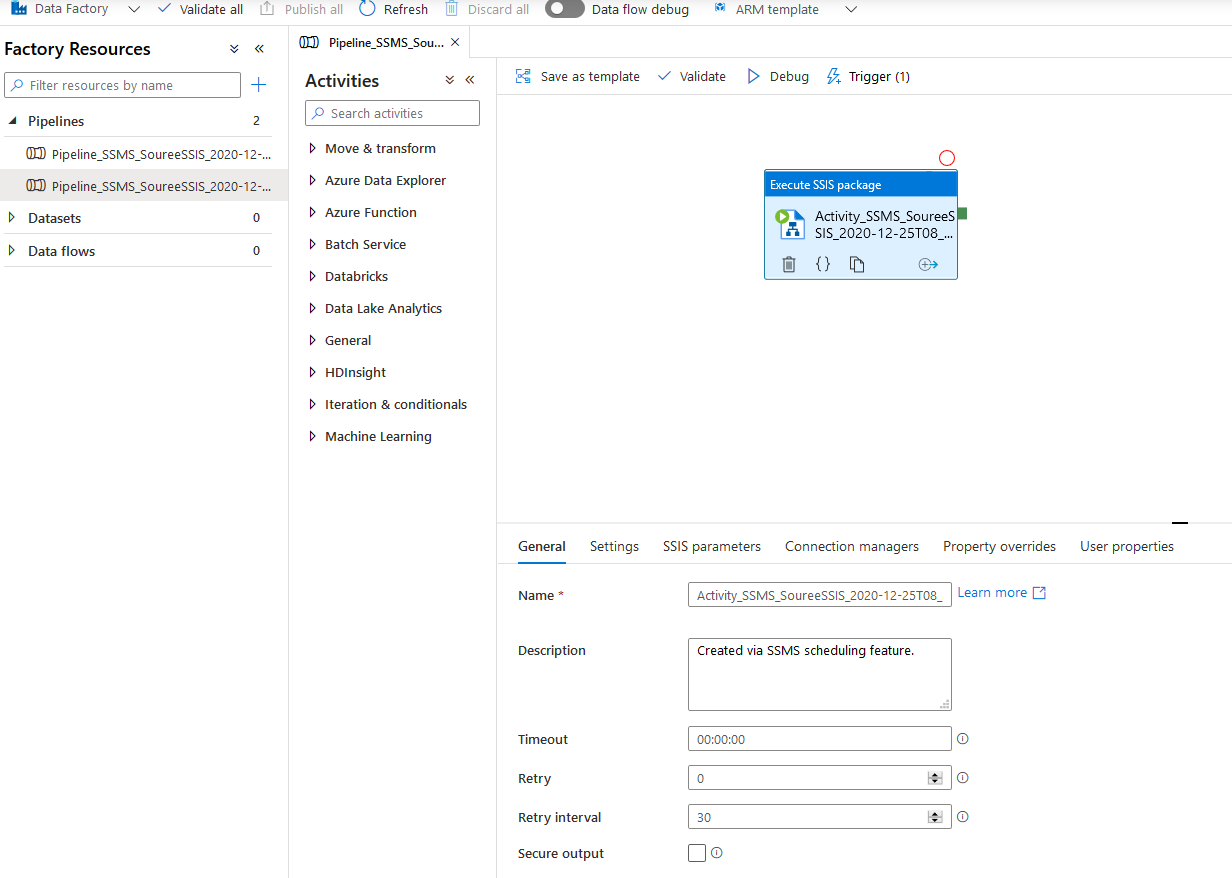

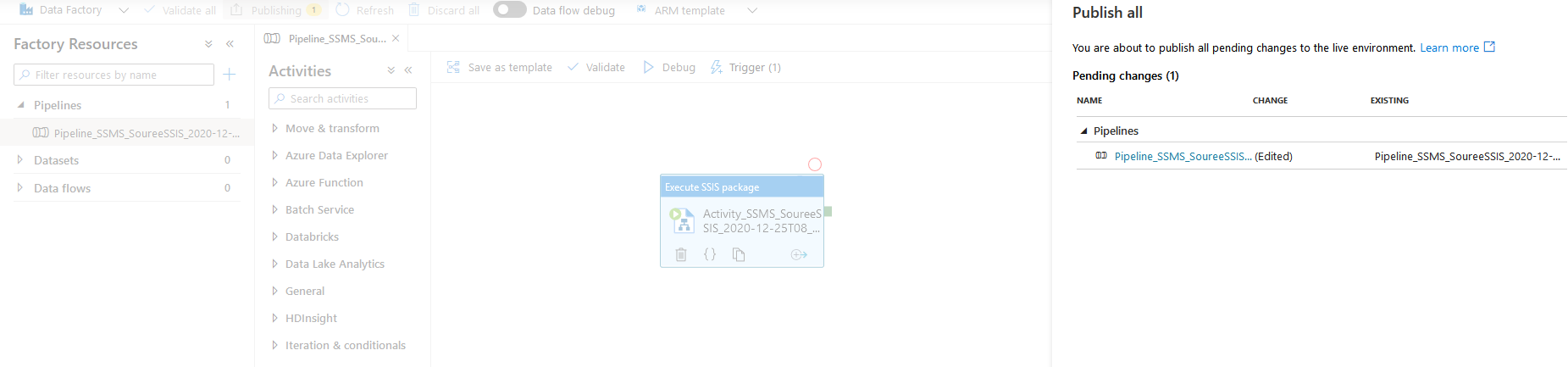

Click Ok, and it creates an ADF pipeline with the Execute SSIS Package. The ADF pipeline has triggered the job schedule, and we configured it earlier.

It triggers SSIS package execution in the Azure-SSIS as per the defined schedule. If you want to trigger the job manually, click on Trigger and execute now.

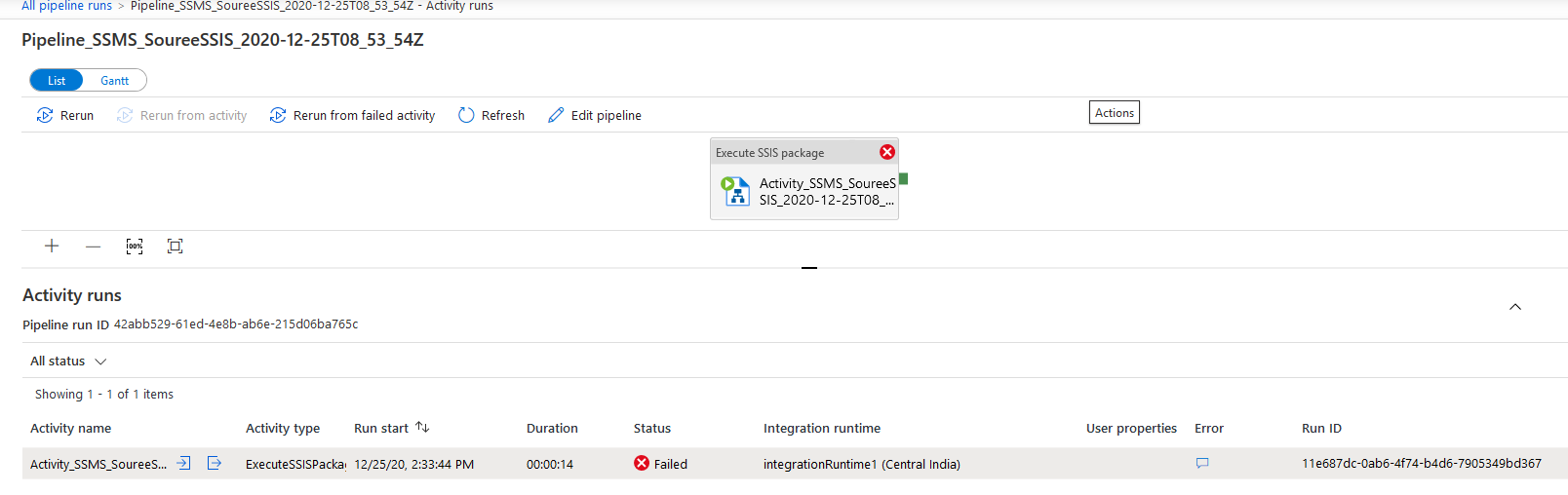

You can view the package failures from the ADF pipeline. For example, I modified the Azure SQL Database credentials, so that package execution fails. As shown below, the status of the package is failed.

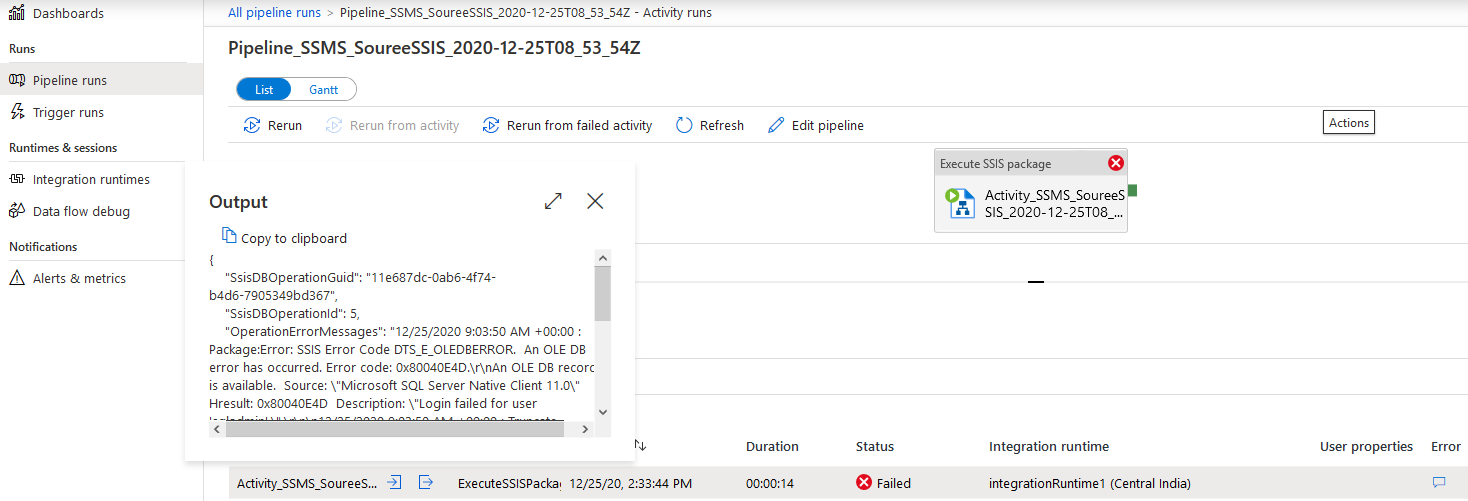

In the activity runs, you can see icons for input and outputs in the activity name columns. Click on Output, and it gives you an error message. As shown below, the package failed due to login failure.

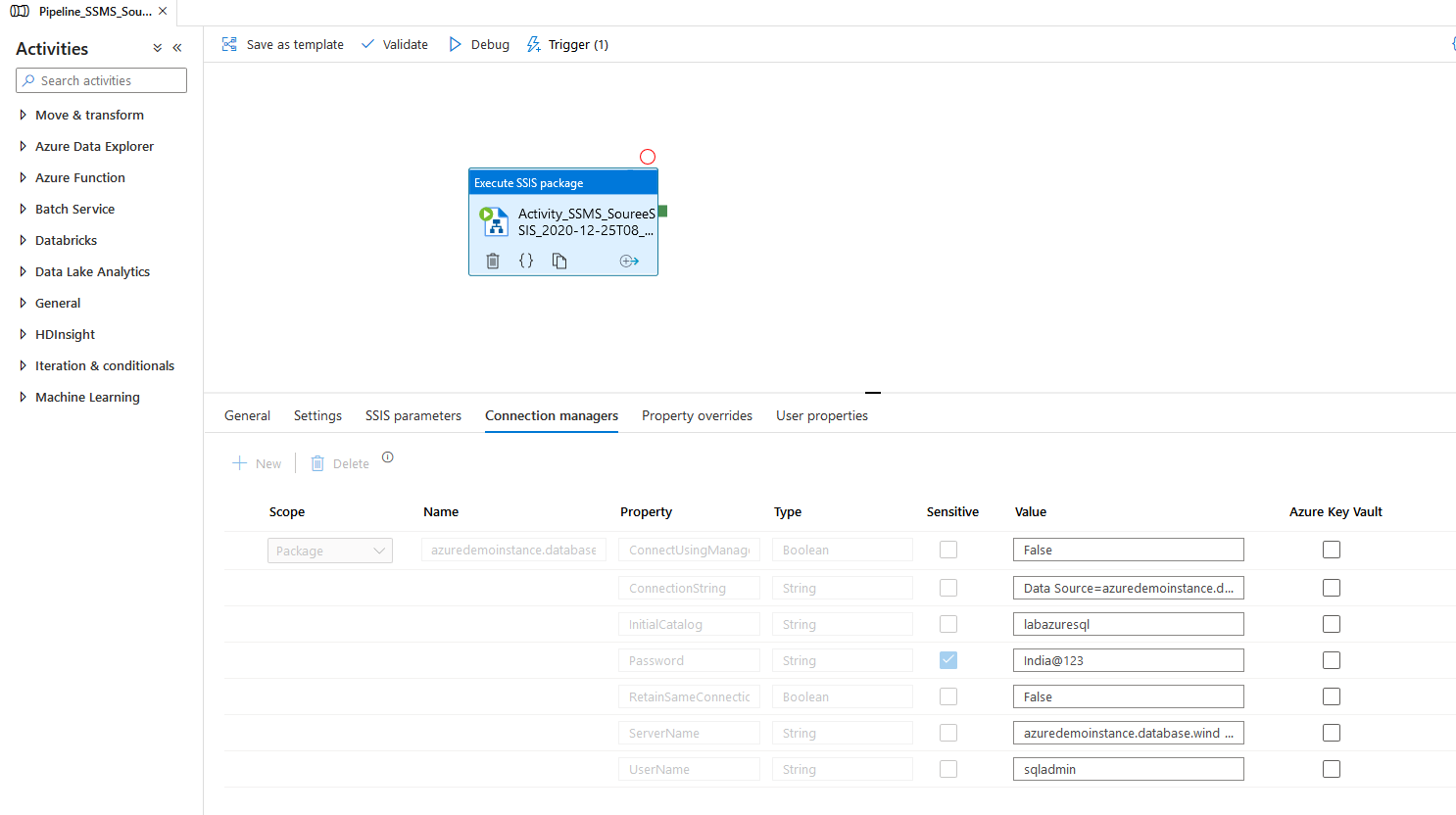

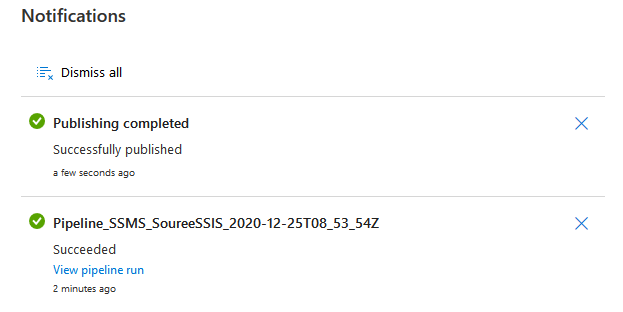

You can navigate to the ADF pipeline, modify the required parameter and publish the package with the modified configuration.

Click on Publish and give a confirmation.

In the notification window, you get messages for publishing status, pipeline execution etc.

Conclusion

This article performs a Lift and Shift migration of SSIS packages in the Azure-SSIS Integration runtime. It is a straightforward move using the Azure Data Factory, Azure SQL Database. If you haven’t started learning Azure, I would recommend you to browse Azure articles on SQLShack.

- Understanding PostgreSQL SUBSTRING function - September 21, 2024

- How to install PostgreSQL on Ubuntu - July 13, 2023

- How to use the CROSSTAB function in PostgreSQL - February 17, 2023