In the world of information, auditing serves an important purpose. It helps to provide an assurance that the data involved is accurate and safe. The level of assurance of course depends on any number of factors including the level of trust in those performing an audit (or collecting the data for the audit), the frequency the data is collected, and the types of data collected.

Any time the collection of data is this important to a process you can bet that a DBA is going to be involved. We get asked to create and run queries to pull data (frequently ridiculously complex queries, and rarely some simple ones). You could almost make it into a joke.

An auditor walked into the office one day, dropped an inch thick set of requirements down on the counter and said, “I need these by tomorrow.”

I realize it’s not terribly funny but then I’ve been through a few of them where it was either laugh or cry and I’m the laughing type.

Joke or not Auditors want a lot of data. Some of that data can be found in the existing application data or database metadata:

- The client file for account 12345

- A list of all users with sysadmin access

- A list of all clients contacted in the last 60 days whose last name has the letter E in the third position and whose birth-date has the number 7 in it.

Frequently however they want information that is not part of the “regular” data:

- Who has looked at the data in the last 60 days

- Who has updated the data in the last 60 days

- A list of all clients whose information was modified in the last two weeks

- For each user when is the last time their password was updated

- A complete list of all failed logins in the last year.

And sometimes they don’t want data, they want proof that you know when certain events have happened and report on them regularly:

- Make sure you know and report on failed logins each week

- Make sure you know any time someone’s permissions have been modified

- Make sure you know any time the structure of the database has changed.

You can easily see that a lot of this information isn’t directly available. There are however a number of techniques and tools that can be used to collect it.

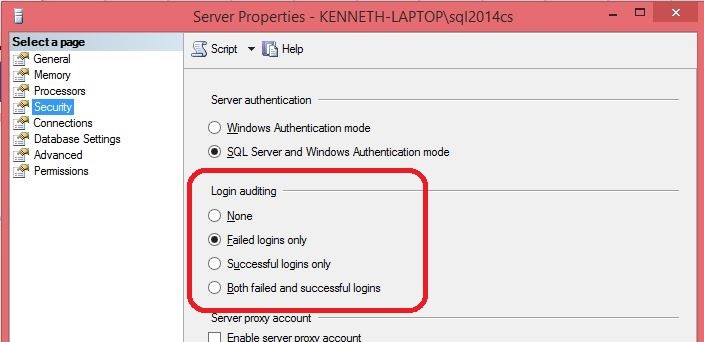

Login/Failed Login auditing

This simple option is part of the Server Properties. You have the option of logging failed logins, successful logins, neither, or both. Any changes to this property require an instance restart before they take effect.

The information logged is written to the error log for the instance. Generally I would recommend logging at least failed logins if not both failed and successful. This particular form of auditing is fairly light weight and has very important information even if it is not needed for an audit.

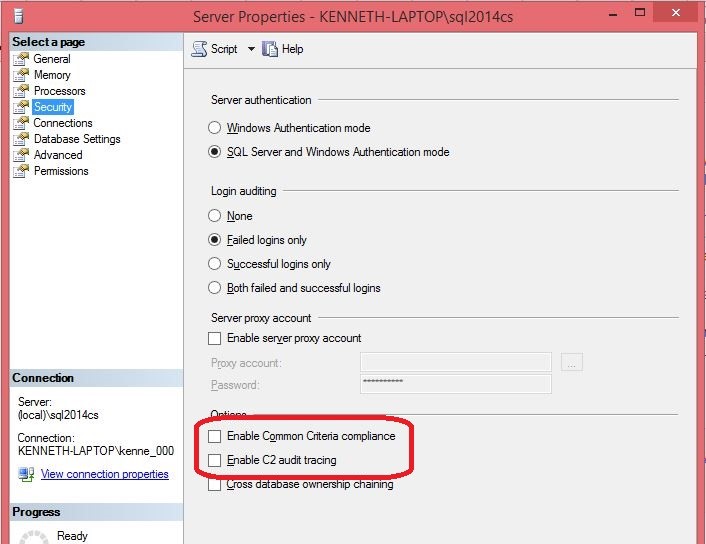

C2 Auditing and Common Criteria Compliance

If you need one of these options you will know it. If you don’t then you shouldn’t use them. They are extremely intensive and can take up huge amounts of disk space very quickly. On top of that they are configured to bring down the instance if they run out of space and cannot write the audit information.

These options are intended for specific compliance requirements and are not generally used otherwise. If you do need them you should use Common Criteria Compliance instead of C2 Auditing. C2 Auditing has been depreciated and will be removed in a future build of SQL Server.

When turning on Common Criteria Compliance it is important to remember that there is more to it than just checking the option on the permissions security tab. The full instructions can be found in the link above.

Audit columns

Adding audit columns to tables is a common method of collecting audit information. These are typically who did it and when type columns for both row creation and last updated time. The easiest way to handle this is to use a combination of defaults and a simple trigger.

For example:|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 |

CREATE TABLE AuditColumns ( PrimaryKeyColumns varchar(50) PRIMARY KEY, OtherColumns varchar(50), CreateUser varchar(50) CONSTRAINT df_CreateUser DEFAULT original_login(), CreateDate datetime CONSTRAINT df_CreateDate DEFAULT getdate(), LastUpdateUser varchar(50) CONSTRAINT df_LastUpdateUser DEFAULT original_login(), LastUpdateDate datetime CONSTRAINT df_LastUpdateDate DEFAULT getdate() ); GO CREATE TRIGGER upd_AuditColumns ON dbo.AuditColumns AFTER UPDATE AS BEGIN IF UPDATE(LastUpdateUser) AND UPDATE(LastUpdateDate) RETURN UPDATE AuditColumns SET LastUpdateUser = DEFAULT, LastUpdateDate = DEFAULT FROM AuditColumns JOIN Inserted ON AuditColumns.PrimaryKeyColumns = Inserted.PrimaryKeyColumns END; GO |

- Use the original_login() function to fill in your user columns. This way any impersonation is ignored and you get the actual login name

- The defaults on these columns will have no effect on an update so the trigger is there to force the update

- The IF UPDATE() statement is to keep the body of the trigger from running if the last update columns are already being updated

- Use the DEFAULT keyword in the update statement so that no code change is needed if the default constraint on the column changes

- As a general rule all DML triggers should be able to handle multi row changes

- When this is implemented some application code changes may be necessary.

Triggers

Triggers are a very powerful and versatile method of collecting audit information but they also have some serious limitations. There are three categories of triggers available.

- Logon Triggers

This type of trigger fires in response to a logon event. This makes it perfect for logging logins and failed logins but unless you have special requirements login auditing is easier to implement and less dangerous.

- DML Triggers

These triggers fire after or instead of INSERT, UPDATE and DELETE events. They can be perfect for logging change history, updating audit columns, and restricting certain types of data changes. However triggers must complete before the associated command can complete. This means that a long running trigger can dramatically increase the amount of time to perform operations on the table. In addition a poorly written trigger can cause all sorts of odd problems, including columns that won’t update and the inability to insert rows into a table.

- DDL Triggers

DDL Triggers fire after schema changing events. CREATE, ALTER, and DROP statements for example. This makes DDL triggers perfect for logging or restricting schema type changes. For example this would be a great way to log who dropped a table and when.

123456789101112131415161718CREATE TABLE ToDrop (Col1 int)GOCREATE TABLE DropLog (DropTable nvarchar(2000), UserName varchar(255),OccurDate datetime)GOCREATE TRIGGER WhoDroppedMeON DATABASE FOR DROP_TABLEASINSERT INTO DropLog VALUES (EVENTDATA().value('(/EVENT_INSTANCE/SchemaName)[1]','nvarchar(max)') + '.' +EVENTDATA().value('(/EVENT_INSTANCE/ObjectName)[1]','nvarchar(max)'),ORIGINAL_LOGIN(),GETDATE())GO- However as with all triggers when poorly written they can cause some odd issues. For example not being able to make schema changes at all or all database files being created on a single drive regardless of where you specify.

Every now and again an auditor will have a requirement that you prove that certain changes cannot be made. For example no one can delete from or alter the log tables. For this type of work triggers are going to be one of your go-to tools.

Tracing

Historically this has been the most common method of collecting audit information. It should be noted that traces have been official depreciated and will be removed in a future version of SQL Server. Of course it should also be noted that Microsoft recently added a new feature (Distributed Replay) that requires trace files.

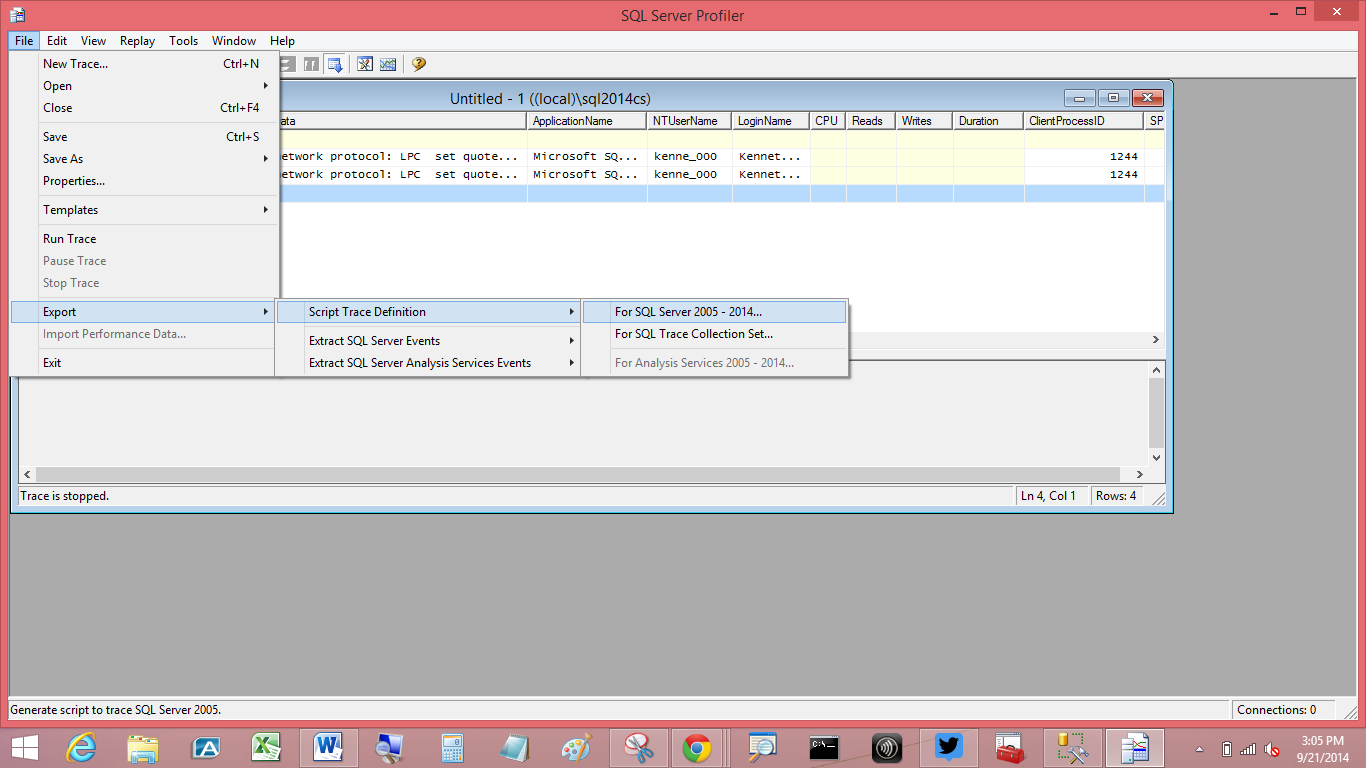

Traces can be created to capture large amounts of information on events that occur on the instance. While it is possible to run traces using SQL Server Profiler, for the purposes of auditing it is best to generate T-SQL scripts. However because these scripts can be quite complex it is often easiest to use Profiler to generate the script.

First use Profiler to generate your trace then select the File->Export option:

There are several important factors to take into account when using traces to collect audit data.- When using traces for auditing the information should be written to a file not to a table. Writing to a table is slow. Frequently, on a high transaction system, too much data is generated for the system to keep up if the data is being written to a table.

- Minimize the amount of data you collect. Any information that is not required by the audit should be excluded. This minimizes the amount of storage need for the data and also the amount of unnecessary data to look through before finding the required information. Even more importantly traces are high overhead. The more data included in the trace the more overhead involved.

- If working with an audit that requires a high level of security, make sure the trace files are stored in a secure location.

- Process the trace files frequently. In many cases the audit information can be summarize and the storage space required minimized. In addition once the trace information has been moved into tables it is much faster and easier to query and report on. Also once the data has been processed the trace files can be deleted.

Extended events

Introduced in SQL 2008, Extended Events is a replacement for SQL Tracing. There are a few important differences. Including but not limited to:

- Extended events are considerably lighter weight. This means that there is less concern about running them on a production server.

Instead of a separate tool (profiler), Extended Events uses the New Session and New Session Wizard which can be found in SSMS

- Extended Events uses the commands CREATE/ALTER/DROP EVENT SESSION instead of the sp_trace* stored procedures

- Extended Events sessions cannot be saved directly to tables

- Extended Events do not have the option to shut down the instance if the event cannot be written

- Extended Events can be created to start on instance startup

- The EVENT_RETENTION_MODE allows you to determine how much data loss is acceptable including none (NO_EVENT_LOSS). Of course the less data loss allowed the more your Extended Events session can slow down the system. If no data loss is allowed then the system has to wait for the event to be written before continuing

- Extended Events does not include the auditing specific events. This might seem like a problem when trying to use Extended Events for auditing but there is still a fair amount of audit information available that just isn’t tagged as Audit.

- As fast as Extended Events can be it can still slow the system down by collecting to much information

- If the audit information needs to be secure make sure the files generated are secure

- Process the audit information frequently to minimize storage use and maximize usability.

Server/Database Auditing

So far most of the options have been tools that can be used for auditing, not specifically auditing tools. SQL Server Audit is Microsoft’s offering in this arena. While based on Extended Events this tool includes a number of important auditing features. The audit events that are excluded from Extended Events are available in Audit. As are the ability to shut down the instance if the event cannot be written and the ability to write to the Windows Security event log, Windows Application event log or a file.

Once you are used to them both the GUI interface and the T-SQL commands are fairly simple and intuitive. Add all of this together and Audits are the go-to tool for dealing with; well; audits. The best practices for Extended Events still apply with one final warning. Server level audits are available in any edition while Database audits are only available in the Enterprise, Developer and Evaluation versions.

Audit information is not just for auditing

Having collected all of this audit information, use it. Generate automated reports to let you know what’s going on. The following are just a few examples of the types of reports that can be generated using audit data.

- A list of failed logins in the last week

- A notification if there has been a high number of failed logins from a single source in a short period of time

- Security changes in the last week

- In time you can create a report listing users that have not accessed the database in the last 90 days and may be a candidate for removal

- The number of rows added and updated in the last week on key tables.

- Reviewing the SQL Server Audit - December 25, 2014

- Intro to Auditing in SQL Server - September 30, 2014