Introduction

Sometimes we need to move local files, SQL scripts, or backups from our local machine to Azure, or vice versa. This can be done manually by signing in to Azure in a browser, but there are other ways to do it automatically. This article describes AzCopy, a Microsoft Azure Storage command line tool used to upload data from a local machine to Azure or to download data from Azure to a local machine. The article describes, step by step, how to work with this tool.

Figure 0.

Requirements

- An Azure subscription.

- A local machine with Windows installed.

- The Microsoft Azure Storage tools, which include AzCopy. They come with the PowerShell installer, which can be downloaded here.

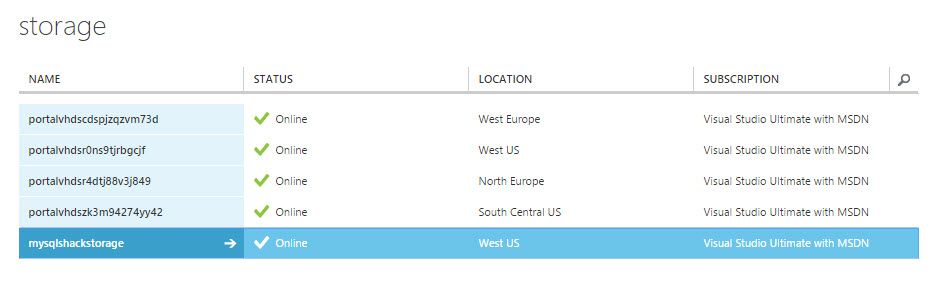

You will also need a storage account in Azure. For more information about creating one, refer to the create storage section of our related article.

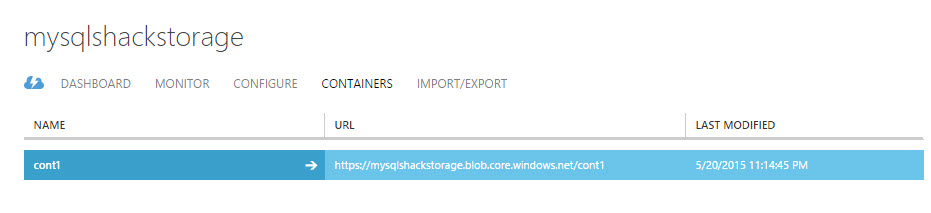

The storage account also requires a container. For more information, refer to our article about storage and containers.

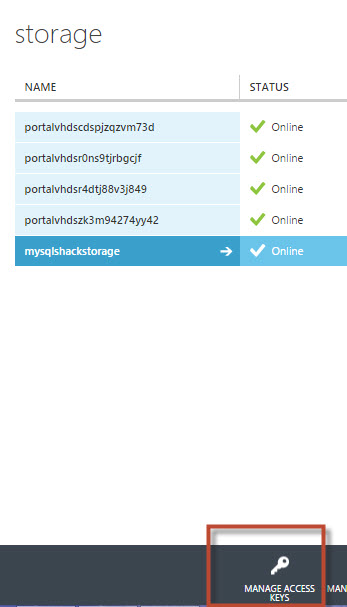
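AzCopy addresses a container through its URL, which combines the storage account name and the container name (for example, https://mysqlshackstorage.blob.core.windows.net/cont1/ in this article). The following minimal Python sketch builds that URL. It also checks the container name against the Azure naming rules: 3 to 63 characters; lowercase letters, digits and hyphens only; no leading, trailing or consecutive hyphens. The helper name `container_url` is our own and is used only for illustration.

```python
import re

# Azure container naming rules: lowercase letters, digits and hyphens, where every
# hyphen sits between two letters or digits (no leading, trailing or double hyphens).
# The 3-63 character length limit is checked separately below.
_CONTAINER_NAME = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")


def container_url(account: str, container: str) -> str:
    """Return the blob endpoint URL of a container (hypothetical helper)."""
    if not 3 <= len(container) <= 63 or not _CONTAINER_NAME.fullmatch(container):
        raise ValueError(f"invalid container name: {container!r}")
    # Public Azure blob endpoint format: https://<account>.blob.core.windows.net/<container>/
    return f"https://{account}.blob.core.windows.net/{container}/"


print(container_url("mysqlshackstorage", "cont1"))
```

The trailing slash matches the form of the /Dest and /Source URLs used in the commands of this article.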

The storage account includes an option to manage access keys, where you can view and regenerate its keys.

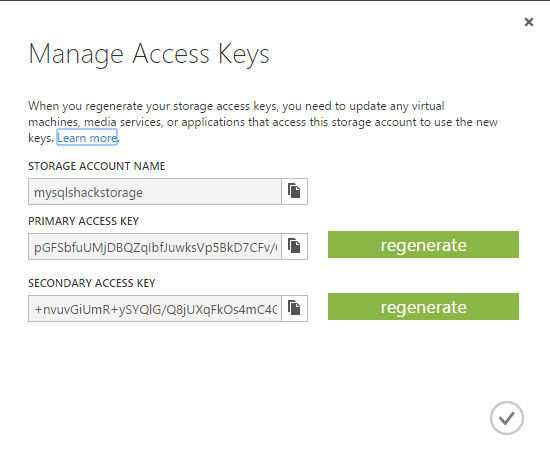

Req 3. The manage access keys option

The access keys are used to connect to Azure from the AzCopy command line.

Req 4. The different access keys.
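An access key grants full access to the storage account, so when you script AzCopy it is usually safer to keep the key out of the script itself. The following is a minimal Python sketch that reads the key from an environment variable. The variable name `AZURE_STORAGE_KEY` and the function `storage_key` are hypothetical choices for this example; use whatever name suits your environment.

```python
import os


def storage_key(var_name: str = "AZURE_STORAGE_KEY") -> str:
    """Read a storage access key from an environment variable.

    The default variable name is a hypothetical choice for this example.
    """
    key = os.environ.get(var_name, "").strip()
    if not key:
        # Fail early with a clear message instead of passing an empty /DestKey.
        raise RuntimeError(
            f"Set {var_name} to the primary or secondary access key of the storage account"
        )
    return key
```

Either of the two keys shown in the storage account works. If you regenerate one key, scripts can switch to the other key in the meantime.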

Getting started

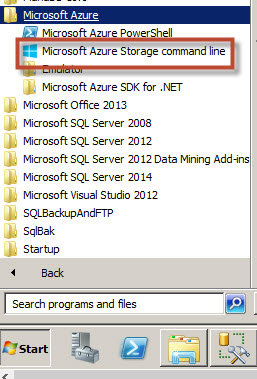

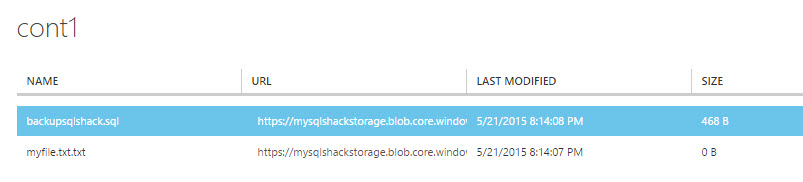

Once you have installed PowerShell as specified in the requirements, open the Microsoft Azure Storage command line.

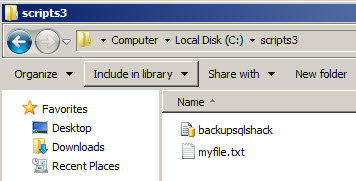

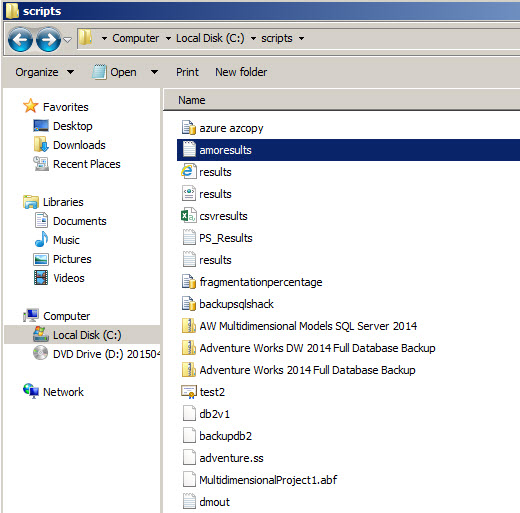

Figure 1. The Microsoft Azure Storage command line

Let's start by copying files from the local machine to Azure. Imagine that we have the following folder with SQL notes and SQL Server scripts on our local machine:

Figure 2. The local folder

To copy the contents of the local folder to the container created in the requirements section, use the following command:

AzCopy /Source:c:\scripts3\ /Dest:https://mysqlshackstorage.blob.core.windows.net/cont1/ /DestKey:pGFSbfuUMjDBQZqIbfJuwksVp5

AzCopy is the command that copies files to or from Azure. It is similar to the copy command in cmd because it requires a source and a destination. In this example, the source is the c:\scripts3 folder. The destination is the URL of the container (see the Req 2 picture in the requirements section), which will store the files of the c:\scripts3 folder. Finally, DestKey is the access key used to connect to Azure. You can find the key in the Req 4 picture of the requirements section.
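If you want to run this upload from a script, for example a scheduled job, you can assemble the command from its parts. The following is a minimal Python sketch. It assumes that AzCopy with the /Source:/Dest:/DestKey: syntax used in this article is on the PATH. The helper names `upload_args` and `run_azcopy` are our own, and `<your-access-key>` is a placeholder.

```python
import subprocess
from typing import List


def upload_args(source_dir: str, dest_url: str, dest_key: str) -> List[str]:
    """Build the AzCopy upload command shown above as an argument list."""
    return [
        "AzCopy",
        f"/Source:{source_dir}",  # local folder to upload
        f"/Dest:{dest_url}",      # container URL
        f"/DestKey:{dest_key}",   # storage account access key
    ]


def run_azcopy(args: List[str]) -> int:
    """Run AzCopy and return its exit code (requires AzCopy on the PATH)."""
    completed = subprocess.run(args, check=False)
    return completed.returncode


args = upload_args(
    "c:\\scripts3\\",
    "https://mysqlshackstorage.blob.core.windows.net/cont1/",
    "<your-access-key>",
)
print(" ".join(args))
# run_azcopy(args)  # uncomment on a machine where AzCopy is installed
```

When you pass the arguments as a list, `subprocess` handles the quoting, so paths that contain spaces do not need manual escaping.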

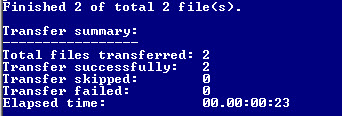

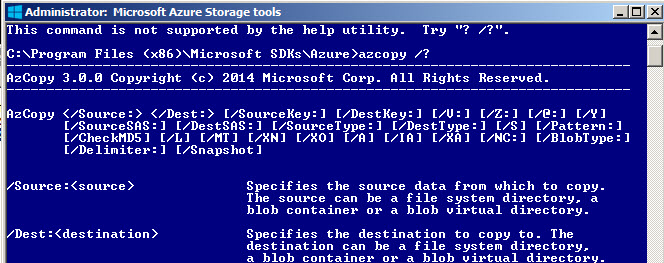

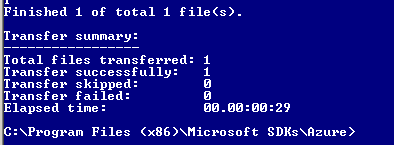

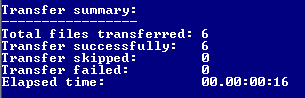

Once you run the command, you will see a transfer summary similar to this one:

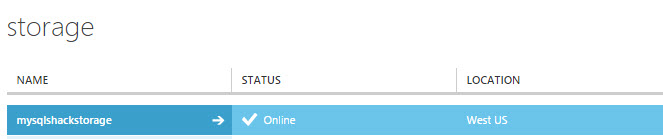

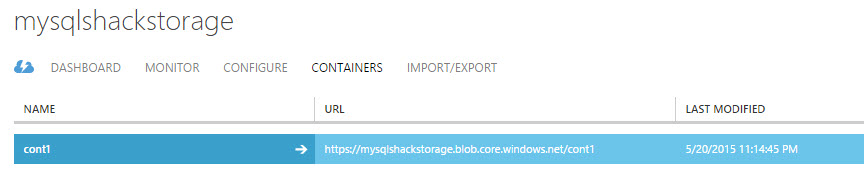

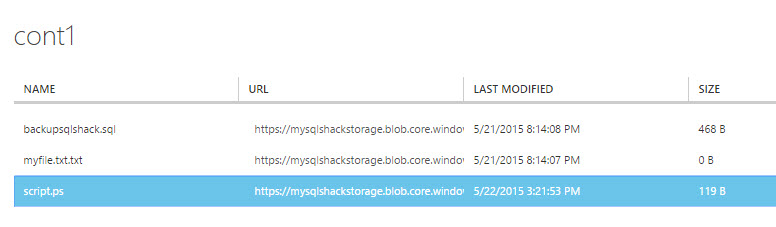

Figure 3. The AzCopy results

To verify the copy, go to Storage and click the storage account created in the requirements section.

Figure 4. The storage

In the storage account, open the container created in the requirements section.

You will find the files from the local folder shown in Figure 2 copied to Azure.

To get more information about the AzCopy command, type this command at the command line:

AzCopy /?

The command will show information about the parameters and some useful examples.

Figure 7. The AzCopy help

Let's try another example. This time we will copy the file myfile.txt from Azure to a local folder:

AzCopy /Source:https://mysqlshackstorage.blob.core.windows.net/cont1/ /Dest:c:\test\ /SourceKey:pGFSbfuUMjDBQZqIbfJuwksV /Pattern:"myfile.txt"

The source is the URL of the Azure storage container, and the destination is the c:\test folder, which is empty. The Azure container holds multiple files, but in this example we only want to copy myfile.txt, so we use the /Pattern parameter.
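How /Pattern is interpreted depends on where the source is. Our reading of the AzCopy documentation for the legacy /Source:/Dest: releases is as follows, and you should verify it against the AzCopy /? output of your version. For a local folder, standard wildcards apply. For a blob container, wildcards are not applied: the pattern must equal the blob name, unless /S is specified, in which case it is treated as a blob-name prefix. The Python function below is our own model of those rules and is not part of AzCopy. You can use it to predict which files a command will pick up.

```python
import fnmatch


def pattern_matches(name: str, pattern: str, source_is_blob: bool, recursive: bool) -> bool:
    """Approximate how AzCopy applies /Pattern (a model, not AzCopy code)."""
    if not source_is_blob:
        # Local folder: shell-style wildcards. Windows file names are
        # case-insensitive, so both sides are compared in lower case.
        return fnmatch.fnmatchcase(name.lower(), pattern.lower())
    if recursive:
        # Blob source with /S: the pattern is treated as a blob-name prefix.
        return name.startswith(pattern)
    # Blob source without /S: no wildcards, the blob name must match exactly.
    return name == pattern


blobs = ["myfile.txt", "myfile.txt.bak", "notes.sql"]
print([b for b in blobs if pattern_matches(b, "myfile.txt", True, False)])  # ['myfile.txt']
print([b for b in blobs if pattern_matches(b, "myfile.txt", True, True)])   # ['myfile.txt', 'myfile.txt.bak']
```

Under this model, the download command above copies only the blob named exactly myfile.txt, because it does not use /S.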

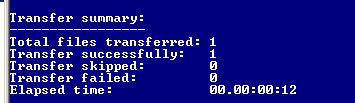

You will receive a message similar to this one after executing the command:

Figure 8. Downloading results

You will see the file copied from Azure to your local machine:

Figure 9. The local folder with the file copied from Azure

The command line includes other parameters as well:

- /S enables recursive mode, which includes subfolders.

- /Y suppresses the confirmation prompts.

- /L lists the operations without copying any data.

- /A uploads only files that have the Archive attribute set.

- /MT sets the last-modified date and time of downloaded files to match the source.

- /XN excludes source files that are newer than the destination.

- /XO excludes source files that are older than the destination.

- /V:[verbose-log-file] saves verbose output to a log file. By default, the log file is stored in %LocalAppData%\Microsoft\Azure\AzCopy.

In this new example, we have a folder with multiple files, and we want to copy only the PowerShell scripts:

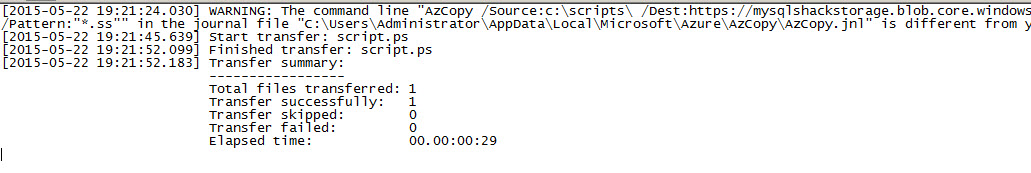

Figure 10. Several files in a local folder

The following command copies all the files with the ps (PowerShell) extension and stores the output in a log file:

AzCopy /Source:c:\scripts\ /Dest:https://mysqlshackstorage.blob.core.windows.net/cont1/ /DestKey:pGFSbfuUMjDBQZqIbfJuwksVp5 == /Pattern:"*.Ps" /V

If everything is OK, you will receive a message similar to this one:

Figure 11. The copy results

You will also be able to see the results in a log file. The /V parameter stores this information in the %LocalAppData%\Microsoft\Azure\AzCopy folder.

You will also see the ps files copied to Azure.
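When these commands are scripted, the optional switches listed earlier can be generated from simple flags. The following Python sketch maps keyword arguments to the switches described in this article, including /Pattern and /V as used in the last command. The names `optional_switches` and `_FLAGS` are hypothetical and used only for illustration.

```python
from typing import List, Optional

# Switches described in this article, keyed by the keyword accepted below.
_FLAGS = {
    "recursive": "/S",       # include subfolders
    "quiet": "/Y",           # suppress confirmation prompts
    "list_only": "/L",       # list the operations, copy nothing
    "archive_only": "/A",    # upload only files with the Archive attribute set
    "keep_mtime": "/MT",     # keep the source last-modified time on downloads
    "exclude_newer": "/XN",  # skip source files newer than the destination
    "exclude_older": "/XO",  # skip source files older than the destination
}


def optional_switches(pattern: Optional[str] = None, verbose: bool = False,
                      log_file: Optional[str] = None, **flags: bool) -> List[str]:
    """Translate keyword flags into AzCopy switches (hypothetical helper)."""
    unknown = set(flags) - set(_FLAGS)
    if unknown:
        raise ValueError(f"unknown switch(es): {sorted(unknown)}")
    args = []
    if pattern is not None:
        args.append(f"/Pattern:{pattern}")
    # Emit switches in a stable order: the order of _FLAGS above.
    args.extend(switch for name, switch in _FLAGS.items() if flags.get(name))
    if log_file is not None:
        args.append(f"/V:{log_file}")  # verbose log written to a chosen file
    elif verbose:
        args.append("/V")              # verbose log in the default folder
    return args


print(optional_switches(pattern="*.Ps", verbose=True))
```

The pattern is passed without surrounding quotes here because each switch is intended to be a separate element of an argument list rather than part of a line typed in cmd.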

Finally, you can read the parameters from a file. Let's create a file named AzCommand.txt that contains the AzCopy parameters:

/Source:c:\scripts\

/Dest:https://mysqlshackstorage.blob.core.windows.net/cont1/

/DestKey:pGFSbfuUMjDBQZqIbfJuwksVp5BkD7CFv/GxvdrOwiWvAYGLc5D5J5ZKjtIpipb2djiaEmOX3QhExWVOHSC0sQ== /Pattern:"*.Ps" /V

Now, run AzCopy with the parameter file using the following command line:

AzCopy /@:"C:\AzCommand.txt"

AzCopy reads the parameters from the file and runs the copy. If everything is OK, you will see the following result:

You can also verify in Azure that the files were copied.

Figure 14. The transfer summary
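If the parameter file is generated by a script, you can write it the same way as the AzCommand.txt file above, with the parameters on separate lines. The following is a minimal Python sketch that writes such a file and returns the /@: command that uses it. The function name `write_response_file`, the temporary-folder location and the `<your-access-key>` placeholder are assumptions made for this example.

```python
import tempfile
from pathlib import Path
from typing import List


def write_response_file(path: Path, params: List[str]) -> List[str]:
    """Write AzCopy parameters, one per line, and return the command that reads them."""
    path.write_text("\n".join(params) + "\n", encoding="utf-8")
    # /@: tells AzCopy to read its parameters from the given file.
    return ["AzCopy", f"/@:{path}"]


params = [
    "/Source:c:\\scripts\\",
    "/Dest:https://mysqlshackstorage.blob.core.windows.net/cont1/",
    "/DestKey:<your-access-key>",
    '/Pattern:"*.Ps"',
    "/V",
]
target = Path(tempfile.gettempdir()) / "AzCommand.txt"
command = write_response_file(target, params)
print(" ".join(command))
```

Keeping the key in the generated file means the file should be protected like any other secret, or deleted after the copy finishes.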

Conclusion

As you can see, AzCopy can be used to automate tasks from the command line. Once you have a storage account with a container in Azure, uploading or downloading files is very simple. We also learned several parameters and how to specify patterns to choose specific files.

Note: This article uses AzCopy version 4.1 (Current Previous Version). Later versions may add or change commands, so make sure you have the latest version.
