Introduction

A few months back, I encountered an interesting challenge at a client site. For those of you whom have read my previous article entitled “Excel in loading multiple workbooks into SQL Server“, you will know that the challenge centered around loading the data from multiple spreadsheets into our SQLShack financial database.

Now, one of the enterprises business rules was that the loading of this data was NOT to occur before that last of the daily spreadsheets arrived in the common data repository.

Imagine in your own mind the client had offices in California, Boston, London, Munich, Sydney and Hong Kong. Being a 24 x 7 global organization it is not surprising that the data files will arrive at different time. We are now going to have a look at one innovative way of achieving this load task utilizing Visual Studio as our front end.

The Visual Studio project that we are going to construct, once completed and activated, WILL REMAIN RUNNING continually. It is never stopped. The overhead of this continuous run is minimal as most of the time it remains dormant and is only awakened on the arrival of new files. The arrival of a dummy file called “finish.txt” is the signal to start the actual data processing.

Getting Started

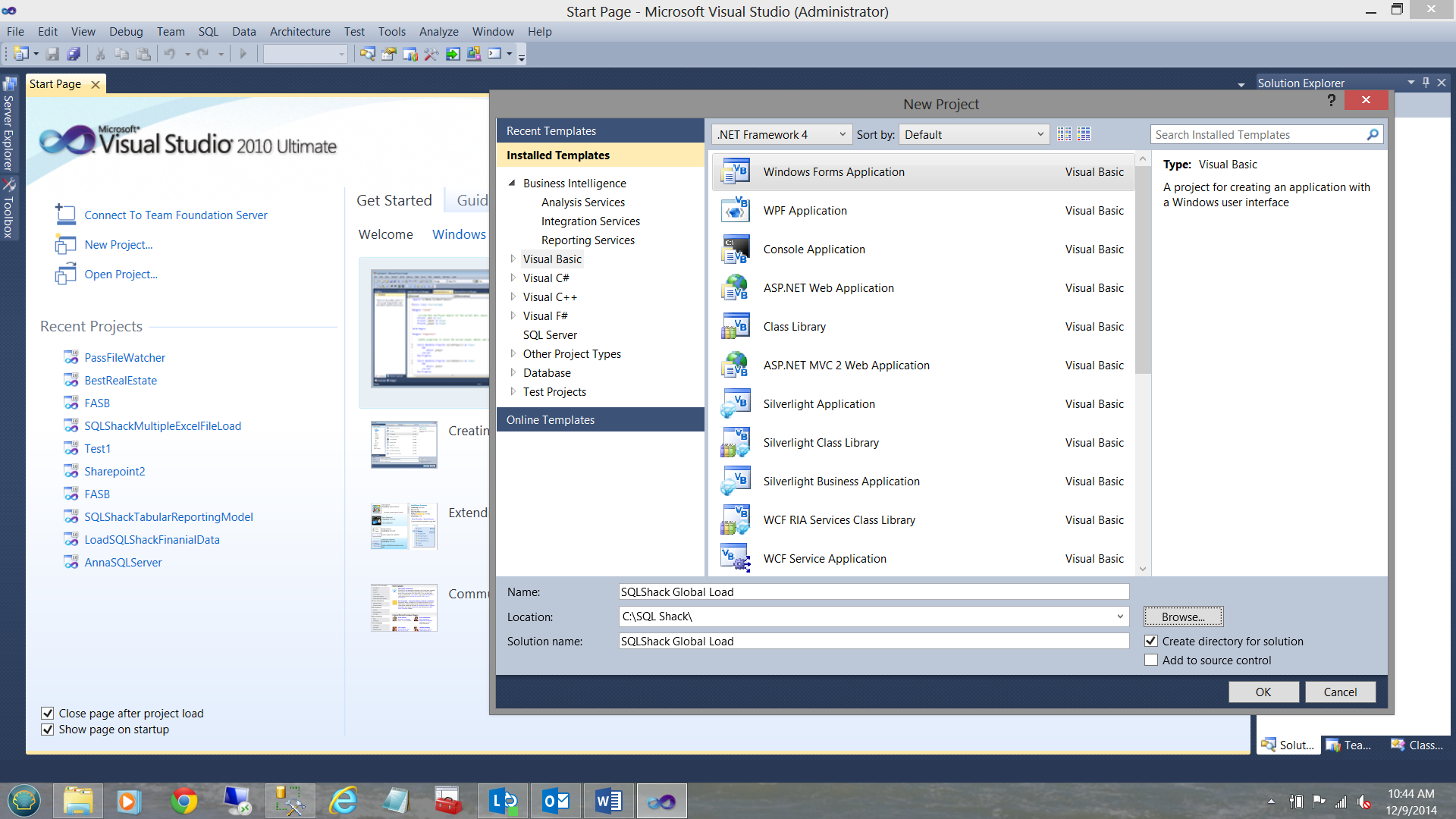

Opening Visual Studio, I am going to create a new VB.net project entitled SQL Shack Global Load.

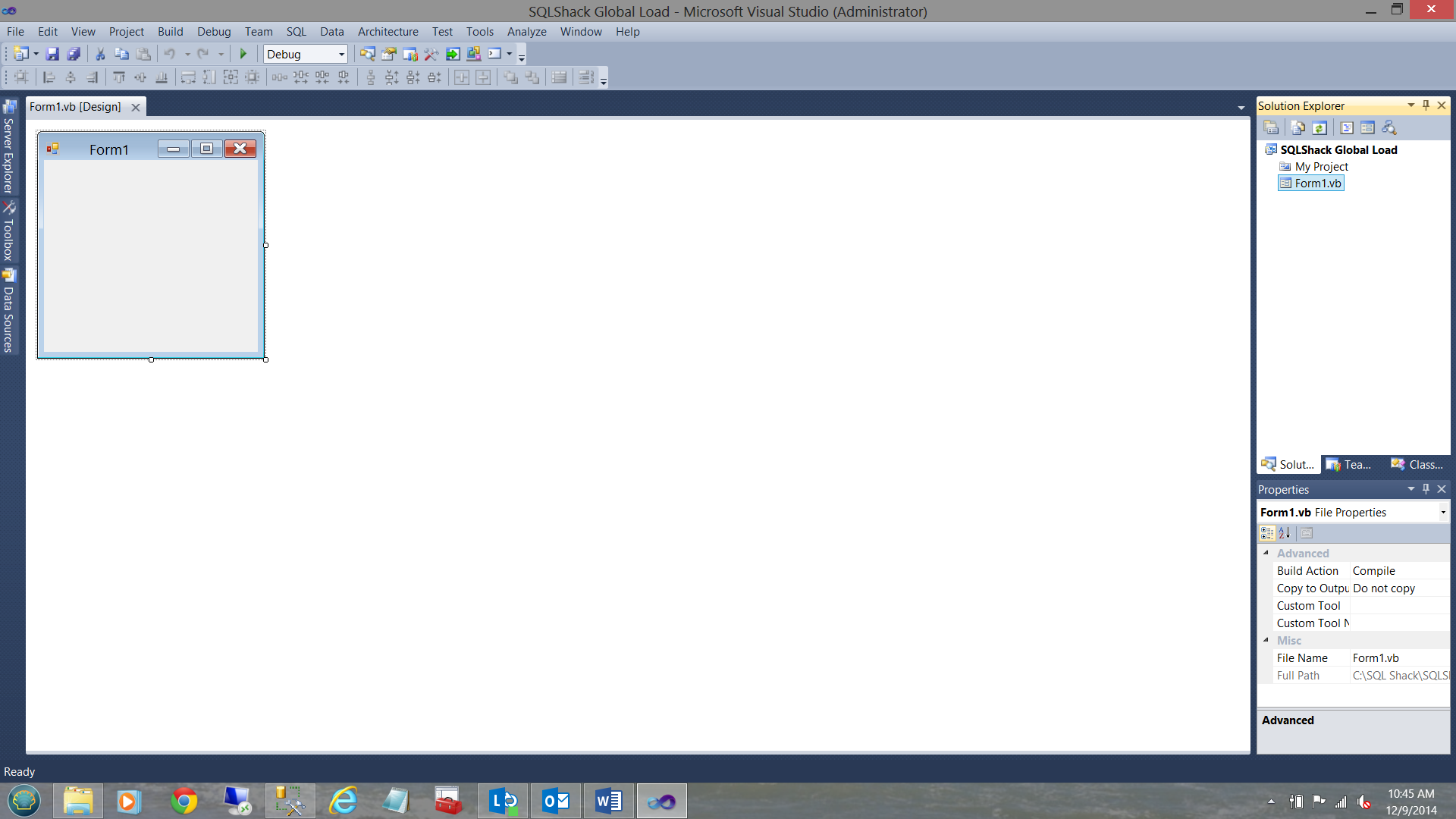

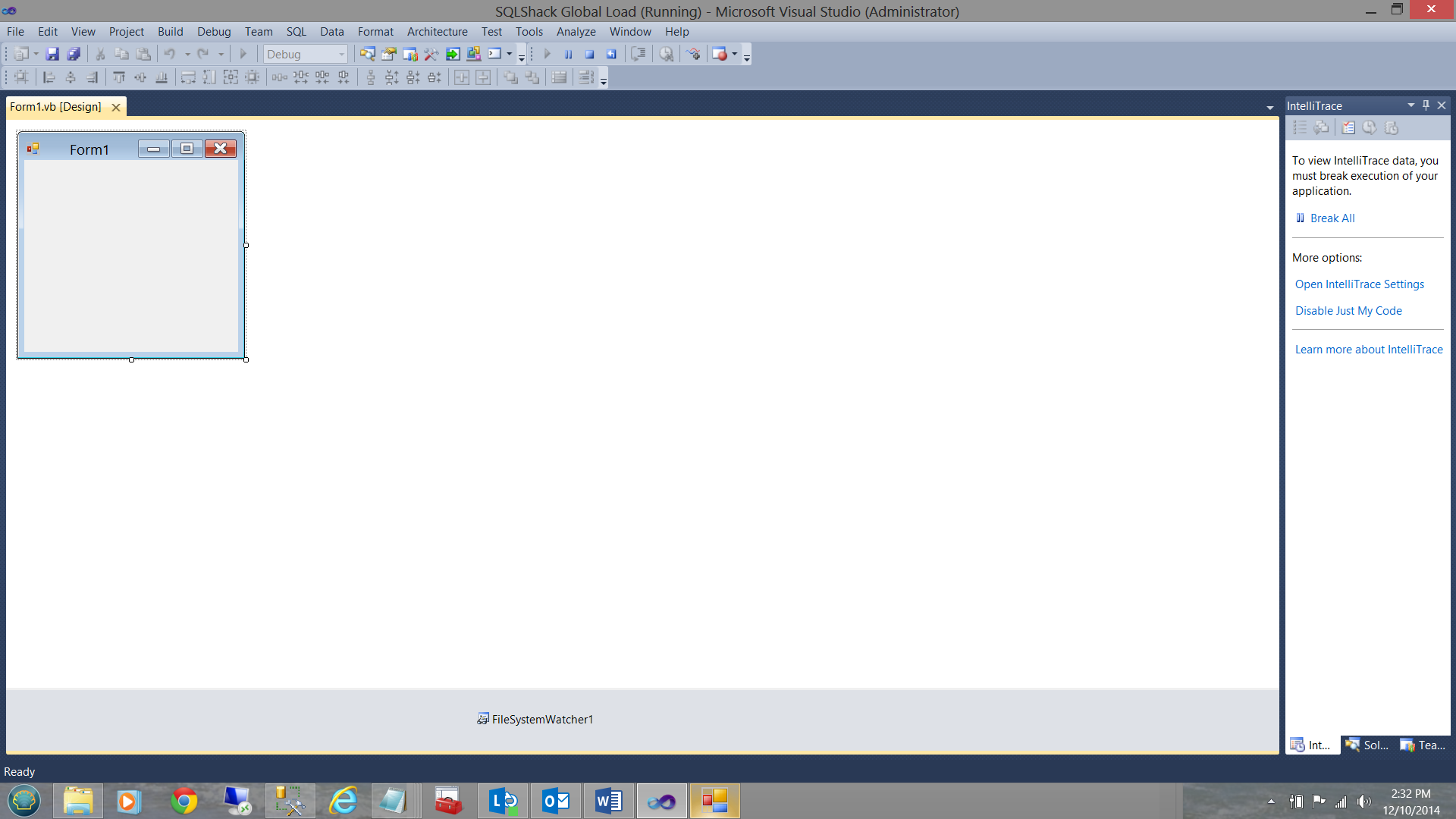

My drawing surface is now in view.

It is not necessary to change the size of the “Form” as we shall not be adding any visible controls nor visual objects to the Form.

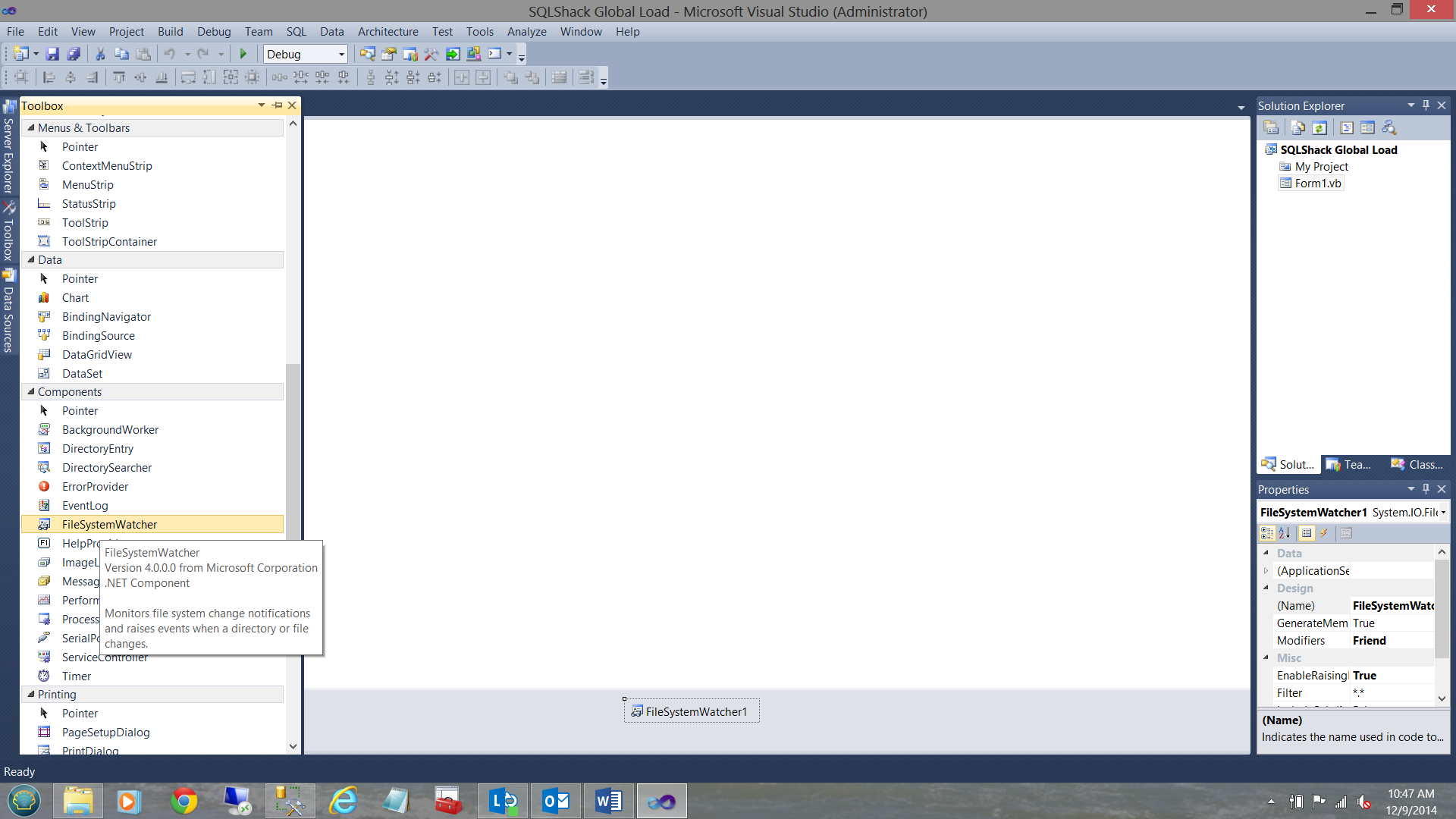

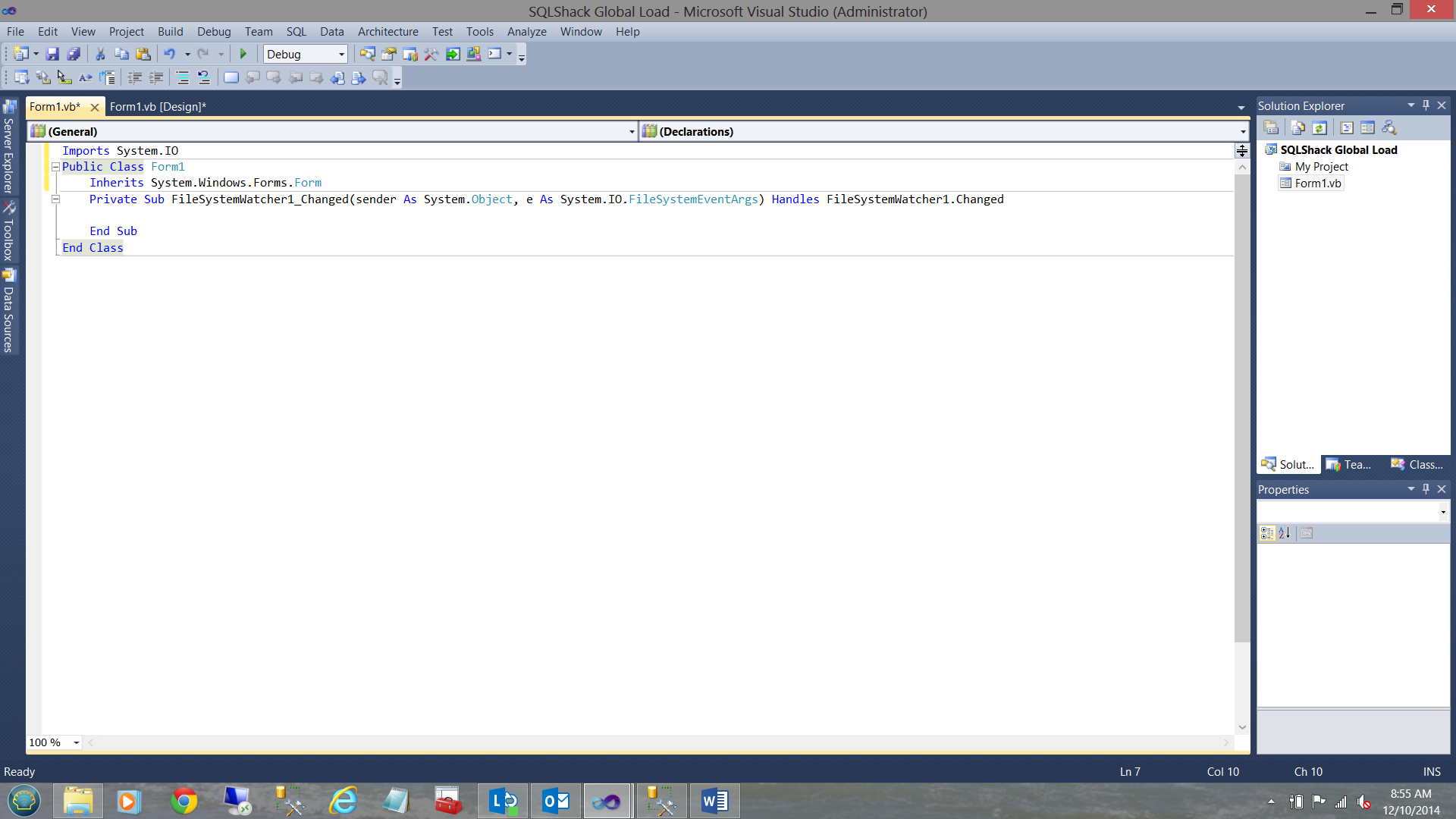

By “Double Clicking” the “FileSystemWatcher” control, the FORM code page is opened (see below).

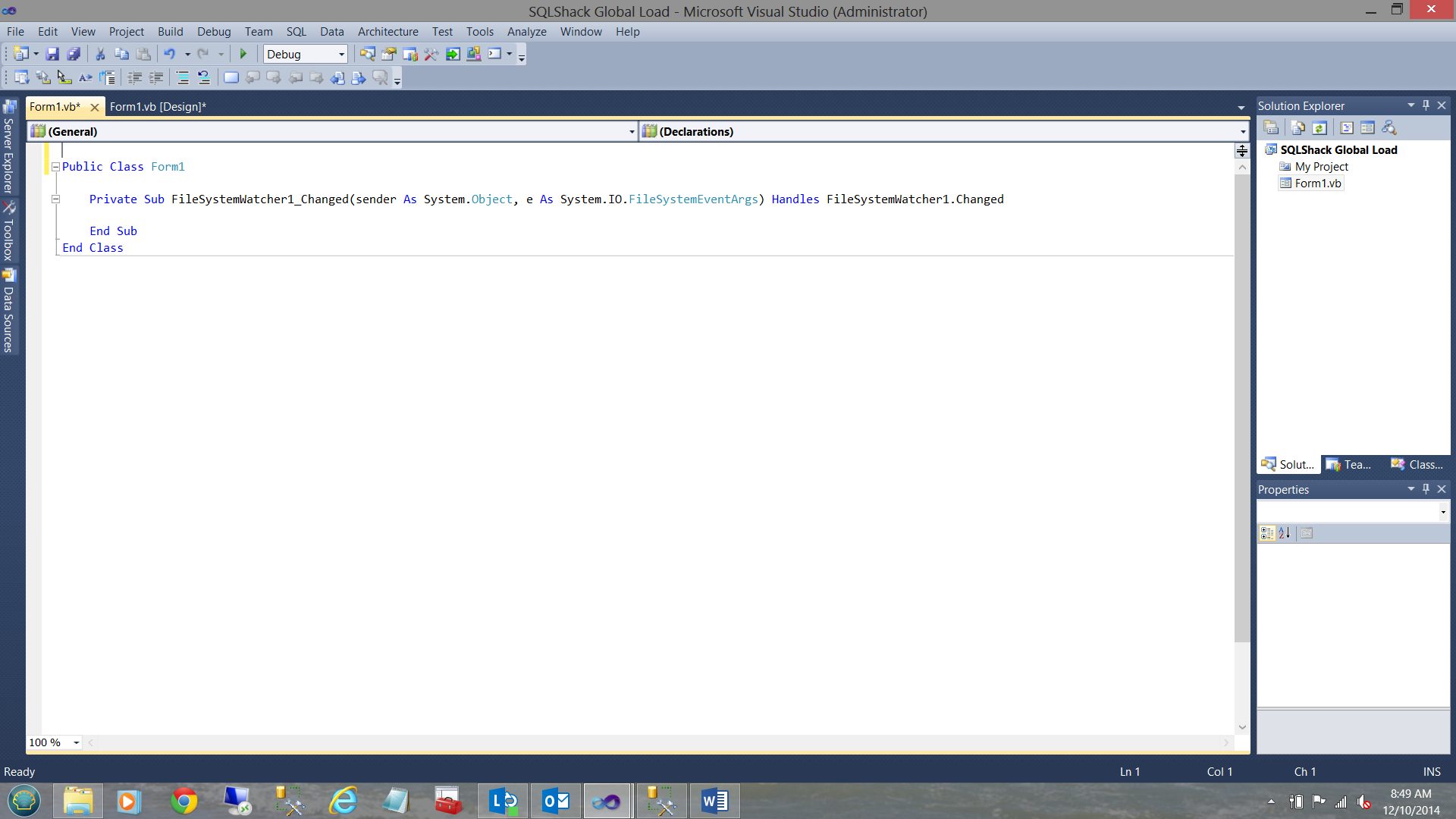

We now add the System.IO namespace and “Inherits System.Windows.Forms.Form” immediately below the Public Class declaration (see below).

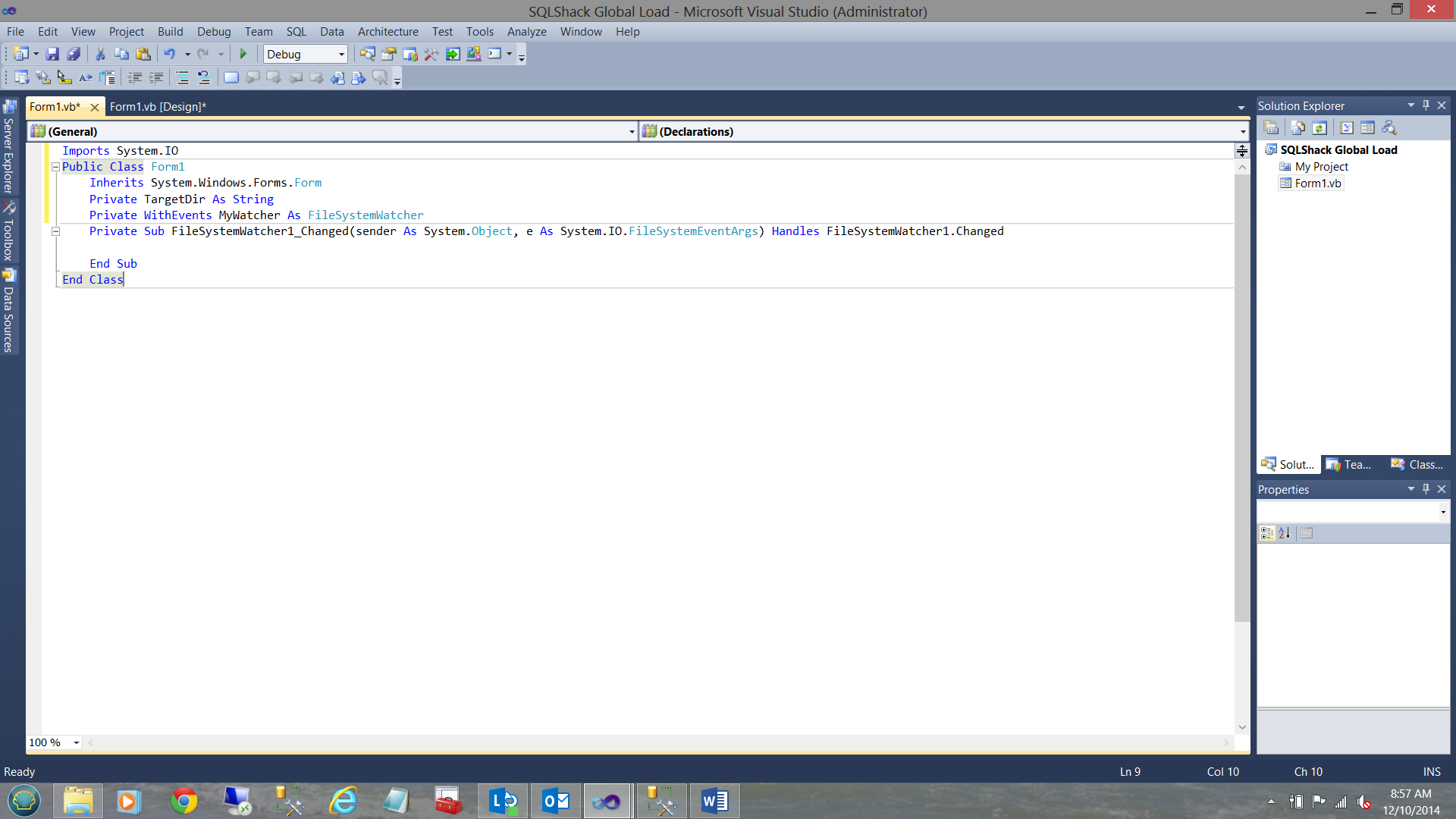

We next declared two local variables which will be utilized later in the process

“TargetDir” is used to point to where the new Excel data spreadsheets are to be found. Once again the data within these spreadsheets is loaded into our SQLShackFinancial database on a daily basis.

“MyWatcher” is merely the name that we have given to our instance of a “FileSystemWatcher” object.

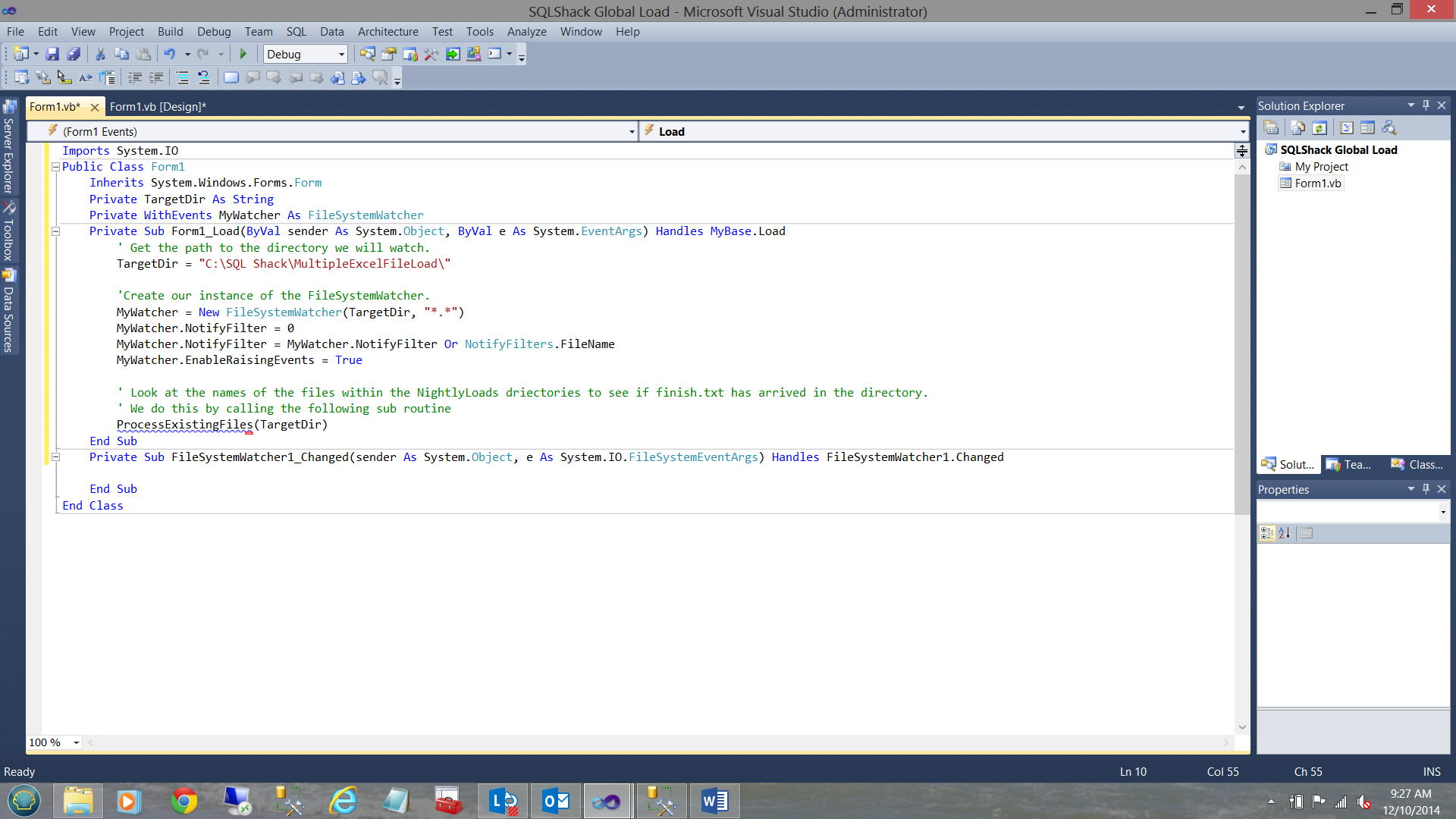

Our next task will be to create the necessary code which is to be executed during the “Form” load event (see below).

Note that we have “hard wired” the source directory where the Excel spreadsheets are to be found by our load process. Whilst not the best of coding techniques, I wanted to describe what each line of code achieves in the easiest possible manner.

|

1 |

“TargetDir = "C:\SQL Shack\MultipleExcelFileLoad\". |

Next we instantiate our instance of the “FileSystemWatcher” essentially telling our ‘solution’ to consider all the files within the given directory “TargetDir”

|

1 2 |

'Create our instance of the FileSystemWatcher. MyWatcher = New FileSystemWatcher(TargetDir, "*.*") |

We next set the “NotifyFilter” property (see above). This tells the “FileSystemWatcher” what type of element(s) it will be reporting back upon. In our case it will be the name of the file(s). After all we are looking for a dummy “txt” file to be inserted into the production spreadsheet Windows directory in order to signify that the SSIS load should now be executed as all the necessary data is present.

We then enable the “FileSystemWatcher” to process events that occur within the directory.

|

1 |

MyWatcher.EnableRaisingEvents = True |

Parsing the existing files

The last step within our load event is to parse the names of the files that currently reside within our target directory. A call is made to the “ParseExistingFiles” subroutine (see the code immediately below). As we shall remember “TargetDir” was initialized above.

|

1 |

‘ParseExistingFiles(TargetDir)’ |

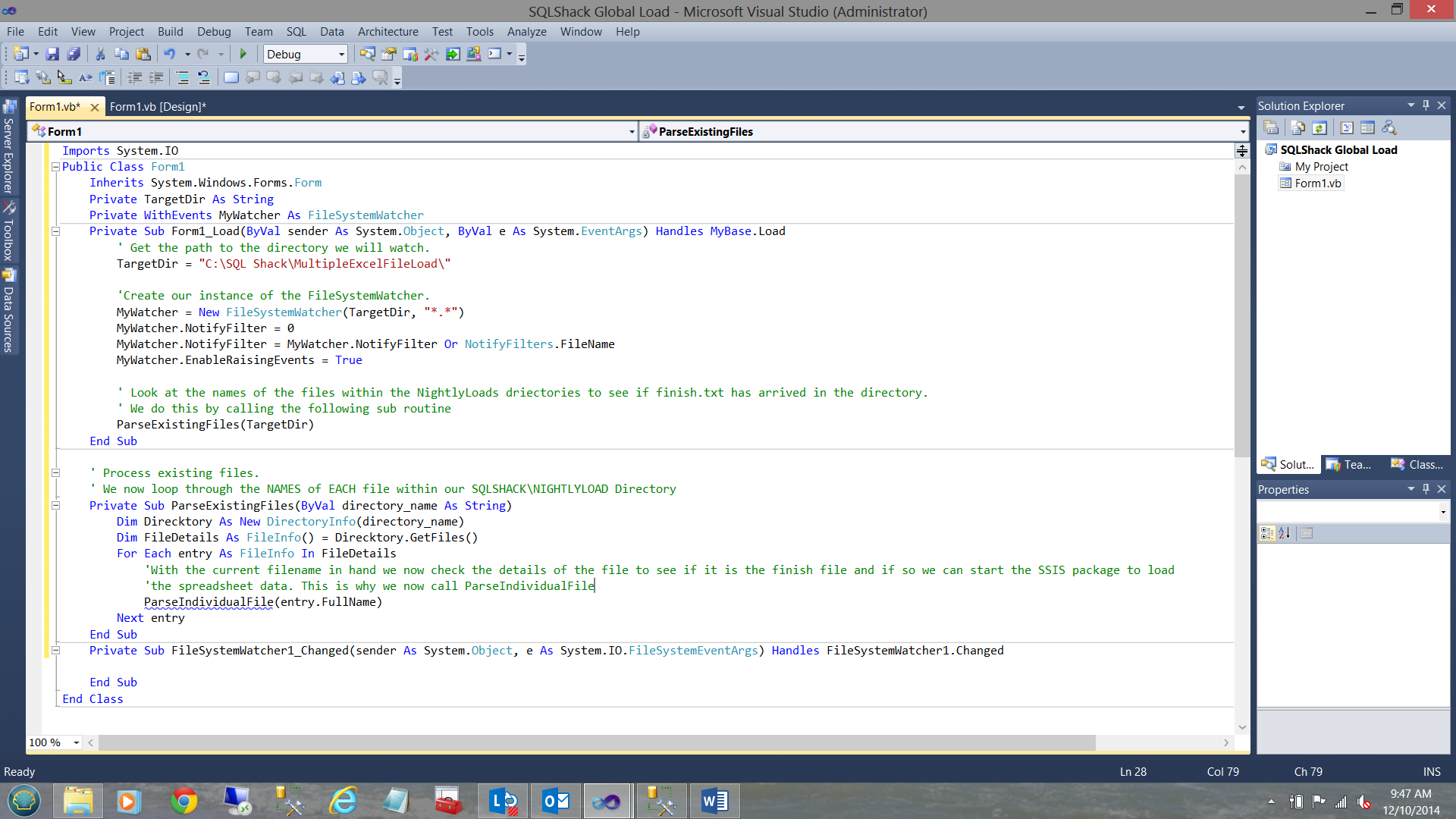

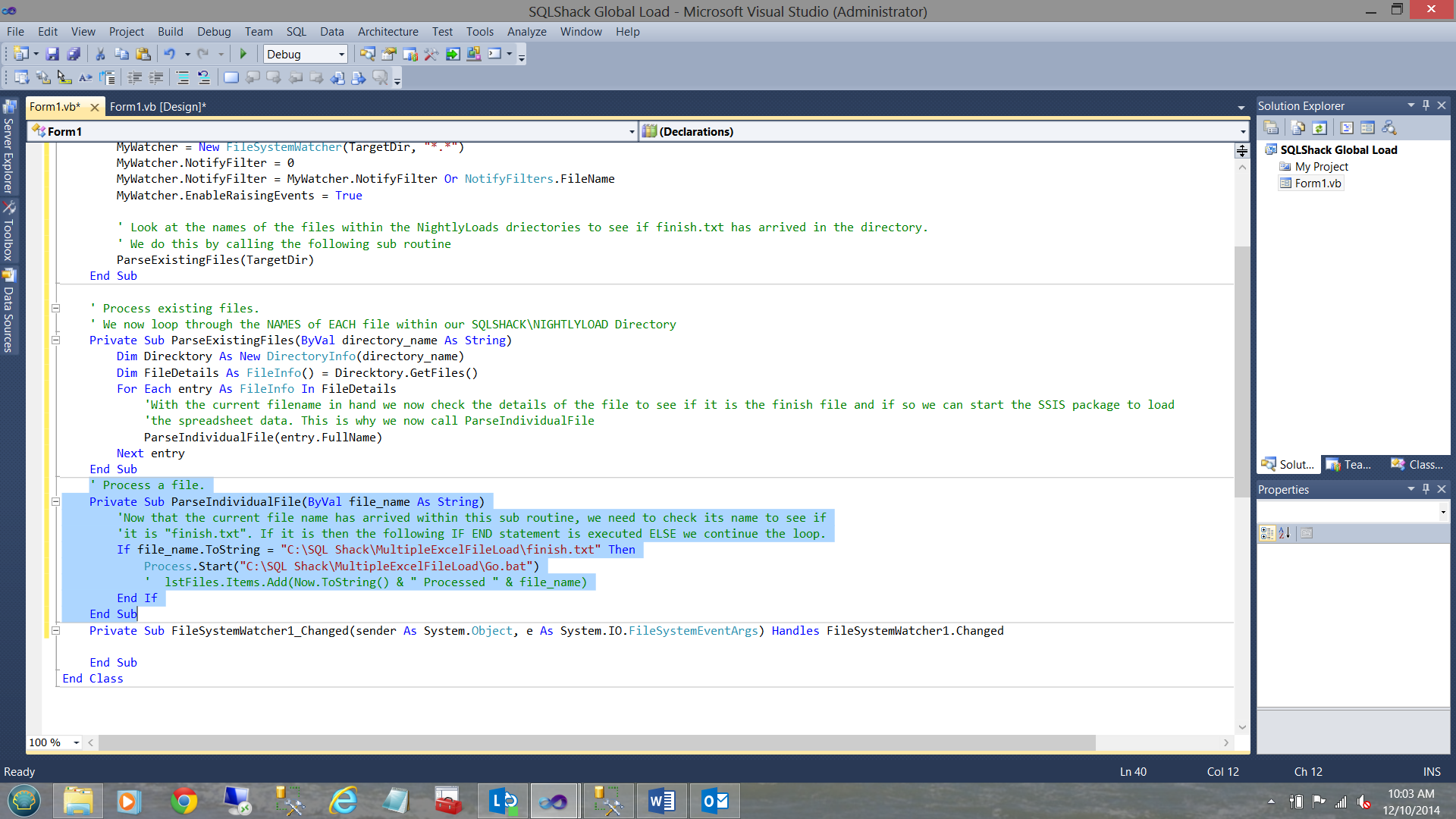

The ParseExistingFiles subroutine may be seen below:

We first create “Direktory” as an instance of a “DirectoryInfo” object. FileDetails is set to an array instance which will contain the name of all the files within the given directory (see above).

Now for the meat of the process!!

The code below is used for the files that are CURRENTLY resident within the directory. In our case the spreadsheets and any other files that may reside within the directory.

We now loop through the file names contained within the array “FileDetails” ONE NAME AT A TIME and pass EACH name, one by one through to an “are you the filename that I need to start the SSIS daily batch load” subroutine. This subroutine is called ”ParseIndividualFile”:

|

1 2 3 4 5 6 |

For Each entry As FileInfo In FileDetails 'With the current filename in hand we now check the details of the file to see if it is ‘the “finish file” and if so, then we can start the SSIS package to load 'the spreadsheet data. This is why we now call ParseIndividualFile ParseIndividualFile(entry.FullName) Next entry |

As we shall note above, the “ParseIndividualFile” subroutine has NOW been added to our source code.

The code for this subroutine is seen below:

|

1 2 3 4 5 6 7 |

'Now that the current file name has arrived within this sub routine, we need to check its ‘name to see if 'it is "finish.txt". If it is, then the following IF END statement is executed ELSE we ‘continue the loop. If file_name.ToString = "C:\SQL Shack\MultipleExcelFileLoad\finish.txt" Then Process.Start("C:\SQL Shack\MultipleExcelFileLoad\Go.bat") ' lstFiles.Items.Add(Now.ToString() & " Processed " & file_name) End If |

Once the trigger file arrives, its name will be ‘finish.txt’ and it will therefore be permitted to enter the IF / END loop. Whilst in the loop, a system process is started up and we pass as parameter the name of a Windows DOS batch file (Go.bat) which is to be executed. We shall discuss the contents of this batch file in a section below.

NOW!! , there should only be one trigger file. Once the file has been found, we could then code a breakout from the loop, as any further processing would be pointless. This exercise is left to the reader.

When any NEW file arrive in the directory

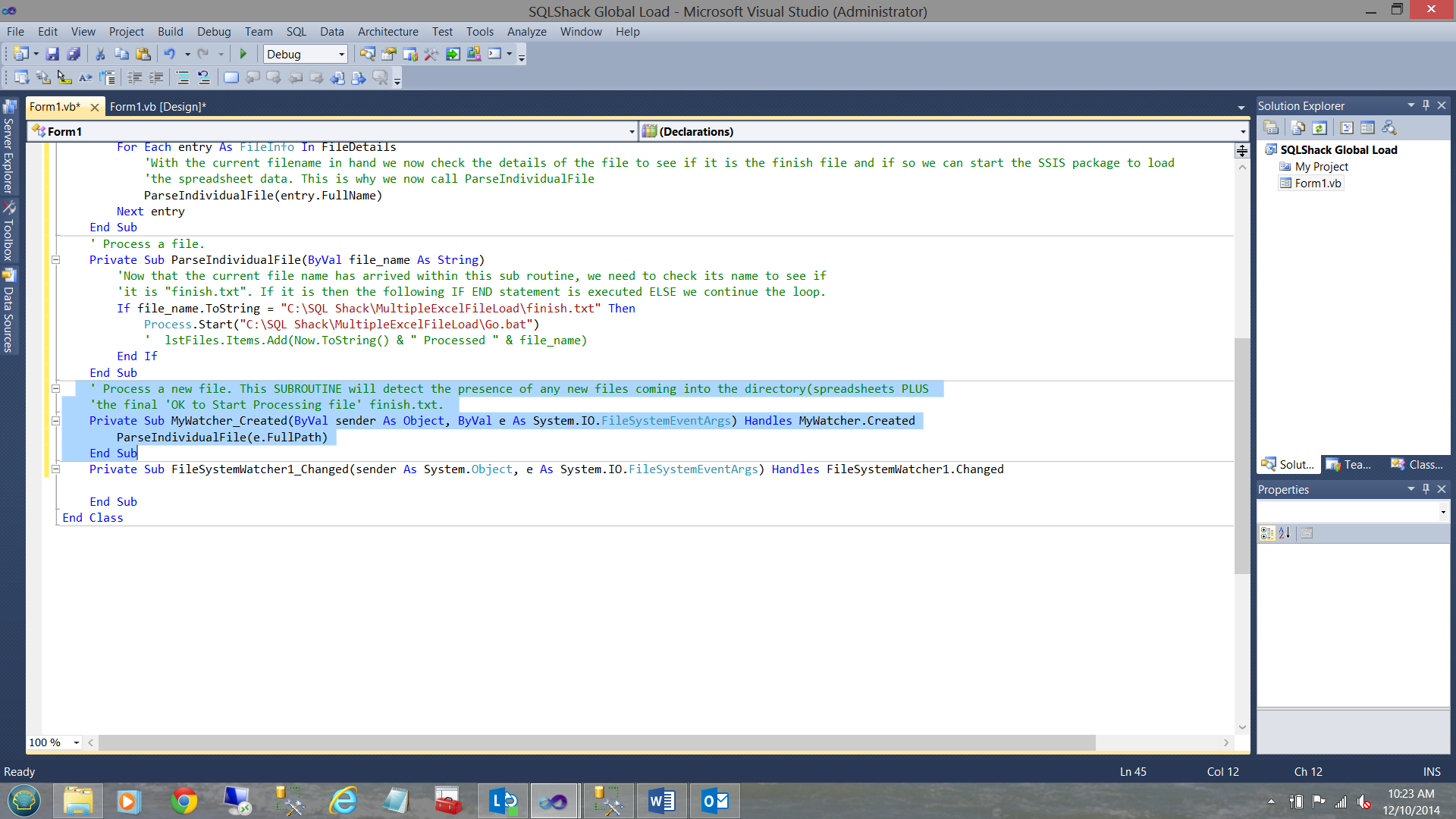

Thus far, we have failed to cater for newly arriving files. In other words the code that we have created (thus far) caters for files that existed within the directory when the VB application starts or prior to the next run. More than likely we wish to start the VB application running and wait for the file(s) to arrive.

This said, we now add another subroutine which ‘reacts’ to the “created event” of the “FileSystemWatcher”. After all, when a file is deposited into the directory is has in fact been “created”. This includes new spreadsheets etc.

The code for this subroutine is shown below.

Once again a call is made to the “ParseIndividualFile” subroutine to “see” if this is the trigger file.

The contents of the finish.txt file may be any text that you wish to place in the file. The text is a space filler and is irrelevant to any processing (see below):

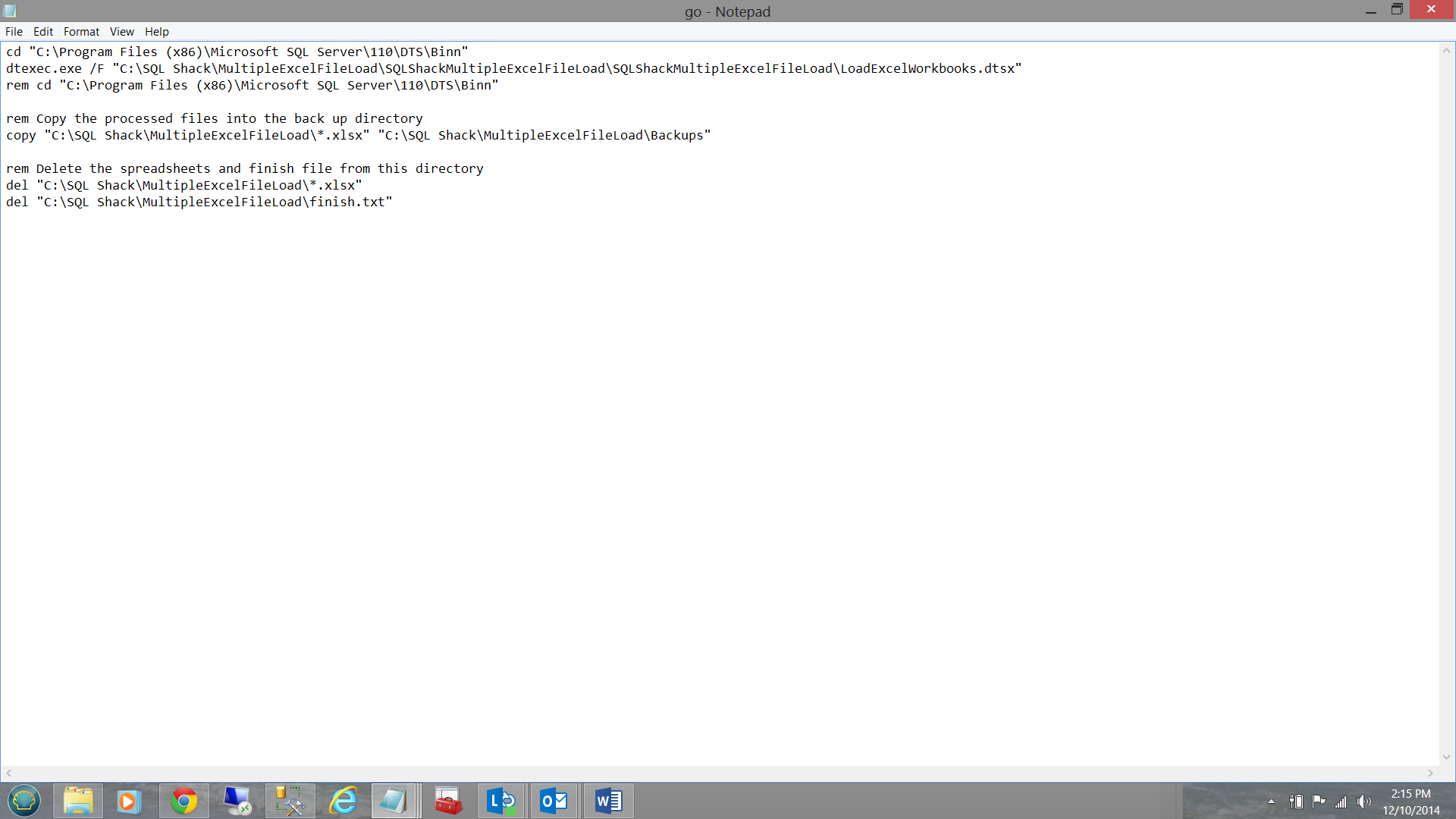

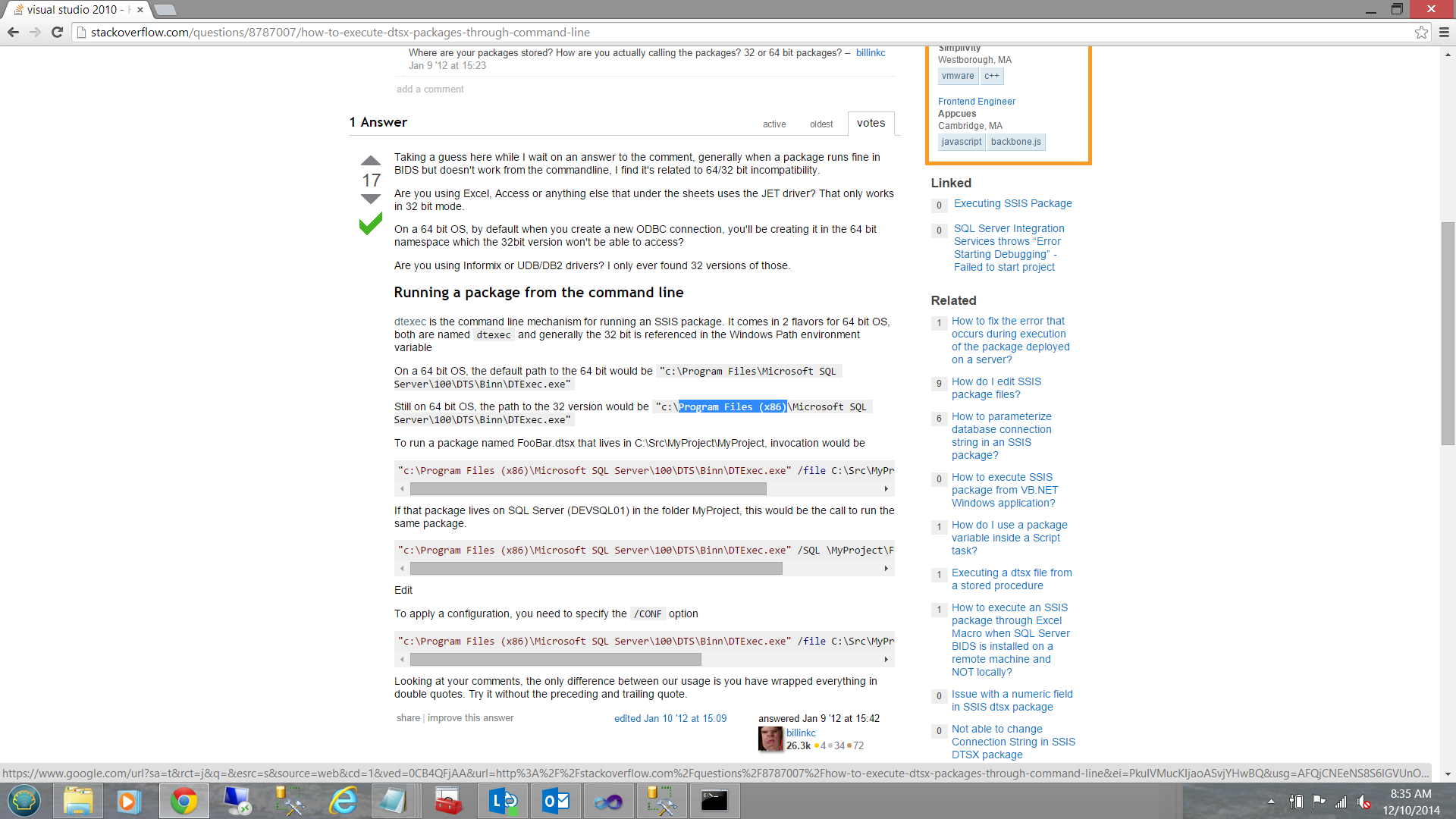

The contents of Go.Bat (the Windows DOS batch file) is as follows:

As my version of Office 2013 is a 32 bit version, I must use the 32 bit version of dtexec.exe which is located in the SQL Server subdirectories under ‘program files (x86)’ directory. Note that if you attempt this with the 64bit version of dtexec.exe (and your version of Office is a 32 bit version) you will generate a runtime error (see the Addenda below).

After the package has been executed, we copy the spreadsheets out of the production directory to a backup directory for further processing (however this is out of the scope of the present discourse).

Let us give it a test drive

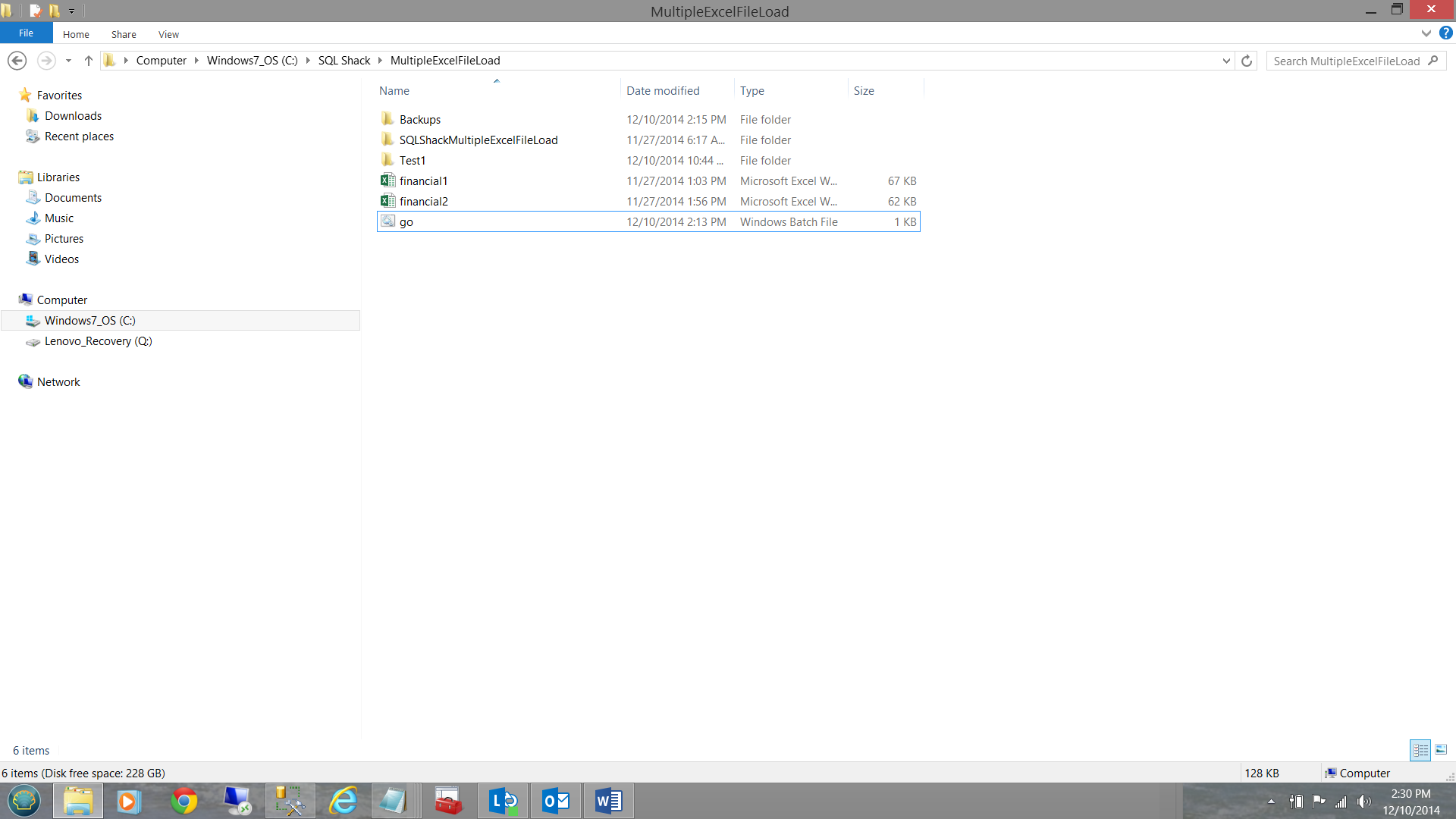

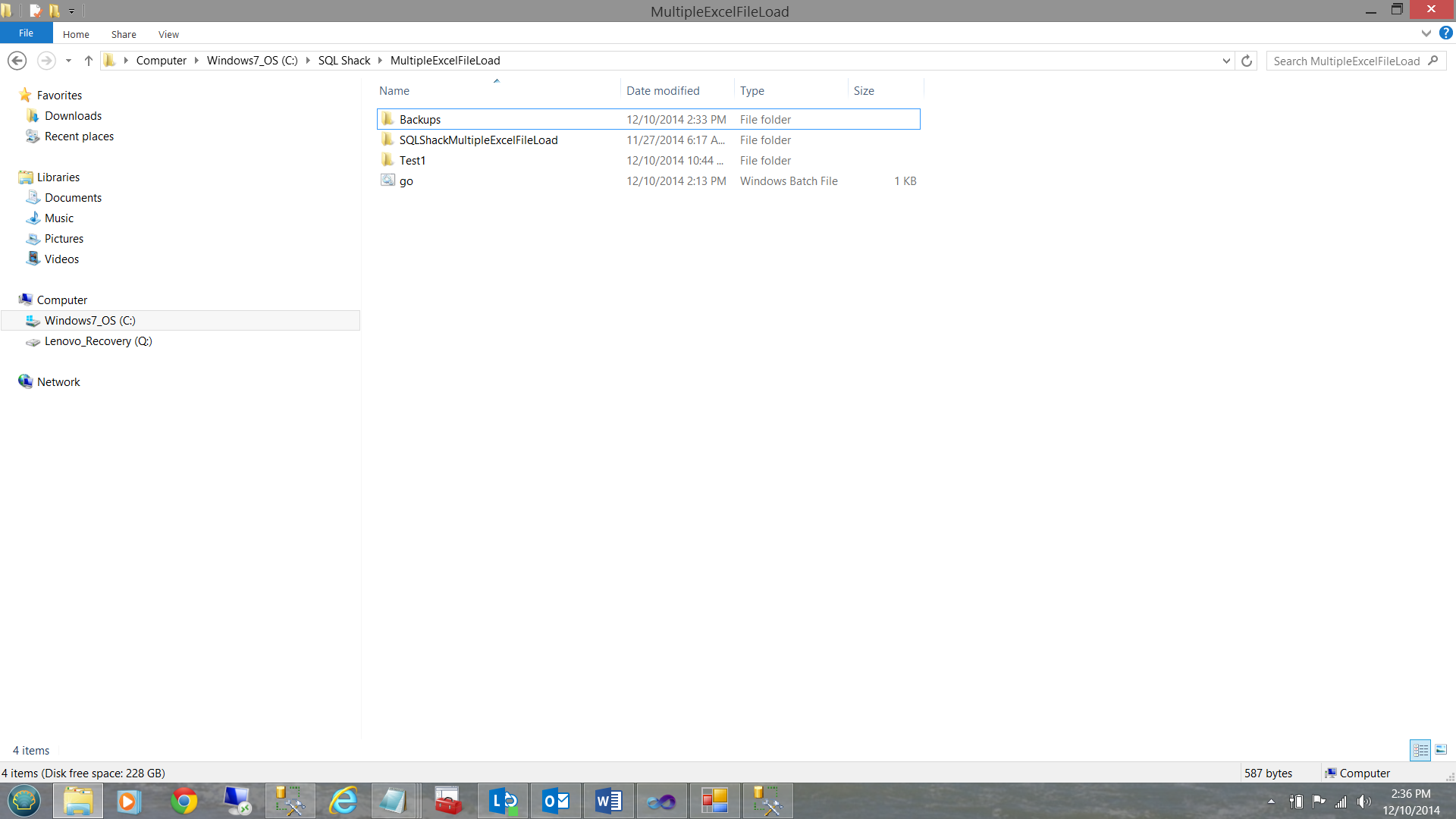

To begin, our production directory appears as follows:

Let us start our Visual Studio Project.

Now let us drop the “finish.txt” file into the subdirectory

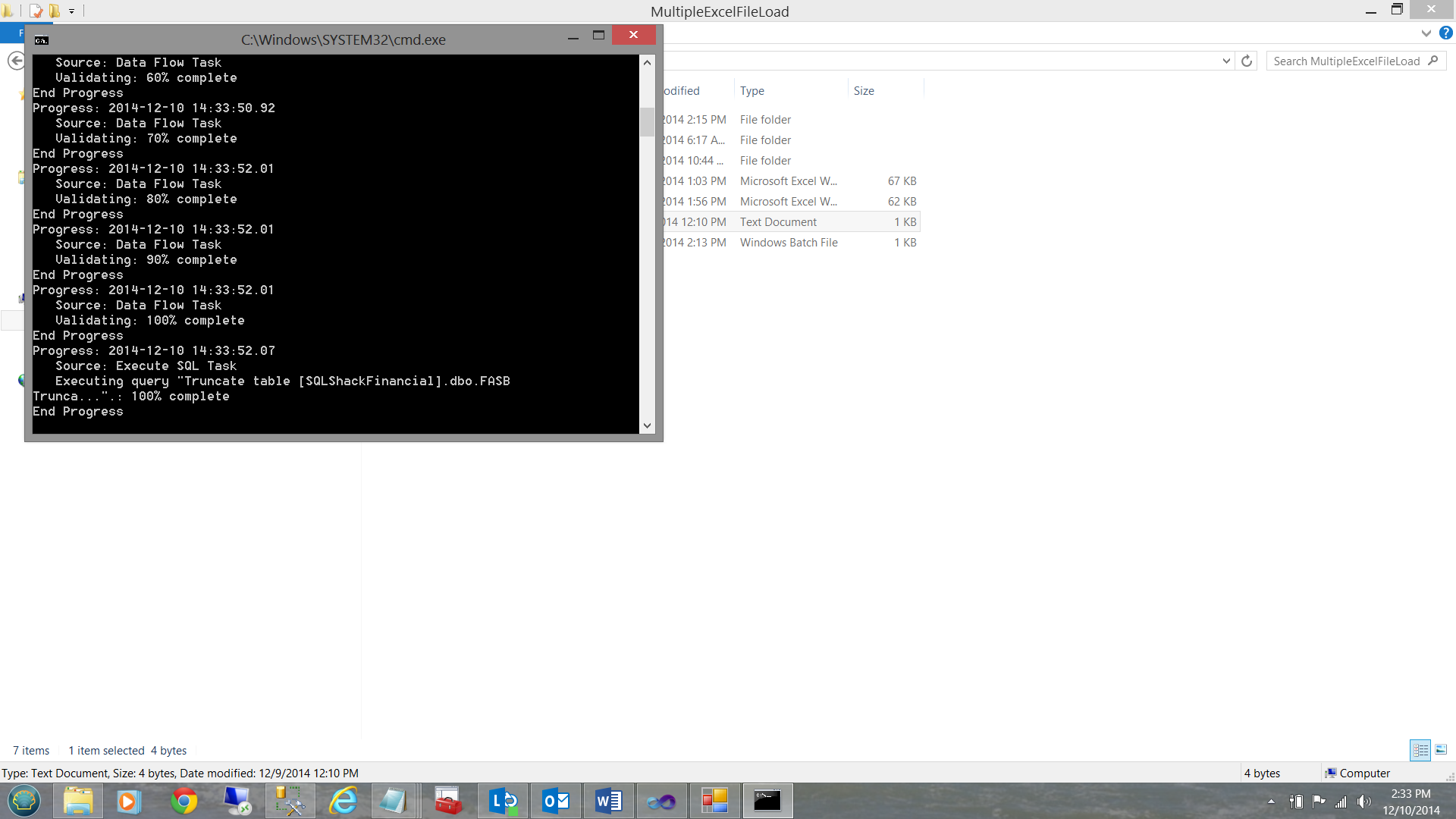

Note that because the trigger file “finish.txt” has arrived that “Go.bat” was executed (see above).

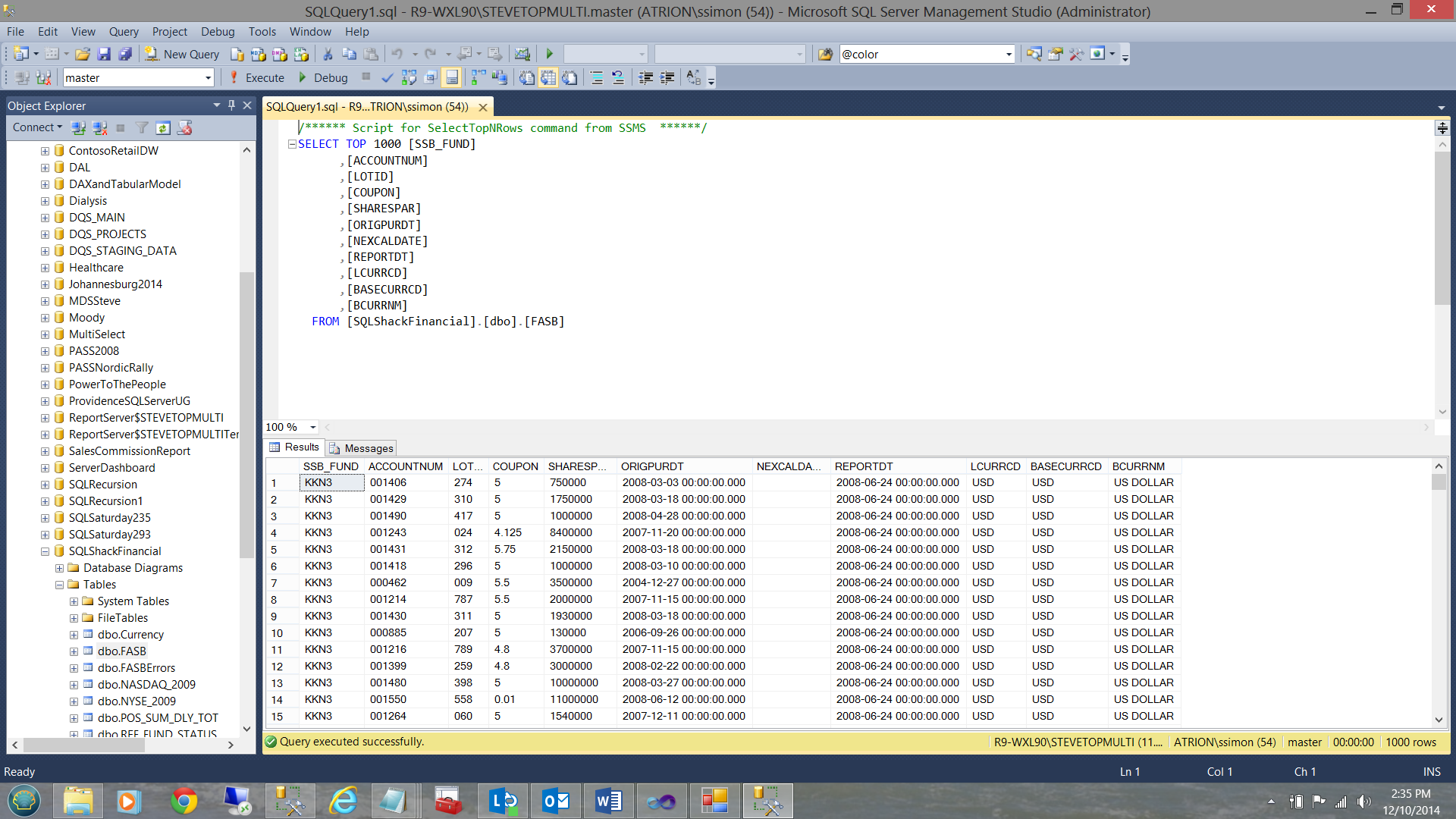

The SQL Server tables within the SQLShackFinancial database have been populated (see above).

Meanwhile back in the production directory things have definitely changed.

Note that the spreadsheets and the finish /trigger file are missing.

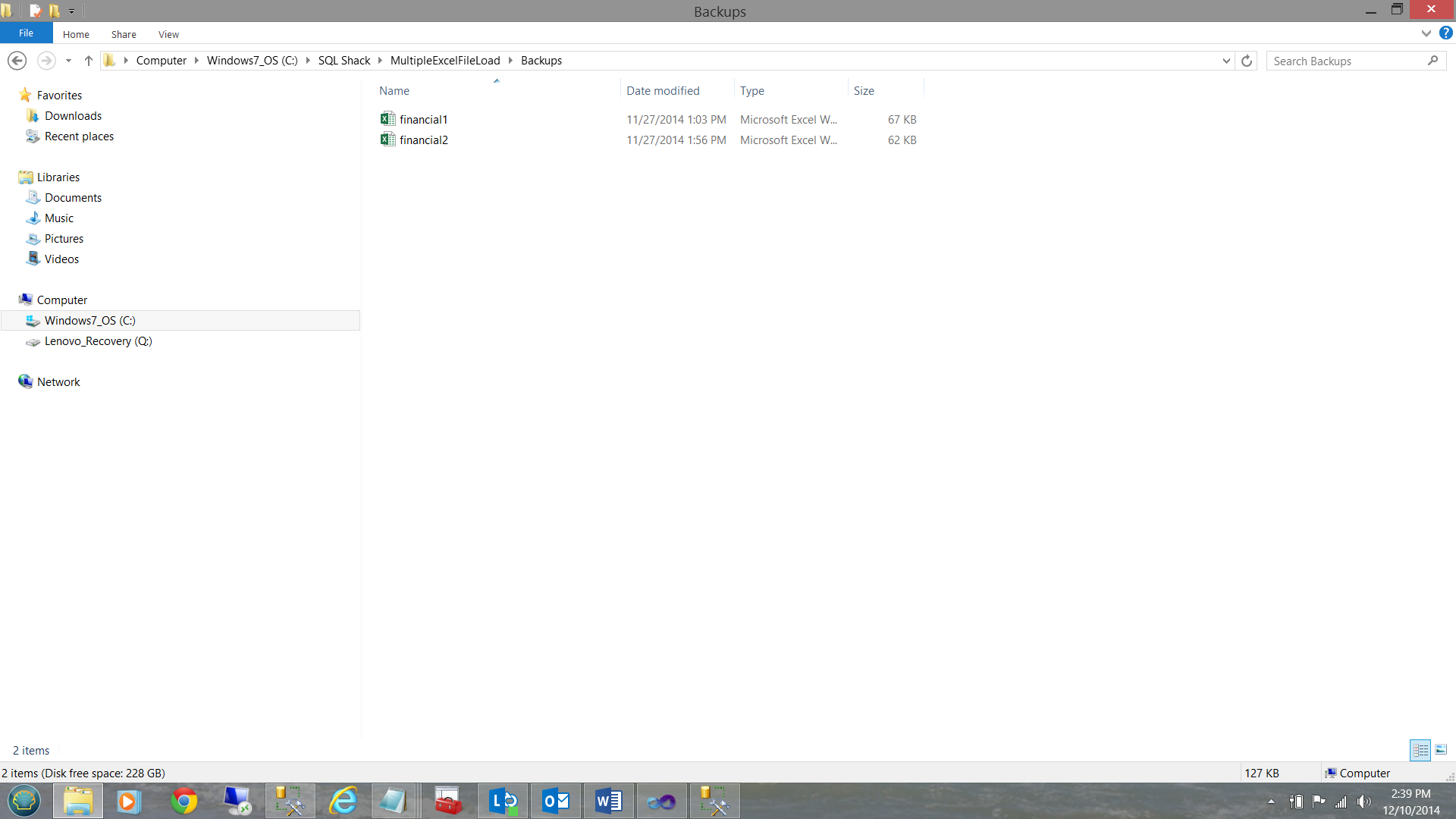

The spreadsheets have been moved to the ‘Backups’ directory (see below)

..and the finish / trigger file has been deleted.

This said we have achieved what we started out to accomplish.

Conclusions

A few weeks back, we saw how the data from multiple Excel spreadsheets could be loaded into our SQL Server database, utilizing SQL Server Data Tools. At the end of the article, I mentioned that we could automate the load. If we knew with certainty that all of the data would be present in the production directory at a given time, we could have run the load easily enough via the SQL Server Agent.

In reality in many 24 x 7 enterprises with offices around the world, have the ‘arrival times’ of their data files varied AND most of these firms wish to process ONLY when all of the data has arrived.

The processing method discussed above can help you achieve your end goals.

As always, should you wish the code for this exercise, please let me know.

In the interim, happy programming.

Addenda

Running in 32 bits mode requires a change to the call batch file.

- Reporting in SQL Server – Using calculated Expressions within reports - December 19, 2016

- How to use Expressions within SQL Server Reporting Services to create efficient reports - December 9, 2016

- How to use SQL Server Data Quality Services to ensure the correct aggregation of data - November 9, 2016