A couple of weeks ago I got this idea to capture twitter feeds and store it in a relational database structure. I wanted to be able to do some trend analysis, to see what days certain hashtags get used more than other as well as to be able to go back to certain tweets and see what I can learn from them. I tried to get the Twitter API to work for me, but had no luck. So I came across Microsoft Flow which seems to make this process very easy.

Using Azure SQL Database seemed like a no brainer, by using Azure I have a system that is always on without me worrying if my Database is online.

Discussion

I do not have a SQL Server environment that I could play with that is always accessible. For the most parts I have a couple of VM’s on my laptop that I use as my sandbox area. This led me to the decision to use Azure SQL Database as I don’t have to setup a Dynamic DNS for my SQL Instance to be accessible over the internet as my home IP changes daily and keep my laptop on 24/7.

I came across Microsoft Flow from a tweet that someone I follow retweeted, and started to play around to see what this new product is capable off. The first thing that caught my attention was the twitter connector, so I connected my twitter profile to the connector which was surprisingly easy. The most difficult part of the process was to get my Azure SQL Database connected to the SQL connector and then I had to create the table structure for the Twitter feeds to get inserted into. Twitter has great documentation around this if you want to capture tweets in a No-SQL environment, but could not find a lot of information on creating the tables in SQL Server.

Considerations

There is a limit on how many tweets you can pull every hour, it seems to be in the range of about 500 tweets an hour. I did try to contact Microsoft to define the limit, but at the time of this article I had no response yet (I am also not sure if it is a Twitter API limit). Also, you have don’t have to create a very big Azure SQL Database as it is small inserts that occur every few minutes or so.

Prerequisites

Microsoft Azure SQL Database

Microsoft Flow Account

Objective

In this article, we will create the tables needed to capture twitter information and then create a Flow to capture tweets into a database that we have created on an Azure SQL Server.

Assumptions

We will be working with the assumption that you already have an Azure SQL Database up and running. For this, you do not need to use and Azure SQL Database as you can use any instance of Microsoft SQL Server as long as you can access it over the internet.

Solution

First, we will create a table in SQL Server to prepare for some basic twitter data.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

CREATE TABLE [dbo].[Twitter] ( [id] [INT] IDENTITY(1, 1) NOT NULL , [RetweetCount] [INT] NULL , [TweetText] [NVARCHAR](500) NULL , [TweetedBy] [NVARCHAR](100) NULL , [CreatedAt] [NVARCHAR](100) NULL , [TweetID] [NVARCHAR](50) NULL , [SearchTerm] [NVARCHAR](50) NULL , PRIMARY KEY CLUSTERED ( [id] ASC ) ); |

You will notice that I have specified TweetText to have a limit of 500 instead of the 140 characters that twitter allows, this might seem like overkill. Twitter has made some recent changes to their character limit where they no longer include URLs among other things in the character count. To provision for longer text’s I decided to go with 500. The SearchTerm will be used to split our tweets if we have multiple different HashTags that we want to capture.

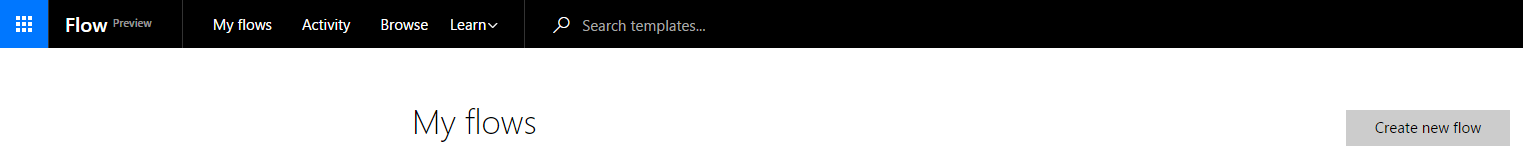

Next, we will create a flow with Microsoft Flow to connect to our Azure SQL Database and Twitter. You will have to create an account here. Once your account is active you can go to the “My Flows” tab in the top left-hand corner of the page and click on “Create new flow”.

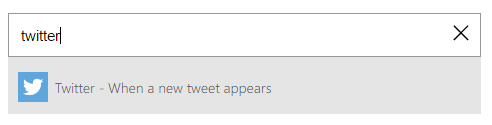

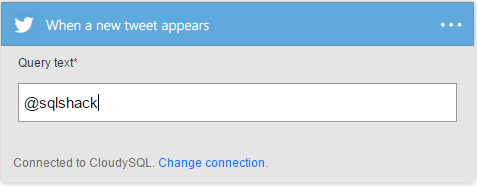

Next, you will see the first part of our flow, you can now search in the text box for the twitter connector as per the below image.

You will have to connect your twitter account to the Flow and enter a Twitter search string, you can read more on how to use the twitter search string here.

For this article, we will store everything that @SQLShack tweets about.

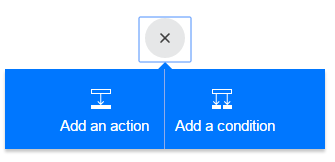

Now the fun part start where we will connect to our Azure SQL Database to our flow. You can click on the “+” just under the Twitter connector and select “Add an Action”.

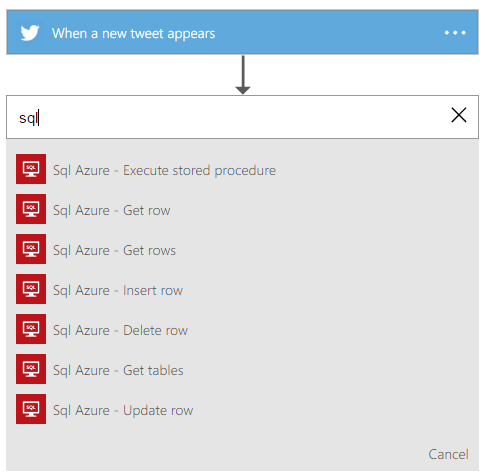

This will add an action to our flow, we need to search for the “Sql Azure – Insert Row” action. This will allow for the tweets that match our search text to be inserted into our Azure SQL Database once we have setup the connection and done all the required mappings.

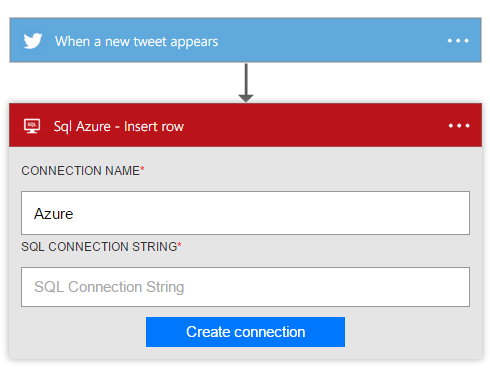

Once the action has been loaded into our flow, we need to setup the connection to our database. The connection string will look like the below code snippet.

|

1 2 3 |

Server=[ServerName].database.windows.net;Database=[Database];User Id=[Username];Password=[Password] |

You can enter any name for the connection you like.

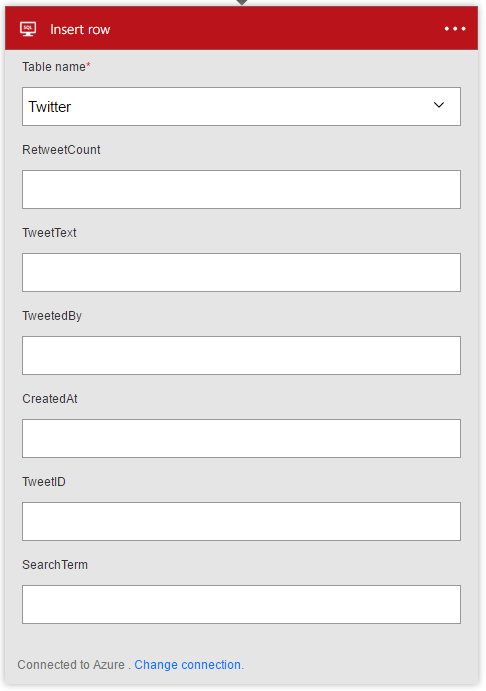

Once all of the required fields has been entered we can click on the “Create Connection” and select the table we have created earlier on in the drop down. This will load the table schema into the flow, we are now ready to start with the mappings to our table. It takes a few second to load, while it is busy it seems like nothing is happening.

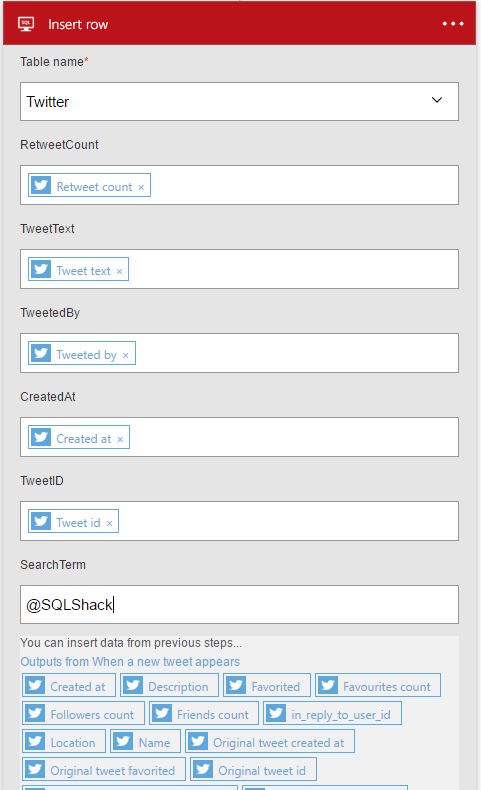

Once your connector has loaded the table schema you should see something like the above image. We now need to click on each of the above fields and assign the Twitter values to our table.

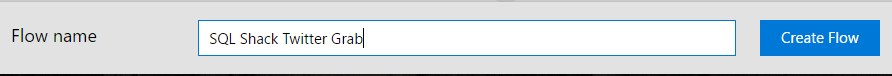

As mentioned earlier our SearchTerm field is free form text and this is where we enter our search term we defined in our Twitter connector in our case we decided to go with “@SQLShack”. We are now done and can give our Flow a name and create it.

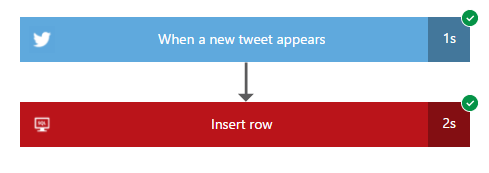

After we have created our flow we can monitor the flow in the live window which represents itself in the same way as SSIS where you can see if everything works according to plan. The best way to test is to post a test tweet.

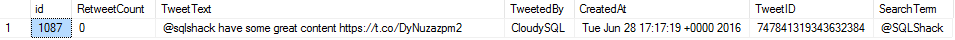

If both connectors complete successfully you will see our test tweet in our table that we have created earlier.

Now we will have to leave this on for a couple of days to enable us to do some more in-depth analysis of the tweets we are monitoring.

Final Thoughts

I am sure there are better ways to accomplish this outcome, but this was to show how easy Microsoft Flow is to work with and how easy it is to work with Twitter data. This is not an enterprise solution as we have a couple of restrictions like the poll limit which I am not sure if this is a Twitter API restriction or a restriction in Microsoft Flow. I would like to do some word analysis to create a predicted emotion/feeling on the topics that I am capturing, like for instance to see how many people think the product is great or what percentage of people believe that a product or service is bad.

- Running with running totals in SQL Server - July 29, 2016

- The new SQL Server 2016 sample database - July 22, 2016

- Storing Twitter feeds with Microsoft Flow in Azure SQL Database - June 29, 2016