Introduction

As a person who has always enjoyed finding new and innovative ways to perform tasks more efficiently and effectively with SQL Server, I have endeavored to document some of the more ‘innovative’ ways and means of doing these things in our SQLShack ‘get-togethers’.

Today, we shall be looking at one of my favourites, the ‘Data Access Layer’, not to be confused with ‘Data Access Layers’ from the Visual Studio world.

A Data Access Layer (hence forward referred to as a ‘DAL’) for all intents and purposes is a user defined table function. A DAL is capable of accepting arguments to its parameters and in doing so is able to process data for the end user, whether or not any arguments have been passed to its varied parameters. More on this in a few minutes.

A bit of history

When working at a client site around 5 years ago, I was working on a financial project where many of the business folks wrote their own queries. The client had offices around the world and oft times upon arriving at work in the morning (eastern USA), I would find that overnight there were queries from say the ‘Timbuktu’ office that were still running from the night before. In fact one query ran for twenty six hours and yielded four rows. Enough said!

Under these circumstances we had to find a way to continue permitting the business folks to create their own queries yet not to bring the system to its knees in doing so. Hence the birth of the DAL concept, a well-tuned suite of user defined table functions (tuned by the DBA’s with the end clients in mind).

Why not use a well-tuned view?

A DAL is capable of accepting arguments and passing these arguments to the DAL parameters.

Getting started

Whilst the client had hundreds of millions of rows of data in most tables, with between 100 and 300 fields in each, the sample that we shall be dealing with is considerably smaller. The important point being that what we are now going to look at, is truly scalable. This in my opinion is most important.

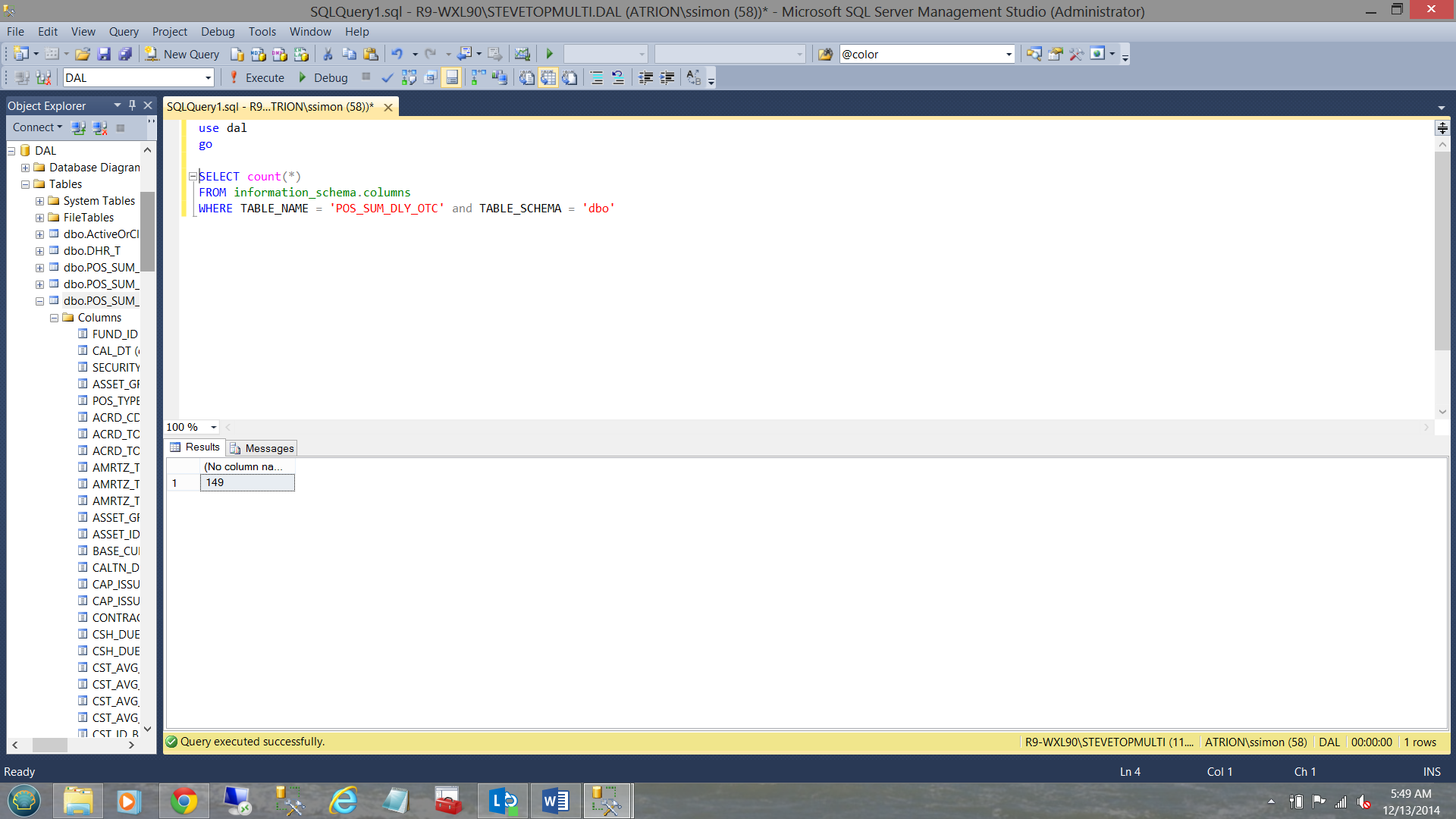

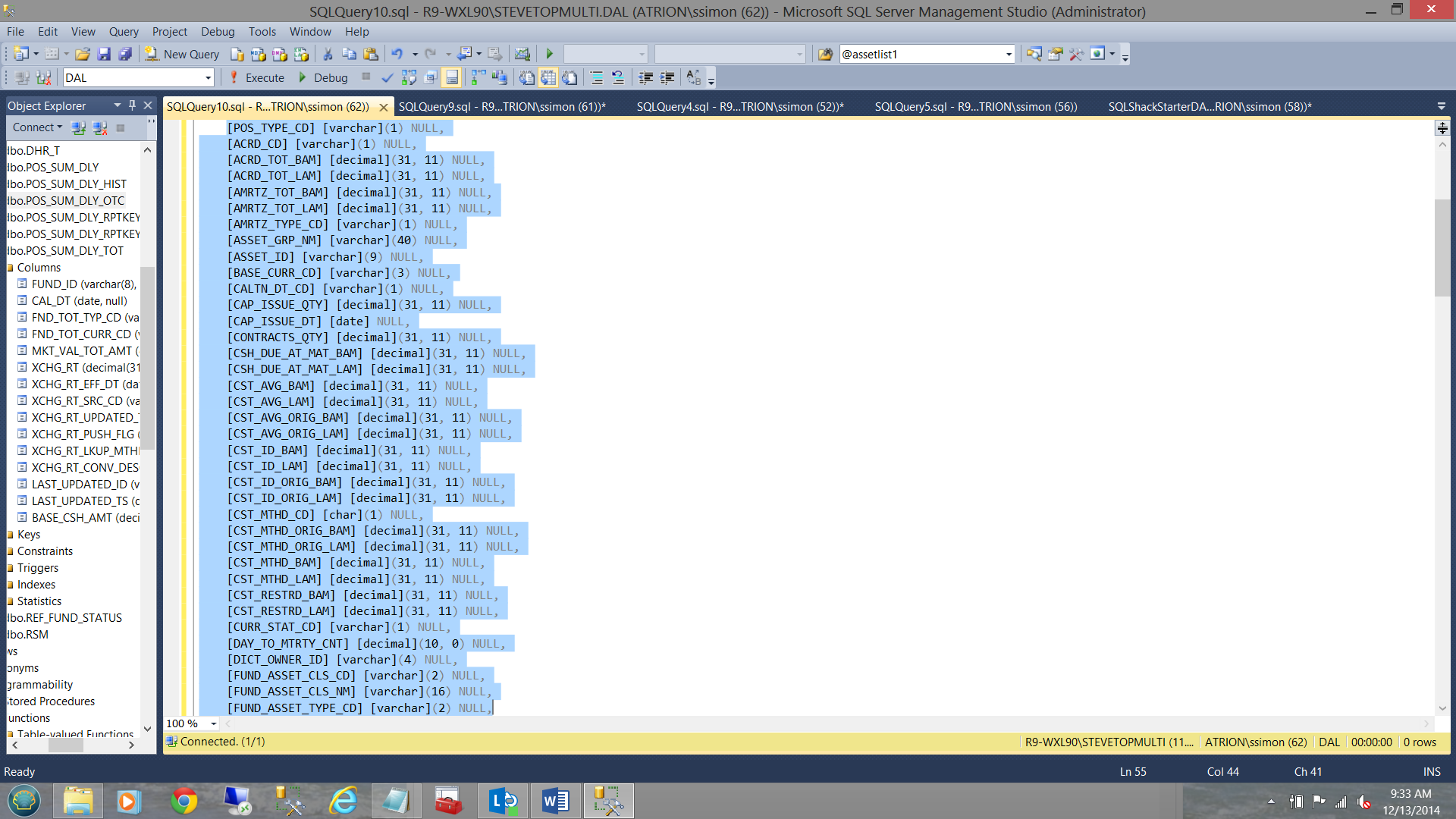

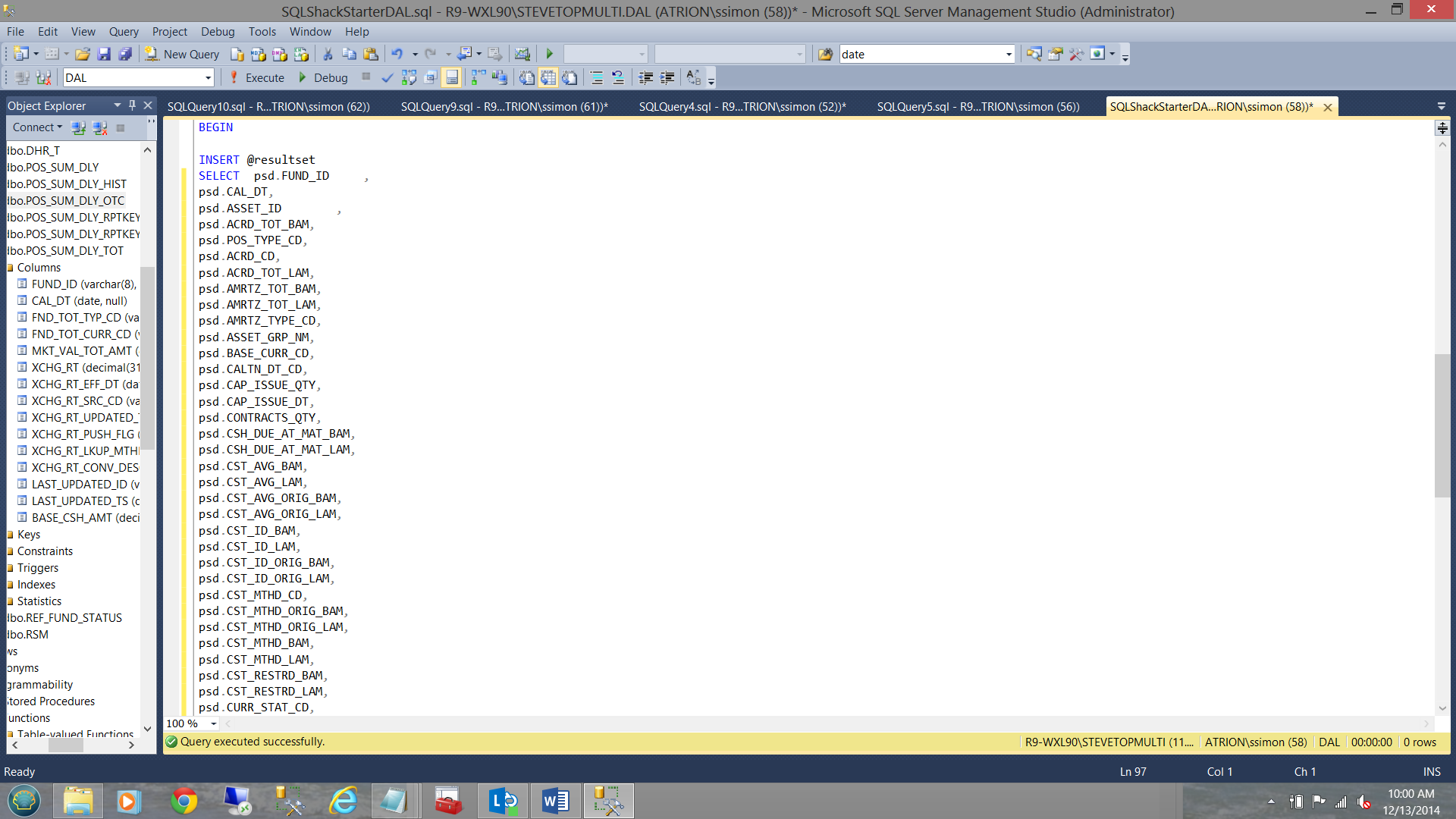

The table shown above is a great example. It has 149 fields (see the code above).

Having done the proper ‘due diligence’ with the user community and after having a myriad of meetings with the power users, we found that there was much commonality in the queries that they wrote.

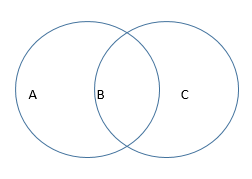

The simple Venn diagram (above) shows that one or more folks used column B and one (person/group) used only A and another (person/group) used only C.

However there were commonalties in their processes thus it made sense to combine fields A,B, and C into one DAL function.

Let’s construct our first DAL

We start by opening SQL Server Management Studio and by opening a new query.

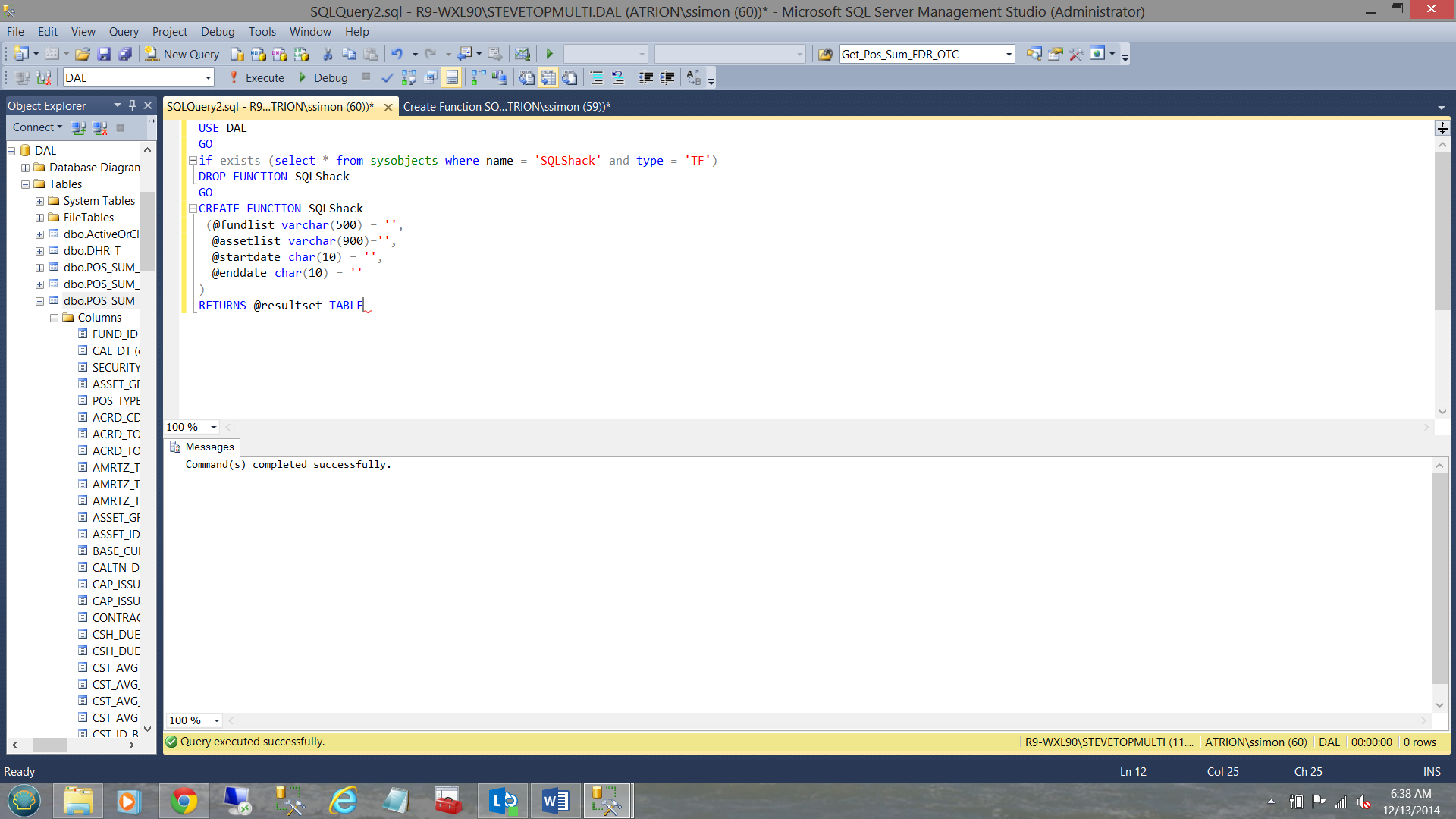

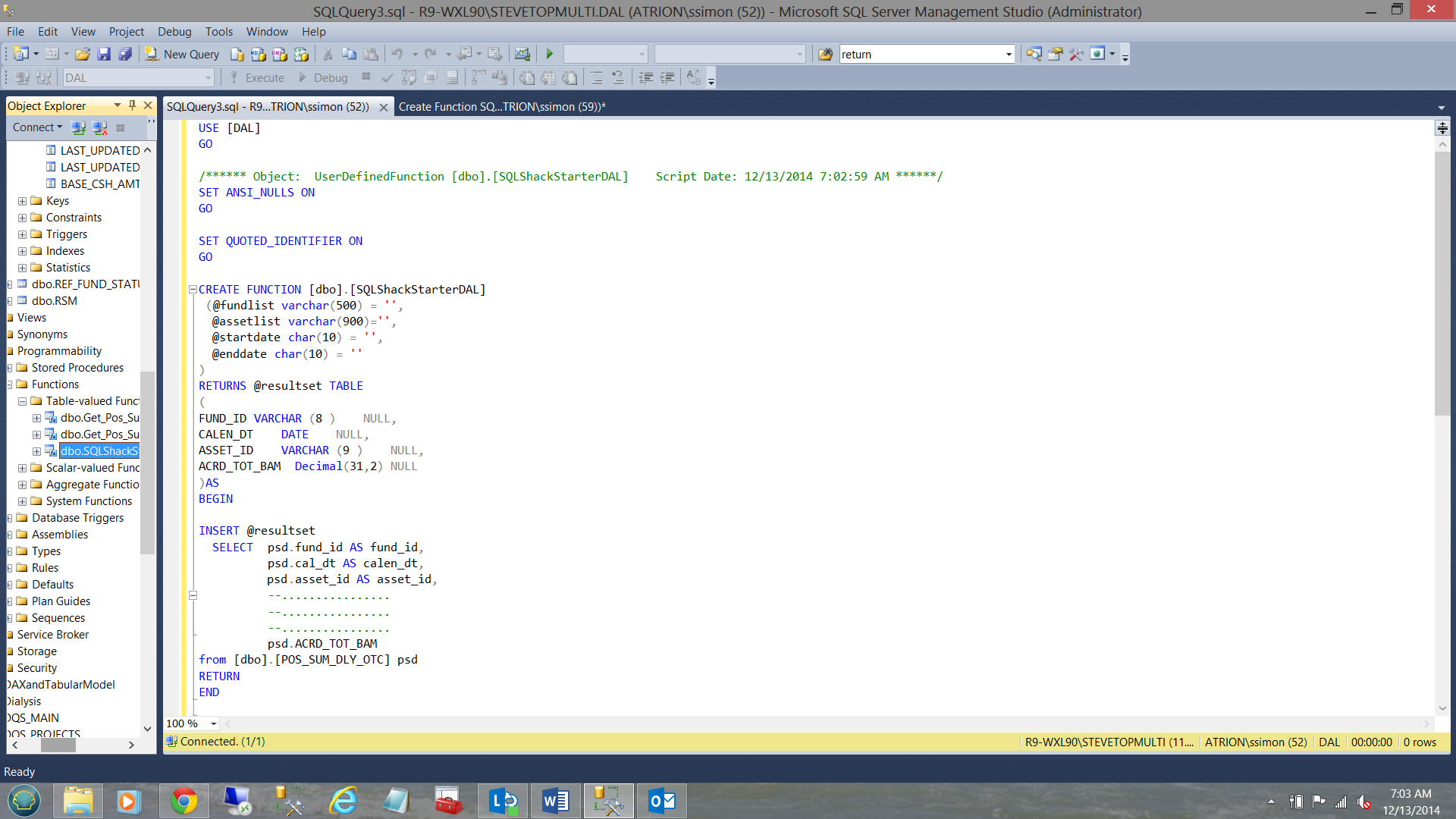

As the reader will note above we shall be using the DAL database. Note that we first check for the existence of an object with the name ‘SQLShack’ and TYPE = ‘TF’. Should there be such an object then we know that we are really going to re-create the object.

The astute reader will note the four (4) parameters that are passed through to our DAL:

- A comma delimited list of funds (none, one or more may be passed)

- A list of assets (the children of a ‘fund’). Once again (none, one or more may be passed)

- A start date parameter

- An end date parameter

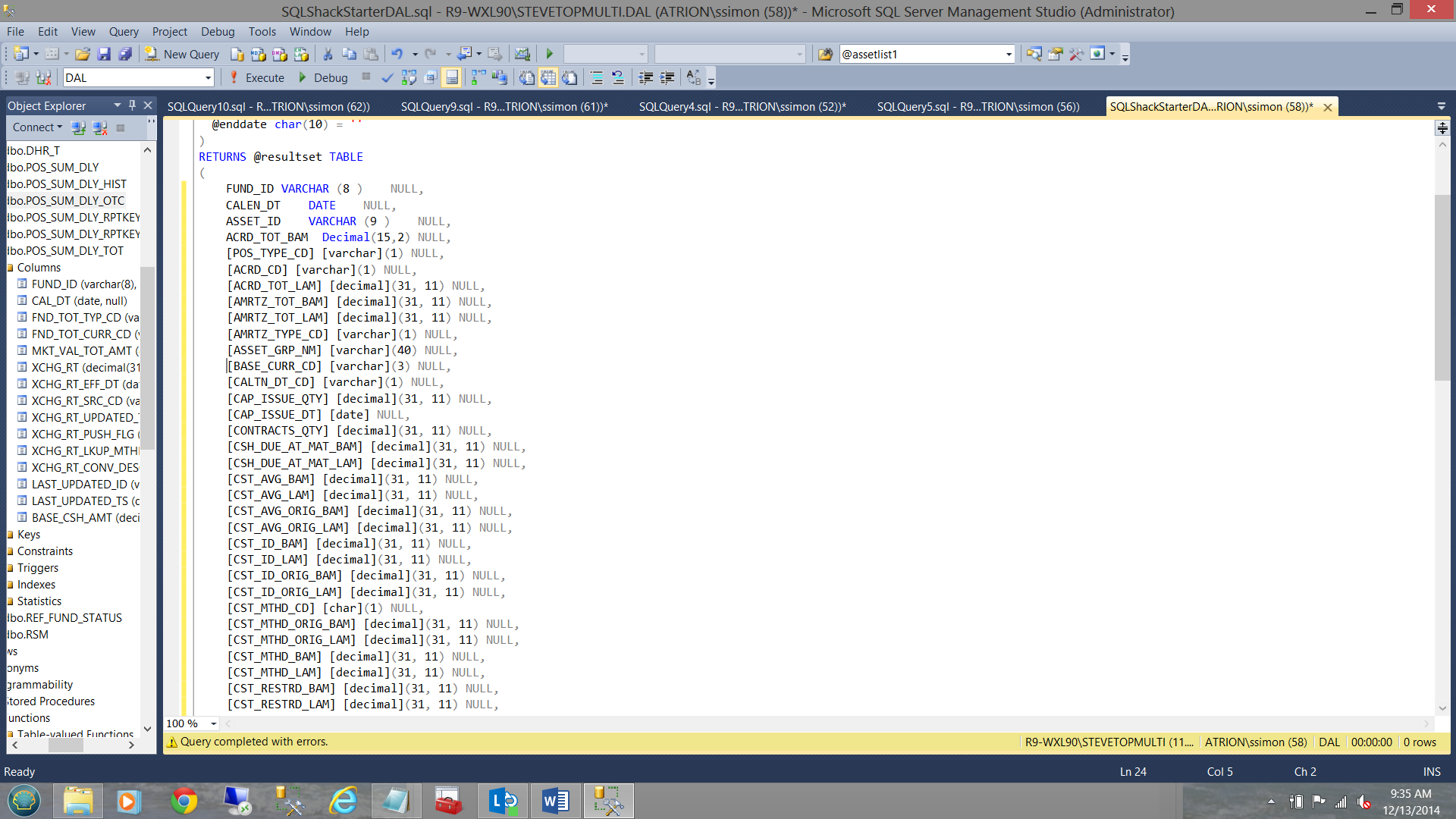

We also indicate that the function will return a table in the form of a table variable (@resultset).

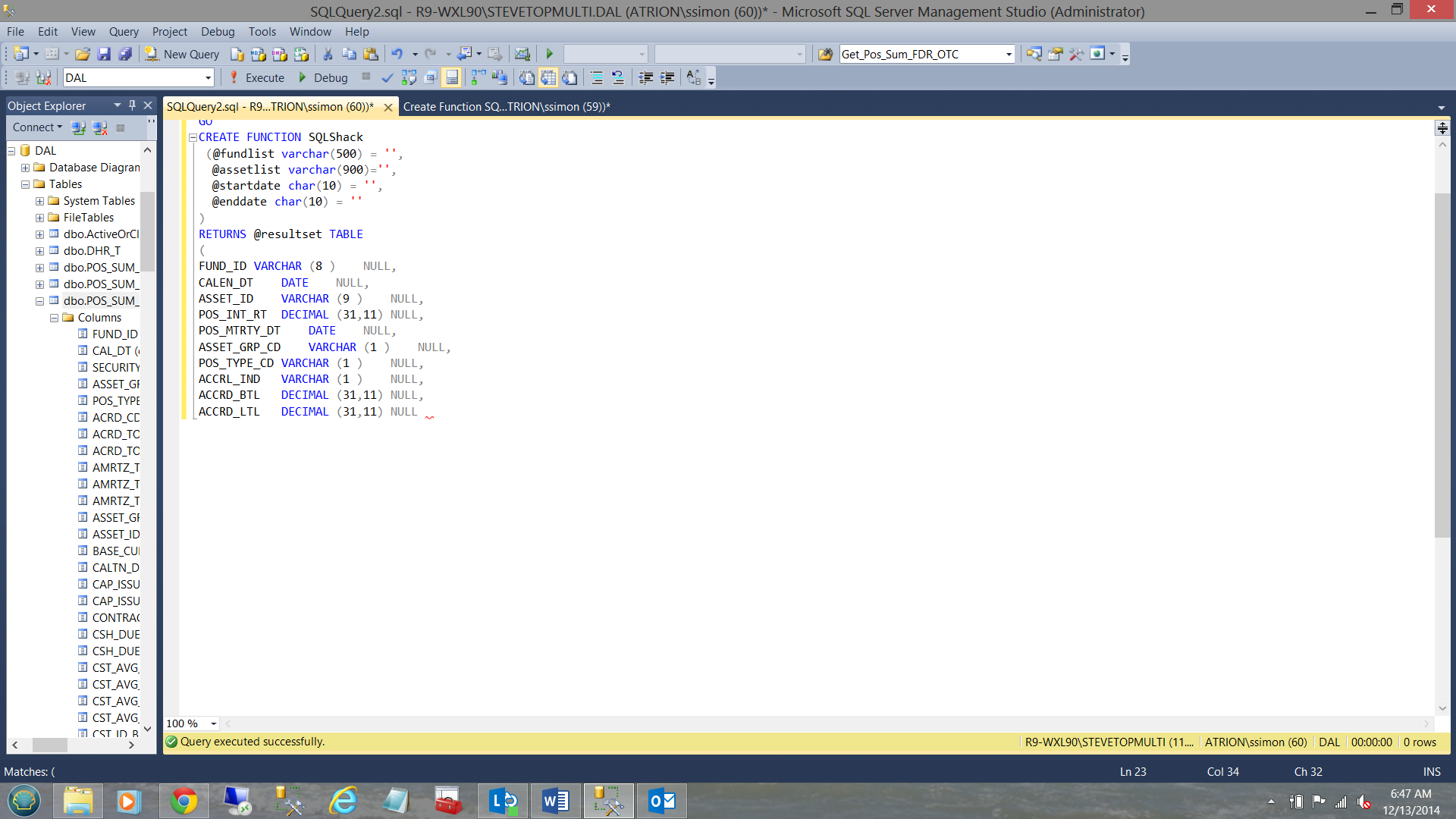

We are now in the position to define the output of our table function (see below). In other words the data that will be returned to our end users.

Whilst the actual SQLShack DAL has 100 + fields, we see a few of them in the screen shot above. The one piece that is still missing is the SQL code to pull the data.

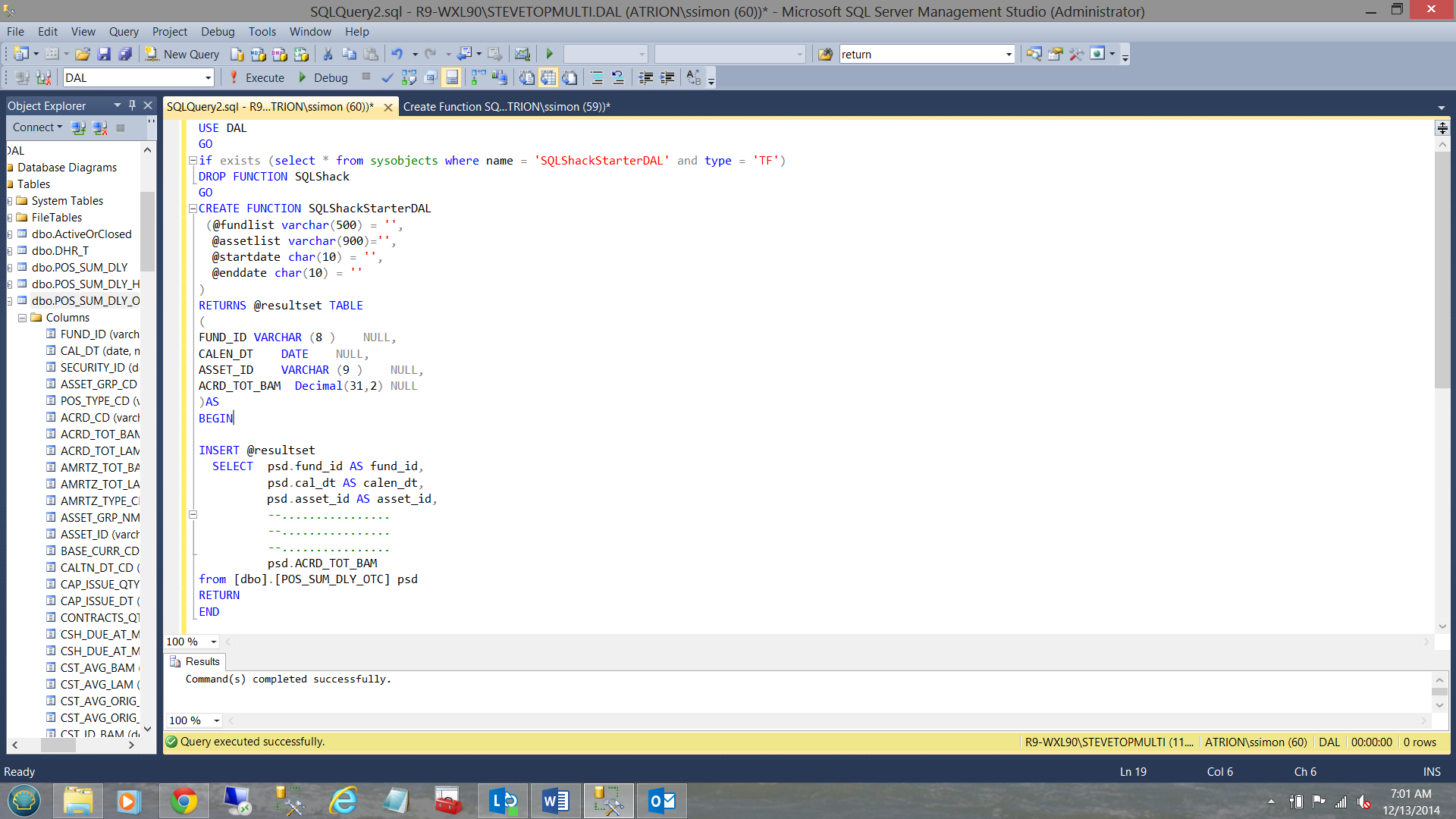

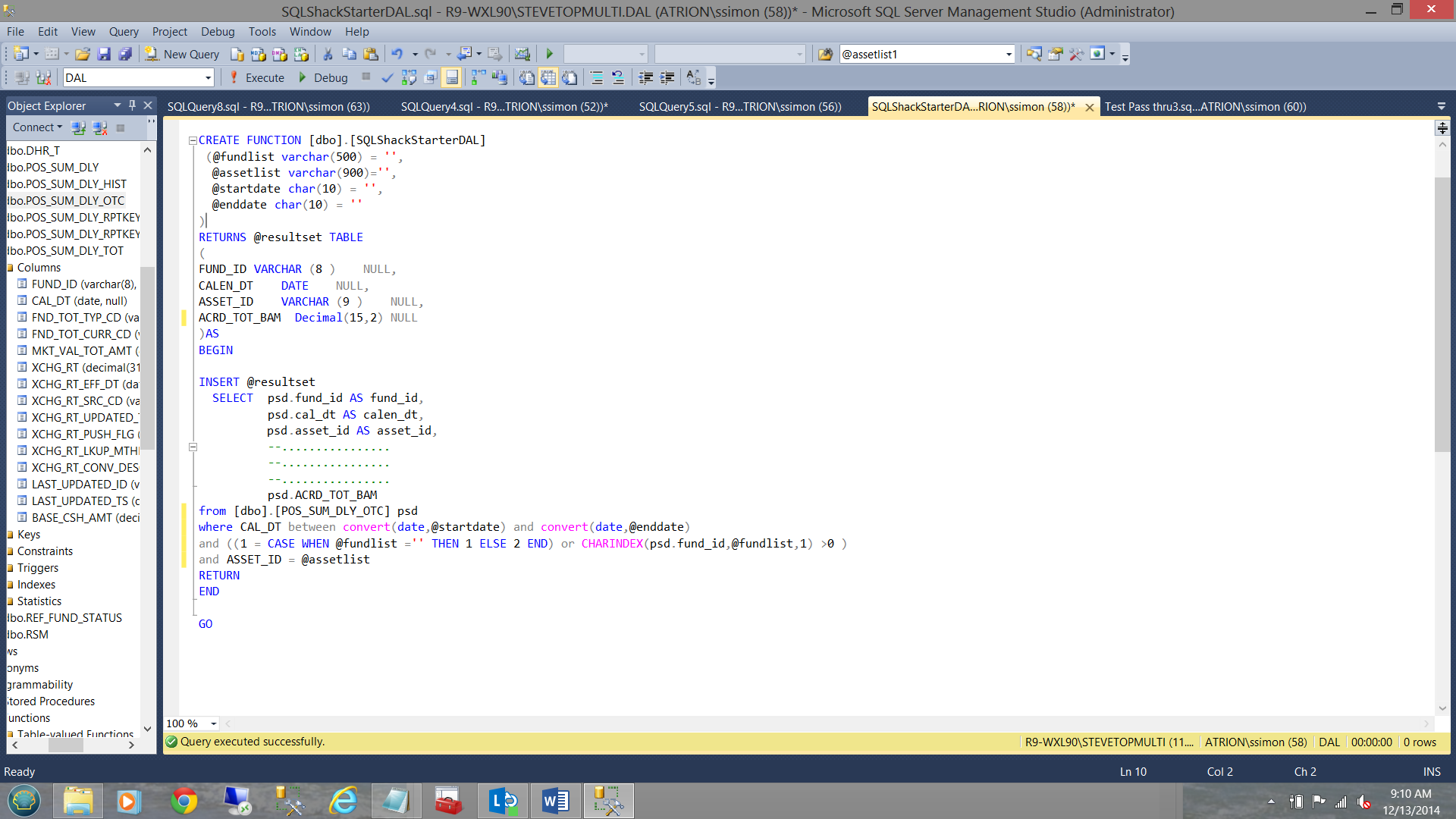

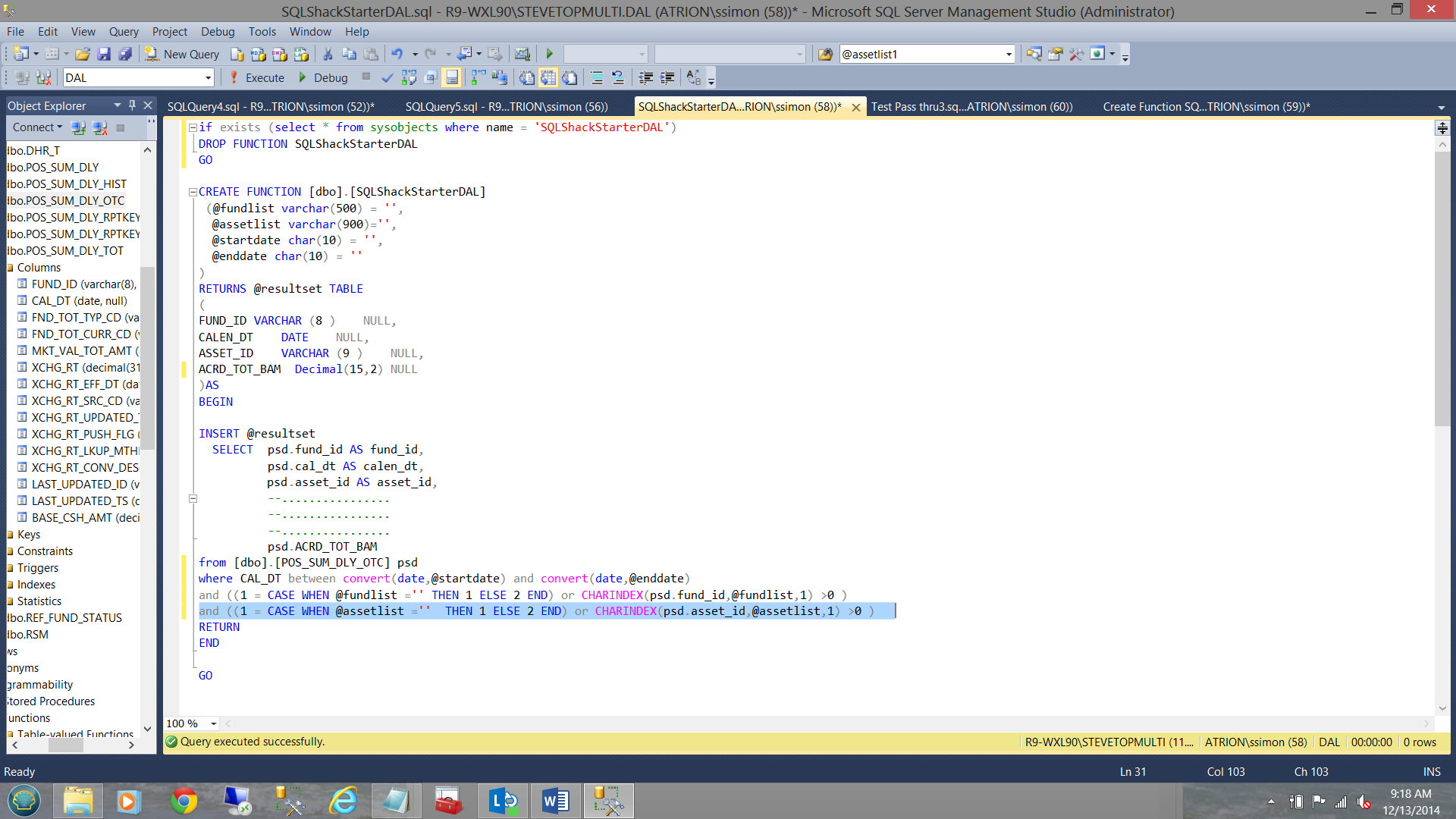

Our completed starter DAL (renamed “SQLShackStartedDAL”) may be seen below and once created will be found amongst the “Table-valued functions” (see below).

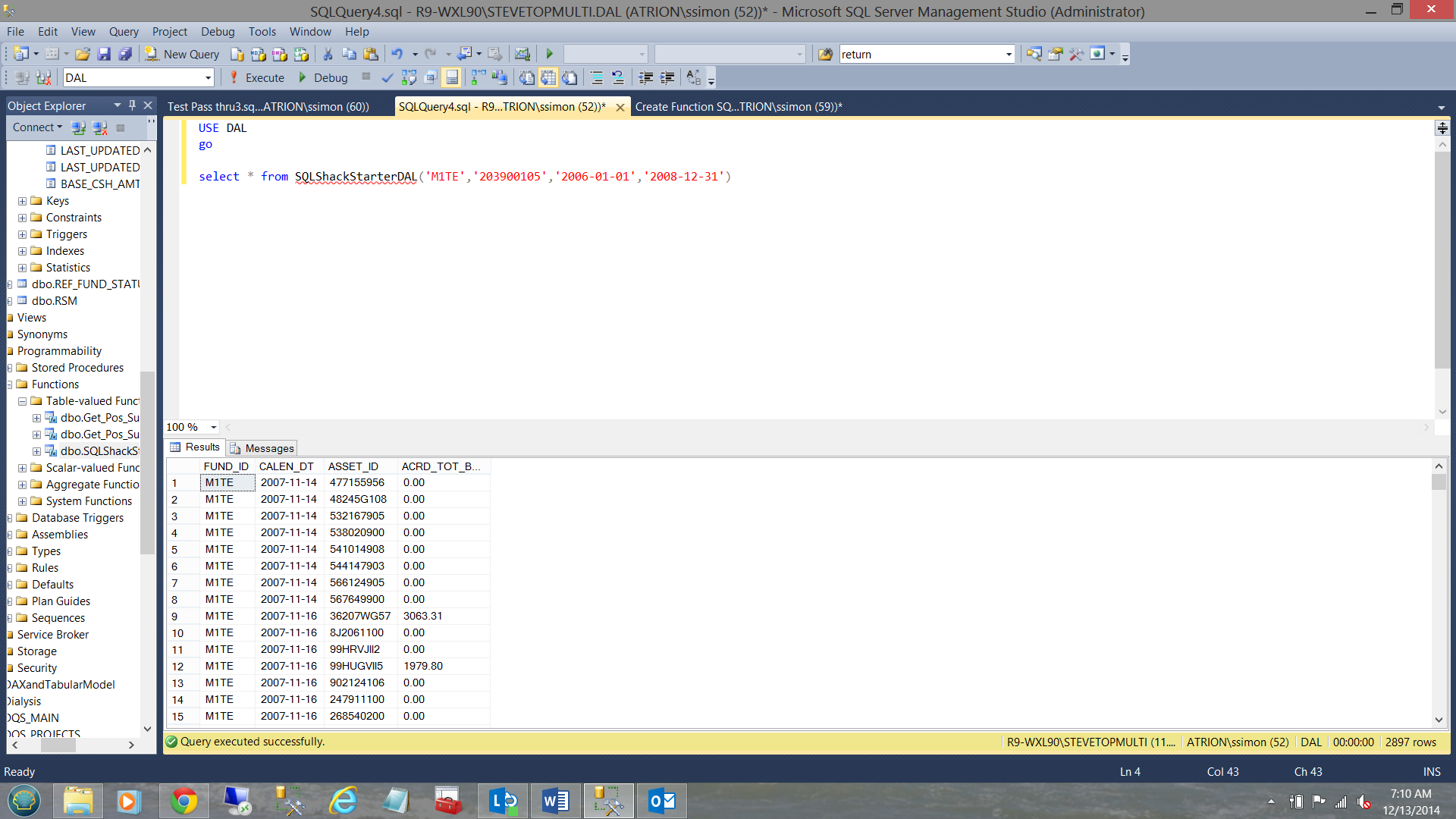

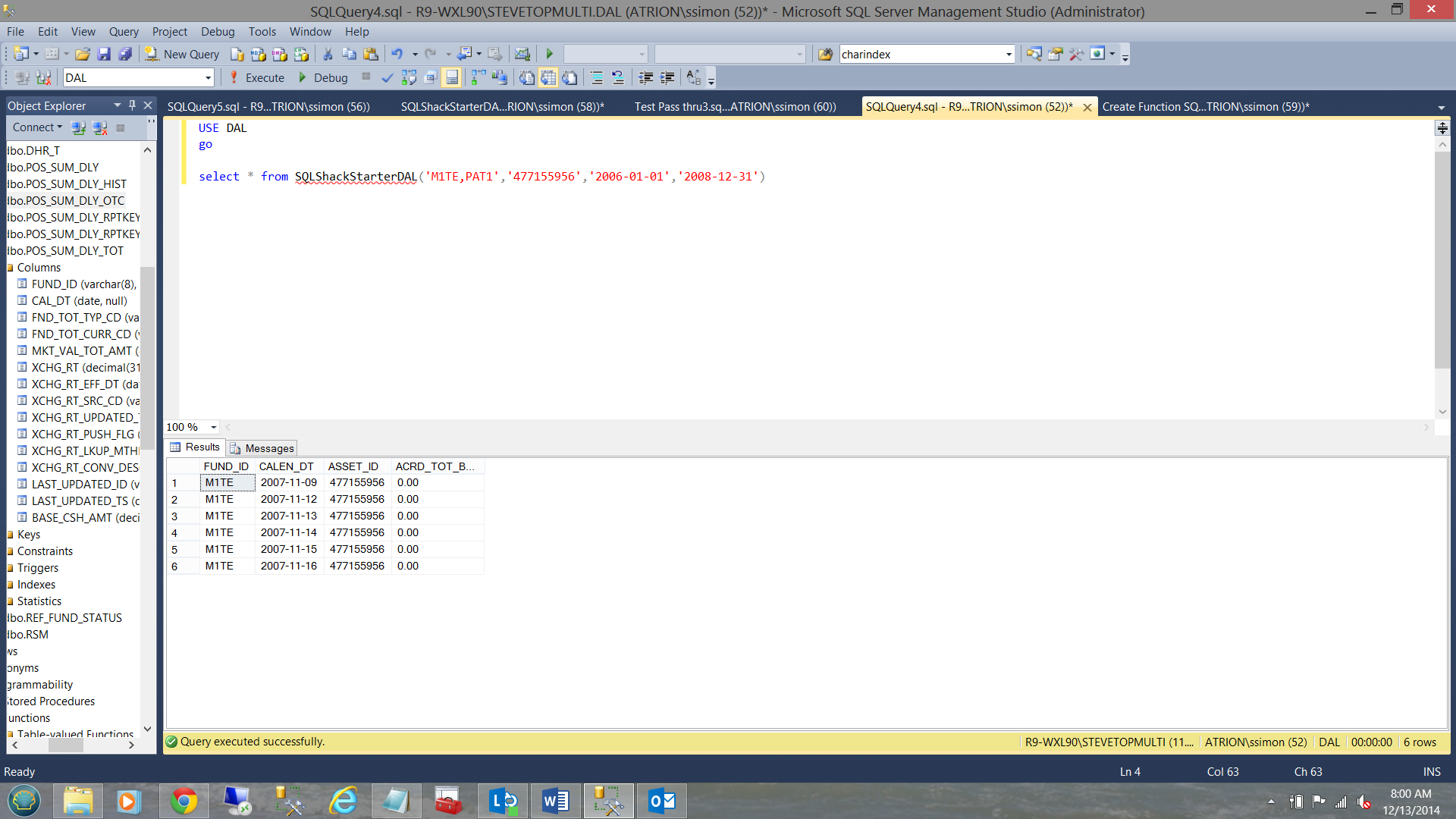

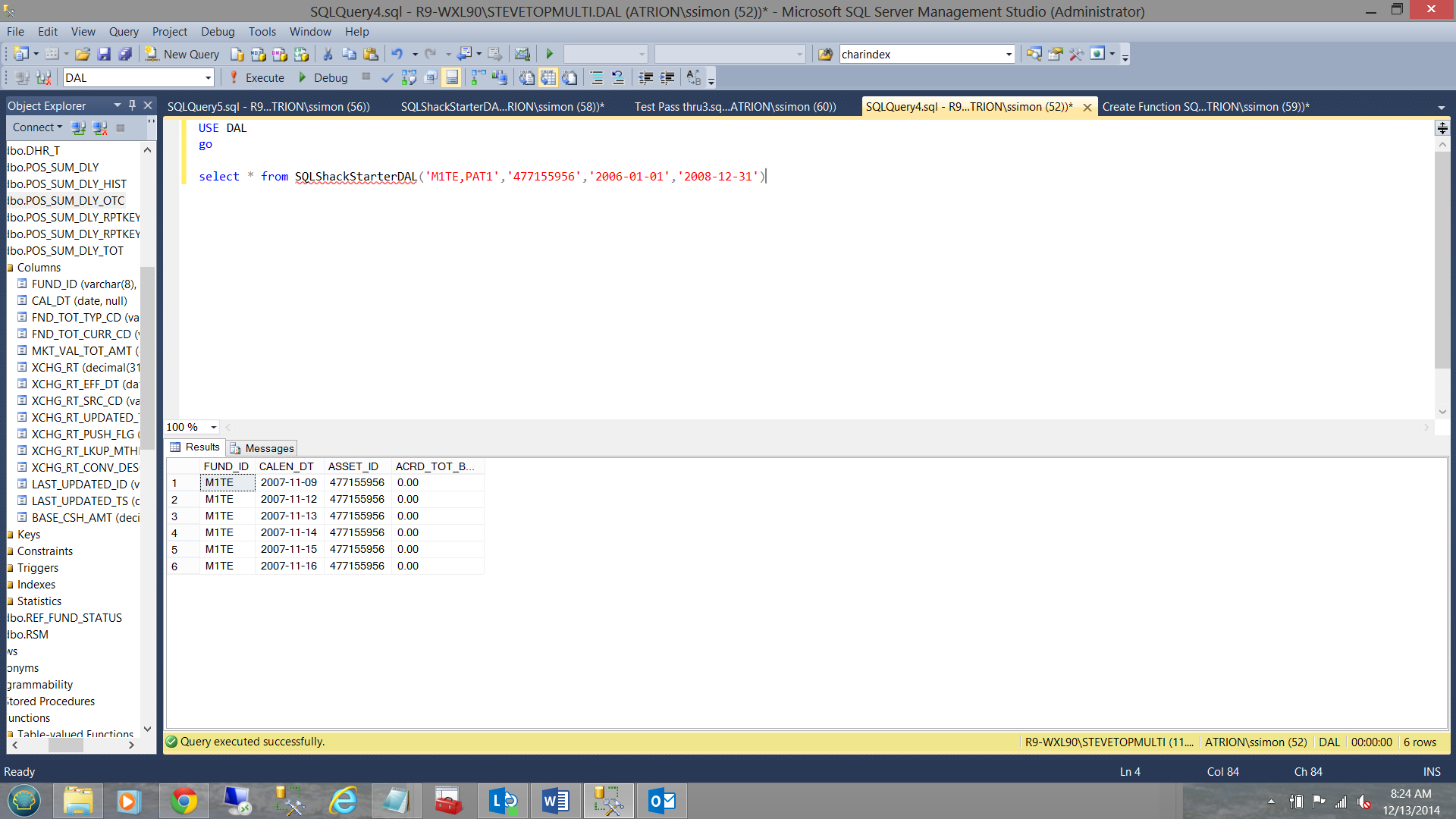

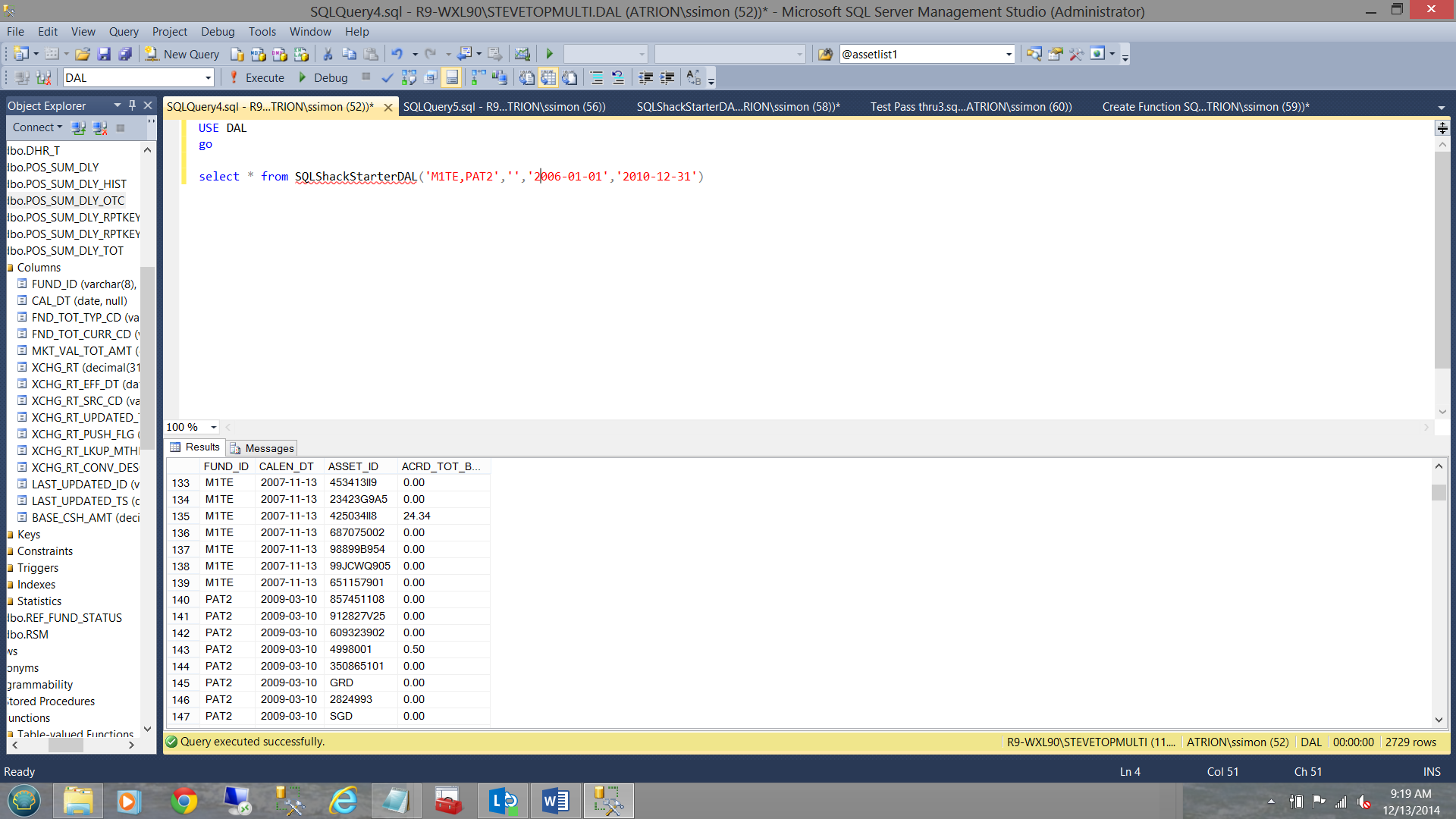

Now that we have created a very simple DAL, it is time to give it a try.

As we shall note above, I have create a simple query to pull data from the DAL. I have given the query my fund list, my asset list and a start and an end date. Running the query I obtain ‘tonnes’ of data, HOWEVER we forgot one important point and that was to insert a predicate into the function. Let us do that now.

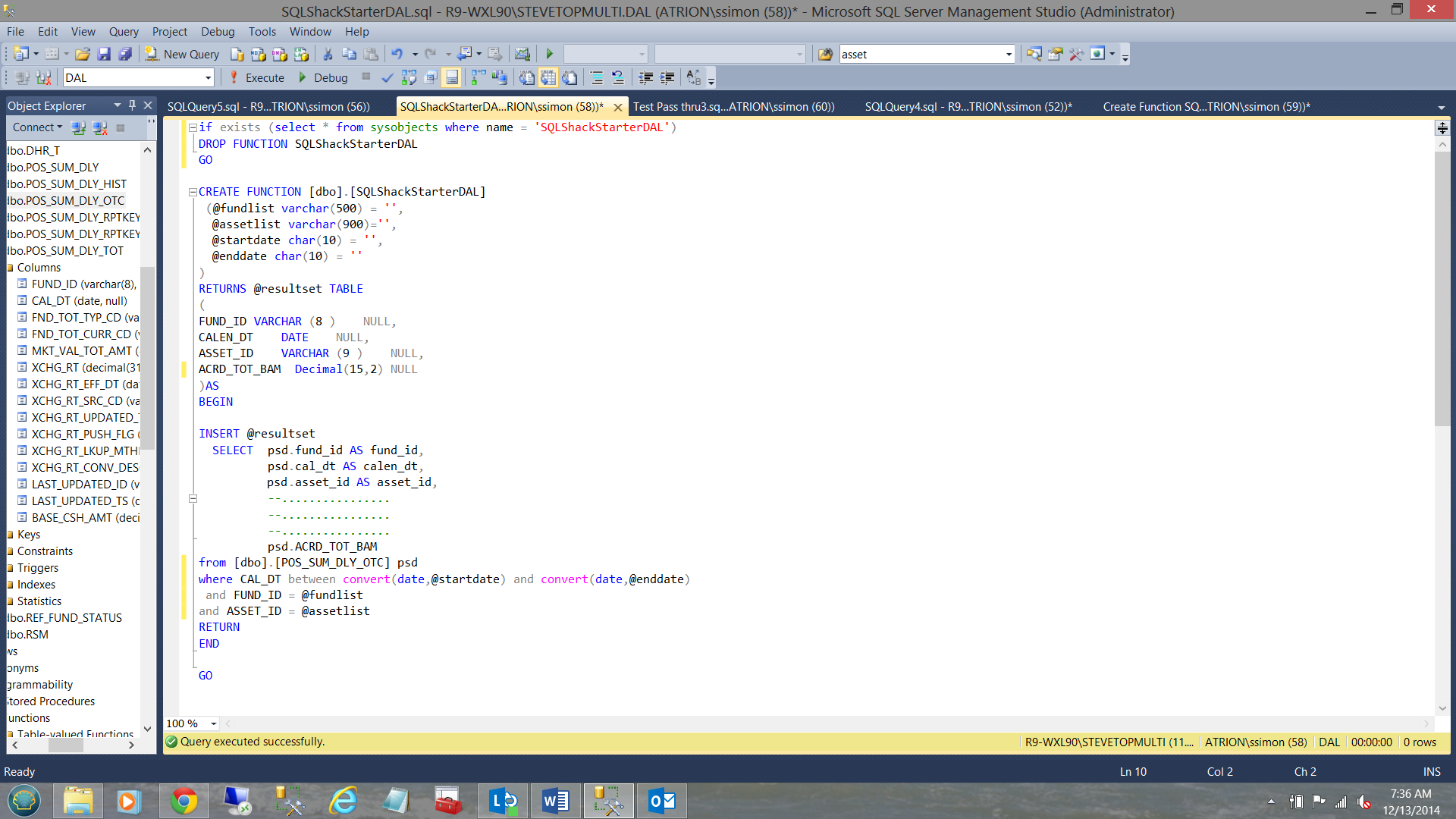

That looks better (see the predicate above).

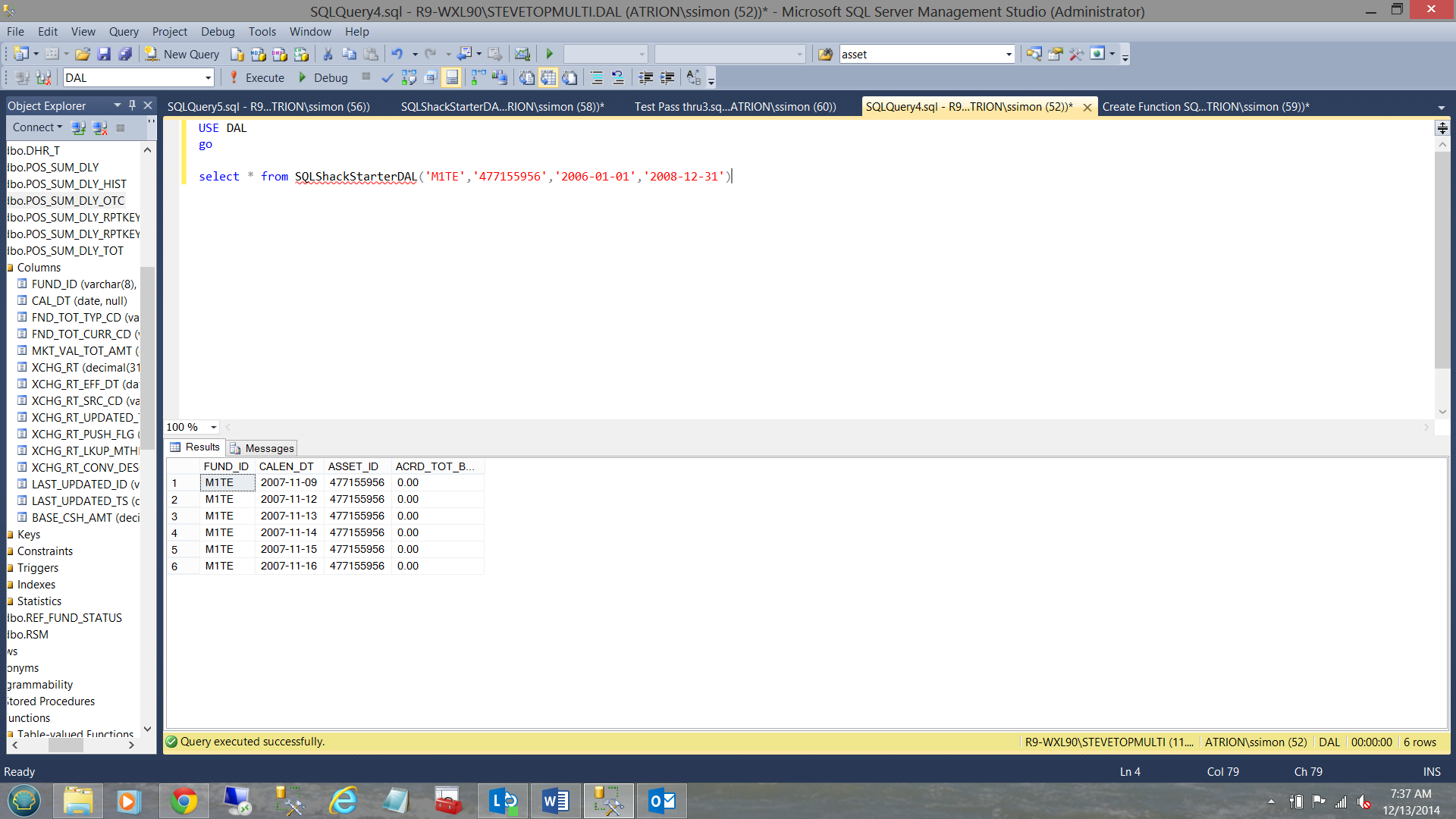

Our result set, may be seen above.

On beyond ‘Mickey Mouse’ queries.

At this point, we may want to see more than one fund, how do we achieve this?

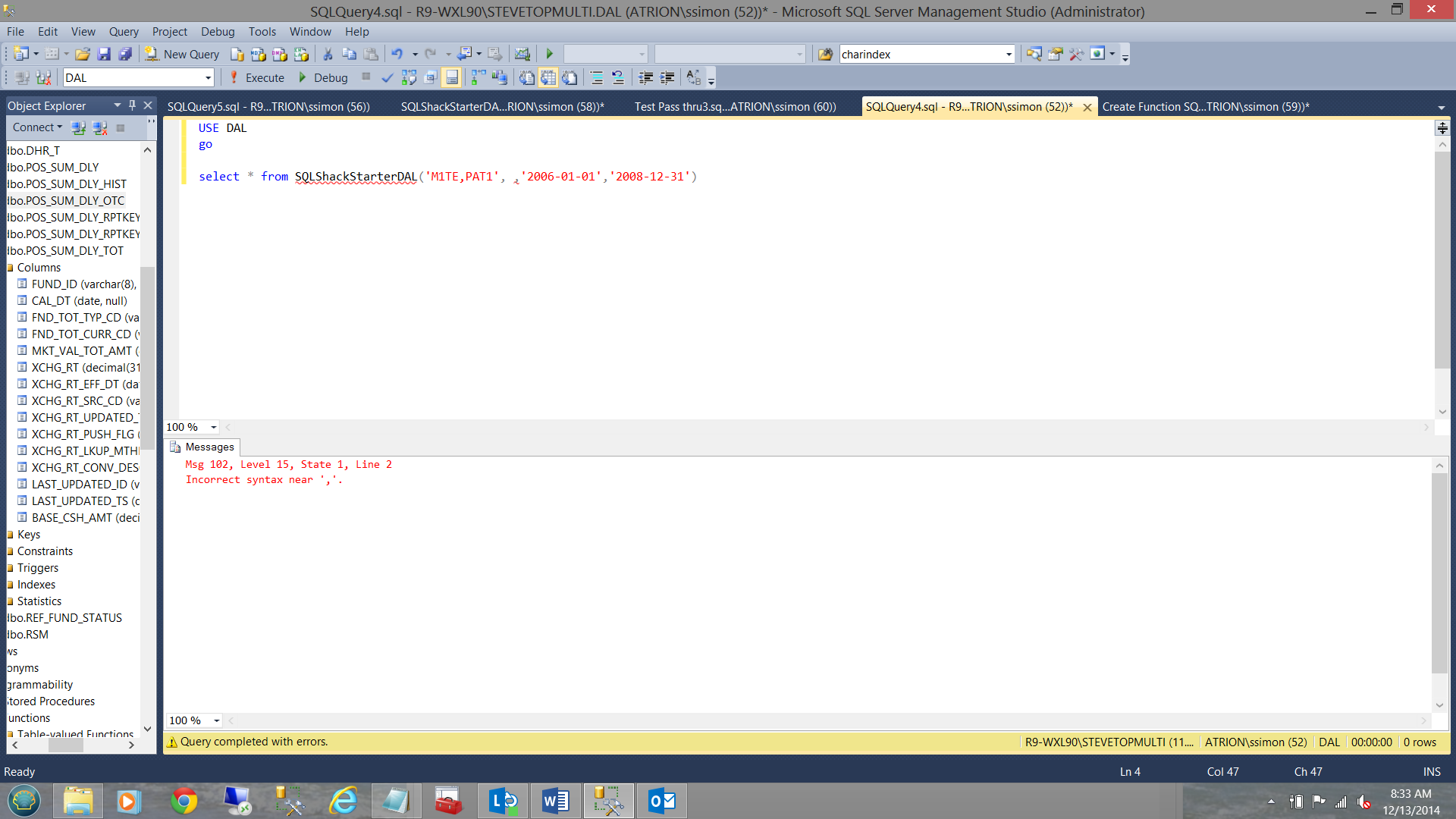

Note that in the screen shot above, I have added another fund to the fund list. We are now looking for ‘M1TE’ and ‘PAT1’. Note that the two funds are separated by a comma.

It is obvious that as the query now stands, nothing will be returned as SQL Server will interpret the fund as being “M1TE,PAT1” and this is not the case. Let us fix this now.

In the screen dump above, note the replacement for “and FUND_ID = @Fundlist” with

|

1 2 3 4 |

“and ((1 = CASE WHEN @fundlist ='' THEN 1 ELSE 2 END) or CHARINDEX(psd.fund_id,@fundlist,1) >0 ) “ |

This requires an explanation. SQL Server parses from left to right. This said, should @fundlist be blank, and with the case statement (being evaluated first) 1 = 1, which is TRUE. No filter is applied and no further parsing is done. Should there be a list of funds being passed through, then @fundlist is NOT empty therefore 1 = 2 which is FALSE and therefore the second part or the OR clause is parsed.

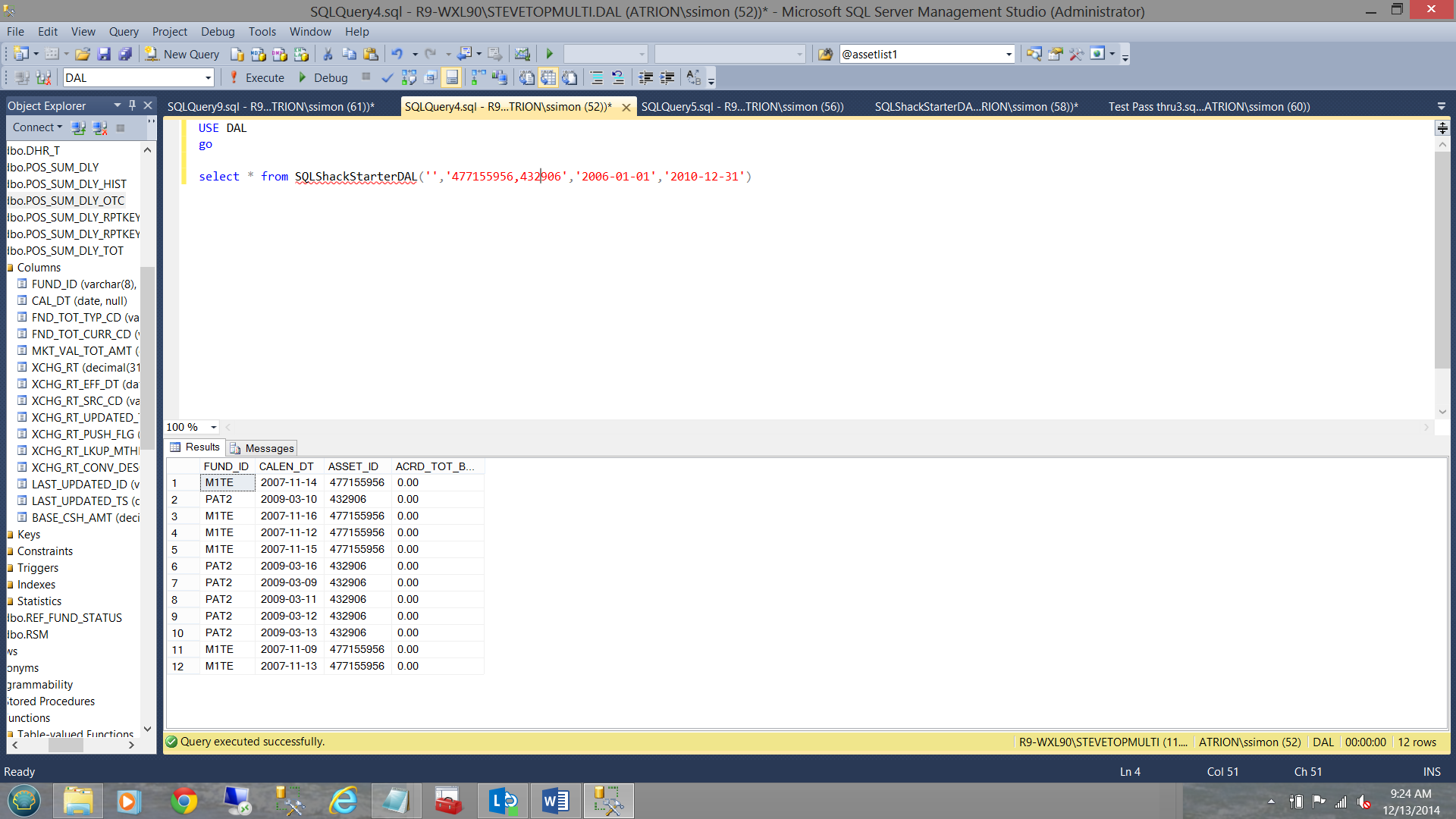

When we now run the query we find the following:

Note that we have returned data for fund M1TE but none for fund PAT1 (which is to be expected) as an asset belongs to a fund and one fund only (a business rule).

So here is the “Kicker”!

How do we alter our query to permit two or more funds to be returned? As mentioned above an asset belongs to one and only one fund. Our predicate (with in the DAL function) is defined via “and’s” (see below).

|

1 2 3 4 5 6 |

where CAL_DT between convert(date,@startdate) and convert(date,@enddate) and ((1 = CASE WHEN @fundlist ='' THEN 1 ELSE 2 END) or CHARINDEX(psd.fund_id,@fundlist,1) >0 ) and ASSET_ID = @assetlist |

Changing the asset list in our query from

|

1 2 3 |

select * from SQLShackStarterDAL('M1TE,PAT1','477155956','2006-01-01','2008-12-31') |

to

|

1 2 3 |

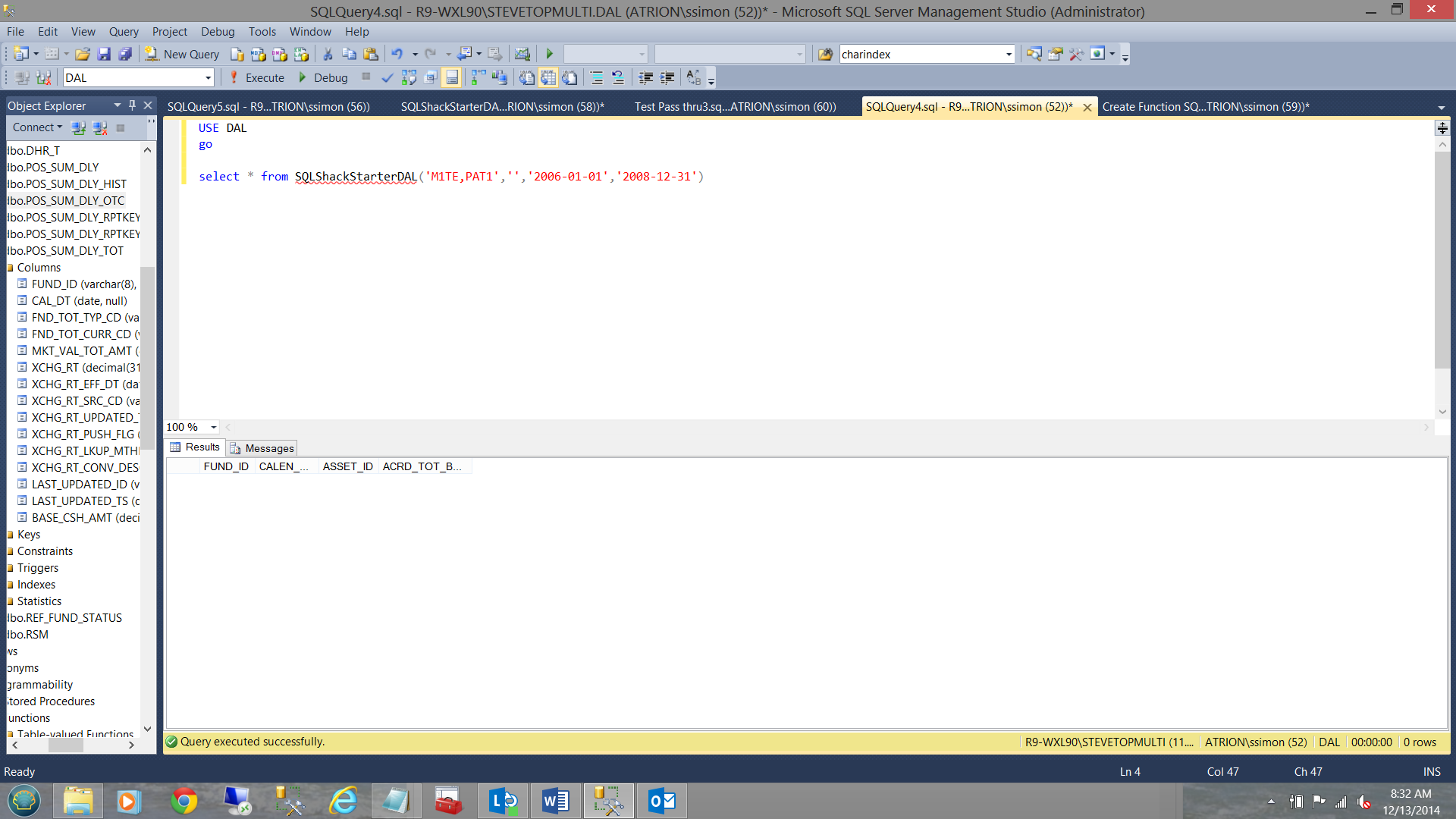

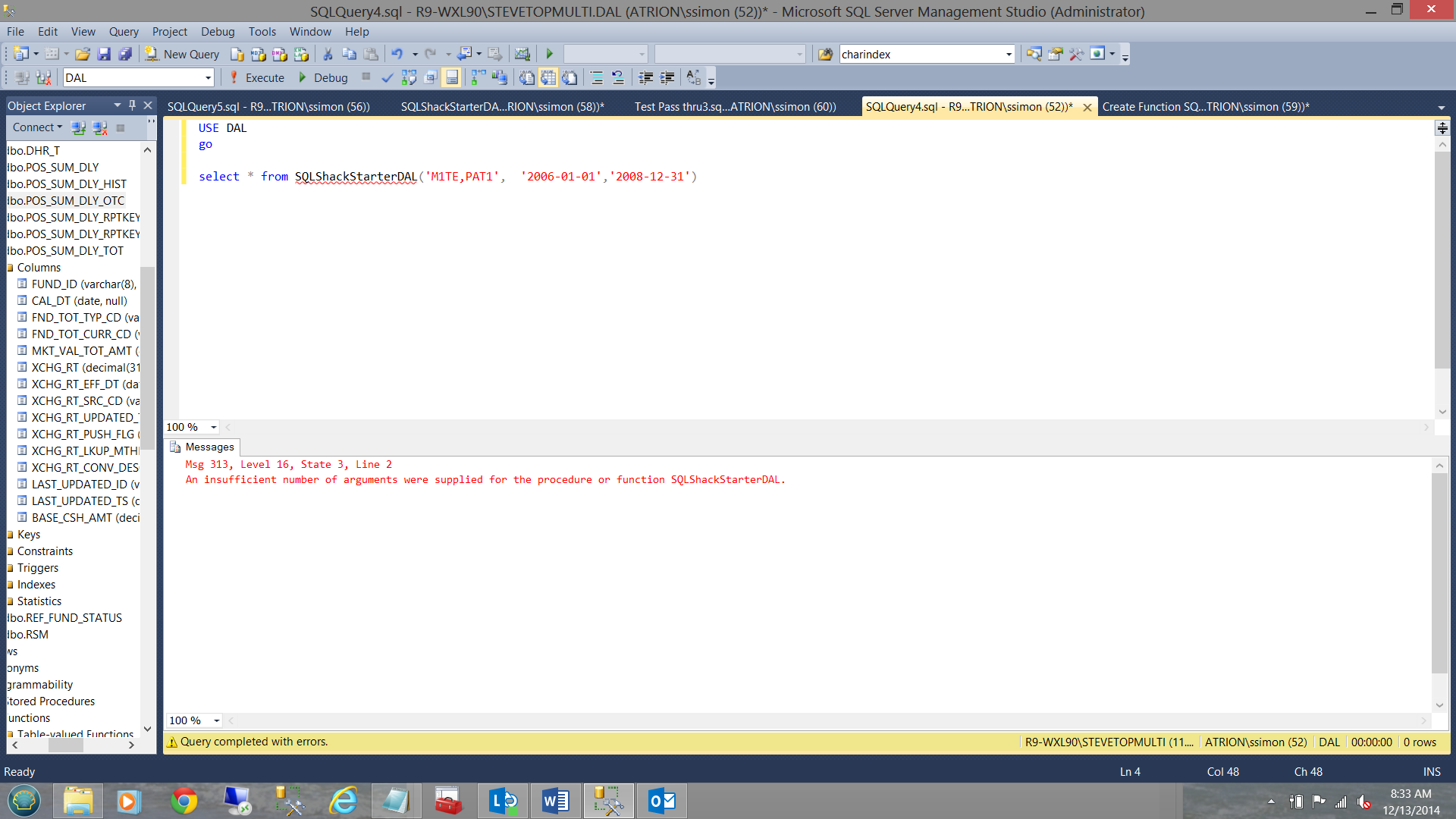

select * from SQLShackStarterDAL('M1TE,PAT1','','2006-01-01','2008-12-31') |

will not work as can be seen on the screen dump below:

The reason being that we have no fund with a blank on NULL name.

By taking the parameter out altogether will not work either, as may be seen below:

Even by removing the comma, we generate a run time error (see below).

So what do we do?

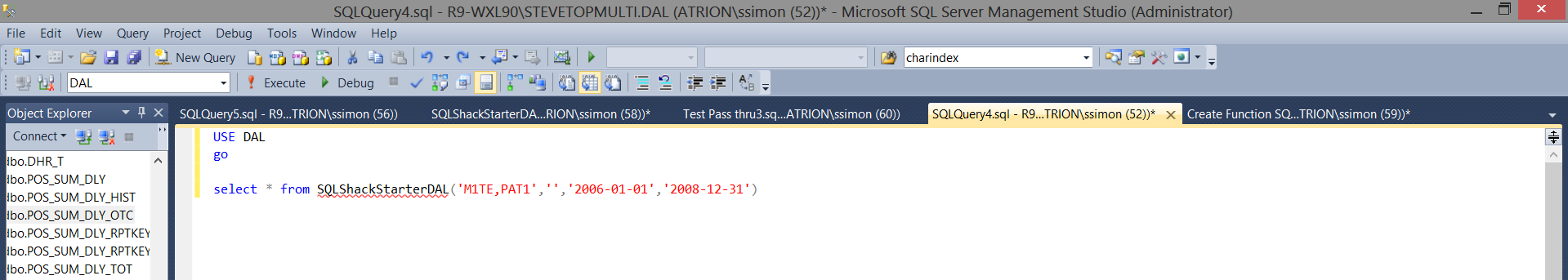

Let us back up a tad and return the query to its original form, yet remove the ‘asset ID’ value. At this point in time we do nothing further (see below).

NOW!! The astute reader will now say “Why not set a default?” Remember that your predicate is constructed with “and” therefore what value do you put in for the default. A blank or NULL will NOT do the job as there are no assets called ‘‘ nor ‘NULL’.

Going back to the DAL function, let us make a small change and add the following piece of code for the asset_ID

|

1 2 3 4 |

and ((1 = CASE WHEN @assetlist ='' THEN 1 ELSE 2 END) or CHARINDEX(psd.asset_id,@assetlist,1) >0 ) |

Our DAL now is structured as follows:

Let us see how this looks back in our test query. Note that going forward I shall be using fund PAT2 as one of our funds.

Note that both funds are now showing regardless of the asset ID.

Similarly we could set the funds to ‘ ‘ and place two asset ID’s within the query (see below).

So where are we going with this?

When we look at a larger version of this DAL function (i.e. add more fields to the DAL) such as the one seen below, we shall note that with the list of fields that is contained within the DAL, we are in a position to customize what each user requires.

Above is a list of +/- 20 fields that we are now going to insert into our starter DAL table function.

The fields definitions have been inserted into our DAL function (see above) and the same fields added to our select statement (see below).

We now recreate the DAL function and re-run our query.

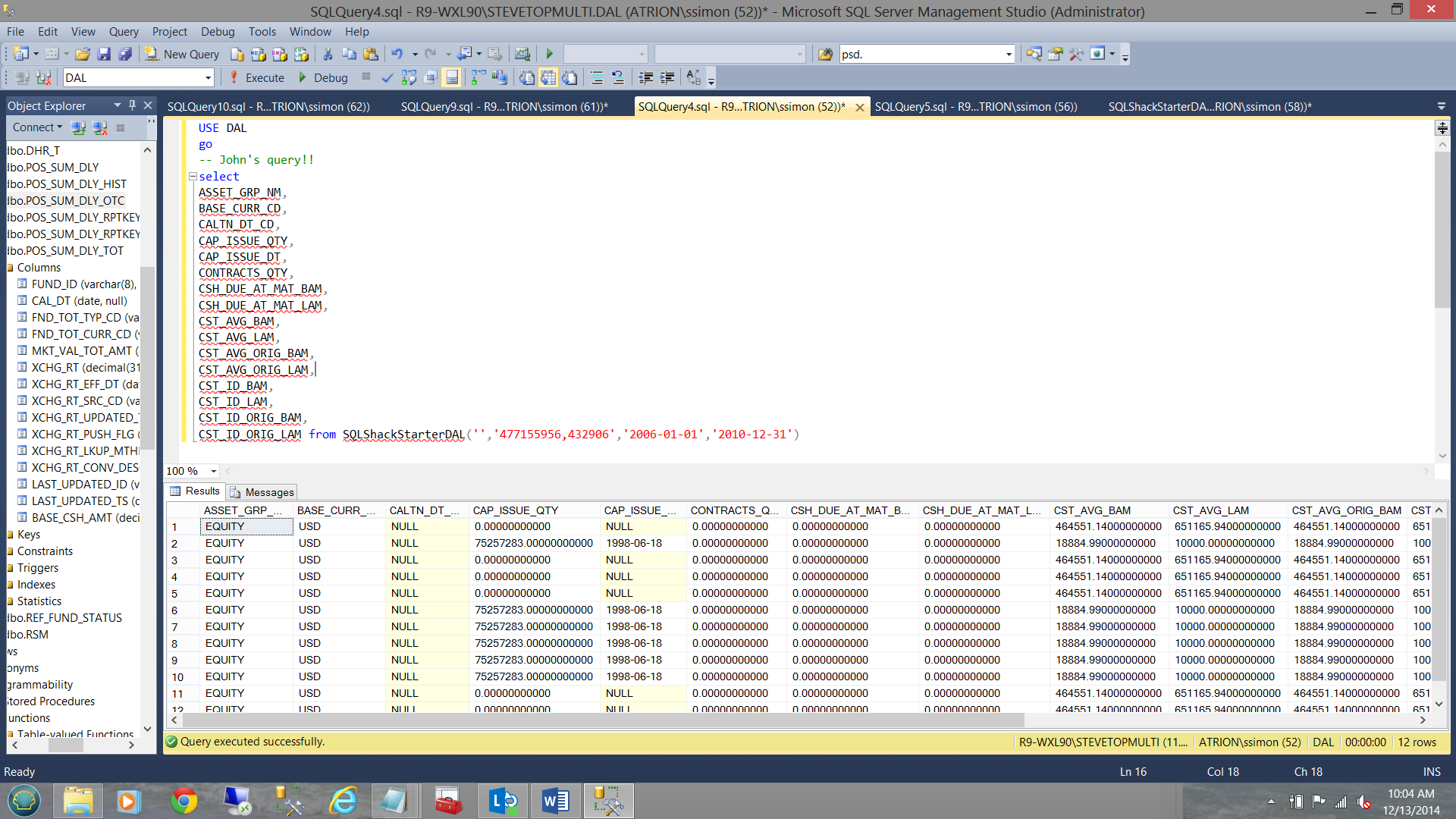

What John wishes to see:

..and now what the fields that Mary wishes to view:

Thus we can see how flexible final data extraction may be; remembering that the query encased within the DAL has been well tuned by the DBA’s. This said we are being both efficient and effective!

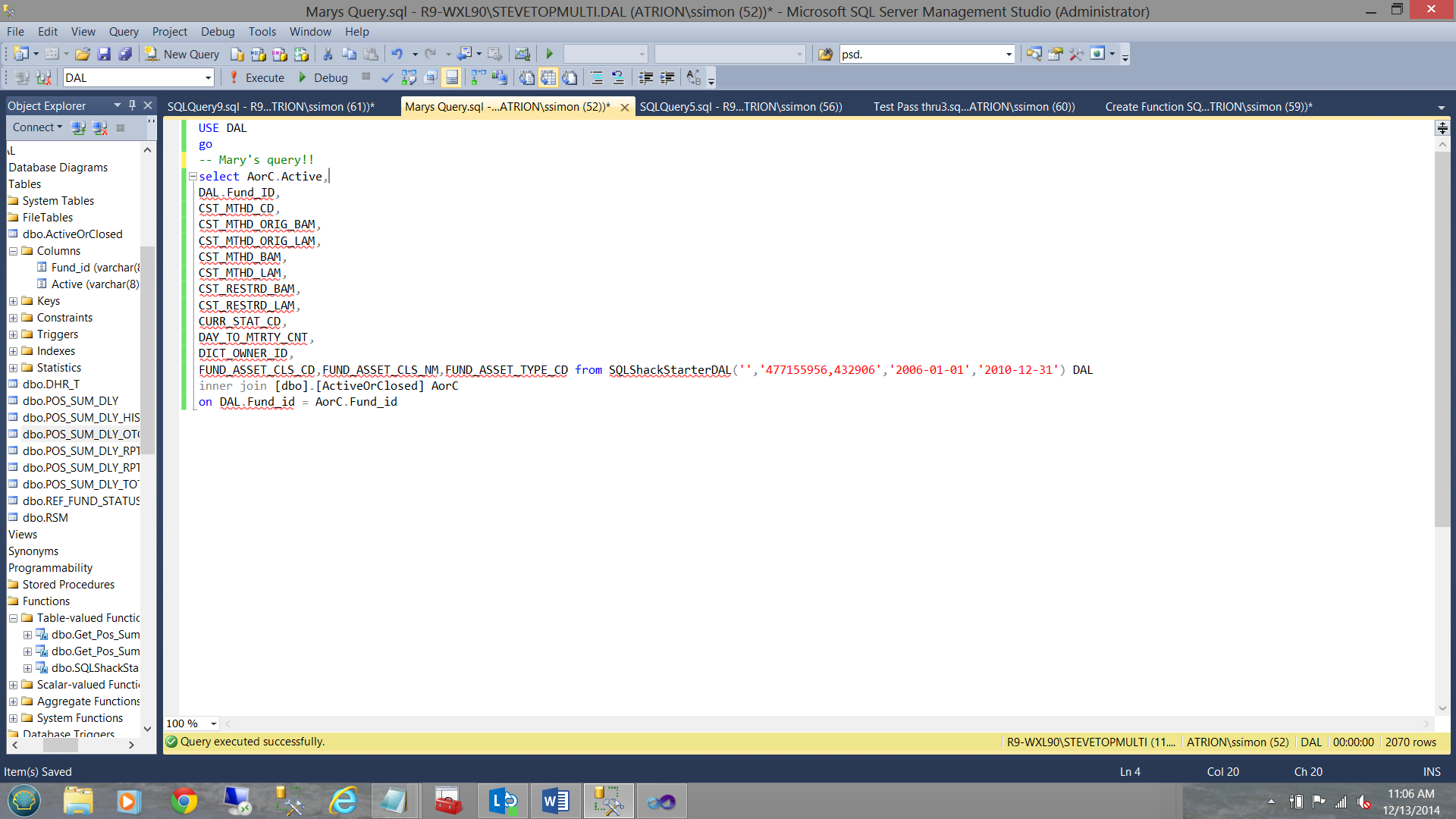

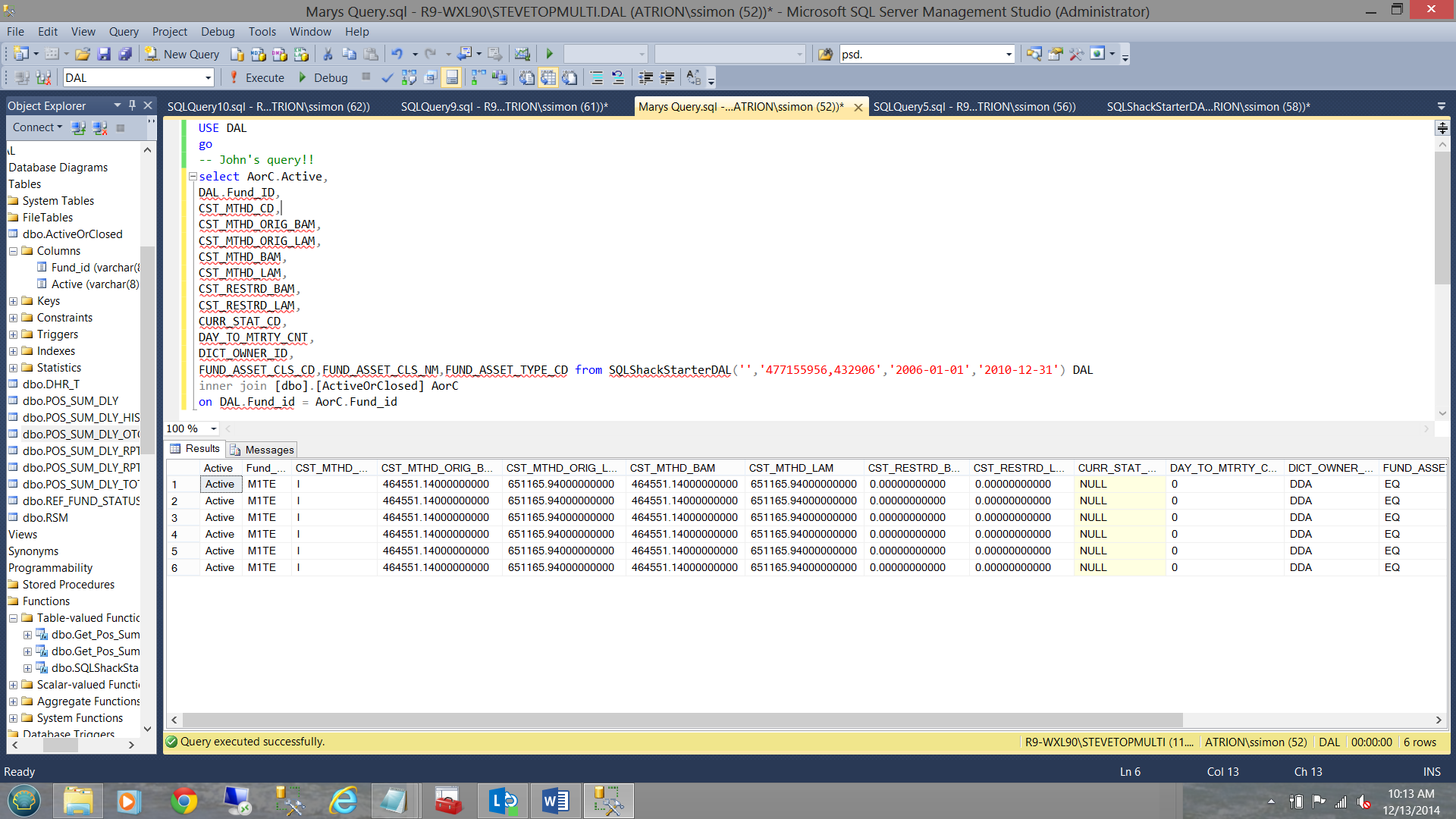

We should also realize that if allocated the necessary rights, we are STILL ABLE to effect ‘joins’ to other tables and view (see below).

Note that in the screen above we check to see if the fund is still active or closed. This data comes from the “ActiveOrClosed” table.

Reporting from a DAL

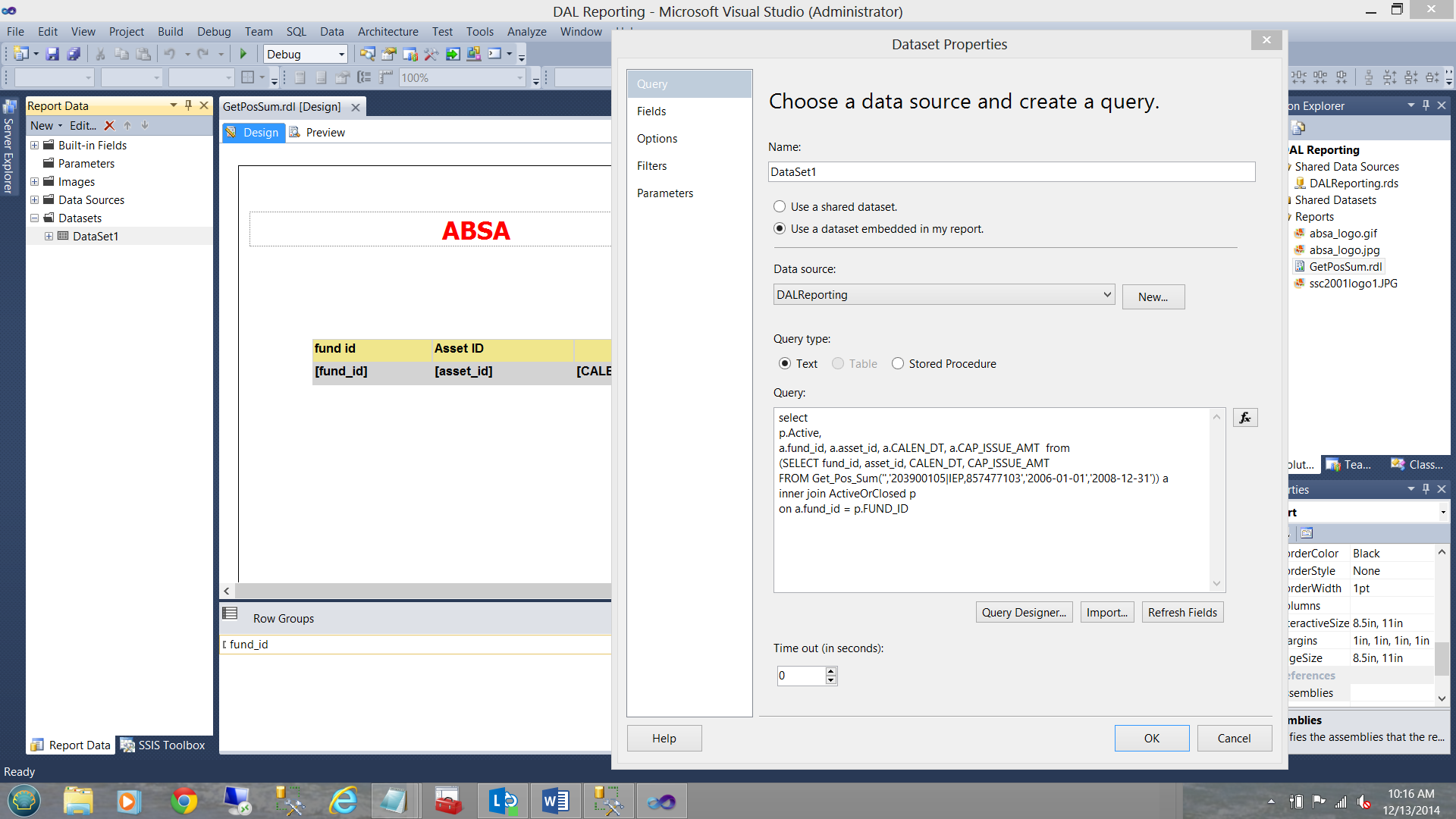

Our final exercise is to show you that the same DAL may be utilized for reporting. Immediately below, I show a report that I have created in SQL Server Reporting Services. Note that in this case I have used T-SQL as the query type. Should you be unfamiliar with SQL Server Reporting Services, please do yourself a favour and have a look at some of the earlier articles that I have posted on this website.

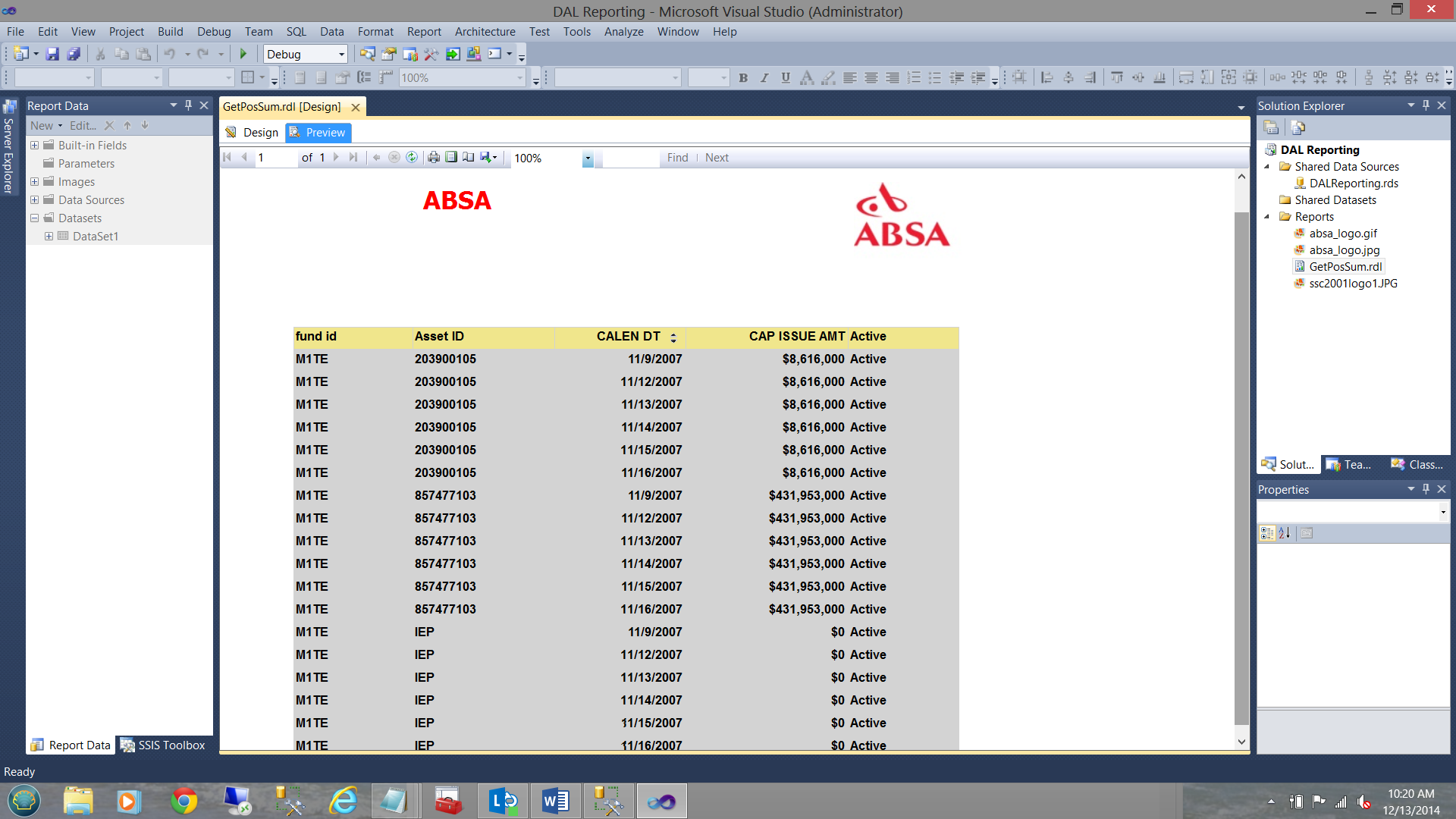

Running the report we find the following:

Conclusions

Power users and business analysts (with SQL experience) can at time issue queries that can bring the system to its knees. As developers and DBA’s, it is our duty to ensure that these folks are able to obtain the necessary data in a timely manner as opposed to a query that runs in excess of twenty four hours and returns four rows.

Well-tuned generic queries encased within a user defined table function can yield a myriad of combinations of required data (to the end user) PLUS ensure that rendering of this data is done in the most efficient and effective manner.

We have discussed how parameters ensure that the queries and DAL’s are flexible and we have also seen that with a properly constructed DAL it is not necessary to pass an argument.

Finally, I do hope that you will give it a try.

Until the next time, happy programming!

- Reporting in SQL Server – Using calculated Expressions within reports - December 19, 2016

- How to use Expressions within SQL Server Reporting Services to create efficient reports - December 9, 2016

- How to use SQL Server Data Quality Services to ensure the correct aggregation of data - November 9, 2016