Introduction

In any application, we will likely have some need to control input data, either altering, filtering or otherwise changing text to fit our application’s needs.

Sometimes these needs arise from a desire to remove characters or text that are logically not compatible with an application. For example, a name, phone number, or ID number might need to be validated for correctness, or updated to remove or alert on invalid characters.

Security is also a concern: we need to verify any freeform text to ensure that it is properly delimited, so that we cannot become the victim of SQL injection, regex hacking, or any other undesired string manipulation by the end user.

Input Modification: Why Do We Care?

Most applications we build will perform some level of input validation, whether it be to remove unwanted characters, check for SQL injection attempts, or parse inputs into a specific format. Despite this, we still should consider any text input from uncontrolled sources and what we may or may not want to be stored from it. The following are worthwhile to check:

- Is NULL allowed? If not, ensure that stored procedure parameters don’t provide a default and that a specific logic path is taken when a NULL is explicitly provided for a field that should always be populated with a meaningful value.

- Is a blank or empty string allowed? If not, check for it and act similarly to the NULL scenario above.

- Are there any special symbols, formatting characters, extended ASCII, or Unicode characters that we want to disallow from our input?

- Do we want to perform validation against how our input is delimited, in order to prevent SQL injection attacks?

- Do we wish to control regex and string manipulation character combinations, in order to prevent the user from accessing data they shouldn’t normally be able to view?

- Is the target data intended to fit a specific format, such as credit card number, ID number, phone number, or other specific data type that should only allow certain characters, a specific length, or follow other important rules?

If the answer to all of these questions is always “No”, then we are free to move on and not worry about input cleansing. It would be both unusual and worrisome though, if in an application, we had no reason to validate any aspect of inputs for any security or data integrity purpose. Given this almost constant need, we can begin tackling each of these problems one-by-one. In doing so, we’ll discuss a variety of different solutions that will allow for methodical, simple, efficient, and/or customizable ways of validating, cleansing, and modifying input data.

NULL and Empty Input Strings

This first example is simple, and a fun way to move into the topic of input validation. The most common unwanted input for a parameter is going to be a NULL or empty string. Consider the following (very simple) stored procedure:

```sql
CREATE PROCEDURE dbo.Null_Test
    @Int_Parameter INT
AS
BEGIN
    SELECT @Int_Parameter;
END
```

A single parameter is passed in, and it is not given a default value. If we execute it, the value is returned. The following TSQL returns the input value, 17:

```sql
EXEC dbo.Null_Test @Int_Parameter = 17;
```

Supplying no parameter yields an error:

```sql
EXEC dbo.Null_Test;
```

Msg 201, Level 16, State 4, Procedure Null_Test, Line 0 [Batch Start Line 26]

Procedure or function ‘Null_Test’ expects parameter ‘@Int_Parameter’, which was not supplied.

Despite not providing a default value, it is possible to pass NULL in as the parameter value. Having no default means that a value must be passed in, but it can be anything, even NULL. If NULL is not valid and we want to prevent it from being entered, we can manage it like this:

```sql
ALTER PROCEDURE dbo.Null_Test
    @Int_Parameter INT
AS
BEGIN
    IF @Int_Parameter IS NULL
    BEGIN
        SELECT 'NULL is not valid for @Int_Parameter';
        RETURN;
    END

    SELECT @Int_Parameter;
END
```

Now when you try to execute the stored procedure with NULL, the results are as follows:

```sql
EXEC dbo.Null_Test @Int_Parameter = NULL;
```

NULL is not valid for @Int_Parameter

The SELECT statement can be replaced with a RAISERROR, TRY…CATCH…THROW, logging, or any other TSQL you can think of, including assigning a specific value, if needed. RETURN ensures that stored procedure execution ends immediately. While not obvious, RAISERROR does not immediately halt stored procedure execution for all severity levels. If you want a stored procedure to end at a given point, then RETURN will manage that for you.

We can perform very similar logic for empty strings, specific invalid values, or any other undesirable input conditions that your business demands of you. Simply extend the IS NULL check to include additional checks as needed.
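As a minimal sketch of that idea, the procedure below rejects NULL, empty, and whitespace-only strings. The procedure name `dbo.Empty_String_Test`, the parameter length, and the error text are assumptions made for illustration; the pattern of RAISERROR followed by RETURN mirrors the behavior described above.

```sql
-- Hypothetical procedure: rejects NULL, empty, and whitespace-only input.
CREATE PROCEDURE dbo.Empty_String_Test
    @String_Parameter VARCHAR(50)
AS
BEGIN
    IF @String_Parameter IS NULL
       OR LTRIM(RTRIM(@String_Parameter)) = ''
    BEGIN
        -- Severity 16 reports the error but does not stop execution by itself,
        -- so RETURN is still needed to exit the procedure here.
        RAISERROR('A non-empty value is required for @String_Parameter', 16, 1);
        RETURN;
    END

    SELECT @String_Parameter;
END
GO

-- A whitespace-only value takes the error path instead of being returned.
EXEC dbo.Empty_String_Test @String_Parameter = '   ';
```

Whether to raise an error, substitute a default, or log and continue is a business decision; only the body of the IF block would need to change.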

Removing/Replacing Unwanted Characters

There are a multitude of ways to alter an input string in order to remove, replace, or otherwise detect unwanted characters or string patterns. For very simple use-cases, simple solutions will suffice, but for more complex examples, the quick & dirty solutions can become cumbersome, inefficient, or error-prone.

In general, it’s important for applications to manage as much input-cleansing as possible. We can confirm and validate text in the database (or explicitly not care and leave it be), but it’s important to have a full understanding of what is consuming our data and the format that is expected.

Basic String Manipulation

The following is a very basic attempt at removing unwanted characters from an input parameter:

```sql
CREATE PROCEDURE dbo.My_Stored_Procedure
    @String_Parameter VARCHAR(289)
AS
BEGIN
    SELECT @String_Parameter = REPLACE(REPLACE(REPLACE(@String_Parameter, '@', ''), '#', ''), '%', '');

    SELECT @String_Parameter;
END
```

This stored procedure accepts a single string input, which is run through a series of REPLACE operations in order to remove a handful of unwanted characters (replacing them with empty strings). This process is effective when a small number of targeted characters is defined, but gets messy quickly if there is a large number of characters to strip out of the string. In addition, not all characters are easy to copy and paste into a command. In general, it’s always advisable to use the ASCII or UNICODE numeric identifier for characters as these are static and will not be affected by fonts, keyboard input, or any other variances in text input, escape characters, or other potential interferences:

```sql
ALTER PROCEDURE dbo.My_Stored_Procedure
    @String_Parameter VARCHAR(289)
AS
BEGIN
    SELECT @String_Parameter = REPLACE(REPLACE(REPLACE(@String_Parameter, CHAR(64), ''), CHAR(35), ''), CHAR(37), '');

    SELECT @String_Parameter;
END
```

The only difference is to replace the apostrophe-delimited characters with the CHAR representation of each character’s ASCII value. Here is an example execution using either method:

```sql
EXEC dbo.My_Stored_Procedure 'Test String!@#$%';
```

Test String!$

Note that the “@”, “#”, and “%” were all removed, and all other characters left as-is. Using SUBSTRING, REPLACE, or other string-manipulation functions on specific characters will become both messy and difficult to maintain/troubleshoot as our character lists get more complex. Consider the following example, which attempts to remove every non-alphanumeric character I could find on my keyboard:

```sql
ALTER PROCEDURE dbo.My_Stored_Procedure
    @String_Parameter VARCHAR(289)
AS
BEGIN
    SELECT @String_Parameter =
        REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(
        REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(
        REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(
            @String_Parameter,
            '@', ''), '#', ''), '%', ''), '!', ''), '$', ''), '^', ''), '&', ''), '*', ''),
            '(', ''), ')', ''), '_', ''), '-', ''), '=', ''), '+', ''), '[', ''), ']', ''),
            '{', ''), '}', ''), '\', ''), '|', ''), ';', ''), ':', ''), '''', ''), '"', ''),
            ',', ''), '<', ''), '.', ''), '>', ''), '/', ''), '?', ''), '`', ''), '~', ''),
            ' ', '');

    SELECT @String_Parameter;
END
```

Yes, this works, and no, it’s not a great idea 🙂 Aside from being exceedingly messy, I omitted many special characters that are not shown explicitly on my keyboard, such as tab, newline, accents, printer feed characters, and more. In addition, if we were working with UNICODE input, there would be a very long list of foreign characters that are definitely not represented on my keyboard.

For small, controlled input scenarios in which we are familiar with the data and know exactly what we want to manipulate, this is a quick & easy way to get results, but be cautious when applications get bigger and more complex. Also, if a script is to be used with UNICODE input and/or internationalized inputs, then the list of potentially undesired characters may make the silly-looking stored procedure above even sillier.

RegEx & TSQL

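Rather than implementing an endless string of character replacements, we can consider solutions that evaluate a string in its entirety. A RegEx-style pattern is a simple way to describe the characters we care about, evaluate a string, and return a cleansed version. Note that TSQL’s PATINDEX supports a limited wildcard syntax (the same one used by LIKE) rather than full regular expressions: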

```sql
CREATE FUNCTION dbo.Remove_Non_Alphanumeric
    (@String_Parameter VARCHAR(289))
RETURNS VARCHAR(289)
AS
BEGIN
    -- A single leading ^ negates the whole set; additional carets inside the
    -- brackets would be treated as literal ^ characters to keep.
    DECLARE @Alphanumeric_Characters VARCHAR(289) = '%[^A-Za-z0-9]%';

    WHILE PATINDEX(@Alphanumeric_Characters, @String_Parameter) > 0
    BEGIN
        -- Remove the first character that is not a letter or a number.
        SELECT @String_Parameter = STUFF(@String_Parameter, PATINDEX(@Alphanumeric_Characters, @String_Parameter), 1, '');
    END

    RETURN @String_Parameter;
END
```

In this function, we define a pattern that matches any character outside the set we want to keep: capital letters, lowercase letters, and numbers. We then loop, removing the first invalid character found on each pass, until no more exist. We can test this with our previous input string:

```sql
SELECT dbo.Remove_Non_Alphanumeric('Test String!@#$%');
```

TestString

The output has the space, as well as five trailing characters removed. For good measure, we can test this function against a more complex string:

```sql
SELECT dbo.Remove_Non_Alphanumeric('Te s((t @#*&)@#S{]''>t r ing!@#$%');
```

Despite being a mess, the results are the same as before:

TestString
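To see how the loop arrives at that result, a single pass can be traced by hand. This is only an illustrative sketch; the variable names are assumptions, and the pattern used is `'%[^A-Za-z0-9]%'`, which matches any one character that is not a letter or a digit.

```sql
DECLARE @Pattern VARCHAR(289) = '%[^A-Za-z0-9]%';
DECLARE @Input VARCHAR(289) = 'Test String!@#$%';

-- PATINDEX returns the 1-based position of the first character outside
-- the allowed set. Here that is the space, at position 5.
SELECT PATINDEX(@Pattern, @Input) AS First_Invalid_Position;

-- STUFF deletes one character at that position by replacing it with an
-- empty string, leaving 'TestString!@#$%' for the next pass of the loop.
SELECT STUFF(@Input, PATINDEX(@Pattern, @Input), 1, '') AS After_One_Pass;
```

Each pass removes exactly one character, so the number of iterations equals the number of invalid characters in the input.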

This is a great method for string-cleansing when our output is intended to be straight-forward. Saving only alpha-numeric characters is easy, and the RegEx to do so is not difficult to read or understand. It’s certainly simpler than having dozens of REPLACE strung together. This approach is iterative, but it’s executed over a scalar variable, meaning that performance won’t be bad. If the function were used as part of a WHERE clause, JOIN, or other table access logic, the results could be table scans. It’s always recommended to transform input first, before applying to any direct table access.
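The advice to transform input first can be sketched as follows. The temporary table `#Customer` and its column are hypothetical and exist only for this illustration; `dbo.Remove_Non_Alphanumeric` is assumed to be the single-parameter function defined above.

```sql
-- Hypothetical lookup table, used only for this illustration.
CREATE TABLE #Customer
(   Customer_Code VARCHAR(289) NOT NULL PRIMARY KEY CLUSTERED );

INSERT INTO #Customer (Customer_Code)
VALUES ('TestString'), ('OtherCode');

DECLARE @Raw_Input VARCHAR(289) = 'Test String!@#$%';

-- Cleanse the scalar input once, before it touches any table.
DECLARE @Cleansed_Input VARCHAR(289) = dbo.Remove_Non_Alphanumeric(@Raw_Input);

-- The predicate compares the column to a plain variable, so the index on
-- Customer_Code remains usable.
SELECT Customer_Code
FROM #Customer
WHERE Customer_Code = @Cleansed_Input;

-- By contrast, wrapping the column in the function, as in
--   WHERE dbo.Remove_Non_Alphanumeric(Customer_Code) = @Cleansed_Input
-- would require evaluating the function for every row.

DROP TABLE #Customer;
```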

Where RegEx begins to fail is when we have more complex string manipulation needs. Let’s say we are accepting phone number inputs and want to retain a few special characters, such as parentheses, dashes, and spaces, while removing quotes, apostrophes, and other problematic characters for a given system. The function above can be rewritten for this custom case as follows:

```sql
ALTER FUNCTION dbo.Remove_Non_Alphanumeric
    (@String_Parameter VARCHAR(289))
RETURNS VARCHAR(289)
AS
BEGIN
    -- The ^ must sit inside the brackets to negate the set of characters to keep.
    DECLARE @Characters_to_Retain VARCHAR(289) = '%[^a-zA-Z0-9 ()-]%';

    WHILE PATINDEX(@Characters_to_Retain, @String_Parameter) > 0
    BEGIN
        SELECT @String_Parameter = STUFF(@String_Parameter, PATINDEX(@Characters_to_Retain, @String_Parameter), 1, '');
    END

    RETURN @String_Parameter;
END
```

This function explicitly keeps alphanumeric characters, parentheses, spaces, and dashes, and returns the output we’d expect:

```sql
SELECT dbo.Remove_Non_Alphanumeric('1234567890');
SELECT dbo.Remove_Non_Alphanumeric('(123) 456-7890');
SELECT dbo.Remove_Non_Alphanumeric('123 456 7890');
SELECT dbo.Remove_Non_Alphanumeric('123-456-7890');
SELECT dbo.Remove_Non_Alphanumeric('(123) 456-7890 x123');
```

1234567890

(123) 456-7890

123 456 7890

123-456-7890

(123) 456-7890 x123

This is effective when we know exactly what characters to keep and can easily list them out. If our needs are more customized, then this can become difficult to code in an easy-to-read function or stored procedure. As we work towards a more scalable solution, we’ll look at another alternative first.

```sql
ALTER FUNCTION dbo.Remove_Non_Alphanumeric
    (@String_Parameter VARCHAR(289))
RETURNS VARCHAR(289)
AS
BEGIN
    DECLARE @Rebuilt_String VARCHAR(MAX) = '';
    DECLARE @Current_Character VARCHAR(1);
    DECLARE @Character_Position INT = 1;

    WHILE @Character_Position <= LEN(@String_Parameter)
    BEGIN
        SET @Current_Character = SUBSTRING(@String_Parameter, @Character_Position, 1);

        -- Keep digits (48-57), capital letters (65-90), and lowercase letters (97-122).
        IF ASCII(@Current_Character) BETWEEN 48 AND 57
           OR ASCII(@Current_Character) BETWEEN 65 AND 90
           OR ASCII(@Current_Character) BETWEEN 97 AND 122
        BEGIN
            SELECT @Rebuilt_String = @Rebuilt_String + @Current_Character;
        END

        SELECT @Character_Position = @Character_Position + 1;
    END

    RETURN @Rebuilt_String;
END
```

This function iterates through the input string one character at a time. If the current character matches our criteria, then it is added to the output string. Invalid characters are skipped, and @Rebuilt_String will end up containing only what remains. The example above explicitly calls out numbers, capital letters, and lowercase letters, so any test input we enter will be stripped of all other characters:

```sql
SELECT dbo.Remove_Non_Alphanumeric('Test String!@#$%');
```

TestString

```sql
SELECT dbo.Remove_Non_Alphanumeric('@#^%!@%!*@}{":?><;''[]/.,');
```

The second example returns an empty string as every character provided is filtered out by our input criteria. The benefit of an approach like this is that we are matching characters based on their ASCII code, rather than typed out literals. This is generally more reliable and allows us to explicitly call out characters that may not exist on our keyboards. If you’re working in a Unicode character set, you may substitute NVARCHAR for all VARCHAR parameters/variables and the UNICODE function for the ASCII function. The results of these changes will allow filtering to occur over the entire Unicode character set, instead of only the ASCII character set, which would be useful in applications in which we are accepting inputs in double-byte languages (such as Japanese or Chinese) or wish to process Unicode characters.
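A sketch of that substitution is shown below. The function name `dbo.Remove_Non_Alphanumeric_Unicode` is an assumption chosen so that it does not collide with the function above; the logic is otherwise the same loop, with NVARCHAR in place of VARCHAR and UNICODE in place of ASCII.

```sql
-- Hypothetical Unicode variant of the character-by-character function.
CREATE FUNCTION dbo.Remove_Non_Alphanumeric_Unicode
    (@String_Parameter NVARCHAR(289))
RETURNS NVARCHAR(289)
AS
BEGIN
    DECLARE @Rebuilt_String NVARCHAR(MAX) = N'';
    DECLARE @Current_Character NVARCHAR(1);
    DECLARE @Character_Position INT = 1;

    WHILE @Character_Position <= LEN(@String_Parameter)
    BEGIN
        SET @Current_Character = SUBSTRING(@String_Parameter, @Character_Position, 1);

        -- UNICODE returns the code point of the character. The ranges below
        -- are the same digits and basic Latin letters used previously and
        -- could be widened to admit other scripts.
        IF UNICODE(@Current_Character) BETWEEN 48 AND 57
           OR UNICODE(@Current_Character) BETWEEN 65 AND 90
           OR UNICODE(@Current_Character) BETWEEN 97 AND 122
        BEGIN
            SELECT @Rebuilt_String = @Rebuilt_String + @Current_Character;
        END

        SELECT @Character_Position = @Character_Position + 1;
    END

    RETURN @Rebuilt_String;
END
GO

-- The Japanese characters, spaces, and punctuation fall outside the
-- allowed ranges, so this returns: Test123
SELECT dbo.Remove_Non_Alphanumeric_Unicode(N'Test 日本語 123!');
```

The N prefix on the string literal matters: without it, the literal is converted to the database code page before the function ever sees it.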

The Dim_Ascii Solution

The solutions presented so far are great for simple use-cases in which our needs are consistent and unlikely to change or need to be customized over time. In larger applications in which we may have a variety of string cleansing needs, it is advantageous to not maintain all of this string logic within functions or stored procedures – especially if we have many different types of string fields that each carry their own rules.

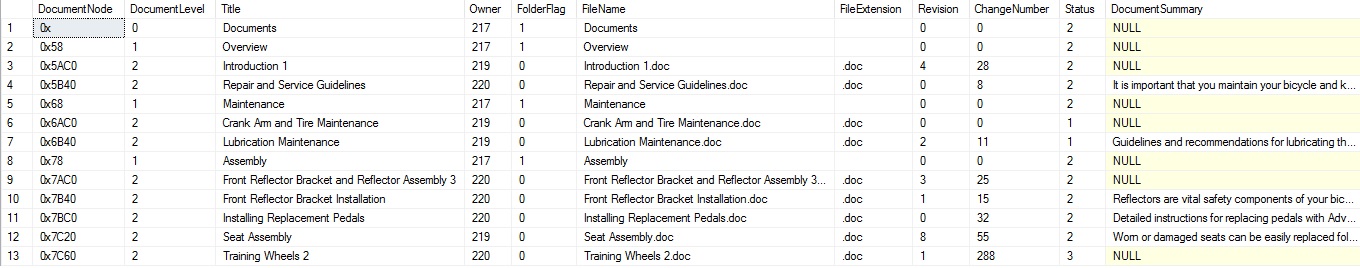

An elegant solution, and one that scales very well over time, is to create a dimension table for our character set (ASCII or Unicode) and populate it with the rules for our application needs. For example, let’s take the Production.Document table within the Adventureworks demo database. This table contains a variety of text fields:

Let’s say we wanted to load new data into this table, and each column had unique rules to follow in terms of what characters are allowed or not:

Title: Any alphanumeric, as well as any of !@#$%&*()-_+={}”:;.,?/\~

FileName: Any alphanumeric, as well as underscores and dashes.

FileExtension: Any alphanumeric and a period.

DocumentSummary: Any non-system characters.

Each column has unique rules, and hard-coding those rules into different functions or stored procedures would be difficult to keep track of, document, and maintain. As more tables, columns, and strings are managed, we’d encounter greater chances of mistakes happening due to utilizing the wrong function, method, or code.

To facilitate easier storage and understanding of our string-cleansing needs, we will create a table called Dim_Ascii:

```sql
CREATE TABLE dbo.dim_ascii
(   Ascii_ID SMALLINT NOT NULL CONSTRAINT PK_dim_ascii PRIMARY KEY CLUSTERED,
    Ascii_Character VARCHAR(1) NOT NULL,
    Is_Valid_In_Production_Document_Title BIT NOT NULL,
    Is_Valid_In_Production_Document_FileName BIT NOT NULL,
    Is_Valid_In_Production_Document_FileExtension BIT NOT NULL,
    Is_Valid_In_Production_Document_DocumentSummary BIT NOT NULL
);

CREATE NONCLUSTERED INDEX IX_dim_ascii_Ascii_Character ON dbo.dim_ascii(Ascii_Character);
```

In this table, we store the numeric ASCII ID, its corresponding character representation, and a separate bit column for each string cleansing use-case. Even if we devise many different string manipulation needs, the only change to this table that is required is the addition of another bit column. This ensures that this table, even if it becomes wide, will still require little storage and will perform well.

Now let’s populate it with an initial set of characters:

```sql
DECLARE @Ascii_Count SMALLINT = 1;

WHILE @Ascii_Count <= 255
BEGIN
    INSERT INTO dbo.dim_ascii
        (Ascii_ID, Ascii_Character,
         Is_Valid_In_Production_Document_Title,
         Is_Valid_In_Production_Document_FileName,
         Is_Valid_In_Production_Document_FileExtension,
         Is_Valid_In_Production_Document_DocumentSummary)
    SELECT
        @Ascii_Count, CHAR(@Ascii_Count), 0, 0, 0, 0;

    SELECT @Ascii_Count = @Ascii_Count + 1;
END
```

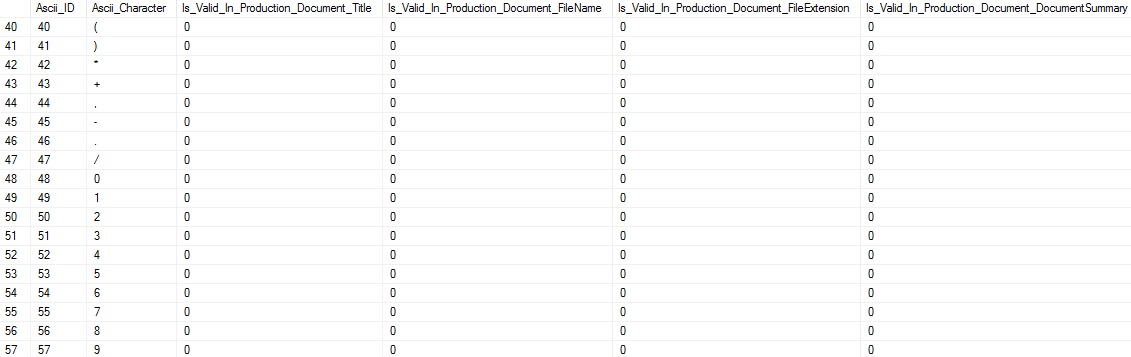

This will loop through every value in the extended ASCII character set and insert its numeric and character equivalent. Not all of these characters will be viewable in a meaningful fashion in our TSQL editor, which is why maintaining and using the ASCII codes is very important! Running a SELECT against the table shows us our progress so far:

The next step is to turn our business rules into sets of ones and zeroes for Dim_Ascii:

```sql
UPDATE dim_ascii
    SET Is_Valid_In_Production_Document_Title = 1
FROM dbo.dim_ascii
WHERE Ascii_ID BETWEEN 32 AND 126;

UPDATE dim_ascii
    SET Is_Valid_In_Production_Document_FileName = 1
FROM dbo.dim_ascii
WHERE Ascii_ID BETWEEN 48 AND 57
OR Ascii_ID BETWEEN 65 AND 90
OR Ascii_ID BETWEEN 97 AND 122
OR Ascii_ID IN (45, 95);

UPDATE dim_ascii
    SET Is_Valid_In_Production_Document_FileExtension = 1
FROM dbo.dim_ascii
WHERE Ascii_ID BETWEEN 48 AND 57
OR Ascii_ID BETWEEN 65 AND 90
OR Ascii_ID BETWEEN 97 AND 122
OR Ascii_ID = 46;

UPDATE dim_ascii
    SET Is_Valid_In_Production_Document_DocumentSummary = 1
FROM dbo.dim_ascii
WHERE Ascii_ID BETWEEN 9 AND 13
OR Ascii_ID BETWEEN 32 AND 126;
```

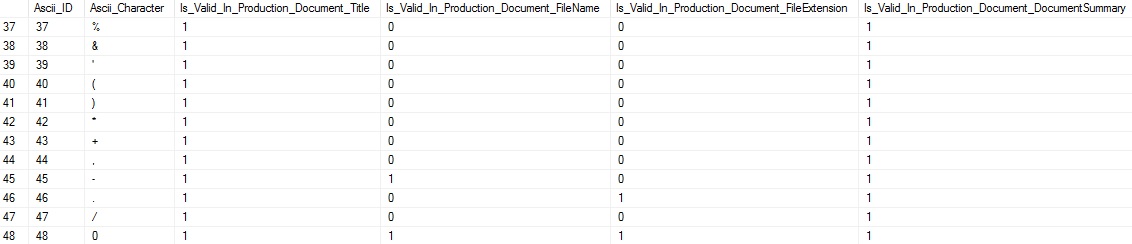

For each of the scenarios introduced, we update the appropriate Dim_Ascii column to indicate when characters are valid for it. The results look like this:

We can see that, depending on the rules for each column, different ASCII characters are allowed (or not allowed). This table can be updated whenever business needs change, new use-cases arise, or we wish to customize any existing data further. Now that this table is complete, we can move on to using this metadata in order to effectively populate the Production.Document table.
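Before moving on, here is a rough sketch of how a new use-case might be added later. The column `Is_Valid_In_Phone_Number`, its default constraint name, and the phone number rule itself (digits, space, parentheses, and dash) are all hypothetical and not part of the Adventureworks example.

```sql
-- Add a new rule column. The default of 0 means every character starts out
-- disallowed, which also lets the NOT NULL column be added to a populated table.
ALTER TABLE dbo.dim_ascii
ADD Is_Valid_In_Phone_Number BIT NOT NULL
    CONSTRAINT DF_dim_ascii_Is_Valid_In_Phone_Number DEFAULT (0);
GO

-- Allow digits (48-57), space (32), parentheses (40, 41), and dash (45).
UPDATE dbo.dim_ascii
    SET Is_Valid_In_Phone_Number = 1
WHERE Ascii_ID BETWEEN 48 AND 57
OR Ascii_ID IN (32, 40, 41, 45);

-- Review the characters that the new rule permits.
SELECT Ascii_ID, Ascii_Character
FROM dbo.dim_ascii
WHERE Is_Valid_In_Phone_Number = 1
ORDER BY Ascii_ID;
```

The GO separator is needed because the UPDATE cannot be compiled in the same batch that adds the column it references.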

```sql
ALTER FUNCTION dbo.Remove_Non_Alphanumeric
    (@String_Parameter VARCHAR(MAX), @String_Filter_Column VARCHAR(50))
RETURNS VARCHAR(MAX)
AS
BEGIN
    DECLARE @Input_String_Characters TABLE
    (   Character_Position INT NOT NULL IDENTITY(1,1) PRIMARY KEY CLUSTERED,
        Character_Ascii_Value SMALLINT NOT NULL );

    IF @String_Parameter IS NULL OR @String_Filter_Column IS NULL
    BEGIN
        RETURN NULL;
    END

    -- Confirm that the requested filter is a BIT column on Dim_Ascii.
    IF NOT EXISTS (SELECT * FROM sys.tables
                   INNER JOIN sys.columns ON columns.object_id = tables.object_id
                   INNER JOIN sys.types ON types.user_type_id = columns.user_type_id
                   WHERE tables.name = 'Dim_Ascii'
                   AND columns.name = @String_Filter_Column
                   AND types.name = 'bit')
    BEGIN
        RETURN NULL;
    END

    DECLARE @Input_String_Length INT = LEN(@String_Parameter);
    DECLARE @Character_Counter INT = 1;

    -- Store the ASCII value of each input character, in order.
    WHILE @Character_Counter <= @Input_String_Length
    BEGIN
        INSERT INTO @Input_String_Characters
            (Character_Ascii_Value)
        SELECT ASCII(SUBSTRING(@String_Parameter, @Character_Counter, 1));

        SELECT @Character_Counter = @Character_Counter + 1;
    END

    -- Flag disallowed characters with -1, based on the chosen rule column.
    IF @String_Filter_Column = 'Is_Valid_In_Production_Document_Title'
    BEGIN
        UPDATE Input_String_Characters
            SET Character_Ascii_Value = -1
        FROM @Input_String_Characters Input_String_Characters
        INNER JOIN dbo.dim_ascii
        ON dim_ascii.Ascii_ID = Input_String_Characters.Character_Ascii_Value
        WHERE dim_ascii.Is_Valid_In_Production_Document_Title = 0;
    END
    ELSE IF @String_Filter_Column = 'Is_Valid_In_Production_Document_FileName'
    BEGIN
        UPDATE Input_String_Characters
            SET Character_Ascii_Value = -1
        FROM @Input_String_Characters Input_String_Characters
        INNER JOIN dbo.dim_ascii
        ON dim_ascii.Ascii_ID = Input_String_Characters.Character_Ascii_Value
        WHERE dim_ascii.Is_Valid_In_Production_Document_FileName = 0;
    END
    ELSE IF @String_Filter_Column = 'Is_Valid_In_Production_Document_FileExtension'
    BEGIN
        UPDATE Input_String_Characters
            SET Character_Ascii_Value = -1
        FROM @Input_String_Characters Input_String_Characters
        INNER JOIN dbo.dim_ascii
        ON dim_ascii.Ascii_ID = Input_String_Characters.Character_Ascii_Value
        WHERE dim_ascii.Is_Valid_In_Production_Document_FileExtension = 0;
    END
    ELSE IF @String_Filter_Column = 'Is_Valid_In_Production_Document_DocumentSummary'
    BEGIN
        UPDATE Input_String_Characters
            SET Character_Ascii_Value = -1
        FROM @Input_String_Characters Input_String_Characters
        INNER JOIN dbo.dim_ascii
        ON dim_ascii.Ascii_ID = Input_String_Characters.Character_Ascii_Value
        WHERE dim_ascii.Is_Valid_In_Production_Document_DocumentSummary = 0;
    END

    DELETE FROM @Input_String_Characters
    WHERE Character_Ascii_Value = -1;

    -- Rebuild the string from the characters that remain.
    DECLARE @Output_String VARCHAR(MAX) = '';
    SELECT @Output_String = @Output_String + CHAR(Character_Ascii_Value)
    FROM @Input_String_Characters Input_String_Characters;

    RETURN @Output_String;
END
```

This function accepts a string input and column name to apply rules from and returns the cleansed string based on those rules. The process is as follows:

- Create a table variable to store the characters from the input string.

- Populate the table variable with each character.

- Join the table variable to Dim_Ascii, setting all values for disallowed characters to -1.

- Delete all rows from the table variable that contain -1 as their ASCII value.

- Recreate the output string from the table variable and return it from the function.

You may look at this TSQL and scratch your head thinking, “Why does a simple operation take so many steps?” The answer is that SQL Server functions cannot contain just any TSQL construct. Since they can be run in-line with table access requests, they are not allowed to have side effects: modifying permanent tables, executing dynamic SQL, returning result sets to the client with SELECT, and other constructs that might prove useful here are all off limits.

Dynamic SQL would have made this an elegant solution in which we could build the exact DELETE statement and join to Dim_Ascii one time, based on whatever table input was provided. In a stored procedure, we’d have more options, but the benefit of a function is its ability to be used easily within other operations. For inserting any amount of data in bulk, this is quite beneficial!

Let’s test out the function for a given input for each string cleansing need:

```sql
SELECT dbo.Remove_Non_Alphanumeric('Test-String number 1!@#$%', 'Is_Valid_In_Production_Document_Title');
```

Title allows any alphanumeric, as well as most symbols. As a result, the output looks the same as the input:

Test-String number 1!@#$%

```sql
SELECT dbo.Remove_Non_Alphanumeric('Test-String number 2!@#$%', 'Is_Valid_In_Production_Document_FileName');
```

Filename is more restrictive and only allows alphanumeric characters, as well as dashes and underscores. The result shows all non-alphanumeric characters removed, except for the lone dash:

Test-Stringnumber2

```sql
SELECT dbo.Remove_Non_Alphanumeric('Test-String number 3!@#$%', 'Is_Valid_In_Production_Document_FileExtension');
```

FileExtension allows only alphanumeric characters and periods. As a result, the output will look like this:

TestStringnumber3

```sql
SELECT dbo.Remove_Non_Alphanumeric('Test-String number 4!@#$%', 'Is_Valid_In_Production_Document_DocumentSummary');
```

Lastly, DocumentSummary allows any characters outside of the system ASCII value range and is unchanged by the application of our function:

Test-String number 4!@#$%

From here, we could load data into that table, or update existing rows in bulk, using the function we created above:

```sql
UPDATE Document
    SET Title = dbo.Remove_Non_Alphanumeric(Document.Title, 'Is_Valid_In_Production_Document_Title'),
        FileName = dbo.Remove_Non_Alphanumeric(Document.FileName, 'Is_Valid_In_Production_Document_FileName'),
        FileExtension = dbo.Remove_Non_Alphanumeric(Document.FileExtension, 'Is_Valid_In_Production_Document_FileExtension'),
        DocumentSummary = dbo.Remove_Non_Alphanumeric(Document.DocumentSummary, 'Is_Valid_In_Production_Document_DocumentSummary')
FROM Production.Document;
```

Customization & Conclusion

This process is made for customization. The TSQL provided is more about ideas to expand on than completed solutions, although any of these functions can be used as-is successfully. If your process allows for its use in a stored procedure instead of a function, then the ability to use dynamic SQL is opened up, allowing for a simplified custom script that automatically matches BIT column names to input parameters and filters accordingly. This would remove the need for any explicitly called out column names within our code, making it even more portable and maintainable.
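One possible shape for such a stored procedure is sketched below. The procedure name `dbo.Cleanse_String`, its OUTPUT parameter, and the temporary table are assumptions for illustration, not a finished implementation. The column name is validated against the catalog and wrapped in QUOTENAME before being concatenated into the dynamic statement.

```sql
-- Hypothetical procedure: cleanses a string using any BIT column on dbo.dim_ascii.
CREATE PROCEDURE dbo.Cleanse_String
    @String_Parameter VARCHAR(MAX),
    @String_Filter_Column SYSNAME,
    @Output_String VARCHAR(MAX) OUTPUT
AS
BEGIN
    SET NOCOUNT ON;

    -- Only accept names that are real BIT columns on dbo.dim_ascii.
    IF NOT EXISTS (SELECT * FROM sys.columns
                   INNER JOIN sys.types ON types.user_type_id = columns.user_type_id
                   WHERE columns.object_id = OBJECT_ID(N'dbo.dim_ascii')
                   AND columns.name = @String_Filter_Column
                   AND types.name = 'bit')
    BEGIN
        RAISERROR('The filter column is not a BIT column on dbo.dim_ascii', 16, 1);
        RETURN;
    END

    -- A temporary table (unlike a table variable) is visible to the
    -- dynamic SQL executed below.
    CREATE TABLE #Input_String_Characters
    (   Character_Position INT NOT NULL PRIMARY KEY CLUSTERED,
        Character_Ascii_Value SMALLINT NOT NULL );

    DECLARE @Character_Counter INT = 1;
    WHILE @Character_Counter <= LEN(@String_Parameter)
    BEGIN
        INSERT INTO #Input_String_Characters
            (Character_Position, Character_Ascii_Value)
        SELECT @Character_Counter, ASCII(SUBSTRING(@String_Parameter, @Character_Counter, 1));

        SELECT @Character_Counter = @Character_Counter + 1;
    END

    -- One DELETE, built for whichever rule column was requested.
    DECLARE @Sql_Command NVARCHAR(MAX) = N'
        DELETE Input_String_Characters
        FROM #Input_String_Characters Input_String_Characters
        INNER JOIN dbo.dim_ascii
        ON dim_ascii.Ascii_ID = Input_String_Characters.Character_Ascii_Value
        WHERE dim_ascii.' + QUOTENAME(@String_Filter_Column) + N' = 0;';

    EXEC sp_executesql @Sql_Command;

    -- Rebuild the string the same way the function above does.
    SET @Output_String = '';
    SELECT @Output_String = @Output_String + CHAR(Character_Ascii_Value)
    FROM #Input_String_Characters;
END
GO

DECLARE @Result VARCHAR(MAX);
EXEC dbo.Cleanse_String
    @String_Parameter = 'Test-String number 2!@#$%',
    @String_Filter_Column = N'Is_Valid_In_Production_Document_FileName',
    @Output_String = @Result OUTPUT;
SELECT @Result;
```

With this approach, adding a new BIT column to Dim_Ascii requires no change to the procedure at all, at the cost of no longer being callable in-line from a query.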

The use of a dimension table to store information about how and what we do to clean up string inputs allows for all of this logic to be stored in a single place. This removes the need for many disparate stored procedures, TSQL blocks, or other business logic being spread throughout our schema. For simple/singular needs, though, a function using RegEx or ASCII ranges can accomplish what we want with relatively minimal effort.

For any inputs that originate from outside of your control, i.e. the internet, always check that string inputs are valid and if you are using any dynamic SQL, ensure they are properly delimited to prevent any SQL injection, string search manipulation, or other bad behavior by your users.

Lastly, if possible, have the application and SQL Server objects validate and cleanse strings. Layering string cleansing greatly reduces the chance of bad inputs making it into the database and helps keep your data in an acceptable form. If rules are very specific and difficult to follow, also consider check constraints to control what can be stored in a table. If a given column doesn’t allow certain characters, then the need to cleanse exists more for error prevention than data integrity.
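As an illustration of the check constraint idea, the sketch below enforces the FileExtension rule (alphanumeric characters and periods only) on a hypothetical table. The table name `dbo.Document_Staging` and the constraint names are assumptions; the binary collation is applied so that the A-Z and a-z ranges match only unaccented letters.

```sql
-- Hypothetical table with a rule enforced at the storage layer.
CREATE TABLE dbo.Document_Staging
(   Document_ID INT NOT NULL CONSTRAINT PK_Document_Staging PRIMARY KEY CLUSTERED,
    FileExtension VARCHAR(8) NOT NULL,
    -- Reject any value containing a character outside letters, digits, and period.
    CONSTRAINT CK_Document_Staging_FileExtension
        CHECK (FileExtension COLLATE Latin1_General_BIN NOT LIKE '%[^A-Za-z0-9.]%')
);

-- Succeeds: every character is allowed.
INSERT INTO dbo.Document_Staging (Document_ID, FileExtension)
VALUES (1, '.docx');

-- Fails with a CHECK constraint violation (error 547) because of the space.
INSERT INTO dbo.Document_Staging (Document_ID, FileExtension)
VALUES (2, '.do c');
```

A constraint like this rejects bad data rather than repairing it, so it works best as a final safety net behind the cleansing logic.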
