Having worked in busy dev-test environments, it isn’t uncommon to come across situations where someone detached a database from an SQL server, but forgot to reattach it, or drop it from the server. The next task we are then required to undertake, upon coming across such a situation, is to clean up the traces. This task can be complicated if the detached databases get deregistered from the SQL Server metadata catalog.

Of course, there’s a way to track these using T-SQL. All we need to do is enable extended stored procedure (XP) and list all the files, and then compare them with the database files. Handling files using T-SQL is not the ideal approach, though. Also, running multi-database scripts can be cumbersome using T-SQL. So, how about using a PowerShell script to find out the detached databases on the local or remote drives of any database instance of any server?

Introduction

In this article, we take the sledgehammer approach in searching for MDFs and then comparing them against files which are available on the listed drive. The idea behind this is to generate a report of detached database files across multiple servers. That’s one way to keep our databases compliant in terms of meeting SLAs, and ensuring optimal performance. Running such reports periodically helps us to achieve compliance in terms of file auditing.

At times, finding files seems like a necessary evil for IT pros; like finding a needing in a haystack. Imagine tens or hundreds of computers being the haystack! Is Get-ChildItem a good option? Sure, it works, but it could be considerably resource-intensive when working on an entire hard drive.

Okay, so, the other way to go about it is to use the CIM_Datafile class of the WMI. This can be done using PowerShell 3.0 or higher. And as far as I know, WMI has the files registered as well. We would have to go about querying all the instances of the CIM_Datafile class. But it is as time-consuming as Get-ChildItem. If used wisely, though—like being specific with the query, with respect to the properties you look for—it can become an efficient and effective solution.

Let’s now look at the highlights of the script we would write to handle the task of finding such detached files:

- Works across multiple servers

- Finds any detached file across multiple all instances on each server

- Customized code to scan the drives

- Use of credentials

- Detailing the file details such as path, name and size

- And more…

Getting started

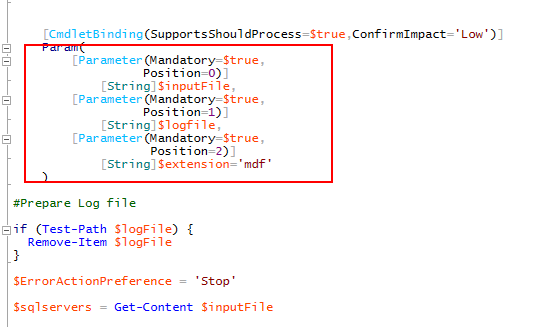

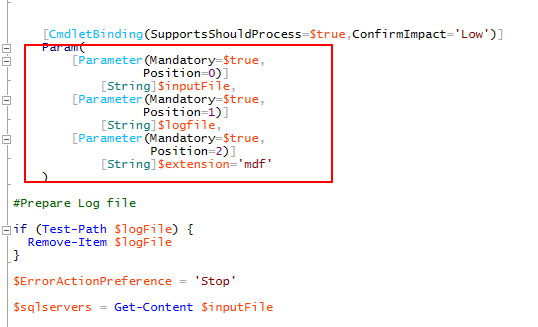

Step 1: Input parameter declaration

The parameters Inputfile, logfile, filtering parameter file-extension are defined and declared. The path of the log file is verified and recreated, if it exists.

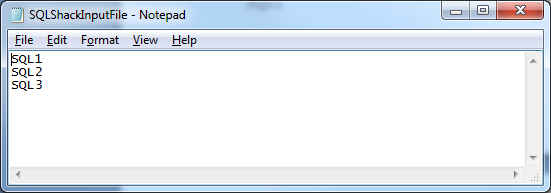

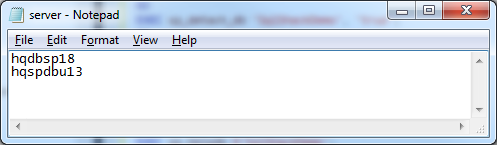

The input file should contain the list of the SQL instances which we’d like the report for. For example, the input file should look something like this:

SQL1

SQL2

SQL3

The following PowerShell script takes a text file with server names as input, and iterates through the list. It first tests of the server is reachable, and then lists out the active database files. The script also logs its progress in a separate log file. Database file extension and drive letters are used for filtering.

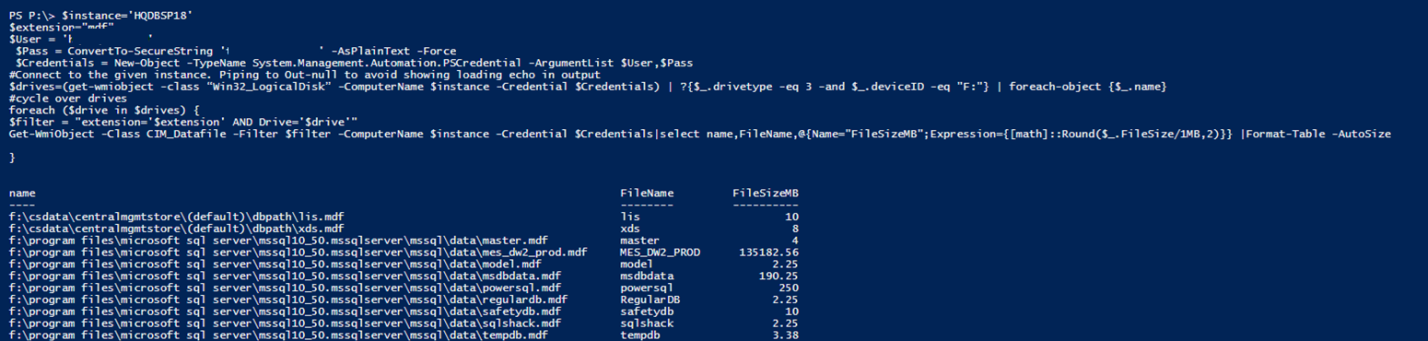

The script takes in the username and the password, and stores these values in $Credentials. This variable is then passed as a parameter for Get-WmiObject.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

$instance='HQDBSP18' $extension="mdf" $User = 'abc\abcd' $Pass = ConvertTo-SecureString 'SQLShack@2017' -AsPlainText -Force $Credentials = New-Object -TypeName System.Management.Automation.PSCredential -ArgumentList $User,$Pass #Connect to the given instance. Piping to Out-null to avoid showing loading echo in output $drives=(get-wmiobject -class “Win32_LogicalDisk” -ComputerName $instance -Credential $Credentials) | ?{$_.drivetype -eq 3 -and $_.deviceID -eq "F:"} | foreach-object {$_.name} #cycle over drives foreach ($drive in $drives) { $filter = "extension='$extension' AND Drive='$drive'" Get-WmiObject -Class CIM_Datafile -Filter $filter -ComputerName $instance -Credential $Credentials|select name,FileName,@{Name="FileSizeMB";Expression={[math]::Round($_.FileSize/1MB,2)}} |Format-Table -AutoSize } |

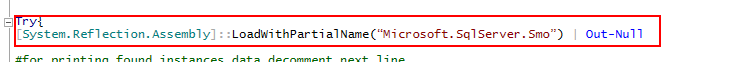

Step 2: Loading SMO libraries

If you know the full assembly name, use the. Load() method.

PS:>[reflection.assembly]::Load(“Microsoft.SqlServer.Smo, Version=13.0.0.0, Culture=neutral, PublicKeyToken=89845dcd8080cc91”)

or

PS:>Add-Type -Assembly “Microsoft.SqlServer.Smo, Version=13.0.0.0, Culture=neutral, PublicKeyToken=89845dcd8080cc91”

Note: The LoadWithPartialName has been deprecated. The recommended solution now is to use the Add-Type or load a specific library.

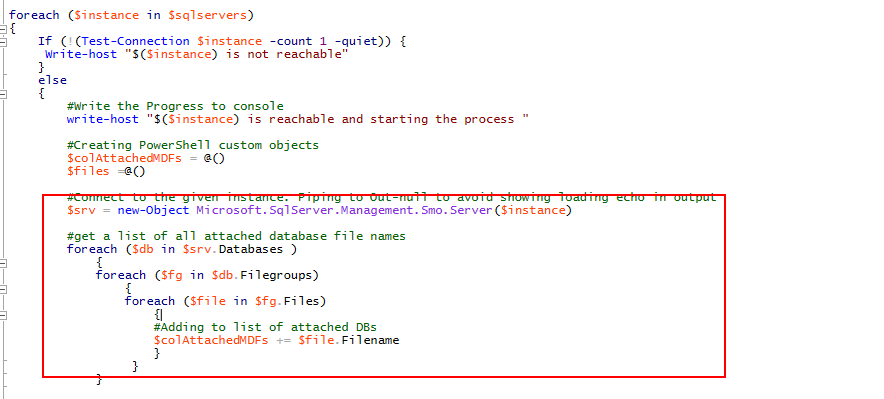

Step 3: Looping through each server to list active data files

For each server, the connection is opened, and all the active data files are retrieved. The result set is prepared for step 4.

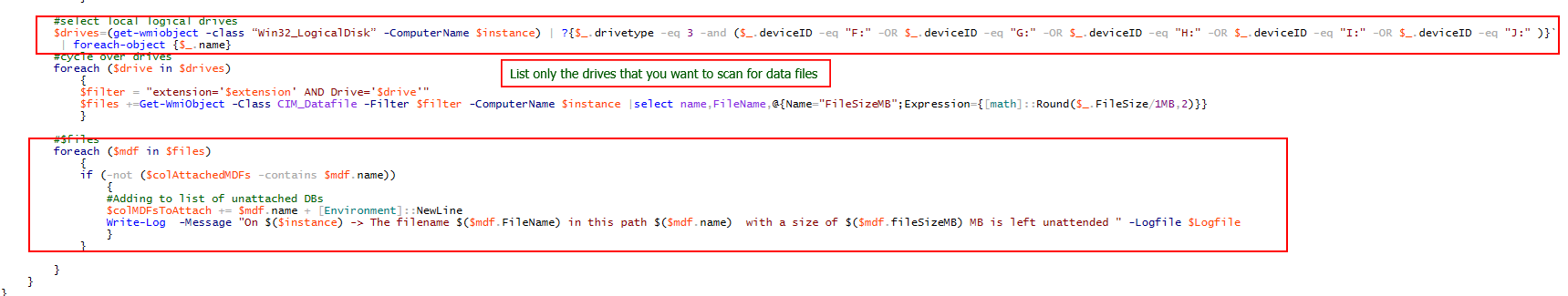

Step 4: List and compare the mdf files

This steps list the mdf files from the listed drives. The generated files are compared with the active data files.

It helps if we customize the drives. This way we can significantly reduce the traversing time of the query.

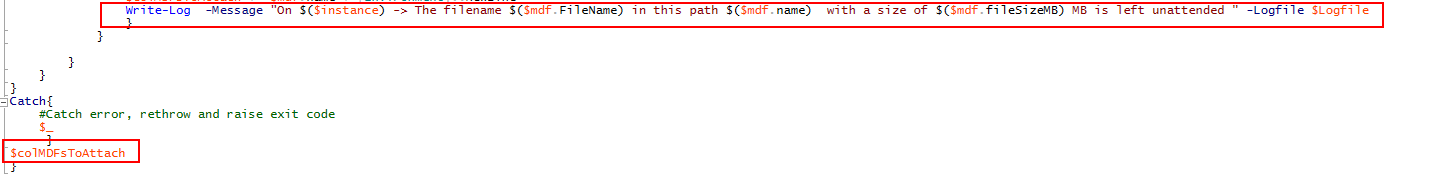

Step 5: Write the output to a log file and to the console

The complete PowerShell script is given in Appendix A.

Demonstration

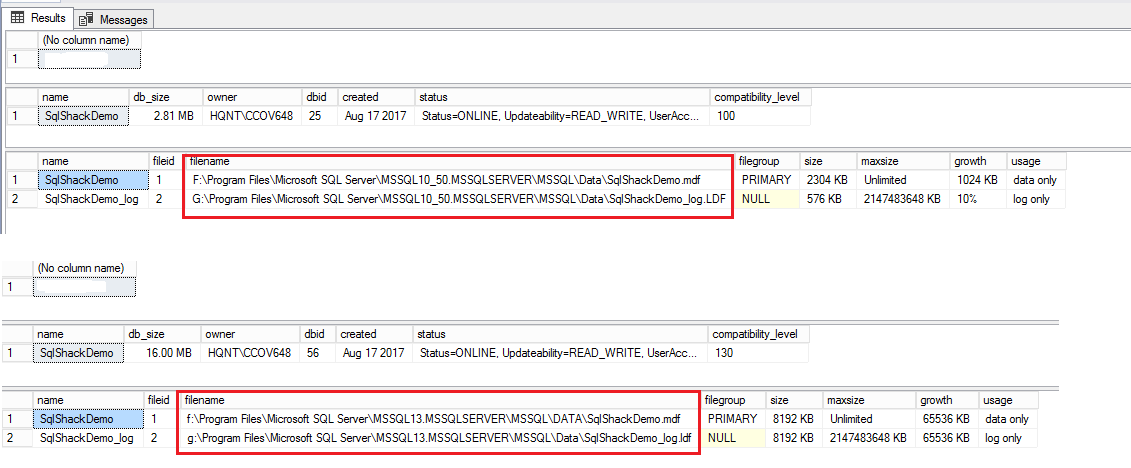

For the purpose of demonstration, we’ve created a new database called sqlShackDemo on two servers. After creating the databases, we’ll run a series of SQL statements to get the file locations, and detach the databases. SQLCMD is used to perform series of SQL operations.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 |

:connect DBSP18 GO SELECT @@Servername GO CREATE DATABASE SqlShackDemo; GO EXEC sp_helpdb N'SqlShackDemo'; GO EXEC sp_detach_db 'SqlShackDemo', 'true'; GO :connect spdbu13 GO SELECT @@Servername GO CREATE DATABASE SqlShackDemo; GO EXEC sp_helpdb N'SqlShackDemo'; GO EXEC sp_detach_db 'SqlShackDemo', 'true'; |

The output shows that the database SQLShackDemo is created. The sp_helpdb system stored procedure is used to generate the metadata for the database. sp_detachdb is used to detach the database from database engine.

Input file

The input file should have the names of the two database instances.

There are, in fact, two ways to run the script:

- Modify the script, and save the file

- Create a function and manually call the function by passing the required arguments

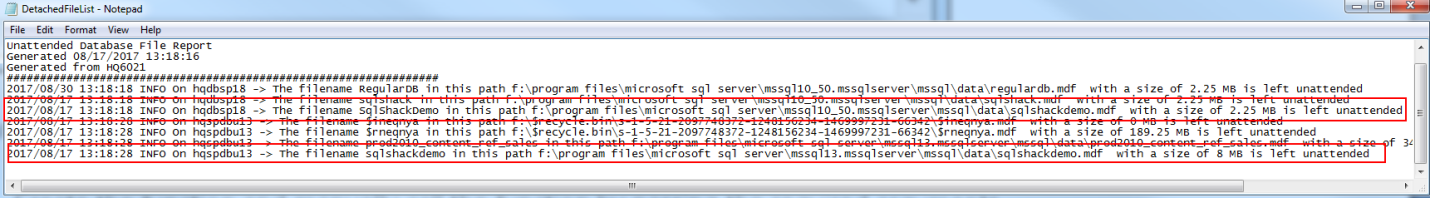

The output shows the detached files from the system.

Conclusion

Orphaned database files eat up a lot of space in our environments.. Finding those file can be labor intensive. In this article, we discussed the different ways to find and handle such orphaned data files. One of the ways we’ve focused on is using a multi-server PowerShell script, which is a very efficient way of finding out the unattended files from the entire environment. Periodic generation of such detached reports comes in handy when cleaning up the system. This way, we can prevent many space-related problems on the servers. The script and the report it generates also helps us keep an eye on the detached files on each of the database instances we list in the input file, thereby ensuring that we’re compliant in terms of resource utilization, apart from maintaining space effectively.

Appendix A

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 |

Function Get-SQLUnattendedFile { <# .Synopsis The objective of the script is to make use of Input file in which it consists of list of database server instances as a source for various parts of the script. .Description This article is taking the sledge hammer approach and searching for mdf's and then comparing them against files which are available on the listed drive. Function to log Output and display the details on the console. .Parameter InputFile Path to the file where the input details are saved. Example: c:\InputServer.txt .Parameter LogFile The file logs all the information about the detached files or orphan file along with its size. This also contains the source of the server. .Example Write-Log -Message "$($Server) is reachable and starting the process " -Logfile $Logfile .Example Write-Log -Message "$(.Server) message " -Logfile $Logfile .Example Get-SQLUnattendedFile -inputfile c:\server.txt -logfile c:\DetachedFileList.txt .Link https://powershellsql.wordpress.com/ Jump #> [CmdletBinding(SupportsShouldProcess=$true,ConfirmImpact='Low')] Param( [Parameter(Mandatory=$true, Position=0)] [String]$inputFile, [Parameter(Mandatory=$true, Position=1)] [String]$logfile, [Parameter(Mandatory=$true, Position=2)] [String]$extension='mdf' ) #Prepare Log file if (Test-Path $logFile) { Remove-Item $logFile } $ErrorActionPreference = 'Stop' $sqlservers = Get-Content $inputFile # Prepare headers for the log file for each execution of script Add-Content $logFile "#################################################################" Add-Content $logFile "Unattended Database File Report" Add-Content $logFile "Generated $(get-date)" Add-Content $logFile "Generated from $(gc env:computername)" Add-Content $logFile "#################################################################" Function Write-Log { [CmdletBinding()] Param( [Parameter(Mandatory=$False)] [ValidateSet("INFO","WARN","ERROR")] [String] $Level = "INFO", [Parameter(Mandatory=$True)] [string] $Message, [Parameter(Mandatory=$False)] [string] $logfile ) $Stamp = (Get-Date).toString("yyyy/MM/dd HH:mm:ss") $Line = "$Stamp $Level $Message" If($logfile) { Add-Content $logfile -Value $Line } Else { Write-Output $Line } } Try{ [System.Reflection.Assembly]::LoadWithPartialName(“Microsoft.SqlServer.Smo”) | Out-Null #for printing found instances data uncomment next line #$Instances foreach ($instance in $sqlservers) { If (!(Test-Connection $instance -count 1 -quiet)) { Write-host "$($instance) is not reachable" } else { #Write the Progress to console write-host "$($instance) is reachable and starting the process " #Creating PowerShell custom objects $colAttachedMDFs = @() $files =@() #Connect to the given instance. Piping to Out-null to avoid showing loading echo in output $srv = new-Object Microsoft.SqlServer.Management.Smo.Server($instance) #get a list of all attached database file names foreach ($db in $srv.Databases ) { foreach ($fg in $db.Filegroups) { foreach ($file in $fg.Files) { #Adding to list of attached DBs $colAttachedMDFs += $file.Filename } } } #select local logical drives $drives=(get-wmiobject -class “Win32_LogicalDisk” -ComputerName $instance) | ?{$_.drivetype -eq 3 -and ($_.deviceID -eq "F:" -OR $_.deviceID -eq "G:" -OR $_.deviceID -eq "H:" -OR $_.deviceID -eq "I:" -OR $_.deviceID -eq "J:" )} | foreach-object {$_.name} #cycle over drives foreach ($drive in $drives) { $filter = "extension='$extension' AND Drive='$drive'" $files +=Get-WmiObject -Class CIM_Datafile -Filter $filter -ComputerName $instance |select name,FileName,@{Name="FileSizeMB";Expression={[math]::Round($_.FileSize/1MB,2)}} } #$files foreach ($mdf in $files) { if (-not ($colAttachedMDFs -contains $mdf.name)) { #Adding to list of unattached DBs $colMDFsToAttach += $mdf.name + [Environment]::NewLine Write-Log -Message "On $($instance) -> The filename $($mdf.FileName) in this path $($mdf.name) with a size of $($mdf.fileSizeMB) MB is left unattended " -Logfile $Logfile } } } } } Catch{ #Catch error, rethrow and raise exit code $_ } $colMDFsToAttach } Get-SQLUnattendedFile -inputfile c:\server.txt -logfile c:\DetachedFileList.txt Invoke-item c:\DetachedFileList.txt |

- Stairway to SQL essentials - April 7, 2021

- A quick overview of database audit in SQL - January 28, 2021

- How to set up Azure Data Sync between Azure SQL databases and on-premises SQL Server - January 20, 2021