Backup and recovery are some of the most important DBA tasks, although they look simple enough and usually you setup them, leave them running on schedule and only come back if they fail – there is a whole new world in regards to optimization you can do to make them faster, better and … smaller.

But why bother? Considering that the modern databases grow at such fast pace you may face a situation where you are not able to fit in your maintenance windows or service contract obligations. Let us take a look on the three main areas where we can work on:

Part one, optimize the database for faster backup and recovery operations, part two, optimize the exact process of database backup and part three, optimize the backup itself.

Before diving deep, let us take a look on the lab environment we will be working today with – We are having couple of azure machines running on MS SQL Server 2014 SP1 running on Windows 2012 R2. The databases data and log files are spread among different disks, the tempdb is having its own disks. In addition we are having a dedicated backup volume as well.

| TIER |

CPU CORES | MEMORY |

MAX. DATA DISK |

MAX. IOPS (300 PER DISK) |

| A3 | 4 cores | 7 GB | 8 | 8×500 |

The database that we will be using for tests is an AdventureWorks2008R2 enlarged* up to 17GB (only).

Part one, Optimize the database

At the moment we are having one database file, one log file and one backup file. Standard backup operations are happening in just over 36 minutes, backing up 1823434 pages in a 14GB backup file.

|

1 2 3 4 5 6 7 8 9 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2.bak' WITH NOFORMAT, NOINIT GO Processed 1823456 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 1823458 pages in 2159.616 seconds (6.596 MB/sec). |

To optimize the database it is important for you to be familiar with the objects in it. With AdventureWorks database I will review the two biggest objects Sales.SalesOrderDetailEnlarged and Sales.SalesOrderHeaderEnlarged and sample the possible size reductions if I apply row or page compression for them.

Sample the possible savings using the stored procedure “sp_estimate_data_compression_savings”:

| Object name | IndexID | Type | Current Size(KB) | Estimated(KB) | Sample Current Size(KB) | Estimated Comp Sample(KB) |

| SalesOrderDetailEnlarged | 1 | ROW | 6445472 | 4641120 | 27120 | 19528 |

| SalesOrderDetailEnlarged | 2 | ROW | 2158240 | 2464576 | 9976 | 11392 |

| SalesOrderHeaderEnlarged | 1 | ROW | 2539304 | 1764888 | 39584 | 27512 |

| SalesOrderDetailEnlarged | 1 | PAGE | 6445472 | 3312688 | 26368 | 13552 |

| SalesOrderDetailEnlarged | 2 | PAGE | 2158240 | 2459472 | 9744 | 11104 |

| SalesOrderHeaderEnlarged | 1 | PAGE | 2539304 | 1228808 | 38552 | 18656 |

The sample results represent a portion of the data within the object compressed by the SQL Server – be aware that the final compression may have different ratios.

Judging by the results we will have best results using PAGE compression. You can apply the compression per index or on the whole table as follows:

|

1 2 3 4 5 6 7 8 9 10 |

USE [AdventureWorks2008R2] GO ALTER TABLE Sales.SalesOrderDetailEnlarged REBUILD PARTITION = ALL WITH (DATA_COMPRESSION = PAGE); GO ALTER TABLE Sales.SalesOrderHeaderEnlarged REBUILD PARTITION = ALL WITH (DATA_COMPRESSION = PAGE); GO |

The results from the compression:

| Table | Original(KB) | Compressed(KB) |

| SalesOrderDetailEnlarged | 6425624 | 2116376 |

| SalesOrderHeaderEnlarged | 2529792 | 1149608 |

Comparing the duration of the backup operation:

|

1 2 3 4 5 6 7 8 9 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2.bak' WITH NOFORMAT, NOINIT GO Processed 1110760 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 1110762 pages in 1653.560 seconds (5.247 MB/sec). |

Processed 1110760 pages for database ‘AdventureWorks2008R2’, file ‘AdventureWorks2008R2_Data’ on file 1.

Processed 2 pages for database ‘AdventureWorks2008R2’, file ‘AdventureWorks2008R2_Log’ on file 1.

BACKUP DATABASE successfully processed 1110762 pages in 1653.560 seconds (5.247 MB/sec).

The backup operation completed in 27 minutes, 8 minutes faster and the backup files is 5.5GB smaller.

We can move even further and split the database in two parts, this will help us by doubling the read operations for the backup process. To achieve this we will create a new database file within a new filegroup named SECONDARY, after that we will move roughly 50% of the database objects in terms of size there.

Creating the new FILEGROUP:

|

1 2 3 4 5 6 |

USE [master] GO ALTER DATABASE [AdventureWorks2008R2] ADD FILEGROUP [SECONDARY] GO |

Creating a new file J:\AdventureWorks2008R2_Data2.ndf in the filegroup SECONDARY.

|

1 2 3 4 5 6 7 8 9 |

USE [master] GO ALTER DATABASE [AdventureWorks2008R2] MODIFY FILE (NAME = N'AdventureWorks2008R2_Data', SIZE = 16977920KB) GO ALTER DATABASE [AdventureWorks2008R2] ADD FILE (NAME = N'AdventureWorks2008R2_Data2', FILENAME = N'J:\AdventureWorks2008R2_Data2.ndf, SIZE = 16977920KB, FILEGROWTH = 1048576KB) TO FILEGROUP [SECONDARY] GO |

Rebuilding the Clustered index of the biggest table with specifying the exact filegroup in order to move it to the new file:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

USE [AdventureWorks2008R2] GO --- Dropping the clustered index ALTER TABLE [Sales].[SalesOrderDetailEnlarged] DROP CONSTRAINT [PK_SalesOrderDetailEnlarged_SalesOrderID_SalesOrderDetailID] GO -- Creating the cluster index again ALTER TABLE [Sales].[SalesOrderDetailEnlarged] ADD CONSTRAINT [PK_SalesOrderDetailEnlarged_SalesOrderID_SalesOrderDetailID] PRIMARY KEY CLUSTERED ( [SalesOrderID] ASC, [SalesOrderDetailID] ASC) WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, SORT_IN_TEMPDB = OFF, IGNORE_DUP_KEY = OFF, ONLINE = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [SECONDARY] --- This one is important GO |

The backup operation is reading from two data files as you can see from the outcome:

|

1 2 3 4 5 6 7 8 9 10 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2.bak' WITH NOFORMAT, NOINIT GO Processed 704048 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 267384 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data2' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 971434 pages in 1078.168 seconds (7.039 MB/sec). |

Benefiting from the two streams we have managed to save 10 more minutes from the duration of the backup operation.

You can consider the SQL trace flag –T1117** when using multiple data files – it will cause all the files in a filegroup to auto grow together by their specified auto grow increment. Note that this affects all databases on the server you enable it.

Part two, Optimizing the backup process

Now that we are having two data files there are two read IO steams reading the data during the backup process but a single one writing the data into the backup container. To further improve the process we can back up the database in two backup files. The data will be split among the two containers and you will need both of them during recovery operations.

|

1 2 3 4 5 6 7 8 9 10 11 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2P1.bak', DISK = N'N:\AdventureWorks2008R2P2.bak' WITH NOFORMAT, INIT GO Processed 704048 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 267384 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data2' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 971434 pages in 712.489 seconds (10.651 MB/sec). |

Using two write streams saved us some more time, but be cautious as splitting the backup containers into multiple files complicates the recovery operations a bit.

Now let’s tweak some of the parameters that are available to the BACKUP command – MAXTRANSFERSIZE and BUFFERCOUNT.

MAXTRANSFERSIZE specifies the unit of transfer used by the SQL Server to perform the backups. The default value is 1024MB – the possible values are multiples of 65536 bytes (64KB) ranging up to 4MB.

BUFFERCOUNT determines the number of IO buffers used by the backup operations. The values for it are dynamically calculated by the MSSQL Server, however they are not always optimal. However be cautious as very high values may lead to ‘out of memory’ errors.

The two parameters do work together – BUFFERCOUNT determines how many IO buffers you will be working with and MAXTRANSFERSIZE sets how full this buffers will be. IO buffers = BUFFERCOUNT * MAXTRANSFERSIZE

You can enable two trace flags to see additional information about your backups and to see the calculated BUFFERCOUNT by the SQL server:

3605 – That send the output to errorlog; and 3213 – Which provide information about backup or restore throughput and other configurations.

To tweak the MAXTRANSFERSIZE we can do the following:

|

1 2 3 4 5 6 7 8 9 10 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2P1.bak', DISK = N'N:\AdventureWorks2008R2P2.bak' WITH NOFORMAT, INIT, MAXTRANSFERSIZE = 2097152 Processed 704048 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 267384 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data2' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 971434 pages in 495.826 seconds (15.306 MB/sec). |

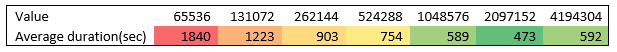

And these are the average results using every possible value:

The default value SQL server calculated for my backup was 14, I found that the optimal is close to XYZ. In order to tweak the BUFFERCOUNT we can do the following:

|

1 2 3 4 5 6 7 8 9 10 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2P1.bak', DISK = N'N:\AdventureWorks2008R2P2.bak' WITH NOFORMAT, INIT, MAXTRANSFERSIZE = 2097152, BUFFERCOUNT = 50 Processed 704048 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 267384 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data2' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 971434 pages in 351.935 seconds (21.564 MB/sec). |

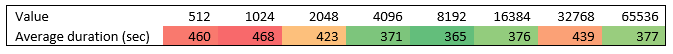

And again this are the results using different values.

Part three, Optimize the backup

There are two possibilities for us here – we can increase the backup speed a little bit more, and reduce the size of the backup.

The last parameter for the BACKUP command is BLOCKSIZE. It specifies the physical block size, in bytes. The supported sizes are from 512 bytes to 65536 (64 KB) bytes. The default for backup containers on disk drivers is 512 bytes and 64KB for tape devices. The command is as follows:

|

1 2 3 4 5 6 7 8 9 10 11 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2P1.bak', DISK = N'N:\AdventureWorks2008R2P2.bak' WITH NOFORMAT, INIT, MAXTRANSFERSIZE = 2097152 , BUFFERCOUNT = 50, BLOCKSIZE = 8192 Processed 704048 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 267384 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data2' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 971434 pages in 390.318 seconds (19.443 MB/sec). |

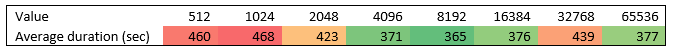

Below are the tests from using all possible values;

To get the last possible increase in terms of speed and size we will be using backup compression. It is now available in Standard edition as well from SQL 2008R2. It uses a zip type compression and the size of the backup is reduced during its creation – meaning that in most scenarios the backup will be not only smaller but faster (considering that you are not storing only jpegs and you use encryption). This is the easiest and most transparent way to optimize the backup operations. We can create a SQL compressed backup as follows:

|

1 2 3 4 5 6 7 8 9 10 11 |

BACKUP DATABASE [AdventureWorks2008R2] TO DISK = N'N:\AdventureWorks2008R2P1.bak', DISK = N'N:\AdventureWorks2008R2P2.bak' WITH NOFORMAT, INIT, MAXTRANSFERSIZE = 2097152 , BUFFERCOUNT = 50, BLOCKSIZE = 8192, COMPRESSION Processed 704048 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data' on file 1. Processed 267384 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Data2' on file 1. Processed 2 pages for database 'AdventureWorks2008R2', file 'AdventureWorks2008R2_Log' on file 1. BACKUP DATABASE successfully processed 971434 pages in 230.368 seconds (32.944 MB/sec). |

You can also alter the Server wide settings so all backup are created compressed – be sure to check if all your SQL servers support compressed backups.

The backup operation completed at ~230 seconds, ~90% faster than the original we started with; the size is 2.5GB, 83% smaller than the original. And you are guessing it right – the recovery operations will be faster as well.

References

- Performance tuning for Azure SQL Databases - July 21, 2017

- Deep dive into SQL Server Extended events – The Event Pairing target - May 30, 2017

- Deep dive into the Extended Events – Histogram target - May 24, 2017