Summary

There are a variety of ways of managing data to insert into SQL Server. How we generate and insert data into tables can have a profound impact on performance and maintainability! This topic is often overlooked as a beginner’s consideration, but mistakes in how we grow objects can create massive headaches for future developers and administrators.

In this article we will explore the different ways we can create and insert data into both permanent and temporary objects. Performance, syntax, documentation, and maintainability will be evaluated for each method. By delving deeper into this topic, we can improve database design, script quality, and build objects that are easier to upkeep and less likely to break due to maintenance or software releases.

Demo Data

All demos in this article will use new objects we create here. This will allow us full reign to customize, test, and break it independently of anything else we are working on.

The following is the TSQL to create a table called dbo.accounts:

|

1 2 3 4 5 6 7 8 9 |

CREATE TABLE dbo.account ( account_id INT NOT NULL IDENTITY(1,1) CONSTRAINT PK_account PRIMARY KEY CLUSTERED, account_name VARCHAR(100) NOT NULL, account_start_date DATE NOT NULL, account_address VARCHAR(1000) NOT NULL, account_type VARCHAR(10) NOT NULL, account_create_timestamp DATETIME NOT NULL, account_notes VARCHAR(500) NULL, is_active BIT NOT NULL); |

This is a fairly simple table with an identity ID and some string/date columns for account data. As we work through this article, we will add and remove columns, as well as customize this further.

Insert Data into SQL Server Using an Explicit Column List

Let’s begin by diving straight into some of the simplest of syntaxes in T-SQL: The INSERT statement. The most common way to insert rows into a table is by doing so with an INSERT statement where we explicitly cite the entire column list prior to providing the values:

|

1 2 3 4 5 6 7 8 9 10 |

INSERT INTO dbo.account (account_name, account_start_date, account_address, account_type, account_create_timestamp, account_notes, is_active) VALUES ('Ed''s Account', '5/1/2019', 'Ed''s Address', 'TEST', GETUTCDATE(), 'This is a test account to model this data.', 0); |

In this example, we provide a complete column list and use the VALUES syntax to list out scalar values to insert into the table. If desired, we can insert multiple rows via this syntax, separating each row by a comma.

We also have the option of omitting columns from the column and SELECT lists. This can be used for columns that allow NULL (and we want to be left NULL), or for columns that have default constraints defined on them (and we want the column to accept the default value). The following example shows an account insertion where we omit the account_notes column:

|

1 2 3 4 5 6 7 8 9 |

INSERT INTO dbo.account (account_name, account_start_date, account_address, account_type, account_create_timestamp, is_active) VALUES ('Initech', '2/19/1999', '4120 Freidrich Ln.', 'LIVE', GETUTCDATE(), 1); |

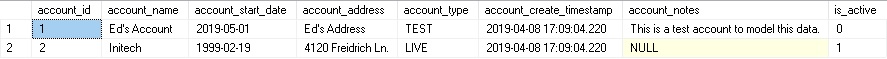

After the two above insertions, we can view the resulting data and note that the results are what we expect:

SQL Server allowed us to omit the account_notes column and in doing so assigned NULL in its place. Let’s add a default constraint to this column:

|

1 |

ALTER TABLE dbo.account ADD CONSTRAINT DF_account_account_notes DEFAULT ('NONE PROVIDED') FOR account_notes; |

With a default constraint on the column, we may test another INSERT where we intentionally leave out the account_notes column:

|

1 2 3 4 5 6 7 8 9 |

INSERT INTO dbo.account (account_name, account_start_date, account_address, account_type, account_create_timestamp, is_active) SELECT 'Dinosaur Corp', '1/1/2003', 'The Triassic Time Period', 'DEMO', GETUTCDATE(), 1; |

The results show us how the new row looks in our table:

We can see that the default value from the constraint was applied to account_notes, as expected. Creating a default constraint can be useful for ensuring that a column can be made NOT NULL and always be assigned a value. It is also useful when we wish to have a column that typically is not assigned a value, but requires one for an application or reporting purpose. A default constraint should never be used to generate placeholder, fake, or obfuscated data. For example, -1 is a poor choice for an integer column and 1/1/1900 is a lousy choice for a date column as each provides confusing meaning that is not intuitive to a developer or someone consuming this data.

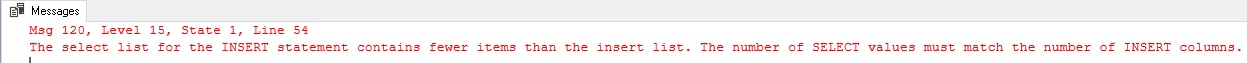

The primary benefit of inserting data with an explicit column list are that you document exactly what columns are being populated, and what data is being put into each column. If a column is left off the list, then it will be made NULL. If a NOT NULL column with no default constraint is left off of the list, an error will be thrown, similar to this:

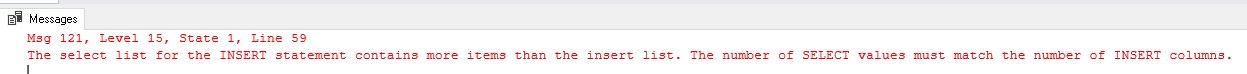

Similarly, if you accidentally leave off a column from the column list, you’ll get this error:

As a result, the explicitly provided column list makes it hard to accidentally leave columns out.

This syntax has a downside, though, and that is maintainability in scenarios where table schema changes often and there is a desire to always SELECT *. If you are dumping data to an output table and do not care about column order, typing, or quantity, then having to always adjust the column list to match the SELECT details may be a hassle and not worth the effort.

Insert Data into SQL Server Without an Explicit Column List

This leads us into the alternative way to insert data into an existing table, which is to do so without a column list. An insert of this form will look like this:

|

1 2 3 4 5 6 7 8 9 |

INSERT INTO dbo.account SELECT 'The Pokemon Company', '4/23/1998', 'Roppongi Hills Mori Tower 8F, Tokyo, Japan', 'LIVE', GETUTCDATE(), 'Very valuable. They make all the Pokemon!', 1; |

This statement executes successfully, despite no column list being provided. When SQL Server binds this query to its underlying objects, it will pull the column list in order and apply it to the data being inserted. If everything matches up, then we’re good to go, otherwise we will receive an error similar to the two we saw earlier that indicate column lists that do not match up.

The upside (if we wish to call it an upside) of this approach is that it is fast and requires less upkeep as there’s no need to write and maintain a list of columns with the insert. The downside is that if you mismatch columns, you may get a different sort of error message. Consider the following query:

|

1 2 3 4 5 6 7 8 |

INSERT INTO dbo.account SELECT 'The Pokemon Company', '4/23/1998', 'Roppongi Hills Mori Tower 8F, Tokyo, Japan', GETUTCDATE(), 'Very valuable. They make all the Pokemon!', 0; |

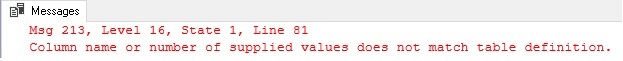

When executed, we get a new error message:

In this scenario, we left out a column, but since this table includes NULLable columns, SQL Server tried to match up the table’s columns anyway using the data we provided, but was unable to make a meaningful match.

Because of the potential for confusing errors and the inability to easily match up columns to data, inserting into a table without providing a column list is not seen as a best practice and should be avoided whenever possible. I would recommend including a column list in all INSERT statements unless you happen to have a very unique use-case in which the convenience of the simpler syntax outweighs the risk of future code breaking if the table’s schema changes.

In addition, the column list provides clear documentation to the user as to what is being inserted and which columns the data will be going into. We can improve upon this further if we wish by adding aliases to all inserted columns:

|

1 2 3 4 5 6 7 8 9 10 |

INSERT INTO dbo.account (account_name, account_start_date, account_address, account_type, account_create_timestamp, account_notes, is_active) SELECT 'Microsoft' AS account_name, '4/4/1975' AS account_start_date, 'One Microsoft Way in Redmond, Washington' AS account_address, 'LIVE' AS account_type, GETUTCDATE() AS account_start_date, 'They make SQL Server. Thanks!' AS account_notes, 1 AS is_active; |

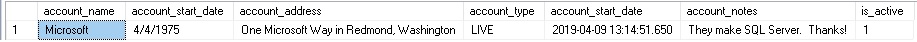

Adding column aliases may seem like overkill, but when working with longer INSERT statements, dynamic SQL, or complex queries, the added documentation can greatly aid in writing, modifying, and troubleshooting those queries. You can also test your insert statement by highlighting the SELECT list only and executing it, which returns values along with the handy column headers:

Alternatively, you can provide that documentation in comments:

|

1 2 3 4 5 6 7 8 9 10 |

INSERT INTO dbo.account (account_name, account_start_date, account_address, account_type, account_start_date, account_notes, is_active) SELECT 'Microsoft', -- account_name '4/4/1975', -- account_start_date 'One Microsoft Way in Redmond, Washington', -- account_address 'LIVE', -- account_type GETUTCDATE(), -- account_start_date 'They make SQL Server. Thanks!', -- account_notes 1; -- is_active |

This can be a nice way to document without adjusting the query. The only downside is that you lose the handy column names that were demonstrated above with the test SELECT. Both are worth the extra 30 seconds to include in your code as they will save far more time in the future when the time comes to modify or troubleshoot that code.

Insert Data into SQL Server with SELECT INTO

It is possible to create a new table object and SELECT data into it as a part of a single statement. This can be a convenient way to avoid the hassle of defining a table up-front and enumerating column names. Typically this is used to populate temporary tables, but it could be used for permanent tables if a scenario called for it. SELECT INTO may not be used to automatically create table variables.

The query below shows how we can accomplish this task quickly and efficiently:

|

1 2 3 4 5 6 |

SELECT 'Ed Pollack' AS developer_name, 'SQL Server 2019 CTP1' AS database_engine_of_choice, 'Pizza' AS food_choice, 10 AS spice_level INTO #developer_info; |

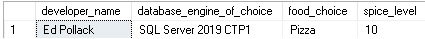

When executed, a temporary table will be created on-the-fly with the column names provided. When we query the table, we get the following results:

The data types for the columns are automatically chosen by SQL Server. We can verify them by querying TempDB as follows:

|

1 2 3 4 5 6 7 8 9 10 11 |

SELECT tables.name AS TableName, columns.name AS ColumnName, columns.max_length AS ColumnLength, types.name AS TypeName FROM TempDB.sys.tables INNER JOIN TempDB.sys.columns ON tables.object_id = columns.object_id INNER JOIN TempDB.sys.types ON types.user_type_id = columns.user_type_id WHERE tables.name LIKE '#developer_info%'; |

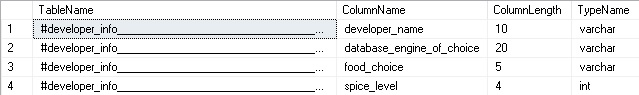

The results show columns that were sized to meet the minimum needs of the data that I inserted:

Had I inserted multiple rows, then the column sizes would match the smallest sizes needed to fit all of that data. This is amazingly convenient assuming that we do not need to add additional data to the temporary table later. If we do, we would need to ensure that the new data does not exceed the column sizes that have already been defined.

The SELECT INTO syntax provides a huge convenience when we want to insert data into a table that may vary greatly from execution-to-execution, or that may undergo schema changes often enough so as to make the maintenance of column lists challenging.

You can control data types if you wish. Let’s repeat our example above using the following TSQL:

|

1 2 3 4 5 6 |

SELECT CAST('Ed Pollack' AS VARCHAR(50)) AS developer_name, CAST('SQL Server 2019 CTP1' AS VARCHAR(30)) AS database_engine_of_choice, CAST('Pizza' AS VARCHAR(25)) AS food_choice, CAST(10 AS DECIMAL(5,2)) AS spice_level INTO #developer_info; |

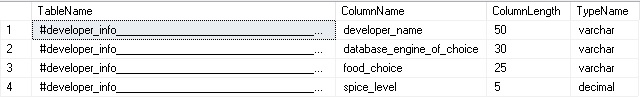

When we consult with system views, we can confirm that the data types have been defined as we wanted them and not as the minimal sizes required to support the data that I created:

The primary downside of using the SELECT INTO syntax is that the resulting table will have no indexes or constraints defined on it. If any additional structure is required for the table, it will need to be added after-the-fact. For scenarios where this is not needed, then SELECT INTO provides a very fast and convenient syntax that can shorten code and improve maintainability by reducing the number of places that require change when the data’s underlying schema or definition undergo change.

SELECT INTO can also be used for a generic SELECT statement with no additional definitions, such as this:

|

1 2 3 4 |

SELECT * INTO dbo.account_backup FROM dbo.account; |

In a very short TSQL statement, we created a new permanent table and inserted all of the contents of dbo.account into it. Note that when this syntax is used, the data types in the resulting table will match the source table as they are currently defined.

Insert Data SQL Server via Stored Procedure or Dynamic SQL

Stored procedures are often used to return data to applications, reports, or additional TSQL scripts for processing. The results of any stored procedure can be inserted directly into a predefined table like this:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 |

CREATE TABLE #temp ( SPID VARCHAR(100), STATUS VARCHAR(100), LOGIN VARCHAR(100), hostname VARCHAR(100), blkby VARCHAR(100), dbname VARCHAR(100), command VARCHAR(100), cputime VARCHAR(100), diskit VARCHAR(100), latbatch VARCHAR(100), programname VARCHAR(100), spid2 VARCHAR(100), requestid VARCHAR(100)) INSERT INTO #temp EXEC sp_who2; SELECT * FROM #temp WHERE dbname = 'AdventureWorks2016CTP3'; DROP TABLE #temp; |

This monitoring script will execute sp_who2 to return a list of current processes in a given database. By default, this stored procedure returns all sessions, though parameters can be supplied to filter by login or session ID. To filter by database, though, would otherwise require returning all data and then manually removing the irrelevant rows. By creating a temporary table up front and inserting the results directly into it, we are then freed up to filter the result set by whatever criteria we wish. Any table may be used for this purpose, including permanent tables, as well as table variables.

The same syntax can be used to insert the results of dynamic SQL into a table, like this:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 |

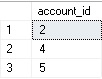

DECLARE @sql_command NVARCHAR(MAX); DECLARE @account_type SYSNAME = 'LIVE'; SELECT @sql_command = ' SELECT account_id FROM dbo.account WHERE account_type = ''' + @account_type + ''';'; CREATE TABLE #id_list (account_id INT NOT NULL PRIMARY KEY CLUSTERED); INSERT INTO #id_list (account_id) EXEC sp_executesql @sql_command; SELECT * FROM #id_list; DROP TABLE #id_list; |

The end result of this code is that we use sp_executesql to execute dynamic SQL, placing the results directly into a temporary table.

In both of these scenarios, we were able to execute procedural TSQL and insert the results directly into an existing table. SELECT INTO is not supported with this syntax, but everything else we have discussed so far does work with this convention.

Insert Data SQL Server with OUTPUT INSERTED

A bonus way we can generate data is via an existing statement. When we execute any write operation, we may output data from before or after the change to another table. Here is an example of how this looks:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

CREATE TABLE #account_ids (account_id INT NOT NULL PRIMARY KEY CLUSTERED); UPDATE account SET is_active = 1 OUTPUT INSERTED.account_id INTO #account_ids FROM dbo.account WHERE account_type = 'LIVE'; SELECT * FROM #account_ids; DROP TABLE #account_ids; |

The goal above is to update all accounts of type “LIVE” to be active. We also want to return the account_id for each account that was updated. Using OUTPUT INSERTED allows us to accomplish both tasks in a single set-based solution. The results show us which IDs were affected by the update statement:

INSERTED will contain all columns in the table as they appear after changes have been applied. Similarly, DELETED will contain the previous versions. We can mix and match these for maximum effect:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

CREATE TABLE #account_ids (account_id INT NOT NULL PRIMARY KEY CLUSTERED, is_active_previous BIT NOT NULL, is_active_current BIT NOT NULL); UPDATE account SET is_active = 0 OUTPUT INSERTED.account_id, DELETED.is_active, INSERTED.is_active INTO #account_ids FROM dbo.account WHERE account_type = 'LIVE'; SELECT * FROM #account_ids; DROP TABLE #account_ids; |

The results show that we not only captured the account IDs, but also the previous and new values for the is_active flag. This is a huge convenience as we can use OUTPUT INSERTED in INSERT, DELETE, UPDATE, and MERGE statements to quickly pull before & after data for use in additional processing.

This is a far superior solution to iteration and/or using SCOPE_IDENTITY() and is the only easy way to capture data in this fashion with so little code. OUTPUT INSERTED is a great method for getting before & after data from DML statements. It is also useful for collecting a list of rows that were altered in a given TSQL statement, so we can take additional actions or report on them as needed.

Which Method is Best?

With a variety of methods to insert data into SQL Server available, the first question we will ask is which syntax should we use? The answer is going to depend on your use-case and specifically what is most important to a given application. To summarize our work so far:

Use an INSERT with an explicit column list for applications where column lists, inputs, and outputs do not change often. These are scenarios where change typically consist of column additions or alterations resulting from software releases. The column lists also add a layer of protection against logical errors if a column is added, removed, or altered without the INSERT statement also being updated. An error being thrown is a far better outcome than data quietly being handled incorrectly. This syntax is generally considered a best practice as it provides both documentation and protection against inadvertent mistakes should the schema change in the future.

An INSERT with no column list carves out a niche in scenarios where the columns are unknown or frequently changing. This might be useful in ETL, reporting, or scenarios involving transient data when the data is unstructured. Despite that possible application, I would lean towards using SELECT INTO for these applications as they provide a bit more safety against inadvertent mistakes with your data. An alternative to cover the need to insert into a pre-existing table would be to use SELECT INTO to create a temporary data structure and then insert from that temporary data into the permanent table using a formal column list.

SELECT INTO provides us the ability to quickly create a new table and dump data into it with very little setup or coding. For small data sets or those that are going to be scanned in their entirety, this is a great way to move data with minimal TSQL and maintenance. The primary downside is the inability to include indexes or constraints on the table until after it is created. This syntax must create a new table, which lends itself towards using temporary tables as a target to insert data to.

OUTPUT INSERTED allows us to return data from before, after, or before and after a DML statement. This is a hugely useful syntax and one that has no intrinsic downsides. It is a great alternative to iteration, functions, or triggers that may attempt to accomplish the same tasks. The syntax is relatively simple and can be applied to any data elements prior to or after a given change. You may even include data that is not directly affected by a DML statement, but was joined for use in the query!

Conclusion

There are many ways to insert data into SQL Server, but not all were created equal. Picking the right syntax can have a significant impact on performance, documentation, and maintainability. This article provides a comparison of a variety of syntaxes, as well as the pros, cons, and demos of each one.

Always consider your application when writing code and adjust your style based on how often schema and code are expected to change. Schema and applications that are static and rarely changed can afford to have hard-coded INSERT statements with columns, aliases, and/or reference documentation to make them easy to read and maintain.

For schema or business needs that changes often, SELECT INTO or the omission of the column list from an INSERT may allow for more fluidity in places where code would greatly benefit from it.

Having a variety of options at our disposal allows us to make better decisions when smart choices are needed and will help us write better code that will make future developers appreciate us that much more as it is updated and maintained!

References and Further Reading

- MSDN reference for OUTPUT INSERTED and general use of the OUTPUT clause

- Demos of how to use system catalog views from Microsoft’s documentation

- Microsoft’s Extensive documentation on the INSERT statement

- SQL Server Database Metrics - October 2, 2019

- Using SQL Server Database Metrics to Predict Application Problems - September 27, 2019

- SQL Injection: Detection and prevention - August 30, 2019