On the last article about the best modes to move the disk-based tables to using the In-Memory feature we covered all the aspects and styles available on SQL Server 2014. Continuing on the migration process now we’re going to look at some of the new enhancements of makes SQL Server 2016

Data Collector [Transaction Performance Analysis Overview Report]

The Management Data Warehouse was incorporated into SQL Server 2008 for one specific objective, collect different sets of data and store in a relational database for report purposes.

A data collector is a centralized place where we can collect and store performance and analytic data for further analysis and troubleshooting purposes.

If you have never used it before, I strongly recommend that you to test it in your development environment first. Please refer to the article here to configure and understand more about the MDW.

In SQL Server 2014 the Data Collector added a new report called – Transaction Performance Analysis Overview with the main purpose to provide a smart way to identify which tables and stored procedure will give you the best performance gain after being migrated to In-Memory.

Those new collectors were added to SQL Server 2014 and named the Analysis, Migrate and Report (AMR) tool.

The main point to adding this into SQL Server 2014 was to provide a better way for the DBA to have a location to discover and find the most applicable tables and SPs that can be migrated to memory-optimized objects. The AMR basically consists of the:

- Transaction Performance Collector

- Memory Optimization Advisor

- Native Compilation Advisor

In SQL Server 2016, new enhancements have been introduced in the Data Collector feature for this particular report that we’re looking into. Now the contention and usage analysis is more precise.

Those sets of collectors are responsible for analyzing the workload and discovering the necessary patterns to suggest the best tables and SPs to be ported to In-Memory.

The AMR tool leverages the new Transaction Performance Analysis Overview report in order to identity bottlenecks and provide assistance to convert the disk-based tables to memory-optimized objects.

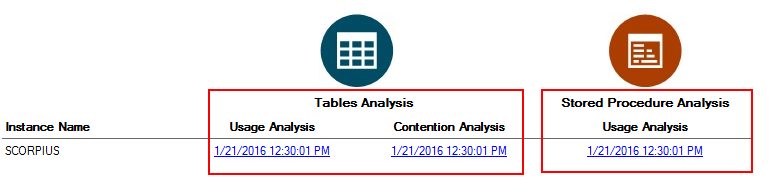

The Report page displays two forms to analyze your workload/environment divided by Tables and SPs

- Usage Analysis

- Contention Analysis

As starting point let’s check the Table Analysis bar by digging into the 2 reports – Usage Analysis and Contention Analysis.

Table Analysis – Usage & Contention Analysis

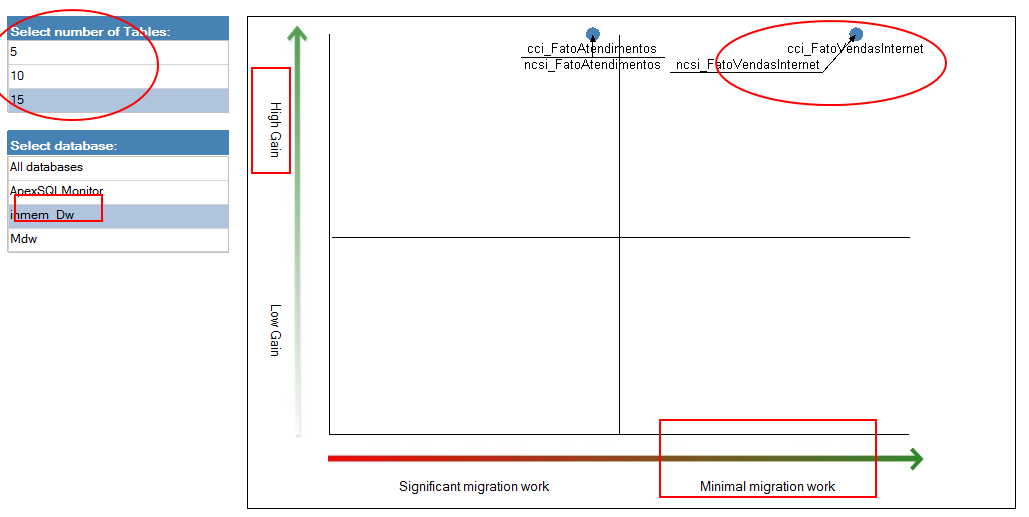

Figure 2 – Recommended Tables Based on Usage The most important information that we can take from here is the magic quadrant that appears on both reports. These arrows indicates 2 major bits of information – High Gain and Minimal Migration Work. This combination is very attractive because if one of the tables appears there means that the migration from disk-based tables to in-memory tables will be able to take the most advantage of the migration with minimal effort.

Clicking on one such table, we will step into detailed information about the possible gains to port the table to memory.

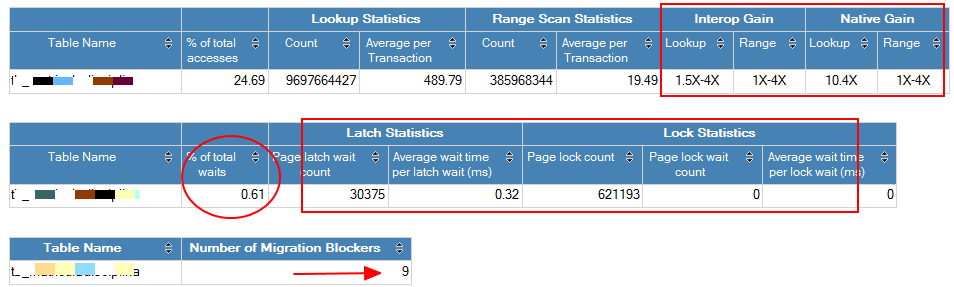

Figure 3 – Details for Table The report leverages a lot of useful information but unfortunately the data related to Lookup and Range Scan Statistics are blank at this stage because data is not being generated from the high workload environment.

On this report are two key things that are really interesting to capture, the interop and native gain aspects. Normally we break the In-Memory migration process into 2 steps, first we recommend the Interop Gain and then if your gains were satisfactory, but you want more, you can jump and go to the Native Gain.

The Interop Gain is the easiest and safest way to migrate the data to the memory-optimized objects because it will require a minimum of effort from. The process is quite simple, just use the Memory Optimization Advisor, to move the table to In-Memory. This will not interfere or affect the application because you can rename the new optimized-memory table to the same name of the table that the application uses making for a clean, fast and easy migration. The catch here is that the potential gains of the Interop Mode are not so significant as the Native Gains because every query that hits that table in Interop Mode needs to pass through the Query Interoperability component inside of the Sqlservr.exe.

On the other hand the Native Gain doesn’t use the Query Interoperability component to access the memory objects. Actually using this mode, SQL Server will simply point to the Natively Compiled Stored Procedure component that will require the Storage Engine to access the already persisted and compiled object in native code. This access is faster than the Interop Mode but comes with some drawbacks like:

- Limited Code Functionality

- Logic Change on SPs

- Cost of Time and Effort

Application Change

Stored Procedure Analysis – Usage Analysis

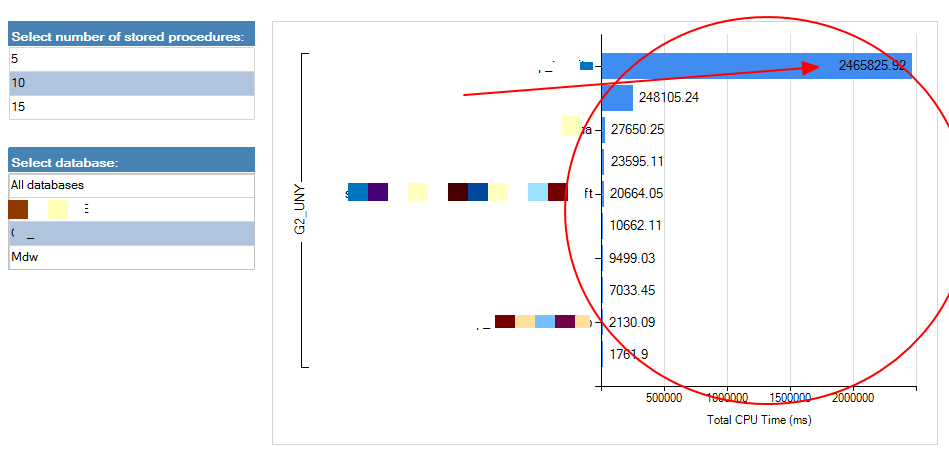

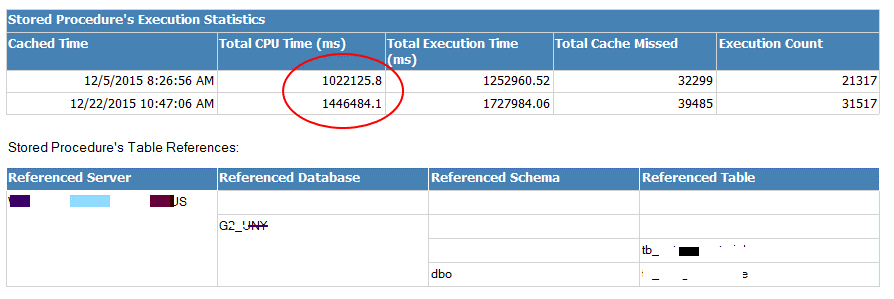

Figure 4 – Recommended Stored Procedure Based on Usage Based upon the usage, the report gives you the top rated SPs by analyzing the code and checks if they are good candidates to become Natively Stored Procedures. By clicking on the first SP showed at the prior on the list we can retain more statistical information. After reviewing this information the next move is deciding if it is pertinent to rewrite to code and turn this into a Natively Stored Procedure. Please note that Natively Stored Procedures just access memory-optimized tables.

Figure 5 – Details for SP

Generate In-Memory OLTP Migration CheckList on GUI & PowerShell

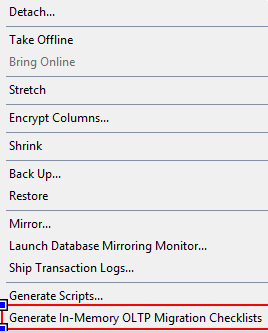

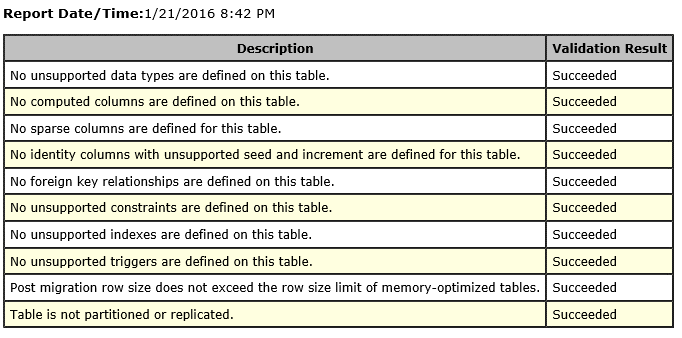

The new migration checklist option identifies any table or stored procedure that are not supported to be ported to a memory-optimized object in form of a report representation. This advisor doesn’t have any dependency on the Management Data Warehouse and gives a complete checklist for a single or multiple disk-based tables or interpreted stored procedure as part of the SQL Server 2016 enhancements.

There are 2 different forms to use the In-Memory OLTP Migration CheckList. The first option is to use the UI command, so for this go to the database that has the tables or SPs that you want to verify, right click – tasks and go to Generate In-Memory OLTP Migration CheckList.

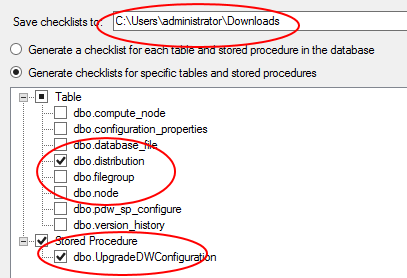

Select the place that you want to save the checklist and choose the Tables and SPs that you want to verify if they’re complying with all the pre-requisites established and finish the prompt.

Openning the folder that you selected that in my case is the C:\Users\administrator\Downloads\DWConfiguration\Tables you will see all the tables that you selected to be reported.

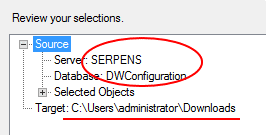

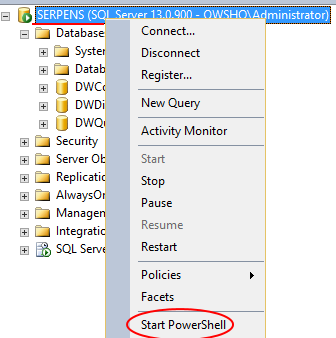

The second option available will return the same input of the generated report and you can accomplish it by using PowerShell Commandlets. Open PowerShell on SQL Server and use this command to generate the same report that we looked at before. To learn more about the PowerShell commandlet, please click here.

|

1 2 3 4 5 6 7 8 9 |

PS C:\Users\administrator> $objectsList = "dbo.distribution","dbo.UpgradeDWConfiguration" for ($i = 0; $i -le $objectsList.count-1; $i++) { $schema = $objectsList[$i].Split(".")[0] $object = $objectsList[$i].Split(".")[1] Save-SqlMigrationReport -Server 'SERPENS' -Database 'DWConfiguration' -Schema $schema -Object $object -FolderPath 'C:\Users\administrator\Downloads' } |

Conclusion

At the end of these two articles, we visited all the migration steps/possibilities to transform disk-based objects into memory-optimized objects passing through the SQL Server 2014 and 2016 versions.

Now that you already know the steps to proceed with this, you are ready to start your first migration and see the power of the In-Memory Optimization for yourself.

- In-Memory OLTP Series – Data migration guideline process on SQL Server 2016 - January 29, 2016

- In-Memory OLTP Series – Data migration guideline process on SQL Server 2014 - January 28, 2016

- In-Memory OLTP Series – Table Creation & Types - March 13, 2015