Introduction

SQL Server keeps track of all database modifications and every database transaction. This is done in a file called the transaction log or TLOG. This transaction log is particular to a SQL Server database and there is, at least, one transaction log per SQL Server database.

As explained on MSDN, the transaction log is a critical component of the database and, if there is a system failure, the transaction log might be required to bring your database back to a consistent state. The transaction log should never be deleted or moved unless you fully understand the ramifications of doing this.

So, we can conclude that the information contained inside the transaction log is valuable and maybe we could even take advantage of this information to answer questions like “Who is responsible for this table drop?” or “When did the operation occurred?” in order to recover a data alteration or data loss. Let’s explore which tools can help and what we actually can do with it…

Why getting back information from transaction log?

Let’s say there is an issue: somebody dropped a table or deleted rows in a particular table and you want to get the version of those rows just before he dropped it. Well, you’ve well done your job and have taken backups but, in general, you can’t simply restore it (somewhere else than on the production database) and take back data because the time between the last backup and the moment when the person who did the mistake actually fired its DROP or DELETE query.

To be able to do it and being sure of the data we get back, we need to know precisely in which transaction the query was executed so that we can get a Log Sequence Number (LSN) and be able to use the STOPBEFOREMARK option of RESTORE LOG command like the code below.

|

1 2 3 |

RESTORE LOG WITH STOPBEFOREMARK = 'lsn:'; |

How to get back information from the transaction log?

The first way to have access to information inside the transaction log is to use DBCC LOG(<DbName>) command. But as you will see in the figure below, we won’t go that far with its output: you don’t know anything about the object that has been impacted and it’s not really helpful in recovery.

Fortunately, there is another way. Unfortunately, this operation will require the use of undocumented SQL functions and, since they are not documented by Microsoft, they are not supported and you must use it at your own risk.

The first one we will discuss is called sys.fn_dblog. This function reads the active part of the transaction log in a log sequence number (LSN) interval. What I call “the active part of a transaction” is the portion of the transaction log where transaction details are still there and have not been truncated. The truncation can happen automatically if the database is in simple recovery model, when a TRUNCATE LOG operation has been fired or after a backup occurred.

You will find below a simple way to call it:

|

1 2 3 4 |

SELECT * FROM fn_dblog(NULL, NULL) -- (StartLSN,StopLSN) – NULL means Everything -- The LSN must be decimal "X:Y:Z" |

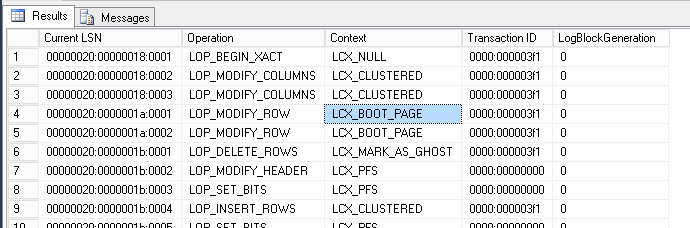

Here is a sample result. As you can see, the first columns are [CurrentLSN] and [Operation]. The former allows to know the log sequence number corresponding to the entry in transaction log while the latter tells the kind of operation that have been made. There is also a [TransactionId] column. So, we can tell what have been done for a single TransactionId and maybe. Two columns that are not displayed here but seem interesting is [AllocUnitId] and [AllocUnitName].

One more word about the way to call the sys.fn_dblog function is that, as written in the code sample above, you can’t use as a parameter the values returned by the function in the [CurrentLSN] column. The reason is that the [CurrentLSN] value is expressed in hexadecimal and the function takes decimal expressions of the LSN. So, you need to convert a [CurrentLSN] value before using it. There are multiple ways to do it: either using a website resource like BinaryHexConverter. To get it right, you will have to firstly split the [CurrentLSN] value into three pieces using the ‘:’ character as separator then convert each individual value using the website and finally concatenate everything with the ‘:’ character in the same order. The operation is quite the same if you want to use this LSN in conjunction with the STOPBEFOREMARK option of RESTORE LOG command: you still split the [CurrentLSN] the same way but concatenate differently. In fact, the difference resides in the fact that the second number is padded by leading 0 so that its length is 10 digits and the third number is padded by leading 0 so that its length is 5 digits.

As an alternative, you can use the bit of T-SQL code shown below:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 |

SET NOCOUNT ON DECLARE @LSN NVARCHAR(64); SET @LSN = 'CHANGEME' ; -- To test : -- SELECT TOP 1 [Current LSN] FROM fn_dblog(NULL, NULL) DECLARE @LSN_Decimal NVARCHAR(64); -- LSN expression to use with fn_dblog, db_dump_dblog DECLARE @LSN_Decimal2 NVARCHAR(64); -- LSN expression to use with STOPBEFOREMARK DECLARE @tsql NVARCHAR(MAX); DECLARE @tbl TABLE ( id INT identity(1,1), val VARCHAR(16) ); -- Extract first part SET @tsql = 'SELECT CONVERT(VARCHAR(16),CAST(0x' + SUBSTRING(@LSN, 1, 8) + ' AS INT))'; INSERT INTO @tbl EXEC(@tsql); SELECT @LSN_Decimal = val , @LSN_Decimal2 = val FROM @tbl; -- table variable => SQL Server always thinks it only returns 1 row. -- deleting content delete from @tbl; SET @tsql = 'SELECT CONVERT(VARCHAR(16),CAST(0x' + SUBSTRING(@LSN, 10, 8) + ' AS INT))'; INSERT INTO @tbl EXEC(@tsql); SELECT @LSN_Decimal = @LSN_Decimal + ':' + val, @LSN_Decimal2 = @LSN_Decimal2 + RIGHT('0000000000'+isnull(val,''),10) /*10 digits*/ FROM @tbl; delete from @tbl; SET @tsql = 'SELECT CONVERT(VARCHAR(16),CAST(0x' + SUBSTRING(@LSN, 19, 4) + ' AS INT))'; INSERT INTO @tbl EXEC(@tsql); SELECT @LSN_Decimal = @LSN_Decimal + ':' + val, @LSN_Decimal2 = @LSN_Decimal2 + RIGHT('00000'+isnull(val,''),5) /*5 digits*/ FROM @tbl; delete from @tbl; PRINT 'LSN Decimal translation for fn_db_log :' + @LSN_Decimal; PRINT 'LSN Decimal expression for STOPBEFOREMARK :' + @LSN_Decimal2; /* Choose : SELECT * FROM ::fn_dblog(NULL, @LSN_Decimal); SELECT * FROM ::fn_dblog(@LSN_Decimal, NULL); */ |

So, what kind of operations can be found with it? You will find below a partial list of the operations that can be found:

| OPERATION | DESCRIPTION |

| LOP_ABORT_XACT | Indicates that a transaction was aborted and rolled back. |

| LOP_BEGIN_CKPT | A checkpoint has begun. |

| LOP_BEGIN_XACT | Indicates the start of a transaction. |

| LOP_BUF_WRITE | Writing to Buffer. |

| LOP_COMMIT_XACT | Indicates that a transaction has committed. |

| LOP_COUNT_DELTA | ? |

| LOP_CREATE_ALLOCCHAIN | New Allocation chain |

| LOP_CREATE_INDEX | Creating an index. |

| LOP_DELETE_ROWS | Rows were deleted from a table. |

| LOP_DELETE_SPLIT | A page split has occurred. Rows have moved physically. |

| LOP_DELTA_SYSIND | SYSINDEXES table has been modified. |

| LOP_DROP_INDEX | Dropping an index. |

| LOP_END_CKPT | Checkpoint has finished. |

| LOP_EXPUNGE_ROWS | Row physically expunged from a page, now free for new rows. |

| LOP_FILE_HDR_MODIF | SQL Server has grown a database file. |

| LOP_FORGET_XACT | Shows that a 2-phase commit transaction was rolled back. |

| LOP_FORMAT_PAGE | Write a header of a newly allocated database page. |

| LOP_HOBT_DDL | ? |

| LOP_HOBT_DELTA | ? |

| LOP_IDENT_NEWVAL | Identity’s New reseed values |

| LOP_INSERT_ROWS | Insert a row into a user or system table. |

| LOP_LOCK_XACT | |

| LOP_MARK_DDL | Data Definition Language change – table schema was modified. |

| LOP_MARK_SAVEPOINT | Designate that an application has issued a ‘SAVE TRANSACTION’ command. |

| LOP_MIGRATE_LOCKS | |

| LOP_MODIFY_COLUMNS | Designates that a row was modified as the result of an Update command. |

| LOP_MODIFY_HEADER | A new data page created and has initialized the header of that page. |

| LOP_MODIFY_ROW | Row modification as a result of an Update command. |

| LOP_PREP_XACT | Transaction is in a 2-phase commit protocol. |

| LOP_SET_BITS | |

| LOP_SET_BITS | Designates that the DBMS modified space allocation bits as the result of allocating a new extent. |

| LOP_SET_FREE_SPACE | Designates that a previously allocated extent has been returned to the free pool. |

| LOP_SORT_BEGIN | A sort begins with index creation. – SORT_END end of the sorting while creating an index. |

| LOP_SORT_EXTENT | Sorting extents as part of building an index. |

| LOP_UNDO_DELETE_SPLIT | The page split process has been dumped. |

| LOP_XACT_CKPT | During the Checkpoint, open transactions were detected. |

So, basically, the transaction log contains information not only on DML and DDL operations but also on SQL Server internal operations like a checkpoint, a buffer write or a page split. But it has a limit as explained above. Let’s prove this with this simple example.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 |

--Create DB. USE [master]; GO IF(DB_ID('DBLogReading') IS NULL) Exec sp_executesql N'CREATE DATABASE DBLogReading'; GO -- Create tables. USE DBLogReading; GO CREATE TABLE [City] ( [CityId] INT IDENTITY, [Country] CHAR (32) DEFAULT 'Belgium', [Name] CHAR (32) DEFAULT 'Liege', [LastVisitDate] DATETIME DEFAULT GETDATE () ); GO -- Insert some data SET NOCOUNT ON USE DBLogReading go INSERT INTO City DEFAULT VALUES ; GO 100 -- How many rows returned by fn_dblog ? SELECT COUNT(*) FROM fn_dblog(null,null) GO -- take a backup BACKUP DATABASE DBLogReading TO DISK = 'D:\Backup\DBLogReading_Full.bak' -- How many rows returned by fn_dblog ? SELECT COUNT(*) FROM fn_dblog(null,null) GO |

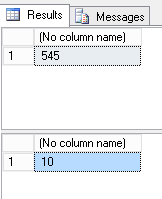

You should see a tremendous reduction in the number of returned values. For me, it passed from 545 to 10. This means that the inactive part of the transaction log which tracked the transactions has been dumped to a backup file and the original entries from the log file have been flushed which means the log truncation can occur to reduce space consumption inside the transaction log.

You will find demos later. Those demos are a step by step procedure to recover data after a DELETE or DROP operation has occurred using sys.fn_dblog function.

Before jumping to the other function, you will find another query below which just lists committed DML operations. You can customize it to get some other information:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

SELECT [Current LSN], [Transaction ID], [Operation], [Transaction Name], [CONTEXT], [AllocUnitName], [Page ID], [Slot ID], [Begin Time], [End Time], [Number of Locks], [Lock Information] FROM sys.fn_dblog(NULL,NULL) WHERE Operation IN ('LOP_INSERT_ROWS','LOP_MODIFY_ROW', 'LOP_DELETE_ROWS','LOP_BEGIN_XACT','LOP_COMMIT_XACT') |

How to get back information from a transaction log backup file?

Here again, you must use it at your own risks and according to Paul Randal on his blog post, it seems that every time this function is called, a new SQLOS scheduler and three threads are created. Those threads won’t disappear until a server restart. Still according to Paul Randal, this has been addressed as a bug to Microsoft. It’s fixed in SQL Server 2012 SP2+ and SQL Server 2014 but won’t be backported to SQL Server 2008 (R2).

This function has a lot of parameters. The two first parameters are the same as sys.fn_dblog i.e. an interval in LSN. Then it takes a device type which can be NULL (which means DISK and is the default value), ‘DISK’, ‘TAPE’, or ‘VIRTUAL_DEVICE’. The next parameter is called @seqnum with a default value of 1. I don’t currently know its meaning. Finally, there are 64 parameters for specifying paths to a log backup device.

Here is its interface:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

create function sys.fn_dump_dblog ( @start nvarchar (25) = NULL, @end nvarchar (25) = NULL, @devtype nvarchar (260) = NULL, --@devtype values: NULL(DISK) | DISK | TAPE | VIRTUAL_DEVICE @seqnum Int = 1, @fname1 nvarchar (260) = NULL, @fname2 nvarchar (260) = NULL, @fname3 nvarchar (260) = NULL, ... @fname64 nvarchar (260) = NULL ) returns table … |

Let’s now see an example call, assuming we have a backup file on disk which is on the following path:

|

1 2 |

D:\Backup\DBLogReading_log1.bak |

When the following query will be fired against a database, we’ll get the same kind of dataset as returned by sys.fn_dblog function:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 |

SELECT * FROM fn_dump_dblog ( NULL, NULL, N'DISK', 1, N'D:\Backup\DBLogReading_log1.bak', DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT, DEFAULT); GO |

Demos

In the following, we will try two things: recover data after a DELETE statement occurred and recover data after a DROP statement occurred. As the principles are the same for sys.fn_dblog and for sys.fn_dump_dblog functions, we will just use the first one.

Demo setupLet’s first create a new, empty database and add a simple table inside this database.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

--Create DB. USE [master]; GO CREATE DATABASE DBLogReading; GO -- Create tables. USE DBLogReading; GO CREATE TABLE [City] ( [CityId] INT IDENTITY, [Country] CHAR (32) DEFAULT 'Belgium', [Name] CHAR (32) DEFAULT 'Liege', [LastVisitDate] DATETIME DEFAULT GETDATE () ); GO select OBJECT_ID('dbo.City'); -- Keep the returned Object_ID somewhere (Mine:357576312) |

Let’s launch a FULL and a LOG backup to initialize things:

|

1 2 3 4 5 6 7 8 9 |

-- FULL Backup BACKUP DATABASE [DBLogReading] TO DISK = N'D:\Backup\DBLogReading-InitialFULL.bak' GO -- LOG Backup BACKUP LOG [DBLogReading] TO DISK = N'D:\Backup\DBLogReading-InitialLog.trn' GO |

|

1 2 3 4 5 6 |

SET NOCOUNT ON USE DBLogReading go INSERT INTO City DEFAULT VALUES; GO 100 |

Use case 1: Recover deleted data and get to know who did it

Let’s pretend we are doing the following operation by error. The operation consists in some data deletion:

|

1 2 3 4 5 6 7 |

USE DBLogReading Go DELETE City WHERE [CityId] < 12 go --select min(CityId) from City |

We have made some transactions so far. The transaction log must be filled with some useful information and rows have been “unexpectedly” deleted.

The following query calls sys.fn_dblog function and filters its output to only get back information on delete operations in City table:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 |

use DBLogReading go SELECT [Current LSN], [Transaction ID], Operation, Context, AllocUnitName FROM fn_dblog(NULL, NULL) WHERE Operation = 'LOP_DELETE_ROWS' and AllocUnitName = 'dbo.City' ; |

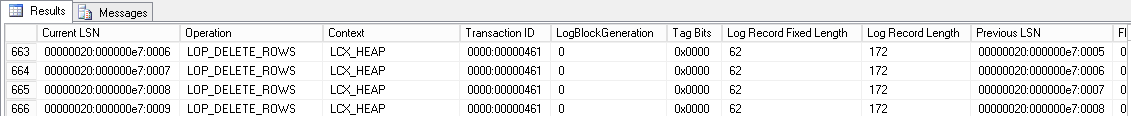

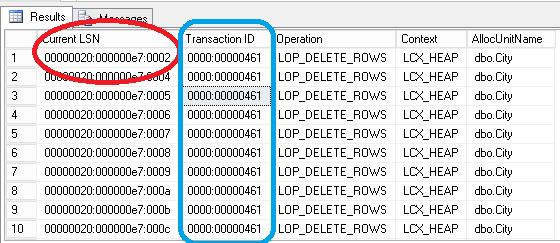

The output of this query is as follows:

With this query, we are able to know the starting LSN for delete operation at which delete operation started for the transaction Id 0000:00000461. But the transaction was already running! We need to find the LSN corresponding to the beginning of the transaction. This can be obtained by filtering the output on LOP_BEGIN_XACT operations only for the given transaction.

The following query will provide this information:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

USE DBLogReading Go DECLARE @TranId VARCHAR(32); SET @TranId = '0000:00000461'; -- CHANGEME SELECT [Current LSN], Operation, [Transaction ID], [Begin Time], [Transaction Name], [Transaction SID] FROM fn_dblog(NULL, NULL) WHERE [Transaction ID] = @TranId AND [Operation] = 'LOP_BEGIN_XACT'; |

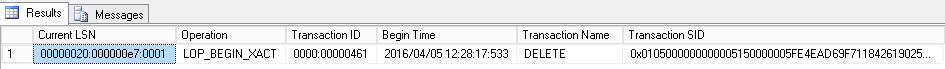

We now know that the DELETE operation started at [Begin Time] value of the column with LSN 0000020:0000e7:0001

We need to convert this value to decimal using one of the methods described previously and only consider the result for STOPBEFOREMARK option usage. In his case, the value to use is 32000000023100001

By the way, at the same time, we get a [TransactionSID] column which tells us which database user ran the DELETE statement.

We can get to know it by running the following query:

|

1 2 3 |

SELECT SUSER_SNAME(0x0105000000000005150000005FE4EAD69F711842619025A78B530000) |

This can be a plus to ensure that it was the good DELETE statement we considered so far.

So, basically, we have the value to use during the last log restore. We have to first restore a full backup media to another location and database with NORECOVERY option enabled then successfully restore logs until the LSN is the expected boundary i.e. 32000000023100001

But before that, we need to run a transaction log backup. That’s because we are using sys.fn_dblog which queries the active part of transaction log. It wouldn’t be the case if we were using sys.fn_dump_dblog since it queries a transaction log backup

|

1 2 3 4 5 |

-- LOG Backup BACKUP LOG [DBLogReading] TO DISK = N'D:\Backup\DBLogReading_logbackup_ThisMorning.trn' GO |

If we had not found the corresponding operation with sys.fn_dblog, we would have used sys.fn_dump_dblog with the last transaction log backup media the same way we did.

Once we have all the transaction log backups needed to recover, we can start restoring. An example code sample is provided below:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 |

--Restoring Full backup with norecovery. RESTORE DATABASE DBLogReading_COPY FROM DISK = 'D:\Backup\DBLogReading-InitialFULL.bak' WITH MOVE 'DBLogReading' TO 'P:\MSSQL\DBLogReading_copy.mdf', MOVE 'DBLogReading_log' TO 'L:\MSSQL\DBLogReading_copy_log.ldf', REPLACE, NORECOVERY; GO -- Restore successive Logs with norecovery until we come to -- the last relievant transaction log backup. RESTORE LOG DBLogReading_COPY FROM DISK = N'D:\Backup\DBLogReading-InitialLog.trn' WITH NORECOVERY; GO --Restore Log backup with STOPBEFOREMARK option to recover exact LSN. RESTORE LOG DBLogReading_COPY FROM DISK = N'D:\Backup\DBLogReading_logbackup_ThisMorning.trn' WITH STOPBEFOREMARK = 'lsn:32000000023100001'; -- CHANGEME GO |

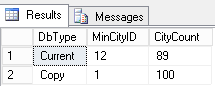

If we take a look at the content of City table, we will see that the rows we deleted are present.

The query to check:

|

1 2 3 4 5 6 7 8 9 10 11 12 |

select 'Current' as DbType , min(CityId) as MinCityID , COUNT(*) as CityCount from DbLogReading.dbo.City union all select 'Copy' , min(CityId) , COUNT(*) from DbLogReading_COPY.dbo.City; |

Its output:

In summary, we’ve found the transaction that did the DELETE operation (this is the hard part of the work in production environment where DELETE statements can occur regularly against multiple tables). Then we got back the LSN corresponding to the beginning of the transaction that contains the DELETE statement. We translated this LSN to a decimal padded value and restored to that LSN in another database. The next step would be to export the deleted rows from the restored database to the actual production database.

Use case 2: Discover LSN corresponding to the start of a table drop operationAs the instructions to restore to a given LSN we get back from sys.fn_dblog and sys.fn_dump_dblog functions, this section will only address the question to retrieving the starting LSN of a transaction which dropped a table.

So, let’s get started by dropping the City table:

|

1 2 3 4 |

USE DBLogReading GO DROP TABLE [City] |

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

SELECT [Current LSN], Operation, [Transaction Id], [Transaction SID], [Transaction Name], [Begin Time], [SPID], [Description], AllocUnitName FROM fn_dblog (NULL, NULL) WHERE [Transaction Name] = 'DROPOBJ' |

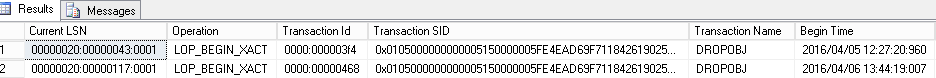

Well, all the demos I’ve seen on the internet which show this operation only have one row returned. This means only one object has been dropped since last log backup. The authors tell then “ok, we have the LSN, restore to that LSN and everything is gonna be alright.” But, it’s not representative of a true production system where there can be multiple object drops between two transaction log backups, or where the application uses tables for storing kind of “sequence numbers” and drops it daily (seriously, we have an application that does it here). Plus, in my experience, the DBA is rarely called directly after such an error.

Here, we have two rows returned.

Fortunately, we can segregate thanks to the [BeginTime] column in our case (we take the last one). If you remember the previous use case, we also segregated on the [AllocUnitName] column, but this column is null for every row returned. This means that, in some cases, we cannot know with exactitude which [CurrentLSN] value should be taken, but I’ve not seen this warning anywhere on the other demos…

I’ve tried different things but except if you know the Object_ID previously used by the object in the database, it’s more or less impossible to know with precision the right [CurrentLSN] value to be used.

So, to me, it’s more a “guess and check” process than a straightforward step by step procedure. But, when you have a very big database, it’s practically impossible due to the time needed to restore.

By the way, here is a sample code, given as is which extracts information on the object_id of the dropped objects:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 |

if(OBJECT_ID('tempdb..#TransactionLog') IS NOT NULL) DROP TABLE #TransactionLog ; if(OBJECT_ID('tempdb..#ObjectInfo') IS NOT NULL) DROP TABLE #ObjectInfo ; -- Capture transaction log content SELECT * INTO #TransactionLog FROM fn_dblog (NULL, NULL); CREATE TABLE #ObjectInfo ( DropStartLSN VARCHAR(32), TransactionId VARCHAR(32), ObjectLockLSN VARCHAR(32) ); WITH DropLSNs as ( SELECT [Current LSN],[Transaction ID] FROM #TransactionLog WHERE [Transaction Name] = 'DROPOBJ' ) INSERT INTO #ObjectInfo ( DropStartLSN,TransactionId,ObjectLockLSN ) select DropLSNs.[Current LSN],DropLSNs.[Transaction ID],MIN(f.[Current LSN]) as LockObjectLSN FROM DropLSNs inner join #TransactionLog f on DropLSNs.[Transaction ID] = f.[Transaction ID] where [Operation] = 'LOP_LOCK_XACT' And [Lock Information] like '%ACQUIRE_LOCK_SCH_M OBJECT:%' group by DropLSNs.[Current LSN],DropLSNs.[Transaction ID]; -- The lock information contains the object_id of the object select oi.*, tl.[Lock Information] FROM #ObjectInfo oi INNER JOIN #TransactionLog tl on tl.[Current LSN] = oi.ObjectLockLSN if(OBJECT_ID('tempdb..#TransactionLog') IS NOT NULL) DROP TABLE #TransactionLog ; if(OBJECT_ID('tempdb..#ObjectInfo') IS NOT NULL) DROP TABLE #ObjectInfo ; |

Demo cleanup

Dropping the databases:

|

1 2 3 4 5 6 7 |

use master; GO IF(DB_ID('DBLogReading') IS NOT NULL) DROP DATABASE [DBLogReading]; IF(DB_ID('DBLogReading_COPY') IS NOT NULL) DROP DATABASE [DBLogReading_COPY]; |

- How to perform a performance test against a SQL Server instance - September 14, 2018

- Concurrency problems – theory and experimentation in SQL Server - July 24, 2018

- How to link two SQL Server instances with Kerberos - July 5, 2018