Introduction

There has always been some debate as to whether or not there are real benefits to be gained from accessing the information in the transaction log. This article will endeavor to answer that question by looking at the following:

- What is the SQL Server Transaction Log?

- What information is stored in the transaction log?

- What can be gained by accessing the information in the transaction log?

- How does the transaction log work?

- What tools are available for reading the transaction log?

- And ultimately, is this something we should be doing at all?

What is the SQL Server Transaction Log?

The main purpose of the SQL Server Transaction Log is to ensure that your database can be brought back to a consistent state in case of a system failure. In addition, it is used to roll back transactions when a rollback command is issued and to support transactional replication and high availability solutions.

SQL Server writes information about each and every transaction to the transaction log before the changes are written to the database. The amount of information logged depends on the recovery model of your database. SQL Server offers 3 different recovery models: Full, Bulk Logged and Simple.

Recovery Models

The FULL recovery model logs every change to every row as well as a copy of each page added to indexes or tables. As such, the log contains enough information to completely reconstruct every action which occurred on the database, allowing you to restore your database to any specific point in time, provided that you have a full log chain. All entries are kept in the online transaction log until the log is backed up, after which only active transactions will remain in the online log. This means that in order to get information about completed transactions from the log, the log backups will have to be taken into account.

When you are using the BULK_LOGGED recovery model, minimally logged operations are not fully written to the log. Minimally logged operations are operations such as SELECT INTO, BULK INSERT and index operations. Essentially, just enough information is logged to be able to undo the transaction, but not enough to redo it. The log is handled in much the same way as in the FULL recovery model, and inactive log entries are removed from the online log when a log backup is taken. Of course, no row-level information about bulk operations is available in the log.

The SIMPLE recovery model only logs enough information to allow you to recover your database. All operations are still logged, but as soon as a checkpoint occurs the inactive portion of the log is automatically truncated, which means that it becomes available for re-use and older log entries can be overwritten.
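To check which recovery model each database is currently using, one option is to query the documented sys.databases catalog view. This is a minimal sketch; the database name in the commented-out ALTER DATABASE statement is hypothetical.

```sql
-- List every database on the instance together with its recovery model
-- (recovery_model_desc is FULL, BULK_LOGGED or SIMPLE).
SELECT name, recovery_model_desc
FROM sys.databases
ORDER BY name;

-- Switching models is done with ALTER DATABASE.
-- MyDatabase is a hypothetical name used for illustration only.
-- ALTER DATABASE MyDatabase SET RECOVERY FULL;
```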

What is logged in the transaction log?

SQL Server logs every event in a database to a greater or lesser extent:

- When a transaction begins or ends

- Every update, insert or delete

- Drop and creation of tables and indexes

- Extent and page allocations and de-allocations

- Truncation of tables

- All locks

Some operations may be minimally logged when the database uses the simple or bulk logged recovery model, such as bcp, BULK INSERT, SELECT INTO and INSERT … SELECT commands.

What can be gained from reading the Transaction Log?

There are four main reasons why one might be interested in reading the transaction log.

Auditing / Forensics

SQL Server offers a myriad of methods which can be implemented as preventative measures to avoid the need to use the SQL Server transaction log to audit a database. These include SQL Server Auditing (SQL 2008 +), traces, extended events and change data capture, to name but a few. Most of these, with the exception of the default trace, require implementation prior to any event occurring.

The SQL Server transaction log, however, is always present and as such can offer valuable information after an event has occurred, even though no special configuration has been done in advance.

In the absence of all preventative measures, being able to read the SQL Server Transaction log offers the ability to find out who performed a specific transaction after the fact, as well as the ability to obtain the values which have been modified with the option to roll them back.
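As a rough illustration of the "who did it" question, the sketch below uses the undocumented fn_dblog function (covered later in this article) together with the documented SUSER_SNAME function. It assumes that the [Transaction SID] column is populated on the LOP_BEGIN_XACT record, which is commonly observed but not officially documented.

```sql
-- Resolve the login behind each transaction in the active portion of the log.
-- Assumption: [Transaction SID] is filled in on the LOP_BEGIN_XACT record.
SELECT [Transaction ID],
       [Transaction Name],
       [Begin Time],
       SUSER_SNAME([Transaction SID]) AS login_name
FROM fn_dblog(NULL, NULL)
WHERE [Operation] = 'LOP_BEGIN_XACT'
ORDER BY [Current LSN];
```

SUSER_SNAME returns NULL when the SID can no longer be mapped to a login, for example if the login has since been dropped.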

Recovery

Because every action is logged in the transaction log in such a way that a transaction may be either rolled forward or rolled back, the SQL Server transaction log may be used to recover lost data. Since only changes are logged and data in the log is not stored in a human readable format, getting this information out of the log is not easy, but the data is nonetheless available if you know where to look and how to read it. More about that later in this article in the section on interpreting the data in fn_dblog and fn_dump_dblog.

Troubleshooting

In certain instances, it is necessary to see exactly what happened in a database during a specific period of time. Let’s say that your database has grown inexplicably large during the course of the night. It would be impossible to say what the reason behind this might be just by looking at the data. However, reading the transaction log will give you exact information about what occurred during that period and what might have caused the rapid growth of your database.
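One way to approach this kind of investigation is to summarise the log records by object and operation. The following is a sketch only: it relies on the undocumented fn_dblog function (covered later in this article) and on its [Log Record Length] and [AllocUnitName] columns, and it only sees the active portion of the online log.

```sql
-- Summarise log volume per allocation unit and operation type,
-- largest contributors first.
SELECT [AllocUnitName],
       [Operation],
       COUNT(*)                 AS log_records,
       SUM([Log Record Length]) AS log_bytes
FROM fn_dblog(NULL, NULL)
GROUP BY [AllocUnitName], [Operation]
ORDER BY log_bytes DESC;
```

If the activity in question has already been backed up and truncated from the online log, the log backups would have to be read instead.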

Identifying a restore point

Last but not least, when it comes to having to restore a backup, knowing the exact point in time to which the database can be restored to get the lost or damaged data back is a huge advantage. Instead of restoring multiple backups until you find one which still has the relevant data intact, you can read the transaction log to determine when the event occurred and restore to the precise moment right before the event occurred.
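A hedged sketch of how such a point might be located with the undocumented fn_dblog function (covered later in this article): the transaction names 'DELETE' and 'DROPOBJ' are values commonly seen in the [Transaction Name] column for deletes and object drops, but since the function is undocumented they should be treated as assumptions.

```sql
-- Find when delete and drop transactions started in the active log.
-- Assumption: 'DELETE' and 'DROPOBJ' are the transaction names recorded
-- for these operations on the LOP_BEGIN_XACT record.
SELECT [Current LSN],
       [Transaction ID],
       [Transaction Name],
       [Begin Time]
FROM fn_dblog(NULL, NULL)
WHERE [Operation] = 'LOP_BEGIN_XACT'
  AND [Transaction Name] IN ('DELETE', 'DROPOBJ')
ORDER BY [Current LSN];
```

The [Begin Time] of the offending transaction gives a candidate point in time; a restore would then need to stop just before it, for example by using the STOPAT option of RESTORE LOG.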

Should Microsoft provide a log reader tool?

There has been some debate as to whether or not Microsoft should provide a log reader tool. Both Kalen Delaney and Paul Randal agree that there are useful applications of such a tool, but that Microsoft should be focusing on more critical things and leave the log reading tools to third party vendors who have mastered the art of reading the log.

Anatomy of the SQL Server Transaction Log

The SQL Server transaction log is usually a single file with an .LDF file extension. Although it is possible to have multiple log files for a database, the transaction log is always written sequentially and multiple physical log files are treated as one continuous circular file.

SQL Server uses the transaction log to ensure that all transactions maintain their state even in case of a server or database failure. All changes are written to the transaction log before they are written to the data files. This is known as write ahead logging.

Every action performed on SQL Server is logged in the SQL Server transaction log. Multiple entries may be created for a single transaction, including entries for the locks that were taken during the operation. Each log entry has a unique number known as the LSN (log sequence number).

Enough information is written to the log to allow for a transaction to be either re-done (rolled forward) or undone (rolled back). In some cases this means that the actual change gets logged; in other instances changes may be logged more efficiently by recording only which pages were affected, as in the case of a table truncation.

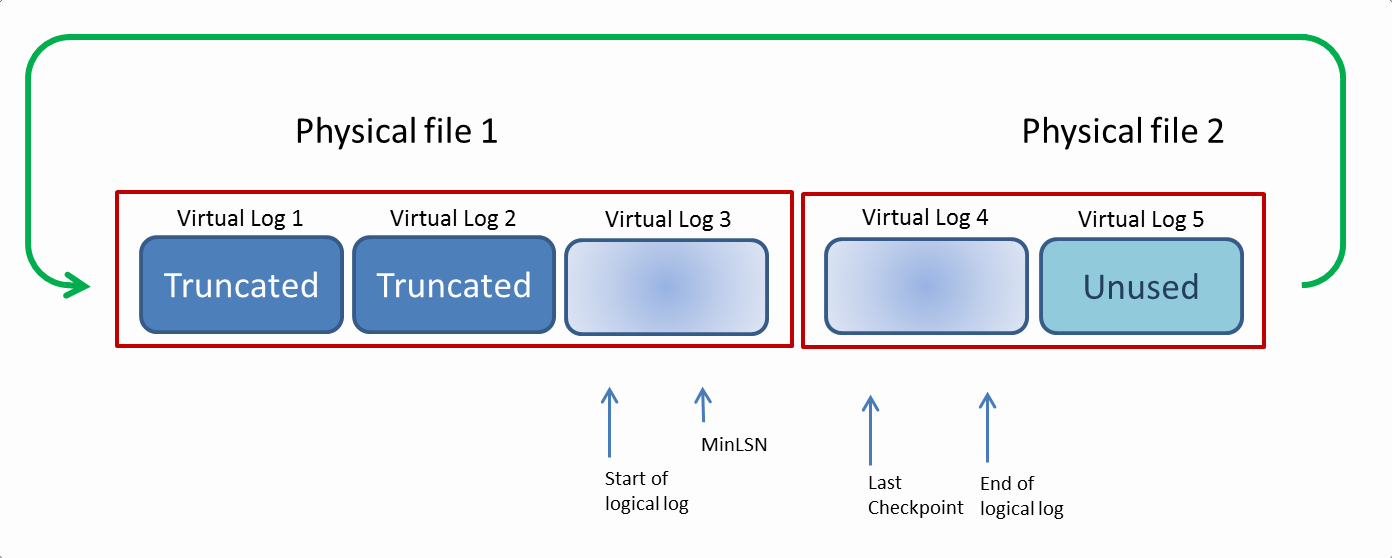

Logically, the SQL Server Transaction log is divided into multiple sections known as virtual log files or VLFs. The logical transaction log gets truncated and expanded in units of VLFs. If a VLF no longer contains an active transaction, that VLF can be marked for re-use. If the log needs more space, space is allocated in increments of VLFs. The number and size of the VLFs are decided by the database engine, which will endeavor to assign as few VLFs as possible. Although the size and number of VLFs cannot be configured directly, they are affected by the initial size and the growth increment of the transaction log. If the log growth increment is set too low, it may result in an excessive number of VLFs, which can have an adverse effect on performance. In order to avoid this, it is important to size the log correctly and grow it in sufficiently large increments.

The following command can be executed to see how many virtual log files there are, how many have been used and what their sizes are. This information can be used to determine what the correct size and increment should be.

    DBCC LOGINFO

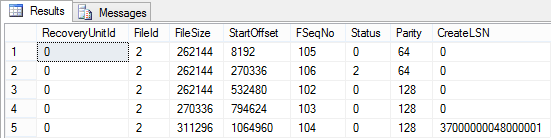

This command returns the following columns:

| Column | Description |
| --- | --- |
| RecoveryUnitID | |
| FileID | The identification number of the physical log file. It only applies if you have more than one physical log file. |
| FileSize | The size of the VLF in bytes. |
| StartOffset | The offset, in bytes, at which the VLF starts. The output is sorted on this column. |
| FSeqNo | The order in which the VLF will be used. The largest number is the one which is currently being used. |
| Status | There are 2 possible values, 0 and 2. 2 means that the VLF cannot be reused and 0 means that it is ready for re-use. |
| Parity | There are 2 possible values, 64 and 128. |
| CreateLSN | The LSN when the VLF was created. If the CreateLSN is 0, it means the VLF was created when the physical transaction log file was created. |
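On newer versions of SQL Server, the same VLF information can also be queried through the documented sys.dm_db_log_info dynamic management function. The sketch below assumes SQL Server 2016 SP2 or later and counts the VLFs of the current database.

```sql
-- Assumes SQL Server 2016 SP2 or later, where sys.dm_db_log_info exists.
-- Returns the total number of VLFs, how many are active, and their combined size.
SELECT COUNT(*)                                        AS vlf_count,
       SUM(CASE WHEN vlf_active = 1 THEN 1 ELSE 0 END) AS active_vlfs,
       SUM(vlf_size_mb)                                AS total_size_mb
FROM sys.dm_db_log_info(DB_ID());
```

Because this is an ordinary table-valued function, its output can be filtered and aggregated directly, which is not possible with the DBCC LOGINFO result set unless it is first inserted into a table.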

Reading the SQL Server Transaction Log

Even though Microsoft does not provide a tool, there are some undocumented functions which may be used to view the log. Because these functions are undocumented, they are also not supported by Microsoft and as such come with the warning of “Use at your own risk”. There have been some reports that fn_dump_dblog creates a hidden OS scheduler and some threads which apparently remain on the server until it is restarted. According to Paul Randal, Microsoft’s SQL Team is aware of this and will fix it sometime in the future.

fn_dblog()

This table-valued function (which was DBCC Log prior to SQL Server 2005) allows you to view the entries in the online transaction log. The function accepts 2 parameters, the start and the end LSN. To see all available entries, NULL can be passed for both parameters, and all the entries in the active portion of the online log will be displayed.

    SELECT * FROM fn_dblog(NULL, NULL)

fn_dump_dblog()

This function reads both the online log and log backups and accepts 68 parameters. All the parameters need to be specified in order to execute the statement.

    SELECT *
    FROM fn_dump_dblog(
        NULL, NULL, 'DISK', 1,
        'D:\Program Files\Microsoft SQL Server\MSSQL11.MSSQLSERVER\MSSQL\Backup\AdventureWorks2012.bak',
        NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL,
        NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL,
        NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL,
        NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL,
        NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL,
        NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL,
        NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL, NULL)

| Parameter | Description |
| --- | --- |
| @start | The start LSN |
| @end | The end LSN |
| @devtype | The backup device type. The default value is DISK. Valid values are NULL (treated as DISK), DISK, TAPE and VIRTUAL_DEVICE. |
| @seqnum | Indicates which backup to read from a backup device if there is more than one backup in a backup file. The default value is 1. |
| @fname1 | The path to the backup file. |
| @fname2 to @fname64 | Used to specify additional backup files, if the media set has multiple media families. |

Interpreting the data in fn_dblog and fn_dump_dblog

Now that we have seen how we may be able to view the information in the SQL Server Transaction Log, let’s have a look at how it is presented and how we can make sense of it.

This is an incredibly complicated subject, details of which are really beyond the scope of this article, but in order to understand the value of using a log reader tool, it is necessary to have a basic idea of what it would take to interpret the data in the log without the help of a tool.

The sequence of operations is indicated by the LSN (log sequence number), but since multiple operations can happen simultaneously, the entries linked to a specific transaction may not appear consecutively. It is therefore important to also look at the Transaction ID to know which log entries correspond to which transaction.
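To make the role of the Transaction ID concrete, the following sketch pairs each transaction's begin record with its commit record by joining fn_dblog to itself on [Transaction ID]. It is an illustration under the assumption that the column and operation names shown elsewhere in this article are present in your version.

```sql
-- Pair each LOP_BEGIN_XACT record with the matching LOP_COMMIT_XACT record.
-- Transactions that are still open or were rolled back show a NULL end time.
SELECT b.[Transaction ID],
       b.[Transaction Name],
       b.[Current LSN] AS begin_lsn,
       b.[Begin Time],
       c.[Current LSN] AS commit_lsn,
       c.[End Time]
FROM fn_dblog(NULL, NULL) AS b
LEFT JOIN fn_dblog(NULL, NULL) AS c
       ON c.[Transaction ID] = b.[Transaction ID]
      AND c.[Operation] = 'LOP_COMMIT_XACT'
WHERE b.[Operation] = 'LOP_BEGIN_XACT'
ORDER BY b.[Current LSN];
```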

Let’s insert one row into a simple table named dbo.attribute and see what happens in the log.

    INSERT INTO dbo.attribute VALUES ('Red')

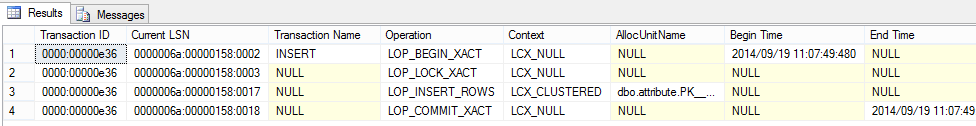

In this query I am only looking at entries which have the same transaction ID as the INSERT.

    -- Assumes the active log contains exactly one transaction named 'INSERT';
    -- otherwise the subquery returns more than one row and the query fails.
    SELECT [Transaction ID], [Current LSN], [Transaction Name], [Operation],
           [Context], [AllocUnitName], [Begin Time], [End Time],
           [Transaction SID], [Num Elements],
           [RowLog Contents 0], [RowLog Contents 1],
           [RowLog Contents 2], [RowLog Contents 3]
    FROM fn_dblog(NULL, NULL)
    WHERE [Transaction ID] = (SELECT [Transaction ID]
                              FROM fn_dblog(NULL, NULL)
                              WHERE [Transaction Name] = 'INSERT')
    GO

Notice that the first entry is an LOP_BEGIN_XACT operation. This indicates the start of any transaction and shows the start time. Similarly, the last entry is a LOP_COMMIT_XACT operation which displays the end time of the transaction.

The second row indicates that a lock operation was done (LOP_LOCK_XACT). If you want to see more information about the lock, you can check out the [Lock Information] column.

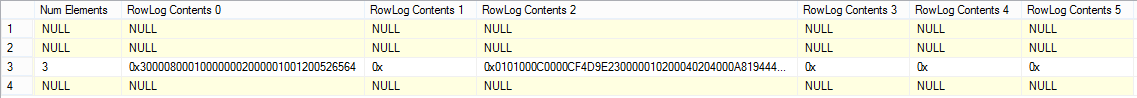

The third row is where it starts to get really interesting. This is the entry in which we will be able to find the actual data which was inserted in the table. The data values are stored in the RowLog Contents columns. There are 6 RowLog Contents columns. To know which of these columns are relevant to the transaction at hand, we can check the [Num Elements] column.

In this particular case there are 3 elements, which means we should only look at the values present in [RowLog Contents 0], [RowLog Contents 1] and [RowLog Contents 2]. As you can see, it’s not obvious by just looking at the data in these fields to know what was actually inserted. In order to know what the values were, we will actually have to deconstruct each entry.

The data in RowLog Contents 0 is:

0x300008000100000002000001001200526564

Which can be broken down like this:

| Bytes | Meaning |
| --- | --- |
| 30 | Status bits A |
| 00 | Status bits B |
| 0800 | Offset to find the number of columns in the row (byte swapped, 0x0008 = 8). |
| 01000000 | Data of fixed length column = 1 |
| 0200 | Number of columns (byte swapped, 0x0002 = 2) |
| 00 | Null bitmap |
| 0100 | Number of variable length columns (byte swapped, 0x0001 = 1) |
| 1200 | Position where the first variable length column ends. This is byte swapped, so it reads as 0x0012, which translates to 18. |
| 526564 | Data in variable length column = Red |
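The breakdown above can be reproduced in T-SQL by slicing the binary value with SUBSTRING. This is a minimal sketch that hard-codes the byte positions for this particular record (one fixed length integer column and one variable length column); the variable and column alias names are made up for the example, and a general decoder would have to derive every position from the row header and the table definition.

```sql
DECLARE @row VARBINARY(8000) = 0x300008000100000002000001001200526564;

-- Multi-byte values are stored low byte first, so they are combined by hand.
-- SUBSTRING positions are 1-based, while offsets stored in the row are 0-based.
DECLARE @colCountOffset INT =
      CAST(SUBSTRING(@row, 3, 1) AS INT)
    + 256 * CAST(SUBSTRING(@row, 4, 1) AS INT);
DECLARE @varEnd INT =
      CAST(SUBSTRING(@row, 14, 1) AS INT)
    + 256 * CAST(SUBSTRING(@row, 15, 1) AS INT);

SELECT
    @colCountOffset AS column_count_offset,                        -- 8
      CAST(SUBSTRING(@row, 5, 1) AS BIGINT)
    + 256      * CAST(SUBSTRING(@row, 6, 1) AS BIGINT)
    + 65536    * CAST(SUBSTRING(@row, 7, 1) AS BIGINT)
    + 16777216 * CAST(SUBSTRING(@row, 8, 1) AS BIGINT)
        AS fixed_col_value,                                        -- 1
      CAST(SUBSTRING(@row, @colCountOffset + 1, 1) AS INT)
    + 256 * CAST(SUBSTRING(@row, @colCountOffset + 2, 1) AS INT)
        AS column_count,                                           -- 2
    @varEnd AS first_var_col_end,                                  -- 18
    -- The variable length data starts at byte 16 and runs to @varEnd.
    CAST(SUBSTRING(@row, 16, @varEnd - 15) AS VARCHAR(50))
        AS var_col_value;                                          -- Red
```

Even for this two-column row, the decoding needs knowledge of the record layout, which illustrates why doing this by hand for real tables quickly becomes impractical.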

So the actual inserted value can be extracted from the log. Now let’s have a look at what happens when we update an entry. I’m going to change the value ‘Red’ to ‘Rad’.

    UPDATE dbo.attribute SET name_e = 'Rad' WHERE id = 1

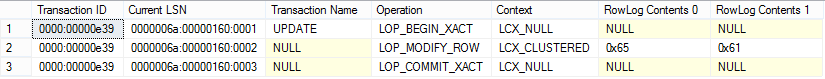

Let’s have a look at what was logged now.

    SELECT [Transaction ID], [Current LSN], [Transaction Name], [Operation],
           [Context], [RowLog Contents 0], [RowLog Contents 1]
    FROM fn_dblog(NULL, NULL)
    WHERE [Transaction ID] = (SELECT [Transaction ID]
                              FROM fn_dblog(NULL, NULL)
                              WHERE [Transaction Name] = 'UPDATE')
    GO

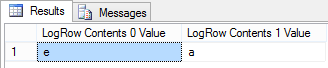

Notice the values in RowLog Contents 0 and RowLog Contents 1. If we convert these to varchar, we will see that SQL Server only logged the actual change. RowLog Contents 0 holds the before value and RowLog Contents 1 holds the after value.

    SELECT CAST(0x65 AS VARCHAR)  -- e
    SELECT CAST(0x61 AS VARCHAR)  -- a

In this case the only change was that the ‘e’ changed to ‘a’. In order to obtain the before value of a field which has been updated from the log, you would have to know what the inserted value was before it was changed as well as all subsequent changes. In order to do this a full log chain is required, and of course a lot of effort to decode all of it.

Advantages of using a log reader tool

Due to the difficulty involved in reading the output of fn_dblog and fn_dump_dblog, having a tool which can do it for you is essential. Log reader tools do not have to be implemented in advance, since they depend solely on the availability of the SQL Server Transaction log. They can be installed after an unexpected event has occurred, providing the best possible chance of either tracking down the culprit or recovering the lost or damaged data.

Conclusion

Reading the transaction log offers the ability to audit and investigate database activity after the fact. The format in which the SQL Server transaction log is written requires careful decoding of each item to understand which values have been affected. Microsoft does not provide any log reader tools aside from 2 functions which read and display but do not decode the log data.

When it comes to investigating an unexpected event after the fact, and no auditing was configured in advance, reading the transaction log may be the only option. As long as databases are managed and operated by humans, there will always be a need to be able to read the SQL Server transaction log.
