This article will walk you through the process to optimize the cost of Azure Database for PostgreSQL.

Introduction

Hosting a database on on-premises, as well as cloud infrastructure, comes with an associated cost. Generally, the first cost that comes to mind is the license cost, but that is not the only cost associated with a database server or instance. In the case of open-source products, it can be the case that the license cost may not even apply as many open-source products have a community version of the product, and only commercial distribution of the products have an associated cost. But one cost that is common to every database product is the hosting cost i.e. the infrastructure on which the database is hosted. The compute capacity, the storage capacity, and other features that are offered by the database product are often backed by the underlying hardware. On cloud platforms too, though the database is offered as a service, features like storage, virtual cores, and other such features come with an associated cost. When one kick starts a new solution, one may not have an up-front need for a production-grade database. Depending upon the use-case there may be a heavier need for one feature versus another. For example, some solutions need heavy compute capacity while others may need a heavier in-memory capacity. So there comes the need to optimize the cost of hosting a database by selecting a specific configuration and capacity. In this article, we will learn how to optimize the cost of Azure Database for PostgreSQL Server.

Azure PostgreSQL – Flexible Server

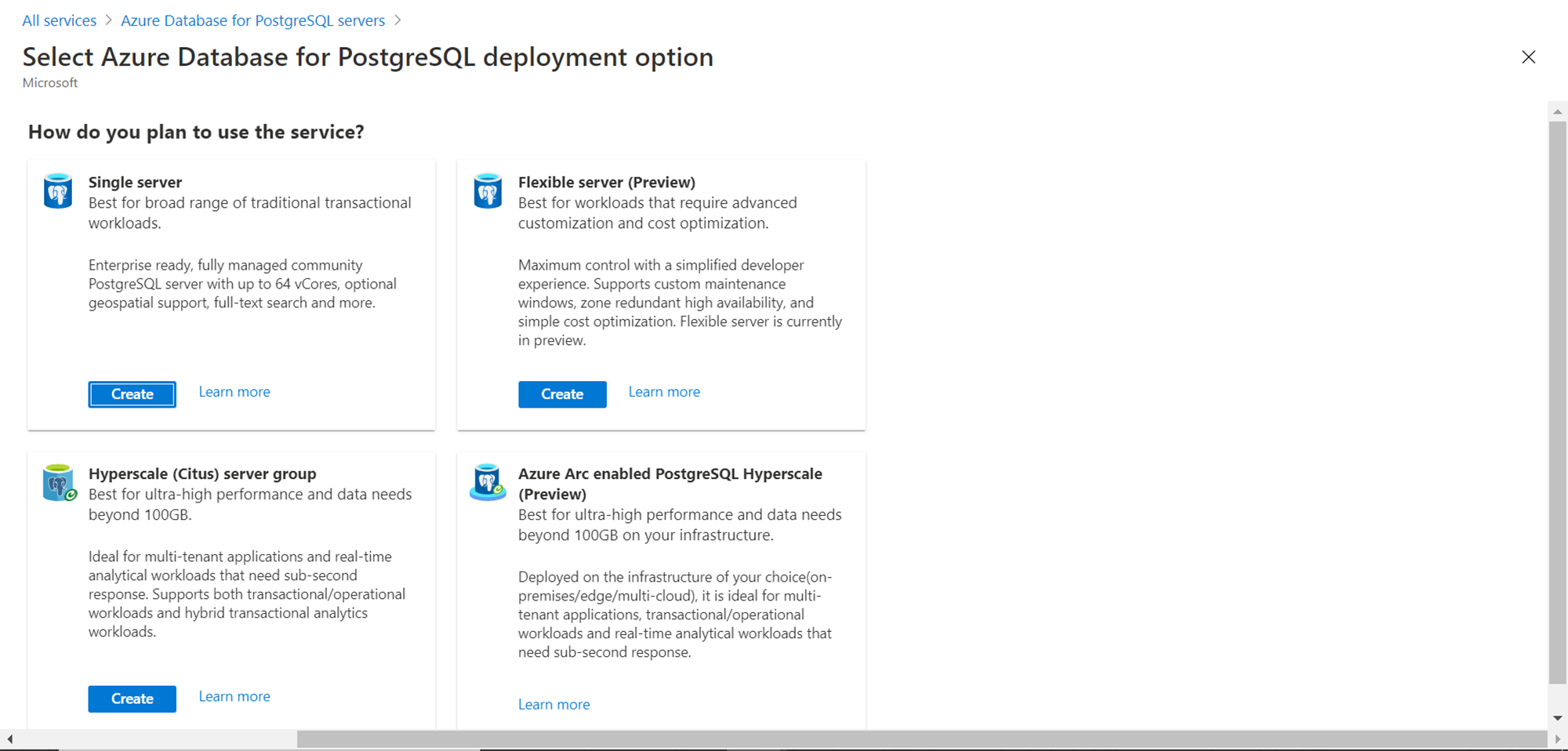

Azure’s offering for hosting PostgreSQL Servers on the Azure platform is the Azure Database for PostgreSQL Server service. This offers four modes of deployment. In the last article, Getting started with Azure Database for PostgreSQL, we learned how to get started with the Single Server option, which is the most frequently used and most fundamental option to start with. Azure has recently launched the Flexible Server option which is in public preview (as of the draft of this article). We will be learning how to use this mode of deployment and some specific features that can enable us to optimize the configuration as well as a usage to effectively optimize costs.

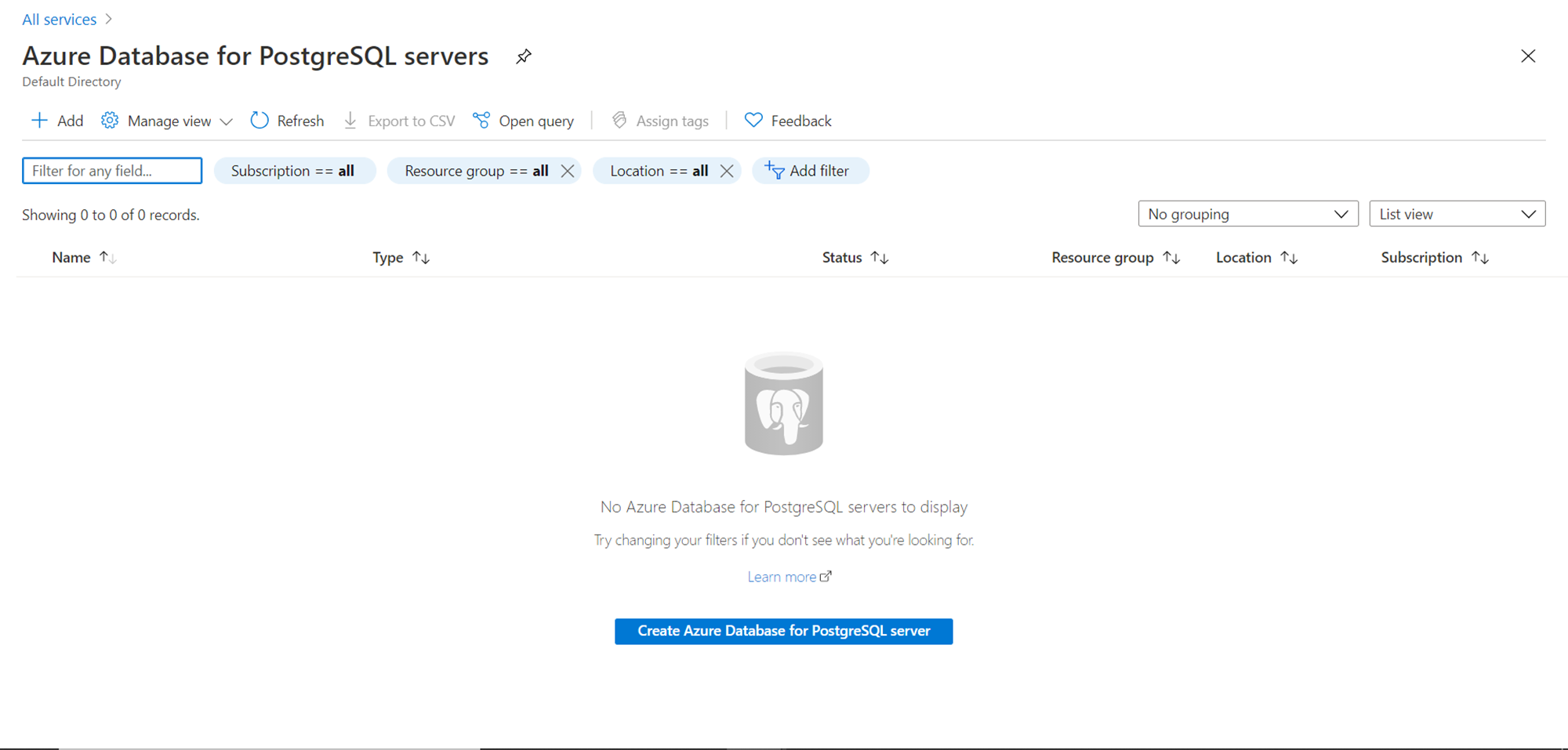

It is assumed that one has the required level of access to Azure portal and Azure Database for PostgreSQL Server service. Navigate to the Azure portal and the dashboard page of this service, and you should be able to find a screen as shown below. It is assumed that there are no existing instances of the database already created, and hence the screen would not have any instances listed.

We need to create an instance of the PostgreSQL database to explore the features provided by the Flexible Server mode of deployment. To get started, click on the “Create Azure Database for PostgreSQL server” button. This would invoke the database creation wizard, and the first option would be to select the deployment option. Select the Flexible server deployment option and click on the Create button.

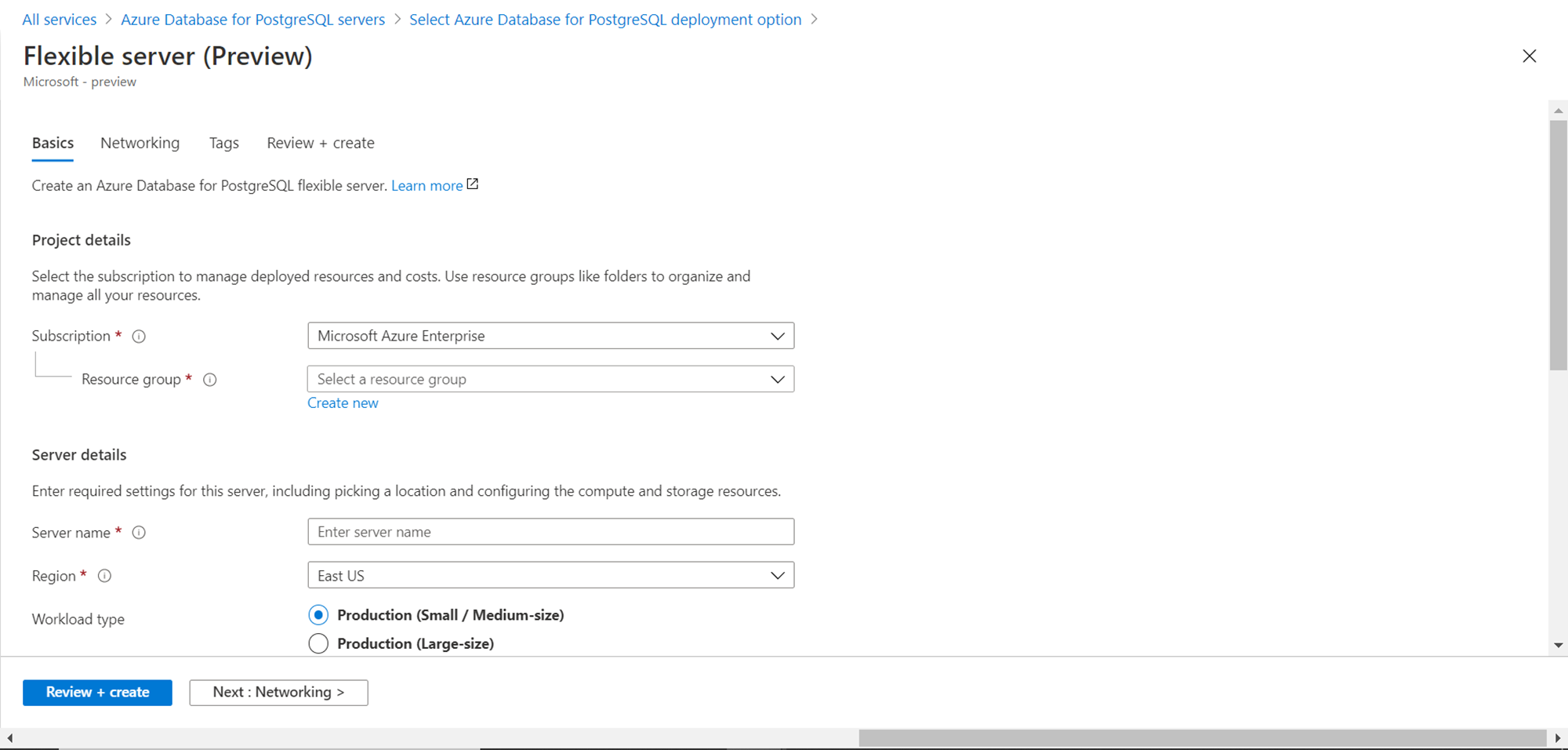

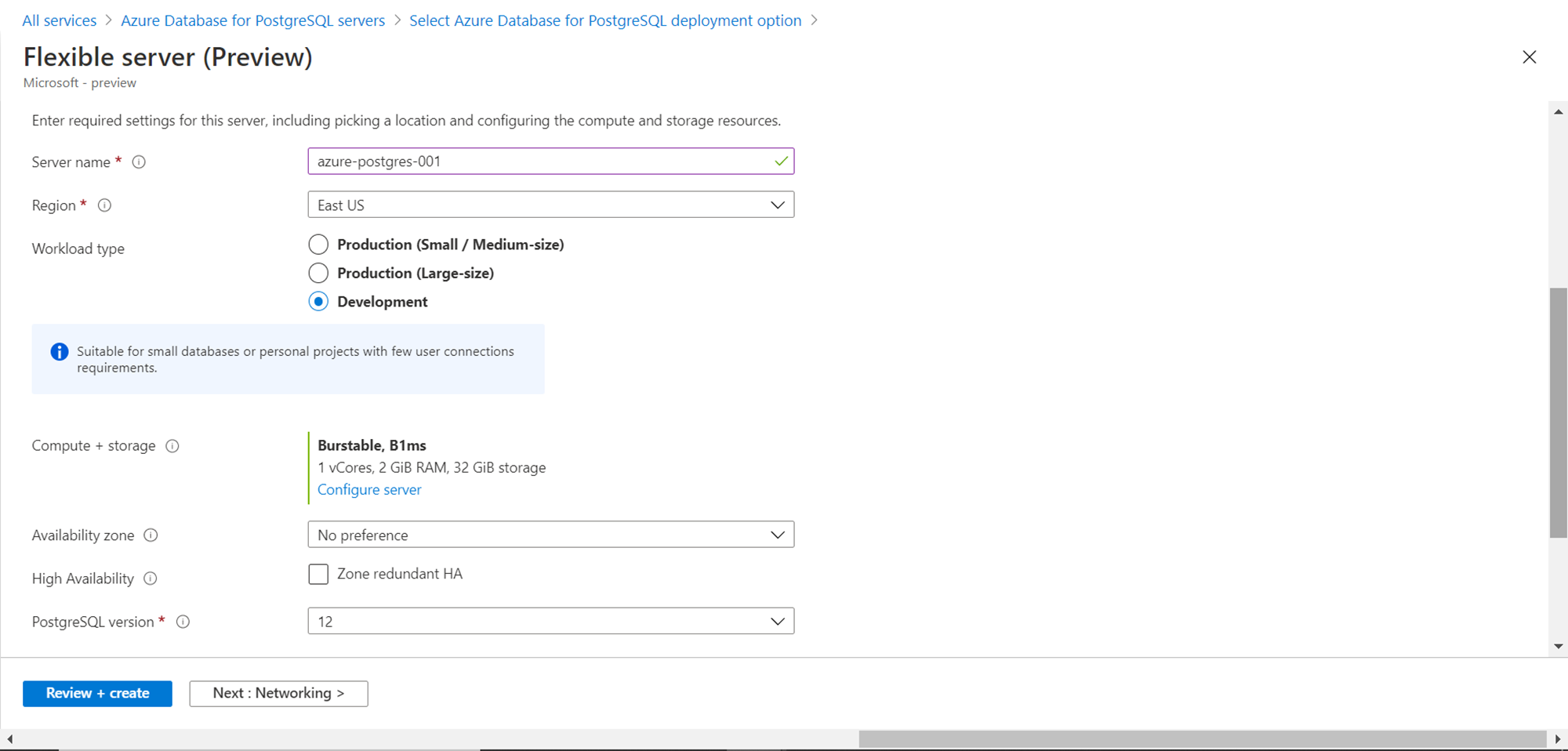

The first detail to provide is the Azure subscription, resource group in which the database instance would be created, the region in which the instance would be created as well as the name of the instance i.e. server name.

Next, we need to select the workload type. We have three options – Development, Production (large-size) and Production (small/medium-size). When creating the instance for a lower non-production environment, one can select development and for the rest of the cases, one can select production environment. As the production workload type has two configs, depending on the selection, it would select different capacities. The development workload type would select 1 vcore, 2 GB RAM and 32 GB storage by default. This can be configured in the next steps. We can select any preference for the availability zone and deploy the instance in multiple zones for high-availability or redundancy. The PostgreSQL version offered as of the draft of this article is 12 as shown below.

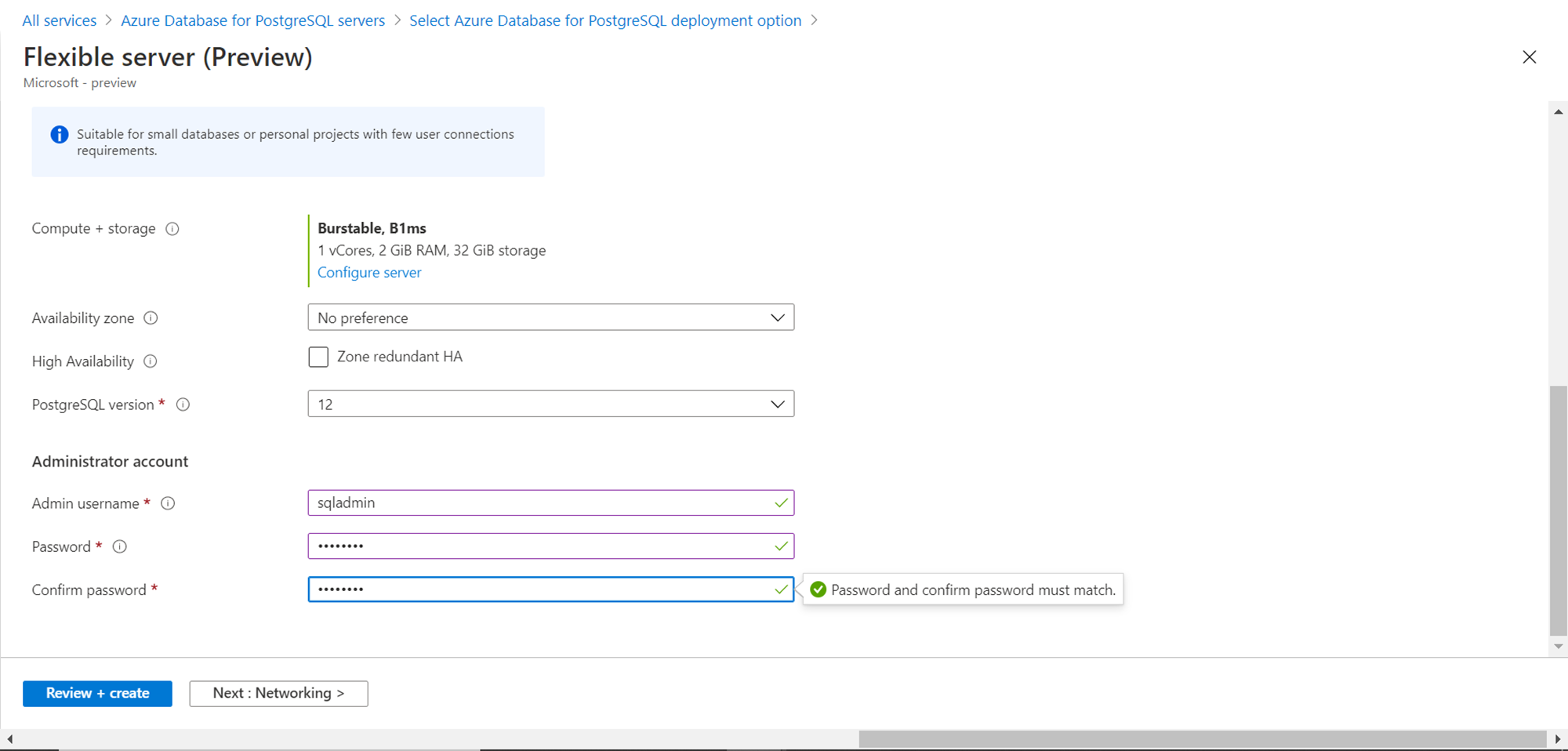

We need to provide administrative credentials for the instance being created as shown below before we can proceed to the next page. Provide the same and click on the Networking button to proceed to the next step.

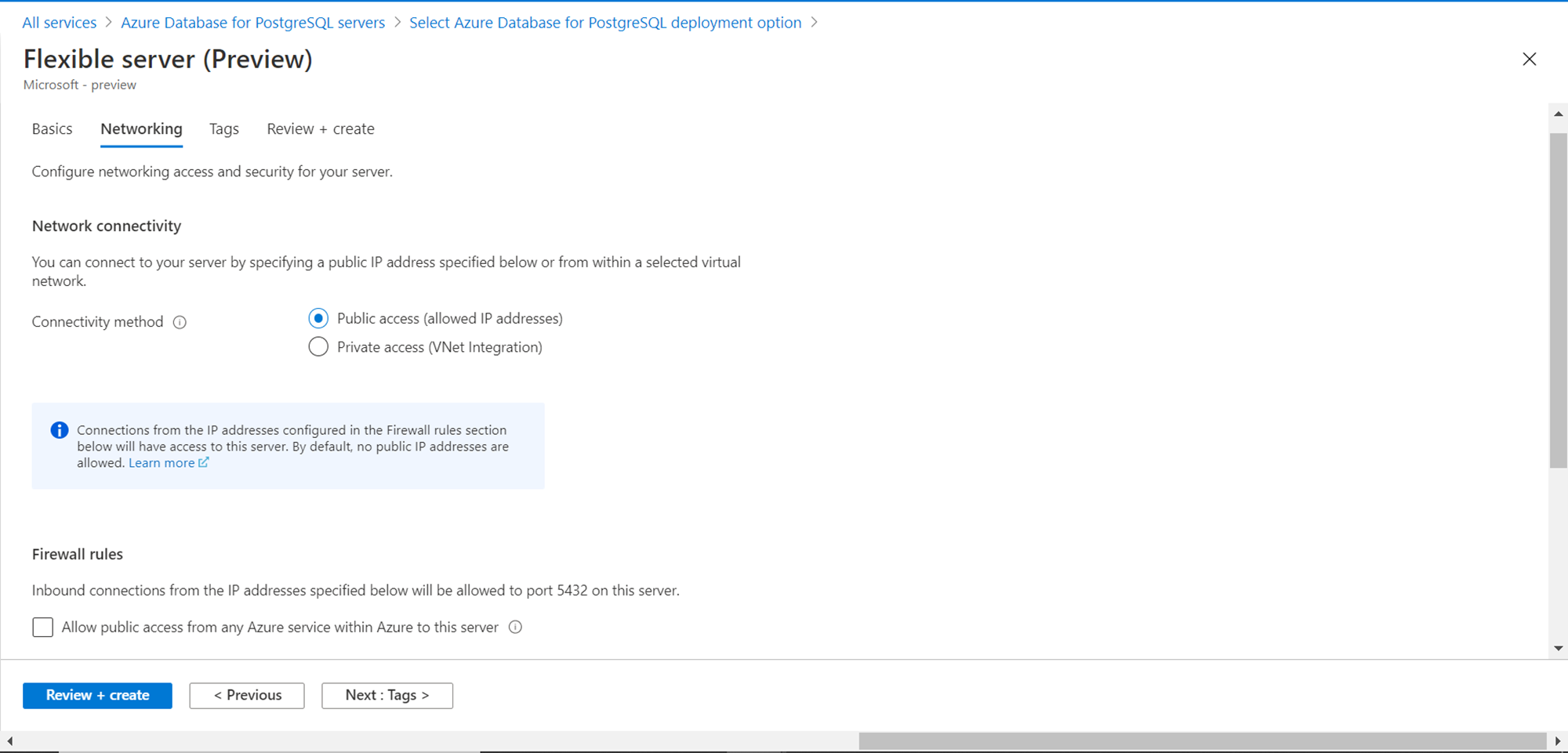

In this step, we need to define the accessibility of this database. Selecting public access will result in all the selected IP addresses that are part of the firewall settings being able to access the database instance, even over the public internet. Typically, this can be the case for ad-hoc access and only in non-production environments. Production instances are rarely exposed to the internet-facing zone and placed in private VNets which do not have any exposure to the internet-facing zone. For our purpose, we will select Public access. Port 5432 is the default port for Azure Database for the PostgreSQL server. By default, other azure services cannot connect to this port and instance. By checking the checkbox at the bottom of the page, one can allow access to this instance to other Azure services. Once done, click on the Tags button to move to the next step.

This is an optional step where we can define metadata for the instance. Provide any tags if required, and then click on the “Review + create” button to move to the next step.

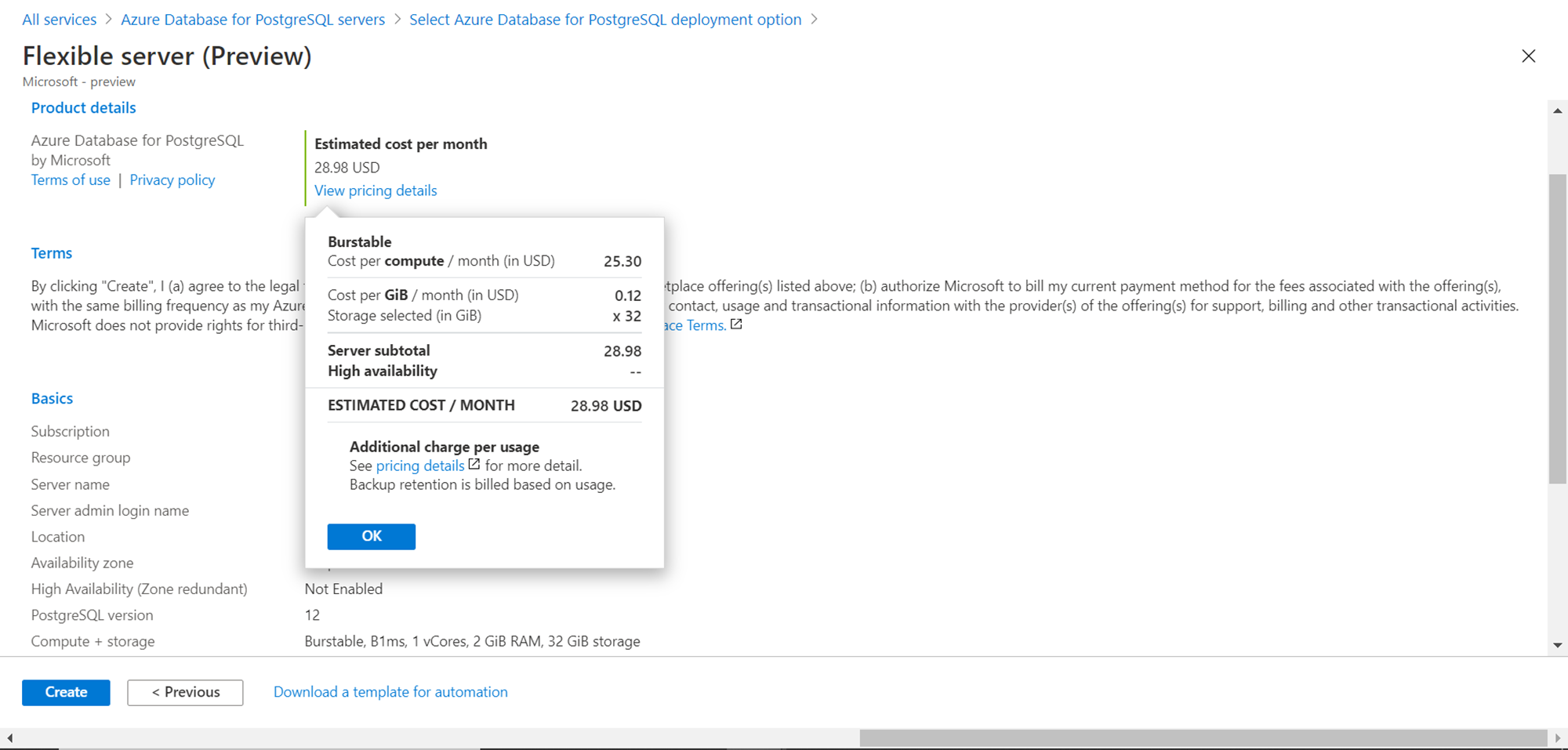

In the review step, we would be able to view the cost as well as configuration-related details as shown below. The cost with the options that we have selected so far comes out to be 28.98 USD. This is considering that we have chosen the minimum capacity configuration, so this should be treated as the bare minimum cost for constant monthly usage. We will discuss the cost in more detail shortly. Click on the Create button to create the Azure Database for the PostgreSQL server instance using the Flexible Server option.

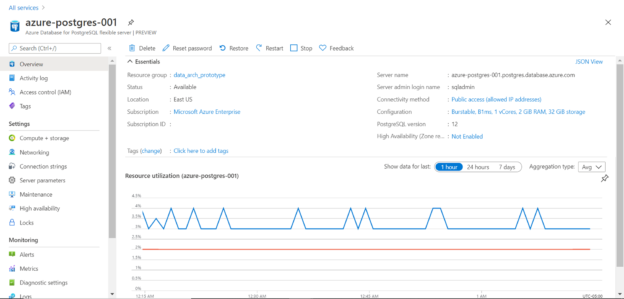

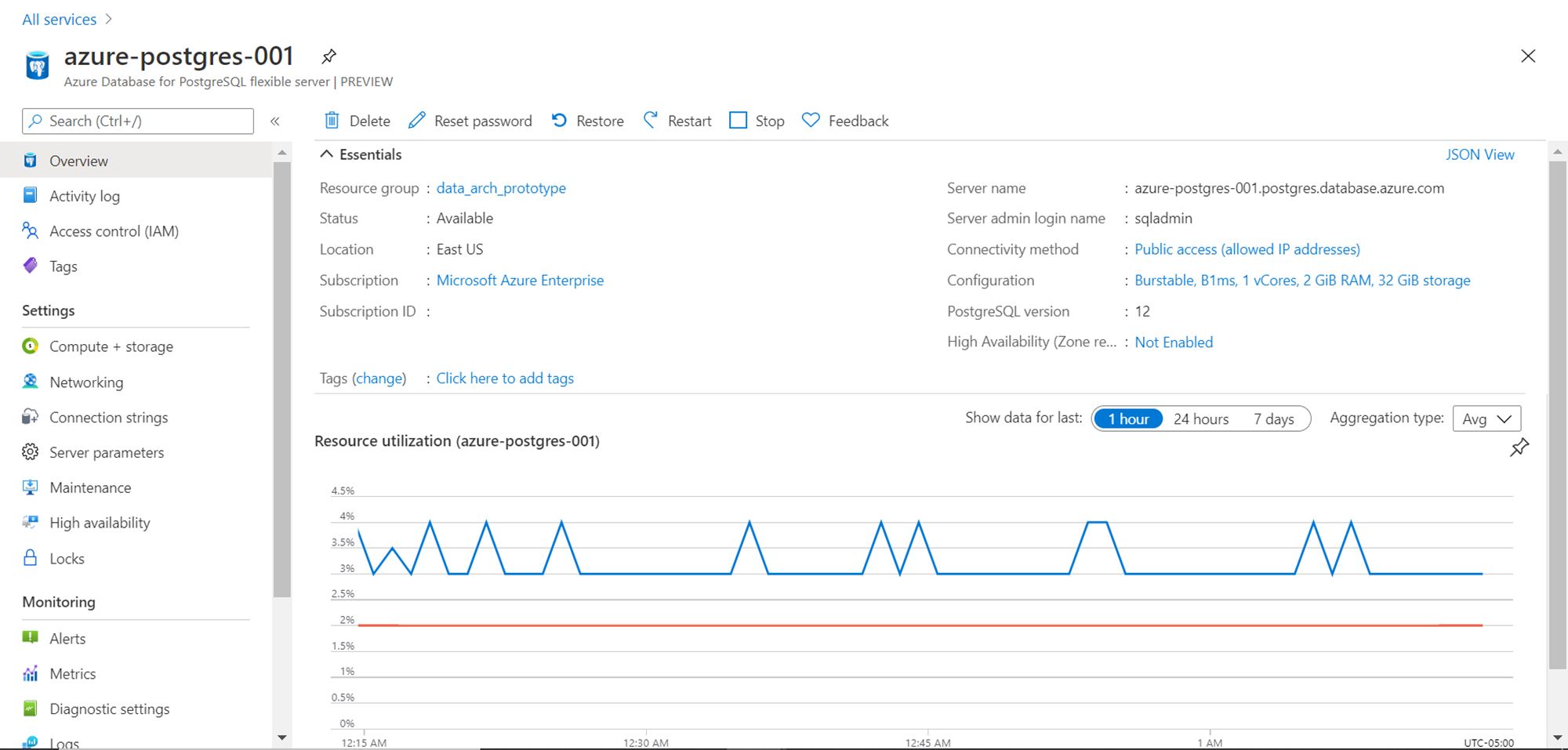

Once the Azure Database for the PostgreSQL server instance is created, click on the “Go to resource” button, which will land us on the dashboard of this page. Generally, once the instance is created, in a production environment, the databases are never taken offline except for critical maintenance activity. But in lower environments, a database may not be required to be used for the entire duration. In this deployment model, we have the option to “Stop” the database when not in use, which is the first way of saving costs. This is where the elastic nature of cloud-based hosting can be utilized to optimize costs.

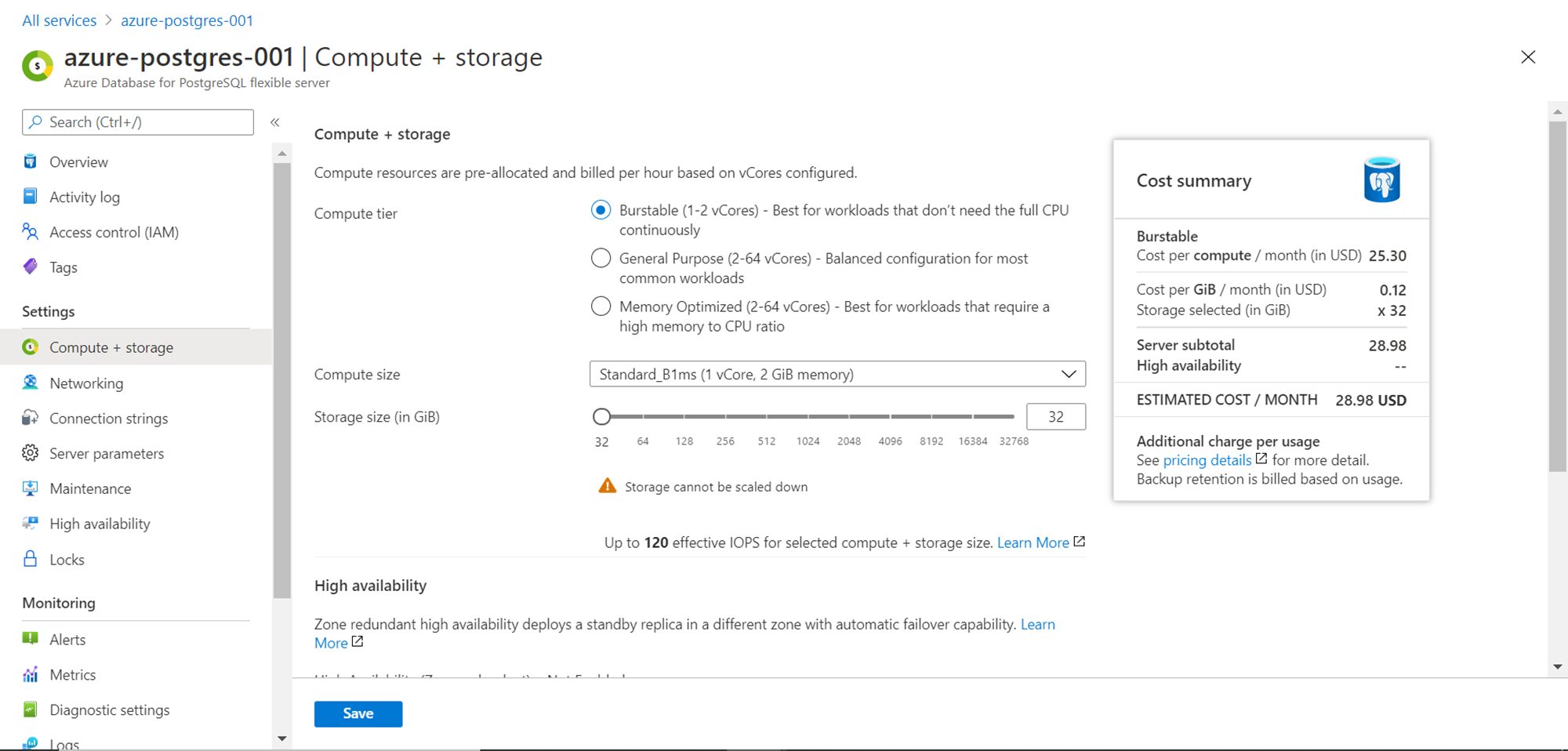

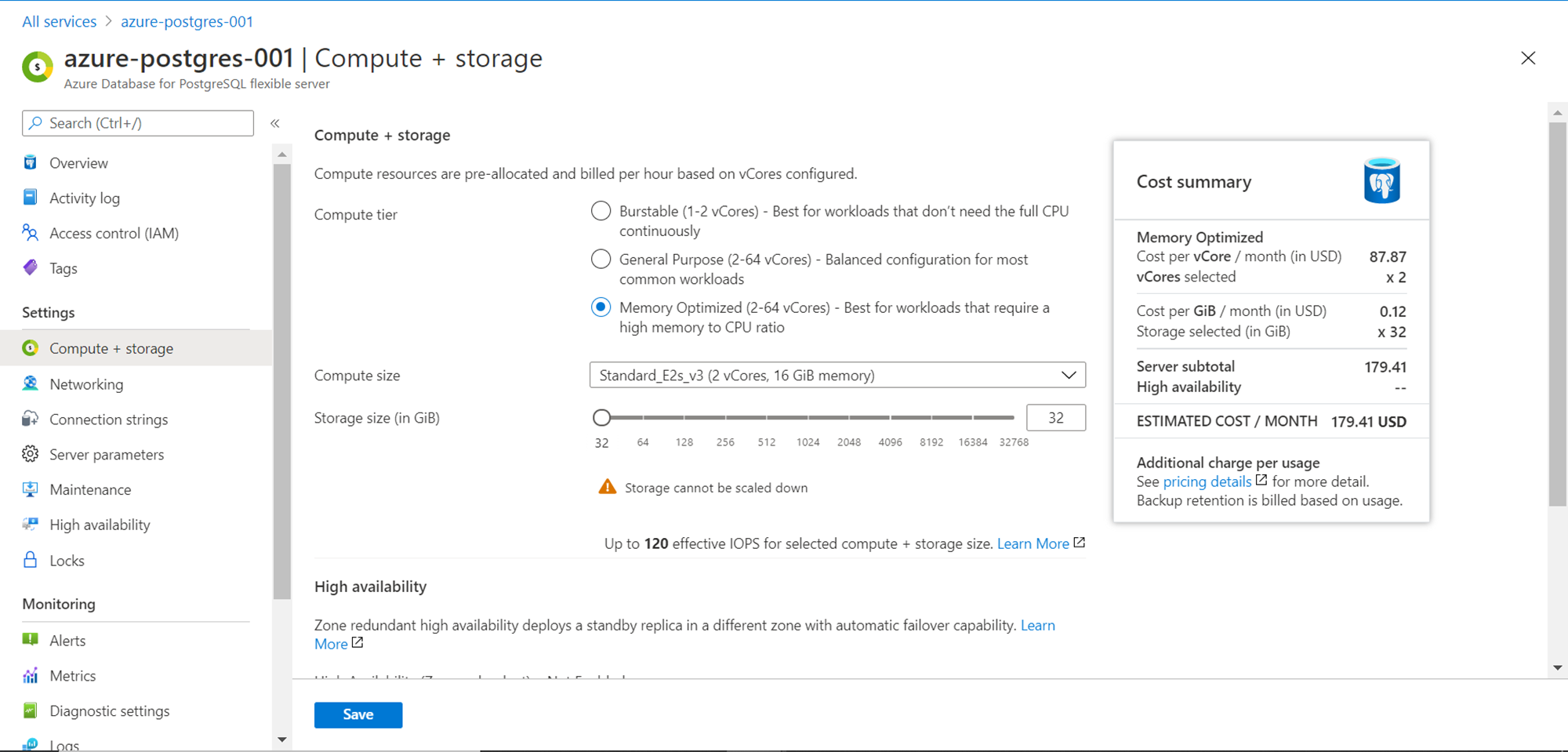

Click on the Compute + Storage settings to view the details. It would look as shown below. Here we are using the Burstable compute tier. If the database instance would be in a dormant state for most of the time and may be used to full capacity or even a bit more than existing capacity, the burstable tier is the most efficient mode. Within this tier, we can change the compute size which increases the number of cores and memory, as well as increase the storage size as well. This will have an impact on the cost as well.

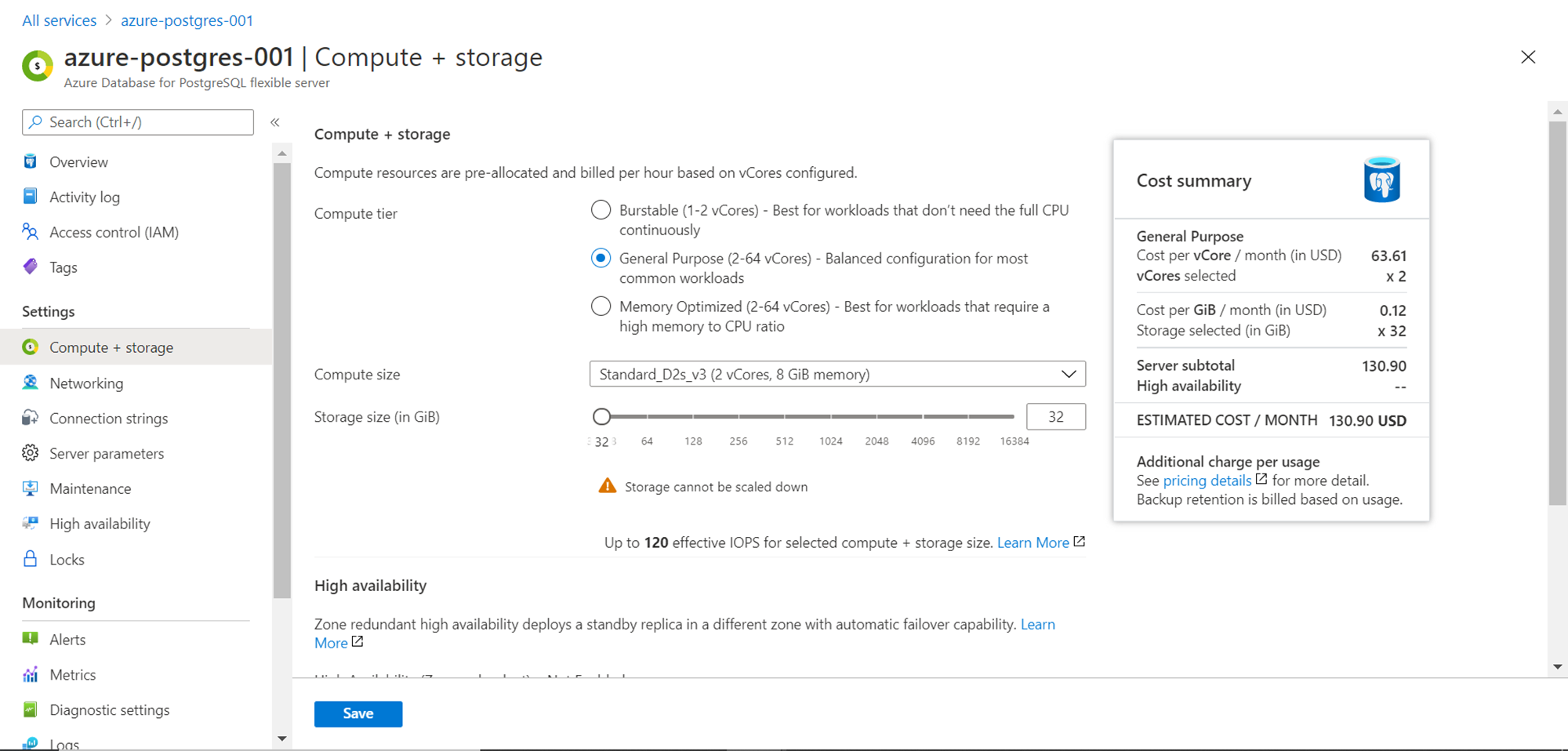

For regular workloads that need regular production-grade performance from small to medium scale applications, the next tier of the General-Purpose tier which supports 2 to 64 vcores can be used. In this tier, the bottom-line cost would be 130.90 USD as shown below, for constant monthly usage.

When the production environment scales to a massive scale, one may start employing a memory-optimized compute tier, which allows the same number of vcores but provides a higher memory to CPU ratio. This means that for the same number of cores, if one needs higher memory, instead of opting for a greater number of cores that deliver effectively more memory, and an efficient option would be to convert the compute tier. The bottom-line cost for this tier would be 179.41 USD.

These are the fundamental options to optimize the cost of Azure Database for PostgreSQL server instance. Many major and granular features can be fine-tuned for cost optimization. Considering reading about the same from here.

Conclusion

In this article, we learned about deploying the flexible server mode of Azure Database for the PostgreSQL server. We learned about different features of this deployment model like starting and stopping the database instance, different compute tiers, storage capacity, multi-zone deployment, etc. We looked at the absolute and relative cost difference between these tiers and understood how the same can be optimized by carefully using the right features and tiers depending upon use-case.

Table of contents

| Accessing Azure Database for PostgreSQL using pgAdmin |

| Azure Database for PostgreSQL Cost Optimization |

| Getting started with Azure Database for PostgreSQL |

- Oracle Substring function overview with examples - June 19, 2024

- Introduction to the SQL Standard Deviation function - April 21, 2023

- A quick overview of MySQL foreign key with examples - February 7, 2023