SQL Server locks, discussed in the article All about locking in SQL Server, which is applied on data for the duration of the logical operation to preserve logical transaction consistency. SQL Server latches, however, are a special type of low-level system locks which are held as long as the physical operation lasts on the memory page in order to protect memory consistency

SQL Server latches are an internal SQL Server mechanism that serves to protect shared memory resources, like pages and memory data structures inside the buffer pool, in order to coordinate access to those resources and protect them from corruption. Designed as an internal SQL Server mechanism that is not exposed outside the SQL Server Operating System (SQLOS), latches can be managed only by SQL Server, itself, and not by users (unlike locks that can be managed via NO LOCK hints). Every time SQL Server has to read memory, it will impose latches to the page or internal memory structure that cannot be accessed in a proper multi-threaded way. In this way, SQL Server establishes latches as a resource for the coordination of multiple physical thread execution in a SQL Server database

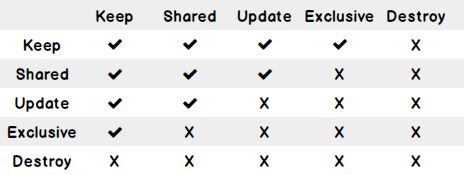

In the same manner as locks, SQL Server latches can come in various modes:

- Destroy Latch (DT): the most restrictive latch mode, acquired when a latch is destroyed and a buffer is to be removed from the cache. DT latches block even the KP latch

- Exclusive Latch (EX): acquires exclusive control of a page being written. Prevents all other latches to be acquired on the page where EX latch exists

- Update Latch (UP): restrictive similar to an exclusive latch, with an exception that it allows read operation to access the page, but restricts, explicitly, any write operation

- Keep Latch (KP): it serves to preserve a latch order record but also to ensure that it stays in the buffer when a new latch is being placed on it

- Shared Latch (SH): acquired on a page when a read request issued to a page is granted

Similarly to locks, there is a compatibility or incompatibility component between the various latch modes. The table below gives an insight in compatibility between the various SQL Server latches

There are many different types of SQL Server latches, but essentially they can be split into three general categories: I/O latches, buffer latches, and non-buffer latches.

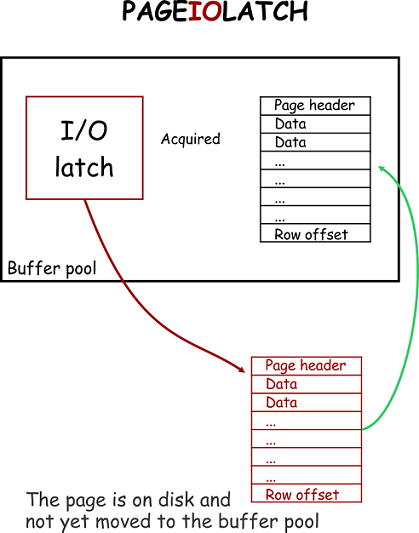

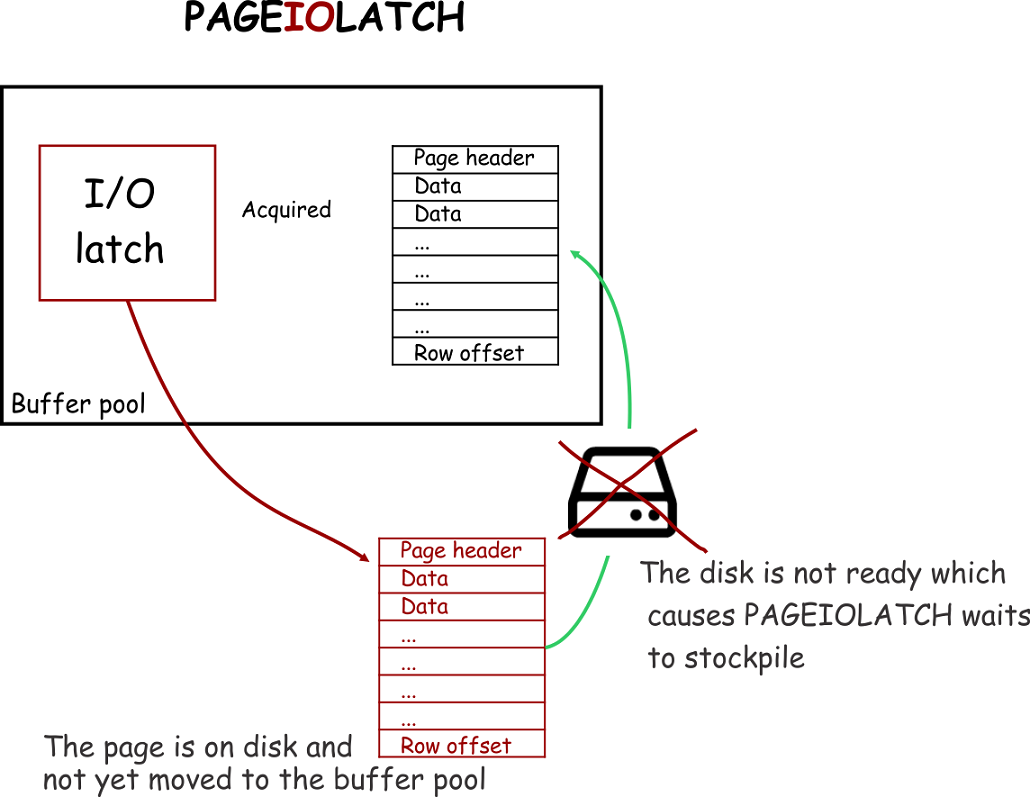

I/O latches

I/O Latches are acquired in situations when an outstanding I/O operation is executed over the pages stored in the buffer pool, or more precisely, when data has to be read from or written to physical storage. The SQL Server will use PAGEIOLATCH_XX wait types to report when a process is waiting for on a SQL Server I/O latch to be released

So, in situations when the page is requested to be brought from storage into a buffer pool, a PAGEIOLATCH will be acquired on that page, and if storage is not ready to be read the PAGEIOLATCH wait type count will increase

Buffer latches

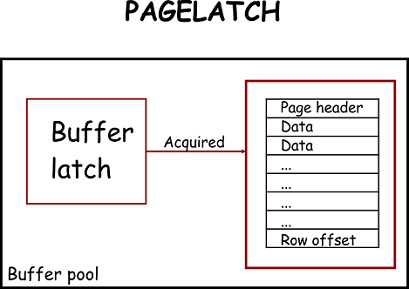

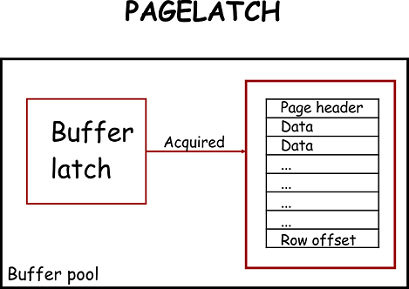

In order to properly understand buffer latches, it is important to properly understand the idea behind the memory buffer pool, which is designed around the goal of maximizing SQL Server performance. The buffer pool is a physical memory range where data that is read from disk is stored in data pages. Data in SQL Server tables is stored in pages and each page has a fixed size of 8192 bytes (8 KB). Whenever a data page has to be read or written to, it will be first brought into a buffer pool. In that way, any further access to that page will be read directly from the memory buffer pool, thus improving SQL Server performance by minimizing disk IO.

This implementation of the memory pool concept in SQL Server is what drives SQL Server physical memory usage can be high even in situations where there is no SQL Server activity. The loading of data in the buffer pool is based on the First-In First-Out (FIFO) principle.

SQL Server uses the buffer manager for managing the buffer pool and it is therefore in charge of any hash tables, the pool array that contain pages and for pages stored in the buffer. The SQLOS is accessing the data stored in the memory exclusively via the buffer manager

The pages that are modified in the buffer pool due to executed insert, delete or update command, are the so called “dirty” pages, while the unmodified pages are called “clean” pages. So when the page has to be accessed in memory, the SQL OS will acquire the buffer latch on that page. But unlike a lock, the SQL Server latch will not be held for the transaction duration but rather just during the critical period of a transaction, and it will be released as soon as it is no longer needed. SQL Server will use PAGELATCH_XX wait types to report when a process is waiting for on a SQL Server buffer latch to be released

Non-buffer latches

Non-buffer latches are designed to protect and guarantee any physical memory structure other than pages stored in the buffer pool. SQL Server will use LATCH_XX wait types to report when a process is waiting for on a SQL Server buffer latch to be released. Non-buffer latches are not often encountered during, and thus those are the least documented, but here are some use cases that can lead to SQL Server contention with non-buffer latches:

- Excessive parallelism – In a situation when a high level of parallelism is used on servers with 12+ logical processors, most if not all, queries can qualify to use parallel execution plans. In such a situation, non-buffered latches (LATCH_XX) will be acquired in memory to ensure the synchronization of internal memory structures used by parallel execution plans

- Too many auto-grow/auto-shrink operations – in systems with poor planning of database sizing or storage capacity (bad default database settings), auto-grow operations can be executed frequently. In addition, when auto-shrink is turned on, frequent database shrinking will occur. When growth and shrink operations are executed, SQL Server acquires FCB, FGCB_ADD_REMOVE and FGCB_ALLOC latches class to ensure the access to the file control block and to ensure synchronized access to information stored in the filegroup

- Very high frequency of DML operations on heap and BLOB data structures – In a situation where excessive DML operations are performed on heap and BLOB data, it is necessary to make sure to keep all internal memory structures in responsible for allocation and deallocation of pages to heap synchronized. In such situations, excessive LATCH_EX wait types can be encountered. When this occurs ALLOC_CREATE_FREESPACE_CACHE, ALLOC_FREESPACE_CACHE, ALLOC_EXTENT_CACHE wait types could be found as prevailing wait types via the sys.dm_os_latch_stats DMV

So, based on the previous, in situations when LATCH_XX wait type have excessive values or those are prevalent wait types, it is good to check which non-buffer latches are prevalent in the SQL Server using the following query

|

1 2 3 4 5 6 7 |

SELECT latch_class, wait_time_ms,waiting_requests_count, 100.0 * wait_time_ms / SUM (wait_time_ms) OVER() AS '% of latches' FROM sys.dm_os_latch_stats WHERE latch_class NOT IN ('BUFFER') AND wait_time_ms > 0 |

SuperLatches

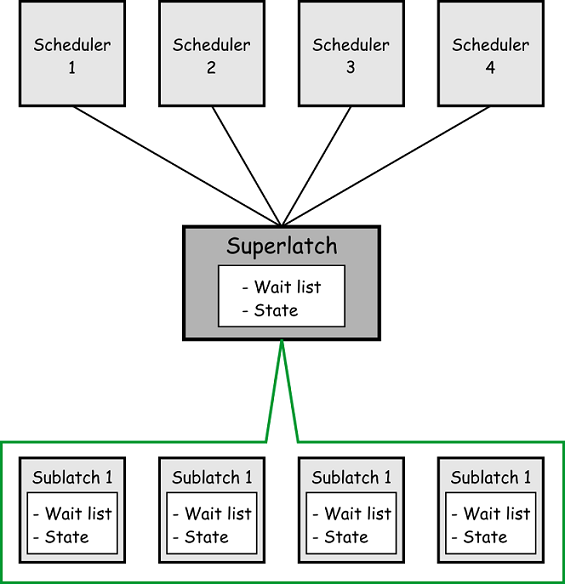

Starting with SQL Server 2005, superlatches (also called sublatches) were introduced to improve SQL Server efficiency in highly concurrent OLTP workloads for a certain pattern of usage (i.e. very high shared read only access to the page (SH) while write access is very low or not exists). Superlatches are used by SQL Server only in NUMA systems with 32+ logical processors. This mechanism is an efficient way of SQL Server to deal with a latch contention by dynamically promoting an array of latches to a Superlatch and thus allowing an SH mode request to the superlatch, while the containing sublatches can remain different modes. When this occurs, the superlatch becomes just a pointer to an array of SQL Server latches.

A Superlatch will behave as a single latch with sublatch structures and there can be one sublatch per partition per logical CPU core. So when a superlatch is created, the CPU worker thread will just have to acquire the shared (SH) sublatch that is assigned to the scheduler. This ensures that a shared (SH) superlatch uses less resources while at the same time access to pages is more efficient comparing to non-partitioned shared latches. The reason for this is that the superlatch do not require any synchronization of the global state as it will access only the local NUMA memory

Latch Contention

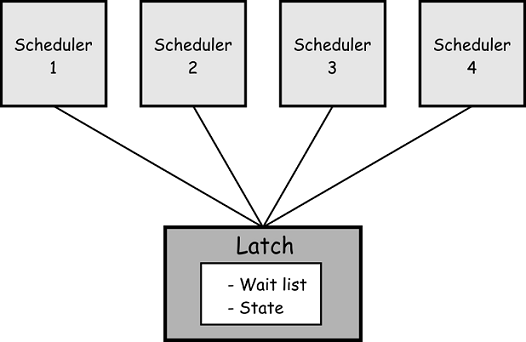

Latch contention is a frequent scenario for systems with large number of CPUs, and it is the consequence of situations when on the same in-memory structure, multiple threads are trying, concurrently, to acquire SQL Server latches that are not compatible with each other. Since SQL Server latches are controlled by an internal SQL Server mechanism, SQLOS will determine on its own when to use them. Due to the deterministic nature of SQL Server latches and their behavior, various parameters such as application design or database schema structure can significantly affect SQL Server latches

On high throughput systems which are designed for a large number of CPUs and thus, high-concurrency, active latch contention is expected as a regular occurrence of on memory structures are often accessed and protected using the latches. But the situation when latch contention and latch wait types wait time is large enough to decrease utilization of CPUs is what results in the reduced throughput

Recognizing and identifying the signs of latch contention is important, so let’s shed light on some symptoms of latch contention

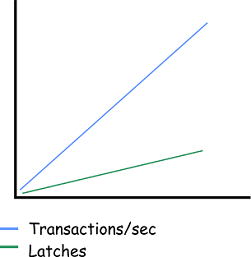

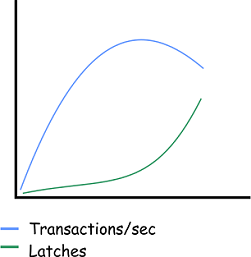

The expected behavior of SQL Server latches, in relation to the transactions per second, is that transactions per second will increase along with increasing average SQL Server latch waits, that themselves increase at a slow rate that will be within the margins of the throughput. Such a situation is represented in the image below and this is the desired system behavior which indicates that logical processors are not conflicting with each other. In such a scenario, adding more logical processors means that more can be done

Situations when transactions/sec value is dropping when enabling additional logical processors while, at the same time, average SQL Server latch wait times are increasing at a greater rate than the system throughput, potentially indicate that there is a high probability that a problem with a latch contention may exist. The following image represents a typical situation where adding new logical processors worked until the certain point when longer latch wait times started to occur. This results in a situation where adding new logical processors will not have any benefits, up to a point where transactions/sec starts to negatively affect performance. This is ta typical situation where adding new logical processors actually had a negative, vs. a positive effect, as the resulting system environment will be spending a lot of time in a waiting state.

Latch contention that can affect the OLTP performance is mainly caused when high concurrency is the result of some of the following factors:

- Application design based on high concurrency – when a client application issues a high number of concurrent requests against the database

- SQL Server logical files layout – allocation structures such as Global Allocation Map (GAM), Shared Global Allocation Map (SGAM), Page Free Space (PFS) and Index Allocation Map (IAM) can impact page latch contention when many concurrent threads are in conflict

- Database schema design – read, write, delete data access patterns, index B+tree depth, design of clustered and non-clustered indexes, rows size and density per page

- The performance of I/O subsystems – is a quite frequent cause since, due to low I/O subsystem performance, SQL Server must wait for the data to be moved to a buffer pool. Excessive PAGEIOLATCH_XX wait type is indicative of the slow I/O subsystem

- Large number of logical CPUs assigned to SQL Server – Excessive latch contention that affects the performance of SQL Server to a level that is not acceptable is indicated in the system with more than 16 logical CPUs, and more logical CPUs are available the higher level of contention might be

- SQL Server trace flags guide; from -1 to 840 - March 4, 2019

- How to handle the SQL Server WRITELOG wait type - June 13, 2018

- SQL Server performance counters (Batch Requests/sec or Transactions/sec): what to monitor and why - June 5, 2018