As a DBA I am often asked to provide lists of all active users ID’s or groups for a specific server or database. In addition to this, I am also asked to provide a list of failed logins. These requests are frequent enough that I decided to automate the process of gathering this data and provide two nicely formatted HTML emails. I am not going lie, manager types love these reports, and anything that makes managers love my work I am all about! I’m also all about automating anything that makes my job easier. Call me lazy or call me prepared, I hate having to do something over and over that I could easily throw into an SSIS package or Agent Job and just schedule it to do it for me. This entire process consists of using SQL Server Integration Services (SSIS), 4 tables and a SQL Agent Job containing 2 reports (Failed Logins and Active SQL Server Users). The SSIS package, along with all of the queries and scripts are attached at the end of the article.

I have created a database that I use for my DBA activities that I call DBA_Reports which is located on what I will call my main server. I also have a database on all servers that I monitor called DBA_Monitoring which I use for staging and gathering data. This one is optional just be sure to make the changes in the scripts if you choose to only use a single database for collecting data. Other than these two databases you will also need the following on your main server:

- DBMail enabled on your server – this is absolutely key to this process. Also make note of your mail profile name as you will need it for the reports.

- Depending how you are set up and what servers you are monitoring, you may need to create a linked server for each server. Or use a ProxyID.

- Create yourself as an operator so you can receive notifications if your job fails.

Personally I like to add a notification on every job I create in case it fails. This is optional.

I’m not going to go into details on how to set these up as they are readily available from other sources, however, just consider them as prerequisites.

Step 1: Create the main server tables in DBA_Reports

- Tables

- MonitoredServerList – this will house the servers to be monitored and should be prepopulated by you with the ServerName of each server to be monitored.

- AuthorizedUsers – this will house the authorized user information collected from each server monitored

- FailedLogins – this will house the failed login information collected from each server monitored

On the main server you will need to create all 3 tables. Copy/paste/Run the scripts below to create the tables:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 |

USE [DBA_Reports] GO CREATE TABLE [dbo].[MonitoredServerList] ( [Server] [varchar](128) NOT NULL ,CONSTRAINT [PK_MonitoredServerList] PRIMARY KEY CLUSTERED ([Server] ASC) WITH ( PAD_INDEX = OFF ,STATISTICS_NORECOMPUTE = OFF ,IGNORE_DUP_KEY = OFF ,ALLOW_ROW_LOCKS = ON ,ALLOW_PAGE_LOCKS = ON ,FILLFACTOR = 80 ) ON [PRIMARY] ) ON [PRIMARY] CREATE TABLE [dbo].[AuthorizedUsers] ( [ServerName] [nvarchar](128) NULL ,[UserID] [sysname] NOT NULL ,[Role] [varchar](13) NOT NULL ,[AccountType] [nvarchar](60) NULL ,[RunDate] [datetime] NOT NULL ) ON [PRIMARY] CREATE TABLE [dbo].[FailedLogins] ( [LogDate] [datetime] NOT NULL ,[ProcessInfo] [varchar](50) NOT NULL ,[Text] [varchar](max) NOT NULL ,[ServerName] [varchar](50) NULL ,[RunDate] [datetime] NULL ) ON [PRIMARY] TEXTIMAGE_ON [PRIMARY] GO |

Step 2: Create the staging tables in DB_Monitoring

These are similar to the scripts in step 1 however there is a slight variation on the FailedLogins table so please use these scripts below. These tables are used to house the data collected from each specific server monitored. I consider this to be staging data. I like to keep it separate from the main database just in case I need to go back into it later or if something failed along the way. I want to see it in its raw form on its source server. Just my preference, so this is optional. You will see later where these are used in the SSIS package. You could always swap out these stage tables for temp tables of course, again optional.

Tables

- Tables

- FailedLogins – this will house the failed login information collected from each server monitored

- AuthorizedUsers – this will house the authorized user information collected from each server monitored

On each monitored server in the DBA_Monitoring database you will need to create these 2 tables. Copy/paste/Run the scripts below to create the tables:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

USE [DBA_Monitoring] GO CREATE TABLE [dbo].[AuthorizedUsers] ( [ServerName] [nvarchar](128) NULL ,[UserID] [sysname] NOT NULL ,[Role] [varchar](13) NOT NULL ,[AccountType] [nvarchar](60) NULL ,[RunDate] [datetime] NOT NULL ) ON [PRIMARY] GO CREATE TABLE [dbo].[FailedLogins] ( [LogDate] [datetime] NOT NULL ,[ProcessInfo] [varchar](50) NOT NULL ,[Text] [varchar](max) NOT NULL ) ON [PRIMARY] TEXTIMAGE_ON [PRIMARY] GO |

Step 3: Create a simple SSIS package.

This is a really simple SSIS package for someone that has experience with SSIS. It consists of 5 SQL queries, two connection managers and 2 variables used for connections. The package name is ActiveUsersAndInvalidLogins. The SSIS package is included at the end of this article along with all queries above and below.

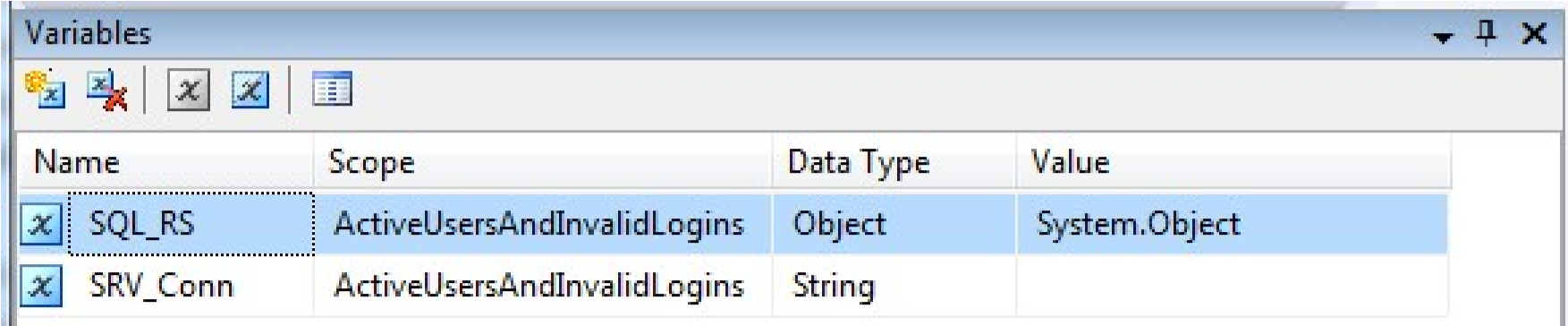

Variables:

These are used for connecting to the remote servers.

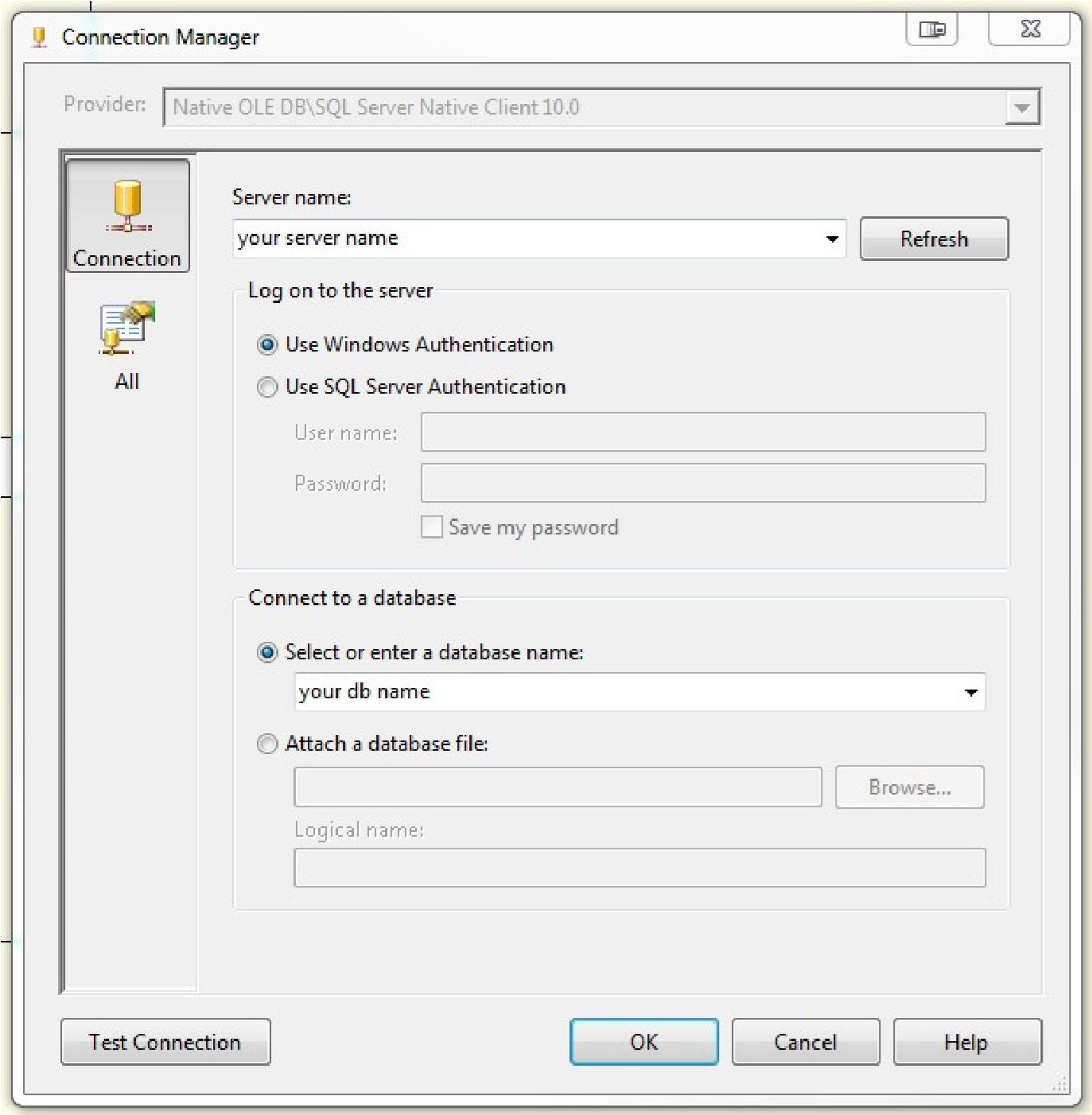

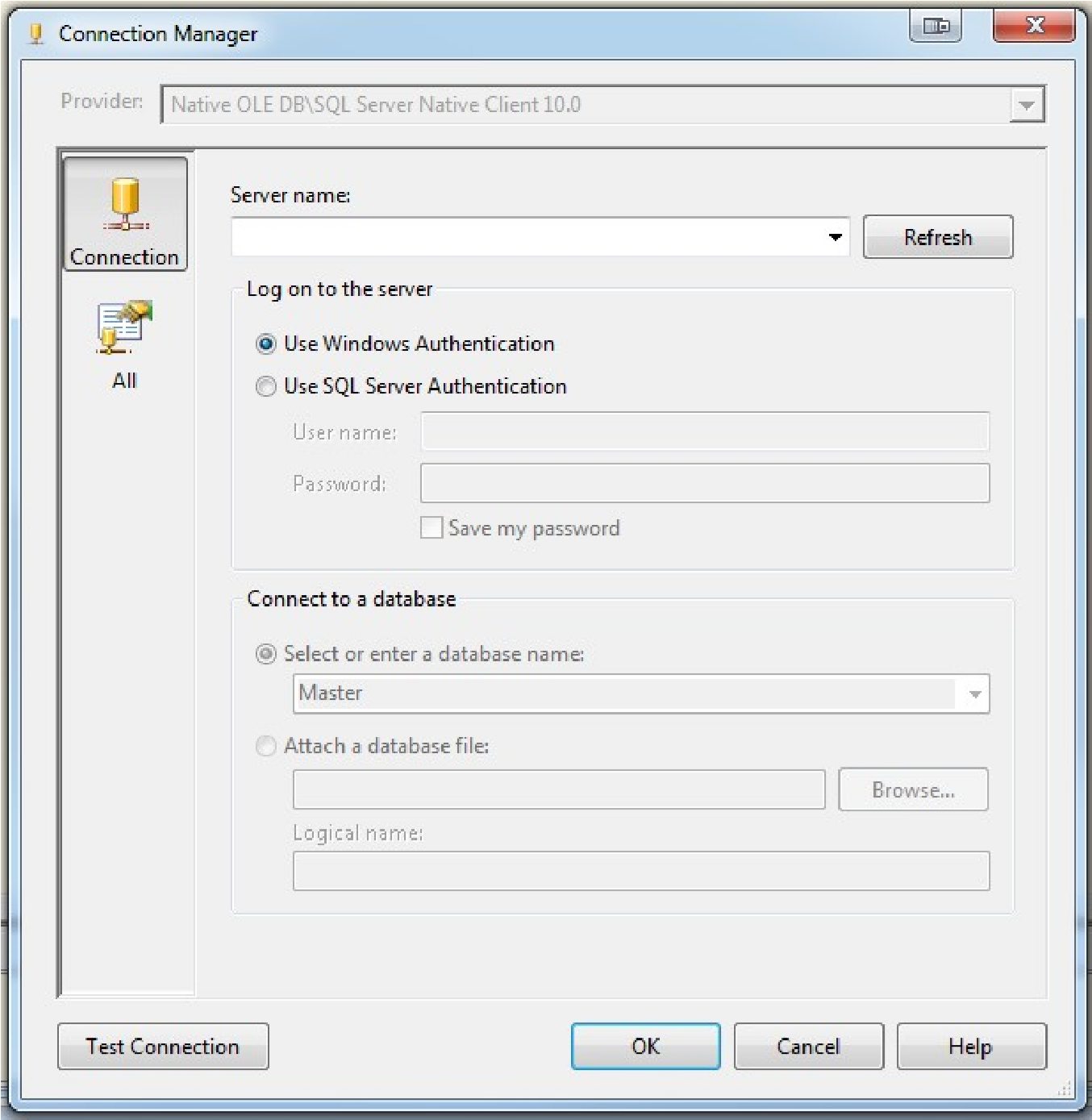

Connection Managers:

MainServer – this is the connection where I keep DBA_Reports

MonitoredServers – this is a connection to my remote servers that I am monitoring. In my case I use a proxyID called MonitoredServers on each server that has elevated permissions so it can crawl into each server and pull data. I also have a linked server to each monitored server on my main server. You don’t have to do this if you have an ID that is consistent across all servers. In this example, just create the connection manager and leave the server name blank and the database name blank.

- Tables:

- MonitoredServerList

- AuthorizedUsers

- FailedLogins

- Reports:

- SQL Server – Authorized User IDs

- SQL Server – Failed Login Attempts

- SSIS Package:

- ActiveUsersAndInvalidLogins

- SQL Server Audit (Database Engine)

- How to get the list of all database users

- SQL Server – Get all Login Accounts Using T-SQL Query – SQL Logins, Windows Logins, Windows Group

- How to automatically refresh a SQL Server database - October 4, 2017

- SQL Server Index vs Statistics a consultants woes…or rants - December 29, 2016

- How to Split a Comma Separated Value (CSV) file into SQL Server Columns - December 29, 2016

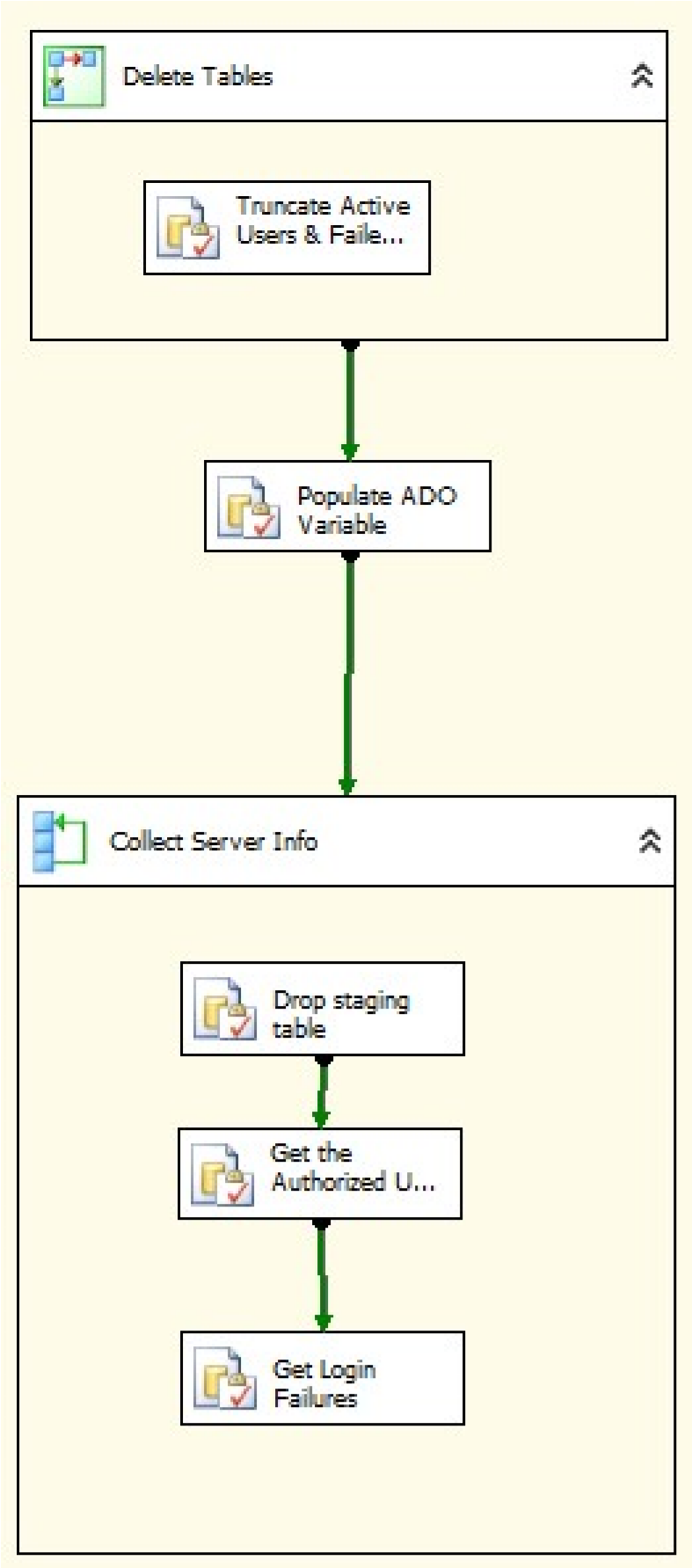

Inside the package

The Package consists of 3 nodes. The first node will remove old data from the tables on the main server. This step is optional if you want to maintain a history but then you will need to change the queries on the reports which are discussed later. I want fresh data daily so I remove old data. This task is contained within a sequence container.

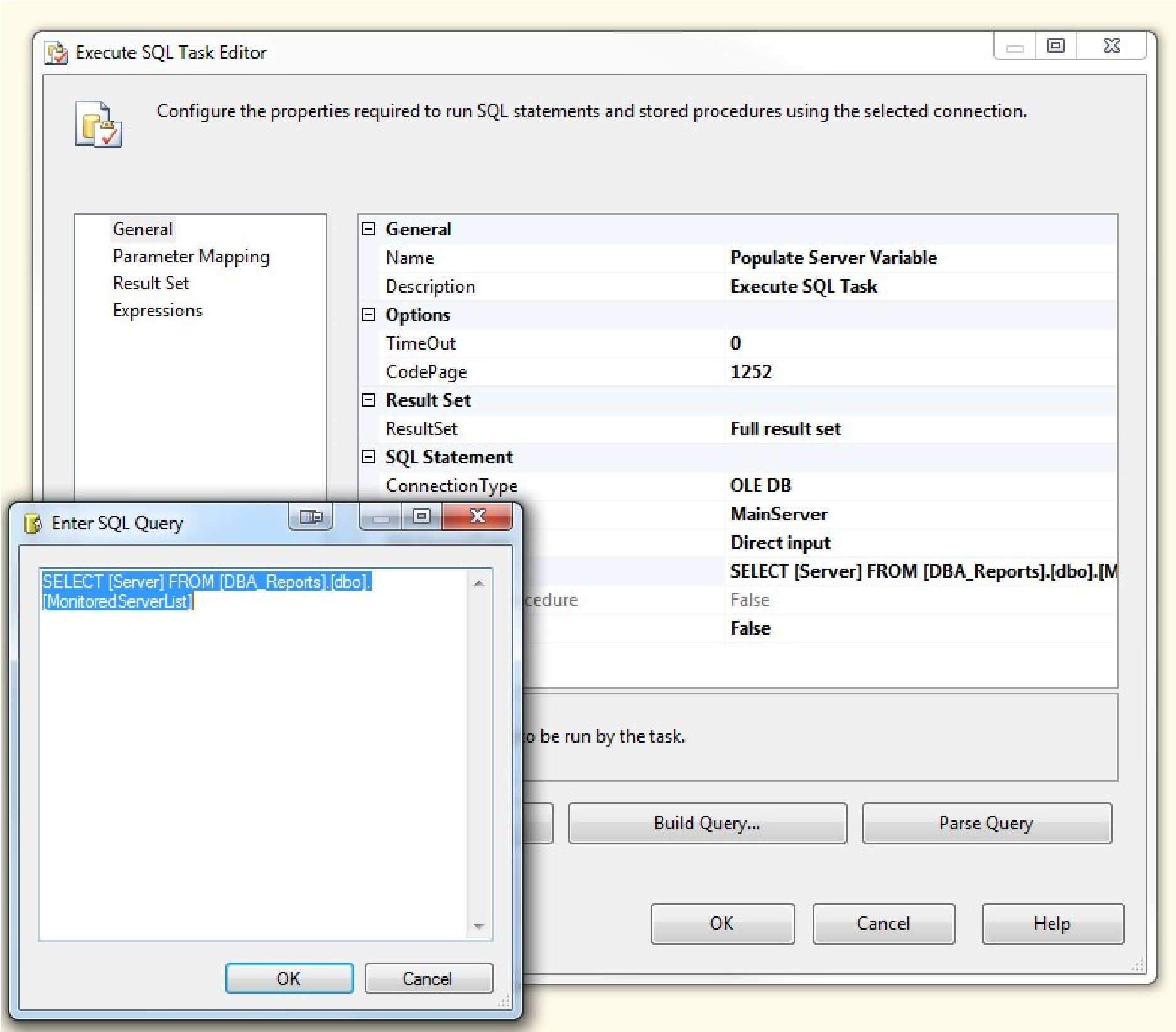

The second node is going to populate our variable inside the foreach loop in the following step which is our server names so that we can pull data from the DBA_Monitoring database on each monitored server.

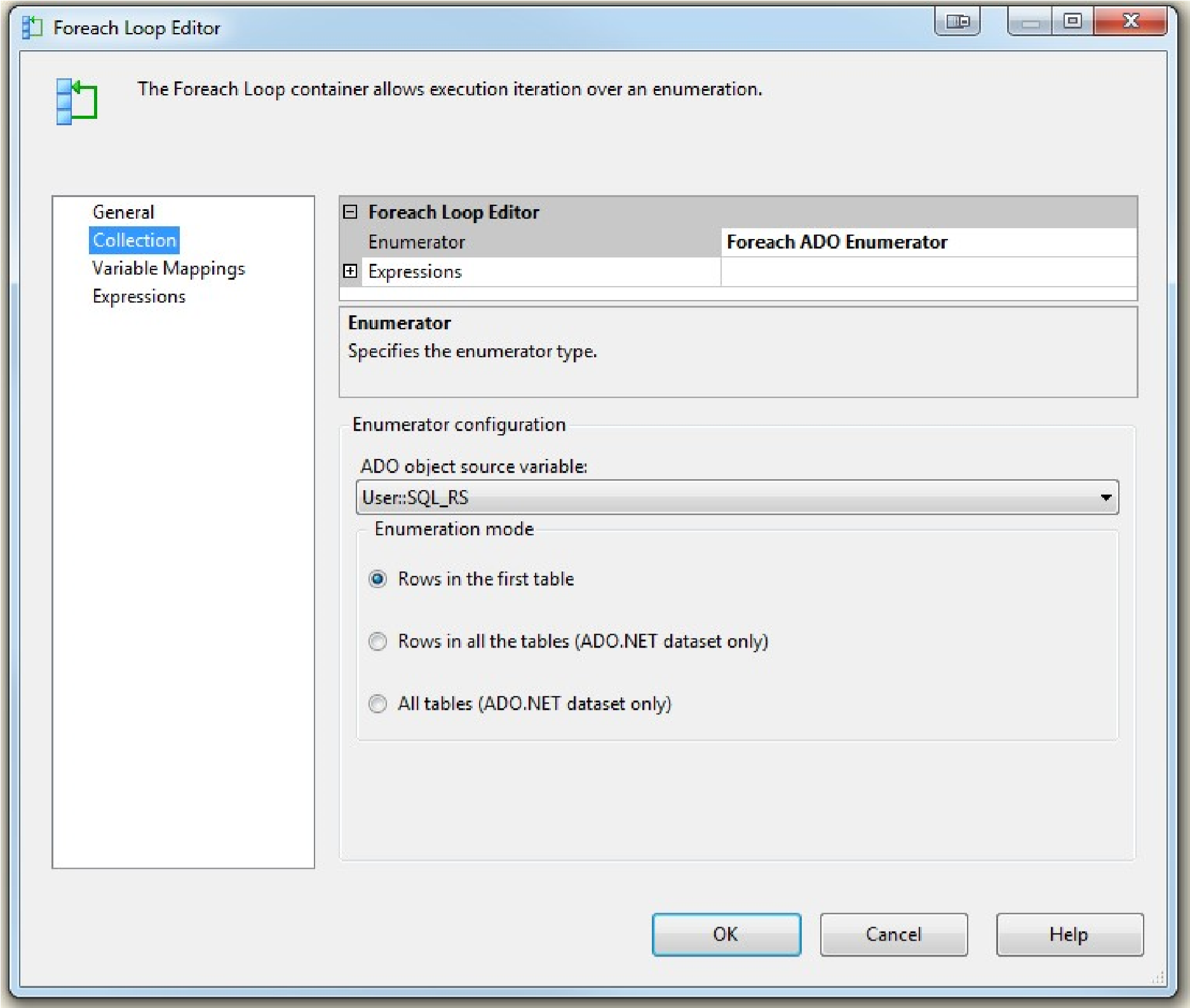

Next we have a foreach loop container that contains 3 queries that are run on each server that we are monitoring then saves the data into our main server. This container has a variable mapping that connects to our User::SRV_Conn variable.

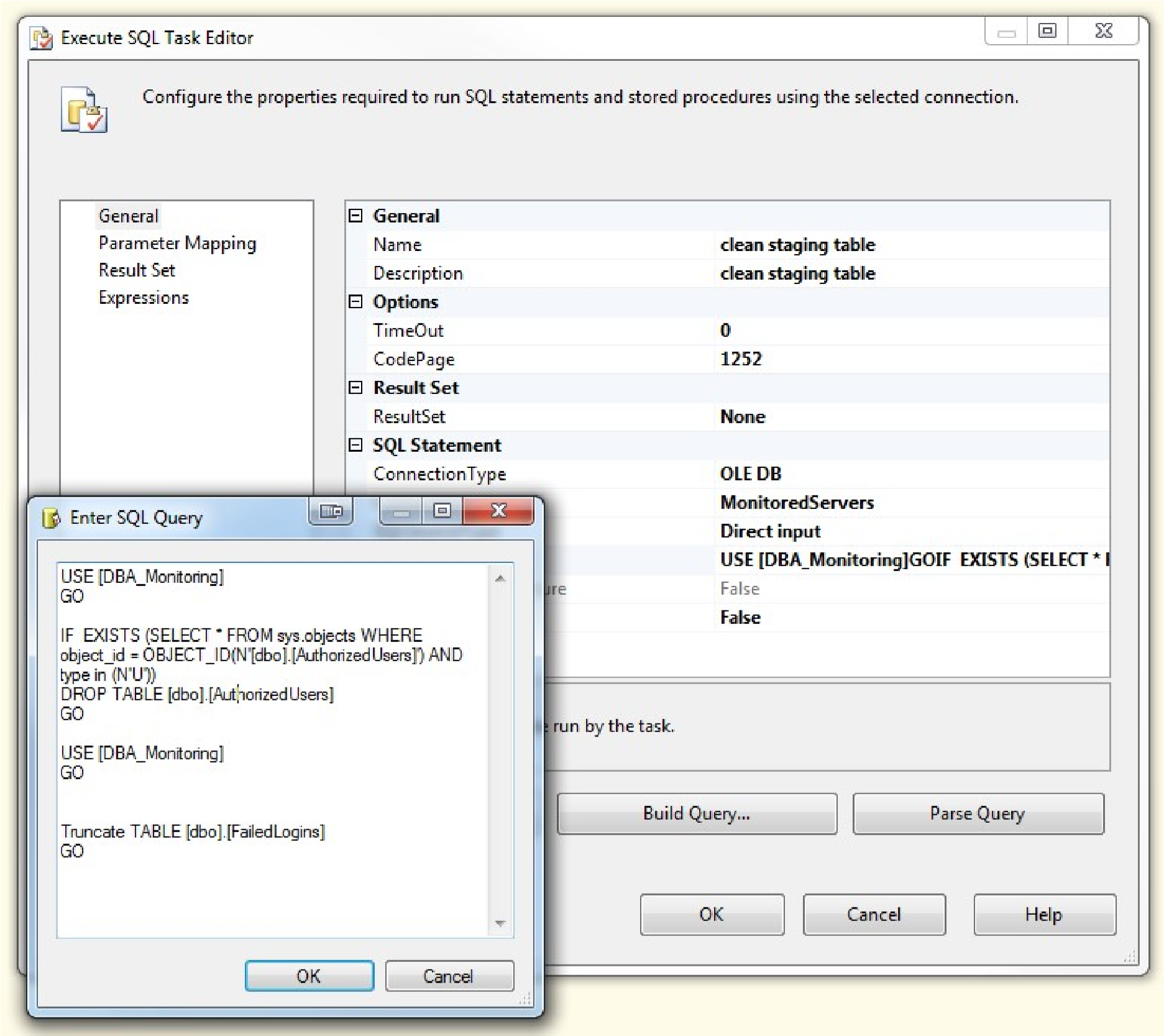

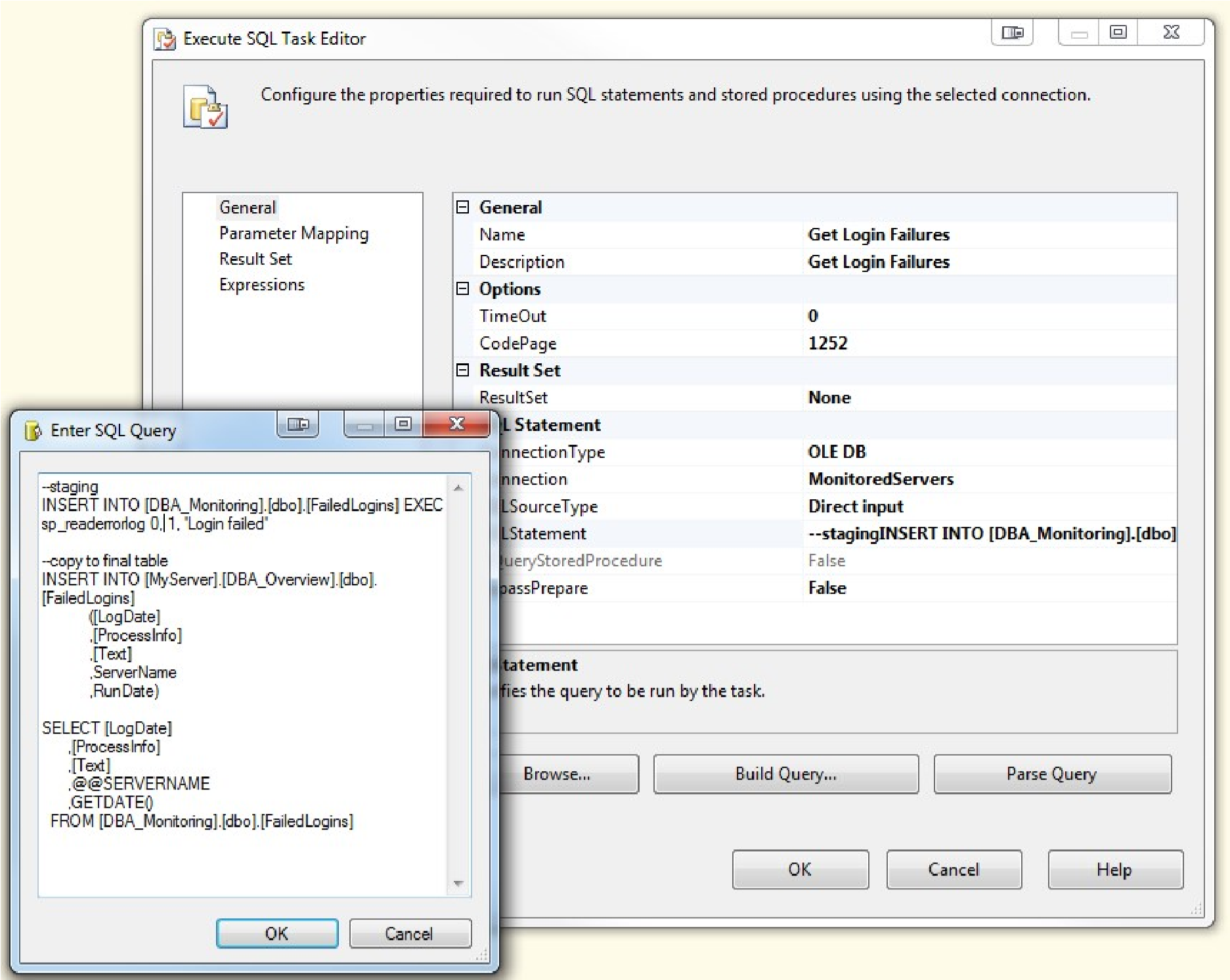

The first query shown below is used to the clean staging tables.

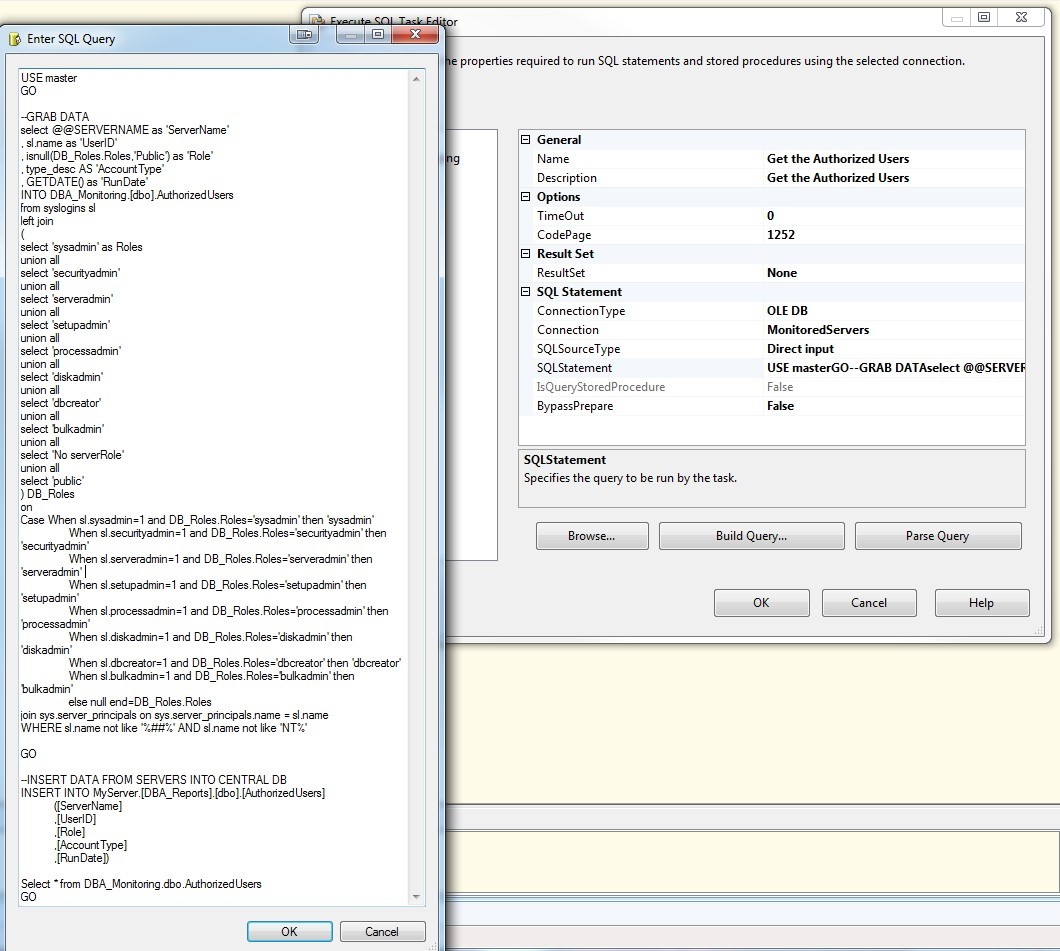

Our next query gets our Authorized SQL user information from our remote servers and writes the data into my main server.

The final step retrieves the failed logins from the remote servers and inserts the data into the main server.

Here is view of what the entire package looks like:

Step 4: Reports and Agent Job

I have created a SQL Agent job that contains two reports and is run on a schedule. In my case, I want to see these daily however this optional and you can make it run any schedule you want. I like daily specifically for invalid logins as those will defiantly change daily. Although, user access does not change daily in most cases, I personally like to run this audit daily as well. I have these reports in a single SQL Agent Job running at the same however they could easily be separated and run on different schedules.

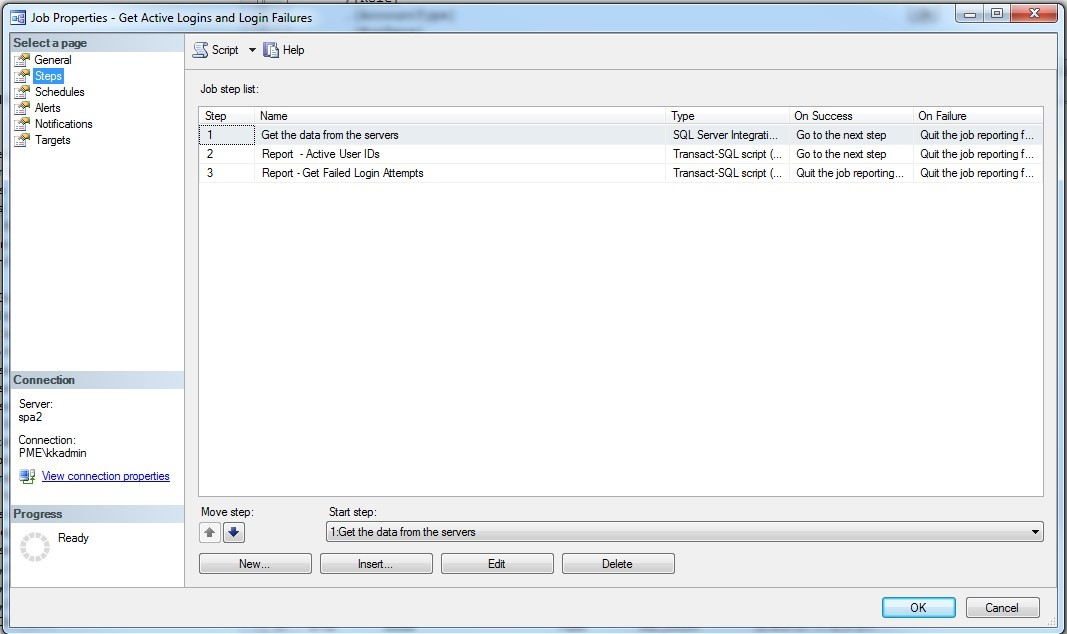

In the SQL Agent Job, on the steps branch, this job has 3 steps. Step 1 will call the SSIS package created Step 3 of this article, then after the data is collected, it will run two reports and email them to users listed.

Here is a script of the entire agent job which also includes the 2 report scripts, make sure to change the variables marked <add your info here>. Copy/paste and run this script to create the job and reports.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 |

USE [msdb] GO /****** Object: Job [Get Active Logins and Login Failures] ******/ BEGIN TRANSACTION DECLARE @ReturnCode INT SELECT @ReturnCode = 0 /****** Object: JobCategory [[Uncategorized (Local ******/ IF NOT EXISTS ( SELECT NAME FROM msdb.dbo.syscategories WHERE NAME = N’[Uncategorized (Local)]’ AND category_class = 1 ) BEGIN EXEC @ReturnCode = msdb.dbo.sp_add_category @class = N’JOB’ ,@type = N’LOCAL’ ,@name = N’[Uncategorized (Local)]’ IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback END DECLARE @jobId BINARY (16) EXEC @ReturnCode = msdb.dbo.sp_add_job @job_name = N’Get Active Logins and Login Failures’ ,@enabled = 1 ,@notify_level_eventlog = 0 ,@notify_level_email = 2 ,@notify_level_netsend = 0 ,@notify_level_page = 0 ,@delete_level = 0 ,@description = N’Get Active Logins and Login Failures on all instances’ ,@category_name = N’[Uncategorized (Local)]’ ,@owner_login_name = N’SA’ ,@notify_email_operator_name = N’<add your info here>’ ,@job_id = @jobId OUTPUT IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback /****** Object: Step [Get the data from the servers] ******/ EXEC @ReturnCode = msdb.dbo.sp_add_jobstep @job_id = @jobId ,@step_name = N’Get the data from the servers’ ,@step_id = 1 ,@cmdexec_success_code = 0 ,@on_success_action = 3 ,@on_success_step_id = 0 ,@on_fail_action = 2 ,@on_fail_step_id = 0 ,@retry_attempts = 0 ,@retry_interval = 0 ,@os_run_priority = 0 ,@subsystem = N’SSIS’ ,@command = N’/SQL "\ActiveUsersAndInvalidLogins" /SERVER <add integration services server name here> /CHECKPOINTING OFF /REPORTING E’ ,@database_name = N’master’ ,@output_file_name = N’<add your directory path here>\ActiveLoginsAndFailedLoginsErrors.txt’ ,@flags = 0 ,@proxy_name = N’<add proxyID if using>’ IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback /****** Object: Step [Report – Active User IDs] ******/ EXEC @ReturnCode = msdb.dbo.sp_add_jobstep @job_id = @jobId ,@step_name = N’Report – Active User IDs’ ,@step_id = 2 ,@cmdexec_success_code = 0 ,@on_success_action = 3 ,@on_success_step_id = 0 ,@on_fail_action = 2 ,@on_fail_step_id = 0 ,@retry_attempts = 0 ,@retry_interval = 0 ,@os_run_priority = 0 ,@subsystem = N’TSQL’ ,@command = N’SET nocount ON — DECLARE @Subject VARCHAR (100) SET @Subject="SQL Server – Authorized User IDs" DECLARE @Count AS INT SELECT @Count=COUNT(*) from DBA_Reports.dbo.AuthorizedUsers PRINT @Count IF @Count > 0 BEGIN DECLARE @tableHTML NVARCHAR(MAX) ; SET @tableHTML = N"<table border="1">" + N"<tr>" + N"<th>Server Name</th>" + N"<th>User ID</th>" + N"<th>Role</th>" + N"<th>Account Type</th>" + N"<th>Run Date</th>" + N"</tr>" + CAST ( ( SELECT distinct td=[ServerName],"" ,td=[UserID],"" ,td=[Role],"" ,td=[AccountType],"" ,td=[RunDate],"" FROM dbo.AuthorizedUsers FOR XML PATH("tr"), TYPE ) AS NVARCHAR(MAX) ) + N"</table>"; EXEC msdb.dbo.sp_send_dbmail @profile_name = "<add your dbmail profile here>", @recipients = "<add emails here>", @subject = @Subject, @body = @tableHTML, @body_format = "HTML" ; END ’ , @database_name = N’DBA_Reports’ ,@flags = 0 IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback /****** Object: Step [Report – Get Failed Login Attempts] ******/ EXEC @ReturnCode = msdb.dbo.sp_add_jobstep @job_id = @jobId ,@step_name = N’Report – Get Failed Login Attempts’ ,@step_id = 3 ,@cmdexec_success_code = 0 ,@on_success_action = 1 ,@on_success_step_id = 0 ,@on_fail_action = 2 ,@on_fail_step_id = 0 ,@retry_attempts = 0 ,@retry_interval = 0 ,@os_run_priority = 0 ,@subsystem = N’TSQL’ ,@command = N’SET nocount ON — DECLARE @Subject VARCHAR (100) SET @Subject="SQL Server – Failed Login Attempts" DECLARE @Count AS INT SELECT @Count=COUNT(*) from dbo.FailedLogins where LogDate >= GETDATE()-1 PRINT @Count IF @Count > 0 BEGIN DECLARE @tableHTML NVARCHAR(MAX) ; SET @tableHTML = N"<table border="1">" + N"<tr>" + N"<th>Server Name</th>" + N"<th>Error Text</th>" + N"<th>Log Date</th>" + N"</tr>" + CAST ( ( SELECT distinct td=[ServerName],"" ,td=[Text],"" ,td=[LogDate],"" FROM dbo.FailedLogins where LogDate >= GETDATE()-1 FOR XML PATH("tr"), TYPE ) AS NVARCHAR(MAX) ) + N"</table>" ; EXEC msdb.dbo.sp_send_dbmail @profile_name = "<add dbmail profile here>", @recipients = "<add emails here>", @subject = @Subject, @body = @tableHTML, @body_format = "HTML" ; END’ ,@database_name = N’DBA_Reports’ ,@flags = 0 IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback EXEC @ReturnCode = msdb.dbo.sp_update_job @job_id = @jobId ,@start_step_id = 1 IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback EXEC @ReturnCode = msdb.dbo.sp_add_jobschedule @job_id = @jobId ,@name = N’Daily’ ,@enabled = 1 ,@freq_type = 4 ,@freq_interval = 1 ,@freq_subday_type = 1 ,@freq_subday_interval = 0 ,@freq_relative_interval = 0 ,@freq_recurrence_factor = 0 ,@active_start_date = 20160109 ,@active_end_date = 99991231 ,@active_start_time = 60000 ,@active_end_time = 235959 IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback EXEC @ReturnCode = msdb.dbo.sp_add_jobserver @job_id = @jobId ,@server_name = N’(local)’ IF ( @@ERROR <> 0 OR @ReturnCode <> 0 ) GOTO QuitWithRollback COMMIT TRANSACTION GOTO EndSave QuitWithRollback: IF (@@TRANCOUNT > 0) ROLLBACK TRANSACTION EndSave: GO |

Here is an example of each report that is emailed

Conclusion

Here you have a quick and simple way to collect both failed logins and active sql server users in a nice neat HTML generated formatted email.

Included in this package, the following will be created when the scripts are run: